Meta Closes the Open-Source Door on Frontier AI

Meta's Superintelligence Labs will ship its first flagship models under a closed license, ending the company's open-source-first strategy at the frontier tier.

Meta built its AI brand on open weights. Llama became the reference point for every open-source lab, every enterprise team that wanted to self-host, every researcher who couldn't afford the API bill. That positioning - Meta as the anti-OpenAI, the company that didn't lock the weights behind a paywall - was a genuine strategic asset. According to reporting first published on April 6, it's now being quietly dismantled.

Meta Superintelligence Labs, the new division created around Alexandr Wang after Meta's $14 billion investment in Scale AI, will release its first flagship models under a hybrid license. The largest, most capable models stay proprietary. Smaller derivatives may eventually get open weights, but the frontier tier goes dark.

The logic, per sources familiar with the plan, rests on two factors: safety reviews before any open release, and the need to keep model specifications from being exploited. The second is a direct reference to DeepSeek, which built competitive models by building on Meta's openly released Llama models.

TL;DR

- Meta Superintelligence Labs will release new models under a hybrid license - proprietary at the frontier, open weights possible for smaller derivatives

- The pivot follows Llama 4's underperformance and DeepSeek's use of Meta's open Llama weights to build a rival

- Alexandr Wang, 28, now runs Meta's AI strategy after Meta's $14 billion bet on Scale AI

- Two flagship models in development: Avocado (text/reasoning, delayed) and Mango (image/video)

- Meta is offering $100 million pay packages and has already poached 20+ researchers from OpenAI

The $14 Billion Calculation

Wang's hire wasn't just a talent acquisition. Meta acquired a 49% non-voting stake in Scale AI for roughly $14-15 billion - a deal that valued Scale AI at $29 billion and installed its founder as Meta's Chief AI Officer. Wang was 19 when he left MIT to start Scale AI. He's 28 now and responsible for the most expensive AI bet Zuckerberg has made.

| Metric | Value |
|---|---|
| Meta stake in Scale AI | 49% non-voting |
| Scale AI valuation | $29 billion |
| Meta total AI spending commitment | $600 billion |
| New researcher sign-on packages | Up to $100 million |
| Researchers poached from OpenAI | 20+ |
| Avocado model delay | Originally March 2026, still unreleased |

The Scale AI deal gives Meta something it was missing: a coherent data labeling and evaluation pipeline. Wang's team built the infrastructure that OpenAI and Anthropic use to assess their models. Now that capability is inside the company building the models.

What the deal doesn't provide is a shortcut to competitive output. Llama 4, released in April 2025, "wildly underperformed expectations" against rivals, according to multiple reports. The follow-up model, internally codenamed Avocado, was scheduled for March 2026 but was delayed after internal benchmarks showed it trailing Google's Gemini on coding, reasoning, and writing. Meta acknowledged its next models "may not be competitive across the board."

Why Open Source No Longer Makes Sense for Meta

The open-source strategy made sense in 2023. OpenAI had a large lead and a closed API. Meta could undercut on price (free), build goodwill with the developer community, and let external researchers find the flaws. Zuckerberg framed it as democratization. It was also just good competitive positioning against a stronger opponent.

That calculus shifted when DeepSeek entered. DeepSeek didn't just build a competitive model - it did so partly by training on top of Llama's open weights, then released its own model openly. Meta gave away a competitive advantage and got a competitor with similar capabilities in return.

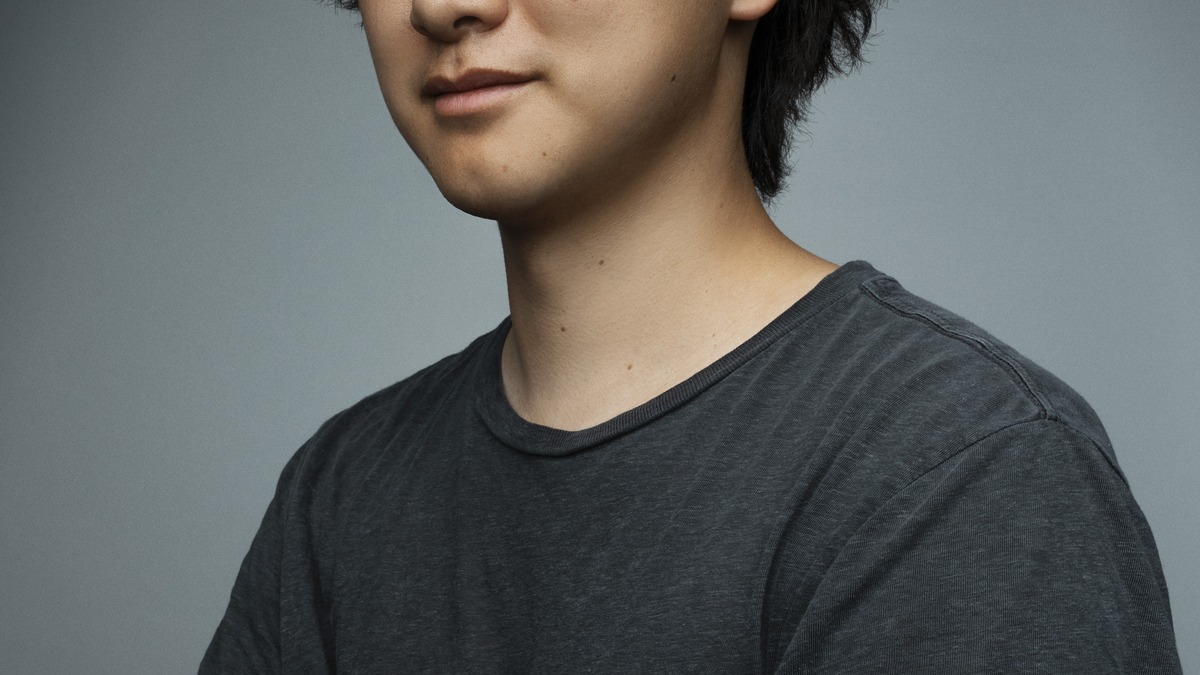

Alexandr Wang in his role as Meta's Chief AI Officer. He was 19 when he founded Scale AI after leaving MIT.

Source: commons.wikimedia.org

The second factor is financial. Meta has committed to $600 billion in AI spending. At that scale, giving away the crown jewel model weights stops being a clever competitive move and starts looking like a subsidy to competitors. The open-source ecosystem doesn't write checks back to Meta. Consumer products on WhatsApp and Instagram do.

Wang's stated vision is "personal superintelligence" - AI systems tailored to individual goals, delivered through Meta's consumer platforms. That's a product strategy, not a research strategy. Products get protected.

What Avocado and Mango Tell Us About the Strategy

The two models under development at Meta Superintelligence Labs have different jobs.

Avocado is a text-based LLM focused on coding and reasoning. It was originally planned for March 2026 but was delayed after benchmarks showed it trailing Gemini. This is the model line slated for the hybrid release: a smaller derivative might eventually get open weights. The delay signals that Meta won't ship until the model can hold its own, which is a change from the Llama 4 release cycle.

Mango is an image and video generation model targeted for first-half 2026. Meta is also collaborating with Midjourney and Black Forest Labs on a video product internally codenamed "Vibes." The image and video space is where Meta has the clearest consumer distribution advantage - Instagram and WhatsApp have the pipes; they need the models.

The two models sit at different ends of the hybrid strategy. Avocado (the LLM that might eventually be open) is the lower-stakes bet. Mango (the generation model tied to consumer products) is the one that needs to be locked down.

Counter-Argument - Meta Won't Actually Go Full Closed

There are reasons to be skeptical that this pivot will hold. Meta has announced open-source commitments before and delivered them late or partially. Zuckerberg has framed open-source as a philosophical position, not just a business one - and he's been consistent about that framing for years.

The licensing structure also isn't a complete reversal. Meta's plan, as reported, still involves eventual open weights for some models, just not the frontier tier. Llama's distribution channel - the developer community that built tools, fine-tunes, and derivatives on top of it - doesn't evaporate overnight. Meta still benefits from that ecosystem.

The competitive pressure from Alibaba is also worth noting. Qwen models continue to push the open-source frontier, and any gap Meta leaves in the open-weight market gets filled quickly. If Meta goes dark at the frontier and Alibaba delivers a competitive open model, Meta loses the developer loyalty it spent years building without gaining the moat it's hoping to create.

Mark Zuckerberg has championed open-source AI as a philosophical and strategic position since 2023.

Source: commons.wikimedia.org

What the Market Is Missing

The coverage of this story has mostly framed it as a corporate strategy shift - Meta pivoting from open to closed as competitive pressures mount. That framing is accurate but incomplete.

The subtler story is what this says about the sustainability of open-source AI at the frontier tier. Meta is the only company with the consumer reach to make an open-source frontier strategy financially defensible. It has 3 billion daily active users and direct distribution through WhatsApp, Instagram, and Facebook. If Meta can't justify keeping frontier weights open - with that distribution advantage - it's hard to see how anyone else can.

The gap between open-source and proprietary AI already narrowed dramatically in 2025, with open models reaching near-parity on major benchmarks. That narrowing was partly a result of the Llama ecosystem. If Meta retreats from that role, the gap may widen again, but this time the proprietary players include Meta itself.

Wang's $14 billion mandate is to build systems that beat OpenAI and Anthropic in the consumer market. The open-source strategy was the old management's play. The new play is: close the weights, protect the specs, use Zuckerberg's distribution. Whether $600 billion and 20+ researchers poached from OpenAI are enough to make that work is the actual question. Llama 4 already showed Meta what happens when you ship before you're ready.
