Meta's $100B AMD Bet Is a Direct Shot at Nvidia

Meta and AMD signed a 6-gigawatt, multi-year GPU pact worth up to $100B - announced days after a separate Nvidia expansion, signaling Meta's deliberate strategy to break single-vendor dependence in AI compute.

Meta just handed AMD a lifeline worth up to $100 billion. Seven days earlier, it handed Nvidia one too.

On February 24, AMD and Meta announced a 6-gigawatt, multi-year, multi-generation agreement to deploy AMD Instinct GPUs across Meta's AI infrastructure. The deal - which includes a semi-custom GPU variant, next-generation AMD CPUs, and rack-scale architecture co-developed through the Open Compute Project - is one of the largest AI hardware commitments ever disclosed.

It's also the clearest evidence yet that Meta is running a deliberate two-vendor strategy, using its enormous spending power to ensure it's never fully captive to any single chip supplier.

TL;DR

- Meta and AMD signed a 6GW, multi-year GPU agreement worth an estimated $60B-$100B

- AMD issued Meta warrants to buy 160 million AMD shares at $0.01 - roughly 10% of the company - tied to deployment milestones

- The deal covers custom MI450-based GPUs, 6th Gen EPYC "Venice" CPUs, and AMD Helios rack-scale infrastructure

- First 1GW deployment starts H2 2026; full 6GW expected by 2030

- Meta announced a separate multi-billion Nvidia expansion just seven days earlier

The Deal Structure

What Meta Is Buying

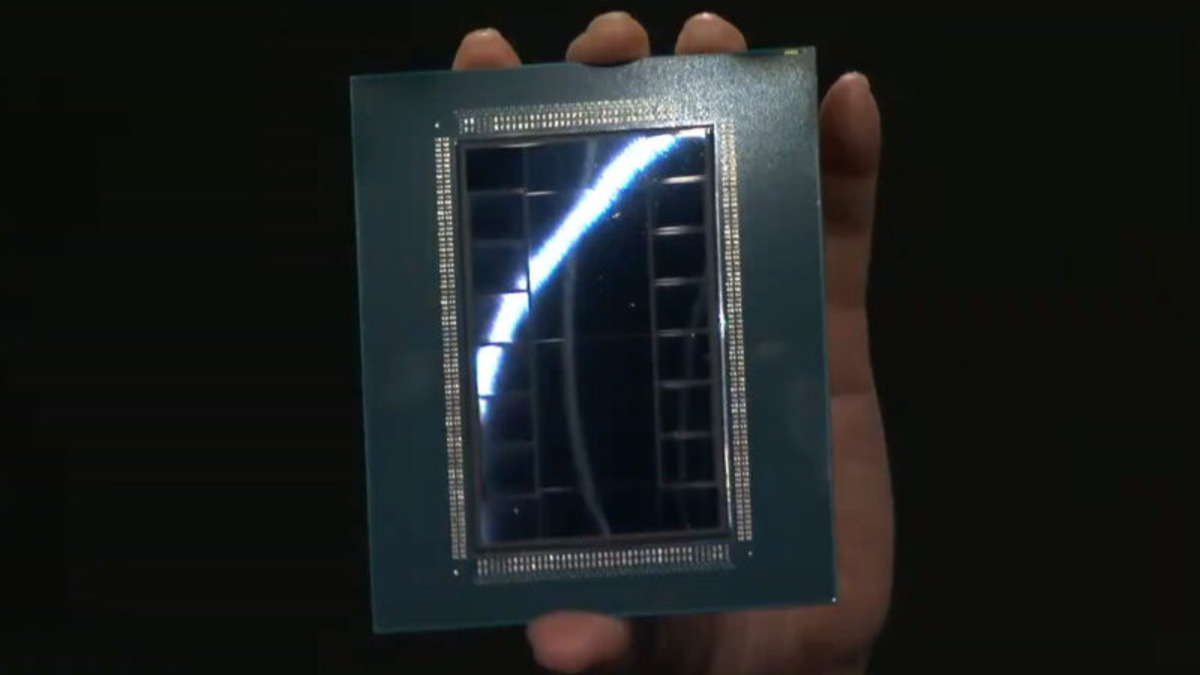

The AMD agreement spans multiple hardware generations. The first deployment phase begins in the second half of 2026, powered by a custom AMD Instinct MI450-based GPU purpose-built for Meta's workloads. The MI450 is AMD's latest Instinct accelerator, built on CDNA 5 architecture, manufactured on a 2nm-class TSMC node, with 432GB of HBM4 memory and close to 40 petaflops of FP4 compute performance.

Alongside the GPUs, Meta will deploy AMD's 6th Gen EPYC processors - codenamed "Venice" - designed with workload-specific optimizations. The entire stack runs on ROCm, AMD's open-source GPU computing platform, and sits within AMD Helios, a rack-scale architecture co-developed by AMD and Meta through the Open Compute Project.

AMD estimates each gigawatt of deployment will generate "significant double-digit billions of revenue." At six gigawatts, analysts place the total contract value between $60 billion and $100 billion over the agreement's lifetime through roughly 2030.
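As a rough consistency check, dividing the analyst range by the six gigawatts shows the average value per gigawatt that range implies. This is a minimal sketch that uses only the figures quoted above. It assumes value is spread evenly across all six gigawatts, which neither company has said.

```python
# Average contract value per gigawatt implied by the analyst range.
# Assumption: value is spread evenly across all six gigawatts.
TOTAL_GW = 6
LOW_TOTAL_USD_B = 60.0    # low end of analyst estimate, $B
HIGH_TOTAL_USD_B = 100.0  # high end of analyst estimate, $B


def per_gigawatt(total_usd_b: float, gigawatts: float) -> float:
    """Average value per gigawatt, in billions of US dollars."""
    return total_usd_b / gigawatts


low = per_gigawatt(LOW_TOTAL_USD_B, TOTAL_GW)
high = per_gigawatt(HIGH_TOTAL_USD_B, TOTAL_GW)
print(f"${low:.1f}B to ${high:.1f}B per gigawatt")  # $10.0B to $16.7B per gigawatt
```

The result, roughly $10B to $17B per gigawatt, falls within the double-digit-billions range AMD describes.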

The AMD Instinct MI455X, displayed publicly for the first time at CES 2026. The MI450-based custom GPU destined for Meta's data centers uses the same CDNA 5 architecture, with 432GB of HBM4 memory and close to 40 petaflops of FP4 compute.

What AMD Is Giving Away

To close the deal, AMD issued Meta performance-based warrants to acquire up to 160 million shares of AMD common stock at an exercise price of $0.01 per share. Based on AMD's current share count, that represents roughly 10% of the company.
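The 10% figure is a ratio of share counts, so simple arithmetic can check it. The sketch below assumes, for illustration, that "roughly 10%" is measured against AMD's existing shares, and that all 160 million warrant shares vest and are settled with newly issued stock. The share base it derives comes from the rounded figure and is not AMD's reported count.

```python
# Ownership arithmetic behind the "roughly 10%" figure.
# WARRANT_SHARES comes from the deal terms; APPROX_STAKE is the article's
# rounded figure, so implied_base is an estimate, not AMD's reported count.
WARRANT_SHARES = 160_000_000
APPROX_STAKE = 0.10

# Existing share base implied if 160M shares equal ~10% of it.
implied_base = WARRANT_SHARES / APPROX_STAKE

# Assumption: all tranches vest and are settled with newly issued shares,
# so Meta's stake is measured against the enlarged share count.
post_exercise_stake = WARRANT_SHARES / (implied_base + WARRANT_SHARES)

print(f"Implied existing share base: {implied_base / 1e9:.2f}B shares")
print(f"Stake after exercise: {post_exercise_stake:.1%}")
```

Measured against the enlarged share count after exercise, the stake comes to roughly 9%, so "roughly 10%" is best read as an upper approximation.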

The warrants vest in tranches tied to a set of conditions: GPU purchase milestones from one to six gigawatts, AMD stock price thresholds escalating to $600 per share, and additional technical and commercial conditions. AMD CEO Lisa Su characterized the warrant structure as reserved for "transformational partnerships," designed to "align incentives so both companies benefit from Meta's foundational models' success."

The financial engineering is notable. AMD is essentially granting Meta a performance-linked equity stake in exchange for guaranteed long-term revenue. For AMD, it is a bet that the relationship justifies the dilution. For Meta, it's leverage that compounds with every deployment milestone.

The Competitive Subtext

| Deal | Announced | GPU Volume | Estimated Value |
|---|---|---|---|
| Meta + Nvidia | Feb 17, 2026 | Millions of Blackwell + Rubin GPUs | Tens of billions (undisclosed) |
| Meta + AMD | Feb 24, 2026 | 6GW Instinct (MI450+) | $60B-$100B |
| AMD + OpenAI | Oct 2025 | 6GW Instinct (MI450+) | Undisclosed |

Seven days separate Meta's Nvidia expansion and its AMD agreement. That isn't coincidence - it's leverage. By signing with both suppliers in rapid succession, Meta signals to each vendor that the other is a credible alternative. It also extracts better commercial terms from both: Nvidia knows AMD is in the picture; AMD knows it has to compete on price, performance, and partnership terms to stay there.

"We're excited to form a long-term partnership with AMD to deploy efficient inference compute and deliver personal superintelligence. This is an important step for Meta as we diversify our compute. I expect AMD to be an important partner for many years to come."

- Mark Zuckerberg, CEO, Meta

The language is deliberate. "Diversify our compute" isn't an engineering statement - it's a procurement policy. Meta plans to spend up to $135 billion on AI infrastructure in 2026 alone, and has committed to $600 billion in total US data center investment by 2028. At that scale, depending on a single supplier is a strategic risk. Zuckerberg is spreading that risk across AMD and Nvidia in a way that keeps both competing for Meta's next contract.

Who Benefits

AMD

The Meta deal is transformational for AMD's data center business in a way no previous win has been. The company enters 2026 with a 35% revenue CAGR target and a $20+ earnings-per-share goal. Su told investors at AMD's most recent analyst event that the Meta engagement is "vertically integrated" - meaning AMD isn't simply supplying commodity GPUs but co-designing hardware tailored to Meta's specific inference and training workloads.

That depth of collaboration creates switching costs in AMD's favour for future generations. Once Meta's Llama models, which we examined in our Llama 4 Maverick profile, are optimised for the custom MI450 architecture, migrating to a different chip requires significant re-engineering. AMD is effectively embedding itself into Meta's AI stack.

The warrant structure reinforces this. If Meta deploys all six gigawatts and AMD hits its stock price targets, AMD shareholders will face dilution. But AMD management is betting that the revenue and operating leverage from a $100B customer relationship more than offset that cost.

Lisa Su at the AMD CES 2026 keynote, where she unveiled the full Instinct MI400 product lineup and outlined AMD's AI infrastructure ambitions for the year.

Meta

Meta gets hardware tailored to its actual workloads - not off-the-shelf GPUs optimised for OpenAI's training runs - and it gets a financial instrument that rises in value as AMD's stock appreciates. With a $0.01 exercise price, the warrant is close to free equity upside, provided Meta meets its deployment targets and AMD's stock clears the price thresholds.

It also gets negotiating power with Nvidia. Every gigawatt Meta commits to AMD is a gigawatt Nvidia has to fight harder to win back in the next cycle.

Who Pays

Nvidia

Nvidia still dominates AI compute. Its Hopper and Blackwell architectures are deeply embedded across the hyperscaler ecosystem, and the Meta-Nvidia deal announced the previous week was substantial. But the AMD agreement effectively caps what Nvidia can charge Meta from now on. If AMD's MI450 can handle Meta's inference workloads at competitive performance per watt, Meta has a credible reason to shift more volume to AMD at renewal time.

The ROCm software ecosystem - historically AMD's weakest point - is maturing. With Meta and OpenAI both running production workloads on ROCm, the gap in library coverage and tooling support between ROCm and Nvidia's CUDA is narrowing. As we covered in our CUDA programming guide, CUDA's ecosystem advantage has long been Nvidia's most durable competitive moat. That moat is eroding.

AMD Shareholders

The 160-million-share warrant is the hidden cost in this deal. At current AMD prices, that represents an estimated $20-25 billion in potential dilution if Meta exercises all tranches. AMD management is treating this as a cost of customer acquisition at scale - reasonable for a relationship that could generate $100 billion in revenue. But the dilution risk is real and will weigh on the stock if AMD misses its growth targets or if Meta's deployment pace slows.
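The warrant's intrinsic value, shares × (share price − exercise price), links the dilution estimate back to a share price. The sketch below is a simplification under stated assumptions. It treats the $20-25 billion estimate as pure intrinsic value and ignores time value and vesting conditions. It uses only the share count, exercise price, and $600 threshold from the deal terms.

```python
# Warrant intrinsic value: shares x (share price - exercise price).
# Simplification: ignores time value and vesting conditions.
WARRANT_SHARES = 160_000_000
EXERCISE_PRICE = 0.01  # USD per share, from the deal terms


def intrinsic_value(share_price: float) -> float:
    """Value in USD of exercising every warrant share at share_price."""
    return WARRANT_SHARES * max(share_price - EXERCISE_PRICE, 0.0)


def implied_share_price(value_usd: float) -> float:
    """Share price at which the warrants' intrinsic value equals value_usd."""
    return value_usd / WARRANT_SHARES + EXERCISE_PRICE


# AMD share prices implied by the $20B-$25B dilution estimate.
low_price = implied_share_price(20e9)
high_price = implied_share_price(25e9)

# Intrinsic value if the stock sits exactly at the $600 top threshold.
at_top_threshold = intrinsic_value(600.0)

print(f"Implied share price: ${low_price:.2f} to ${high_price:.2f}")
print(f"Intrinsic value at $600: ${at_top_threshold / 1e9:.1f}B")
```

Back-solving the estimate implies an AMD share price of roughly $125 to $156. The price thresholds tied to full vesting escalate to $600, and at that price the same 160 million shares would be worth about $96 billion, far above the headline dilution figure.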

What the Market Is Missing

The AMD-Meta deal is being covered mainly as a hardware story. It's also a software story.

ROCm is now running at production scale across two of the largest AI compute deployments in the world - Meta and OpenAI. That matters because the largest barrier to enterprise adoption of AMD AI infrastructure has never been raw GPU performance. It has been the question of whether the software ecosystem - drivers, libraries, compiler toolchains - would keep pace. Large-scale production commitments from Meta and OpenAI are the clearest signal yet that AMD's software stack has crossed a credibility threshold.

For enterprises currently assessing AI infrastructure, the case for looking beyond Nvidia just got considerably stronger. AMD is no longer an underdog positioning itself as a future alternative. It is, by the metrics that matter - revenue commitments, production workloads, ecosystem depth - a present alternative.

The real question isn't whether AMD can challenge Nvidia; that question has been answered. The question is whether AMD can sustain the operational execution needed to deliver six gigawatts of custom hardware on the schedule Meta requires, and whether ROCm can keep pace with every new model architecture Meta's research teams produce. As we noted when reviewing AMD's consumer GPU performance, execution at data center scale is a different problem from silicon performance on paper.

Lisa Su put it plainly in her statement on the deal: "This multi-year, multi-generation collaboration across Instinct GPUs, EPYC CPUs and rack-scale AI systems aligns our roadmaps to deliver high-performance, energy-efficient infrastructure optimized for Meta's workloads, accelerating one of the industry's largest AI deployments and placing AMD at the center of the global AI buildout." Whether AMD can deliver on that ambition at six gigawatts and across five years is the only question the market should be asking.

Sources:

- AMD and Meta Announce Expanded Strategic Partnership to Deploy 6 Gigawatts of AMD GPUs - AMD Investor Relations

- Meta and AMD Partner for Longterm AI Infrastructure Agreement - Meta Newsroom

- AMD and Meta lock in massive 6GW AI hardware pact - TechRadar

- AMD CEO Lisa Su Teases MI450 Ramp, 6GW Meta AI Deal, and 35% Growth Target - MarketBeat

- AMD and Meta Announce a Massive 6GW Deal - ServeTheHome

- Meta and NVIDIA Announce Long-Term Infrastructure Partnership - Meta Newsroom