EXAONE 4.5: LG's Open VLM Beats GPT-5-mini on STEM

LG AI Research released EXAONE 4.5, a 33B open-weight vision-language model that posts higher STEM scores than GPT-5-mini and Claude 4.5 Sonnet - but a non-commercial license caps its real-world reach.

LG AI Research dropped EXAONE 4.5 on April 9 - a 33 billion parameter vision-language model that outscores GPT-5-mini on STEM benchmarks while running on open weights. That combination is rare enough to be worth paying attention to. The catch, as usual, is in the license.

TL;DR

- EXAONE 4.5 is a 33B open-weight vision-language model from LG AI Research, released April 9

- STEM benchmark average of 77.3 vs GPT-5-mini at 73.5 and Claude 4.5 Sonnet at 74.6 (LG's own figures)

- Supports 262K token context, six languages, and runs on a single H200 or four A100-40GB cards

- License is non-commercial - research and academic use only, no production deployment

LG AI Research's official announcement for EXAONE 4.5, released April 9, 2026.

Source: lgresearch.ai

LG AI Research's official announcement for EXAONE 4.5, released April 9, 2026.

Source: lgresearch.ai

The Numbers LG Wants You to See

LG published benchmark comparisons across 13 visual and language tasks. The headline figures are below.

| Benchmark | EXAONE 4.5 | GPT-5-mini | Claude 4.5 Sonnet | Qwen-3 235B |

|---|---|---|---|---|

| STEM avg | 77.3 | 73.5 | 74.6 | 77.0 |

| LiveCodeBench v6 | 81.4 | - | - | - |

| ChartQA Pro | 62.2 | - | - | - |

| MMMU | 78.7 | - | - | - |

| GPQA-Diamond | 80.5 | - | - | - |

| MMLU-Pro | 83.3 | - | - | - |

| AIME 2025 | 92.9% | - | - | - |

For coding, our LiveCodeBench leaderboard currently shows EXAONE 4.5's 81.4 on v6 edging past Google Gemma 4's 80.0 in the same category. Gemma 4 is a comparable open-weight model family, so that comparison carries more weight than the GPT-5-mini contrast.

The AIME 2025 result of 92.9% is striking for a 33B model. That's a competition mathematics benchmark where scores normally require both strong reasoning and multi-step verification.

Architecture Under the Hood

A Hybrid Attention Design

EXAONE 4.5 uses a hybrid attention pattern across 64 main layers. The structure alternates three sliding window attention layers with one global attention layer, repeating that block 16 times. Sliding windows use a 4,096-token context with 40 query heads and 8 KV heads. Global attention layers use NoPE - no positional embedding - which is the same approach used in several recent long-context models.

The vision encoder is a 1.29B parameter component with grouped query attention and 2D RoPE, fused into the language model. Total parameters come to 33B, with 31.7B in the core LLM.

The 262,144-token context window is the practical standout here. That covers roughly 200 pages of dense text or a combination of long documents and images in a single call.

What It Can Process

The model handles text-and-image pairs simultaneously, with documented strengths in:

- Document analysis: contracts, technical drawings, financial statements

- Chart interpretation via ChartQA Pro (62.2, described as highest in its class)

- OCR and structured document reasoning via OmniDocBench (81.2)

- Korean-language tasks through a built-in cultural context module called K-AUT

Language support covers Korean, English, Spanish, German, Japanese, and Vietnamese.

Deployment Requirements

Running at full 256K context demands a single H200 GPU. Alternatively, four A100-40GB cards with tensor parallelism work. The model ships with zero-day support for vLLM, SGLang, TensorRT-LLM, and llama.cpp, so inference infrastructure isn't a problem.

Recommended inference settings are temperature=1.0, top_p=0.95, presence_penalty=1.5 with reasoning mode on by default. For OCR tasks, LG recommends lowering temperature to 0.6.

A sample multimodal input from the EXAONE 4.5 model card, showing the model's document and scene understanding capabilities.

Source: github.com/LG-AI-EXAONE

A sample multimodal input from the EXAONE 4.5 model card, showing the model's document and scene understanding capabilities.

Source: github.com/LG-AI-EXAONE

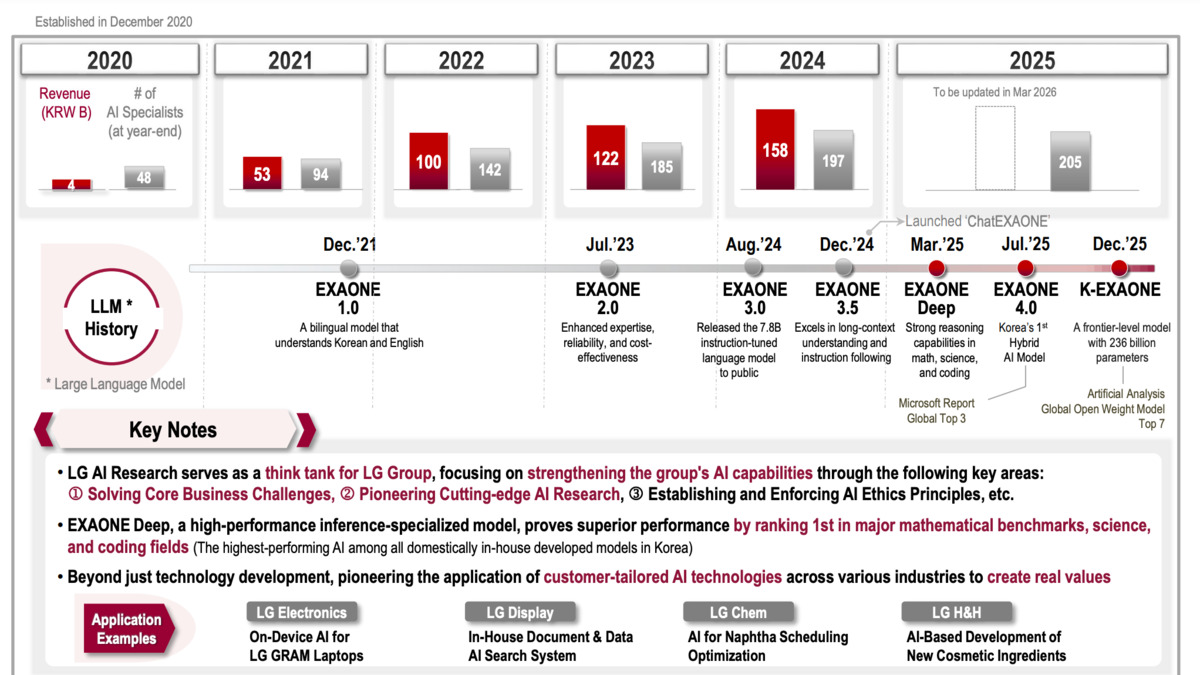

Context: Who LG Is in AI

LG AI Research has been building large models since 2021 when it released the original EXAONE. The lab is backed by LG Group, one of South Korea's largest conglomerates with revenue above $150 billion. Unlike Mistral or Falcon, LG isn't a standalone AI startup seeking enterprise deals - it's an industrial research division with the compute budget to train 33B parameter models and the product teams to deploy them in LG appliances, manufacturing systems, and business software.

That context matters for understanding why they built this model and what benchmarks they chose to highlight.

"EXAONE 4.5 represents LG AI's successful entry into the multimodal era," said Jinsik Lee of LG AI Research.

The "multimodal era" framing positions this as an inflection point for the lab rather than a gradual release. The simultaneous claim that it beats a GPT-5-class model at 33B parameters is the kind of statement that benefits from independent verification.

What It Does Not Tell You

The License Is Non-Commercial

EXAONE 4.5 carries the EXAONE AI Model License Agreement 1.2 - NC designation. That means research, academic, and educational use only. Commercial deployment requires a separate agreement with LG AI Research. This isn't an open-source release in any meaningful enterprise sense, and the "open-weight" framing obscures that distinction.

Compare this to Gemma 4, which ships under Apache 2.0 and can be dropped into production without negotiating terms. Or the recent GLM-5.1 release, which carries its own licensing constraints but targets specific deployment contexts. For teams assessing models to actually ship, the NC license is a blocker that the benchmark table doesn't surface.

LG Chose the Comparisons

The benchmark table compares EXAONE 4.5 against GPT-5-mini, not GPT-5.3, GPT-5.4, or Claude Opus 4.6. Beating a smaller OpenAI model is a meaningful achievement for a 33B parameter open-weight model. Framing it alongside Claude 4.5 Sonnet on STEM - where EXAONE 4.5 scores 77.3 against Sonnet's 74.6 - is accurate but selective. The missing columns are where the frontier models pull ahead.

Independent evaluations on LMSYS Chatbot Arena, our coding benchmarks leaderboard, and third-party agent evals haven't yet landed for this release. Until they do, the benchmark table represents LG's best case.

Knowledge Cutoff Is December 2024

The training data knowledge cutoff is December 2024, which means the model is already 16 months behind real-world events. For document analysis on historical contracts and technical drawings, this doesn't matter much. For anything requiring current knowledge, the cutoff is a practical constraint.

Hardware Isn't Cheap

A single H200 costs roughly $30,000 to $40,000 on the secondary market. Four A100-40GBs run $8,000 to $12,000 each. This is not a model that runs on consumer hardware. The "open weight" framing suggests accessibility - the actual infrastructure requirements suggest otherwise.

EXAONE 4.5 is a truly capable multimodal model from a lab that rarely gets coverage outside Korea. The STEM benchmark numbers are real, the architecture choices are defensible, and the 262K context window is useful for the document-heavy use cases LG built it for. The non-commercial license is the honest limiting factor. Research teams assessing vision-language models for document processing have a new option. Production teams don't, unless they negotiate with LG directly.

Sources: