LeRobot v0.5.0: First Humanoid, 10x Training Speed

Hugging Face ships its largest LeRobot update yet: Unitree G1 humanoid support, Pi0-FAST VLA, Real-Time Chunking, 10x faster image training, and PEFT/LoRA fine-tuning for large robot policies.

Hugging Face merged 200+ PRs and brought on 50+ new contributors for LeRobot v0.5.0, released March 9. The headline is the first humanoid integration - the Unitree G1 - but the release does considerably more than add one robot to the supported list. Training throughput, inference latency, fine-tuning ergonomics, and simulation infrastructure all moved forward in a single version.

TL;DR

- LeRobot v0.5.0 ships the first humanoid: full Unitree G1 support (both 23 and 29 DoF variants) with teleoperation, loco-manipulation, and MuJoCo sim

- Pi0-FAST brings autoregressive VLA action prediction that trains 5x faster than diffusion-based Pi0, plus 10x compression of action tokens via DCT + BPE

- Real-Time Chunking eliminates chunk-boundary pauses with async generation and a flow-matching guidance term

- Image training runs 10x faster after fixing data pipeline bottlenecks; video encoding is 3x faster with parallel encoding

- PEFT/LoRA fine-tuning added for SmolVLA, Pi0, and other large VLAs - requires just pip install lerobot[peft]

- LeRobot paper accepted as an ICLR 2026 poster

Unitree G1 - First Humanoid in the Project

LeRobot has supported tabletop arms and mobile bases since early versions, but humanoids have been out of scope. That changes with v0.5.0. The Unitree G1 - available in 23 and 29 degree-of-freedom configurations - is now a first-class platform in the framework.

What "support" actually means here is worth unpacking. This isn't just a driver shim. The integration covers:

- Teleoperation via the Homunculus Exoskeleton, an open-source 7 DoF whole-body control rig

- Loco-manipulation - coordinated locomotion plus arm control, using either the open-source HolosomaLocomotionController or NVIDIA's GR00T-WholeBodyControl

- MuJoCo simulation with accurate G1 dynamics for training without hardware

- Dataset recording and policy training directly in the LeRobot pipeline

- Real-time inference with RTC support for sub-100ms chunk transitions (more on this below)

The recommended training config pairs Pi0.5 with bfloat16 precision and gradient checkpointing. For deployment, --rtc.enabled=true is the default recommendation.

A robotic arm setup - the kind of hardware LeRobot v0.5.0 now supports at the humanoid level, with teleoperation, sim-to-real transfer, and on-device inference.

Source: unsplash.com

The G1 also matters in the wider humanoid market. It's a commercially available platform in the $20K range, which puts it within reach of academic labs and well-funded open-source projects. Pairing it with a free, Apache-licensed training framework meaningfully lowers the barrier to entry.

Pi0-FAST and Real-Time Chunking

Pi0-FAST: autoregressive action prediction

Pi0-FAST replaces diffusion-based flow matching with autoregressive next-token prediction. The backbone is PaliGemma (SigLIP vision tower + Gemma 2B language model), and the pre-trained base is lerobot/pi0fast-base on the Hub.

The key technical contribution is the FAST action tokenizer (lerobot/fast-action-tokenizer). The pipeline:

- Normalize an action chunk of shape (H, D) using quantile normalization

- Apply a Discrete Cosine Transform per action dimension (the same transform JPEG uses)

- Quantize and remove insignificant DCT coefficients

- Flatten to 1D with low-frequency components first

- Apply Byte Pair Encoding over the DCT coefficients

The result is 10x compression of action tokens compared to prior tokenization methods, trained on 1M+ real robot sequences. Inference uses KV-caching. Wall-clock training speed versus the original diffusion-based Pi0: 5x faster.
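The first four steps of that pipeline can be sketched in a few lines of NumPy. This is a minimal illustration, not the code behind lerobot/fast-action-tokenizer: the function names `dct_matrix` and `encode_chunk`, the `scale` parameter, and the 1st/99th percentile bounds are assumptions made for the example. It also normalizes with per-chunk statistics for brevity, where a real pipeline would presumably use dataset-level statistics, and it stops before the BPE step.

```python
import numpy as np


def dct_matrix(n: int) -> np.ndarray:
    """Orthonormal DCT-II basis; row k holds the k-th frequency."""
    k = np.arange(n)[:, None]
    t = np.arange(n)[None, :]
    basis = np.cos(np.pi * (2 * t + 1) * k / (2 * n))
    basis[0] *= 1.0 / np.sqrt(2.0)
    return basis * np.sqrt(2.0 / n)


def encode_chunk(actions: np.ndarray, scale: float = 10.0) -> np.ndarray:
    """Map an (H, D) action chunk to a 1D integer sequence (pre-BPE)."""
    # 1. Quantile normalization: map the 1st..99th percentile to [-1, 1].
    lo = np.quantile(actions, 0.01, axis=0)
    hi = np.quantile(actions, 0.99, axis=0)
    normed = 2.0 * (actions - lo) / np.maximum(hi - lo, 1e-8) - 1.0
    # 2. DCT along the time axis, independently per action dimension.
    coeffs = dct_matrix(actions.shape[0]) @ normed  # shape (H, D)
    # 3. Scale and round; small (mostly high-frequency) coefficients become 0.
    quantized = np.round(coeffs * scale).astype(np.int64)
    # 4. Flatten row-major, so low-frequency rows come first.
    return quantized.reshape(-1)


# Smooth toy trajectory: H=50 timesteps, D=2 action dimensions.
t = np.linspace(0.0, 1.0, 50)
chunk = np.stack([np.sin(2 * np.pi * t), t ** 2], axis=1)
tokens = encode_chunk(chunk)
print(tokens.shape, np.count_nonzero(tokens))
```

For smooth trajectories most high-frequency coefficients round to zero, which is what makes the sequence compressible by a later BPE pass.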

On the LIBERO benchmark (finetuned from pi0fast-base, 40k steps, 8x H100s, batch 256):

| LIBERO Task | Pi0-FAST Score |
|---|---|
| Spatial | 70.0 |
| Object | 100.0 |
| Goal | 100.0 |
| LIBERO-10 | 60.0 |
| Average | 82.5 |

Real-Time Chunking: removing the pause

Large flow-matching policies produce action chunks of 50+ steps. If inference takes longer than a chunk's execution window, the robot stalls waiting for the next chunk. Real-Time Chunking (RTC), ported from Physical Intelligence's research, solves this with async generation.

RTC asynchronously produces the next chunk while the robot executes the current one. It adds a guidance term to the flow-matching denoising process that forces the new chunk's overlapping timesteps to stay close to what the robot actually executed - keeping motion smooth across boundaries.
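To see why overlapping inference with execution removes the pause, here is a toy timing model. It is only a sketch: `idle_ticks` and its parameters are hypothetical names, time is counted in control ticks, and the flow-matching guidance term is not modeled at all. It only counts how often the robot has nothing to execute.

```python
def idle_ticks(total_ticks: int, chunk_len: int, latency: int, start_ahead: int) -> int:
    """Count control ticks on which no action is available.

    start_ahead is the queue length at which the next chunk is requested:
    0 mimics a synchronous loop, `latency` mimics overlapped generation.
    """
    queued = 0        # actions ready to execute
    countdown = None  # ticks until the in-flight chunk arrives, None if idle
    moved = 0         # actions executed while waiting on the in-flight chunk
    idle = 0
    for _ in range(total_ticks):
        # Request a new chunk once the queue is short enough.
        if countdown is None and queued <= start_ahead:
            countdown, moved = latency, 0
        # Deliver the chunk once inference has finished.
        if countdown == 0:
            # The chunk was planned from the request-time state, so its first
            # `moved` actions are already in the past and are skipped.
            queued = chunk_len - moved
            countdown = None
        # Execute one action if available, otherwise stall.
        if queued > 0:
            queued -= 1
            if countdown is not None:
                moved += 1
        else:
            idle += 1
        if countdown is not None:
            countdown -= 1
    return idle


sync_idle = idle_ticks(total_ticks=550, chunk_len=50, latency=5, start_ahead=0)
async_idle = idle_ticks(total_ticks=550, chunk_len=50, latency=5, start_ahead=5)
print(sync_idle, async_idle)
```

In this toy setup the synchronous loop stalls for 5 ticks before every chunk (50 ticks in total), while the overlapped loop stalls only during the initial 5-tick warm-up. The cost of overlapping is that the skipped prefix has to agree with what was actually executed, which is the job of the guidance term described above.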

Key config parameters:

    rtc_config:
      enabled: true
      execution_horizon: 10 # steps of overlap kept consistent with the previous chunk
      max_guidance_weight: 10.0 # works well for SmolVLA, Pi0, Pi0.5
      prefix_attention_schedule: EXP # exponential decay (recommended)

RTC is compatible with Pi0, Pi0.5, SmolVLA, and Diffusion policies. No reinstall needed beyond the base policy dependencies.

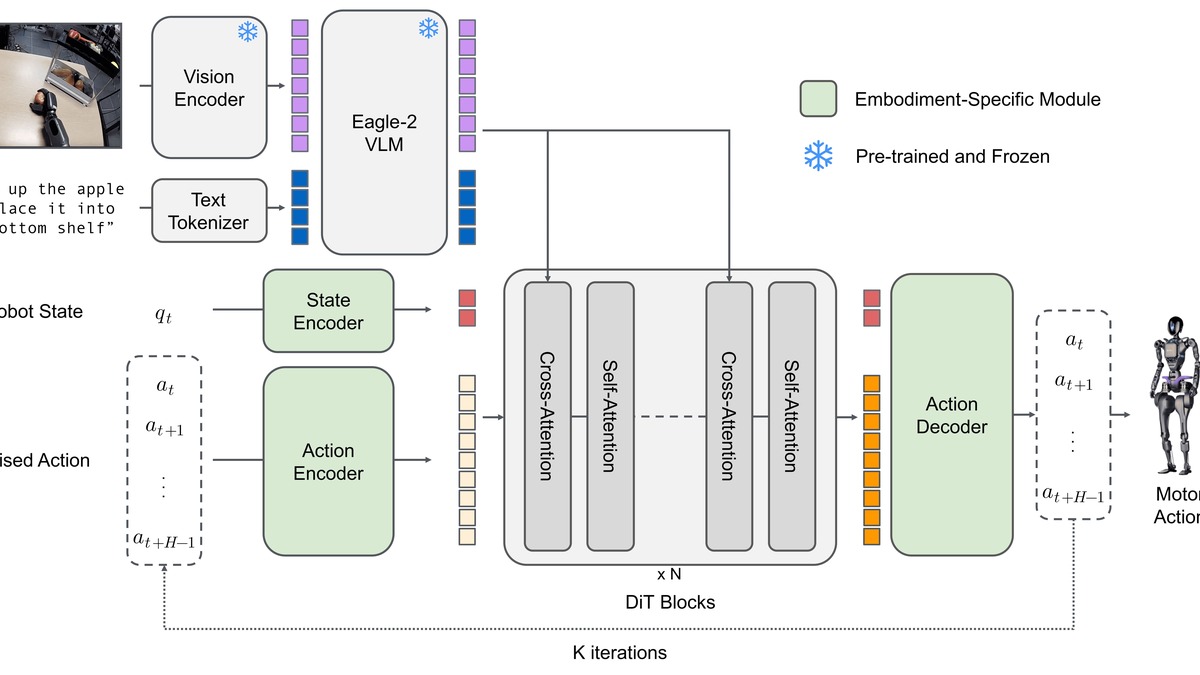

The Vision-Language-Action (VLA) architecture that underpins LeRobot's large policy models, including Pi0-FAST and SmolVLA.

Source: github.com/huggingface/lerobot

Training Infrastructure Gains

10x faster image training

The 10x speedup in image training comes from fixing long-standing data pipeline bottlenecks rather than any architectural change. Parallel encoding is now the default across all platforms, and image transforms have been reworked. Video encoding is 3x faster, and streaming video encoding now has zero wait between episodes - previously there was a post-episode pause while encoding completed.

These aren't incremental gains. A pipeline that spent 90% of its time blocked on I/O between environment steps is qualitatively different from one that doesn't.
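The link between a 90% stall and a 10x speedup is simple arithmetic: if a fraction f of wall-clock time is spent waiting and that wait is removed entirely, the remaining work takes 1 - f of the original time. A small sketch, with the hypothetical helper name `speedup_from_removing_stall` and the 90% figure taken as an illustrative value rather than a measured profile:

```python
def speedup_from_removing_stall(stall_fraction: float) -> float:
    """Overall speedup if the stalled share of wall-clock time drops to zero."""
    if not 0.0 <= stall_fraction < 1.0:
        raise ValueError("stall_fraction must be in [0, 1)")
    return 1.0 / (1.0 - stall_fraction)


for f in (0.5, 0.8, 0.9):
    print(f"{f:.0%} stalled -> {speedup_from_removing_stall(f):.1f}x")
```

The curve is steep near the top: removing a 50% stall only doubles throughput, while removing a 90% stall gives roughly 10x.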

EnvHub and GPU-accelerated simulation

EnvHub lets you load simulation environments directly from the Hugging Face Hub:

    python lerobot/scripts/train.py \
      --env.type=hub \
      --env.hub_path="username/my-custom-env" \
      --policy.type=act

The matching NVIDIA IsaacLab-Arena integration brings GPU-accelerated parallel simulation into the pipeline. Running thousands of environment instances simultaneously on a single GPU - the standard RL training setup - is now native to LeRobot rather than requiring external orchestration glue.

This matters for anyone doing reinforcement learning training with LeRobot. The gap between "download a pretrained policy and fine-tune" and "train from scratch with RL" just got smaller.

PEFT/LoRA fine-tuning

Large VLAs like Pi0 and SmolVLA have been expensive to fine-tune on custom robot tasks. v0.5.0 adds LoRA support via Hugging Face PEFT:

    # Quoting the extra keeps shells such as zsh from treating [peft] as a glob
    pip install "lerobot[peft]"

    python lerobot/scripts/train.py \
      --policy.type=smolvla \
      --peft.method_type=LORA \
      --peft.r=64 \
      --training.lr=1e-3

The recommended learning rate with LoRA is 10x higher than full fine-tuning. Default target layers for SmolVLA cover q_proj, v_proj, plus the action-specific projection layers. You can override with --peft.target_modules or add full fine-tuning to specific layers with --peft.full_training_modules.
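For readers new to LoRA, the idea behind the rank setting can be shown without any framework. The sketch below is plain NumPy and does not use PEFT or LeRobot code. The layer size (1024 x 1024), the `alpha` scaling value, and the name `lora_forward` are illustrative assumptions; only r=64 comes from the command above.

```python
import numpy as np

rng = np.random.default_rng(0)
d_in, d_out, r, alpha = 1024, 1024, 64, 64  # d_in, d_out, alpha are illustrative

W = rng.standard_normal((d_out, d_in)) * 0.02  # frozen pretrained weight
A = rng.standard_normal((r, d_in)) * 0.01      # trainable, small random init
B = np.zeros((d_out, r))                       # trainable, zero init


def lora_forward(x: np.ndarray) -> np.ndarray:
    """y = x W^T + (alpha / r) * x A^T B^T; only A and B would be trained."""
    return x @ W.T + (alpha / r) * (x @ A.T) @ B.T


x = rng.standard_normal((4, d_in))
full_params = W.size
lora_params = A.size + B.size
print(f"trainable: {lora_params:,} of {full_params:,} ({lora_params / full_params:.1%})")
```

Because B starts at zero, the adapted layer initially reproduces the frozen layer exactly, and in this example training touches 12.5% as many parameters as the full weight matrix for that layer.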

Compatibility Table

| Component | Requirement |
|---|---|
| Python | 3.12+ (hard minimum) |
| Transformers | v5 |
| Unitree G1 | CycloneDDS v0.10.2, Unitree SDK |
| PEFT/LoRA | pip install lerobot[peft] |
| Pi0-FAST | pip install lerobot[pi] |
| SmolVLA | pip install lerobot[smolvla] |
| RTC | Included with base policy install |
| IsaacLab-Arena | NVIDIA Isaac Sim required |

Where It Falls Short

The Python 3.12 hard minimum will break CI pipelines still running 3.10 or 3.11. The Transformers v5 requirement similarly creates dependency conflicts with projects pinned to v4.x.
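One way to surface the interpreter mismatch early, before a long dependency install fails halfway, is a small pre-flight check at the top of a CI job. This is a generic sketch, not part of LeRobot; `MIN_PYTHON` and `meets_minimum` are hypothetical names, and the 3.12 floor is taken from the compatibility table above.

```python
import sys

MIN_PYTHON = (3, 12)  # minimum listed for LeRobot v0.5.0 in the table above


def meets_minimum(version: tuple = tuple(sys.version_info[:2])) -> bool:
    """Return True if the given (major, minor) version is new enough."""
    return tuple(version[:2]) >= MIN_PYTHON


if not meets_minimum():
    print(
        f"Python {sys.version_info.major}.{sys.version_info.minor} is below "
        f"{MIN_PYTHON[0]}.{MIN_PYTHON[1]}; upgrade the CI image first."
    )
```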

The Unitree G1 integration is impressive in scope, but the locomotion controllers (HolosomaLocomotionController and GR00T-WholeBodyControl) add their own dependency chains. Getting the full stack running on new hardware is non-trivial. The docs are detailed but assume familiarity with CycloneDDS networking and the Unitree SDK.

LIBERO benchmark scores for Pi0-FAST look strong on average (82.5), but the 60.0 on LIBERO-10 - the most compositional task - is noticeably weaker. Autoregressive action generation buys training speed at some cost to long-horizon generalization relative to flow-matching approaches.

The NVIDIA Alpamayo robotics model demonstrated what a well-resourced robotics compute pipeline looks like. LeRobot is playing a different game - open, community-driven, hardware-agnostic - but the simulation infrastructure gap remains real for groups that need serious parallel training throughput without Isaac Sim licenses.

The ICLR 2026 paper acceptance gives LeRobot academic legitimacy it didn't have before. The practical news is that v0.5.0 ships enough infrastructure improvements to make the jump from "interesting demo framework" to "production training pipeline" for labs working on robot learning. Whether the humanoid support turns into a serious research track depends on how many G1 owners are also LeRobot users - and the install docs suggest that number is growing.
