Kimi K2.6 - Open Weights, 300 Agents, Top Coding Score

Moonshot AI releases Kimi K2.6 under Modified MIT with open weights on HuggingFace, 300-agent swarm execution, and the highest SWE-Bench Pro score among open models.

Key Specs

| Spec | Value |

|---|---|

| Total Parameters | 1T (MoE) |

| Active Parameters | 32B per token |

| Context Window | 256K tokens |

| Agent Swarm | 300 sub-agents / 4,000 steps |

| SWE-Bench Pro | 58.6% |

| License | Modified MIT |

| Weights | HuggingFace (moonshotai/Kimi-K2.6) |

Moonshot AI released Kimi K2.6 on April 20 with open weights on HuggingFace under a Modified MIT license. The model scores 58.6% on SWE-Bench Pro - ahead of GPT-5.4 at 57.7% and Claude Opus 4.6 at 53.4% - and extends the agent swarm capacity from 100 to 300 simultaneous sub-agents. For developers who need long-horizon autonomous coding and can run or rent a 1T MoE model, this is the most capable open-weight option on the market.

Architecture

K2.6 shares the same base architecture as K2.5: 1 trillion total parameters in a Mixture-of-Experts layout, with only 32 billion activated per forward pass. That ratio gives it frontier-class quality while keeping per-token compute manageable in a multi-tenant serving environment.

Attention and Experts

The model runs 61 layers (including one dense layer), with Multi-head Latent Attention (MLA) on a 7168-dimension hidden state. The expert layer has 384 total experts; 8 are selected per token via a top-k router, with one shared expert that always fires. Vocabulary is 160K tokens, which handles code, multilingual text, and technical symbols without excessive tokenization fragmentation.

Vision

A 400M-parameter MoonViT encoder handles images and video frames. The model can take a screenshot, wireframe, or video clip as input and produce code, which is what enables the "coding-driven design" use case - converting a design mockup into a working React component without a separate vision model in the pipeline.

Kimi Code K2.6 integrated into VS Code via the Agent Client Protocol, showing a multi-step task execution.

Source: github.com/MoonshotAI/kimi-cli

Kimi Code K2.6 integrated into VS Code via the Agent Client Protocol, showing a multi-step task execution.

Source: github.com/MoonshotAI/kimi-cli

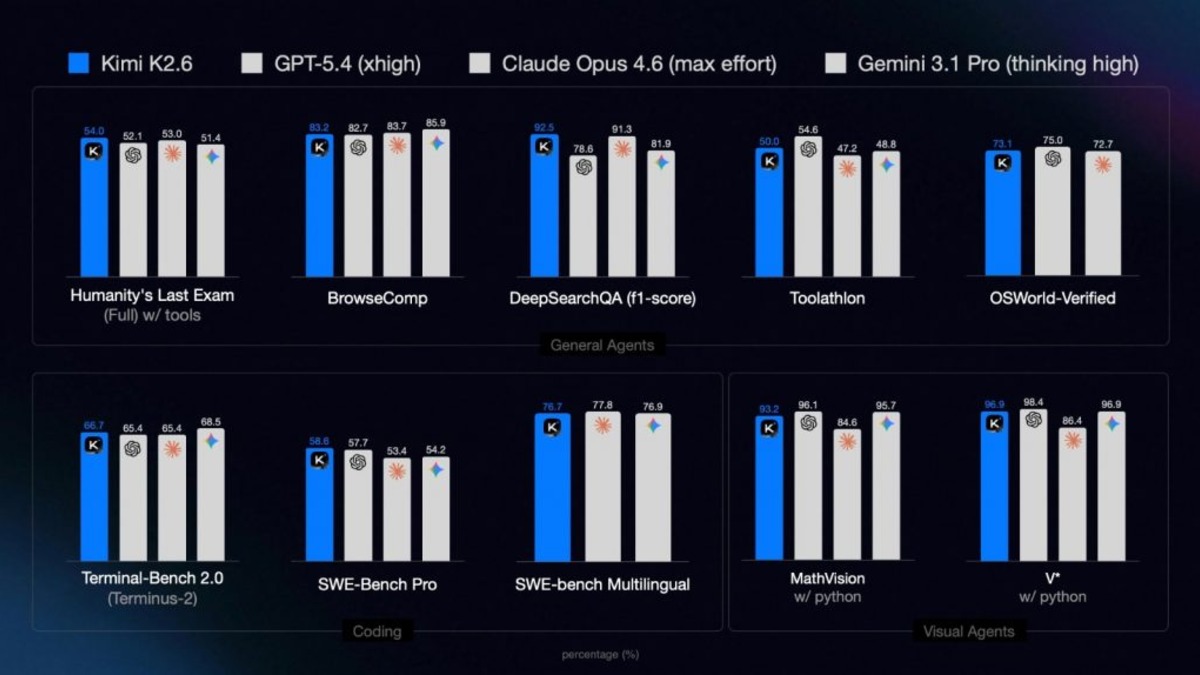

Benchmark Performance

K2.6 leads on the benchmarks most relevant to production coding work. The improvements over K2.5 aren't cosmetic.

| Benchmark | Kimi K2.6 | GPT-5.4 | Claude Opus 4.6 | Gemini 3.1 Pro |

|---|---|---|---|---|

| SWE-Bench Pro | 58.6% | 57.7% | 53.4% | n/a |

| SWE-Bench Verified | 80.2% | n/a | 80.8% | n/a |

| Terminal-Bench 2.0 | 66.7% | n/a | n/a | 68.5% |

| HLE with Tools | 54.0 | 52.1 | 53.0 | n/a |

| Toolathlon | 50.0 | n/a | 47.2 | 48.8 |

| DeepSearchQA (F1) | 92.5 | n/a | 91.3 | n/a |

| BrowseComp | 83.2 | n/a | n/a | n/a |

| LiveCodeBench v6 | 89.6 | n/a | n/a | n/a |

The SWE-Bench Pro gap over Claude Opus 4.6 is substantial at 5 points. The lead over GPT-5.4 is narrower - less than one full point - so calling it definitively ahead on that metric requires caution. Toolathlon, which measures real agentic tool-use rather than solved coding problems, shows a cleaner margin over both Claude and Gemini.

For context on what SWE-Bench Pro actually measures, our earlier coverage of the benchmark's maintainer merge-rate methodology is worth reading before treating these numbers as gospel.

Kimi K2.6 benchmark comparisons across coding, agentic, and reasoning tasks versus frontier closed-weight models.

Source: officechai.com

Kimi K2.6 benchmark comparisons across coding, agentic, and reasoning tasks versus frontier closed-weight models.

Source: officechai.com

Improvements over K2.5

| Metric | K2.5 | K2.6 | Change |

|---|---|---|---|

| Agent Swarm Size | 100 | 300 | +200% |

| Coordinated Steps | 1,500 | 4,000 | +167% |

| SWE-Bench Pro | 50.7% | 58.6% | +7.9pp |

| Claw Eval (pass@3) | 75.4% | 80.9% | +5.5pp |

| HLE with Tools | 50.2 | 54.0 | +3.8 |

| BrowseComp | 74.9 | 83.2 | +8.3 |

The Agent Swarm

The swarm capability is where K2.6 departs most visibly from standard model updates. Moonshot ships a task orchestration layer that distributes work across up to 300 independent sub-agents, each running its own tool-call chain of up to 4,000 steps. The system coordinates heterogeneous skills - one sub-agent handles flame graph analysis, another rewrites the hot path, a third runs benchmarks and reports back.

Two real-world examples from the blog post give a sense of scale. In the first, K2.6 optimized a Qwen3.5 inference implementation in Zig over 12 continuous hours, making roughly 4,000 tool calls and ending up 20% faster than the LM Studio baseline. In the second, it analyzed a Java financial matching engine, refactored the thread topology based on flame graph data, and reached 185% throughput improvement - from 0.43 to 1.24 million trades per second.

These are Moonshot's own results. Independent verification takes time.

Claw Groups

A new feature called Claw Groups lets humans and agents share work at runtime. A developer can take over a specific subtask mid-execution, hand it back, or redirect a sub-agent without stopping the swarm. This addresses one of the practical problems with pure autonomous agents: the inability to course-correct without killing the whole job.

Deployment

K2.6 runs on vLLM, SGLang, and KTransformers. The HuggingFace model card requires transformers>=4.57.1 and supports the standard thinking toggle for switching between extended reasoning and instant-response modes. API access is OpenAI and Anthropic SDK compatible - no client changes needed to point an existing app at Kimi's endpoint.

import openai

client = openai.OpenAI(

api_key="your-api-key",

base_url="https://api.moonshot.ai/v1"

)

response = client.chat.completions.create(

model="kimi-k2.6",

messages=[{"role": "user", "content": "Refactor this function for throughput..."}],

max_tokens=4096,

extra_body={"thinking": {"type": "enabled"}}

)

Native INT4 quantization is supported using the same method as Kimi-K2-Thinking, which cuts VRAM requirements substantially but requires a compatible inference stack.

License Strings

The Modified MIT license allows commercial use with one exception: companies that exceed 100 million monthly active users or $20 million in monthly revenue must display a visible "Kimi K2.6" credit in their user interface. Smaller deployments have no such requirement. This is the same structure as K2.5, and it's the clause that caused friction with Cursor last quarter when Cursor deployed K2.5 without crediting it.

What To Watch

The SWE-Bench Pro score is real, but two caveats are worth holding onto.

First, the lead over GPT-5.4 is 0.9 percentage points - within the noise of evaluation variance. The lead over Claude Opus 4.6 at 5 points is more durable, but Claude Opus 4.6 is not Anthropic's latest effort on coding benchmarks.

Second, running a 1T MoE model isn't something most teams can do on their own hardware. The practical deployment is either the Kimi API or a well-resourced cloud setup with multi-node vLLM. The "open weights" story is real for organizations with A100 or H100 clusters, but for everyone else this is API access with a known model architecture - which is still better than a fully closed system.

Moonshot also continues to iterate quickly. The gap between K2.5 and K2.6 closed in roughly two months. If that pace holds, whatever benchmark position K2.6 occupies today may look different by June. For teams assessing long-horizon coding agents, the Qwen3.6 35B-A3B is worth benchmarking with K2.6 - it runs on far less hardware, and recent scores on SWE-bench Verified put it within range of models that cost ten times more to serve.

K2.6's release puts a second open-weight model in the same week - Alibaba also dropped Qwen3.6-Max-Preview on April 20 with its own frontier-class benchmark claims. The competition on open coding agents is now truly multi-sided, and the gap between open and closed weights on production coding tasks has nearly closed.

Sources:

Last updated