Karpathy's Autoresearch Runs 100 ML Experiments Overnight

Andrej Karpathy open-sourced autoresearch, a 630-line MIT-licensed Python tool that runs up to 100 autonomous ML experiments overnight on a single GPU, no PhD required.

Andrej Karpathy quietly dropped autoresearch last week - a 630-line, MIT-licensed Python script that turns a single GPU into an autonomous ML research loop. You write a Markdown file describing what you want the model to explore. The agent rewrites the training code, runs experiments, keeps whatever improves, and discards the rest. By morning you have a tighter model and a Git log full of validated changes.

It's already past 20,900 GitHub stars, and the forks are piling up fast.

TL;DR

- 630 lines of Python, single GPU, MIT license - no distributed training, no complex config

- Agent autonomously edits train.py, runs 5-minute training windows, commits only improvements

- Tracks val_bpb metric; one overnight run dropped it from 0.9979 to 0.9697 across 126 experiments

- Shopify CEO Tobi Lutke adapted it for internal use and reported a 19% validation improvement

- Current limitation: single-threaded, synchronous - Karpathy has flagged this as the next frontier to solve

How the Experiment Loop Works

The design is deliberately minimal. Three files do almost all the work.

The Control Flow

At each iteration, the agent reads program.md (your instructions), the current train.py, and the history in results.tsv. It proposes a code edit to train.py, runs training for exactly five minutes, and measures val_bpb - validation bits per byte, a loss metric that's independent of vocabulary size. If the score improves, the change gets committed to Git. If it doesn't, it gets rolled back. No human in the loop.
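The keep-or-roll-back iteration described above can be sketched in a few lines. This is a minimal illustration, not the repository's code: `LoopState`, `run_loop`, `propose`, and `evaluate` are hypothetical names, the agent's edit and the five-minute training run are stood in for by plain callables, and the Git commit and rollback are reduced to keeping or dropping a string.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

# (candidate code, measured val_bpb, whether it was kept)
Record = Tuple[str, float, bool]


@dataclass
class LoopState:
    """Hypothetical container for the accepted code and its score."""
    code: str
    best_bpb: float
    history: List[Record] = field(default_factory=list)


def run_loop(
    state: LoopState,
    propose: Callable[[str, List[Record]], str],  # stands in for the agent's edit
    evaluate: Callable[[str], float],             # stands in for one training window
    n_experiments: int,
) -> LoopState:
    for _ in range(n_experiments):
        candidate = propose(state.code, state.history)
        bpb = evaluate(candidate)
        improved = bpb < state.best_bpb           # lower val_bpb is better
        state.history.append((candidate, bpb, improved))
        if improved:
            # Analogous to committing the change.
            state.code, state.best_bpb = candidate, bpb
        # Otherwise the candidate is simply dropped, analogous to a rollback.
    return state


# Toy run with scripted scores instead of real training.
scripted = iter([0.99, 1.02, 0.98])
final = run_loop(
    LoopState(code="base", best_bpb=1.00),
    propose=lambda code, hist: f"{code}+{len(hist)}",
    evaluate=lambda code: next(scripted),
    n_experiments=3,
)
print(final.code, final.best_bpb)
```

Because a rejected candidate never becomes the base for the next proposal, every accepted change is measured against the best code found so far.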

At roughly 12 experiments per hour, a full overnight run produces around 100 attempts. In Karpathy's first published 83-run session, 15 changes survived - an 18% success rate.
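The throughput and success-rate figures follow from simple arithmetic, shown here as a quick check. The sketch assumes the five-minute window is the only cost per experiment and ignores any time the agent spends proposing edits, so real throughput would be somewhat lower.

```python
WINDOW_MINUTES = 5                                 # fixed training window per experiment

experiments_per_hour = 60 // WINDOW_MINUTES        # 12
hours_for_100 = 100 / experiments_per_hour         # a bit over 8 hours

# 15 surviving changes out of 83 runs in the session cited above.
success_rate = 15 / 83

print(experiments_per_hour, round(hours_for_100, 1), f"{success_rate:.0%}")
```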

What the Agent Can Touch

The architecture separates human-authored code from AI-authored code cleanly. prepare.py handles data prep, BPE tokenizer training, and evaluation utilities - the agent can't modify it. train.py contains the GPT-style model definition, optimizer, and training loop, and that's the only file the agent rewrites. Everything else is off-limits.
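One way to picture that boundary is a check that rejects any change set touching files other than train.py. The article does not say whether the repository enforces the rule in code or only through the instructions given to the agent, so `EDITABLE` and `disallowed_paths` below are hypothetical names for an illustrative guard.

```python
from typing import Iterable, List

# Per the description above, train.py is the only file the agent rewrites.
EDITABLE = {"train.py"}


def disallowed_paths(changed: Iterable[str]) -> List[str]:
    """Return the changed paths that fall outside the editable set."""
    return sorted(p for p in changed if p not in EDITABLE)


print(disallowed_paths(["train.py"]))
print(disallowed_paths(["train.py", "prepare.py"]))
```

A loop could call a check like this before running an experiment and discard any proposal for which the returned list is non-empty.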

Parameters the agent normally tunes include model depth (DEPTH), sequence length, attention window patterns (WINDOW_PATTERN), learning rate schedules, warmup steps, optimizer blends between Muon and AdamW, and weight decay schedules.
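To make two of those knobs concrete, here is a generic linear-warmup plus cosine-decay learning rate schedule. It is a common textbook form, not the schedule in train.py; the function name `lr_at` and all of its parameters are assumptions made for illustration.

```python
import math


def lr_at(step: int, base_lr: float, warmup_steps: int,
          total_steps: int, min_lr: float = 0.0) -> float:
    """Learning rate at a given step: linear warmup, then cosine decay."""
    if warmup_steps > 0 and step < warmup_steps:
        # Ramp linearly up to base_lr over the warmup steps.
        return base_lr * (step + 1) / warmup_steps
    # Fraction of the decay phase completed, clamped to [0, 1].
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    progress = min(1.0, max(0.0, progress))
    return min_lr + 0.5 * (base_lr - min_lr) * (1.0 + math.cos(math.pi * progress))


for step in (0, 9, 10, 55, 100):
    print(step, round(lr_at(step, base_lr=1.0, warmup_steps=10, total_steps=100), 3))
```

Changing `warmup_steps` or the shape of the decay is the kind of small, self-contained edit that fits in one five-minute experiment.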

Setup

Installation uses uv, the fast Python package manager. Dependencies are minimal - PyTorch plus a few utilities.

```shell
# Clone and install
git clone https://github.com/karpathy/autoresearch
cd autoresearch
uv sync

# Prepare data (BPE tokenizer + TinyStories dataset)
python prepare.py

# Start the autonomous experiment loop
python autorun.py
```

The agent reads program.md from the repo root. Edit that file to steer experiments - target architecture size, dataset, constraints on what to explore. The agent then decides how to get there.

Andrej Karpathy released autoresearch as an MIT-licensed single-file Python tool, describing it as "nanochat LLM training core stripped down to a single-GPU, one file version."

Source: commons.wikimedia.org

What Gets Discovered Overnight

The improvements the agent finds aren't trivial config tweaks. In documented runs, it discovered issues like missing scalar multipliers in attention mechanisms, misconfigured AdamW betas, suboptimal weight decay schedules, and value embedding regularization problems. It also found more mundane wins like halving batch sizes and adjusting model aspect ratios (depth 9, dimension 512 instead of depth 8, dimension 512).

Numbers from the First Public Run

The most complete published run spanned 126 experiments in a single overnight session. The starting val_bpb of 0.9979 dropped to 0.9697 by morning. In a longer two-day depth-12 run Karpathy shared afterward, the agent processed roughly 700 changes and found about 20 that improved validation loss - and all 20 transferred cleanly when applied to a larger depth-24 model.
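For scale, the sketch below converts a summed cross-entropy into bits per byte using the standard definition, then works out how large the reported drop and hit rate are. The article does not show the repository's evaluation code, so `bits_per_byte` is an assumed helper, and the 20-of-700 figure is approximate in the source.

```python
import math


def bits_per_byte(total_nll_nats: float, total_bytes: int) -> float:
    """Summed negative log-likelihood (in nats) converted to bits per byte of text."""
    return total_nll_nats / (math.log(2) * total_bytes)


start_bpb, end_bpb = 0.9979, 0.9697        # overnight run, 126 experiments

absolute_drop = start_bpb - end_bpb        # about 0.0282 bits per byte
relative_drop = absolute_drop / start_bpb  # a bit under 3%

hit_rate = 20 / 700                        # two-day run, approximate counts

print(f"{absolute_drop:.4f} {relative_drop:.1%} {hit_rate:.1%}")
```

Dividing by bytes rather than tokens is what makes the metric independent of vocabulary size: a tokenizer that packs more bytes into each token does not change the denominator.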

That last part matters. The improvements aren't overfitted to a toy training run - they generalize up.

The progress chart included in the autoresearch repo tracks validation scores across automated runs, showing how the agent builds up improvements over an overnight session.

Source: github.com/karpathy/autoresearch

Requirements and Compatibility

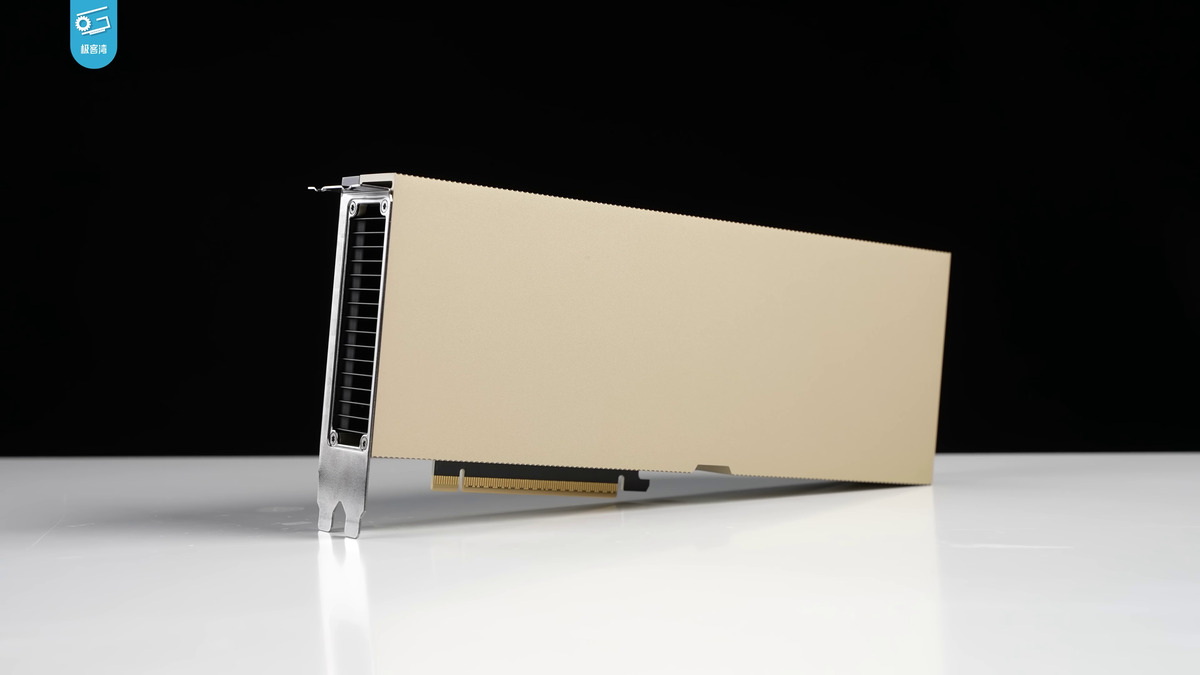

The tool was built and tested on a single H100. That's the reference hardware, but community forks have since expanded the reach considerably.

| Requirement | Details |
|---|---|
| GPU | Single NVIDIA GPU; H100 tested by Karpathy |
| Python | 3.10+ |
| Package manager | uv (fast, replaces pip) |
| Framework | PyTorch |
| Dataset | TinyStories or TinyShakespeare (included) |
| Distributed training | Not supported by design |
| License | MIT |

Three community forks appeared within days of the release: miolini/autoresearch-macos (Apple Silicon / MLX), trevin-creator/autoresearch-mlx (another MLX variant), and jsegov/autoresearch-win-rtx (Windows / RTX GPUs). None of these are official, but their existence suggests the tool runs on consumer hardware with some adaptation.

Shopify CEO Tobi Lutke adapted the framework for an internal query-expansion model project and reported a 19% improvement in validation scores - with the agent-optimized smaller model outperforming a larger model configured through standard manual methods. That's one data point, not a controlled study, but it's a real-world application that goes beyond toy datasets.

The H100 is the reference GPU for autoresearch, but community forks for Apple Silicon and consumer RTX hardware are already active.

Source: commons.wikimedia.org

What the Community Built With It

The GitHub Discussions thread is active. Y Combinator president Garry Tan described the project simply: "The bottleneck isn't compute. It's your program.md." That's a fair summary of the design philosophy - the agent handles the search, the human steers it with plain English.

Karpathy has been clear that the current codebase is a proof of concept. In a follow-up post on X, he described the next step: "autoresearch has to be asynchronously massively collaborative for agents (think: SETI@home style). The goal is not to emulate a single PhD student, it's to emulate a research community of them."

That vision isn't in the current release. Right now it's a single thread.

The project fits into a broader pattern of autonomous AI agents being applied to software development workflows - similar to how reinforcement learning is being used in personal AI systems to self-improve on specific tasks. The underlying idea, that an agent iterating on its own code beats manual hyperparameter search, has been validated by distillation and fine-tuning research for a while. Autoresearch makes that loop explicit, cheap, and inspectable.

Where It Falls Short

The model scope is narrow by design. Autoresearch runs GPT-style architectures on TinyStories and TinyShakespeare. It's not set up to train on arbitrary datasets, and it doesn't support distributed training. If you want to run it on a 70B model across 64 GPUs, this isn't your tool.

The synchronous loop is the more fundamental constraint. Each experiment waits for the previous one to finish before starting the next. There's no parallelism across ideas, and no way to explore multiple branches of train.py simultaneously. Karpathy's SETI@home framing - swarms of agents collaborating asynchronously on shared state - would require an entirely different coordination layer.
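The difference between the two modes can be shown with a toy comparison. This is not a design from the repository or from Karpathy's posts: the function names are hypothetical, `evaluate` stands in for a training run, and threads stand in for what would really be separate GPUs or machines with a coordination layer on top.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Sequence, Tuple

Result = Tuple[str, float]  # (candidate, val_bpb)


def evaluate_sequential(candidates: Sequence[str],
                        evaluate: Callable[[str], float]) -> List[Result]:
    """Current behavior: each candidate waits for the previous one to finish."""
    return [(c, evaluate(c)) for c in candidates]


def evaluate_parallel(candidates: Sequence[str],
                      evaluate: Callable[[str], float],
                      max_workers: int = 4) -> List[Result]:
    """Toy alternative: score several candidate branches at once."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        scores = list(pool.map(evaluate, candidates))  # map preserves input order
    return list(zip(candidates, scores))


def best(results: Sequence[Result]) -> Result:
    """Pick the candidate with the lowest val_bpb."""
    return min(results, key=lambda r: r[1])


fake_scores = {"a": 0.99, "b": 0.97, "c": 1.01}
print(best(evaluate_parallel(list(fake_scores), fake_scores.__getitem__)))
```

The parallel version only covers independent candidates from a single starting point. Merging improvements found on different branches is the part that needs the shared-state coordination the paragraph above describes.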

Benchmark scope is limited too. A lower val_bpb on small datasets doesn't necessarily translate into better performance on downstream tasks. An agent that optimizes this metric aggressively could overfit to a quirk of TinyStories. The problem of knowing what a benchmark actually measures hasn't gone away just because a machine is running the experiments instead of a human.

None of these are criticisms of what's been released - Karpathy has been explicit about the scope. Autoresearch is a clean, readable, single-GPU tool for running a lot of low-cost training experiments automatically. It's already 20,900 stars deep, with community ports for Apple Silicon and Windows, and a concrete real-world validation from Shopify. The SETI@home version doesn't exist yet, but the synchronous single-GPU version does, and it works.

Sources: github.com/karpathy/autoresearch - marktechpost.com - analyticsindiamag.com - kenhuangus.substack.com