Inception Ships Mercury 2 - A Diffusion LLM That Hits 1,009 Tokens Per Second

Inception Labs launches Mercury 2, the first diffusion-based reasoning language model, generating over 1,000 tokens per second on Blackwell GPUs at a fraction of the cost of conventional autoregressive models.

Inception Labs just dropped Mercury 2, and the numbers demand attention: 1,009 tokens per second on NVIDIA Blackwell GPUs, 1.7-second end-to-end latency, and pricing that undercuts Gemini 3 Flash by half on input tokens. The twist is that this is not an autoregressive transformer. Mercury 2 is built on diffusion - the same class of architecture that powers Stable Diffusion and DALL-E, repurposed for text.

Key Specs

| Spec | Value |
|---|---|
| Architecture | Diffusion LLM (dLLM) |
| Throughput | 1,009 tokens/sec (Blackwell) |
| E2E Latency | 1.7 seconds |
| Context Window | 128K tokens |
| Input Price | $0.25 / 1M tokens |
| Output Price | $0.75 / 1M tokens |
| Reasoning | Yes (first reasoning dLLM) |
| Features | Tool use, JSON output, tunable inference |

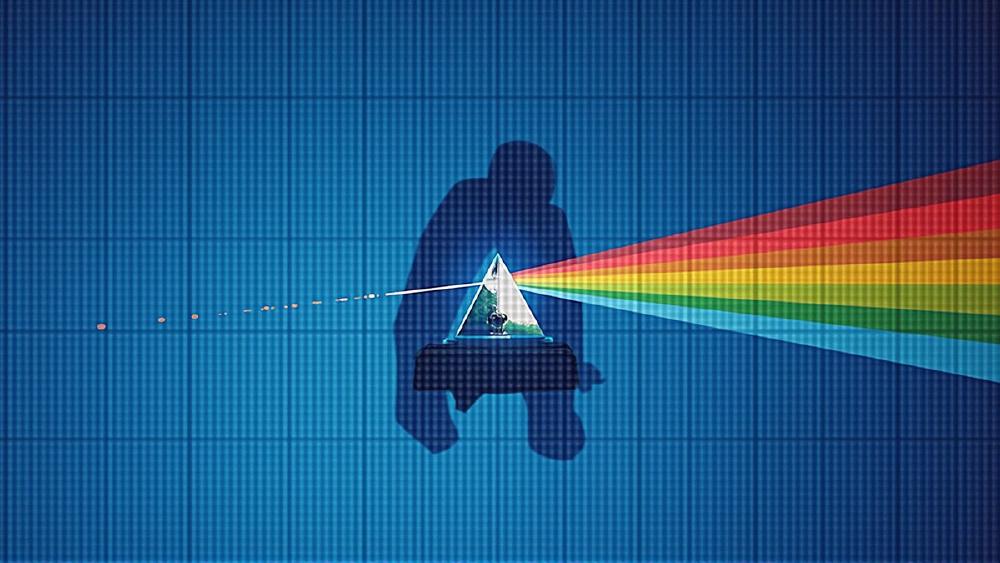

How Diffusion Text Generation Actually Works

Most language models generate text the same way you read it - left to right, one token at a time. GPT, Claude, Gemini, Llama - they all predict the next word, append it, and repeat. This autoregressive approach is well understood and scales predictably, but it creates a hard ceiling: your output speed is bottlenecked by how fast you can run the model once per token.

Mercury 2 throws that out. Instead of sequential prediction, it starts with noise across the entire output length and iteratively refines it - denoising multiple tokens simultaneously in each pass. Think of it as an editor revising a full draft at once rather than typing one word at a time.

The Parallel Refinement Loop

The generation process works in steps. At each step, the model looks at all positions in the output simultaneously and nudges them toward coherence. After a small number of refinement passes (far fewer than the number of output tokens), the text converges. The result is that doubling the output length doesn't double the generation time - a property that autoregressive models simply can't match.
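To make the step-count argument concrete, here is a toy sketch in Python. It is not Mercury 2's algorithm, which Inception has not published in this detail: the "denoiser" below simply copies tokens from a known target, and the names `toy_parallel_refine`, `autoregressive_passes`, and `MASK` are invented for illustration. The only point it demonstrates is that the number of passes is set by the step budget, not by the output length.

```python
import random

MASK = "_"  # placeholder for a position that has not been committed yet


def autoregressive_passes(num_tokens: int) -> int:
    """An autoregressive decoder runs one forward pass per generated token."""
    return num_tokens


def toy_parallel_refine(target: list[str], steps: int, seed: int = 0) -> tuple[list[str], int]:
    """Toy stand-in for parallel refinement.

    Starts from a fully masked draft and, in each pass, commits an equal
    share of the remaining positions at once. A real dLLM would predict
    those tokens; this toy just copies them from `target`.
    """
    rng = random.Random(seed)
    draft = [MASK] * len(target)
    passes = 0
    for step in range(steps):
        masked = [i for i, tok in enumerate(draft) if tok == MASK]
        if not masked:
            break
        remaining_steps = steps - step
        k = -(-len(masked) // remaining_steps)  # ceiling division
        for i in rng.sample(masked, k):
            draft[i] = target[i]
        passes += 1
    return draft, passes


short = "the quick brown fox jumps over the lazy dog".split()
for text in (short, short * 2):
    _, passes = toy_parallel_refine(text, steps=4)
    print(len(text), "tokens:", autoregressive_passes(len(text)), "AR passes vs", passes, "refinement passes")
```

With a budget of four steps, both the 9-token and the 18-token input finish in four passes, while the autoregressive count doubles from 9 to 18. Pass count is not wall-clock time, though: each refinement pass processes the whole sequence, so per-pass cost still grows with length.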

Why This Matters for Agents

Agent loops are where inference latency kills. A coding assistant that needs to call tools, read outputs, and produce follow-up code might run the model dozens of times in a single task. At 96 tokens per second (the median across current models), each call adds real wait time. At 1,009 tokens per second, those loops become near-instant. The same logic applies to voice assistants, real-time search, and any pipeline where LLM calls sit on the critical path.
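A back-of-the-envelope calculation shows the scale of the difference. The sketch below uses the two throughput figures quoted above; the 30 calls per task and 500 output tokens per call are hypothetical values chosen for illustration, and the estimate ignores time to first token, network latency, and tool execution time.

```python
def decode_seconds(output_tokens: float, tokens_per_second: float) -> float:
    """Time spent streaming output tokens at a steady throughput."""
    return output_tokens / tokens_per_second


CALLS_PER_TASK = 30     # hypothetical agent task
TOKENS_PER_CALL = 500   # hypothetical output length per call

for label, tps in (("median model", 96), ("Mercury 2", 1009)):
    per_call = decode_seconds(TOKENS_PER_CALL, tps)
    total = per_call * CALLS_PER_TASK
    print(f"{label}: {per_call:.2f} s per call, {total:.1f} s per task")
```

Under these assumptions, generation alone takes roughly 5.2 seconds per call at 96 tokens per second versus about 0.5 seconds at 1,009, which adds up to about 156 seconds versus 15 seconds across the whole task.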

Benchmarks - Fast, But How Smart?

Inception positions Mercury 2 as a reasoning model, and the benchmarks tell a mixed story. It beats Claude 4.5 Haiku on four of the six evaluations below, but trails Gemini 3 Flash on everything except AIME. No GPT-5 mini scores are listed for these benchmarks.

| Benchmark | Mercury 2 | Gemini 3 Flash | Claude 4.5 Haiku | GPT-5 mini |
|---|---|---|---|---|
| GPQA Diamond | 74 | 90 | 67 | - |
| LiveCodeBench | 67 | 91 | 62 | - |
| SciCode | 38 | 51 | 43 | - |
| IFBench | 71 | 78 | 54 | - |
| AIME | 91 | 78 | 84 | - |
| TAU | 53 | 80 | 55 | - |

The standout result is AIME (mathematical reasoning) at 91 - beating both Gemini 3 Flash and Claude 4.5 Haiku. SciCode and TAU scores are weaker, suggesting the model's reasoning strength is concentrated in mathematical domains rather than distributed evenly across coding and scientific tasks.

Artificial Analysis ranks Mercury 2 at #20 out of 135 models on their Intelligence Index with a score of 33, which they describe as "well above average among reasoning models in a similar price tier." That phrasing matters: this isn't a frontier-class model. It's a speed-class model with reasoning bolted on.

The Verbosity Problem

One quirk worth flagging: during evaluation, Mercury 2 produced 69 million output tokens - roughly 4x the average model's output of 17 million tokens for the same test suite. The model is verbose. Whether that verbosity reflects deeper reasoning chains or architectural overhead remains unclear, but it directly affects real-world cost calculations despite the low per-token pricing.

The Price War Angle

The pricing is aggressive. Here is how Mercury 2 stacks against the models it's most likely to compete with:

| Model | Input ($/1M) | Output ($/1M) | Blended (3:1) |
|---|---|---|---|
| Mercury 2 | $0.25 | $0.75 | $0.38 |
| Gemini 3 Flash | $0.50 | $3.00 | $1.13 |
| Claude 4.5 Haiku | $1.00 | $5.00 | $2.00 |
| GPT-5 mini | $0.30 | $1.20 | $0.53 |

At $0.38 per million tokens blended, Mercury 2 undercuts Claude 4.5 Haiku by more than 5x and Gemini 3 Flash by 3x. Even against GPT-5 mini, which is already positioned as a budget option, Inception comes in nearly 30% cheaper. For high-volume production workloads - agent frameworks, search pipelines, batch processing - that gap compounds fast.
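The blended figures can be reproduced with a short calculation. This sketch assumes "3:1" means three input tokens for every output token, which is the reading that matches the published numbers; the function name `blended_price` is illustrative.

```python
# (input $/1M tokens, output $/1M tokens), taken from the pricing comparison above
PRICES = {
    "Mercury 2": (0.25, 0.75),
    "Gemini 3 Flash": (0.50, 3.00),
    "Claude 4.5 Haiku": (1.00, 5.00),
    "GPT-5 mini": (0.30, 1.20),
}


def blended_price(input_price: float, output_price: float,
                  input_parts: int = 3, output_parts: int = 1) -> float:
    """Weighted average price per 1M tokens for a given input:output mix."""
    total_parts = input_parts + output_parts
    return (input_parts * input_price + output_parts * output_price) / total_parts


mercury = blended_price(*PRICES["Mercury 2"])
for model, (inp, out) in PRICES.items():
    blended = blended_price(inp, out)
    print(f"{model}: ${blended:.3f} blended, {blended / mercury:.2f}x Mercury 2")
```

Before rounding, the blended prices are $0.375, $1.125, $2.000, and $0.525, which puts Gemini 3 Flash at 3x, Claude 4.5 Haiku at about 5.3x, and GPT-5 mini at 1.4x the Mercury 2 price. Workloads with a different input-to-output mix can pass their own ratio through `input_parts` and `output_parts`.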

Who Built This

Inception Labs was founded by three professors who helped build the theoretical foundations of modern AI:

- Stefano Ermon (CEO) - Stanford professor who co-invented the score-based diffusion methods that now power image and video generation systems globally

- Aditya Grover - UCLA professor, co-creator of foundational work in deep generative models

- Volodymyr Kuleshov - Cornell professor, contributed to flash attention and direct preference optimization research

The company raised $50 million in November 2025 from Microsoft, NVIDIA, and Snowflake. Mercury 2 is their second production release following the original Mercury model in early 2025, which was the first commercial diffusion-based LLM but lacked reasoning capabilities.

What To Watch

Time-to-First-Token is Still Slow

Mercury 2's time to first token sits at 12.74 seconds according to Artificial Analysis - "at the higher end" for its category. It also sits far above the 1.7-second end-to-end latency in the spec table, so the two numbers were presumably measured under different conditions. Diffusion models need that initial compute burst to set up the denoising process before output starts flowing. For interactive chat, a wait of nearly 13 seconds is noticeable. For agent loops where you can pipeline requests, it matters less.
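One way to reason about this trade-off is a simple latency model: total response time is time to first token plus output length divided by throughput. The sketch below combines the 12.74-second figure from Artificial Analysis with the 1,009 tokens-per-second figure, then compares against a hypothetical autoregressive model with a 1.0-second time to first token (an assumption, not a measured value) running at the 96 tokens-per-second median.

```python
def response_seconds(ttft: float, output_tokens: float, tokens_per_second: float) -> float:
    """Simple latency model: wait for the first token, then stream the rest."""
    return ttft + output_tokens / tokens_per_second


def break_even_tokens(ttft_fast: float, tps_fast: float,
                      ttft_slow: float, tps_slow: float) -> float:
    """Output length at which both models take the same total time.

    'fast' has the higher throughput but the longer time to first token.
    """
    return (ttft_fast - ttft_slow) / (1 / tps_slow - 1 / tps_fast)


n = break_even_tokens(ttft_fast=12.74, tps_fast=1009,
                      ttft_slow=1.0, tps_slow=96)  # 1.0 s is hypothetical
print(f"break-even at about {n:.0f} output tokens")
```

Under these assumptions the break-even point is roughly 1,250 output tokens: shorter responses finish sooner on the slower model, longer ones finish sooner on Mercury 2. The exact crossover depends heavily on the assumed comparison figures, so treat it as an order-of-magnitude estimate.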

Independent Benchmarks Are Sparse

Most published numbers come from Inception's own evaluations. Artificial Analysis has done independent testing and confirms the speed claims, but broader community benchmarking is still pending. The reasoning capability claims in particular need more scrutiny - as we have covered before, self-reported benchmarks from model creators deserve healthy skepticism.

Architecture Lock-In Risk

Mercury 2 is proprietary and API-only. There are no open weights, no self-hosting options, and no way to fine-tune. If the diffusion approach proves viable and you build your stack around it, you are locked into Inception's API. Google DeepMind explored diffusion-based language models with Gemini Diffusion back in May 2025, but hasn't shipped anything production-ready since. The ecosystem around dLLMs is still a one-company show.

The Verbosity Tax

That 4x token output inflation is not free. Even at $0.75 per million output tokens, a model that uses four times as many tokens as competitors to answer the same question isn't actually 5x cheaper - it might break even or cost more on some workloads. Production teams should run their own cost analysis on representative queries before committing.
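A per-request estimate shows how the verbosity changes the ranking. The sketch below uses the published prices and a hypothetical request of 3,000 input tokens and 1,000 baseline output tokens. It also assumes Mercury 2 emits 4x the output of each competitor, which is a simplification: the 4x figure was measured against the average model, not against these specific ones.

```python
def request_cost(input_tokens: int, output_tokens: int,
                 input_price: float, output_price: float) -> float:
    """Cost in dollars for one request, with prices given per 1M tokens."""
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


INPUT_TOKENS = 3_000          # hypothetical request size
BASELINE_OUTPUT = 1_000       # hypothetical output length for a typical model
VERBOSITY = 4                 # assumed output multiplier for Mercury 2

costs = {
    "Mercury 2": request_cost(INPUT_TOKENS, BASELINE_OUTPUT * VERBOSITY, 0.25, 0.75),
    "Gemini 3 Flash": request_cost(INPUT_TOKENS, BASELINE_OUTPUT, 0.50, 3.00),
    "Claude 4.5 Haiku": request_cost(INPUT_TOKENS, BASELINE_OUTPUT, 1.00, 5.00),
    "GPT-5 mini": request_cost(INPUT_TOKENS, BASELINE_OUTPUT, 0.30, 1.20),
}
for model, cost in sorted(costs.items(), key=lambda item: item[1]):
    print(f"{model}: ${cost:.5f} per request")
```

In this scenario Mercury 2 comes to about $0.0038 per request, still below Gemini 3 Flash ($0.0045) and Claude 4.5 Haiku ($0.0080) but above GPT-5 mini ($0.0021). Swapping in token counts from real traffic is the quickest way to see where a given workload lands.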

Mercury 2 isn't going to replace your frontier model for complex analysis or creative work. But that's not what it's for. If you need an LLM in a tight loop - tool calls, voice, search, coding autocomplete - and you're currently bottlenecked by inference latency, a diffusion-based model generating 1,000 tokens per second at sub-dollar pricing is worth a serious evaluation. The architecture is genuinely different, the speed claims hold up under independent testing, and the team has the theoretical pedigree to keep iterating. Whether dLLMs become a real category or stay a niche depends on what comes next from Inception - and whether Google, which has been suspiciously quiet about Gemini Diffusion, decides to compete.

Sources:

- Inception Labs - Introducing Mercury 2

- The Decoder - Inception launches Mercury 2, the first diffusion-based language reasoning model

- Artificial Analysis - Mercury 2 Intelligence, Performance & Price Analysis

- GIGAZINE - Inception Announces Mercury 2, the World's Fastest Diffusion Model-Based Inference LLM

- TestingCatalog - Inception Labs unveils Mercury 2 diffusion and reasoning LLM

- Mayfield - Introducing Inception Labs
