IBM Granite Embedding R2 Brings 32K Context to Search

IBM's Granite Embedding Multilingual R2 ships with a 64x context window jump, ModernBERT architecture, and Apache 2.0 licensing that makes it enterprise-safe out of the box.

IBM released two new multilingual embedding models on May 14 that close a longstanding gap in its Granite lineup: context. The original R1 generation capped out at 512 tokens, which ruled out most real document retrieval tasks. R2 ships at 32,768 tokens - 64x longer - and carries Apache 2.0 licensing that lets enterprises deploy without a legal review queue.

Key Specs

| granite-embedding-311m-multilingual-r2 | granite-embedding-97m-multilingual-r2 | |

|---|---|---|

| Parameters | 311M | 97M |

| Embedding dims | 768 | 384 |

| Context window | 32,768 tokens | 32,768 tokens |

| Languages | 200+ (52 enhanced) | 200+ (52 enhanced) |

| Architecture | ModernBERT | ModernBERT |

| License | Apache 2.0 | Apache 2.0 |

| Matryoshka | Yes (128 to 768 dims) | No |

The two models target different deployment scenarios. The 311M version competes with proprietary frontier embeddings. The 97M version is the story for teams running constrained inference budgets - IBM says it posts 60.3 on MTEB Multilingual Retrieval, the highest score of any open model under 100 million parameters. That puts it 9.4 points ahead of multilingual-e5-small, which has been the default cheap multilingual option for the past two years.

What Changed from R1

ModernBERT replaces XLM-RoBERTa

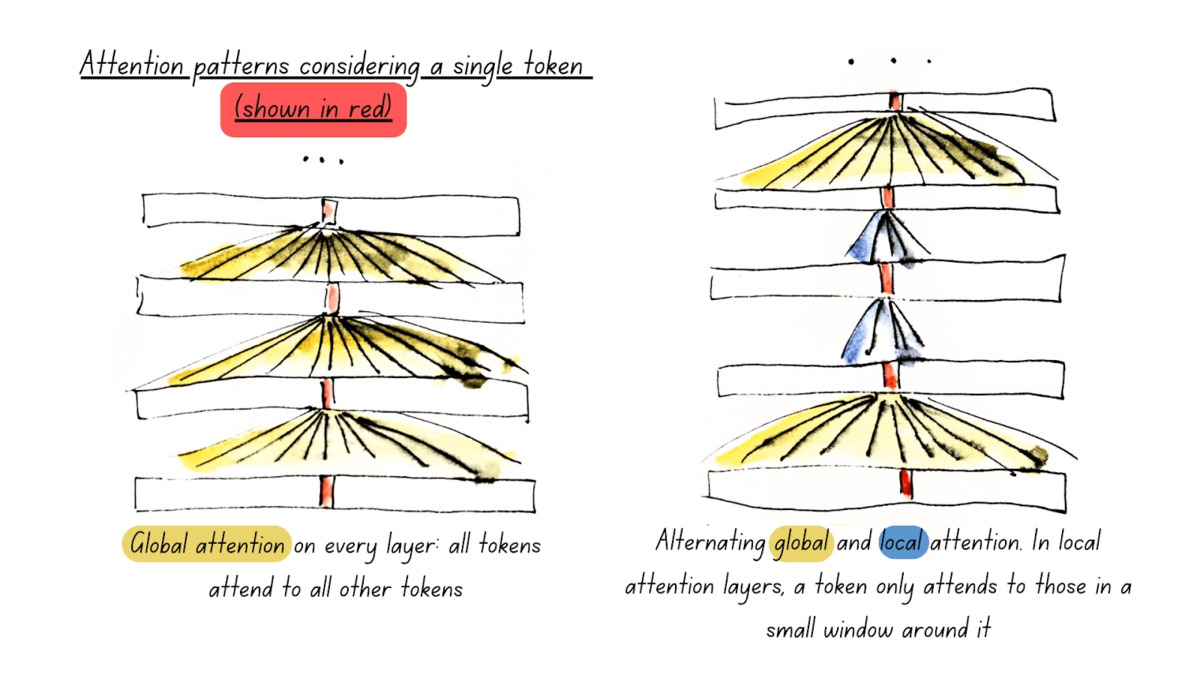

The R1 family was built on XLM-RoBERTa, a solid but aging encoder that dates to 2019. R2 switches to ModernBERT, an encoder architecture redesigned for modern GPU workloads. The practical differences are meaningful: ModernBERT uses rotary position embeddings (RoPE) instead of absolute positional encodings, which makes extending context without quality degradation far cleaner. It also supports Flash Attention 2.0 natively, which matters when you're encoding thousands of documents per second.

IBM also shrunk the layer count in R2 via structured pruning - from 22 to 12 layers for the 97M variant - then ran a recovery training phase to restore quality. The result is higher throughput without a proportional quality drop.

The 64x context jump is the real headline

Going from 512 to 32K tokens isn't a small refinement. It changes what the model can actually embed: full contracts, research papers, extended conversation logs, multi-file codebases. IBM's own ablation shows the long-document retrieval score (LongEmbed benchmark) improved by +34 points on the 311M model and +31.3 points on the 97M model compared to R1.

Code retrieval also got a dedicated upgrade. R2 was trained on nine programming languages - Python, Go, Java, JavaScript, PHP, Ruby, SQL, C, and C++ - which is a deliberate addition for developer-facing RAG pipelines. Code retrieval scores on the 97M model improved by 19.7 points over R1.

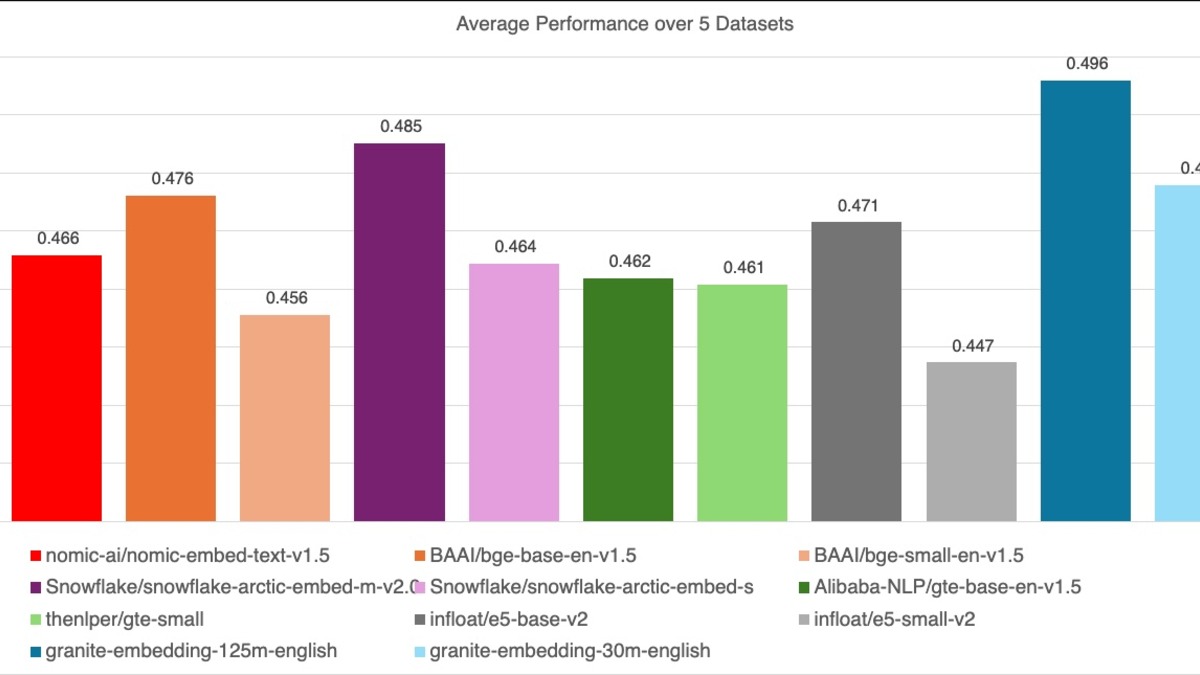

The Benchmark Picture

MTEB Multilingual Retrieval Comparison

| Model | Params | MTEB Score | Context |

|---|---|---|---|

| Cohere embed-v4 | unknown | 65.2 | 512 |

| granite-embedding-311m-r2 | 311M | 65.2 | 32,768 |

| OpenAI text-3-large | unknown | 64.6 | 8,191 |

| granite-embedding-311m-r1 | 311M | ~52.2 | 512 |

| multilingual-e5-small | 118M | 50.9 | 512 |

| granite-embedding-97m-r2 | 97M | 60.3 | 32,768 |

The 311M model matching Cohere embed-v4's MTEB score is the competitive data point IBM will lead with in sales conversations. What the table makes clear, though, is the context window advantage: the 311M R2 scores the same as Cohere while accepting 64x more tokens per document. For workloads that need to embed long documents rather than short passages, that's a real operational difference, not a marketing footnote.

One thing the MTEB score doesn't show is the Matryoshka dimension reduction available in the 311M model. You can compress embeddings from 768 to 128 dimensions and lose only 1.5 MTEB points (65.2 down to 63.7). If you're storing millions of embeddings and storage cost is a real budget line, that's meaningful.

The R2 models swap XLM-RoBERTa for ModernBERT, gaining native Flash Attention 2.0 support and RoPE for cleaner long-context extension.

Source: huggingface.co

The R2 models swap XLM-RoBERTa for ModernBERT, gaining native Flash Attention 2.0 support and RoPE for cleaner long-context extension.

Source: huggingface.co

Enterprise Data Governance

IBM is unusually explicit about training data provenance, and R2 follows that pattern. The models were trained on GneissWeb, IBM's internal dataset composed of commercially licensed sources - no MS-MARCO dependency, no datasets with non-commercial restrictions. The training set also includes IBM-curated synthetic multilingual data and domain-specific internal data, all screened for personal information and profanity before use.

For regulated industries running legal AI, healthcare, or financial RAG pipelines, the data card matters as much as the benchmark. A model that outperforms competitors but was trained on questionable sources creates audit headaches. IBM's approach to data governance is part of the pitch, not just a footnote.

The prior IBM Granite speech work had similar governance framing - see IBM Granite 4.0 1B Speech tops OpenASR Leaderboard for how IBM applied this approach to audio models.

Running R2

Both models are available on Hugging Face and deployable through standard inference frameworks. For most teams, vLLM is the straightforward path:

# Install and run via vLLM

pip install vllm

vllm serve ibm-granite/granite-embedding-311m-multilingual-r2 \

--task embed \

--trust-remote-code

GGUF versions are available for Ollama users who prefer local inference without the GPU memory overhead. IBM also offers a free serverless endpoint for testing at ibm.com/granite.

The 311M model runs at roughly 1,944 documents per second on an H100 at full 768-dimensional output. Compressing to 384 dimensions pushes that to around 2,200 documents per second. The 97M model reaches 2,894 documents per second at 384 dims - about 5.5x faster than jina-embeddings-v5-text-nano, per IBM's benchmarks.

This release lands in a crowded space. Microsoft open-sourced Harrier in March with strong MTEB scores, and Google pushed Gemini Embedding 2 with multimodal support. The MTEB Multilingual Leaderboard as of April 2026 showed the top five open models clustered within a few points of each other, which means context window and license clarity are increasingly the differentiators. R2 does both.

MTEB multilingual retrieval scores across R1 and R2 generations, with the 97M model showing the largest relative improvement.

Source: huggingface.co

MTEB multilingual retrieval scores across R1 and R2 generations, with the 97M model showing the largest relative improvement.

Source: huggingface.co

What To Watch

Does the 32K window hold in production?

Long context benchmarks measure retrieval at idealized conditions. Real RAG pipelines throw noisy, inconsistently structured documents at embedding models, and quality often degrades earlier than benchmarks suggest. Teams assessing R2 should test with their actual document corpus, not just benchmark datasets, before committing to production migration.

The MTEB ceiling is getting crowded

When three models from different organizations land within a point of each other on MTEB Multilingual, the benchmark is approaching saturation. MTEB itself has been discussed in the community as potentially too narrow to reflect real retrieval quality in multilingual settings outside of the 52 languages that receive the most training coverage. The 97M model's advantage in the sub-100M category is clear; the 311M model's advantage over Cohere and OpenAI is real but thin.

Code retrieval is still experimental

IBM lists nine programming language supports for code retrieval, but the training mix skews toward the common ones. Teams using R2 for retrieving uncommon languages - Kotlin, Rust, Swift, Lua - should verify performance before replacing a specialized code embedding model.

If you're new to embeddings and want context on where these models fit in a retrieval pipeline, What Are AI Embeddings? A Plain-English Guide covers the fundamentals.

Sources: