Alibaba's HappyHorse Claims Video AI Crown - No Weights Yet

HappyHorse-1.0 topped the Artificial Analysis Video Arena with a 52-Elo gap over Seedance 2.0 - but the 'open source' model has no public weights, no inference code, and no API.

A 15B-parameter video model called HappyHorse-1.0 appeared anonymously on the Artificial Analysis Video Arena in early April 2026 and immediately took the top spot in both the text-to-video and image-to-video rankings. Its promotional page described it as "fully open source with commercial licensing." The weights aren't published. The inference code isn't published. The GitHub repository contains a README with the words "coming soon."

The team behind it has since been identified as researchers from Alibaba's newly formed Token Hub unit (ATH), led by Zhang Di - formerly vice president at Kuaishou and the architect of Kling AI. Zhang Di rejoined Alibaba in November 2025. The ATH division itself was only formed in March 2026 under CEO Eddie Wu, consolidating Alibaba's AI units - Tongyi Lab, Qwen, Wukong, and others - into a single group.

The Arena results are real. The open-source claim is not, at least not yet.

TL;DR

- Claim: HappyHorse-1.0 is the #1 open-source video model, beating ByteDance Seedance 2.0 by 52 Elo points in image-to-video on Artificial Analysis Video Arena

- Our take: The Arena rankings are independently verified through blind user preference tests - the lead is real. The "fully open source" label isn't. No weights, no code, no runnable API exist as of April 15, 2026.

- Context: Built by Alibaba's Token Hub (ATH), formed March 2026. Led by the same engineer who built Kling AI at Kuaishou - a model HappyHorse now outranks by 115 Elo points.

What They Showed

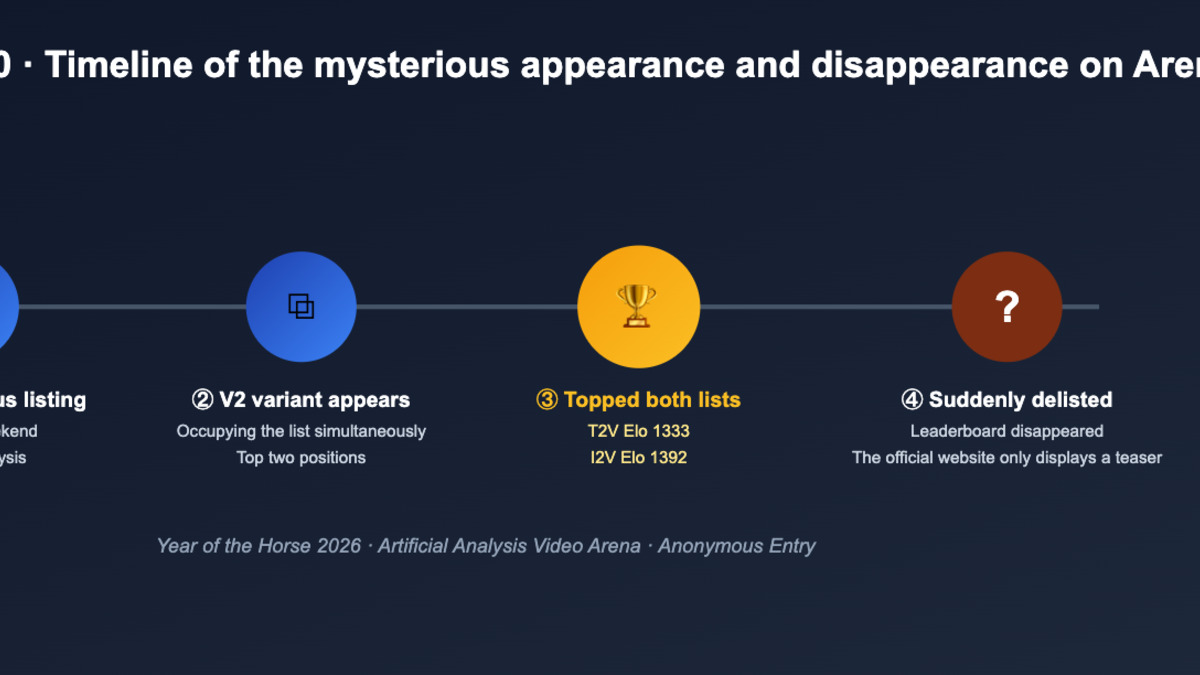

HappyHorse-1.0 debuted on the Arena in two anonymous submissions - a V1 and a V2 variant - both listed without affiliation or paper. The model climbed to the top of both leaderboards within days of appearing.

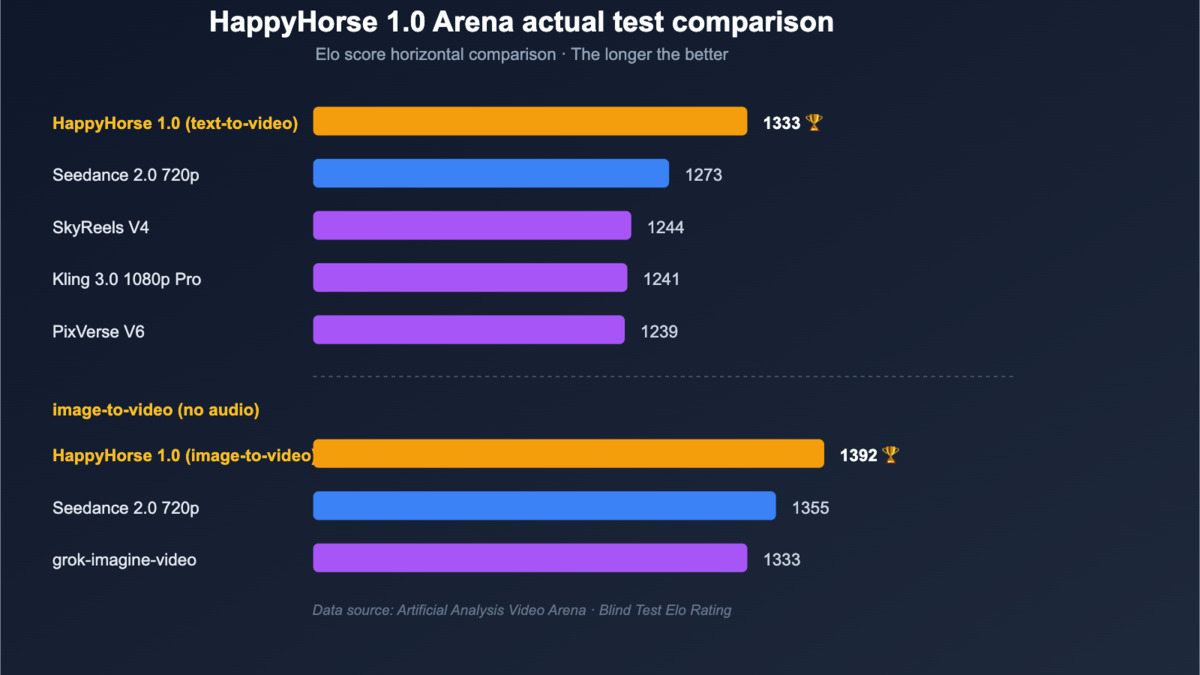

HappyHorse-1.0 Elo scores versus competitors in text-to-video and image-to-video categories, as recorded by Artificial Analysis Video Arena.

Source: help.apiyi.com

The Numbers

Current rankings from the Artificial Analysis Video Arena:

| Model | I2V Elo (no audio) | T2V Elo (no audio) |
|---|---|---|
| HappyHorse-1.0 | 1,398 | 1,362 |
| Dreamina Seedance 2.0 | 1,346 | 1,269 |
| grok-imagine-video | 1,327 | - |
| SkyReels V4 | 1,288 | - |
| Kling 3.0 1080p (Pro) | 1,283 | 1,241 |
| PixVerse V6 | 1,316 | 1,239 |

A 52-point gap over Seedance in image-to-video is substantial. Arena Elo scores are computed from millions of head-to-head blind comparisons where users vote without knowing which model produced which output. A 50-point difference is consistently perceivable, not noise.
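To put the gap in concrete terms, here is a minimal sketch that converts a rating difference into an expected share of head-to-head votes. It assumes the Arena uses the conventional logistic Elo formula with a 400-point scale, which the leaderboard data quoted here does not confirm; the function name `elo_expected_score` is ours.

```python
def elo_expected_score(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Expected share of pairwise votes won by A under the standard logistic Elo model.

    ASSUMPTION: the 400-point scale is the chess convention, not a confirmed Arena setting.
    """
    return 1.0 / (1.0 + 10.0 ** ((rating_b - rating_a) / scale))


# Image-to-video ratings taken from the table above.
i2v = {
    "HappyHorse-1.0": 1398,
    "Dreamina Seedance 2.0": 1346,
    "Kling 3.0 1080p (Pro)": 1283,
}

for rival in ("Dreamina Seedance 2.0", "Kling 3.0 1080p (Pro)"):
    gap = i2v["HappyHorse-1.0"] - i2v[rival]
    share = elo_expected_score(i2v["HappyHorse-1.0"], i2v[rival])
    print(f"vs {rival}: gap = {gap} Elo, expected vote share = {share:.1%}")
```

Under that assumption, a 52-point lead works out to winning roughly 57% of blind comparisons against Seedance 2.0, and the 115-point lead over Kling to roughly 66%.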

The Architecture

HappyHorse-1.0 is a 40-layer unified single-stream Transformer. Unlike competitors that run separate models for video generation and audio synthesis, HappyHorse processes text, image, video, and audio tokens together in a single inference pass. That means native lip-sync rather than post-processing alignment - the audio is created with the video, not stapled on afterward.

The model uses 8-step DMD-2 (Distribution Matching Distillation v2) without classifier-free guidance, which compresses inference time substantially. Claimed throughput: a 5-second 1080p clip in approximately 38 seconds on a single H100 GPU. The team also claims native lip-sync across seven languages: Mandarin, Cantonese, English, Japanese, Korean, German, and French.
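A rough way to see why the distillation matters is to count denoiser forward passes per clip. The sketch below uses the article's figures for HappyHorse (8 steps, no classifier-free guidance) and compares them with a hypothetical undistilled baseline of 50 steps with guidance. That baseline is our assumption for illustration, not a published figure for any specific model. The same block turns the claimed throughput into per-second and per-hour numbers.

```python
def denoiser_passes(steps: int, uses_cfg: bool) -> int:
    """Forward passes per clip: classifier-free guidance evaluates the network
    twice per step (conditional and unconditional)."""
    return steps * (2 if uses_cfg else 1)


# From the article: 8 denoising steps, no classifier-free guidance.
distilled = denoiser_passes(steps=8, uses_cfg=False)

# ASSUMPTION: hypothetical undistilled sampler, 50 steps with guidance.
baseline = denoiser_passes(steps=50, uses_cfg=True)

# From the article's claimed throughput: a 5 s clip in about 38 s on one H100.
clip_seconds = 5.0
wall_seconds = 38.0
compute_per_video_second = wall_seconds / clip_seconds  # seconds of GPU time per second of video
clips_per_gpu_hour = 3600.0 / wall_seconds

print(f"forward passes: distilled={distilled}, assumed baseline={baseline}")
print(f"reduction vs assumed baseline: {baseline / distilled:.1f}x")
print(f"GPU seconds per second of video: {compute_per_video_second:.1f}")
print(f"clips per GPU-hour: {clips_per_gpu_hour:.1f}")
```

Pass counts are only a proxy for wall-clock time, since text encoding, VAE decoding, and super-resolution add fixed costs that distillation doesn't remove.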

The HappyHorse-1.0 model card as it appeared during Arena submission - 40-layer architecture, 8-step denoising, T2V and I2V top-1 rankings.

Source: help.apiyi.com

For a 15B model hitting these numbers, the compute efficiency story is worth taking seriously. Seedance 2.0 is from ByteDance - one of the best-resourced video AI teams in the world, following months of pressure from Hollywood copyright disputes. Beating it by this margin, with a model this size, requires either a better architecture or a much better training dataset.

What We Tried

The model's official page at happyhorse.mobi lists four artifacts as released: base model weights, distilled model weights, super-resolution module, and inference code. Standard open-source package.

None of those exist publicly.

As of April 15, 2026:

- The GitHub repository `CalvintheBear/HappyHorse-1.0` exists with a README, no code, no weights, and "coming soon" placeholders throughout

- HuggingFace shows no model card, no weight files, no license file under the `happyhorse-ai` namespace

- The official website runs a functional demo - confirming the model works in production - but offers no download path

- No public API tier is announced; Alibaba ATH confirmed "private internal testing" status

The sequence of events: anonymous Arena submission, leaderboard peak, brief delisting, then re-listing after the Alibaba attribution became public knowledge.

Source: help.apiyi.com

Reproducibility Table

| What You Need | Current Status |
|---|---|
| Model weights | Not published |
| Distilled version | Not published |
| Super-resolution module | Not published |
| Inference code | Not published |
| API (public) | Not available |
| Minimum hardware (expected) | H100 or A100 with 48GB+ VRAM |
| License | Apache 2.0 (claimed but no license file exists) |
| Demo (web only) | Available at happyhorse.mobi |

Calling something "open source" while publishing zero of the required artifacts is a marketing decision, not a technical one. WaveSpeedAI catalogued the same discrepancy shortly after the model appeared: "HappyHorse-1.0 currently fits the third category - demo access without downloadable materials."

The Gap

Two issues separate the claim from the current state.

"Open Source" Without Artifacts Isn't Open Source

Open source has a specific meaning in the developer community: published weights, published code, a real license. "Open weights" is a narrower claim, and "open access demo" is narrower still. HappyHorse is currently in the third category.
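The three tiers can be written down as a small decision rule. This is a sketch of the taxonomy as this article uses it, not a formal definition of open source. The `ReleaseStatus` type and `classify` function are hypothetical names, and the HappyHorse values reflect the status reported above as of April 15, 2026.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReleaseStatus:
    weights: bool         # downloadable model weights
    inference_code: bool  # published code that can run the weights
    license_file: bool    # an actual license file, not just a claim
    demo: bool            # hosted demo reachable by the public


def classify(status: ReleaseStatus) -> str:
    """Map published artifacts to the article's three tiers (checked strictest first)."""
    if status.weights and status.inference_code and status.license_file:
        return "open source"
    if status.weights:
        return "open weights"
    if status.demo:
        return "open access demo"
    return "unreleased"


# Status as reported in this article: web demo only.
happyhorse = ReleaseStatus(weights=False, inference_code=False, license_file=False, demo=True)
print(classify(happyhorse))
```

The ordering of the checks is the point: a license claim or a demo never upgrades the label on its own, only published artifacts do.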

This matters practically. Without weights, developers can't run the model locally, can't fine-tune it, can't audit what the training data contained, and can't build production pipelines that aren't dependent on Alibaba's infrastructure timeline. The Apache 2.0 license claim is meaningless until there's something to apply it to.

Alibaba has a track record of eventually shipping open weights - the Qwen series has been truly open and has driven real adoption. But HappyHorse launched with the "open source" framing front and center, before it was true.

The Benchmark Has a Composition Problem

The Artificial Analysis Video Arena Elo ratings are computed from user preference votes. The composition of the test set matters. One independent analysis found that portrait generation and dubbing scenarios account for over 60% of Arena test cases - exactly the domain where HappyHorse's single-pass audio-video approach has the most obvious advantage.

For dynamic scenes, complex backgrounds, and professional video production content, the model's edge over Seedance 2.0 is less clear. That doesn't mean the ranking is fraudulent. But a model optimized for talking-head video will naturally score higher on an Arena with heavy talking-head representation.
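The composition effect is easy to illustrate with a two-model simplification. The per-category win rates below are hypothetical numbers chosen for illustration, not measurements. Only the 60% portrait share comes from the analysis cited above, which says "over 60%". The conversion back to an Elo gap again assumes the standard 400-point logistic formula.

```python
import math


def blended_win_rate(portrait_share: float, portrait_win: float, other_win: float) -> float:
    """Overall win rate as a prompt-mix-weighted average of per-category win rates."""
    return portrait_share * portrait_win + (1.0 - portrait_share) * other_win


def implied_elo_gap(win_rate: float, scale: float = 400.0) -> float:
    """Invert the logistic Elo formula: rating gap that would produce this win rate."""
    return scale * math.log10(win_rate / (1.0 - win_rate))


# HYPOTHETICAL: the model wins 62% of portrait/dubbing matchups and 50% of everything else.
PORTRAIT_WIN = 0.62
OTHER_WIN = 0.50

# 0.60 approximates the cited Arena mix; 0.30 is an arbitrary alternative mix.
for share in (0.60, 0.30):
    p = blended_win_rate(share, PORTRAIT_WIN, OTHER_WIN)
    print(f"portrait share {share:.0%}: win rate {p:.3f}, implied gap {implied_elo_gap(p):.0f} Elo")
```

With these made-up inputs, halving the portrait share roughly halves the implied gap. Real Arena ratings are fit across many models at once, so this shows only the direction of the effect, not its size.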

The brief period where both HappyHorse V1 and V2 were delisted from the Arena before reappearing is worth noting. No official explanation was given. The unusual double-anonymous-submission pattern - two variants appearing simultaneously without affiliation - is not standard Arena procedure.

The Arena lead is real. Zhang Di knows how to build video models; his work on Kling at Kuaishou produced one of the most capable video generation systems available before he left. The unified Transformer architecture that produces audio and video in a single pass is technically interesting and, if the weights eventually ship, worth serious evaluation.

When they publish actual weights, this becomes a genuine open-source milestone. Until then, HappyHorse-1.0 is a demo from Alibaba's ATH unit with a leaderboard position and a GitHub repo that says "coming soon." The ATH team confirmed more releases are in the pipeline - for developers, the moment to pay attention is when artifacts land, not when press releases do.

Sources:

- Artificial Analysis Video Arena - Image-to-Video Leaderboard

- Artificial Analysis Video Arena - Text-to-Video Leaderboard

- Alibaba's HappyHorse tops Seedance, China's race for AI talent - South China Morning Post

- Alibaba Token Hub unit formation - TechNode

- Is HappyHorse-1.0 Open Source? What We Can Verify - WaveSpeedAI

- HappyHorse model analysis - apiyi.com

- HappyHorse 1.0 official model page

- HappyHorse-1.0 benchmark analysis - Barchart/ABNewswire