Guide Labs Open-Sources Steerling-8B, an LLM That Shows Its Work

YC-backed startup Guide Labs releases Steerling-8B under Apache 2.0 - an 8.4B parameter model with a built-in concept module that traces every output token back to its training data.

Guide Labs, a San Francisco startup that went through Y Combinator's Winter 2024 batch, today open-sourced Steerling-8B - an 8.4-billion-parameter language model built from scratch to be interpretable. Every prediction the model makes is forced through an architectural bottleneck of human-readable concepts, making it possible to trace any output token back to the specific training data patterns that produced it.

The model is available now on Hugging Face and GitHub under the Apache 2.0 license.

The Teardown: What's Actually Inside

Key Specs

| Spec | Value |
|---|---|
| Parameters | 8.4B |
| Architecture | Causal Diffusion LM + iGuide concept module |
| Context length | 4,096 tokens |
| Known concepts | 33,732 |
| Unknown concepts | 101,196 |
| Attention | GQA, 32 heads, 4 KV heads |
| Diffusion block size | 64 tokens |
| Precision | bfloat16 |
| VRAM required | ~18 GB |
| License | Apache 2.0 |
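The VRAM figure can be sanity-checked with simple arithmetic. The sketch below assumes that every parameter is stored in bfloat16 (2 bytes) and that the ~18 GB figure uses decimal gigabytes. Neither assumption is confirmed by Guide Labs, and the helper name is purely illustrative.

```python
# Back-of-the-envelope weight memory for an 8.4B-parameter model in bfloat16.
PARAMS = 8.4e9        # parameter count from the spec table
BYTES_PER_PARAM = 2   # bfloat16 stores each value in 16 bits


def weight_memory_gb(params: float, bytes_per_param: int) -> float:
    """Memory for the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return params * bytes_per_param / 1e9


weights_gb = weight_memory_gb(PARAMS, BYTES_PER_PARAM)
# Assumption: whatever remains of the quoted ~18 GB covers activations and buffers.
headroom_gb = 18 - weights_gb

print(f"weights: {weights_gb:.1f} GB, headroom: {headroom_gb:.1f} GB")
# prints "weights: 16.8 GB, headroom: 1.2 GB"
```

Under those assumptions, the weights alone account for most of the quoted requirement, leaving little room for activations and runtime buffers.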

Steerling-8B isn't a standard autoregressive transformer with an interpretability wrapper bolted on after training. It's architecturally different in two fundamental ways.

Causal Diffusion Instead of Autoregression

Rather than producing one token at a time left-to-right, Steerling uses masked diffusion - it produces text by iteratively unmasking tokens within 64-token blocks in order of confidence. Tokens can attend freely within their current block but can only see prior blocks, preserving coherent text generation while enabling multi-token concept-level control.
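The two rules in that description, block-level visibility and confidence-ordered unmasking, can be sketched in a few lines of plain Python. This is a minimal illustration and not Guide Labs' implementation. The function names are hypothetical, the confidence scores are made up, and a real sampler would presumably re-score the remaining masked positions after each unmasking step rather than rank them once.

```python
BLOCK_SIZE = 64  # diffusion block size from the spec table


def can_attend(query_pos: int, key_pos: int, block_size: int = BLOCK_SIZE) -> bool:
    """True when the key sits in the query's own block or in an earlier block."""
    return key_pos // block_size <= query_pos // block_size


def unmask_order(confidence: list[float]) -> list[int]:
    """Positions within one block, ordered from most to least confident."""
    return sorted(range(len(confidence)), key=lambda i: -confidence[i])


# Position 0 sees the rest of its own block (0-63) but not the next block.
print(can_attend(0, 63), can_attend(0, 64), can_attend(64, 0))  # True False True

# Made-up per-position confidence scores for a 4-token toy block.
print(unmask_order([0.2, 0.9, 0.5, 0.7]))  # [1, 3, 2, 0]
```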

Guide Labs claims this approach inherits autoregressive-like scaling behavior (scaling exponent b = -0.072 vs -0.074 for standard AR models) while giving the model something autoregressive architectures fundamentally lack: the ability to control whole blocks of tokens through high-level concepts rather than individual tokens.
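To get a feel for how close those two exponents are, the sketch below assumes the usual power-law form, in which loss is proportional to the scaled quantity raised to the exponent b. The article does not say which quantity is scaled (parameters, data, or compute), so the 10x factor is only an illustration.

```python
def loss_ratio(scale_factor: float, exponent: float) -> float:
    """Multiplicative change in loss when the scaled quantity grows by
    scale_factor, assuming loss is proportional to X ** exponent."""
    return scale_factor ** exponent


STEERLING_B = -0.072  # exponent reported for causal diffusion
AR_B = -0.074         # exponent reported for standard autoregressive models

for name, b in [("causal diffusion", STEERLING_B), ("autoregressive", AR_B)]:
    print(f"{name}: a 10x increase multiplies loss by {loss_ratio(10, b):.3f}")
```

Under that assumed form, a tenfold increase shrinks the loss to roughly 0.847 of its value in one case and 0.843 in the other, which is why the two curves are described as nearly equivalent.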

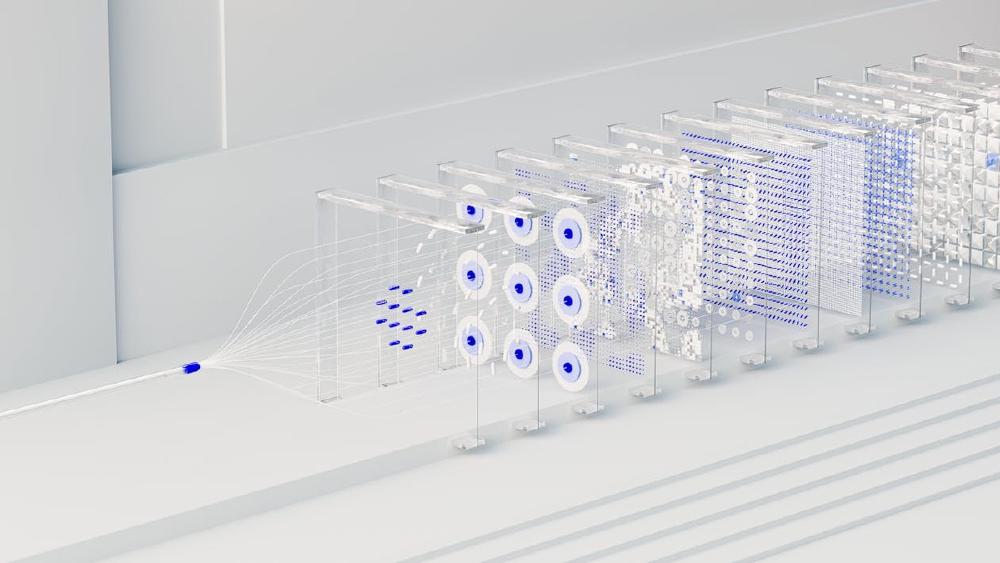

The Concept Module (iGuide)

This is the core technical contribution. Between the transformer backbone and the output head, Guide Labs cut the direct path from hidden states to logits and inserted a narrow bottleneck layer called iGuide.

Here is how it works at inference time:

    hidden_states -> known_concept_head(f) -> 33,732 concept activations
    hidden_states -> unknown_concept_head(g) -> 101,196 residual activations
    reconstructed_state = Σ(k_i × K_i) + Σ(u_j × U_j) + ε
    reconstructed_state -> language_modeling_head -> logits

The known concept head maps hidden states to human-interpretable concepts - things like "legal," "medical," "politeness," or "humor." The unknown concept head captures residual information that doesn't map cleanly to labeled concepts. The model reconstructs its hidden states as a linear combination of concept embeddings, and only this reconstructed state feeds into the output head.

Because the path from concepts to logits is linear, attributions are exact and additive - not the approximations you get from post-hoc methods like SHAP or saliency maps.
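A toy example makes the "exact and additive" claim concrete. The sketch below uses two known concepts, one unknown concept, a hidden size of 3, and a two-token vocabulary. All numbers and names are invented for illustration, the error term ε is set to zero, and none of this reflects the real iGuide weights or code.

```python
def dot(a, b):
    """Dot product of two equal-length lists."""
    return sum(x * y for x, y in zip(a, b))


known_acts = {"legal": 0.5, "humor": 2.0}   # k_i, made-up activations
unknown_acts = {"u0": 1.0}                  # u_j, made-up residual activation
embeddings = {                              # K_i and U_j, each of hidden size 3
    "legal": [1.0, 0.0, 2.0],
    "humor": [0.0, 1.0, -1.0],
    "u0": [0.5, 0.5, 0.0],
}
lm_head = [
    [1.0, 2.0, 0.0],    # output weights for token 0
    [0.0, -1.0, 1.0],   # output weights for token 1
]


def reconstruct(acts):
    """Hidden state as a linear combination of concept embeddings."""
    hidden = [0.0, 0.0, 0.0]
    for name, a in acts.items():
        hidden = [h + a * e for h, e in zip(hidden, embeddings[name])]
    return hidden


def attributions(acts, token):
    """Per-concept contribution to one token's logit."""
    return {n: a * dot(lm_head[token], embeddings[n]) for n, a in acts.items()}


acts = {**known_acts, **unknown_acts}
logits = [dot(row, reconstruct(acts)) for row in lm_head]
contrib = attributions(acts, token=0)

print(logits)                 # [6.0, -3.5]
print(contrib)                # {'legal': 0.5, 'humor': 4.0, 'u0': 1.5}
print(sum(contrib.values()))  # 6.0
```

Because every step after the concept activations is linear, the per-concept contributions sum to the logit itself, with nothing left over to approximate.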

Steering Without Retraining

The linear decomposition means you can directly manipulate concept activations at inference time:

    from steerling import SteerlingModel

    model = SteerlingModel.from_pretrained("guidelabs/steerling-8b")

    # Suppress a concept entirely
    model.set_concept_weight("legal_jargon", 0.0)

    # Amplify a concept
    model.set_concept_weight("politeness", 2.0)

    # Generate with modified behavior
    output = model.generate("Draft an email to the client...")

No prompt engineering. No RLHF. No retraining. You just turn the knobs on the concepts you care about. As CEO Julius Adebayo put it: "We really design the model from zero so you don't need to do neuroscience."
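What a concept weight plausibly does under the hood can be shown on a toy linear bottleneck. The sketch below is not the `steerling` library: the helper, the two-dimensional embeddings, and the two-token vocabulary are all hypothetical. It assumes a weight simply rescales a concept's activation before the hidden state is reconstructed.

```python
def dot(a, b):
    """Dot product of two equal-length lists."""
    return sum(x * y for x, y in zip(a, b))


def logits_from_concepts(acts, embeddings, lm_head, weights=None):
    """Reconstruct the hidden state from (optionally rescaled) concept
    activations, then project it to logits with a linear head."""
    weights = weights or {}
    hidden = [0.0] * len(lm_head[0])
    for name, a in acts.items():
        scaled = a * weights.get(name, 1.0)  # unlisted concepts keep weight 1.0
        hidden = [h + scaled * e for h, e in zip(hidden, embeddings[name])]
    return [dot(row, hidden) for row in lm_head]


acts = {"politeness": 1.0, "legal_jargon": 2.0}   # made-up activations
embeddings = {"politeness": [1.0, 0.0], "legal_jargon": [0.0, 1.0]}
lm_head = [
    [1.0, 0.0],   # a "polite" token, driven by the first hidden dimension
    [0.0, 1.0],   # a "jargon" token, driven by the second hidden dimension
]

baseline = logits_from_concepts(acts, embeddings, lm_head)
steered = logits_from_concepts(
    acts, embeddings, lm_head,
    weights={"legal_jargon": 0.0, "politeness": 2.0},
)

print(baseline)  # [1.0, 2.0]
print(steered)   # [2.0, 0.0]
```

In this toy setup, zeroing the jargon concept removes its entire contribution to the jargon token's logit, while doubling politeness doubles the polite token's logit. The effect of each knob is predictable because the path is linear.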

The Training Pipeline

Steerling-8B was trained end-to-end from scratch on 150 billion tokens from the Nemotron-CC-HQ corpus, with math-focused midtraining on Dolmino Mix. The concept annotations come from Atlas, Guide Labs' automated system that labels training data at chunk level with human-interpretable concepts - no expensive token-level annotations required.

The training loss combines four components: standard language modeling loss, concept presence loss (binary cross-entropy against Atlas labels), an independence loss that penalizes redundancy between known and unknown concept pathways, and a residual reconstruction loss.
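The four terms can be sketched as plain functions. The article gives neither the exact formulas nor the weighting, so several pieces here are assumptions: the independence penalty is modeled as squared cosine similarity between the known and unknown contributions, the reconstruction term as mean squared error, and the default weights of 1.0 are placeholders.

```python
import math


def cross_entropy(logits, target):
    """Language modeling loss for one position (log-sum-exp for stability)."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return log_z - logits[target]


def binary_cross_entropy(probs, labels, eps=1e-7):
    """Concept presence loss against chunk-level labels, averaged over concepts."""
    total = 0.0
    for p, y in zip(probs, labels):
        p = min(max(p, eps), 1 - eps)  # clamp to avoid log(0)
        total -= y * math.log(p) + (1 - y) * math.log(1 - p)
    return total / len(probs)


def independence_penalty(known_part, unknown_part):
    """Assumed form: squared cosine similarity between the two pathways."""
    num = sum(x * y for x, y in zip(known_part, unknown_part))
    norm_k = math.sqrt(sum(x * x for x in known_part))
    norm_u = math.sqrt(sum(x * x for x in unknown_part))
    if norm_k == 0 or norm_u == 0:
        return 0.0
    return (num / (norm_k * norm_u)) ** 2


def mse(a, b):
    """Assumed reconstruction loss: mean squared error between hidden states."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def total_loss(lm, concept, independence, reconstruction, weights=(1.0, 1.0, 1.0)):
    """Weighted sum of the four terms; the weights are placeholders."""
    w_c, w_i, w_r = weights
    return lm + w_c * concept + w_i * independence + w_r * reconstruction


loss = total_loss(
    lm=cross_entropy([2.0, 0.5, -1.0], target=0),
    concept=binary_cross_entropy([0.9, 0.2], [1, 0]),
    independence=independence_penalty([1.0, 0.0], [0.0, 1.0]),
    reconstruction=mse([1.0, 2.0], [0.9, 2.1]),
)
print(round(loss, 4))
```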

A critical finding from their scaling experiments: the concept module behaves as a fixed tax on performance. The overhead is constant and doesn't grow as model size increases - base and concept-module scaling curves nearly overlap across all tested sizes. This suggests the approach could scale well beyond 8B.

Who This Is For

Guide Labs is positioning Steerling-8B for sectors where black-box models aren't acceptable:

- Financial services - Lending decisions where regulators require explanations for why an application was denied

- Healthcare - Clinical decision support where practitioners need to verify the reasoning chain

- Copyright compliance - Tracing outputs to specific training data sources to identify potential IP issues

- Content moderation - Surgically suppressing specific concept categories without degrading overall performance

The model achieves roughly 90% of the capability of comparable 8B models on standard benchmarks (HellaSwag, OpenBookQA, ARC-Challenge, PIQA, WinoGrande). That 10% gap is the price of built-in interpretability - and Guide Labs argues it's a price worth paying when you need to actually explain what your model is doing.

The Team

The founders bring serious interpretability credentials. CEO Julius Adebayo holds a PhD from MIT and co-authored the widely cited 2018 NeurIPS paper "Sanity Checks for Saliency Maps," which demonstrated that many existing methods for understanding deep learning models were fundamentally unreliable. That paper was, in many ways, the intellectual origin of Guide Labs - if post-hoc interpretability doesn't work, build interpretability into the architecture from the start.

CSO Aya Abdelsalam Ismail holds a PhD from the University of Maryland and previously worked as a senior ML scientist at Prescient Design (Genentech). The team of roughly nine people collectively has over 20 years of experience in interpretability research and more than two dozen papers at top ML venues.

The company raised a $9 million seed round led by Initialized Capital, with participation from Tectonic Ventures, Y Combinator, Pioneer Fund, and angel investors including Jonathan Frankle (known for the Lottery Ticket Hypothesis).

Where It Falls Short

Let's be direct about the limitations.

| Limitation | Detail |
|---|---|
| Context length | 4,096 tokens - short by 2026 standards |
| Performance gap | ~90% of comparable models, not 100% |
| VRAM floor | 18 GB minimum rules out most consumer GPUs |
| Tooling | Causal diffusion is a different paradigm - existing inference stacks don't apply |
| Training data | Nemotron-CC includes synthetic data from Qwen and DeepSeek, which may carry licensing implications |
| Validation | Released today; no independent benchmarks exist yet |

The 4K context window is the biggest practical constraint. In a world where Gemini offers 1M tokens and most frontier models handle 128K+, a 4,096-token ceiling limits Steerling-8B to short-form tasks. Guide Labs will clearly need to extend this for the model to be useful in production RAG pipelines or agentic workflows.

The causal diffusion architecture also means you can't just drop Steerling-8B into existing local inference setups that assume autoregressive generation. llama.cpp, vLLM, and the broader ecosystem of inference engines would need explicit support for diffusion-based generation. That's a real adoption barrier.

And the 90% capability figure needs independent verification. Guide Labs evaluated the model on a limited set of benchmarks (HellaSwag, OpenBookQA, ARC-Challenge, PIQA, WinoGrande) - none of which are coding or agentic benchmarks that would test the model in the scenarios enterprises actually care about.

Steerling-8B isn't going to replace anyone's production LLM tomorrow. But it is the first serious attempt to build interpretability into a foundation model at meaningful scale, rather than trying to reverse-engineer a black box after the fact. If the gap between open-source and proprietary models keeps narrowing, the next competitive axis might not be raw capability - it might be trust. Guide Labs is betting early on that future.

The model weights, code, and documentation are live on Hugging Face and GitHub. Install via pip install steerling.
