GPT-5.4 Leaked Twice in Codex Repo PRs - Here Is What We Know

Two pull requests in OpenAI's public Codex GitHub repo referenced GPT-5.4 before being scrubbed - one adding full-resolution vision support, the other a fast mode toggle. Seven force pushes and a deleted employee screenshot confirm this was not intentional.

OpenAI has accidentally revealed GPT-5.4 - twice - through pull requests in its public Codex GitHub repository. Both references were quickly scrubbed via force pushes and edits, but not before screenshots circulated and the community noticed.

This isn't speculation. The PRs are public. The force push history is auditable. And an OpenAI employee's now-deleted screenshot showed GPT-5.4 in the Codex app's model selector.

TL;DR

- PR #13050 (Feb 27) added full-resolution vision support with a minimum model version set to (5, 4) - changed to (5, 3) after seven force pushes in just over three hours

- PR #13212 (Mar 2) added a /fast slash command originally described as "toggle Fast mode for GPT-5.4" - scrubbed within three hours

- An OpenAI employee accidentally posted a screenshot showing GPT-5.4 in the Codex app model selector, then deleted it

- Prediction markets put GPT-5.4 release before April 2026 at 55%, before June at 74%

- GPT-5.3-Codex shipped just three weeks ago - if GPT-5.4 drops soon, OpenAI will have shipped five major GPT-5 versions in seven months

- OpenAI has not commented; their only response has been quietly cleaning up the code

The First Leak - Full-Resolution Vision (Feb 27)

On February 27, OpenAI engineer Curtis "Fjord" Hawthorne (fjord-oai) opened PR #13050 in the Codex repo, titled "Add under-development original-resolution view_image support." The feature adds a flag (view_image_original_resolution) that, instead of resizing and compressing images, preserves the original PNG, JPEG, and WebP bytes and sends detail: "original" to the Responses API.

The problem: the code set the minimum model version constant to (5, 4) - meaning this feature requires GPT-5.4 or newer. X user Lisan al Gaib (@scaling01) screenshotted it before the cleanup.

What followed was a frantic scrub. The PR received seven force pushes between 19:30 and 22:44 UTC on February 27 - more than three hours of damage control. The code now reads MIN_ORIGINAL_RESOLUTION_MODEL_VERSION: (u32, u32) = (5, 3), changed from the original (5, 4).

Seven force pushes to change a single digit in a version constant. That's not a typo fix.
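To make the gating concrete, here is a minimal Rust sketch of how a tuple version constant like this one can switch the feature on or off. Only the constant name, its (u32, u32) type, and the detail: "original" value come from the PR as reported; the slug parser, the image_detail function, and the "auto" fallback are hypothetical illustrations, not the actual Codex code. Because Rust compares tuples lexicographically, a constant of (5, 4) would enable the path only for GPT-5.4 or newer, which is why the original value gave the model away.

```rust
/// Minimum (major, minor) model version for original-resolution images.
/// Name and final value mirror what PR #13050 reportedly ended up with;
/// everything else in this sketch is hypothetical.
const MIN_ORIGINAL_RESOLUTION_MODEL_VERSION: (u32, u32) = (5, 3);

/// Hypothetical helper: extract (major, minor) from a slug like "gpt-5.3-codex".
fn parse_model_version(slug: &str) -> Option<(u32, u32)> {
    let rest = slug.strip_prefix("gpt-")?;
    // Keep only the numeric part before any suffix such as "-codex".
    let version = rest.split('-').next()?;
    let mut parts = version.split('.');
    let major: u32 = parts.next()?.parse().ok()?;
    // A bare "gpt-5" is treated as (5, 0); any third component is ignored.
    let minor: u32 = match parts.next() {
        Some(m) => m.parse().ok()?,
        None => 0,
    };
    Some((major, minor))
}

/// Hypothetical gate: pick the image `detail` value to send.
/// "auto" as the fallback is an assumption for illustration.
fn image_detail(slug: &str, original_resolution_enabled: bool) -> &'static str {
    // Tuples compare lexicographically: (5, 4) >= (5, 3), (5, 2) < (5, 3).
    let supported = parse_model_version(slug)
        .map(|v| v >= MIN_ORIGINAL_RESOLUTION_MODEL_VERSION)
        .unwrap_or(false);
    if original_resolution_enabled && supported {
        "original"
    } else {
        "auto"
    }
}

fn main() {
    for slug in ["gpt-5.2", "gpt-5.3-codex", "gpt-5.4"] {
        println!("{slug}: {}", image_detail(slug, true));
    }
}
```

Run as-is, it prints "original" for the 5.3 and 5.4 slugs and "auto" for 5.2; setting the constant back to (5, 4) would flip gpt-5.3-codex to "auto" as well.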

The Second Leak - Fast Mode Toggle (Mar 2)

Three days later it happened again. On March 2 at 04:07 UTC, OpenAI engineer pash-openai opened PR #13212, adding a fast mode toggle to Codex. The PR introduces a ServiceTier enum with Standard and Fast variants and a /fast slash command in the terminal UI, and sets service_tier=priority on API requests when fast mode is enabled.

The original version reportedly contained a direct function call using gpt-5.4 as the model argument and a slash command explicitly described as "toggle Fast mode for GPT-5.4." By 07:14 UTC - about three hours later - the GPT-5.4 references were gone.
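The mechanism described in PR #13212 is small: a two-variant enum, a slash command that flips it, and a request field that is set only in fast mode. The Rust sketch below models that under stated assumptions. The ServiceTier name, its Standard and Fast variants, /fast, and service_tier=priority come from the PR as reported; the toggled, request_value, and handle_slash_command helpers are hypothetical, and so is the choice to omit service_tier entirely in Standard mode.

```rust
/// Tier switch modeled on PR #13212's description. Enum and variant names
/// come from the PR as reported; every method here is hypothetical.
#[derive(Debug, Clone, Copy, PartialEq, Eq, Default)]
enum ServiceTier {
    #[default]
    Standard,
    Fast,
}

impl ServiceTier {
    /// Flip between the two tiers, as a `/fast` toggle would.
    fn toggled(self) -> Self {
        match self {
            ServiceTier::Standard => ServiceTier::Fast,
            ServiceTier::Fast => ServiceTier::Standard,
        }
    }

    /// Value for the request's `service_tier` field.
    /// Assumption: Standard sends no field at all, Fast sends "priority".
    fn request_value(self) -> Option<&'static str> {
        match self {
            ServiceTier::Standard => None,
            ServiceTier::Fast => Some("priority"),
        }
    }
}

/// Hypothetical TUI hook: "/fast" toggles the tier, other input leaves it unchanged.
fn handle_slash_command(tier: ServiceTier, input: &str) -> ServiceTier {
    if input.trim() == "/fast" {
        tier.toggled()
    } else {
        tier
    }
}

fn main() {
    let mut tier = ServiceTier::default();
    for input in ["hello", "/fast", "/fast"] {
        tier = handle_slash_command(tier, input);
        println!("{input:>6} -> {tier:?}, service_tier = {:?}", tier.request_value());
    }
}
```

Keeping the tier as a plain Copy enum makes the toggle a pure function of the previous state, so the slash-command handling is easy to test in isolation.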

The Employee Screenshot

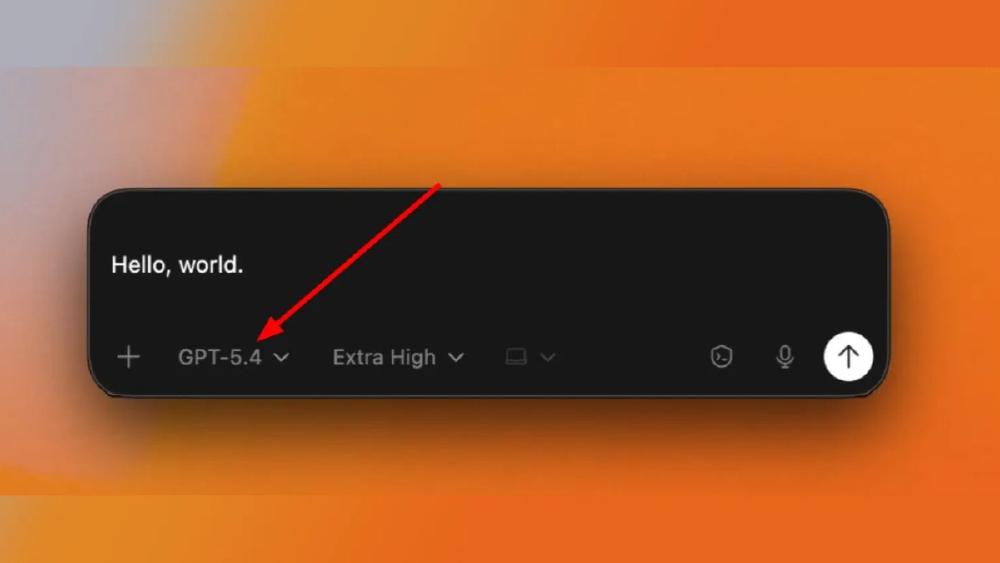

Adding to the evidence: users on X shared screenshots showing GPT-5.4 appearing in the Codex desktop app's model selector dropdown. More damningly, OpenAI employee Tibo (confirmed as Codex contributor tibo-openai via PR #13181) accidentally posted a screenshot on X that included GPT-5.4 in the model picker. The post was deleted.

What GPT-5.4 Appears to Include

Based on the two PRs and surrounding evidence, here is what we can confirm about GPT-5.4 features:

| Feature | Source | Status |
|---|---|---|
| Full-resolution vision | PR #13050 - original-res image passthrough with detail: "original" | Confirmed in code (feature flag) |
| Fast mode / priority tier | PR #13212 - /fast slash command, service_tier=priority | Confirmed in code |
| Model exists internally | Employee screenshot, model selector dropdown | Screenshots circulated, post deleted |

What we cannot confirm: rumors of a 2-million-token context window and persistent memory. No evidence for either appeared in the PRs or any credible source. The Codex repo does have several memory-related PRs from late February, but none reference GPT-5.4 specifically or use any branded feature name.

The Pace Is Relentless

The GPT-5 family has shipped at a pace that makes the GPT-4 era look glacial:

| Model | Date | Gap from previous |
|---|---|---|
| GPT-5 | August 7, 2025 | - |
| GPT-5-Codex | September 15, 2025 | 5 weeks |
| GPT-5.1 | November 12, 2025 | 8 weeks |
| GPT-5.2 | December 11, 2025 | 4 weeks |
| GPT-5.3-Codex | February 5, 2026 | 8 weeks |
| GPT-5.4 (leaked) | Not released | 3+ weeks since 5.3 |

Five major model versions in seven months. Prediction markets on Manifold give GPT-5.4 a 55% chance of shipping before April 2026 and 74% before June. Some traders note this would require "unprecedented acceleration" since GPT-5.3's general-purpose variant (non-Codex) has not even shipped yet.

The competitive pressure is obvious. Claude Opus 4.6 launched with agent teams and a 1M-token context window. Anthropic's Claude Code dominates the coding market with 54% share. DeepSeek V4 is training on Huawei hardware outside the NVIDIA ecosystem entirely. OpenAI cannot afford to slow down.

What This Means

The leak pattern is notable. OpenAI's Codex repo is public by design - it is their open-source terminal coding agent. But having engineers accidentally commit internal model references to a public repo, twice in under a week, suggests the development pace is outrunning internal processes. Force-pushing seven times to clean up a version number isn't how a well-coordinated release pipeline looks.

The features themselves are incremental but meaningful. Full-resolution vision support means Codex could work with detailed diagrams, high-DPI screenshots, and architectural drawings without lossy compression destroying detail. A fast mode toggle with a priority service tier suggests OpenAI is building tiered inference - paying more for lower latency - which aligns with the pricing structure of its Codex subscriptions.

OpenAI hasn't commented on any of this. Its response has been limited to code cleanup and a deleted employee post. Given the cadence of the GPT-5 family so far, we probably won't have to wait long for the official announcement.

Sources:

- PR #13050: Original-Resolution Image Support - OpenAI/Codex

- PR #13212: Fast Mode Toggle - OpenAI/Codex

- OpenAI GPT-5.4 Accidentally Leaked in Codex - PiunikaWeb

- GPT-5.4 Leak - Geeky Gadgets

- @scaling01 on X: GPT-5.4 Screenshots

- GPT-5.4 Release Date Prediction Market - Manifold Markets

- GPT-5.3-Codex Announcement - OpenAI

- GPT-5.3-Codex GA for GitHub Copilot - GitHub Blog