900 Google and OpenAI Workers Demand Military AI Limits

Nearly 900 employees across Google and OpenAI sign an open letter titled "We Will Not Be Divided," calling on leadership to reject Pentagon demands for unfettered AI access.

TL;DR

- Nearly 900 workers across Google (~800) and OpenAI (~100) have signed an open letter titled "We Will Not Be Divided" demanding clear limits on military uses of AI

- The letter calls on both companies to refuse Pentagon demands for "any lawful use" access - the same terms Anthropic rejected

- Signatures grew from a few hundred on Friday to roughly 900 by Monday, with workers from Salesforce, Databricks, and IBM also voicing support

- Organizers say the effort is unaffiliated with any AI company or political group, and signers can remain anonymous

A cross-company employee revolt is gaining serious momentum. Nearly 900 workers at Google and OpenAI have signed an open letter demanding their employers draw hard lines on how the Pentagon can use their AI systems - the same lines that Anthropic refused to cross.

The letter, titled "We Will Not Be Divided," is the largest coordinated action by AI industry employees since Google's Project Maven walkout in 2018. It represents something new: workers at rival companies banding together against a shared threat rather than fighting their own management in isolation.

What the Letter Says

Standing Together Against Division

The letter calls on Google and OpenAI leadership to "put aside their differences and stand together" against Pentagon demands. Its central argument is that the Defense Department's strategy of negotiating with individual companies - and punishing those who resist - aims to break industry-wide resistance to controversial military applications.

"The Pentagon is negotiating with Google and OpenAI to try to get them to agree to what Anthropic has refused."

That line goes straight at the core issue. After Anthropic rejected Pentagon demands for unfettered access under an "any lawful use" framework - which would cover mass surveillance and autonomous weapons - the Defense Department formally designated Anthropic a supply chain risk. The message to other AI companies was clear: cooperate or face consequences.

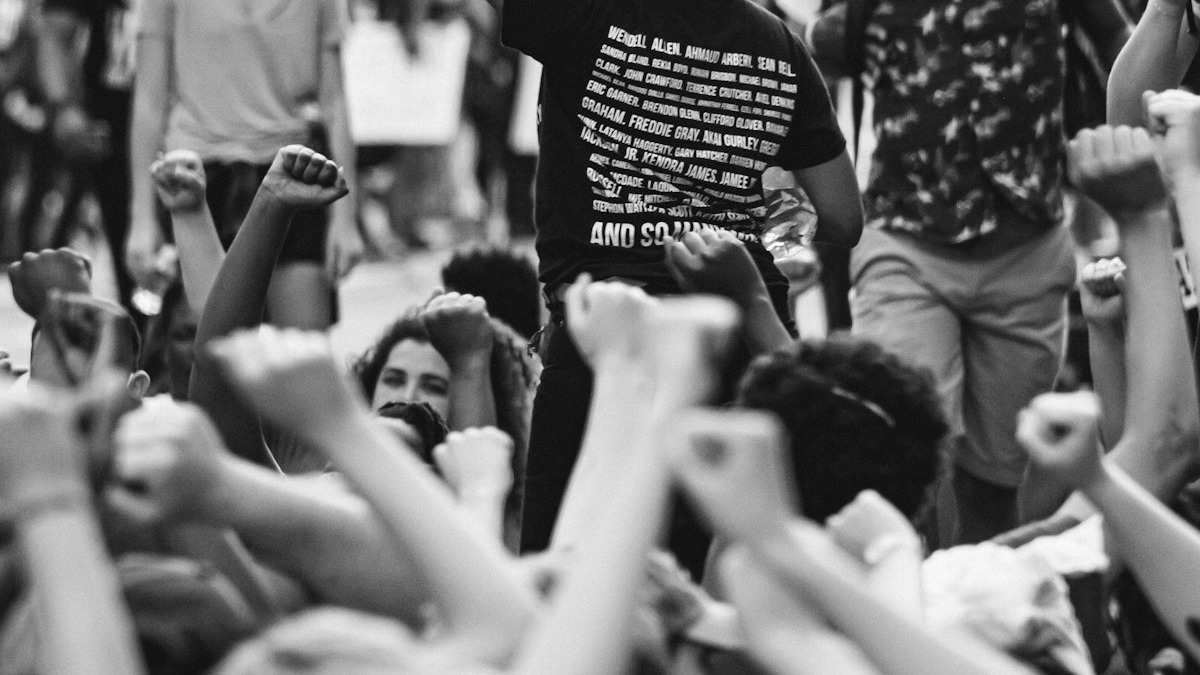

The letter echoes the spirit of the 2018 Project Maven walkout, which forced Google to abandon a military drone analysis contract. Source: Unsplash

Specific Demands

The signatories want their employers to refuse Pentagon demands that would enable:

- Mass surveillance programs

- Autonomous weapons systems

- Unrestricted "any lawful use" access to AI models

The letter doesn't call for a blanket ban on government contracts. Instead, it pushes for "clear ethical limits on how those systems are used" - a distinction that matters. Workers aren't asking their companies to walk away from defense work entirely, but to maintain red lines on the most dangerous applications.

How the Signatures Grew

The letter started circulating late last week with only a couple hundred signatures. By Friday evening, it had enough traction to attract attention from reporters. By Monday, the count had surged to roughly 900 - close to 800 from Google and nearly 100 from OpenAI.

Workers at rival AI companies are finding common cause on military AI ethics for the first time since the industry's rapid expansion. Source: Unsplash

The organizers describe themselves as unaffiliated with any AI company or political group. Signatures are verified, but signers can choose to remain anonymous - a design choice that likely explains the high participation rate. Internal dissent at tech companies carries real career risk, and the anonymous option lowers the barrier.

Broader Industry Support

The movement extends beyond Google and OpenAI. Workers at Salesforce, Databricks, and IBM have signed separate letters opposing the Pentagon's designation of Anthropic as a supply chain risk. Advocacy groups like No Tech For Apartheid have also urged companies to reject defense contracts that enable harmful surveillance.

The Context That Made This Possible

Three developments converged to create the conditions for this letter.

The Anthropic standoff - Anthropic's refusal to accept the Pentagon's "any lawful use" terms, and the later supply chain risk designation, showed employees across the industry what happens when a company holds its ground - and what it costs. As we covered, the Pentagon and Anthropic have since resumed talks, but the fundamental disagreement remains unresolved.

The OpenAI backlash - OpenAI's decision to strike a deal with the Pentagon triggered an immediate internal revolt. The staff backlash and user boycott - with ChatGPT uninstalls surging 295% - demonstrated that employees and users share the same concerns about military AI.

U.S. strikes on Iran - The escalation of U.S. military operations against Iran heightened fears that AI systems could be deployed in active conflict scenarios. For employees who joined AI companies to build productivity tools and research assistants, the prospect of their work enabling lethal military operations crosses a fundamental line.

Fears about autonomous weapons systems have deepened as U.S. military operations expand. Source: Unsplash

The 2018 Precedent

The letter explicitly invokes Google's Project Maven episode. In 2018, thousands of Google employees signed a petition and dozens resigned over the company's contract to provide AI-powered image analysis for military drones. Google ultimately abandoned the contract and adopted AI principles that excluded weapons applications.

But those principles have eroded. Google's current discussions with the Pentagon about using Gemini for classified systems suggest the company has quietly walked back its post-Maven commitments. The letter's signatories are, in effect, asking leadership to remember the promises it made the last time employees forced the issue.

| Event | Year | Outcome |
|---|---|---|
| Google Project Maven walkout | 2018 | Google dropped the drone AI contract |
| Anthropic rejects Pentagon terms | 2026 | Designated a supply chain risk |
| OpenAI Pentagon deal signed | 2026 | Internal revolt, user boycott |
| "We Will Not Be Divided" letter | 2026 | ~900 signatures and growing |

What It Does Not Guarantee

The Power Dynamic Has Shifted

The 2018 walkout succeeded partly because Google wasn't yet dependent on defense revenue and the AI labor market was extraordinarily tight. Both conditions have changed. AI companies now see defense contracts as a path to the kind of recurring, high-margin revenue that investors demand. And the labor market, while still strong for top researchers, is not as lopsided as it was eight years ago.

Management's Likely Response

Neither Google nor OpenAI has publicly responded to the letter. History suggests they'll wait for the news cycle to cool, issue a statement reaffirming their commitment to responsible AI, and continue negotiating with the Pentagon behind closed doors. The question is whether 900 signatures - and the threat of more - change that calculus.

The "We Will Not Be Divided" letter is the clearest signal yet that the AI industry's workforce doesn't share its leadership's appetite for military contracts. Whether that matters depends on whether employees are willing to do more than sign. In 2018, it took resignations and sustained public pressure to force Google's hand. This time, the stakes - and the revenue on the table - are considerably higher.

Sources: