Google Gemma 4 Ships Four Open Models Under Apache 2.0

Google releases Gemma 4 with a 26B MoE, 31B Dense, and two edge variants under Apache 2.0 - claiming the highest intelligence-per-parameter of any open model.

Google released Gemma 4 on April 2, 2026 - four open-weight models derived from the Gemini 3 architecture, all under Apache 2.0. The lineup spans a 31B dense model ranked #3 among open models on LMArena's text leaderboard, a 26B mixture-of-experts variant that activates only 3.8B parameters at inference, and two edge models (E4B and E2B) designed to run on phones and Raspberry Pis. Google calls them "byte for byte, the most capable open models in the world."

TL;DR

| Spec | Gemma 4 31B | Gemma 4 26B A4B | E4B | E2B |
|---|---|---|---|---|
| Parameters | 31B dense | 26B total / 3.8B active | 8B (4.5B effective) | 5.1B (2.3B effective) |
| Context | 256K | 256K | 128K | 128K |
| LMArena (text) | ~1452 | ~1441 | - | - |
| Modalities | Image, video, text | Image, video, text | Image, video, audio, text | Image, video, audio, text |

- Apache 2.0 license replaces Google's old proprietary Gemma license

- Day-one support for Ollama, llama.cpp, vLLM, MLX, Hugging Face Transformers, and more

- Edge models run under 1.5 GB RAM with 2-bit quantization on phones and Raspberry Pi 5

What's in the Box

Gemma 4 ships four models in two tiers. The larger pair targets developers with GPUs; the smaller pair targets edge devices.

The 31B Dense

All 31 billion parameters fire on every token. That makes it the strongest variant for raw quality and fine-tuning. On LMArena's text-only leaderboard, it scores roughly 1452 - comparable to GLM-5 and Kimi K2.5, which carry roughly 30x more parameters. MMLU Pro hits 85.2%, AIME 2026 reaches 89.2%, and LiveCodeBench lands at 80.0%. The 256K context window handles full codebases and long documents in a single prompt.

The 26B MoE (A4B)

This is the efficiency play. The model holds 26 billion total parameters but routes each token through only 3.8 billion active parameters. It runs nearly as fast as a 4B model while scoring roughly 1441 on LMArena - three spots below the dense variant, at #6 among open models. MMLU Pro comes in at 82.6%, AIME 2026 at 88.3%, LiveCodeBench at 77.1%. For latency-sensitive deployments where you can tolerate a small quality drop, this is the variant to watch.
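To make the "only some parameters are active" idea concrete, here is a minimal sketch of top-k expert routing in plain Python. It is a generic mixture-of-experts router, not Gemma 4's actual implementation: the expert count, the value of `k`, the toy experts, and the router scores are all made up for illustration.

```python
import math

def top_k_route(router_logits, k):
    """Pick the k highest-scoring experts and softmax-normalize their weights."""
    # Indices of the k largest router scores (ties keep the lower index first).
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:k]
    # Softmax over the selected experts only; subtract the max for stability.
    peak = max(router_logits[i] for i in top)
    exps = {i: math.exp(router_logits[i] - peak) for i in top}
    total = sum(exps.values())
    return {i: e / total for i, e in exps.items()}

def moe_forward(x, experts, router_logits, k):
    """Run only the selected experts and mix their outputs by router weight."""
    weights = top_k_route(router_logits, k)
    return sum(w * experts[i](x) for i, w in weights.items())

# Hypothetical toy setup: 8 "experts" that just scale their input.
experts = [lambda x, s=s: s * x for s in (1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0)]
logits = [0.1, 2.0, -1.0, 0.5, 2.0, 0.0, -0.5, 1.0]

weights = top_k_route(logits, k=2)          # only 2 of 8 experts run
output = moe_forward(1.0, experts, logits, k=2)

# Share of parameters touched per token, using the article's figures.
active_fraction = 3.8 / 26
print(weights, output, f"{active_fraction:.1%}")
```

Using the article's numbers, about 15% of the model's weights are touched per token. The full 26B still has to sit in memory, so the savings show up in compute and latency rather than in footprint.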

E4B and E2B Edge Models

The "Effective" models are built for phones, Raspberry Pis, and Jetson Nanos. E4B carries 8B total parameters with a 4.5B effective footprint; E2B packs 5.1B with a 2.3B effective footprint. Both support 128K context windows and add native audio input on top of image and video - the larger models don't have audio. On a Raspberry Pi 5, the E2B prefills at 133 tokens per second and decodes at 7.6 tokens per second. With 2-bit quantization, it fits inside 1.5 GB of RAM.
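For a rough sense of what those Raspberry Pi 5 throughput numbers mean in practice, the sketch below turns them into a latency estimate. It assumes both rates stay constant regardless of prompt length, which is a simplification; the 1,000-token prompt and 100-token reply are arbitrary example values.

```python
PREFILL_TPS = 133.0  # prompt tokens per second on a Raspberry Pi 5 (from the article)
DECODE_TPS = 7.6     # generated tokens per second on a Raspberry Pi 5 (from the article)

def estimate_latency_s(prompt_tokens, output_tokens):
    """Return (prefill, decode, total) seconds under constant-throughput assumptions."""
    prefill = prompt_tokens / PREFILL_TPS
    decode = output_tokens / DECODE_TPS
    return prefill, decode, prefill + decode

# Example workload (arbitrary): a 1,000-token prompt and a 100-token reply.
prefill_s, decode_s, total_s = estimate_latency_s(1000, 100)
print(f"prefill {prefill_s:.1f}s, decode {decode_s:.1f}s, total {total_s:.1f}s")
```

Under these assumptions the reply takes around 20 seconds end to end, with decoding dominating. That is workable for background agents, less so for interactive chat.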

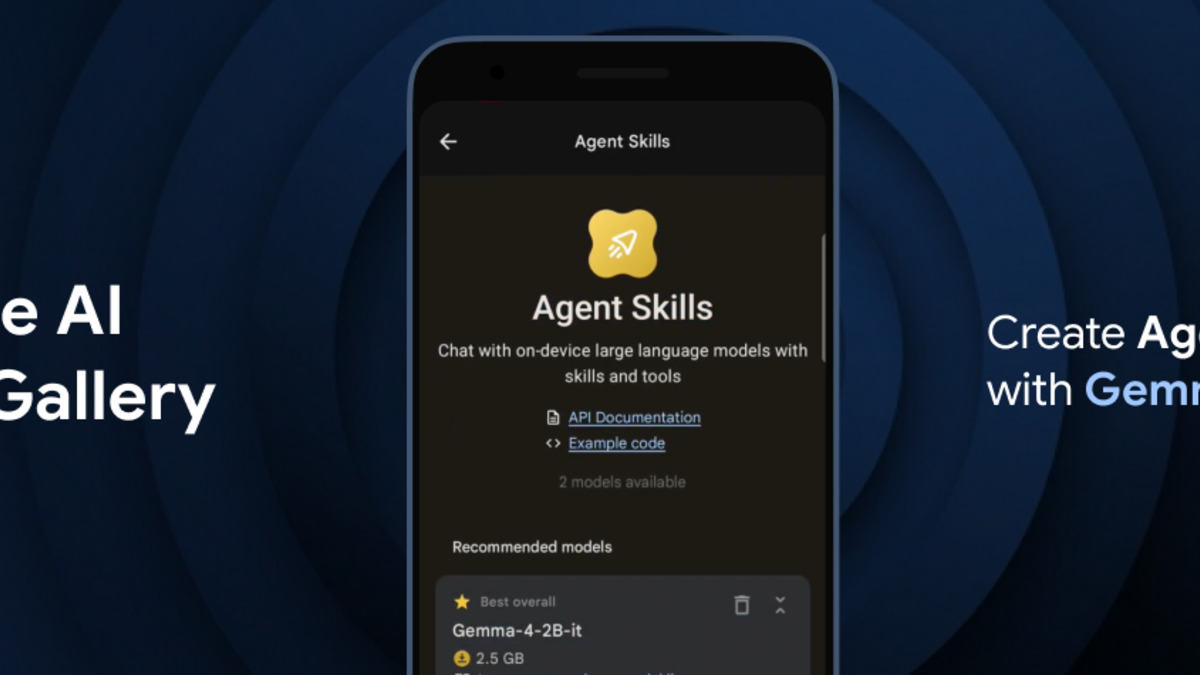

Google's official Gemma 4 banner emphasizing on-device and agentic capabilities.

Source: developers.googleblog.com

Benchmarks vs the Field

Google published benchmark numbers that position the 31B and 26B variants against significantly larger models. Independent LMArena rankings back up the top-line claims, though detailed third-party evaluations are still arriving.
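It helps to know how small the gap between the two larger models' arena scores (roughly 1452 vs 1441) really is. The sketch below assumes the leaderboard uses a standard Elo-style logistic scale with a 400-point divisor; the article does not describe the exact rating method, so treat the result as an approximation.

```python
def expected_win_rate(score_a, score_b, scale=400.0):
    """Elo-style expected probability that model A is preferred over model B."""
    return 1.0 / (1.0 + 10 ** ((score_b - score_a) / scale))

# Approximate arena scores quoted in the article.
dense_31b = 1452
moe_26b = 1441

p_dense_over_moe = expected_win_rate(dense_31b, moe_26b)
print(f"31B preferred over 26B MoE in about {p_dense_over_moe:.1%} of matchups")
```

On that scale an 11-point gap works out to the dense model being preferred in roughly 52% of head-to-head votes, which is close to a coin flip.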

| Benchmark | Gemma 4 31B | Gemma 4 26B A4B | Notes |
|---|---|---|---|
| MMLU Pro | 85.2% | 82.6% | Strong general knowledge |
| AIME 2026 | 89.2% | 88.3% | Competition math |
| LiveCodeBench | 80.0% | 77.1% | Real-world coding |
| GPQA Diamond | 84.3% | 82.3% | Graduate-level science |
| MMMU Pro (Vision) | 76.9% | 73.8% | Multimodal reasoning |
| MATH-Vision | 85.6% | 82.4% | Visual math |

How does this stack against competitors? At the ~30B class, the closest open-weight rivals are Qwen 3.5's 27B variant and Llama 4 Scout (109B total, 17B active). Previous comparisons showed Qwen 3.5 winning on multilingual tasks and coding, while Llama 4 Scout won on long-context workloads with its 10M token window. Gemma 4's 31B appears to close some of those gaps on math and reasoning - the 89.2% AIME score is particularly strong. Full independent head-to-head comparisons will take a few weeks to materialize.

Benchmark scores across all four Gemma 4 variants as reported by LM Studio.

Source: lmstudio.ai

The On-Device Story

Google partnered with Qualcomm, MediaTek, and its own Pixel team to optimize Gemma 4 for edge hardware. The E2B and E4B models support 2-bit and 4-bit weight quantization via LiteRT, and Google claims they process 4,000 input tokens across two distinct agentic skills in under three seconds on supported devices.

The Google AI Edge Gallery app now ships Gemma 4 E4B and E2B for both Android and iOS. One demo - Tiny Garden - runs multi-step autonomous agentic workflows completely on-device using voice commands decomposed into function calls. That's native function calling and structured JSON output running locally, with no cloud round-trip.
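The local function-calling loop can be pictured as follows: the model emits a JSON object naming a tool and its arguments, and the app dispatches it to ordinary code. This is a minimal sketch. The JSON shape, the `water_plant` tool, and the `dispatch` helper are hypothetical illustrations, not Gemma 4's actual tool-call format or the Tiny Garden demo's code.

```python
import json

def water_plant(plant: str, amount_ml: int) -> str:
    """Hypothetical local tool the model is allowed to call."""
    return f"watered {plant} with {amount_ml} ml"

# Registry of callable tools, keyed by the name the model is told to use.
TOOLS = {"water_plant": water_plant}

def dispatch(model_output: str) -> str:
    """Parse a structured JSON tool call and run the matching local function."""
    call = json.loads(model_output)      # raises ValueError on malformed JSON
    name = call["name"]
    args = call.get("arguments", {})
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name}")
    return TOOLS[name](**args)

# Stand-in for what a model might emit after hearing "give the basil a little water".
model_output = '{"name": "water_plant", "arguments": {"plant": "basil", "amount_ml": 50}}'
result = dispatch(model_output)
print(result)
```

Keeping an explicit registry means the model can only trigger functions the app has chosen to expose, which matters when everything runs unattended on the device.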

Arm published a companion blog confirming optimized performance across Arm-based devices, covering Android phones, Chromebooks, and single-board computers. For the home GPU crowd, the 26B MoE fits in approximately 17 GB with default quantization, while the 31B dense needs around 19 GB - both within range of a single consumer GPU with 24 GB VRAM.
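Those footprints can be sanity-checked with simple arithmetic: weight memory is roughly parameter count times bits per weight. The sketch below assumes 1 GB = 10^9 bytes and counts weights only, ignoring the KV cache, activations, and runtime overhead. Because the quoted sizes may include some of that overhead, the implied bits-per-weight figures are upper bounds.

```python
def weight_gb(params_billion, bits_per_weight):
    """Approximate weight storage in GB (10^9 bytes), weights only."""
    return params_billion * bits_per_weight / 8

def implied_bits(params_billion, size_gb):
    """Average bits per weight implied by a quoted footprint."""
    return size_gb * 8 / params_billion

# Footprints quoted in the article for the two larger models.
print(f"26B MoE at 17 GB   -> {implied_bits(26, 17):.2f} bits/weight")
print(f"31B dense at 19 GB -> {implied_bits(31, 19):.2f} bits/weight")

# Edge case from the article: E2B (5.1B total parameters) at 2-bit.
print(f"E2B at 2-bit       -> {weight_gb(5.1, 2):.3f} GB")
```

Both larger models land at about 5 bits per weight, and the 2-bit E2B comes to roughly 1.3 GB of weights, consistent with the 1.5 GB figure.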

Gemma 4's edge models target phones, Raspberry Pis, and IoT devices with under 1.5 GB RAM footprint.

Source: newsroom.arm.com

What Changed From Gemma 3

Gemma 3 topped out at 27B parameters with a 128K context window and used Google's proprietary Gemma license. Gemma 4 brings several architectural upgrades:

Per-Layer Embeddings (PLE) inject a small residual signal from a second embedding table into every decoder layer. This helps the smaller models punch above their weight class.
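As a rough picture of the PLE idea, the sketch below looks up a token in a second table that holds one small vector per decoder layer and adds that vector to the hidden state after each layer. It is heavily simplified and not Gemma 4's actual design: the table layout, the toy dimensions, the `double` stand-in layer, and the plain addition (with no projection or gating) are all assumptions made for illustration.

```python
def run_decoder(token_id, main_table, ple_table, layers):
    """Run a toy decoder stack, adding a per-layer embedding residual after each layer."""
    hidden = list(main_table[token_id])            # ordinary input embedding
    for layer_idx, layer in enumerate(layers):
        hidden = layer(hidden)
        residual = ple_table[token_id][layer_idx]  # this token's vector for this layer
        hidden = [h + r for h, r in zip(hidden, residual)]
    return hidden

def double(hidden):
    """Stand-in for a real decoder layer."""
    return [2.0 * v for v in hidden]

# Hypothetical toy tables: one token, hidden size 2, two layers.
main_table = {0: [1.0, 2.0]}
ple_table = {0: [[0.1, 0.1], [0.2, 0.2]]}
layers = [double, double]

hidden = run_decoder(0, main_table, ple_table, layers)
print(hidden)
```

The point of the pattern is that the extra table is only looked up, never multiplied through, so it adds token-specific information at every layer at little compute cost.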

Shared KV Cache lets the last N layers reuse key-value states from earlier layers, cutting redundant projections. That translates to faster inference and lower memory usage, especially on long contexts.
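The memory effect of sharing is easy to estimate. In the sketch below, the last `num_shared` layers point at the cache of the last layer that still owns one. That mapping, and the layer count, head count, head size, and sequence length, are illustrative assumptions rather than Gemma 4's published configuration.

```python
def kv_source_layers(num_layers, num_shared):
    """Map each layer to the layer whose key/value cache it reads."""
    if not 0 <= num_shared < num_layers:
        raise ValueError("num_shared must be in [0, num_layers)")
    first_shared = num_layers - num_shared
    # Assumed policy: shared layers reuse the last layer that owns a cache.
    last_owner = first_shared - 1
    return [i if i < first_shared else last_owner for i in range(num_layers)]

def kv_cache_bytes(layers_with_cache, kv_heads, head_dim, seq_len, bytes_per_value=2):
    """Keys plus values for every caching layer, at 2 bytes per value by default."""
    return 2 * layers_with_cache * kv_heads * head_dim * seq_len * bytes_per_value

# Hypothetical configuration for illustration only.
NUM_LAYERS, NUM_SHARED = 30, 10
sources = kv_source_layers(NUM_LAYERS, NUM_SHARED)
owners = len(set(sources))  # layers that actually store K/V

full = kv_cache_bytes(NUM_LAYERS, kv_heads=4, head_dim=128, seq_len=8192)
shared = kv_cache_bytes(owners, kv_heads=4, head_dim=128, seq_len=8192)
print(f"{full / 1e6:.0f} MB without sharing, {shared / 1e6:.0f} MB with sharing")
```

Because cache size scales linearly with sequence length, the absolute savings grow with context, which is why the benefit is most visible on long prompts.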

Variable Aspect Ratio Vision with configurable token budgets (70 to 1,120 tokens per image) gives developers a quality-vs-speed knob for image processing. The vision encoder now uses learned 2D positions with multidimensional RoPE.
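The token budget translates directly into how many images fit in a prompt. The sketch below assumes "256K" means 256 × 1,024 tokens and that every image uses the same budget; `max_images` is a hypothetical helper, not part of any Gemma API.

```python
CONTEXT_256K = 256 * 1024  # assumed interpretation of "256K"

def max_images(context_tokens, tokens_per_image, reserved_text_tokens=0):
    """How many images fit after setting aside room for text."""
    available = context_tokens - reserved_text_tokens
    return max(available, 0) // tokens_per_image

# The two ends of the budget range quoted in the article.
low_detail = max_images(CONTEXT_256K, 70)
high_detail = max_images(CONTEXT_256K, 1120)
print(f"70 tokens/image: {low_detail} images; 1,120 tokens/image: {high_detail} images")
```

The two ends of the range differ by a factor of 16, so the setting matters most for workloads that batch many images or video frames into one prompt.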

The context window doubled for the larger models - from 128K to 256K. And the license flipped from Google's custom terms to Apache 2.0, removing commercial restrictions that previously limited enterprise adoption.

Training covered 140+ languages, up from Gemma 3's already broad multilingual support. All four variants support native function calling and structured JSON output - capabilities that Gemma 3 required workarounds for.

The Open-Weight Race

The Apache 2.0 move is the licensing story. Previous Gemma models used Google's own terms, which imposed usage restrictions that made enterprise legal teams nervous. Apache 2.0 puts Gemma 4 on the same licensing footing as Alibaba's Qwen series (also Apache 2.0) and makes it more permissive than Meta's Llama models, which use a custom license. Google frames this as giving developers "complete digital sovereignty" over their models and data.

Day-one framework support is broad: Hugging Face Transformers, TRL, Transformers.js, vLLM, Ollama, llama.cpp, MLX, LM Studio, NVIDIA NIM/NeMo, SGLang, Unsloth, and more. GGUF quantizations are available through the ggml-org collection. The models are on Hugging Face, Kaggle, Ollama, and Google AI Studio. For local deployment, the MoE variant is the sweet spot - with roughly 4B active parameters (3.8B), you get 26B-class intelligence at 4B-class speed on consumer hardware.
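As one example of local deployment, the sketch below builds a request for Ollama's local REST endpoint using only the standard library. The endpoint path and the `model`, `prompt`, and `stream` fields follow Ollama's generate API. The model tag `gemma4:26b` is an assumption and should be replaced with whatever tag the Ollama library actually lists.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint
MODEL_TAG = "gemma4:26b"  # hypothetical tag; check the Ollama library for the real one

def build_request(prompt, model=MODEL_TAG, url=OLLAMA_URL):
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def generate(prompt, model=MODEL_TAG):
    """Send the request and return the generated text (requires a running server)."""
    with urllib.request.urlopen(build_request(prompt, model)) as resp:
        return json.loads(resp.read())["response"]

# Usage, once a server is running and the model has been pulled:
#   print(generate("Summarize the Apache 2.0 license in one sentence."))
```

Nothing is sent until `generate` is called, so the request-building part can be inspected or tested without a server.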

The competitive picture is busy. Llama 4 Scout offers a 10M token context window that Gemma 4 can't touch. Qwen 3.5 leads on multilingual and coding tasks at similar parameter counts. Mistral's small models compete at the edge. Gemma 4's argument is intelligence-per-parameter: more capability per byte of model weight than anything else available. The LMArena #3 ranking for the 31B variant, alongside models with 20-30x more parameters, supports that claim - at least on the benchmarks Google highlights.

What to Watch

Google didn't publish SWE-bench scores with the launch. Given the strong LiveCodeBench numbers (80% for the 31B), the omission is worth noting - SWE-bench tests real-world repository-level coding that single-problem benchmarks don't capture.

The "most capable open model" claim leans on per-parameter efficiency, not absolute performance. Larger models from DeepSeek, Meta, and Alibaba still outperform Gemma 4 on raw scores when parameter count isn't a constraint.

Independent evaluations from the community will fill in the gaps over the coming weeks. The initial LMArena placements are promising, but arena rankings shift as more users test edge cases. For anyone building on open-weight models in the 30B class, Gemma 4 immediately becomes a required evaluation target - particularly the MoE variant, which offers a rare combination of frontier-class scores at consumer-hardware inference speeds.

Sources:

- Google Blog - Gemma 4 announcement

- Hugging Face - Welcome Gemma 4

- SiliconANGLE - Gemma 4 brings complex reasoning to low-power devices

- 9to5Google - Google announces Gemma 4 with Apache 2.0

- Engadget - Google releases Gemma 4

- LMStudio - Gemma 4 models page

- Arm Newsroom - Gemma 4 on Arm

- Google Developers Blog - Agentic skills on the edge with Gemma 4