Thinking Machines Picks Google Cloud for First GB300 Deal

Mira Murati's AI lab signs a single-digit-billion deal with Google Cloud for GB300 chip access, its first cloud provider commitment, as frontier labs race to lock in next-gen compute.

Mira Murati's Thinking Machines Lab has signed its first cloud provider deal, committing to Google Cloud for access to NVIDIA GB300-powered compute in a contract valued at single-digit billions of dollars. TechCrunch reported the agreement today as an exclusive, noting that the deal gives Thinking Machines priority access to GB300 systems - the Vera Rubin generation of NVIDIA hardware - offering 2x the training and serving throughput of the previous Blackwell lineup.

This is the lab's first formal cloud infrastructure commitment. The deal is non-exclusive, but it establishes Google Cloud as TML's primary cloud partner for model training and deployment.

TL;DR

- Single-digit billions deal between Google Cloud and Thinking Machines Lab, announced April 22

- TML gets GB300 (Vera Rubin) chip access - 2x faster training and serving vs prior generation

- First cloud provider partnership for TML; deal is non-exclusive

- Google bundles storage, Kubernetes, and Spanner with GPU compute

- Exact dollar amount and GB300 deployment timeline are undisclosed

What Google Cloud Is Delivering

The GB300 Chip Stack

GB300 is NVIDIA's Vera Rubin architecture - the generation following Blackwell. Compared to previous B200 systems, Vera Rubin chips deliver twice the training throughput and up to 30x the inference speed relative to H100s, driven by higher HBM3e memory capacity and faster NVLink interconnects. At cluster scale, that interconnect bandwidth - 1.8 TB/s between GPUs - is what makes training runs on very large models practical without stalling on memory transfers.

# NVIDIA GB300 Vera Rubin - specs per node

gpus_per_node: 8

gpu_memory: 288 GB HBM3e per GPU # 2304 GB per node

nvlink_bandwidth: 1.8 TB/s

training_vs_b200: 2x faster

serving_vs_h100: 30x faster

# Source: NVIDIA GTC 2026

Google Cloud began offering GB300 capacity to select customers in early 2026, initially for reinforcement learning and large-scale inference workloads. Thinking Machines is among the first labs to receive production access at scale.

Bundled Infrastructure

Google isn't selling raw GPU slots. The deal packages storage, Kubernetes orchestration through GKE, and Spanner database capacity alongside the compute allocation. That matters operationally. A lab at TML's stage - still pre-product, building the training infrastructure from scratch - benefits from a managed stack rather than integrating storage, networking, and orchestration from separate providers.

Myle Ott, a founding researcher at Thinking Machines, told TechCrunch: "Google Cloud got us running at record speed with the [reliability] needed." That's a practitioner endorsement focused on operations, not benchmarks.

Google operates data centers across the US and Europe - the same infrastructure backbone that Thinking Machines Lab will draw on through the new cloud agreement.

Source: commons.wikimedia.org

Google operates data centers across the US and Europe - the same infrastructure backbone that Thinking Machines Lab will draw on through the new cloud agreement.

Source: commons.wikimedia.org

What Thinking Machines Needs

Training at Scale

TML hasn't disclosed what it's building, but its infrastructure choices make the ambitions readable. In March 2026, NVIDIA committed a gigawatt of Vera Rubin compute directly to the lab, a supply the Financial Times valued at tens of billions of dollars. A gigawatt of AI data center capacity maps to hundreds of thousands of high-end GPUs - frontier scale by any measure. The Google Cloud deal adds a managed cloud layer on top of that raw capacity.

The combination suggests TML is running large training jobs that need both dedicated hardware (the NVIDIA direct deal) and elastic cloud capacity (the Google Cloud deal) to handle workload spikes and deployment infrastructure.

Choosing Google Over Amazon

AWS and Microsoft Azure were the obvious alternatives. Both have comparable GPU inventory and existing relationships with frontier AI labs - Microsoft anchors OpenAI's compute, Amazon has Anthropic's dedicated Trainium cluster. By going to Google Cloud, TML gives Google a reference customer for its GB300 offering, while getting access to a Kubernetes and database stack that Google has spent years hardening for enterprise scale.

The deal is non-exclusive, which preserves TML's ability to add AWS or Azure capacity later. But Google's bundling play - making Spanner, GKE, and storage part of the same contract - creates operational switching costs that a lower GPU price elsewhere can't easily offset.

| Compute Layer | Provider | Status |

|---|---|---|

| Direct Vera Rubin capacity | NVIDIA | Announced March 2026, deployment 2027 |

| Cloud GPU + managed services | Google Cloud | Signed April 2026, timeline undisclosed |

| Enterprise cloud (AWS, Azure) | None confirmed | Non-exclusive deal leaves door open |

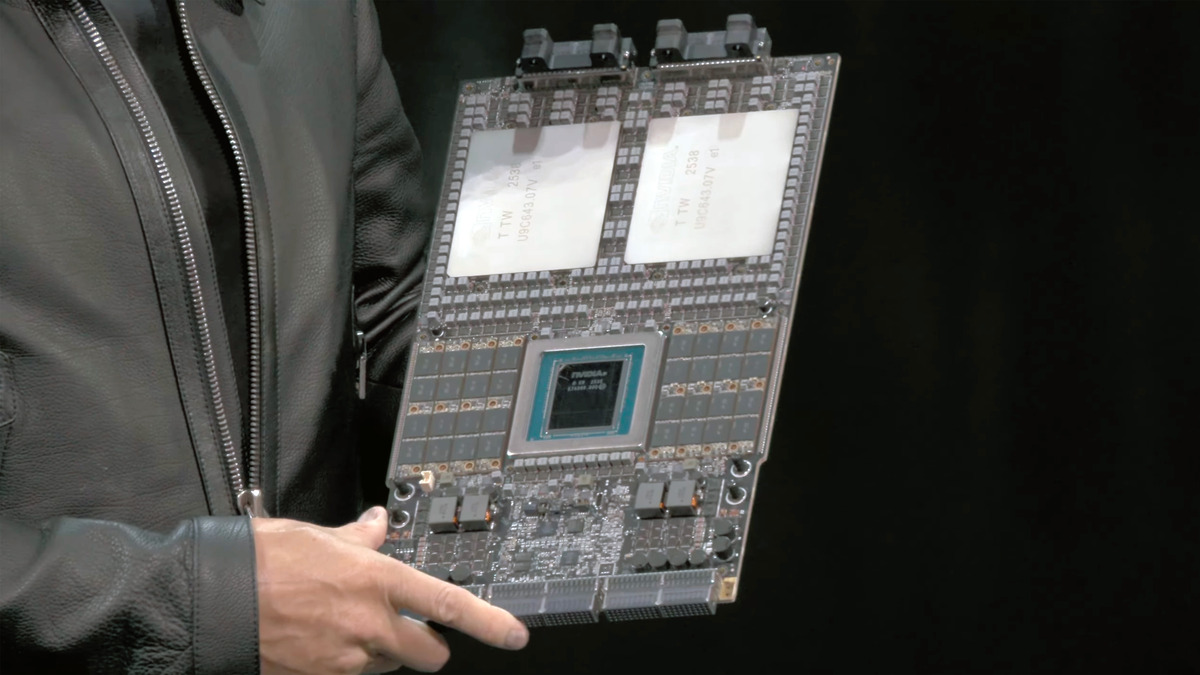

The NVIDIA Vera Rubin Superchip - each board pairs an 88-core Vera CPU with two Rubin GPUs and eight SOCAMM memory modules. Google Cloud is among the first to offer these systems to external customers.

Source: tomshardware.com

The NVIDIA Vera Rubin Superchip - each board pairs an 88-core Vera CPU with two Rubin GPUs and eight SOCAMM memory modules. Google Cloud is among the first to offer these systems to external customers.

Source: tomshardware.com

The Cloud Wars for Frontier Labs

Google Cloud's strategy here mirrors what Microsoft did with OpenAI and Amazon did with Anthropic: get a high-profile frontier lab committed early, before their compute appetite is fully known. Frontier labs are disproportionate buyers - a single training run can consume millions of GPU-hours - and they shape what infrastructure requirements look like for the next generation of AI deployments.

The difference now is that Vera Rubin hardware is newer than what OpenAI or Anthropic locked in during their early cloud commitments. Google can offer a hardware generation advantage that Amazon, with its Trainium line, can't match directly. Whether that translates into a durable competitive moat depends on how quickly AWS scales its own GB300 inventory and how sticky the bundled services turn out to be.

The deal lands as Google Cloud is trying to establish itself as the default cloud for the next wave of frontier AI labs before those labs are well-known enough to have negotiating leverage. Getting Thinking Machines before any public model launch - before the lab's name appears on any benchmark - is the point.

Where It Falls Short

Three things this announcement doesn't answer.

The dollar amount is vague. "Single-digit billions" spans $1B to $9B, an 8x range that changes what the deal signals about TML's scale and Google Cloud's pricing leverage. Neither party disclosed the full contract value or per-GPU pricing terms.

The timeline is missing. GB300 instances at production scale aren't fully generally available yet. The deal is signed, but when Thinking Machines runs its first major training job on GB300 hardware through Google Cloud - and whether that's Q3 2026 or later - isn't clear from the announcement.

The lab's output is still unknown. Thinking Machines Lab has been operating for about 15 months and has not publicly released any models or benchmarks. The infrastructure commitments are enormous relative to its public footprint. That's not unusual for a frontier AI lab in the training phase, but it means there's no independent evidence yet that the compute is producing results that justify the scale of investment.

Sources: TechCrunch exclusive - Google Thinking Machines deal