Google Catches First AI-Built Zero-Day in Wild

Google's Threat Intelligence Group confirmed criminals used an AI model to discover and weaponize a zero-day 2FA bypass - the first documented case of an AI-built exploit prepared for a real-world attack campaign.

A Python script landed in Google's threat intelligence pipeline sometime in early 2026 with some unusual characteristics. Its comments and docstrings explained what the code does, step by step, in the tone of a developer writing for a student. And its header carried a CVSS score - the industry-standard severity rating for software vulnerabilities - that the National Vulnerability Database (NVD) had never assigned.

That hallucinated CVSS score was the clincher. No human exploit writer invents a severity score for their own attack tool. Together with the other markers, it led Google's Threat Intelligence Group (GTIG) to conclude with high confidence that an AI had built this.

TL;DR

- Google's Threat Intelligence Group confirmed the first real-world case of criminals using AI to discover and weaponize a zero-day vulnerability

- The exploit - a 2FA bypass in a popular open-source web administration platform - was identified by structural markers typical of LLM-produced code: educational docstrings, textbook Python format, and a hallucinated CVSS score

- Google worked with the vendor to patch quietly before mass exploitation; the criminal group planned to compromise many systems at once

- State-sponsored groups in China, North Korea, and Russia are already running AI across the full attack chain - this criminal case isn't an outlier, it's the leading edge of a wider shift

- GTIG chief analyst John Hultquist: "The game's already begun and we expect the capability trajectory is pretty sharp"

What the Code Gave Away

The script was designed to bypass two-factor authentication on a widely used open-source web administration tool. Google hasn't named the platform - it was patched quietly through responsible disclosure - but the vulnerability class is public: a semantic logic flaw, not a memory corruption bug or a missing input check.

The attack chain: a criminal group used an AI model to identify a zero-day in a web admin tool and built an exploit that bypassed two-factor authentication.

Source: cloud.google.com

The semantic flaw that AI found first

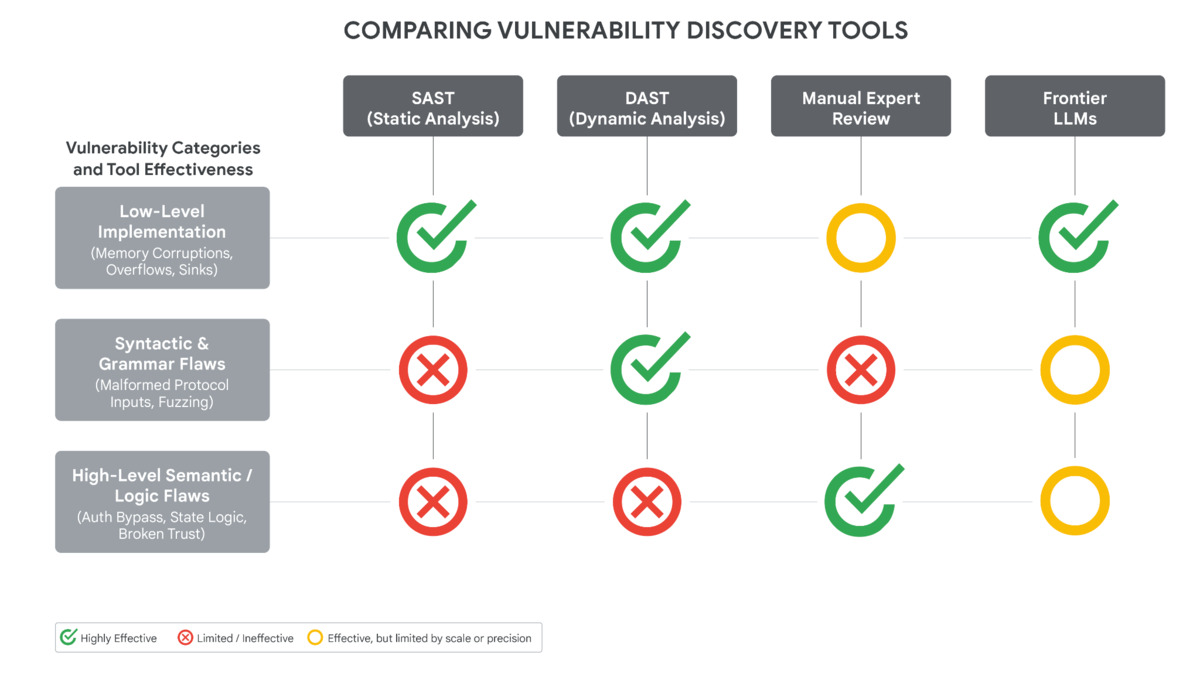

Semantic logic flaws are exactly the kind of vulnerability traditional scanning tools miss. Static analyzers and fuzzers catch memory errors, malformed input handling, and syntax-level problems. Semantic flaws require understanding intent - what a developer meant to do versus what the code actually does.

In this case, the authentication logic contained a hardcoded trust exception that contradicted the 2FA enforcement rule. A developer had introduced it, likely as a convenience measure. Traditional tools saw syntactically valid code. An AI model, capable of reasoning about developer intent and spotting contradictions between policy logic and implementation, found it.
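To make the flaw class concrete, here is a minimal, hypothetical sketch of a 2FA check with a hardcoded trust exception. The `LoginRequest` type, the `source` field, and the `TRUSTED_SOURCES` allowlist are illustrative assumptions, not code from the affected platform. Every line is syntactically valid, which is why pattern-based scanners pass it; the bug only shows up when you compare the exception against the stated policy that every login must complete both factors.

```python
from dataclasses import dataclass

# Hypothetical illustration only; not code from the affected platform.
TRUSTED_SOURCES = {"127.0.0.1", "internal-api"}  # assumed "convenience" allowlist


@dataclass
class LoginRequest:
    username: str
    password_ok: bool  # first factor verified
    otp_ok: bool       # second factor verified
    source: str        # where the request claims to come from


def is_authenticated_flawed(req: LoginRequest) -> bool:
    """Policy: every login must pass both factors."""
    if not req.password_ok:
        return False
    # Hardcoded trust exception: contradicts the policy in the docstring,
    # because any caller that can set or spoof `source` skips the second factor.
    if req.source in TRUSTED_SOURCES:
        return True
    return req.otp_ok


def is_authenticated_fixed(req: LoginRequest) -> bool:
    """Same policy, enforced without exceptions."""
    return req.password_ok and req.otp_ok


req = LoginRequest("alice", password_ok=True, otp_ok=False, source="internal-api")
print(is_authenticated_flawed(req), is_authenticated_fixed(req))  # True False
```

The sketch also shows why this kind of bypass still needs valid credentials: with `password_ok=False`, both versions reject the login before the exception is ever reached.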

GTIG's report is explicit about why this class of flaw suits AI: "Frontier LLMs excel at identifying these types of high-level flaws and hardcoded static anomalies. Though frontier LLMs struggle to navigate complex enterprise authorization logic, they have an increasing ability to perform contextual reasoning, effectively reading the developer's intent to correlate the 2FA enforcement logic with the contradictions of its hardcoded exceptions."

The telltale markers

The giveaway wasn't the exploit logic itself. Human pentesters write functional exploit scripts too. The giveaway was how the code was organized and commented. Per GTIG's analysis, the script contained:

```python
# CVE-XXXX-XXXX: [REDACTED] Admin Panel - 2FA Authentication Bypass
# CVSS Score: 9.4 (Critical) <-- this score was never assigned in NVD
#
# Description:
# This exploit targets the hardcoded trust exception in the 2FA
# enforcement logic. The developer assumption that certain callers
# should be trusted implicitly contradicts the broader policy...
#
# Usage: python3 exploit.py --target <host> --user <email> --pass <pass>
#   --target   Target URL (e.g., https://admin.example.com)
#   --user     Valid account email
#   --pass     Valid account password

def exploit_2fa_bypass(session, target, username, password):
    """
    Authenticate with credentials, then trigger the hardcoded
    exception path to bypass secondary authentication. The flaw
    arises because the developer assumed internal callers could
    be trusted without completing the 2FA flow...
    """
```

Illustrative structure matching GTIG's description: educational docstrings explaining every step, a hallucinated CVSS score not present in NVD, detailed help menus, and clean textbook Python formatting - all structural markers of LLM-generated code, atypical of human-written attack tools.

Clean, thorough, pedagogical: typical of what a language model produces when it writes code. Human attackers rarely document their own tools this carefully.

The Vulnerability It Targeted

The 2FA bypass required valid user credentials. An attacker couldn't walk in unauthenticated - they needed to already know a username and password for the platform. That lowers the raw severity. In practice, it doesn't matter much: credential stuffing against admin panels is standard practice for criminal groups operating at scale. Credentials are cheap. Zero-days are expensive. This exploit made the combination efficient.

GTIG's vulnerability tool comparison. Traditional SAST and DAST scanners fail on high-level semantic and logic flaws - the exact class this zero-day falls into. Frontier LLMs succeed where automated tools don't.

Source: cloud.google.com

The comparison chart in GTIG's report is worth sitting with. Manual expert review catches semantic flaws reliably. But expert review doesn't scale - it's measured in hours per target, not systems per hour. Frontier LLMs, per Google's findings, now match expert performance on this vulnerability class and do so at machine speed. The cost of finding this category of flaw has dropped considerably for anyone with API access.

Disrupted Before Use

Google's GTIG identified the exploit before the criminal group launched it. The group, described by CyberScoop as having "a strong record of high-profile incidents and mass exploitation," was preparing a broad campaign. Google worked with the platform vendor to patch the flaw through responsible disclosure. By the time the attackers were ready to launch, the flaw no longer existed.

"We finally uncovered some evidence this is happening. This is probably the tip of the iceberg."

That's John Hultquist, chief analyst at GTIG, speaking to CyberScoop. He added: "The game's already begun and we expect the capability trajectory is pretty sharp."

The report confirms that Gemini wasn't used to build the exploit. Neither was Anthropic's Claude Mythos Preview, the restricted model that autonomously discovered thousands of zero-days in its own testing earlier this year. The model used by the criminal group remains unidentified. GTIG has high confidence an AI was involved based on code structure alone - but doesn't know which one.

Ryan Dewhurst of watchTowr, commenting on the broader trend, put it plainly: "AI is already accelerating vulnerability discovery, reducing the effort needed to identify, validate, and weaponize flaws."

State Actors Are Already Running This Playbook

The criminal case stands out as the first confirmed real-world instance, but it doesn't arrive in isolation. Since its February 2026 report on AI-related threats, GTIG has tracked several state-sponsored groups running AI across the full attack chain.

APT45 (North Korea) - North Korean operators submitted thousands of repetitive prompts to AI models to validate CVE exploits at scale, using AI to automate the verification step in vulnerability weaponization.

UNC2814 (China-nexus) - Used Gemini to conduct vulnerability research on targeted systems, outsourcing reconnaissance to an LLM.

APT27 (China) - Used Gemini to develop tools for managing command-and-control infrastructure.

Russia-nexus operators - Deployed AI-created code to obfuscate the CANFAIL and LONGSTREAM malware families, and used AI voice cloning to impersonate journalists in the Overload disinformation campaign targeting Ukraine.

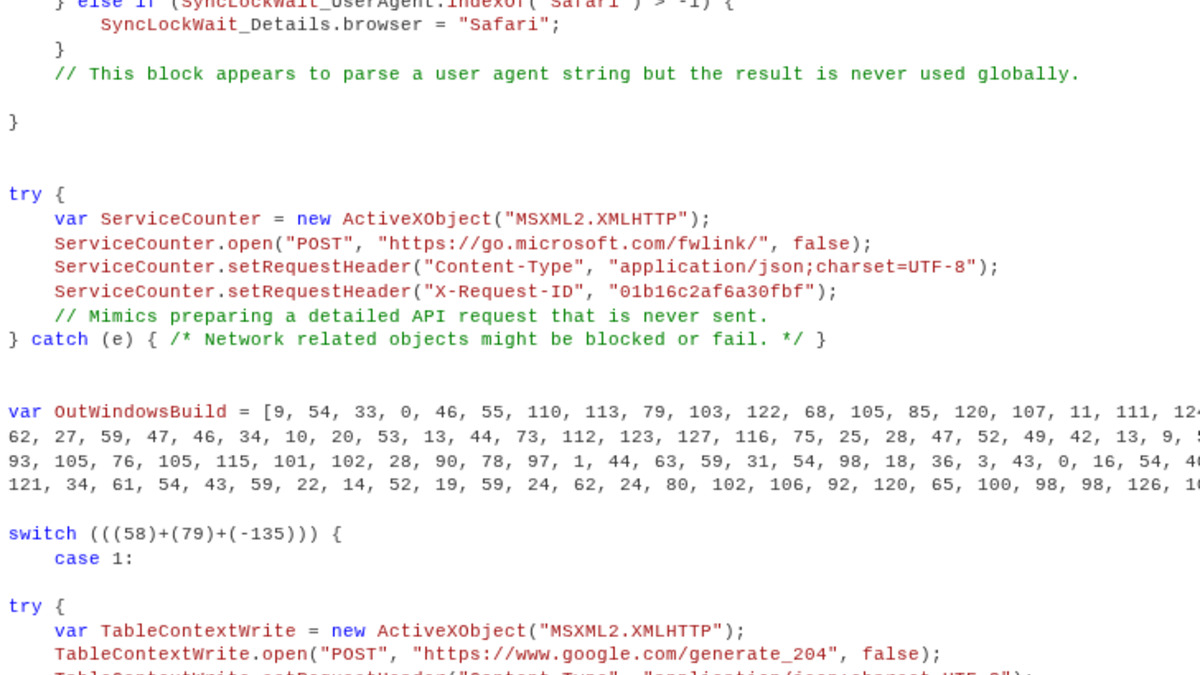

An example of malware code containing the kind of explanatory comments LLMs generate. Comments like "this block appears to parse a user agent string but the result is never used globally" aren't typical of human-written attack tools.

Source: cloud.google.com

Criminal groups historically track behind state actors by six to eighteen months. That pattern is holding for AI-enabled offense: state groups ran these workflows through 2025, and criminal organizations are reaching the same capability now.

Both OpenAI's Daybreak program and Anthropic's Project Glasswing are specifically designed to put comparable offensive AI capability in defenders' hands - a direct acknowledgment that the arms race is running and defenders need the same tools.

What Security Teams Should Do Now

Audit your web administration tools. Open-source admin panels are high-value targets. Check whether you're running unpatched versions of any system administration software and review your 2FA implementation for hardcoded exceptions or trust assumptions.
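Checking for unpatched versions is mostly inventory work. A minimal sketch, assuming you can export installed versions and know each vendor's first fixed release; the package names and version numbers below are placeholders, since Google has not named the affected platform.

```python
def parse_version(v: str) -> tuple:
    """Turn '4.2.1' into (4, 2, 1) so versions compare numerically, not as text."""
    return tuple(int(part) for part in v.split("."))


def unpatched(installed: dict, first_fixed: dict) -> list:
    """List packages whose installed version predates the first fixed release."""
    stale = []
    for name, version in installed.items():
        fixed = first_fixed.get(name)
        if fixed and parse_version(version) < parse_version(fixed):
            stale.append(name)
    return sorted(stale)


# Placeholder inventory; names and versions are hypothetical.
installed = {"example-admin-panel": "4.2.1", "example-dashboard": "1.9.0"}
first_fixed = {"example-admin-panel": "4.2.3", "example-dashboard": "1.8.5"}
print(unpatched(installed, first_fixed))  # ['example-admin-panel']
```

This parser only handles plain dotted numeric versions; real tooling should use a proper version parser (for example `packaging.version.Version`) to cope with pre-release and vendor suffixes.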

Add semantic logic flaws to your review scope. Traditional SAST and DAST won't find them. Commission expert code review or red-team assessment specifically targeting authentication logic, trust assumptions, and state-machine contradictions in critical systems.
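One way to make trust assumptions reviewable is to state the policy as an invariant and test it across every caller context, not just the happy path. A sketch using only the standard library; `authenticate`, its planted flaw, and the `CALLER_SOURCES` list are hypothetical stand-ins for your own login function and the contexts your deployment actually sees.

```python
from itertools import product

# Hypothetical caller contexts; replace with the sources your deployment sees.
CALLER_SOURCES = ["203.0.113.5", "127.0.0.1", "internal-api", "sso-proxy"]


def authenticate(password_ok: bool, otp_ok: bool, source: str) -> bool:
    """Stand-in for the login function under review (contains a planted flaw)."""
    if password_ok and source == "internal-api":
        return True  # hardcoded exception the invariant should catch
    return password_ok and otp_ok


def second_factor_violations(auth) -> list:
    """Invariant: no login succeeds without a valid second factor, from any source."""
    violations = []
    for source, password_ok in product(CALLER_SOURCES, [True, False]):
        if auth(password_ok, False, source):  # second factor always fails here
            violations.append((source, password_ok))
    return violations


print(second_factor_violations(authenticate))  # [('internal-api', True)]
```

The design choice is to enumerate contexts rather than trust the code path a reviewer expects: the exception only surfaces because the test tries a source nobody thought of as a login path.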

Compress your patch response time. When vendors push security patches for authentication components, treat them as emergency rollouts. The window between disclosure and exploitation is narrowing as AI accelerates attacker capability.

Watch for AI-generated exploit code in threat feeds. The structural markers GTIG identified - excessive educational docstrings, hallucinated vulnerability metadata such as CVSS scores, textbook formatting - are detectable. Ask your threat intelligence providers whether they're tracking this signature class.
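Two of those signals can be checked mechanically: whether a claimed CVE ID has a known record, and whether a claimed score agrees with its severity label under the CVSS v3.x qualitative rating scale (Critical is 9.0 to 10.0). A sketch, assuming you keep a local mapping of CVE IDs to recorded base scores exported from an NVD feed; the `known_scores` mapping and the CVE ID used below are hypothetical. A redacted or placeholder ID can't be looked up at all, which is itself worth flagging.

```python
import re

# CVSS v3.x qualitative severity rating scale.
SEVERITY_BANDS = [
    ("None", 0.0, 0.0),
    ("Low", 0.1, 3.9),
    ("Medium", 4.0, 6.9),
    ("High", 7.0, 8.9),
    ("Critical", 9.0, 10.0),
]

HEADER = re.compile(r"CVSS Score:\s*(\d{1,2}\.\d)\s*\((\w+)\)")
CVE_ID = re.compile(r"CVE-\d{4}-\d{4,}")


def band_for(score: float) -> str:
    """Map a one-decimal CVSS base score to its qualitative label."""
    for label, low, high in SEVERITY_BANDS:
        if low <= score <= high:
            return label
    raise ValueError(f"score out of range: {score}")


def audit_header(text: str, known_scores: dict) -> list:
    """Flag CVE/CVSS claims in a script header that don't check out."""
    findings = []
    cve = CVE_ID.search(text)
    claim = HEADER.search(text)
    if cve is None:
        findings.append("no resolvable CVE ID")
    if claim:
        score, label = float(claim.group(1)), claim.group(2)
        if band_for(score) != label:
            findings.append(f"label {label} does not match score {score}")
        if cve and cve.group(0) not in known_scores:
            findings.append(f"{cve.group(0)} not in local NVD snapshot")
        elif cve and known_scores[cve.group(0)] != score:
            findings.append("claimed score differs from recorded score")
    return findings


header = "# CVE-XXXX-XXXX: Admin Panel\n# CVSS Score: 9.4 (Critical)"
print(audit_header(header, known_scores={}))  # ['no resolvable CVE ID']
```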

Assume the next one won't be caught first. GTIG's disruption here was proactive. Most incidents aren't discovered until after exploitation is under way.

Sources:

- Google Cloud Blog - GTIG AI Threat Tracker Report, May 2026

- Google Blog - Threat Intelligence Group Report Overview

- CyberScoop - Google spotted an AI-developed zero-day before attackers could use it

- Engadget - Google announces first-ever discovery of a zero-day exploit made with AI

- BleepingComputer - Google: Hackers used AI to develop zero-day exploit for web admin tool

- The Register - Google says criminals used AI-built zero-day in planned mass hack spree

- Infosecurity Magazine - Hackers Observed Using AI to Develop Zero-Day for the First Time