Google AI Edge Gallery Puts Gemma 4 on Your Phone

Google's AI Edge Gallery officially launched on the Play Store and App Store on April 9, running Gemma 4 E2B and E4B models fully offline on any phone from Android 12 or iOS 17 onward.

The gap between "runs on a phone" and "actually useful on a phone" has been the core problem with on-device AI since the beginning. The models that fit in 2GB of RAM have historically been too weak for serious tasks, and the ones worth using have required a data center or at least a chunky workstation.

Google's answer arrived officially on April 9. The AI Edge Gallery, previously a sideload-only developer demo, went live on the Google Play Store and Apple App Store. It runs Gemma 4's two edge variants - E2B and E4B - completely on-device. No API key, no cloud connection, no data leaving the device unless you manually opt into Gemini text enhancement mode.

TL;DR

- Google AI Edge Gallery is now on Play Store and App Store as of April 9, 2026

- Runs Gemma 4 E2B (under 1.5GB RAM, 2.54GB download) or E4B on mid-range to flagship devices

- Offline by default: PDF summarization, image analysis, audio transcription, code generation, device control

- LiteRT-LM runtime processes 4,000 tokens across two Agent Skills in under 3 seconds

- Also works on Raspberry Pi 5 (7.6 tok/s), Qualcomm Dragonwing IQ8 NPU (31 tok/s), and WebGPU in the browser

What Shipped

The E2B and E4B Models

The Gallery auto-selects between two models based on the device. Flagship phones like the Pixel 10 Pro XL get the E4B. Mid-range hardware lands on E2B, which runs in under 1.5GB of memory on some devices using 2-bit and 4-bit quantized weights. The E2B download weighs in at 2.54GB - sizable, but one-time.

Both models carry a 128K context window and support text, vision, and audio input. They're released under Apache 2.0, and the underlying weights and training code are on Hugging Face and GitHub.

A few months ago, a 2B parameter model doing offline image analysis or audio transcription would have been a party trick. The Gemma 4 architecture change - per-layer embedding that keeps memory footprints small without crushing capability - is what makes E2B actually useful rather than just technically possible.

The LiteRT-LM Runtime

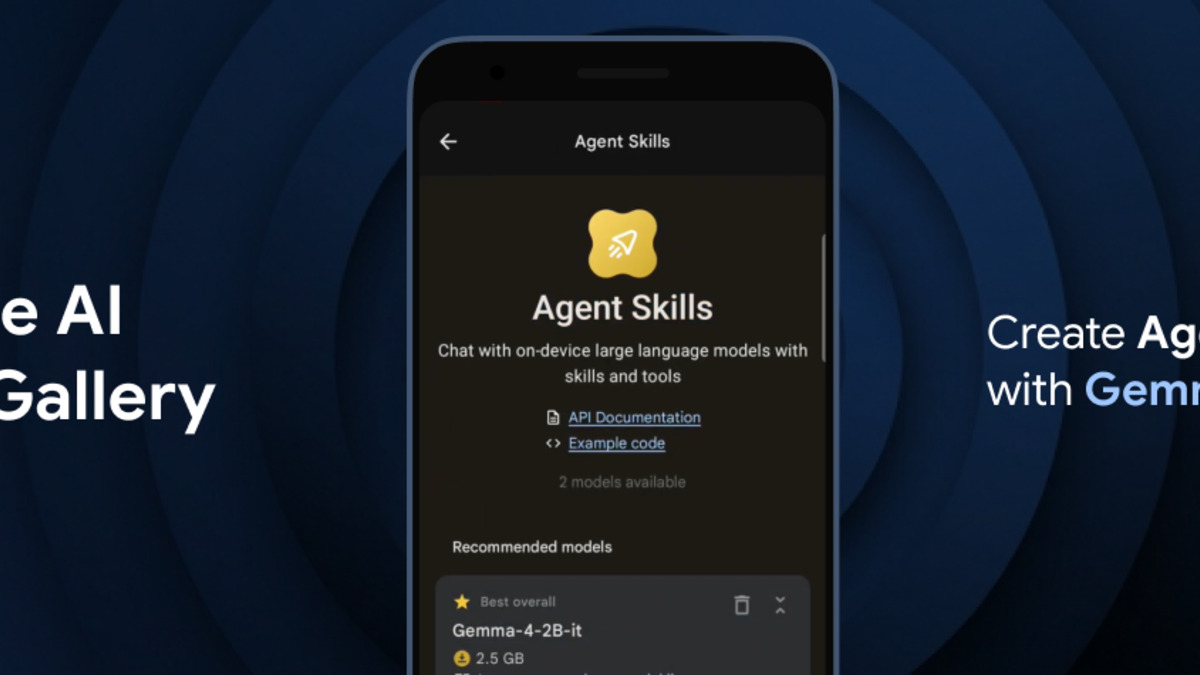

The performance story depends on LiteRT-LM, Google's on-device inference runtime. The headline number: 4,000 input tokens across two Agent Skills in under three seconds. That covers a typical long prompt or PDF section.

LiteRT-LM brings constrained decoding for structured outputs, dynamic CPU/GPU context switching, and memory-mapped per-layer embeddings. Developers building their own apps on top of it get the same runtime that powers the Gallery and Eloquent.

# Install via pip for app developers

pip install ai-edge-litert

# Basic usage example

from ai_edge_litert import Runner

runner = Runner.from_model_path("gemma4-e2b.task")

result = runner.generate("Summarize the following text: ...", max_tokens=256)

LiteRT-LM also exposes constrained decoding, so you can force outputs into valid JSON or other structured formats without post-processing - useful for function-calling workflows inside Agent Skills.

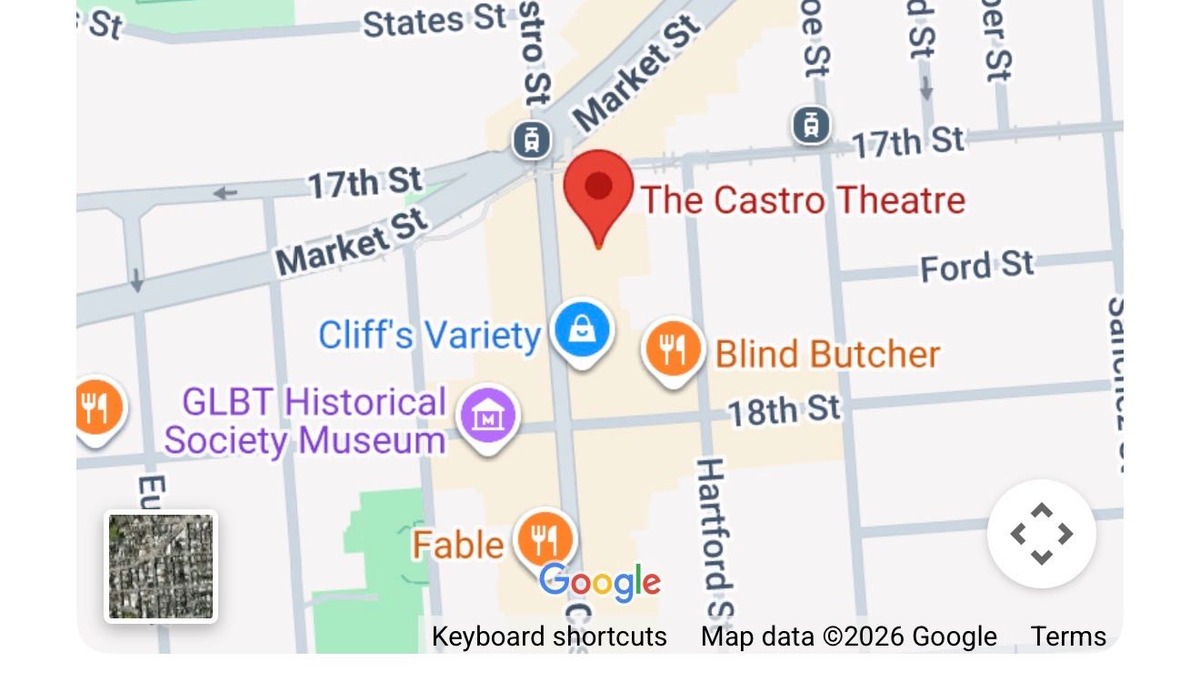

The Agent Skills panel in AI Edge Gallery, showing interactive HTML-based tools including Wikipedia lookup, QR code generation, and map integration.

Source: simonwillison.net

The Agent Skills panel in AI Edge Gallery, showing interactive HTML-based tools including Wikipedia lookup, QR code generation, and map integration.

Source: simonwillison.net

Hardware Requirements and Compatibility

| Platform | Minimum | Performance |

|---|---|---|

| Android | Android 12+ | E2B on mid-range, E4B on flagships |

| iOS | iOS 17+ | E2B on iPhone 15+, E4B on Pro models |

| Raspberry Pi 5 | Pi OS, CPU only | 7.6 decode tokens/s |

| Qualcomm Dragonwing IQ8 | NPU required | 31 decode tokens/s |

| Browser | Chrome/Edge with WebGPU | Variable, GPU-dependent |

| Linux/macOS/Windows | Desktop deployment | Via LiteRT-LM or llama.cpp |

No Hugging Face account is required for the mobile app. Custom models can be loaded by pointing the app at a local file path, which opens up fine-tunes and quantized variants for users who want to swap in something domain-specific.

Component Breakdown

Agent Skills

The previous version of the Gallery treated skills as demo content. This release positions them as an extension mechanism. Eight interactive HTML-based tools ship by default: interactive maps, Wikipedia queries, QR code generation, hash calculation, mood tracking, mnemonic password generation, a text spinner, and a kitchen adventure mini-game. Developers can write and load their own skills with a small JavaScript API.

Simon Willison, who tested the iOS release shortly after it appeared, called it "the first time I've seen a local model vendor release an official app for trying out their models on iPhone," and flagged the Agent Skills demo as the most technically interesting part. His testing did surface an app freeze on follow-up prompts, which Google hasn't publicly acknowledged yet.

Audio Scribe and Ask Image

Audio Scribe handles offline transcription up to 30 seconds per clip. That's short - long recordings need to be split. Ask Image runs multimodal queries against photos taken or uploaded on-device: object identification, document parsing, OCR, and UI understanding all work without sending anything to a server.

Gemma 4 banner from the Google Developers Blog covering the edge model launch.

Source: storage.googleapis.com/gweb-developer-goog-blog-assets

Gemma 4 banner from the Google Developers Blog covering the edge model launch.

Source: storage.googleapis.com/gweb-developer-goog-blog-assets

Eloquent: The First Production App on Top of This Stack

Five days before the Gallery update went live on app stores, Google quietly released a separate app: AI Edge Eloquent, an iOS dictation tool powered by the same Gemma-based ASR stack. It appeared in the App Store on April 7 with no announcement, no press release, and no product blog post.

Eloquent records speech, strips filler words automatically, and offers post-processing options: Key Points, Formal, Short, and Long. In fully offline mode, nothing leaves the device. Cloud mode sends only the cleaned text to Gemini models for further polish, not the raw audio.

The app imports terminology from Gmail contacts on request and supports a custom word list for jargon and names. It's currently English-only and unavailable in parts of Europe. An Android version is listed in the App Store metadata but hasn't shipped yet.

The AI Edge Eloquent dictation interface showing live transcription with cleanup options.

Source: ghacks.net

The AI Edge Eloquent dictation interface showing live transcription with cleanup options.

Source: ghacks.net

Eloquent isn't just a standalone product. It's Google testing whether a Gemma-backed ASR pipeline running fully on-device is ready for consumer software. If it is, the same stack could land inside Gboard, Google Docs, and the Android keyboard within months.

Where It Falls Short

The 30-second cap on Audio Scribe is a real limitation. Anything longer than a short voice note needs to be broken up manually before transcription. Competing apps like Whisper-based local runners on desktop handle arbitrary length without this constraint - and llama.cpp now supports several audio model families at similar quality levels on the same hardware.

Eloquent's European absence is partly a regulatory choice (EU AI Act compliance takes time) and partly a reminder that "open source" at the model level doesn't mean open access at the product level. The weights are available; the app isn't.

The lack of persistent conversation logs in the Gallery app means sessions are ephemeral. Every new query starts fresh unless you copy text out manually. That's acceptable for a developer demo, but it limits practical use for anything that builds on previous context.

Battery consumption during sustained inference hasn't been publicly benchmarked. Running a 4B model for an extended session on a mid-range device is going to draw more power than streaming from the cloud - the math only works in offline mode's favor when network calls are expensive or unavailable.

Android support for the Gallery's full feature set still requires flagships. The E2B model runs on mid-range hardware, but some Agent Skills and the full vision pipeline only activate on devices that pass a performance threshold Google hasn't documented publicly. What that threshold is exactly isn't clear.

The Gallery and Eloquent together represent a real shift in what's deployable on a consumer phone without cloud infrastructure. The E2B model fitting in under 1.5GB of RAM while doing multimodal inference and tool-calling is a different kind of result than the edge demos from two years ago. Whether Google turns this infrastructure into products that reach the 3 billion Android users who never interact with developer apps depends on what the Gboard and Docs teams do with it next.

Sources: