GitHub Copilot Will Train on Your Code This April

Starting April 24, GitHub will use Copilot Free, Pro, and Pro+ users' interaction data to train AI models by default - with the opt-out buried in settings.

On March 25, GitHub quietly updated its Privacy Statement and Terms of Service. Starting April 24, 2026, the company will use interaction data from Copilot Free, Pro, and Pro+ users to train AI models - opted in by default, with no proactive notification to users.

TL;DR

- GitHub will collect code snippets, accepted suggestions, file names, and chat inputs from personal Copilot users to train AI models - effective April 24

- An opt-out is available in Settings, but data collection is on by default; users who previously opted out are unaffected

- Copilot Business and Enterprise users are excluded - the protection extends to paid enterprise plans, not paid personal plans

- Private repo content "at rest" is protected, but code typed during an active Copilot session can be collected

- Community reaction: 59 thumbs-down vs. 3 positive responses in GitHub's own discussion thread

What GitHub Is Changing

The announcement landed in GitHub's changelog on March 25, tucked in among routine privacy statement housekeeping. The substance: GitHub will now process Copilot interaction data from personal account users "to develop, improve, and train our products, services, and technologies, including AI and machine learning models."

It's the kind of update that looks clerical until you read what "interaction data" actually covers.

The Data GitHub Will Collect

GitHub's policy lists the following as fair game for training once users are opted in:

- Accepted or modified model outputs (which suggestions you keep, which you edit)

- Code snippets provided as context around your cursor

- Comments and documentation you write alongside code

- File names and repository structure

- Navigation patterns within repositories

- Chat inputs and multi-turn conversation history

- Feedback signals like thumbs-up and thumbs-down ratings

That's a detailed behavioral fingerprint of how you write code - not just anonymous usage telemetry.

Who Gets Opted In

The change applies to Copilot Free, Pro, and Pro+ subscribers. It doesn't apply to Copilot Business or Enterprise users, who are covered by existing Data Protection Agreements. Students and teachers are also excluded, as are users who had previously opted out of product improvement data collection.

For the millions of developers using Copilot on personal accounts - including those paying $10 or $39 per month for the Pro and Pro+ tiers - the April 24 date is the switch-on point.
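The eligibility rules above can be read as a short decision procedure. The following is a minimal sketch of that reading, not anything GitHub publishes: the `CopilotAccount` class, its field names, and the plan labels are hypothetical names invented for illustration, and the logic only mirrors the exclusions described in this section.

```python
from dataclasses import dataclass

# Illustrative plan labels only; these are not identifiers from any GitHub API.
PERSONAL_PLANS = {"free", "pro", "pro+"}


@dataclass
class CopilotAccount:
    """Hypothetical model of the account attributes the policy turns on."""
    plan: str                                # e.g. "free", "pro", "pro+", "business", "enterprise"
    is_student_or_teacher: bool = False
    previously_opted_out: bool = False       # opted out of product improvement data collection
    training_setting_disabled: bool = False  # turned the new setting off manually


def interaction_data_in_scope(account: CopilotAccount) -> bool:
    """Return True if, under this article's reading of the policy,
    the account's interaction data would be used for training."""
    if account.plan.lower() not in PERSONAL_PLANS:
        return False  # Business and Enterprise plans are excluded
    if account.is_student_or_teacher:
        return False  # students and teachers are excluded
    if account.previously_opted_out or account.training_setting_disabled:
        return False  # an earlier or a new opt-out is honored
    return True       # everyone else is opted in by default


print(interaction_data_in_scope(CopilotAccount(plan="pro")))
print(interaction_data_in_scope(CopilotAccount(plan="enterprise")))
```

The point of the sketch is the last line of the function: on a personal plan, doing nothing means being in scope.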

The Opt-Out Default Problem

GitHub's chief product officer Mario Rodriguez framed this as a mutual benefit: "We believe the future of AI-assisted development depends on real-world interaction data from developers like you."

Mario Rodriguez, GitHub CPO, who signed off on the policy change.

Source: github.com

The pitch is that opted-in users will see "more accurate code suggestions, better detection of potential bugs, and a deeper understanding of development workflows." Rodriguez added that non-participating users "will still be able to take full advantage of the AI features you know and love" - an implicit acknowledgment that opting out costs users nothing in capabilities.

What it costs GitHub is the data. The opt-out default maximizes that haul. Researchers and legal scholars have argued for years that consent for sensitive data collection should be explicit, not assumed. GitHub isn't legally wrong here, but it's making a choice that prioritizes data volume over developer agency.

The developer community noticed. In GitHub's own community discussion thread, the announcement received 59 thumbs-down reactions against just 3 positive ones. Among the comments, only one official representative - Martin Woodward, GitHub's VP of Developer Relations - offered support for the change.

The Private Code Loophole

GitHub's announcement includes a line meant to reassure: private repository content stored "at rest" is not used for training. Issues and discussions at rest are also excluded.

This reassurance has a significant gap. According to The Register's coverage, "code snippets from private repositories can be collected and used for model training while the user is actively engaged with Copilot." If you're working in a private repo and Copilot is active, the code around your cursor - along with any suggestions you accept - is potentially in scope.

This distinction matters for developers at companies where private code represents proprietary logic, unreleased products, or regulated data. Copilot Business and Enterprise plans close this hole contractually. Personal plan users, including solo developers working on commercial projects, don't have that protection unless they opt out.

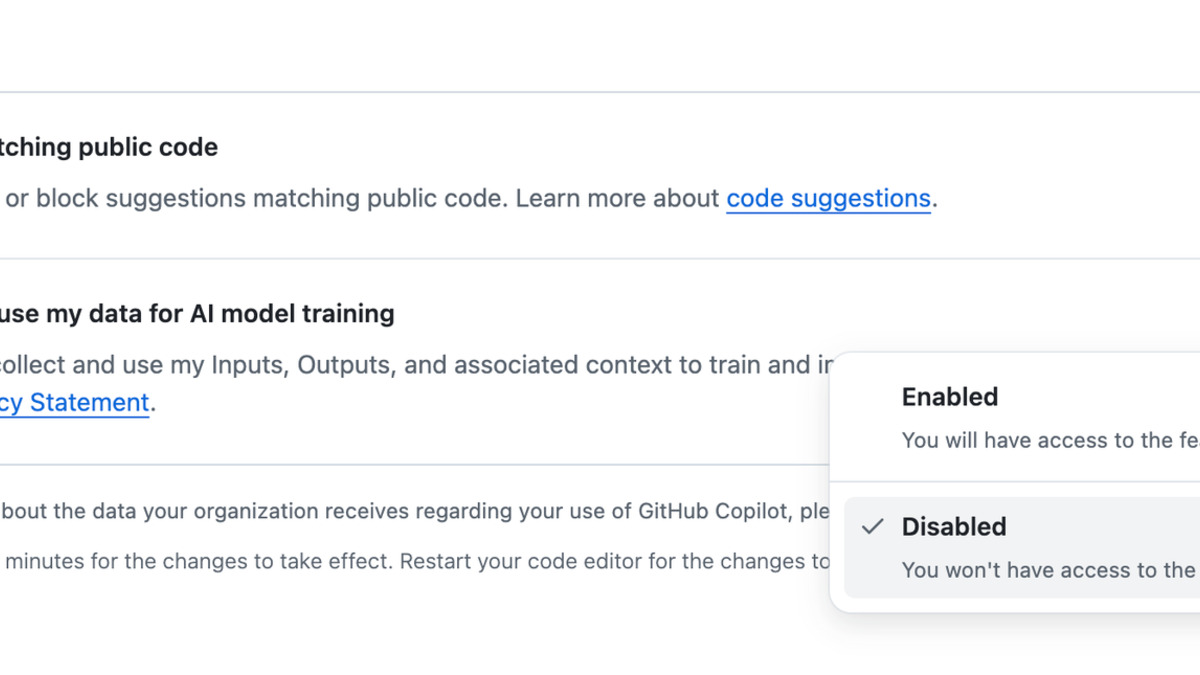

How to Opt Out

The opt-out path is buried three clicks deep in account settings. To disable training data collection:

- Go to your GitHub account settings

- Navigate to Settings > Copilot > Features

- Under Privacy, find "Allow GitHub to use my data for AI model training"

- Set it to Disabled

The opt-out toggle lives under Settings > Copilot > Features > Privacy. You have until April 24 to disable it before collection begins.

Source: howtogeek.com

Users who previously opted out of product improvement data collection retain that preference automatically. Everyone else who hasn't touched this setting is in.

What GitHub Didn't Disclose

GitHub's announcement is remarkable for what it omits. The policy doesn't specify:

- Any minimum interaction threshold before data is included in training

- What anonymization or aggregation techniques are applied

- Whether code that appeared in training data could surface in suggestions to other users

- How long collected data is retained before being used for training

GitHub does commit to not sharing interaction data with "third-party AI model providers for their own independent training." Data may go to Microsoft affiliates - which include Azure AI and related infrastructure teams - but not, say, OpenAI directly.

The ToS update also added a new Section J covering AI features specifically, and updated the user-produced content license to "explicitly extend to our affiliates and clarifies that it includes using your content for providing and improving AI models." For European Economic Area and UK users, GitHub has designated AI model development as a "legitimate interest" under applicable data protection law - a legal basis that doesn't require consent but can be challenged.

The Business / Enterprise Exception Tells the Story

The clearest signal in this policy is who it doesn't touch. Enterprise customers have contractual protections that personal users don't. GitHub knows those customers' legal and compliance teams would reject an opt-out data collection policy, so Business and Enterprise accounts were never on the table.

Personal users get the opt-out default because GitHub calculated they'd push back less. That calculation appears to be wrong - the community response suggests developers quickly understood what was happening - but the asymmetry shows where the real leverage sits.

This isn't the first time GitHub's Copilot infrastructure has drawn scrutiny. An earlier supply chain attack called RoguePilot showed how Copilot's deep integration with development environments creates attack surface. A Microsoft 365 Copilot bug let the AI read confidential emails that DLP policies were supposed to block. And just last month, GitHub expanded Copilot's multi-model options to include Claude and Codex for all paid users - growing the product's footprint while this policy was presumably in the works.

For developers who want a deeper look at the implications of training data policies more broadly, the open-source vs. proprietary AI guide covers the structural tensions that make this kind of move predictable.

The practical advice is simple: if you use Copilot on a personal account and have concerns about your code being used for training, opt out before April 24. Whether you do or don't, GitHub will have your answer.

Sources:

- Updates to GitHub Copilot interaction data usage policy - GitHub Blog

- GitHub Changelog: Privacy Statement and Terms of Service updates - GitHub Blog

- GitHub: We going to train on your data after all - The Register

- GitHub Enables Copilot Data Collection for AI Training by Default - gHacks

- GitHub Copilot AI training data default opt-out - WinBuzzer

- GitHub jumps on the bandwagon - Help Net Security