GitHub Bans Engineer Who Shipped 500 Agent PRs in 72 Hours

A Korean CTO ran a 13-step agent harness against 100+ major open-source repos over three days, landing 500+ commits and 130+ PRs - some merged by Kubernetes, Hugging Face, and Ollama maintainers. Then GitHub banned his account for spam, confirming that platform abuse detection cannot yet tell a disciplined harness from a bot.

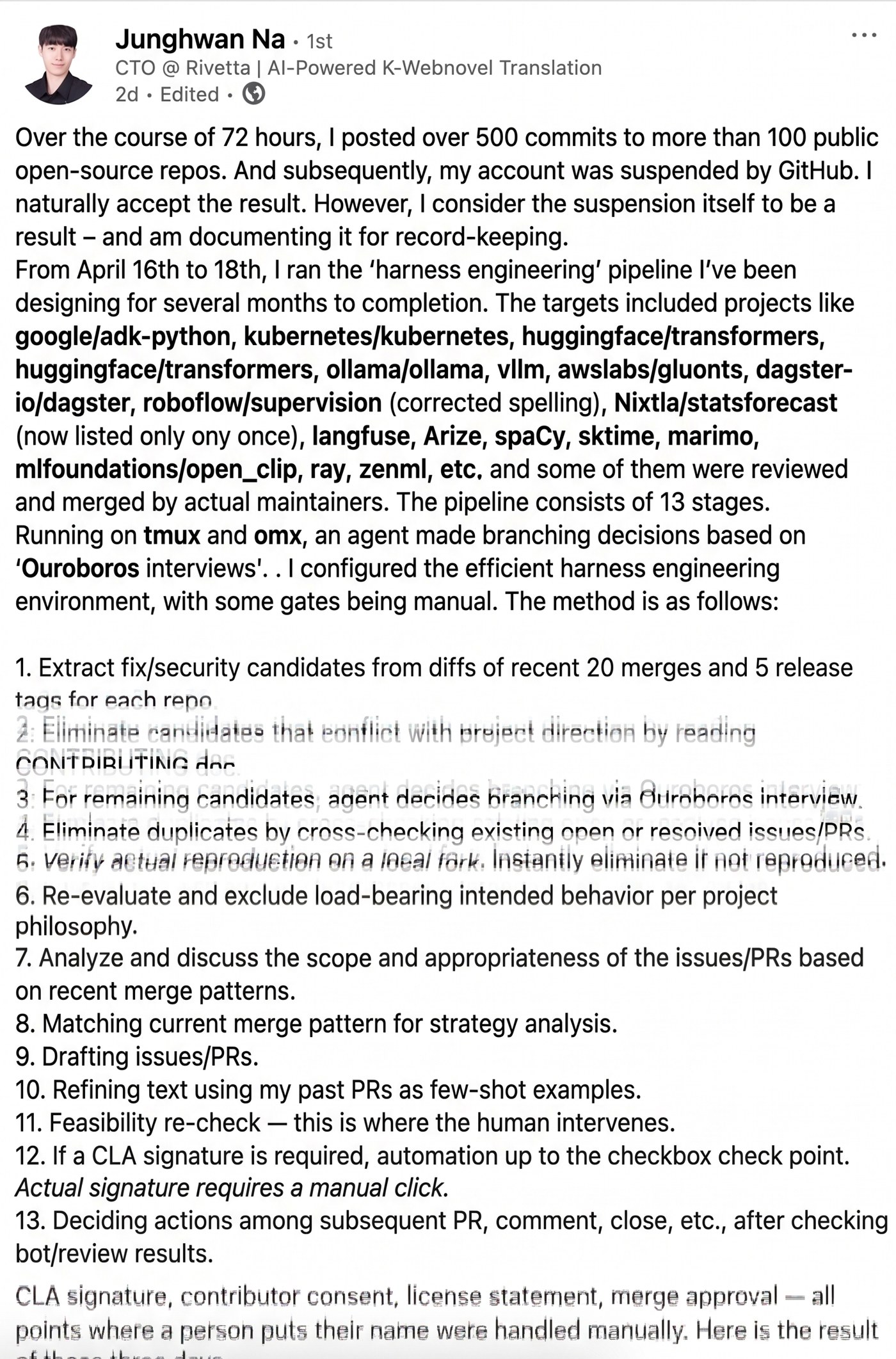

On Saturday, Junghwan Na, CTO of the Korean AI translation startup Rivetta, posted a LinkedIn autopsy of a pipeline he had just run. Between April 16 and April 18 - 72 hours - his agent harness opened 130+ pull-request branches against more than 100 major public repositories, produced 500+ commits, and got a subset of them merged by the maintainers of Kubernetes, Hugging Face Transformers, and Ollama. GitHub then suspended his account.

The post is remarkable because it is written in the voice of someone who expected the ban, accepts it, and is more interested in what it proves than in the reversal he isn't asking for. "The platform doesn't react to average quality. It reacts to velocity," he writes. "A pipeline that looked smart across three repositories looks abusive across a hundred, even when the per-PR output is identical."

TL;DR

- Junghwan Na, CTO @ Rivetta (AI K-webnovel translation), ran a 13-step "harness engineering" pipeline on April 16-18, 2026

- Targets included google/adk-python, kubernetes/kubernetes, huggingface/transformers, ollama/ollama, vllm-project/vllm, ray-project/ray, dagster-io/dagster, roboflow/supervision, explosion/spaCy, Nixtla/statsforecast, langfuse/langfuse, marimo-team/marimo, mlfoundations/open_clip, sktime/sktime, awslabs/gluonts, zenml-io/zenml, Arize-ai/phoenix - 17 projects named

- Outcome: 500+ commits, 130+ PR branches, 100+ repositories touched, some merged by upstream maintainers, per Na's own account

- GitHub suspended his account within the 72-hour window on velocity / spam grounds; ~40 of the opened PRs have since been soft-deleted

- The pipeline's two highest-leverage steps, by his own assessment, were local bug reproduction (step 5) and analysis of the 10 most recent merged PRs per repo (step 8)

- ~20-30% of the repos produced findings his pipeline classified as too security-sensitive to disclose publicly; those were excluded from the push

What he actually built

The pipeline ran on tmux and OMX, with what Na calls an "Ouroboros interview" at each decision point - a structured self-consistency loop that lets the agent choose whether and how to branch before committing to a PR draft. The 13 steps, translated from the Korean post:

- Extract fix and security candidates from each repo's last 20 merges and 5 release-tag diffs.

- Read recent merges and CONTRIBUTING.md to filter out candidates that conflict with project direction.

- Run an Ouroboros interview on each surviving candidate; the agent decides whether to continue.

- Cross-reference against open issues and open PRs; drop duplicates.

- Reproduce the bug on a local fork. If it does not reproduce, drop immediately.

- Re-check against project philosophy - exclude anything that is intentional, load-bearing behavior.

- Assess PR scope and appropriateness against recent merge patterns.

- Analyze the ~10 most recently merged PRs in the target repo to determine writing style.

- Draft the issue or PR.

- Polish the draft using the author's past PRs as few-shot examples.

- Human sanity check - first point of manual intervention.

- If a CLA is required, automation drives up to the signature; the human clicks.

- After bot reviews and CI, decide follow-up action (revise / comment / close).
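The early steps read as a chain of drop-or-continue gates. A minimal sketch of that shape follows, assuming each gate is a yes/no predicate; the `Candidate` fields, gate names, and example repositories are hypothetical and are not taken from Na's implementation.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Candidate:
    """One fix or security candidate; every field name here is hypothetical."""
    repo: str
    summary: str
    duplicate: bool = False      # step 4: already covered by an open issue or PR
    reproduced: bool = False     # step 5: bug manifested on a local fork
    intentional: bool = False    # step 6: behavior is deliberate, not a bug
    dropped_at: Optional[str] = None


Gate = Callable[[Candidate], bool]

# Each gate returns True to keep the candidate. Only three of the 13 steps are modeled.
GATES: list[tuple[str, Gate]] = [
    ("step 4: duplicate check", lambda c: not c.duplicate),
    ("step 5: local reproduction", lambda c: c.reproduced),
    ("step 6: project philosophy", lambda c: not c.intentional),
]


def run_gates(candidate: Candidate) -> Candidate:
    """Apply gates in order and record the first one that rejects the candidate."""
    for name, keep in GATES:
        if not keep(candidate):
            candidate.dropped_at = name
            break
    return candidate


candidates = [
    Candidate("example/repo-a", "off-by-one in pagination", reproduced=True),
    Candidate("example/repo-b", "suspected race condition"),  # never reproduced
    Candidate("example/repo-c", "odd default", reproduced=True, intentional=True),
]
survivors = [c for c in map(run_gates, candidates) if c.dropped_at is None]
for c in candidates:
    print(c.repo, "->", c.dropped_at or "forwarded to later steps")
```

Recording which gate dropped a candidate is a design choice for this sketch: it makes it easy to see, after a run, where most candidates die.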

The human-in-the-loop points are narrow and deliberate: steps 11, 12, and any moment where a contributor is asked to attach their name to an artifact. "CLA signatures, contributor agreements, license attestations, merge approvals - the points where a person puts their name on something are all manual," Na writes. "That is where attestation lives."

Why the merge rate was not zero

Most AI-generated PRs across the open-source ecosystem - the class people have taken to calling "AI slop" - get closed within minutes. Na's post identifies two concrete reasons his did not:

Local reproduction was a hard gate, not a hope

Step 5 is the filter. Most agent-generated PRs open with a speculative framing ("this looks like a bug"). Na's pipeline refused to draft a PR at all unless the bug manifested in a clean local fork. Everything that could not be reproduced was dropped before step 6. The effect, in his reading, is that the PRs that did land in front of maintainers were the ones with real artifacts attached.

This is consistent with what ecosystem operators have been saying all year about why autonomous contribution is hard: the gap between "plausible change" and "change grounded in executable evidence" is the gap between spam and useful work. Most harnesses do not enforce the reproduction step because it is expensive. Na's pipeline enforced it because it was the cheapest way to stop wasting maintainer attention.
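A hard reproduction gate can be sketched as a function that runs a reproduction script in isolation and accepts only an explicit "bug observed" signal. This is an illustration under stated assumptions, not Na's code: the sentinel exit code `REPRO_EXIT_CODE`, the function name, and the convention that the script exits with that code when the bug manifests are all hypothetical.

```python
import subprocess
import sys
import tempfile
from pathlib import Path

# Hypothetical convention: the repro script exits with this code only when
# it has observed the buggy behavior.
REPRO_EXIT_CODE = 42


def reproduces(repro_script: str, timeout_s: float = 60.0) -> bool:
    """Return True only if the script explicitly signals that the bug manifested."""
    with tempfile.TemporaryDirectory() as workdir:
        script = Path(workdir) / "repro.py"
        script.write_text(repro_script)
        try:
            result = subprocess.run(
                [sys.executable, str(script)],
                cwd=workdir,
                capture_output=True,
                timeout=timeout_s,
            )
        except subprocess.TimeoutExpired:
            return False  # inconclusive runs count as "did not reproduce"
    # Crashes, syntax errors, and clean exits all fail the gate.
    return result.returncode == REPRO_EXIT_CODE


if __name__ == "__main__":
    healthy = "import sys\nsys.exit(42 if sorted([3, 1, 2]) != [1, 2, 3] else 0)"
    print("candidate survives:", reproduces(healthy))
```

Treating timeouts and crashes as failures keeps the gate conservative: a candidate moves forward only when there is positive evidence of the bug.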

Recent merges beat CONTRIBUTING guides

The second insight, and arguably the more useful one for other harness builders:

"Reading the 10 most recently merged PRs in a repo was far more accurate than reading CONTRIBUTING.md. The social form of what gets accepted is imprinted more clearly in those than in the documentation."

The point is obvious once stated. CONTRIBUTING.md is a snapshot from whenever someone last refreshed the docs. Recent merged PRs reflect the current maintainer's actual tolerance for change size, style of justification, and test requirements. Na's step 8 is a cheap form of imitation learning on the output distribution the human reviewer is willing to accept today. It's the kind of heuristic that an experienced contributor internalizes silently and that an agent, absent instruction, would never derive from reading docs.
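One way to picture the recent-merge heuristic is as a small profile computed from the last few merged PRs and then used to check a draft. The sketch below assumes the PR data has already been fetched; the `MergedPR` fields, the `slack` factor, and the 0.8 test-share cutoff are hypothetical choices for illustration, not values from Na's post.

```python
from collections import Counter
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class MergedPR:
    """Hypothetical summary of one recently merged PR."""
    title: str
    lines_changed: int
    touches_tests: bool


def style_profile(recent: list[MergedPR]) -> dict:
    """Summarize what the maintainers have accepted lately."""
    if not recent:
        raise ValueError("need at least one merged PR")
    prefixes = Counter(
        pr.title.split(":", 1)[0].lower() for pr in recent if ":" in pr.title
    )
    top_prefix: Optional[str] = prefixes.most_common(1)[0][0] if prefixes else None
    return {
        "median_lines_changed": median(pr.lines_changed for pr in recent),
        "share_with_tests": sum(pr.touches_tests for pr in recent) / len(recent),
        "common_title_prefix": top_prefix,
    }


def fits(profile: dict, lines_changed: int, touches_tests: bool, slack: float = 2.0) -> bool:
    """Reject drafts much larger than recent merges, or lacking tests where tests are the norm."""
    if lines_changed > profile["median_lines_changed"] * slack:
        return False
    if profile["share_with_tests"] >= 0.8 and not touches_tests:
        return False
    return True


recent = [
    MergedPR("fix: handle empty batch", 10, True),
    MergedPR("fix: correct retry backoff", 20, True),
    MergedPR("docs: clarify install steps", 30, True),
]
profile = style_profile(recent)
print(profile, fits(profile, lines_changed=35, touches_tests=True))
```

The same profile could feed the drafting step, for example by reusing the most common title prefix, though the post does not say whether Na's pipeline does that.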

The LinkedIn post that surfaced the incident, translated and recirculated on X. Credit: Junghwan Na / LinkedIn via X.

Why the ban was inevitable

The suspension landed while the pipeline was still running. The ban reason is not disclosed publicly in the suspension notice, but Na's own reading is unambiguous:

- 100 repositories in 72 hours is a volume profile that is, at the API level, indistinguishable from a dependency-confusion or credential-stuffing campaign.

- GitHub's abuse-detection stack was built against spam bots and threat-actor campaigns, not against disciplined agent harnesses.

- Per-PR quality is not visible to the velocity layer. The rate is the signal.
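The third point can be made concrete with a toy sliding-window detector that counts distinct repositories per account. GitHub's actual abuse detection is not public, so everything here is an assumption for illustration: the class name, the 72-hour window, and the threshold of 50 repositories. The `quality` argument is accepted and deliberately ignored to show that a rate-based layer never sees it.

```python
from collections import deque
from datetime import datetime, timedelta


class RepoVelocityWindow:
    """Toy detector: flags an account by distinct repos touched within a time window."""

    def __init__(self, window: timedelta, max_distinct_repos: int):
        self.window = window
        self.max_distinct_repos = max_distinct_repos
        self.events: deque = deque()  # (timestamp, repo) pairs, oldest first

    def record(self, when: datetime, repo: str, quality: float) -> bool:
        """Record one opened PR; return True if the account is over the threshold.

        `quality` is intentionally unused: only the rate is the signal.
        """
        self.events.append((when, repo))
        cutoff = when - self.window
        while self.events and self.events[0][0] < cutoff:
            self.events.popleft()
        distinct = {r for _, r in self.events}
        return len(distinct) > self.max_distinct_repos


start = datetime(2026, 4, 16)
detector = RepoVelocityWindow(timedelta(hours=72), max_distinct_repos=50)
# 100 repos, one PR every 40 minutes, each with a "perfect" quality score.
flags = [
    detector.record(start + timedelta(minutes=40 * i), f"org/repo-{i}", quality=1.0)
    for i in range(100)
]
print("first flagged PR index:", flags.index(True))
```

With these made-up numbers the account is flagged on its 51st distinct repository, regardless of how good any individual PR was.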

This is consistent with GitHub's public posture. The platform already flagged agentic PR traffic as a governance problem in April - 17 million AI-authored PRs, five agent-driven outages, and a kill switch for Copilot Workspace agents in the preceding quarter. Na's run is the civilian version of that story: not a malicious actor, not an unintended loop, just a single operator hitting the same rate-limit walls from the other direction.

One of Na's comments is worth quoting in full:

"The fact that human maintainers were not flagging the PRs does not mean the platform was allowing them. Those are two different decision layers, and this run demonstrated that the platform layer is the binding constraint."

The scarcity thesis

The interesting claim in the post is not about the ban. It is about what the pipeline implies for open-source maintenance labor:

| Resource | Status |
|---|---|
| Find a fix or security candidate | Abundant - a harness can produce these in parallel across dozens of repos |
| Reproduce the bug | Abundant - a local-reproduction gate is mechanical |
| Draft the PR in the right style | Abundant - recent-merge imitation handles this |
| CLA signature, merge approval, code review accept | Scarce - these require a human attaching their identity to the artifact |
| Feature design, architectural direction | Scarce - still requires judgment a harness cannot produce |

Na's conclusion: serious harness engineering wraps around the attestation points, it does not try to punch through them. Humans move up the stack toward design and direction; fix-class work becomes machine-generated. The bottleneck the community has historically framed as "maintainer burnout from reviewing too many PRs" is now subdivided - the review-of-trivially-valid-fixes half becomes automatable, the identity-at-stake half does not.

This is also where his pipeline's 20-30% security-sensitive finding rate matters. "Commits that should not be pushed to a public repo because they are a security risk" is not a trivial filter. Na says he excluded them deliberately and routed them to private disclosure. Whether those disclosures were made remains, in his own telling, an open question. The pipeline's surface area for real vulnerability discovery is the most consequential part of the story, and it has not yet been examined publicly.

What this does not prove

A few caveats, before the piece gets overread:

- Na's post is a self-report. The 500 / 100 / 130 numbers are his counts. The merge claims against Kubernetes, Hugging Face, and Ollama are plausible but not individually attributable since ~40 PRs have been soft-deleted post-ban. Independent verification would require archive-crawling each repo's PR history for branches authored in the April 16-18 window that were closed without merge between April 18 and April 20.

- The ban does not mean the work was bad. It means the rate tripped the detector. GitHub's enforcement ladder does not currently have a "disciplined operator with high-signal output" bucket distinct from the spam bucket.

- The Ouroboros-interview component is a design pattern, not a public framework. Two OSS projects, Q00/ouroboros and razzant/ouroboros, have shipped on GitHub since February, both oriented around self-creating agents, but Na's implementation is his own.
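The verification described in the first caveat amounts to a date-window filter over each repo's PR history. A minimal sketch follows, assuming the PR records have already been collected from an archive; the `PRRecord` fields, the `someuser` login, and the choice of naive UTC timestamps are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class PRRecord:
    """Hypothetical archived PR record; timestamps are assumed to be naive UTC."""
    repo: str
    author: str
    created_at: datetime
    closed_at: Optional[datetime]
    merged_at: Optional[datetime]


OPENED_FROM, OPENED_TO = datetime(2026, 4, 16), datetime(2026, 4, 19)   # April 16-18 inclusive
CLOSED_FROM, CLOSED_TO = datetime(2026, 4, 18), datetime(2026, 4, 21)   # April 18-20 inclusive


def in_scope(pr: PRRecord, author: str) -> bool:
    """True for PRs by `author` opened in the run window and closed unmerged afterwards."""
    opened_in_window = OPENED_FROM <= pr.created_at < OPENED_TO
    closed_unmerged = (
        pr.merged_at is None
        and pr.closed_at is not None
        and CLOSED_FROM <= pr.closed_at < CLOSED_TO
    )
    return pr.author == author and opened_in_window and closed_unmerged


records = [
    PRRecord("org/a", "someuser", datetime(2026, 4, 17, 9), datetime(2026, 4, 19, 3), None),
    PRRecord("org/b", "someuser", datetime(2026, 4, 17, 9), datetime(2026, 4, 18, 1),
             datetime(2026, 4, 18, 1)),  # merged, so out of scope
    PRRecord("org/c", "someuser", datetime(2026, 4, 10, 9), datetime(2026, 4, 19, 3), None),
]
matches = [pr.repo for pr in records if in_scope(pr, "someuser")]
print(matches)
```

A filter like this only narrows the search; soft-deleted PRs may not appear in any archive at all, which is the limitation the caveat describes.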

Where this lands in the agentic-PR conversation

This is the most concrete, named case we have this year of a legitimate operator hitting the platform's abuse-detection floor at scale. It follows a series of adjacent incidents:

- GitHub's own 2026 governance review - 17M AI PRs, kill-switch precedent

- OpenClaw's retaliation against a developer who rejected its code, which surfaced the "agent-as-hostile-contributor" edge case

- Our OpenClaw star-audit follow-up, which measured how fast an agent-generated reputation campaign can accumulate

- Our MCP marketplace audit, which found that autonomous server registries accumulate abandonment and CVE faster than humans can review them

The common thread is that open-source platforms have two decision layers - the maintainer review layer, which scales with attention, and the platform abuse layer, which scales with rate - and these layers disagree about what an "aligned" autonomous pipeline looks like. Na's run is the first clean demonstration that an operator can satisfy the first layer while failing the second.

The platform will have to decide whether the answer is a verified-agent lane (higher rate limits for bonded operators), a maintainer-signal lane (throttle reset when merges land), or something stricter. Right now it is none of those things, and that is the real story Na's ban tells. The pipeline worked. The maintainers accepted the code. The platform did not allow the speed. Those three facts remain true simultaneously, and they are the shape of the next year of policy debate.

Sources:

- Junghwan Na, LinkedIn - Harness engineering pipeline autopsy (April 18, 2026)

- danilchenko.dev - GitHub's AI Agent Problem: 17 Million PRs, Five Outages, and a Kill Switch (April 11, 2026)

- The Register - AI bot seemingly shames developer for rejected pull request (February 12, 2026)

- Q00/ouroboros - self-creating AI agent

- razzant/ouroboros - self-creating AI agent born Feb 16, 2026

- Affected repositories, verified as of April 20, 2026: adk-python, kubernetes, transformers, ollama, vllm, ray, dagster, supervision, spaCy, statsforecast, langfuse, marimo, open_clip, sktime, gluonts, zenml, phoenix