Google Sunsets Vertex AI, Launches Agent Control Plane

Google replaced Vertex AI with the Gemini Enterprise Agent Platform at Cloud Next 2026 - a full-stack control plane that assigns every agent a cryptographic ID and routes all tool calls through a central policy gateway.

Every Vertex AI service is now deprecated as a standalone product. Google made that clear at Cloud Next 2026 in Las Vegas today: "Moving forward, all Vertex AI services and roadmap evolutions will be delivered exclusively through the Agent Platform, rather than as a standalone service." That's not a soft rebrand. It's a platform migration notice.

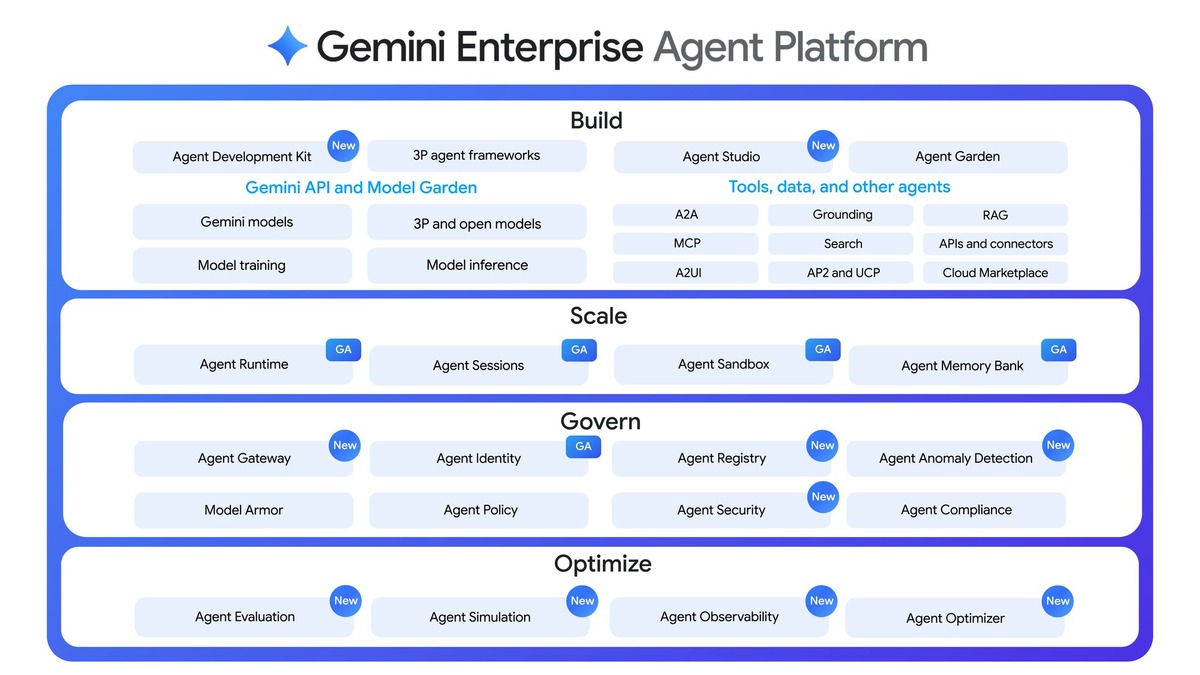

The replacement is the Gemini Enterprise Agent Platform - a four-pillar system for building, scaling, governing, and optimizing AI agents in production. If you showed up to the Cloud Next keynote expecting gradual Vertex AI improvements, you got a different kind of announcement.

TL;DR

- Vertex AI retires as standalone; Gemini Enterprise Agent Platform is its full replacement

- Four pillars: Build (Agent Studio + ADK), Scale (Agent Runtime + Memory Bank), Govern (Agent Identity + Agent Gateway), Optimize (Simulation + Evaluation)

- Every agent gets a unique cryptographic ID; all tool calls route through Agent Gateway, a central policy enforcement point

- ADK already processes 6+ trillion tokens per month; supports Python, Go, Java, Node.js, and C#

- No pricing announced; general availability slated for later in 2026

What Happened to Vertex AI

The Gemini Enterprise Agent Platform takes everything Vertex AI offered - model selection, fine-tuning, model building, notebooks - and adds a layer of agent-specific tooling that Vertex never had. Google's announcement made the relationship explicit: "The new Gemini Enterprise Agent Platform brings the model selection, model building, and agent building capabilities that customers love, together with new features for agent integration, DevOps, orchestration, and security."

For teams already using Vertex AI, the migration isn't optional. New features ship to the Agent Platform only, and existing Vertex AI workflows will need to be ported. Google hasn't given a hard deadline for when standalone Vertex AI access disappears, which is its own kind of pressure.

Inside the Four-Pillar Stack

Build: Agent Studio and ADK

Two entry points exist for building agents. Agent Studio is the low-code path - a visual interface where you design agent logic through drag-and-drop and can publish directly from the UI. The code-first path is the Agent Development Kit (ADK), an open-source framework that supports Python, Go, Java, Node.js, and C#, and already processes more than 6 trillion tokens per month across Gemini models.

A minimal ADK agent definition looks like this:

```shell
pip install google-adk
```

```python
from google.adk.agents.llm_agent import Agent


def get_current_time(city: str) -> dict:
    """Returns the current time in a specified city."""
    # Stub tool: returns a fixed time rather than doing a real lookup.
    return {"status": "success", "city": city, "time": "10:30 AM"}


root_agent = Agent(
    model='gemini-flash-latest',
    name='root_agent',
    description="Tells the current time in a specified city.",
    instruction="You are a helpful assistant. Use get_current_time for city time requests.",
    tools=[get_current_time],
)
```

Both paths share a runtime. Agent Studio projects export directly to ADK, and ADK agents deploy to Agent Runtime. Agent Garden supplies curated starter templates for common enterprise workflows - code modernization, invoice processing, financial analysis - so teams aren't building from scratch.

Scale: Runtime and Memory Bank

Agent Runtime provisions new agents with sub-second cold starts and supports multi-day autonomous workflows. The second capability matters for processes that can't fit inside a single API call, like a multi-day due diligence workflow or a continuous monitoring agent.

Memory Bank handles long-term context. It creates and curates memories dynamically from conversations, then surfaces them through Memory Profiles for low-latency recall. The design intent is that agents don't need to re-read thousands of tokens of conversation history on every call - the Memory Bank distills what's worth retaining and provides it on demand. Agent Sessions maps each interaction to a Custom Session ID that can reference CRM records or internal databases, giving agents continuity across separate conversations with the same user.
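
To make the idea concrete, here is a toy sketch of "distill, then recall by session ID." It is not the Memory Bank API: the `ToyMemoryBank` class, its methods, and the keyword rule that decides what is worth keeping are all assumptions made up for illustration. A real system would presumably use a model to decide what to retain.

```python
from collections import defaultdict


class ToyMemoryBank:
    """Hypothetical stand-in for a memory service; not Google's API."""

    def __init__(self, max_memories: int = 5):
        self.max_memories = max_memories
        self._memories: dict[str, list[str]] = defaultdict(list)

    def ingest(self, session_id: str, turn: str) -> None:
        # Toy distillation rule (an assumption): keep only turns that state
        # a preference or a personal fact, and ignore everything else.
        lowered = turn.lower()
        if not (lowered.startswith("i prefer") or lowered.startswith("my ")):
            return
        memories = self._memories[session_id]
        if turn not in memories:
            memories.append(turn)
        # Evict the oldest memory once the cap is exceeded.
        if len(memories) > self.max_memories:
            memories.pop(0)

    def profile(self, session_id: str) -> list[str]:
        # Return the compact set of memories instead of the full transcript.
        return list(self._memories.get(session_id, []))


bank = ToyMemoryBank()
for turn in ["Hello", "I prefer email receipts", "My cost center is 4410"]:
    bank.ingest("crm-123", turn)  # "crm-123" is a made-up custom session ID
print(bank.profile("crm-123"))
```

The point of the sketch is the shape of the interface: the agent asks for a short profile keyed by a session ID rather than replaying the whole conversation.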

Govern: Agent Identity and Agent Gateway

This is the part enterprise security teams have been waiting for.

Agent Identity assigns a unique cryptographic ID to every agent the platform creates. Each ID maps to a defined set of authorization policies, and every action the agent takes is logged against that identity. The result is an auditable trail per agent, not just a shared API key that a dozen agents might be using concurrently. This is foundational for any enterprise that needs to answer "which agent did that?" after something goes wrong.

Agent Gateway sits between agents and the tools they call - Google describes it as "air traffic control" for the agent ecosystem. Every tool call routes through Gateway, which applies Model Armor protections, checks for prompt injection attempts, and watches for data leakage patterns. It also handles authentication across the entire agent fleet from a single enforcement point.
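
A minimal sketch of the two ideas together, per-agent identity and a single enforcement point, might look like the following. Everything here is hypothetical: `AgentIdentity`, `new_identity`, and `ToyGateway` are illustrative names, not Google's API, and the "ID" is just a random hash rather than a verifiable credential.

```python
import hashlib
import secrets
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str
    allowed_tools: frozenset


def new_identity(name: str, allowed_tools: set) -> AgentIdentity:
    # Toy ID: hash of the name plus random bytes. A real platform would issue
    # a verifiable credential, which this sketch does not attempt.
    digest = hashlib.sha256(name.encode() + secrets.token_bytes(16)).hexdigest()
    return AgentIdentity(agent_id=digest[:16], allowed_tools=frozenset(allowed_tools))


class ToyGateway:
    """Single choke point: every tool call is checked and logged here."""

    def __init__(self, tools: dict):
        self._tools: dict[str, Callable[..., Any]] = tools
        self.audit_log: list[tuple[str, str, str]] = []

    def call(self, identity: AgentIdentity, tool_name: str, **kwargs: Any) -> Any:
        allowed = tool_name in identity.allowed_tools and tool_name in self._tools
        # Log the decision against the agent's own ID, allowed or not.
        self.audit_log.append(
            (identity.agent_id, tool_name, "allowed" if allowed else "denied")
        )
        if not allowed:
            raise PermissionError(f"{identity.agent_id} may not call {tool_name}")
        return self._tools[tool_name](**kwargs)
```

Because each log entry carries the agent's own ID rather than a shared key, "which agent did that?" becomes a lookup in `audit_log`. The content checks the article mentions (prompt injection, data leakage) would sit in the same `call` path, but are left out here.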

Agent Anomaly Detection runs alongside Gateway, using statistical models and LLM-as-a-judge evaluators to flag suspicious behavior in real time: tool misuse, unauthorized data access, reasoning drift. Agent Threat Detection goes a step further and looks for signs of active exploitation, such as reverse shells and connections to known bad IPs. The Agent Security Dashboard ties everything together through Security Command Center, mapping agent-model relationships and scanning for OS and language-package vulnerabilities.
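
Google hasn't published which statistical models it uses, so the following is only a generic illustration of the "statistical" half of that description: a z-score check on how many tool calls an agent makes per minute. The function name, the threshold, and the choice of metric are all assumptions.

```python
from statistics import mean, stdev


def is_anomalous(history: list, current: int, threshold: float = 3.0) -> bool:
    """Flag `current` if it sits more than `threshold` standard deviations
    above the mean of `history` (for example, tool calls per minute)."""
    if len(history) < 2:
        return False  # Not enough data to estimate a spread.
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # Perfectly flat history: any increase counts as unusual.
        return current > mu
    return (current - mu) / sigma > threshold


baseline = [10, 12, 11, 9, 10, 11]  # made-up per-minute call counts
print(is_anomalous(baseline, 12))   # within normal variation
print(is_anomalous(baseline, 40))   # sudden burst of tool calls
```

A check like this catches volume spikes only. Behaviors such as reasoning drift would need the evaluator-based approach the article describes.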

Google's Gemini Enterprise Agent Platform organizes agent infrastructure across Build, Scale, Govern, and Optimize. Every agent launched to the platform - regardless of how it was built - runs inside the same governance layer.

Source: cloud.google.com

Optimize: Simulation and Evaluation

Agent Simulation tests agents against synthetic multi-step workloads with virtualized tools before they hit production. This means you can stress-test a complex workflow without making live API calls or touching real data.
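
The general technique, swapping live tools for stand-ins that return canned data and record what was called, can be sketched in a few lines. This is not the Agent Simulation API; `VirtualTool` and the `refund_workflow` function are hypothetical and exist only to show the pattern.

```python
from typing import Any


class VirtualTool:
    """Returns canned responses and records calls instead of hitting a live API."""

    def __init__(self, name: str, canned: dict, default: Any = None):
        self.name = name
        self.canned = canned
        self.default = default
        self.calls: list[str] = []

    def __call__(self, key: str) -> Any:
        self.calls.append(key)
        return self.canned.get(key, self.default)


def refund_workflow(order_id: str, lookup_order, issue_refund) -> str:
    # A made-up multi-step workflow: look up the order, check eligibility,
    # and only then call the tool that has side effects.
    order = lookup_order(order_id)
    if order is None:
        return "order not found"
    if order["status"] != "delivered":
        return "not eligible"
    return issue_refund(order_id)


lookup = VirtualTool("lookup_order", {"A1": {"status": "delivered"},
                                      "B2": {"status": "shipped"}})
refund = VirtualTool("issue_refund", {"A1": "refunded"})
results = [refund_workflow(oid, lookup, refund) for oid in ["A1", "B2", "C3"]]
print(results, refund.calls)
```

The recorded `calls` list is what makes this useful as a test: it shows the refund tool was invoked only for the eligible order, without any real refund being issued.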

Agent Evaluation runs continuously against production traffic using multi-turn autoraters that score agents on task success and safety. Agent Optimizer takes those scores and automatically clusters failure patterns, then suggests revised system prompts. Agent Observability provides trace-level visibility into agent reasoning chains using OTel-compliant telemetry and standardized dashboards - if an agent makes a bad decision at step four of a twelve-step workflow, you can see exactly where it diverged.
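
How Agent Optimizer clusters failures hasn't been documented, so treat the following as a simplified stand-in: it groups failed runs by the step where they broke and a reason label, then ranks the groups by size. The `EvalResult` fields and the reason strings are assumptions for illustration.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class EvalResult:
    trace_id: str
    success: bool
    failed_step: Optional[int] = None  # step index where the run diverged
    reason: Optional[str] = None       # hypothetical autorater label


def cluster_failures(results: list) -> list:
    """Group failed runs by (failed_step, reason), largest group first."""
    counts = Counter(
        (r.failed_step, r.reason) for r in results if not r.success
    )
    return counts.most_common()


runs = [
    EvalResult("t1", True),
    EvalResult("t2", False, failed_step=4, reason="wrong_tool"),
    EvalResult("t3", False, failed_step=4, reason="wrong_tool"),
    EvalResult("t4", False, failed_step=9, reason="timeout"),
]
print(cluster_failures(runs))
```

Even this crude grouping shows why the ranking matters: if most failures share one step and one reason, that is the part of the system prompt worth revising first.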

Requirements and Compatibility

The platform runs on Google Cloud only. No self-hosted or on-premise deployment option was announced.

| Capability | Details |
|---|---|
| Languages | Python, Go, Java, Node.js, C# |
| Models | 200+ via Model Garden: Gemini 3.1 Pro, Gemini 3.1 Flash, Gemma 4, Anthropic Claude Opus, Sonnet, and Haiku |
| Protocols | MCP server integration supported via Agent Gateway |
| Existing Vertex AI | All services migrate to the Agent Platform; no new standalone Vertex AI features |
| Availability | General availability date not announced |
| Pricing | Not disclosed |

The platform's MCP support connects to any MCP server, which means the Google Workspace CLI MCP tools built earlier this year should work with agents deployed through Agent Gateway. Google Cloud services are also exposed as MCP endpoints.

Two Products, One Conference

One of the more deliberate choices Google made is splitting the announcement into two distinct products. The Gemini Enterprise Agent Platform targets technical teams - developers, platform engineers, IT architects. Business users get the Gemini Enterprise app, which has a no-code Agent Designer, an Inbox for monitoring agent activity, and simple trigger-based automation.

The split makes sense given where enterprise AI adoption actually is. Agents are furthest along for technical tasks. Security-conscious enterprises aren't ready to give business users a tool that can autonomously execute multi-day workflows with access to production systems. Google is acknowledging the real state of the market rather than promising it'll all just work.

Google Cloud Next 2026 in Las Vegas combined hardware announcements - including eighth-gen TPU chips - with this agent infrastructure platform and a $750M partner fund for agentic AI development.

Source: blog.google

Where It Falls Short

The governance story is compelling on paper. Cryptographic agent IDs and centralized policy enforcement are the right primitives for enterprise agent security. The limitation is scope: all of it applies only to agents launched through Google's platform.

Enterprises running mixed fleets - some agents on AWS Bedrock Agents, some on Anthropic's Managed Agents platform, some self-hosted - get no coverage from Agent Gateway for the non-Google workloads. The "air traffic control" analogy breaks down when planes are departing from three different airports.

Vendor lock-in is a real engineering consideration. The ADK is open-source and supports models beyond Gemini, including Anthropic's Claude, which is a genuine plus. But Agent Identity, Agent Gateway, Memory Bank, and Agent Runtime are all Google Cloud services. An organization that adopts this stack for governance is committing to Google Cloud as its agent infrastructure layer.

The Vertex AI migration timeline is still unclear. Google hasn't said when standalone Vertex AI access ends. Teams with significant Vertex AI investments need that information before they can commit to a migration schedule.

Pricing is missing completely. Agent Runtime, Memory Bank, and Agent Gateway have no announced costs. Without numbers, it's impossible to compare this against Azure AI Foundry or AWS Bedrock Agents on anything other than feature coverage.

Early production deployments include Payhawk, which cut expense submission time by 50% using Memory Bank, and PayPal, which built multi-agent payment workflows on ADK.

Source: cloud.google.com

Google's eighth-generation TPU infrastructure, announced alongside this platform, supplies the compute behind these Agent Runtime claims. Whether the governance model holds up at scale, under real adversarial conditions with thousands of concurrent agents firing tool calls through a shared Gateway, is a question that won't be answered at a keynote.

Sources:

- Introducing Gemini Enterprise Agent Platform - Google Cloud Blog

- Google brings agentic development and control under one roof - SiliconAngle

- Google makes an interesting choice with its new agent-building tool - TechCrunch

- Gemini Enterprise Agent Platform overview - Google Cloud Docs

- ADK Quickstart (Python) - adk.dev

- Sundar Pichai shares news from Google Cloud Next 2026 - blog.google