Founder Loses $2,500 After AI-Coded App Leaks Stripe Keys

A startup founder's vibe-coded app exposed Stripe secret keys in frontend code, letting attackers charge 175 customers $500 each before he could rotate the credentials.

Anton Karbanovich, a Cyprus-based founder behind apps like Glossa.live (an AI-powered church translation service), built a paid web app using Anthropic's Claude Code without reviewing the generated code for security basics. Attackers found his Stripe secret API key exposed in the frontend JavaScript, charged 175 customers $500 each, and left him with $2,500 in non-refundable Stripe processing fees - even after he reversed the fraudulent charges.

| Item | Details |
|---|---|
| Who | Anton Karbanovich, serial entrepreneur based in Limassol, Cyprus, behind Glossa.live and other AI ventures |
| What happened | Stripe secret key (sk_live_...) was exposed in client-side code of a vibe-coded app |
| Damage | 175 customers charged $500 each ($87,500 total); $2,500 in non-refundable Stripe fees after reversals |
| Root cause | AI-generated code placed the Stripe secret key in frontend instead of a backend environment variable |
| His take | "I still don't blame Claude Code. I trusted it too much." |

What Happened

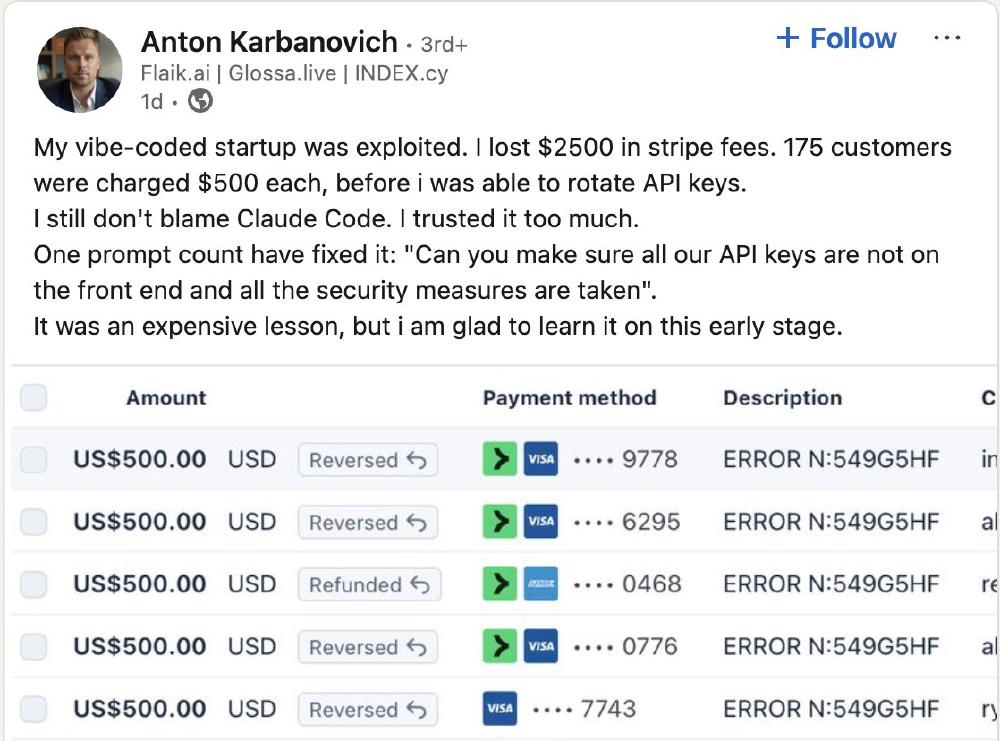

Karbanovich shared the incident on LinkedIn on February 28, where it quickly went viral with hundreds of comments:

My vibe-coded startup was exploited. I lost $2500 in stripe fees. 175 customers were charged $500 each, before I was able to rotate API keys.

I still don't blame Claude Code. I trusted it too much.

One prompt could have fixed it: "Can you make sure all our API keys are not on the front end and all the security measures are taken."

It was an expensive lesson, but I am glad to learn it on this early stage.

The attack is straightforward: when a Stripe secret key is embedded in client-side JavaScript, anyone can view it by opening browser dev tools. With a live secret key, an attacker can make charges against stored payment methods, access customer PII, issue refunds, and even wire funds to external accounts - Stripe's own docs are explicit that "anyone can use your live mode secret key to make an API call on behalf of your account."

In this case, the attackers used the key to charge every stored customer $500 before Karbanovich noticed and rotated his credentials. The fraudulent charges were reversed, but Stripe kept its processing fees: $2,500 that Karbanovich will not get back.
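The reported figures can be checked with a few lines of arithmetic. The sketch below uses only the numbers quoted in this article (175 customers, $500 each, $2,500 in fees); the variable names are illustrative, and the per-charge fee it derives is an implied average, not a published Stripe rate. The $2,500 figure is likely rounded.

```python
# Figures as reported in the article.
customers_charged = 175
charge_usd = 500
fees_lost_usd = 2_500

total_charged_usd = customers_charged * charge_usd
fee_per_charge_usd = fees_lost_usd / customers_charged
effective_fee_rate = fees_lost_usd / total_charged_usd

print(f"Total fraudulent charges: ${total_charged_usd:,}")      # $87,500
print(f"Implied fee per charge:   ${fee_per_charge_usd:.2f}")   # $14.29
print(f"Implied effective rate:   {effective_fee_rate:.2%}")    # 2.86%
```

In other words, the unrecoverable loss works out to roughly 3% of the total amount charged.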

The Reaction

The LinkedIn comments - over 260 of them - split into predictable camps.

One side pointed out that this is a beginner-level mistake that predates AI coding tools entirely. Keeping secret keys out of frontend code is day-one material in any web development course. Several commenters were blunt: if you are charging customers $500 each, you need to review the code handling their payments, regardless of who or what wrote it.

The other side gave Karbanovich credit for transparency. He didn't blame the tool, took ownership of the mistake, and shared it publicly so others could learn. Some noted that the fact he had 175 paying customers at all was an achievement for a solo founder.

Security professionals in the thread offered practical advice: use tools like TruffleHog to scan for exposed secrets in commits, add pre-commit hooks that catch API keys before they ship, and treat AI-generated code with the same scrutiny you'd give a junior developer's pull request.
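To illustrate the idea behind a secret scan, here is a minimal Python sketch that searches text (for example, a built JavaScript bundle or a staged diff) for strings that look like live Stripe keys. The regular expression is an assumption that approximates the `sk_live_` / `rk_live_` key shape rather than an official format, and the function name `find_live_keys` is hypothetical. It is not a substitute for a dedicated scanner such as TruffleHog.

```python
import re

# Assumed pattern: "sk_live_" (secret) or "rk_live_" (restricted) followed by
# a long alphanumeric tail. This approximates the key shape; it is not an
# official specification.
LIVE_KEY_PATTERN = re.compile(r"\b[sr]k_live_[0-9A-Za-z]{16,}")


def find_live_keys(text: str) -> list[tuple[int, str]]:
    """Return (line_number, redacted_match) for each suspected live key."""
    findings = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for match in LIVE_KEY_PATTERN.finditer(line):
            # Redact the match so the report itself does not leak the key.
            findings.append((line_number, match.group(0)[:12] + "..."))
    return findings


# Synthetic values built by concatenation; these are not real keys.
fake_secret = "sk_live_" + "A1b2" * 6
fake_publishable = "pk_live_" + "C3d4" * 6
bundle = (
    f'const pk = "{fake_publishable}";\n'
    f'const stripe = Stripe("{fake_secret}");\n'
)

print(find_live_keys(bundle))  # [(2, 'sk_live_A1b2...')]
```

A pre-commit hook could run a check like this over staged files and abort the commit when the list is non-empty. The publishable key on line 1 is intentionally not flagged, since publishable keys are designed to appear in client code.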

A Pattern, Not an Outlier

Karbanovich's story is far from unique. As vibe coding accelerates, exposed secrets and missing authentication have become the most common failure modes:

- Moonwell DeFi lost $1.78 million in February 2026 after a price oracle formula written by Claude Opus mispriced a token, letting liquidators drain collateral

- Enrichlead, a SaaS platform whose founder boasted "100% of the code was written by Cursor AI," shut down within 72 hours of launch after users discovered they could unlock all paid features from the browser console

- A Carnegie Mellon study found that while 61% of AI-generated code is functionally correct, only 10.5% is secure - and neither security-focused prompts nor explicit vulnerability hints reliably closed that gap

Evil Martians published a breakdown of the four most common security risks in vibe-coded applications: exposed API keys in frontend code, weak client-side authentication, insecure dependencies, and missing server-side input validation. Karbanovich hit the first one on the list.
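The client-side authentication risk can be made concrete with a small sketch. The Python below assumes a hypothetical server with an in-memory `ACCOUNTS` table standing in for a database; all names (`Account`, `PAID_FEATURES`, `can_use_feature`) are illustrative and do not describe how any of the apps mentioned above were built. The point is only that the access decision uses server-side records and ignores anything the browser claims about itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Account:
    user_id: str
    plan: str  # "free" or "pro", as recorded by the server


# Hypothetical in-memory stand-in for a server-side database.
ACCOUNTS = {
    "u1": Account("u1", "free"),
    "u2": Account("u2", "pro"),
}
PAID_FEATURES = {"export", "bulk_search"}


def can_use_feature(user_id: str, feature: str, client_claims: dict) -> bool:
    """Decide access from server-side records only.

    client_claims is whatever the browser sent (for example {"plan": "pro"}).
    It is deliberately ignored, because a user can edit it from the console.
    """
    account = ACCOUNTS.get(user_id)
    if account is None:
        return False
    if feature in PAID_FEATURES:
        return account.plan == "pro"
    return True


# A free user claiming to be "pro" is still refused.
print(can_use_feature("u1", "export", {"plan": "pro"}))  # False
print(can_use_feature("u2", "export", {}))               # True
```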

The Uncomfortable Takeaway

The core problem isn't that Claude Code wrote insecure code - it's that the developer-in-the-loop pattern that makes AI coding tools safe was skipped entirely. AI code generators optimize for "code that works," not "code that is secure." They will happily place a Stripe secret key in a React component if that is the fastest path to a working payment flow.

Karbanovich's own suggested fix - a single prompt asking Claude to audit for frontend-exposed keys - would likely have caught the issue. But that is exactly the kind of review step that the speed of vibe coding encourages developers to skip.

For anyone building with AI coding tools and handling real money: treat every generated file as untrusted code. Run secret scanners. Put API keys in environment variables on the server. And if you are processing payments, read the Stripe integration code line by line before going live - no matter how tedious that sounds.
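As a rough sketch of the environment-variable advice, the Python below separates the two kinds of Stripe keys on the server. The variable names `STRIPE_SECRET_KEY` and `STRIPE_PUBLISHABLE_KEY` and both function names are assumptions chosen for illustration; the only Stripe-specific facts relied on are the key prefixes (`sk_` for secret, `rk_` for restricted, `pk_` for publishable) and that publishable keys are meant to be used in client code.

```python
import os
from typing import Mapping


def load_secret_key(env: Mapping[str, str] = os.environ) -> str:
    """Read the Stripe secret key from the server's environment.

    The returned value should be used only in server-side API calls and
    never serialized into a response or a frontend bundle.
    """
    key = env.get("STRIPE_SECRET_KEY", "")
    if not key.startswith(("sk_", "rk_")):
        raise RuntimeError("STRIPE_SECRET_KEY is missing or not a secret key")
    return key


def client_config(env: Mapping[str, str] = os.environ) -> dict[str, str]:
    """Build the only Stripe-related config that may be sent to the browser."""
    publishable = env.get("STRIPE_PUBLISHABLE_KEY", "")
    if not publishable.startswith("pk_"):
        raise RuntimeError(
            "STRIPE_PUBLISHABLE_KEY is missing or not a publishable key"
        )
    return {"stripePublishableKey": publishable}


# Example with a synthetic environment; these values are not real keys.
example_env = {
    "STRIPE_SECRET_KEY": "sk_" + "test_" + "x" * 24,
    "STRIPE_PUBLISHABLE_KEY": "pk_" + "test_" + "y" * 24,
}
print(sorted(client_config(example_env)))  # ['stripePublishableKey']
```

The prefix check in `client_config` is a cheap guard against the exact mistake in this story: if a secret key is ever pasted into the publishable slot, the server refuses to start serving it instead of shipping it to every visitor.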
