Inside DeepSeek V4's CANN Stack - Three Delays Explained

DeepSeek V4 has slipped three times since February. Jensen Huang called it a horrible outcome for America. Here is what is actually hard about running a trillion-parameter model on Huawei's CANN framework.

Running a trillion-parameter model is already an infrastructure problem. Running one without touching a single Nvidia GPU is a different kind of engineering problem - one that has delayed DeepSeek V4 three times since February. The model's pre-training completed weeks ago. A smaller variant called V4-Lite leaked through inference providers in March. The benchmarks look frontier-competitive. But the model hasn't shipped, and the core reason is software: DeepSeek is building V4 to run on Huawei's Ascend 950PR chips, which means migrating its entire inference stack from Nvidia's CUDA ecosystem to Huawei's CANN framework. That turns out to be genuinely hard.

On April 15, Nvidia CEO Jensen Huang put the stakes bluntly on the Dwarkesh Podcast: "The day that DeepSeek comes out on Huawei first, that is a horrible outcome for our nation."

CANN Migration Stack - Layer by Layer

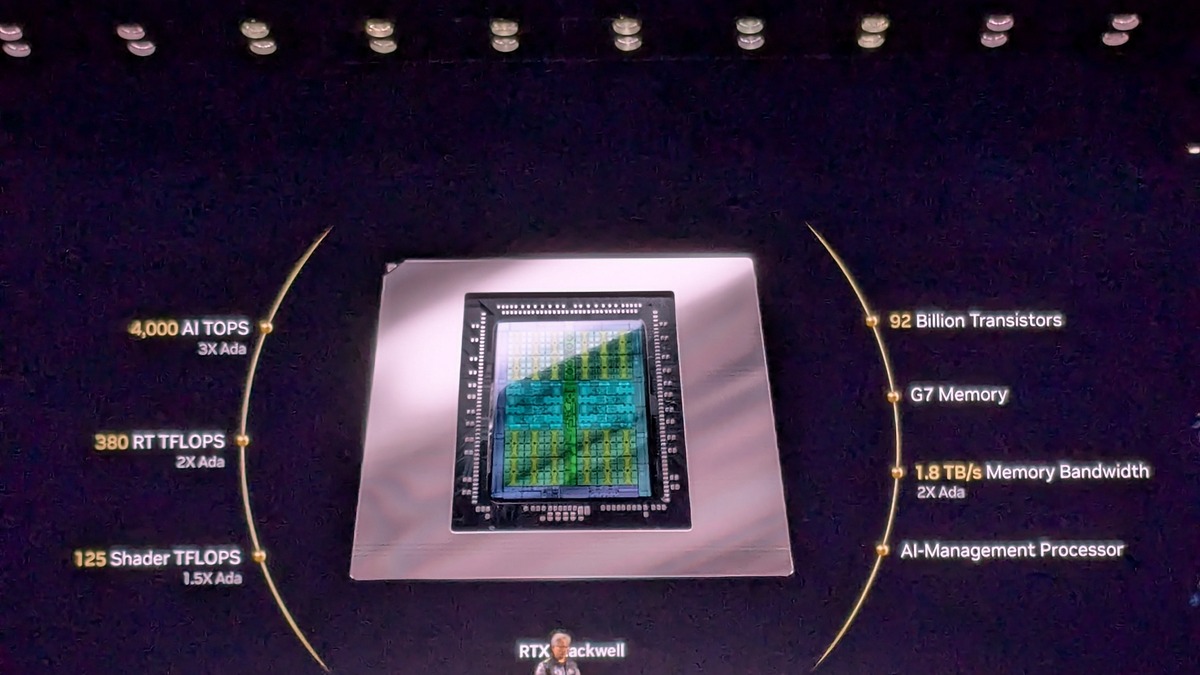

- Hardware: Huawei Ascend 950PR - 112 GB HiBL memory, 1.4 TB/s bandwidth, 2.8x H20 performance per card

- Communication: HCCL (Huawei Collective Communications Library) replaces Nvidia's NCCL

- Compiler: CANN ATC - maps tensor ops to Ascend hardware; kernel fusion completeness is the bottleneck

- Operator library: AscendCL - CANN's equivalent of cuDNN/cuBLAS; still expanding

- Runtime: CANN Next (2026) - adds SIMT programming model, compatible with CUDA-style code

- Framework:

torch_npu(PyTorch) or MindSpore - the interface V4's Python code calls into

How It Works Under the Hood

What CUDA Gives You - and Why It's Hard to Replace

CUDA is 20 years of software infrastructure. The visible part is the programming model: C++ extensions that let developers write kernel code targeting Nvidia GPUs. Underneath sits a compiler that maps tensor operations to GPU threads, a library of pre-tuned kernels (cuDNN, cuBLAS) handling matrix multiply and attention, and NCCL - the collective communication library that synchronizes gradients and activations across thousands of GPUs during training and distributed inference.

The ecosystem depth is the real moat, not the hardware. When something in PyTorch's attention implementation runs slow on an H100, there are profiling tools, community-written optimizations, and a decade of debugging history to draw on. Moving to a new hardware platform means leaving most of that behind.

Huawei's CANN Framework

CANN - Compute Architecture for Neural Networks - plays the same role as CUDA for Huawei's Ascend chips. It includes its own operator library (AscendCL), compiler (ATC), and HCCL for multi-chip communication. CANN has been in development since around 2019 but was never widely used for frontier model training at this scale. Most Chinese labs ran workloads on Nvidia hardware until US export restrictions made that increasingly untenable.

The critical 2026 addition is CANN Next. It introduces a SIMT (Single Instruction, Multiple Thread) programming model - the same execution model CUDA uses. According to analysis from Weijin Research, CANN Next "effectively treats CUDA as a programming standard while adding optimizations specific to Ascend chips." New code can now target Ascend without a full kernel-level rewrite. The problem is that DeepSeek V4 isn't new code. It's a production inference stack built and tuned for Nvidia hardware that now needs to run efficiently on Ascend.

What DeepSeek Actually Had to Rewrite

Reuters confirmed on April 4 that DeepSeek worked directly with Huawei and Cambricon "to make extensive adjustments and rewrites to the model's underlying architecture." Three layers absorbed most of that work.

Expert routing. V4 uses a Mixture-of-Experts design activating roughly 32 billion parameters per token out of around 1 trillion total. Dispatching tokens across expert groups on Ascend required reworking MoE communication patterns to use HCCL instead of NCCL, with different collective operation semantics and different assumptions about chip topology.

Attention kernel fusion. V4's 1-million-token context window requires highly optimized attention kernels. On CUDA, libraries like FlashAttention have years of Nvidia-specific optimization behind them. Porting equivalent attention to CANN means adding custom kernel fusion from scratch and validating it at scale - each kernel has to be correct, fused correctly, and profiled against the compiler's output.

Distributed communication throughput. Ascend's chip interconnect provides 2 TB/s bandwidth, competitive with Nvidia's NVLink on paper. But the software primitives for all-reduce and all-to-all operations in HCCL carry less operational history than NCCL. Any inefficiency in collective communication gets amplified when you're coordinating tokens across the hundreds of chips required to serve a trillion-parameter model in production.

# Standard CUDA inference setup

model.to("cuda:0")

# CANN equivalent via torch_npu (Huawei's PyTorch backend)

import torch_npu

torch.npu.set_device(0)

model.to("npu:0")

The API surface looks nearly identical. The work is in the kernels underneath.

Huawei's Atlas 350 accelerator card, powered by the Ascend 950PR chip, delivers 1.56 petaflops FP4 and 2.8x the H20's performance per card.

Source: trendforce.com

Huawei's Atlas 350 accelerator card, powered by the Ascend 950PR chip, delivers 1.56 petaflops FP4 and 2.8x the H20's performance per card.

Source: trendforce.com

CUDA vs CANN: A Direct Comparison

| Component | Nvidia CUDA | Huawei CANN |

|---|---|---|

| Framework age | 20+ years | ~7 years |

| Primary inference chip | H100/H200 | Ascend 950PR |

| PyTorch integration | torch.cuda (native) | torch_npu (separate package) |

| Communication library | NCCL (mature) | HCCL (growing) |

| Programming model | SIMT | SIMT via CANN Next (2026) |

| Kernel library coverage | Comprehensive | Expanding |

| US export restrictions | Yes, for China | None |

| Training chip (current gen) | H200/Blackwell now | Ascend 950 DT - late 2026 |

When CANN Makes Sense

If you're a Chinese AI lab without legal access to H100s, CANN isn't optional - it's the only path to frontier-class hardware. The Ascend 950PR delivers 2.8x the per-card performance of the H20, which is the highest-end Nvidia chip China can legally buy after US export controls tightened through 2025. That performance gap makes the migration cost worthwhile even when the software ecosystem is younger.

The supply chain commitment from Chinese tech companies reinforces this. Alibaba, ByteDance, and Tencent have placed bulk orders for hundreds of thousands of Ascend units, with prices running roughly 20% higher than earlier Ascend generations due to demand. DeepSeek added "Fast Mode" and "Expert Mode" toggles to its interface on April 8 - a small signal but the kind of infrastructure preparation that precedes a large model launch.

What doesn't make sense yet is building a training-heavy pipeline on CANN. The Ascend 950PR is an inference chip. The 950 DT - the training variant - isn't expected until late 2026. V4's training reportedly ran on older Ascend 910B/910C hardware, which trails Nvidia's current training silicon by several generations. The full Chinese compute independence story is still being assembled.

Nvidia CEO Jensen Huang at the CES 2025 keynote. In April 2026, he called a DeepSeek launch on Huawei hardware "a horrible outcome" for the United States.

Source: commons.wikimedia.org

Nvidia CEO Jensen Huang at the CES 2025 keynote. In April 2026, he called a DeepSeek launch on Huawei hardware "a horrible outcome" for the United States.

Source: commons.wikimedia.org

Known Gotchas

Operator gaps. CANN's operator library has grown but doesn't yet cover every kernel that CUDA developers take for granted. Missing operators surface as unsupported-operation errors or slow fallback implementations. Each gap needs either a custom operator or an architectural workaround, both of which require time and testing.

Kernel fusion decisions. The CANN compiler's fusion choices matter more than they'd on a well-studied CUDA workload. Incorrectly fused kernels produce correct outputs but noticeably worse latency. Diagnosing fusion problems requires profiling tools that are less mature on Ascend than on Nvidia hardware, so iteration cycles take longer.

CANN Next is new. The SIMT compatibility layer shipped this year and has no production track record at trillion-parameter scale. V4 will be its first large-scale test.

HCCL at extreme scale. Collective communication bugs are hard to reproduce and hard to diagnose. A subtle all-reduce inefficiency that costs 1% latency per chip becomes a serious problem when you're running a cluster large enough for a trillion-parameter model. NCCL has years of fixes in this area. HCCL is catching up.

GLM-5 has run on Huawei hardware at smaller scale - and it demonstrated that CANN-based inference is production-viable, not just theoretical. But a trillion-parameter MoE with a 1-million-token context window serving public traffic is a different load than anything the CANN stack has handled. If DeepSeek ships V4 in the coming weeks and inference holds up, that isn't just a product launch. It's proof that China's push for AI chip self-sufficiency has produced something that can run the world's most capable open-weight model without American silicon.

Sources:

- Reuters: DeepSeek V4 to run on Huawei Ascend 950PR

- TrendForce: Decoding DeepSeek V4 and the Huawei Ascend 950PR

- TrendForce: Huawei debuts Atlas 350 on Ascend 950PR

- The Next Web: Jensen Huang on DeepSeek and Huawei chips

- Dwarkesh Podcast: Jensen Huang - TPU competition, why we should sell chips to China

- FindSkill.ai: DeepSeek V4 release date, delays, and CANN migration

- Weijin Research: DeepSeek V4 on Huawei Ascend - CUDA exit implications

- Intelligent Living: Ascend 950PR and the CUDA exit

- aihola.com: DeepSeek V4 drops Nvidia, runs on Huawei