DeepMind's AlphaEvolve Recovered 0.7% of Google's Compute

Google DeepMind's May 2026 AlphaEvolve impact report shows the system running in production across infrastructure, quantum computing, genomics, and commercial partnerships spanning logistics to fintech.

When Google DeepMind released AlphaEvolve earlier this year, the public-facing results were impressive in a controlled-experiment way: novel game theory algorithms, better matrix multiplication, new mathematical lower bounds. Yesterday's follow-up report is a different kind of document. AlphaEvolve isn't just producing paper results. It's running in production across Google's data centers, TPU design pipeline, and a growing list of commercial partnerships - and the numbers it's accumulating are hard to ignore.

TL;DR

- AlphaEvolve recovered 0.7% of Google's worldwide compute through improved data center task scheduling

- A circuit design AlphaEvolve proposed was deemed so efficient it went directly into next-gen TPU silicon

- Commercial partners include Klarna (2x training speed), FM Logistic (15,000+ km saved/year), and Schrödinger (4x MLFF speedup)

- Grid optimization for AC Optimal Power Flow problems moved from 14% to 88% feasibility

- Access is still through Google Cloud's partner program - there's no public API

How AlphaEvolve Works

AlphaEvolve isn't a language model you call through an API. It's a system built on top of Gemini 3.1 Pro and Gemini Flash that runs an evolutionary loop over code. The architecture is straightforward in concept but demanding to deploy:

1. Prompt sampler: assembles task description + program history for the LLM ensemble

2. LLM ensemble:
   - Gemini Flash → high-throughput candidate generation (breadth)
   - Gemini Pro → higher-quality suggestions (depth, novel directions)

3. Evaluator: automated metric scores each candidate (throughput, error rate, etc.)

4. Program database: evolutionary selection - high-scoring variants seed the next round

5. Loop: repeat until convergence or compute budget exhausted

The key constraint is step 3. Someone has to write the scoring function before AlphaEvolve can run. Without a programmatic way to assess candidates, the evolutionary loop has nothing to optimize against.
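The five steps can be sketched as a toy loop. This is a minimal illustration under stated assumptions, not AlphaEvolve's implementation: the "program" is just a list of numbers, `mutate` is a hypothetical random stand-in for the LLM ensemble, and `evaluate` is a made-up scoring function that peaks when every value equals 1.0.

```python
import random

def evaluate(candidate: list[float]) -> float:
    """Hypothetical scorer (step 3): higher is better, best at all ones."""
    return -sum((x - 1.0) ** 2 for x in candidate)

def mutate(parent: list[float], rng: random.Random) -> list[float]:
    """Stand-in for the LLM ensemble (step 2): perturb one value at random."""
    child = list(parent)
    i = rng.randrange(len(child))
    child[i] += rng.gauss(0.0, 0.3)
    return child

def evolve(generations: int = 200, population_size: int = 8,
           children_per_gen: int = 16, seed: int = 0) -> list[float]:
    rng = random.Random(seed)
    population = [[0.0, 0.0, 0.0]]  # the initial "program"
    for _ in range(generations):  # step 5: loop until the budget runs out
        # Steps 1-2: pick parents from the population and propose variants.
        children = [mutate(rng.choice(population), rng)
                    for _ in range(children_per_gen)]
        # Steps 3-4: score everything and keep only the top performers.
        ranked = sorted(population + children, key=evaluate, reverse=True)
        population = ranked[:population_size]
    return population[0]

best = evolve()
print(best, evaluate(best))
```

Nothing in the loop knows what a good candidate looks like except `evaluate`. Replace it with a function that measures the wrong thing and the loop will climb toward the wrong thing just as steadily.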

The Dual-Model Setup

The Flash-Pro split is practical engineering. Flash produces a high volume of candidate mutations at lower latency; Pro produces higher-quality suggestions that move the search in new directions. Most gains come from a small fraction of Pro's candidates. Flash provides coverage; Pro provides breakthroughs.
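One way to picture the split is as a weighted choice between two generators. The sketch below is only an illustration: the 90/10 ratio and the names `MODEL_WEIGHTS` and `pick_model` are assumptions made for this example, and the report does not state how requests are actually divided between the models.

```python
import random
from collections import Counter

# Hypothetical mix: mostly cheap "flash" proposals, occasionally a "pro" one.
MODEL_WEIGHTS = {"flash": 0.9, "pro": 0.1}

def pick_model(rng: random.Random) -> str:
    """Choose which generator handles the next mutation request."""
    names = list(MODEL_WEIGHTS)
    weights = list(MODEL_WEIGHTS.values())
    return rng.choices(names, weights=weights, k=1)[0]

rng = random.Random(0)
counts = Counter(pick_model(rng) for _ in range(10_000))
print(counts)
```

Tuning the weights trades coverage against cost: a higher share for the stronger model buys more expensive but potentially more novel candidates per round.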

The Evolutionary Loop

The loop extends FunSearch (an earlier DeepMind system) to handle longer programs and a broader class of problems. Instead of evolving single functions, AlphaEvolve can evolve entire algorithm implementations - scheduling policies, kernel code, circuit designs in Verilog. The program database acts as the population: survivors of each generation seed the next.
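A program database that acts as a population can be sketched as a bounded collection that keeps only the best-scoring programs and feeds them back into the next prompt. The class and function names here (`Program`, `ProgramDatabase`, `build_prompt`) and the prompt wording are hypothetical. AlphaEvolve's real database and prompt format are not described in the report.

```python
import heapq
from dataclasses import dataclass, field

@dataclass(order=True)
class Program:
    score: float
    source: str = field(compare=False)  # ordering uses the score only

class ProgramDatabase:
    """Keeps at most `capacity` programs, evicting the weakest first."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self._heap: list[Program] = []  # min-heap: weakest survivor at index 0

    def add(self, program: Program) -> None:
        if len(self._heap) < self.capacity:
            heapq.heappush(self._heap, program)
        elif program.score > self._heap[0].score:
            heapq.heapreplace(self._heap, program)  # replace the weakest

    def top(self, k: int) -> list[Program]:
        return heapq.nlargest(k, self._heap)

def build_prompt(task: str, db: ProgramDatabase, k: int = 2) -> str:
    """Assemble the task description plus the best prior programs."""
    parts = [f"Task: {task}", "Previous attempts (best first):"]
    for p in db.top(k):
        parts.append(f"# score={p.score:.2f}\n{p.source}")
    parts.append("Propose an improved version.")
    return "\n\n".join(parts)

db = ProgramDatabase(capacity=2)
for score, src in [(1.0, "def f(): return 1"),
                   (3.0, "def f(): return 3"),
                   (2.0, "def f(): return 2")]:
    db.add(Program(score, src))
print(build_prompt("maximize f()", db))
```

A pure top-k policy like this one is the simplest choice. It can lose diversity quickly, which is one reason evolutionary systems often keep some weaker candidates around as well.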

AlphaEvolve's data center scheduling optimization is running in production, recovering 0.7% of Google's global compute - a significant number at Google's scale.


Infrastructure Impact

The clearest signal that AlphaEvolve works is that Google rolled out its results. That's a different bar from a benchmark result on a test set.

Data Center Scheduling

AlphaEvolve found a better policy for scheduling tasks across Google's data centers. The report describes it as "recovering on average 0.7% of global compute resources." The policy runs continuously, not as a one-time optimization pass.

TPU Silicon Design

Jeff Dean described the chip design result directly: "AlphaEvolve proposed a circuit design so counterintuitive yet efficient it was integrated into next-generation TPU silicon." This matters because chip design has long verification cycles. A result from an LLM-based system getting into actual silicon means it survived not just automated verification but expert human review.

The system also improved existing GPU kernel implementations. FlashAttention - a key component in transformer inference and training - saw up to 32.5% speedup through AlphaEvolve-discovered implementations. The matrix multiplication kernel used in Gemini's own training improved by 23%, cutting training time by 1%.
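The 23% and 1% figures can be related with a back-of-the-envelope Amdahl's law calculation. The sketch below assumes "improved by 23%" means the kernel runs 1.23x faster. That reading is an assumption, and the resulting share is an inference rather than a number from the report.

```python
def implied_time_share(overall_saving: float, kernel_speedup: float) -> float:
    """Fraction of total runtime the kernel must occupy for a given
    kernel speedup to produce the given overall time saving."""
    kernel_saving = 1.0 - 1.0 / kernel_speedup  # time saved inside the kernel
    return overall_saving / kernel_saving

share = implied_time_share(overall_saving=0.01, kernel_speedup=1.23)
print(f"{share:.1%}")
```

Under that assumption the kernel accounts for roughly 5% of training time, which is why a large kernel-level gain translates into a small end-to-end one.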

Google Spanner and Compilers

Less flashy but concrete: a 20% reduction in Google Spanner write amplification and a 9% reduction in software storage footprint through compiler optimizations. These aren't results tuned for a benchmark. They're deployed in Spanner, Google's globally distributed relational database.

Scientific Breakthroughs

Across 50 open mathematical problems, AlphaEvolve rediscovered state-of-the-art solutions in 75% of cases and found improved solutions in 20%.


Mathematics with Terence Tao

Mathematician Terence Tao worked with AlphaEvolve on Erdős problems - open combinatorics questions that have resisted human-designed solutions for decades. Tao said in the report: "Tools such as AlphaEvolve give mathematicians useful new capabilities...greatly improves our intuition." The system also improved lower bounds for the Traveling Salesman Problem and Ramsey numbers.

On the mathematical benchmarks, the baseline is this: across 50 open problems tested, AlphaEvolve rediscovered state-of-the-art solutions in 75% of cases and found improved solutions in 20%.

Quantum Computing

AlphaEvolve optimized quantum circuits for Google's Willow processor, achieving 10x lower error rates for molecular simulations compared to conventionally optimized baselines. Quantum circuit optimization is a good match for evolutionary search because small improvements in gate sequences compound significantly as circuit depth increases.

Genomics and Grid Optimization

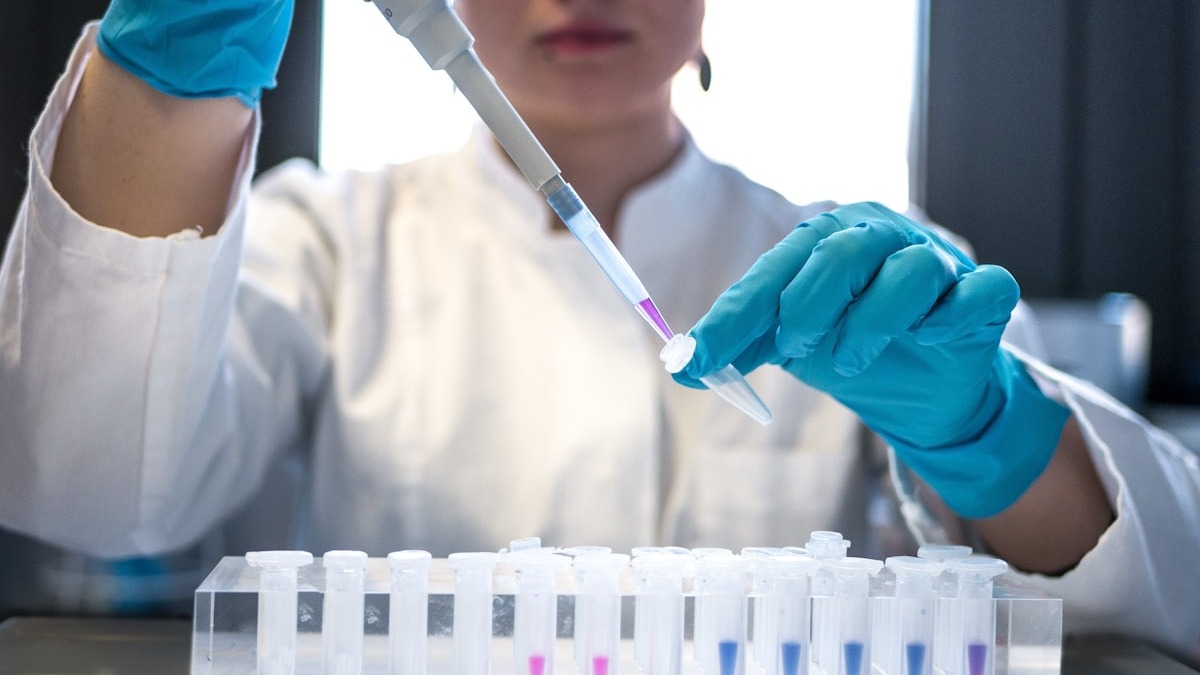

AlphaEvolve improved Google's DeepConsensus DNA sequencing model, reducing variant detection errors by 30%, in a partnership with PacBio.

AlphaEvolve cut DNA variant detection errors by 30% in partnership with PacBio, improving the DeepConsensus sequencing model.


On electrical grid planning, AlphaEvolve raised the feasibility rate for AC Optimal Power Flow problems from 14% to 88%. AC OPF is a hard nonconvex optimization problem with direct implications for how renewable energy sources can be integrated into grid infrastructure. AlphaEvolve also improved natural disaster prediction accuracy by 5% across 20 event categories.

Commercial Partners

| Company | Domain | Result |
|---|---|---|
| Klarna | Fintech/ML | 2x transformer model training speed |
| Substrate | Semiconductors | Multi-fold speedup in lithography simulations |
| FM Logistic | Logistics | 10.4% routing efficiency gain, 15,000+ km saved annually |
| WPP | Marketing | 10% improvement in campaign optimization accuracy |
| Schrödinger | Materials/Biotech | 4x speedup in Machine Learned Force Fields |

The range of domains is worth noting. FM Logistic is a large European logistics operator - the 15,000+ km saved per year is a real operational number, not a benchmark projection. Schrödinger's MLFF speedup is directly relevant to drug discovery pipelines where simulation time is a bottleneck. Klarna doubling transformer training speed at a fintech company is a long way from mathematical research.

Problem Compatibility

AlphaEvolve isn't a general-purpose optimizer. It works on a specific class of problems.

| Requirement | Status | Notes |
|---|---|---|
| Automated evaluation metric | Required | Without a programmatic scoring function, the loop can't run |
| Deterministic or near-deterministic evaluation | Strongly preferred | Noisy metrics slow convergence and mislead selection |
| Problem expressible as code | Required | The algorithm must be representable as executable logic |
| Physical lab experiments | Not compatible | AlphaEvolve can't wait for wet-lab results to score candidates |
| Human judgment as primary quality signal | Not compatible | Manual review as the scoring loop is too slow for the evolutionary cycle |
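The preference for near-deterministic evaluation can be illustrated with a small simulation. It compares how often a worse candidate outscores a better one when each is scored once versus averaged over repeated runs. The quality values, noise level, and repeat counts are all made up for the example, and averaging is just one common mitigation, not something the report attributes to AlphaEvolve.

```python
import random
import statistics

def noisy_score(true_quality: float, rng: random.Random,
                noise: float = 1.0) -> float:
    """One evaluation run: the true quality plus Gaussian measurement noise."""
    return true_quality + rng.gauss(0.0, noise)

def averaged_score(true_quality: float, rng: random.Random,
                   repeats: int) -> float:
    """Mean of several independent evaluation runs."""
    return statistics.fmean(noisy_score(true_quality, rng)
                            for _ in range(repeats))

def misranking_rate(repeats: int, trials: int = 2000, seed: int = 0) -> float:
    """How often a worse candidate (0.0) outscores a better one (0.5)."""
    rng = random.Random(seed)
    wrong = 0
    for _ in range(trials):
        worse = averaged_score(0.0, rng, repeats)
        better = averaged_score(0.5, rng, repeats)
        wrong += worse > better
    return wrong / trials

single = misranking_rate(repeats=1)
averaged = misranking_rate(repeats=25)
print(f"single run: {single:.1%} misranked, 25-run mean: {averaged:.1%}")
```

Averaging reduces misranking, but it multiplies evaluation cost by the number of repeats, which feeds directly into the compute budget.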

Where It Falls Short

The report is a curated collection of successes. The selection bias is obvious but worth naming.

AlphaEvolve's results depend completely on the quality of the evaluation function. Writing a good automated scorer for a novel problem is itself a research task requiring domain expertise and iteration. The system can't tell you whether your metric is measuring the right thing - it'll optimize whatever you give it.

The compute budget is also non-trivial. Running AlphaEvolve on a meaningful problem requires substantial GPU time for the evolutionary loop plus Gemini API costs for the LLM ensemble. The infrastructure optimizations at Google are worth their compute cost because 0.7% of Google-scale infrastructure is enormous. For smaller organizations, the math won't work out for every problem class.

Access is still internal or through Google Cloud's early access program. There's no public API. The commercial partners listed in the report worked directly with the DeepMind team. The github.com/google-deepmind/alphaevolve_results repository contains algorithm outputs but not the system itself.

The open-source community built OpenEvolve as an accessible implementation. It runs smaller models and lacks the compute infrastructure behind the Google version - and that gap is probably as much about GPUs as it is about model capability.

The May 7 report is the first time Google has collected AlphaEvolve's production results in one place. The picture it shows is of a system running quietly across Google for months, producing improvements that are small in percentage terms but significant in absolute scale. Getting access to the system itself remains the bottleneck for anyone outside Google Cloud's partner program.
