Anthropic's Claude Found 22 Firefox CVEs in 14 Days

Claude Opus 4.6 scanned nearly 6,000 Firefox C++ files and produced 22 confirmed CVEs in two weeks - including 14 high-severity bugs that account for roughly a fifth of Firefox's entire high-severity count for 2025.

A two-week automated audit of Firefox's C++ codebase by Anthropic's Frontier Red Team has produced one of the most concrete real-world demonstrations of AI-assisted vulnerability research to date: 22 confirmed CVEs, 14 of them high-severity, found by Claude Opus 4.6 across nearly 6,000 source files.

Mozilla confirmed those 14 high-severity bugs represent almost a fifth of all high-severity Firefox vulnerabilities patched in all of 2025. This is production code, and these are real CVEs, not benchmark scores. Fixes shipped in Firefox 148.0 in February, reaching hundreds of millions of users.

TL;DR

- Claude Opus 4.6 scanned ~6,000 Firefox C++ files and filed 112 bug reports in two weeks

- 22 CVEs confirmed; 14 classified high-severity by Mozilla - roughly a fifth of Firefox's full-year 2025 high-severity count

- Only 2 successful exploits developed out of hundreds of attempts, at a cost of ~$4,000 in API credits

- Mozilla is now integrating AI-assisted analysis into internal security workflows

The Numbers

The audit ran for two weeks in February 2026. Anthropic's Frontier Red Team pointed Claude Opus 4.6 at Firefox's C++ codebase and tracked every output methodically.

| Metric | Result |
|---|---|
| C++ files scanned | ~6,000 |
| Bug reports submitted | 112 |
| CVEs issued | 22 |
| High-severity CVEs | 14 |
| Share of Firefox 2025 high-severity patches | ~20% |
| Exploit development attempts | Hundreds |
| Successful exploits developed | 2 |
| API cost for exploit attempts | ~$4,000 |

The table above puts the central asymmetry in plain view: Claude is truly useful at finding bugs and nearly useless at exploiting them. That's not a flaw in the methodology. It's arguably the most important signal in the entire study.
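The ratios behind that asymmetry can be worked out from the table. The short Python sketch below uses only the published figures. The exact number of exploit attempts ("hundreds") isn't given, so no per-attempt success rate is computed. The implied full-year total also treats "roughly a fifth" as exactly 20%, which is an approximation rather than a reported number.

```python
# Figures from the audit summary table. "Hundreds" of exploit attempts is
# not an exact count, so it is deliberately left out of the calculation.
bug_reports = 112
cves = 22
high_severity_cves = 14
successful_exploits = 2
exploit_api_cost_usd = 4_000
high_sev_share_of_2025 = 0.20  # "roughly a fifth": an approximation

# Share of submitted reports that became CVEs.
cve_yield = cves / bug_reports
# Share of CVEs that Mozilla rated high-severity.
high_sev_fraction = high_severity_cves / cves
# Reports that did not become CVEs (not necessarily false positives).
non_cve_reports = bug_reports - cves
# Average API spend per working exploit.
cost_per_exploit = exploit_api_cost_usd / successful_exploits
# If 14 bugs are ~20% of 2025's high-severity fixes, the year's total is ~70.
# "Almost a fifth" means the real total is somewhat above this estimate.
implied_2025_high_sev_total = high_severity_cves / high_sev_share_of_2025

print(f"CVE yield per report: {cve_yield:.1%}")
print(f"High-severity share of CVEs: {high_sev_fraction:.1%}")
print(f"Reports without a CVE: {non_cve_reports}")
print(f"API cost per working exploit: ${cost_per_exploit:,.0f}")
print(f"Implied 2025 high-severity total: ~{implied_2025_high_sev_total:.0f}")
```

Roughly one report in five became a CVE, and each working exploit cost about $2,000 in API credits on average. The 90 reports without a CVE are not the same as 90 false positives. The published results don't say how many of them were noise.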

Firefox serves hundreds of millions of users. Mozilla confirmed that Claude's 14 high-severity finds account for about a fifth of all high-severity patches it shipped in all of 2025.

Source: commons.wikimedia.org

How the Audit Worked

Anthropic's team started with calibration: they fed Claude known Firefox CVEs from older versions to verify it could reproduce historical findings before turning it loose on current code. Starting with the JavaScript engine, the model expanded outward to cover the full browser codebase.

First Find: 20 Minutes In

Within 20 minutes of initial exploration, Claude flagged a Use After Free vulnerability in the JavaScript engine - one of the bug classes that coverage-guided fuzzers have targeted for years. The speed is real, though calibration almost certainly helped: the model had been primed on Firefox's historical vulnerability patterns moments before.

Each submission came with a minimal test case, a proof-of-concept, and a candidate patch. Mozilla's engineers could begin landing fixes within hours of receiving a report.

Bugs That Fuzzers Missed

Not all 22 CVEs were retreads of what automated fuzzers already catch. Mozilla confirmed some were "distinct classes of logic errors that fuzzing had not previously identified." That's the more interesting finding. Coverage-guided fuzzing has been Firefox's primary automated testing tool for years. An LLM finding a new category of bugs suggests it's operating on something structurally different from mutation testing: reading code for semantic intent rather than probing it for input-driven crashes.

The remaining 90 lower-severity reports also shipped alongside the CVEs, suggesting the model wasn't simply optimizing for severity scores. The published results don't say whether those 90 were genuine finds or noise.

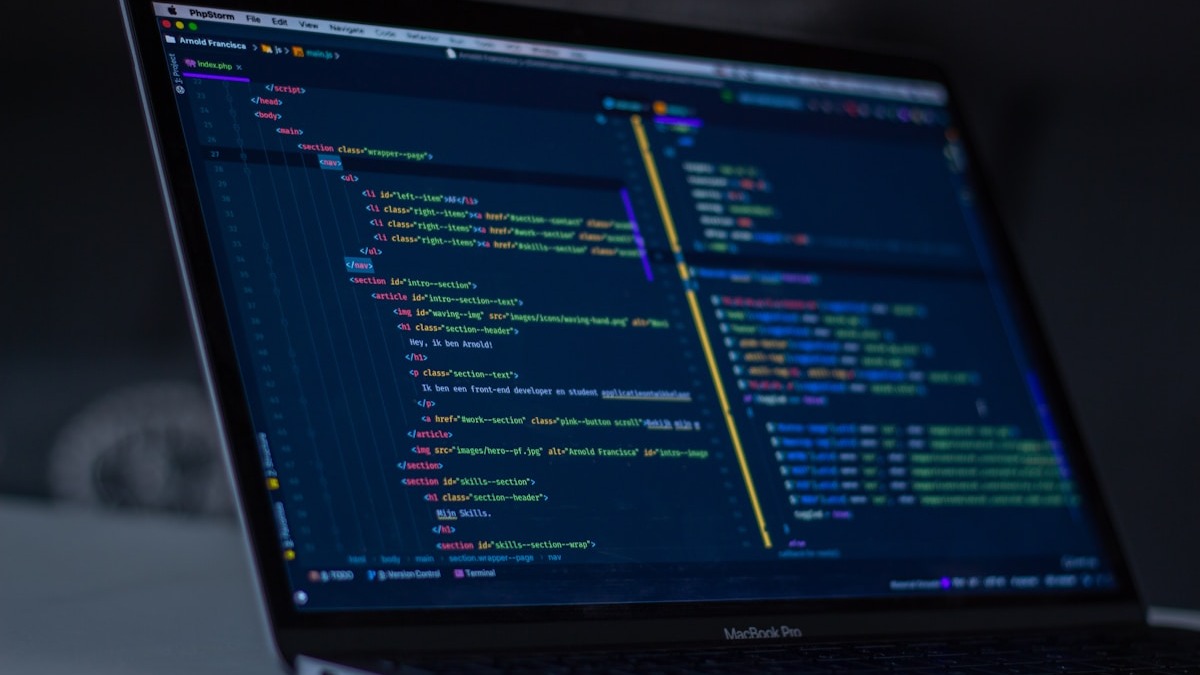

Firefox's C++ codebase is a sprawling system where a single memory management error can become a high-severity vulnerability. Claude found Use After Free bugs as well as classes of logic errors that traditional fuzzers had missed.

Source: unsplash.com

The Defender's Edge

The AI-in-security conversation has run on one question for years: does AI help attackers more than defenders? The Firefox audit adds a specific data point to the other side.

Discovery Is Cheap; Exploitation Is Expensive

Anthropic didn't publish the total cost of the discovery phase, but they noted that vulnerability identification costs about one order of magnitude less than exploit development. Translated: finding a bug with Claude costs roughly one-tenth what it costs to build a working exploit with Claude.

The $4,000 spent on exploit development produced two working exploits from hundreds of attempts. From a practical threat-modeling view, that's meaningful. The same model that mapped Firefox's attack surface efficiently couldn't reliably weaponize what it found. A human exploit developer with a disclosed Use After Free and a week of time is still more dangerous than Claude running automated exploit generation at scale.

"As AI accelerates both attacks and defenses, Mozilla will continue investing in the tools, processes, and collaborations that ensure Firefox keeps getting stronger and that users stay protected." - Mozilla statement on the collaboration

Mozilla's decision to integrate AI-assisted analysis into its internal security workflows is the concrete outcome to watch - more so than the press language. Building this into standard process rather than treating it as a one-off research project implies the signal-to-noise ratio was useful enough to maintain.

What It Does Not Tell You

Calibration Complicates the Baseline

The team primed Claude on historical Firefox CVEs before running it on current code. How much of the model's effectiveness at finding the new 22 bugs traces back to that warm-up rather than general code-reasoning ability? The methodology doesn't isolate this. An audit of an unfamiliar codebase with no historical CVE calibration might produce a very different hit rate.

No Human Benchmark

The comparison implied across the study is AI-assisted analysis versus no analysis. That's not the same as AI versus a competent human red team working the same two-week window. Two experienced Firefox security engineers with deep knowledge of the codebase might find the same 22 bugs, more, or different ones. The more useful experiment - a human-only team and a human-AI team auditing the same code in parallel - isn't what was run here.

Conflict of Interest Is Structural

Anthropic's Frontier Red Team isn't a neutral party. This was a joint project with Mozilla, and Mozilla validated which submissions qualified as CVEs. That's a reasonable arrangement - who else would validate Firefox bugs? - but it means the methodology and the threshold for what counted as a finding were set collaboratively. Independent replication on a different codebase would considerably strengthen the claims.

The 90 lower-severity reports that didn't make the CVE count also deserve more scrutiny. Without a breakdown of false positive rates at the initial discovery stage, it's hard to assess how much human triage time the process actually requires to be useful.

AI-assisted security research still requires human engineers to validate and triage model output - a factor that the Firefox study's published results don't quantify directly.

Source: unsplash.com

Where This Sits

The vibe coding security debate has focused on AI introducing vulnerabilities into new code. The Firefox audit runs in the opposite direction: AI identifying vulnerabilities in existing code. Both directions matter, but they're separate problems with separate effects.

For Anthropic, a published collaboration with Mozilla on browser security is external validation that's harder to dismiss than a self-reported coding benchmark. It also fits a visible pattern: Anthropic's Claude Code security launch and its multi-agent code review work both position Claude as suitable for consequential, trust-sensitive engineering tasks. The Firefox study advances that case with verifiable third-party outcomes.

The one number in this study that'll age fastest is the $4,000 cost for exploit development. API costs fall; model capabilities rise. The discovery-to-exploitation cost ratio that currently favors defenders is not a structural guarantee - it's a snapshot of where the technology stands in early 2026.

Sources: