Cerebras IPO 20x Oversubscribed Signals AI Chip Crunch

Cerebras Systems raised its IPO price range to $150-$160 per share after investors placed more than $10 billion in orders and books filled to more than 20 times the shares on offer.

Blackwell GPU allocation slots for enterprise customers outside the hyperscaler tier now stretch past year-end 2026. Wait lists for dedicated NVIDIA capacity run twelve months or longer for organizations below the top tier of enterprise contracts. Into that supply gap, Cerebras Systems has raised its Nasdaq IPO price range to $150-$160 per share - up from $115-$125 - after investor books filled to more than 20 times the number of shares available.

The company set pricing for May 13. At $160 per share on 30 million shares, the offering raises $4.8 billion, up from the original $3.5 billion. Banks underwriting the deal received more than $10 billion in orders before a single share priced. Revenue for 2025 came in at $510 million, up 76% from $290 million in 2024, anchored by a $20 billion compute contract with OpenAI covering 750 megawatts of inference capacity through 2028.
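The offering and growth figures above can be checked with a few lines of arithmetic. This is a minimal sketch using only the numbers quoted in this article; the variable names are illustrative, and the proceeds are gross, before underwriting fees or any overallotment.

```python
# Figures quoted in the article; variable names are illustrative.
shares_offered = 30_000_000
price_low, price_high = 150, 160  # raised price range, USD per share

# Gross proceeds at each end of the range (no fees or overallotment assumed).
proceeds_low = shares_offered * price_low
proceeds_high = shares_offered * price_high

revenue_2024 = 290_000_000
revenue_2025 = 510_000_000
growth_pct = (revenue_2025 - revenue_2024) / revenue_2024 * 100

print(f"Gross proceeds: ${proceeds_low / 1e9:.1f}B to ${proceeds_high / 1e9:.1f}B")
print(f"2025 revenue growth: {growth_pct:.0f}%")
```

At the top of the range the result matches the $4.8 billion figure, and the growth rate rounds to the 76% cited.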

TL;DR

- IPO price raised to $150-$160/share from $115-$125; shares increased to 30M from 28M

- Books filled to more than 20x available shares; investors placed $10B+ in orders on a $3.5B offering

- 2025 revenue: $510M (up 76%), anchored by a $20B OpenAI Master Relationship Agreement for 750MW through 2028

- OpenAI also holds warrants on 33M+ shares and loaned Cerebras $1B at 6% to build out data center infrastructure

- Pricing set for May 13, Nasdaq ticker CBRS; the oversubscription shows how far AI compute demand has outrun supply

The Constraint Behind the Surge

NVIDIA controls AI chip revenue and will for the foreseeable future. What's in question is whether NVIDIA can supply enough compute to meet demand growing faster than its fab allocations can cover. The Cerebras book-build suggests the answer is no, not yet.

Cerebras makes something architecturally different from any NVIDIA product. The Wafer-Scale Engine 3 (WSE-3) occupies an entire 300mm silicon wafer: 4 trillion transistors, 900,000 compute cores, built on TSMC's 5nm process. Instead of pairing compute chiplets with HBM connected through advanced packaging, Cerebras puts the memory directly on the die - removing the bandwidth bottleneck that limits conventional GPU performance on long-context inference workloads. The CS-3 system built around the WSE-3 delivers 125 petaflops of AI compute from a single rack unit.

For large-model inference at scale, that distinction has real consequences. Moving weights from HBM to compute cores accounts for most of the latency at context lengths above 32K tokens. Cerebras's architecture skips that transfer. Against NVIDIA's B200, Cerebras's own public product page lists the WSE-3 as 58x larger, with 19x more transistors, 250x more on-chip memory, and 2,625x more memory bandwidth.

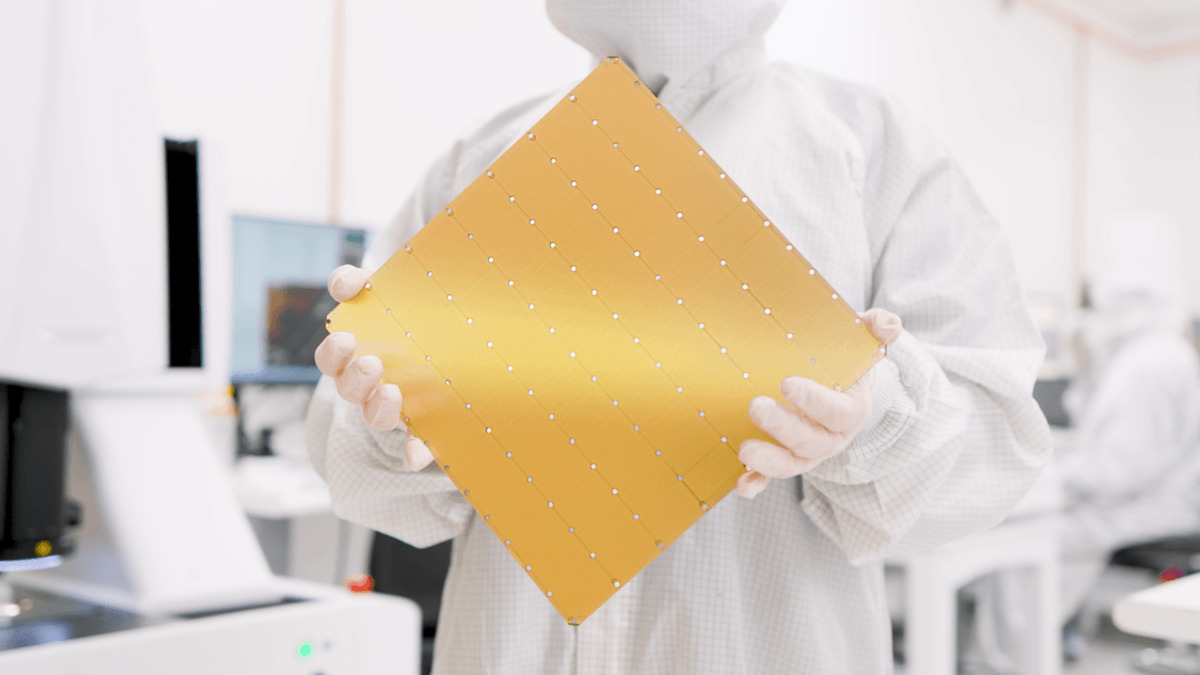

The WSE-3 covers a full 300mm silicon wafer - the largest chip ever built by area.

Source: spectrum.ieee.org


Who Is Supplying What

| Supplier | Technology | Anchor Customer | 2025-2026 Revenue | Where the Bottleneck Lives |
|---|---|---|---|---|
| NVIDIA | Blackwell GPU clusters, NVLink fabric | Azure, GCP, AWS | $140B+ annualized | CoWoS-S packaging, TSMC N4P allocation |
| Cerebras | WSE-3 wafer-scale chip, CS-3 systems | OpenAI ($20B, 750MW) | $510M (2025) | TSMC 300mm wafer line capacity |
| Groq | LPU inference accelerators | Meta, Stripe | Undisclosed (private) | LPU fab production ramp |
| Taalas | Model-weight ASIC chips | TBD | Pre-revenue | Manufacturing scale-up |

NVIDIA's constraint sits in TSMC's CoWoS-S advanced packaging capacity, which is fully utilized through 2026 serving NVIDIA and Apple orders. Cerebras uses an entirely different fabrication method - the wafer is the chip, no chiplet packaging required - which gives it a different supply chain dependency. But it still competes for TSMC wafer starts, and each WSE-3 consumes a full 300mm production wafer.

The OpenAI relationship is more intertwined than a standard compute contract. In January 2026, OpenAI signed a Master Relationship Agreement worth more than $20 billion over three years, with an option to expand by another 1.25 gigawatts through 2030 - potentially pushing total contract value above $50 billion if exercised. OpenAI also loaned Cerebras $1 billion at 6% annual interest, secured by warrants allowing OpenAI to buy more than 33 million shares. One of the company's largest customers also holds a meaningful equity stake and has a direct financial interest in Cerebras reaching this IPO.
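To put the contract terms on a common scale, the quoted figures can be reduced to dollars per megawatt and annual interest. This is a rough sketch: the contract values are stated only as floors ("more than", "above"), so the per-megawatt results are lower bounds, and treating the loan as simple annual interest on the full principal is an assumption, since the article does not give the repayment terms.

```python
# Figures quoted in the article; names are illustrative, not from any filing.
contract_value_usd = 20_000_000_000   # stated as "more than $20 billion"
contract_capacity_mw = 750

expansion_capacity_mw = 1_250         # optional 1.25 GW expansion
expanded_value_usd = 50_000_000_000   # stated as "above $50 billion" if exercised

loan_principal_usd = 1_000_000_000
loan_rate_pct = 6                     # annual rate; simple interest is an assumption

usd_per_mw = contract_value_usd / contract_capacity_mw
usd_per_mw_expanded = expanded_value_usd / (contract_capacity_mw + expansion_capacity_mw)
annual_interest_usd = loan_principal_usd * loan_rate_pct / 100

print(f"Base contract: ${usd_per_mw / 1e6:.1f}M per MW")
print(f"With expansion: ${usd_per_mw_expanded / 1e6:.1f}M per MW")
print(f"Loan interest: ${annual_interest_usd / 1e6:.0f}M per year")
```

On these assumptions the base contract works out to roughly $26.7 million per megawatt, and the loan to about $60 million a year in interest.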

When the Cerebras IPO roadshow opened in April, the company targeted a $22-25 billion valuation. After seven weeks of investor meetings, the raised price range suggests that investors read the demand signal as stronger than the initial filing did.

Reuters, citing people with knowledge of the deal, reported May 10 that the IPO price range had been raised to $150-$160 per share after books filled to more than 20 times the number of shares on offer.

Who Gets Squeezed

Startups Waiting on Allocations

A startup running large-model inference at low latency in 2026 faces two realistic options: pay cloud providers' premium API pricing, or join a twelve-month wait list for dedicated NVIDIA capacity. H100 spot pricing on secondary markets runs 3-5x list price. Neither is acceptable when latency and cost targets are contractual obligations.

Cerebras's on-chip memory architecture handles exactly this class of problem. Long-context inference - where moving weights from HBM consumes most of the wall-clock time - runs faster on WSE-3 than on conventional GPU stacks. The performance advantage isn't a benchmark artifact; it's a physical consequence of eliminating the memory transfer step.

Inference Providers Locked Into Spot Markets

Real-time inference providers need predictable capacity at a predictable price. NVIDIA's enterprise allocation system doesn't offer that: wait lists shift, spot pricing swings by the quarter, and hyperscaler cloud pricing varies with demand. The Cerebras model - a fixed multi-year compute contract at a contractual price - is the structure inference providers want when building products on fixed gross margins.

NVIDIA committed $40 billion in equity deals to AI customers this year, keeping customers close when hardware allocations can't. Cerebras doesn't need that mechanism for OpenAI; the $1 billion loan and the warrants have already aligned interests more directly than any equity side deal.

The CS-3 system, which houses the WSE-3 and powers the OpenAI compute infrastructure underpinning the IPO's anchor revenue.

Source: cerebras.ai


Mid-Size Enterprises Priced Out

Mid-size enterprises without hyperscaler-level purchasing power see AI compute become a rising cost line even as NVIDIA reports record earnings. The NVIDIA-Groq inference chip deal with OpenAI shows where NVIDIA's engineering investment is flowing - toward the customers it already has, not the queue below them.

What Breaks First

The 20x oversubscription creates a price anchor for the broader market in AI chip alternatives. At $4.8 billion raised on $510 million of 2025 revenue, institutional investors have publicly revealed what they'll pay for a credible non-NVIDIA compute source backed by a real anchor customer. That multiple will follow Groq to its eventual IPO filing and Taalas to its next funding round.
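For reference, the ratio implied by those two figures can be computed directly. Note that this sketch divides gross proceeds by trailing revenue; it is not a market-capitalization multiple, which would need the total shares outstanding, a number this article does not give.

```python
# Figures quoted in the article; names are illustrative.
gross_proceeds_usd = 4_800_000_000   # 30M shares at $160
revenue_2025_usd = 510_000_000

# Proceeds-to-revenue ratio (not a valuation multiple).
proceeds_to_revenue = gross_proceeds_usd / revenue_2025_usd

print(f"Proceeds to 2025 revenue: {proceeds_to_revenue:.1f}x")
```

The result is about 9.4x.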

NVIDIA's Blackwell wait times extend if yields at TSMC slip or if hyperscaler demand continues to accelerate ahead of capacity forecasts. A second delay on Blackwell production in 2026 would sharpen demand for alternatives further, and Cerebras would be the only publicly traded company positioned to capture that directly.

TSMC capacity expansion into 2027 and 2028 - with new fabs in Arizona and Japan - is the factor that eventually relieves this constraint. When that capacity comes online on schedule, pricing pressure on GPU alternatives softens and the revenue multiple Cerebras is capturing today becomes harder to sustain at IPO-level prices.

Cerebras prices on May 13 under ticker CBRS. Trading begins May 14.

Sources:

- Let's Data Science: Cerebras Raises Expected IPO Price Range

- Grey Journal: Cerebras Targets $40B Valuation in May IPO

- TECHi: Cerebras IPO 2026 Price Range, Valuation, Risks

- BanklessTimes: NVIDIA Rival Cerebras Files for $3.5B Nasdaq IPO

- Motley Fool: Nvidia Rival Cerebras Unveils IPO Details

- TechCrunch: OpenAI's cozy partner Cerebras is on track for a blockbuster IPO

- Analytics Drift: Cerebras Systems IPO - What the Nasdaq Listing Means for AI Chips

- Cerebras: WSE-3 Product Page