Cerebras $5.5B IPO Pops 68% - Biggest US Tech Debut Since 2020

Cerebras raised $5.55B and surged 68% on Nasdaq debut, the largest US tech IPO since Snowflake in 2020, but a 200x revenue multiple and a single-customer backlog tell a more complicated story.

Cerebras Systems rang the Nasdaq bell on Thursday with $5.55 billion in its pocket and shares closing 68% above the IPO price - handing the AI chip market its loudest signal yet that public investors are ready to bet on alternatives to NVIDIA.

The company priced 30 million Class A shares at $185 the night before, already above an upsized range of $150 to $160. On Thursday morning, shares opened at $350 and touched $385 before settling at $311.07. By Friday, they had pulled back roughly 10% as the opening euphoria met sober arithmetic. It's still the largest US tech IPO since Snowflake's $3.8 billion debut in 2020 - and the opening act for what Wall Street expects will be a wave of AI infrastructure listings.

TL;DR

- Cerebras priced at $185/share on May 13, raising $5.55B from 30M Class A shares

- Day-1 close: $311.07 (+68%); day-2: pulled back ~10% on profit-taking

- Fully diluted market cap reached ~$95B on just $510M of 2025 revenue - a P/S multiple roughly 7x NVIDIA's

- 86% of 2025 revenue came from two UAE-linked customers; OpenAI accounts for over 80% of the $24.6B backlog

- G42's stake was restructured to non-voting shares in October 2025, clearing the CFIUS block that killed the 2024 IPO attempt

The Numbers Behind the Bell

The oversubscribed roadshow had drawn more than $10 billion in orders against a $3.5 billion offering before pricing landed at $185. Morgan Stanley, Citigroup, Barclays, and UBS ran the books. The result beat Snowflake's $3.8 billion 2020 debut - the previous US tech IPO benchmark - and set a high mark for the year by a wide margin.

The complication starts with the revenue math. Cerebras reported $510 million in 2025 revenue, up 76% from $290 million the prior year. That growth rate is real. But the price the market put on it isn't modest: at Thursday's close, the basic-share market cap sat around $70 billion. On a fully diluted basis - accounting for restricted shares, options, and warrants - it reached roughly $95 billion. That puts the trailing price-to-sales ratio at around 200x, compared to roughly 28x for NVIDIA.

| Metric | Cerebras (CBRS) | NVIDIA (NVDA) | Snowflake IPO (2020) |
|---|---|---|---|
| IPO raise | $5.55B | N/A | $3.8B |
| Market cap (Cerebras and Snowflake at day-1 close) | ~$70B (basic) | ~$3.2T | ~$33B |
| Annual revenue | $510M (2025) | ~$130B | $592M |
| Trailing P/S ratio | ~200x | ~28x | ~120x |
| Top customer revenue share | 86% (2 customers) | Under 5% | Distributed |
| CFIUS clearance required | Yes (2024-2025) | No | No |
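The multiples above can be rechecked from the article's own rounded figures. The following is a minimal Python sketch, not a valuation model: the constant names are illustrative, and the inputs are the approximate market caps and revenue quoted in this piece. On these rounded inputs the fully diluted multiple works out nearer 186x than 200x, and the gap to NVIDIA is a little under 7x, so the headline figures are best read as round-number approximations.

```python
# Figures quoted in the article (USD). Market caps are approximate
# day-1 close values; revenue is the reported 2025 figure.
REVENUE_2025 = 510e6
MARKET_CAP_BASIC = 70e9
MARKET_CAP_DILUTED = 95e9
NVIDIA_PS = 28  # approximate trailing multiple quoted in the article


def price_to_sales(market_cap: float, revenue: float) -> float:
    """Trailing price-to-sales: market capitalization divided by revenue."""
    return market_cap / revenue


ps_basic = price_to_sales(MARKET_CAP_BASIC, REVENUE_2025)
ps_diluted = price_to_sales(MARKET_CAP_DILUTED, REVENUE_2025)
ratio_to_nvidia = ps_diluted / NVIDIA_PS

print(f"Basic P/S:         {ps_basic:.0f}x")
print(f"Fully diluted P/S: {ps_diluted:.0f}x")
print(f"Diluted vs NVIDIA: {ratio_to_nvidia:.1f}x")
```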

The bulls will argue Cerebras is being valued on backlog, not trailing revenue. The $24.6 billion total backlog is the headline number. The problem: more than $20 billion of that backlog comes from a single deal with OpenAI, covering 750 megawatts of inference capacity through 2028.

The Technology Bet

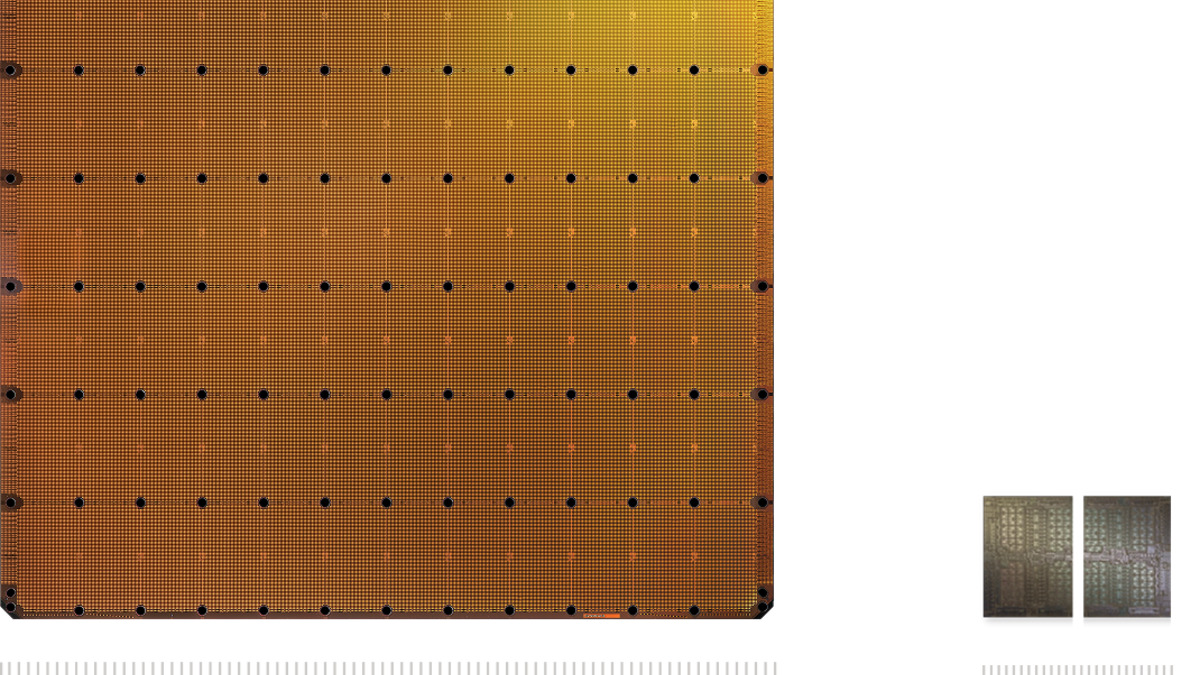

Cerebras makes a single product: the Wafer-Scale Engine 3 (WSE-3), a processor built from an entire silicon wafer rather than a cluster of smaller chips. At 46,225 square millimeters and 4 trillion transistors, it is 58 times larger than a leading GPU chip by area. The architecture removes the inter-chip communication overhead that forces GPU clusters to coordinate constantly - a bottleneck that compounds as model sizes grow.

The WSE-3 is the world's largest commercialized AI chip at 46,225 mm², containing 4 trillion transistors. CEO Andrew Feldman held one during the Nasdaq debut.

Source: cerebras.ai

Why inference, not training

Cerebras has positioned itself in inference - the compute required for deployed AI models to respond to queries. Training large models still runs almost exclusively on NVIDIA hardware. The WSE-3 targets the deployment side: organizations running millions of requests per day where latency matters more than absolute parameter count.

That positioning has won real contracts. OpenAI launched its first model running on Cerebras infrastructure earlier in 2026. Amazon Web Services is a listed customer. The inference market is expected to grow faster than training over the coming years as model adoption scales beyond the frontier labs.

The manufacturing question

Analyst Gil Luria at D.A. Davidson flagged a concern that gets less attention than the valuation debate: the WSE-3's unusual size means a single manufacturing defect can affect the whole chip rather than just one die in a cluster. Yield rates - the proportion of chips that come off the fab working correctly - are harder to confirm publicly for wafer-scale devices. Converting $24.6 billion in backlog to actual revenue depends on manufacturing, data center construction, and power availability all executing cleanly.
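To see why die area matters so much for yield, the textbook Poisson yield model is a useful rough guide: the chance that a die has zero defects falls exponentially with its area. The sketch below is illustrative only. The defect density is a hypothetical placeholder, not a figure from Cerebras or its foundry; the GPU die area is simply the one implied by the article's "58 times larger" comparison; and the model ignores any redundancy or repair a real design might include, so it is not an estimate of Cerebras' actual yield.

```python
import math


def poisson_yield(area_mm2: float, defects_per_cm2: float) -> float:
    """Probability that a die of the given area has zero defects (Poisson model)."""
    area_cm2 = area_mm2 / 100.0  # 1 cm^2 = 100 mm^2
    return math.exp(-defects_per_cm2 * area_cm2)


WSE3_AREA_MM2 = 46_225              # from the article
GPU_AREA_MM2 = WSE3_AREA_MM2 / 58   # implied by the article's "58 times larger"
DEFECT_DENSITY = 0.1                # defects per cm^2: hypothetical, for illustration

gpu_yield = poisson_yield(GPU_AREA_MM2, DEFECT_DENSITY)
wafer_yield = poisson_yield(WSE3_AREA_MM2, DEFECT_DENSITY)

print(f"Zero-defect probability, GPU-sized die:   {gpu_yield:.1%}")
print(f"Zero-defect probability, wafer-sized die: {wafer_yield:.2e}")
```

Under this naive model a defect-free wafer-sized die is vanishingly rare at any realistic defect density, which is why the question of how defects are tolerated, and how that can be verified from outside, matters for converting backlog into shipped hardware.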

Side-by-side: the WSE-3 wafer chip versus a standard GPU die. Cerebras argues the single-die approach removes the inter-chip latency bottleneck that limits GPU cluster performance.

Source: cerebras.ai

Who Benefits

Cerebras founders. CEO Andrew Feldman's stake at Thursday's close is worth about $3.2 billion. CTO Sean Lie holds roughly $1 billion. Both are now paper billionaires from a company that spent a decade betting that the GPU wasn't the end of the story.

OpenAI's inner circle. This is where the deal gets complicated. OpenAI is simultaneously Cerebras' largest customer and the provider of a $1 billion loan secured by warrants for more than 33 million Cerebras shares. Several people tied to OpenAI are also angel investors in Cerebras, including CEO Sam Altman, president Greg Brockman, former chief scientist Ilya Sutskever, and board member Adam D'Angelo. Altman held roughly 89,000 shares at last filing, worth around $16.5 million at the $185 IPO price, or about $27.7 million at Thursday's close. The relationship has drawn scrutiny as a potential conflict of interest and was cited in Elon Musk's ongoing lawsuit against Altman and OpenAI.

The inference market. A non-NVIDIA chip company reaching this scale on public markets validates the thesis that AI infrastructure isn't a one-company story. That benefits every firm currently raising capital on a similar premise, and signals to sovereign AI programs that GPU alternatives are viable.

Who Pays

Retail investors chasing momentum. Nicholas Smith at Renaissance Capital called the asking price "quite high even out to 2028." Luria at D.A. Davidson suggested a fairer value closer to $115 per share based on backlog fundamentals alone - 38% below the IPO price. The 10% day-2 pullback suggests a portion of institutional investors agree the first-day close overshot.
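The gap between those price points is easy to recompute. A small Python sketch using only the prices quoted in this article (the variable and function names are illustrative):

```python
IPO_PRICE = 185.00
DAY1_CLOSE = 311.07
FAIR_VALUE = 115.00  # Luria's suggested value, as quoted above


def pct_change(new: float, old: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100


day1_pop = pct_change(DAY1_CLOSE, IPO_PRICE)
fair_vs_ipo = pct_change(FAIR_VALUE, IPO_PRICE)
fair_vs_close = pct_change(FAIR_VALUE, DAY1_CLOSE)

print(f"Day-1 close vs IPO price:   {day1_pop:+.0f}%")
print(f"$115 value vs IPO price:    {fair_vs_ipo:+.0f}%")
print(f"$115 value vs day-1 close:  {fair_vs_close:+.0f}%")
```

Measured against Thursday's close rather than the IPO price, the same $115 figure implies a drop of roughly 63%.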

Shareholders carrying customer concentration risk. Two UAE-linked entities represented 86% of 2025 revenue. The CFIUS review that blocked the 2024 IPO attempt was resolved by restructuring G42's investment to non-voting shares - but the revenue dependence on those customers did not disappear with the legal restructuring. If Abu Dhabi-linked AI spending contracts or is redirected, the revenue picture changes faster than a $95 billion valuation can absorb.

Anyone counting on broad customer diversification. A term in the OpenAI deal restricts Cerebras from selling to other frontier AI labs while the contract runs. That clause caps how fast the company can diversify away from its current backlog concentration. If OpenAI eventually builds its own inference chips - a well-reported ambition - or moves volume to a competitor, a large fraction of that $24.6 billion disappears.

"This is the right way to fund our growth," Feldman told CNBC on Thursday, standing at the Nasdaq MarketSite with a WSE-3 chip in hand. The chip looked out of place against the trading floor backdrop - a dinner-plate-sized processor that represents either a genuine architectural leap or a very expensive bet on a single customer staying put.

Cerebras just ran the biggest US tech IPO in six years. The next test is its first earnings call as a public company, and the rate at which it converts $24.6 billion in backlog to recognized revenue.

Sources:

- TechCrunch - Cerebras raises $5.5B, then stock pops 108%

- Yahoo Finance - Cerebras stock surges 70%

- ts2.tech - Why Cerebras stock is down today

- Cerebras press release - IPO pricing

- TechCrunch - OpenAI's cozy partner Cerebras on track for blockbuster IPO

- The Next Web - Cerebras raises $5.55bn in biggest US tech IPO since Snowflake