Five Frontier AI Labs Now Under US Pre-Release Review

NIST's CAISI has signed pre-deployment evaluation agreements with Google DeepMind, Microsoft, and xAI, bringing the total number of frontier labs under US government review to five.

The US government has quietly expanded its grip on frontier AI. The Center for AI Standards and Innovation (CAISI) - the renamed successor to Biden's AI Safety Institute - announced this week that Google DeepMind, Microsoft, and xAI have signed formal pre-deployment evaluation agreements, joining OpenAI and Anthropic in a testing program that has now completed more than 40 assessments.

Every major Western frontier lab is now under some form of US government review before its models reach the public. That sentence would have been unthinkable six months ago.

TL;DR

- CAISI signed pre-deployment evaluation agreements with Google DeepMind, Microsoft, and xAI on May 5, 2026

- Five frontier labs now in the program - OpenAI and Anthropic signed in 2024, three new additions this week

- Testing happens in classified environments with safety guardrails partially or fully removed

- The Trump administration, which removed "safety" from CAISI's name, is now drafting an executive order to extend mandatory reviews to all labs

- Anthropic's Mythos cybersecurity model is widely cited as the trigger for the policy reversal

Five Labs, One Framework

OpenAI and Anthropic First, Now Three More

The program's roots go back to August 2024, when Biden's US AI Safety Institute struck voluntary agreements with OpenAI and Anthropic for pre-deployment model evaluations. After the Trump administration took over in January 2025, it rebranded the institute as CAISI and stripped "safety" from its title - a symbolic move widely read as a signal that the new administration would focus on capability over caution.

The signal was wrong. Or at least incomplete.

This week's announcements bring the total number of participating labs to five. CAISI Director Chris Fall framed the expansion in blunt national security terms:

"Independent, rigorous measurement science is essential to understanding frontier AI and its national security implications."

The Business Software Alliance's Aaron Cooper, whose organization has pushed for exactly this kind of structured government-industry engagement, added that "CAISI brings the necessary expertise to work with private sector partners to evaluate frontier models for safety and national security risks."

What Testing Actually Involves

The agreements aren't rubber stamps. Under the terms, CAISI and the participating labs share technical information about models before public release. CAISI then conducts evaluations, develops best practices, and feeds findings into the broader Commerce Department AI policy framework aligned with Trump's AI Action Plan.

The scope extends well beyond release day. Post-deployment assessment is also part of the framework, meaning government scrutiny doesn't end when a model goes live.

CAISI has been building its track record quietly. In April 2026, it published a full evaluation of DeepSeek V4 Pro - the first major Chinese model to go through the process - finding significant shortcomings in accuracy, security characteristics, and cost efficiency relative to domestic alternatives.

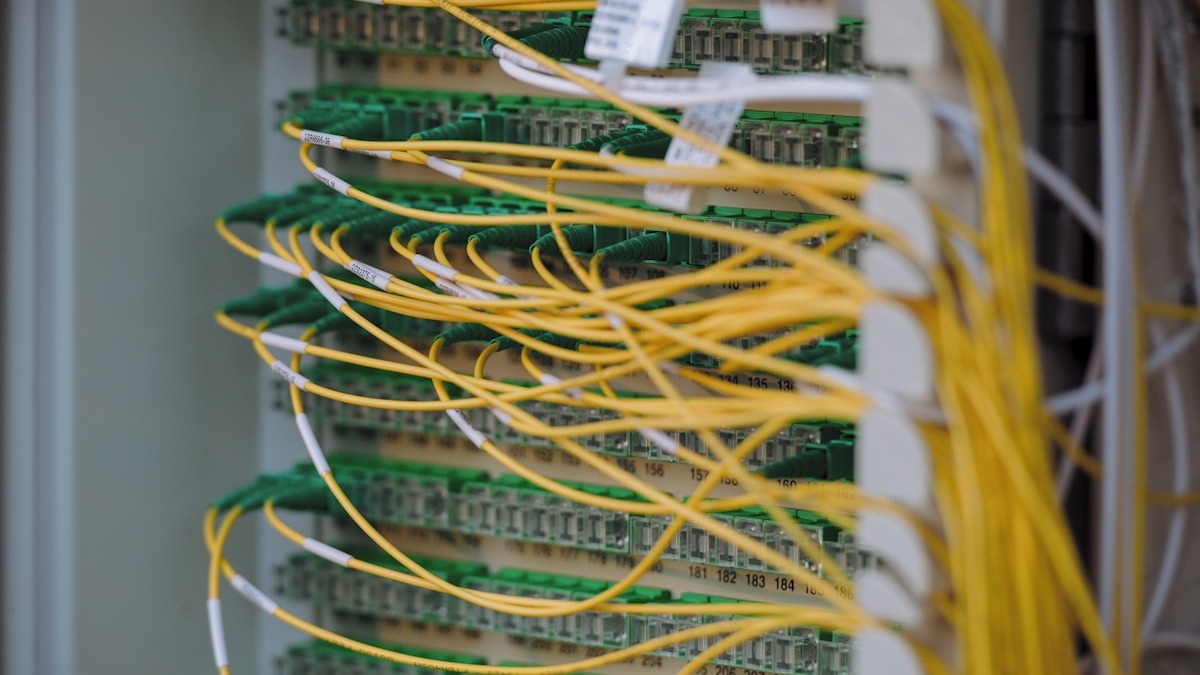

AI model evaluations require major classified computing infrastructure, running models in isolated environments where their unfiltered capabilities can be probed without public risk.

Source: unsplash.com

Guardrails Off - The Classified Dimension

The detail in the agreements that has drawn the most attention from security researchers is this: CAISI will study models "that have reduced or removed safeguards to better understand their unmitigated capabilities."

In plain terms, labs will hand over versions of their models with the safety filters switched off. The evaluations happen inside classified settings, run with an interagency task force that includes the NSA, the White House Office of the National Cyber Director, and the Director of National Intelligence.

The evaluation scope is deliberately broad. CAISI's mandate covers cybersecurity, biosecurity, and chemical weapons concerns - plus assessments of foreign AI systems, backdoors, and covert malicious behavior baked into model weights. That last category is particularly sensitive: it implies CAISI believes adversaries could be hiding capabilities inside models distributed through apparently legitimate channels.

The logic for stripped-down testing is defensible - you cannot evaluate what a model can actually do if its outputs are shaped by the production alignment stack. But the operational reality raises its own questions. Who has access to these guardrail-free versions? Under what legal authority are findings stored and shared? The agreements do not appear to specify.

Cybersecurity policy expert Devin Lynch has been vocal about the limitations: "Capability assessments are only as good as the threat models behind them." His concern is that CAISI must publicly define its testing criteria, not just announce its participants. A list of labs in a program isn't the same as a rigorous evaluation framework.

From "Safety" to "Standards" - A Deliberate Rename

To understand how strange this week's news is, it helps to remember the trajectory. The Trump administration did not just rebrand Biden's safety institute. It actively positioned AI policy as an economic competitiveness issue, not a safety one. VP JD Vance said at the Paris AI Summit in February 2025 that the AI race would be won "by building," not through safety concerns. The White House blueprint preempting state AI laws earlier this year was explicitly framed as a deregulatory move to give US companies room to accelerate.

CAISI itself was created to reflect this shift. Dropping "safety" from the name was not an accident. The institute was repositioned as a standards and measurement body - technical, not normative.

The Mythos Moment

Then Anthropic's Mythos model arrived. Capable of identifying and exploiting software vulnerabilities at a scale and speed no human team can match, Mythos was described by Anthropic itself as "too dangerous to publicly release." The company disclosed the model's capabilities internally before sitting on them - a disclosure that triggered immediate attention from national security officials.

The Mythos situation made the abstract concrete. For the first time, a major AI lab had built something powerful enough to be a genuine offensive cybersecurity tool, acknowledged it, and then had to explain to the government why they thought that was acceptable.

That conversation didn't stay private.

The White House is drafting an executive order that would formalize pre-release AI vetting across all frontier labs, not just voluntary participants.

Source: unsplash.com

An Executive Order in the Works

The voluntary agreements now on the table aren't the ceiling. They're the floor.

National Economic Council Director Kevin Hassett told Fox Business that the White House is "studying possibly an executive order" that would require AI models to go through a government review process before public release - "just like an FDA drug." The working group under consideration would include both government officials and tech executives.

The admission is a striking one. Hassett is describing mandatory pre-release review for private AI products. That's not a conservative deregulatory position - it's closer to what Biden's AI safety advocates were asking for two years ago, and it is being floated by the same administration that revoked Biden's executive order on AI the week Trump took office.

Policy analyst Rumman Chowdhury, who has tracked AI governance closely, called it plainly: "This is a 180 for the Trump administration, that has very explicitly been anti-any sort of regulation."

What the Critics Are Saying

Not everyone is convinced the voluntary agreements accomplish much. Industry analyst Nick Patience argues these deals function mainly as "political insurance" for CIOs - companies can point to government participation as a signal of legitimacy when pitching enterprise contracts, especially for federal work. The actual security value, he suggests, depends entirely on what CAISI's classified evaluations find and whether those findings are acted upon.

The structural concern is deeper. CAISI's testing relies on what companies voluntarily submit. There's no requirement to hand over full training details, no independent audit of whether the model being evaluated matches the one being launched, and no enforcement mechanism if a company disagrees with CAISI's findings.

Microsoft's chief responsible AI officer acknowledged the complexity, noting that AI safety "requires close collaboration between industry and governments with deep technical and security expertise." What that collaboration looks like in practice - who leads, who follows, who can say no - remains unspecified.

For the NIST CAISI effort on AI agent standards, which addresses a related but distinct governance gap, see the NIST AI Agent Standards Initiative.

The expansion to five labs is truly significant. Six months ago, a framework in which every major Western frontier lab submits models for classified government review didn't exist. Now it does. Whether that framework has teeth depends on what happens when an evaluation raises a red flag - and on whether the White House executive order, if it comes, gives CAISI the authority to act on what it finds, not just observe it.
