Arm Launches AGI CPU, Its First In-House Chip in 35 Years

At its Arm Everywhere event in San Francisco, Arm unveiled the AGI CPU - a 136-core data center processor co-developed with Meta and the company's first owned silicon product in its 35-year history.

Arm spent 35 years as the semiconductor industry's Switzerland - designing architectures, licensing them to anyone, never competing with the companies it supplied. That model ends today.

At an event in San Francisco on March 24, CEO Rene Haas unveiled the Arm AGI CPU: the company's first proprietary data center processor since its 1990 founding. Meta co-developed it and is the launch customer. OpenAI, Cerebras, and Cloudflare are among the signed partners. Arm stock surged roughly 13% in premarket trading on March 25.

TL;DR

- First owned silicon product in Arm's 35-year history, purpose-built for AI inference orchestration

- Up to 136 Neoverse V3 cores on TSMC's 3nm process; claims 2x performance per rack vs. x86

- Meta co-developed the chip; OpenAI, Cerebras, Cloudflare among initial launch partners

- CEO Rene Haas projects $15B in AGI CPU revenue by FY2031, against ~$4B in total company revenue today

- Production ramp expected H2 2026; Qualcomm notably absent from partner list

Thirty-Five Years of Neutrality, Ended

Arm's business model was elegant. Design an architecture once. License it to hundreds of companies - Apple, Qualcomm, Amazon, Google, Nvidia, Samsung. Collect royalties on every chip they ship. Never compete with your customers.

The ceiling on that model is real. On a $500 chip, Arm's royalty might be $5. It captures 1-2% of each device's value while its licensees capture the rest. For 35 years, that was acceptable. Agentic AI just changed the calculation.

"People thought CPUs were dead," CEO Rene Haas told attendees at the Arm Everywhere event. "We need more and more CPUs."

Haas argues that agentic AI workloads require four times as many CPU cores per gigawatt of data center capacity as conventional inference - a demand signal that didn't exist three years ago. The AGI CPU is Arm's direct attempt to capture that growth rather than watching licensees do it.

Why Meta Came Calling in 2023

Development started when Meta approached Arm with a specific request: build a processor designed for the orchestration layer of large-scale AI inference. Not for training, not for transformer attention, but for coordinating the memory allocation, storage access, and accelerator scheduling that agentic workloads require.

Meta already had its own accelerator roadmap (by now on its fourth MTIA generation), but needed a CPU partner who'd build to exact specification rather than adapting an existing product. The co-development arrangement gave Arm its first committed customer before a single line of RTL was written.

Specifications

| Spec | SP113012 (Flagship) | SP113012S (TCO) | SP113012A (Bandwidth) |
|---|---|---|---|
| Cores | 136 | 128 | 64 |
| Architecture | Armv9.2 / Neoverse V3 | Armv9.2 / Neoverse V3 | Armv9.2 / Neoverse V3 |
| Process node | TSMC N3P (3nm) | TSMC N3P | TSMC N3P |
| Clock | 3.2 GHz base / 3.7 GHz boost | same | same |
| TDP | 300W (configurable 230-420W) | 300W | 300W |
| Memory | 12-channel DDR5-8800 | same | same |
| Memory bandwidth | >800 GB/s aggregate | >800 GB/s | 6-13 GB/s per core |
| PCIe | Gen 6.0, 96 lanes | same | same |
| CXL | 3.0 Type 3 | same | same |

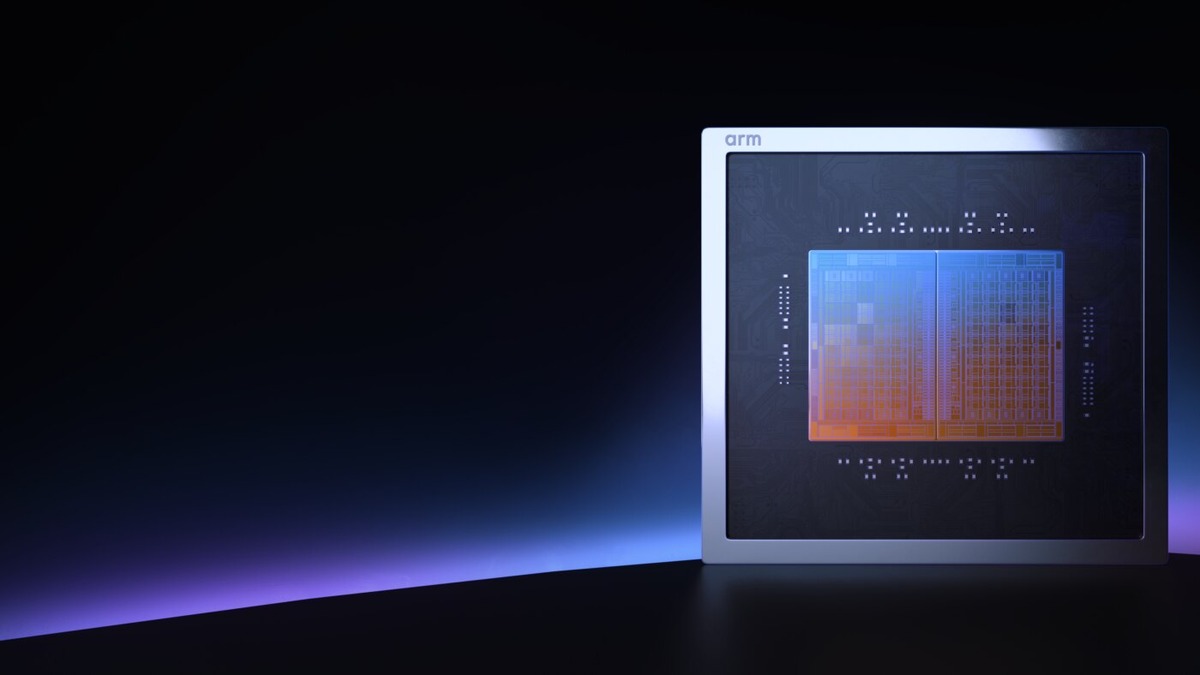

Each core carries dual 128-bit SVE2 units with bfloat16 and INT8 support. The chip ships as a dual-die chiplet package - two dies fused together for yield management at 3nm. There is no simultaneous multithreading (the feature Intel brands as Hyper-Threading): each core runs one thread, by design, for deterministic scheduling under agentic workloads.

The Arm AGI CPU in its dual-die chiplet configuration. Three SKUs cover different core-count and memory-bandwidth trade-offs.

Source: newsroom.arm.com
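The memory figures in the specification table can be sanity-checked with a little arithmetic. The sketch below assumes each of the 12 DDR5 channels carries 64 data bits (8 bytes) per transfer, which is the conventional server figure but is not stated in Arm's material, and it computes peak theoretical bandwidth only. The function names are illustrative.

```python
# Peak theoretical memory bandwidth for a 12-channel DDR5-8800 configuration.
# Assumption (not from Arm's published material): 8 bytes of data per channel
# per transfer, i.e. a 64-bit channel.

CHANNELS = 12
TRANSFERS_PER_SEC = 8800e6   # DDR5-8800 means 8800 mega-transfers per second
BYTES_PER_TRANSFER = 8       # assumed 64-bit data path per channel


def peak_bandwidth_gbs(channels, transfers_per_sec, bytes_per_transfer):
    """Return peak bandwidth in GB/s (decimal gigabytes)."""
    return channels * transfers_per_sec * bytes_per_transfer / 1e9


def per_core_gbs(total_gbs, cores):
    """Split the aggregate bandwidth evenly across all cores."""
    return total_gbs / cores


total = peak_bandwidth_gbs(CHANNELS, TRANSFERS_PER_SEC, BYTES_PER_TRANSFER)
print(f"aggregate: {total:.1f} GB/s")

# Core counts of the three SKUs listed in the table.
for cores in (136, 128, 64):
    print(f"{cores} cores: {per_core_gbs(total, cores):.1f} GB/s per core")
```

Under that assumption the aggregate comes out at about 845 GB/s, consistent with the ">800 GB/s" entry, and the per-core share runs from roughly 6 GB/s on the 136-core part to roughly 13 GB/s on the 64-core part, which matches the "6-13 GB/s per core" range in the table.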


Density Numbers

Arm claims an air-cooled 36kW rack holds 8,160 total cores. Liquid-cooled configurations scale to a 200kW rack with 45,696+ cores - which Arm says represents 2.03x the rack density of Nvidia's Vera CPU product. A $10 billion per gigawatt capex savings claim follows from that density advantage, though the calculation assumes a specific workload profile Arm hasn't published in full.
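The rack figures can be unpacked into sockets per rack and cores per kilowatt. This is a back-of-the-envelope sketch that assumes every socket is the 136-core flagship SKU, which Arm's density claim does not state explicitly; the "45,696+" figure is treated as exactly 45,696. The `rack_summary` helper is illustrative.

```python
# Back-of-the-envelope rack density from the figures Arm quotes.
# Assumption: every socket in the rack is the 136-core flagship part.

CORES_PER_SOCKET = 136

# (rack power in kW, total cores claimed by Arm)
RACKS = {
    "air-cooled": (36, 8160),
    "liquid-cooled": (200, 45696),
}


def rack_summary(power_kw, total_cores, cores_per_socket=CORES_PER_SOCKET):
    """Derive socket count and power density for one rack configuration."""
    sockets, remainder = divmod(total_cores, cores_per_socket)
    return {
        "sockets": sockets,
        "whole_sockets": remainder == 0,       # True if cores divide evenly
        "cores_per_kw": total_cores / power_kw,
        "rack_watts_per_socket": power_kw * 1000 / sockets,
    }


summaries = {name: rack_summary(kw, cores) for name, (kw, cores) in RACKS.items()}

for name, s in summaries.items():
    print(
        f"{name}: {s['sockets']} sockets, "
        f"{s['cores_per_kw']:.1f} cores/kW, "
        f"{s['rack_watts_per_socket']:.0f} W of rack power per socket"
    )
```

Both core counts divide evenly by 136, giving 60 sockets in the air-cooled rack and 336 in the liquid-cooled one. Cores per kilowatt is nearly identical in the two cases (about 227 versus 228), so on these numbers liquid cooling raises how much fits in one rack rather than the efficiency per watt.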

Fabrication is at TSMC on the N3P process. This is Arm's first time contracting TSMC to fab silicon it owns and sells directly, rather than licensing IP for others to manufacture.

Partners - and the Absence That Matters

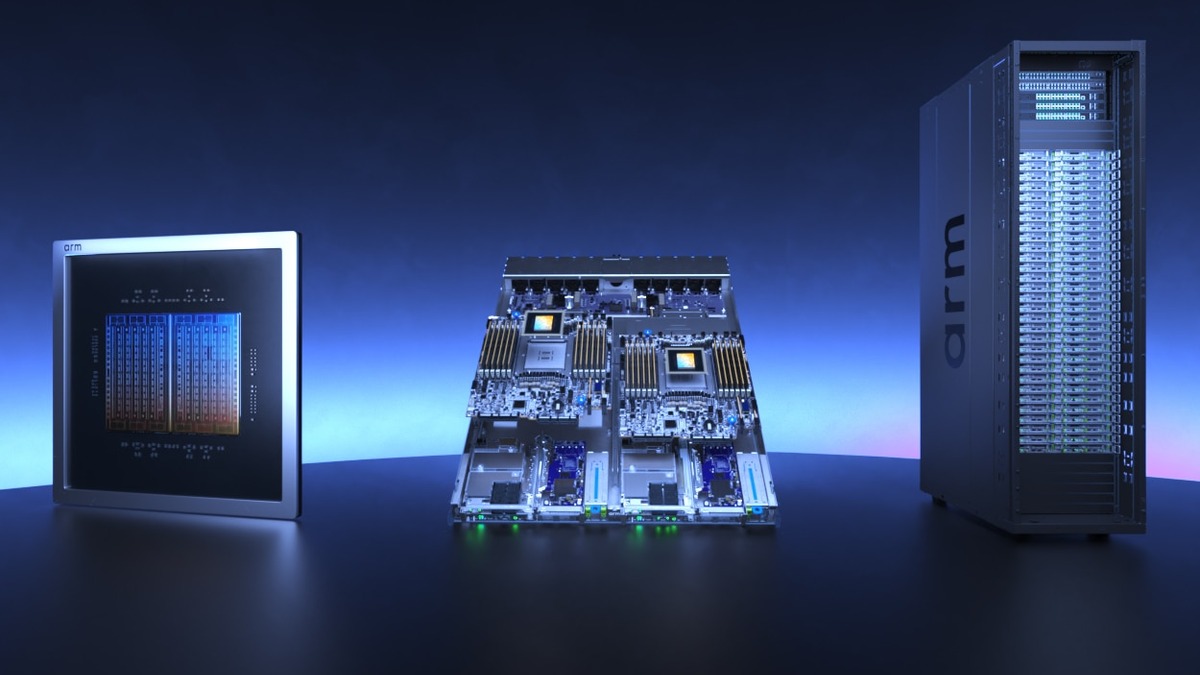

The launch partner list covers most major AI buyers. Meta is co-developer and first production customer, integrating the AGI CPU with its MTIA accelerators. OpenAI highlighted the chip's role in coordinating agentic tool calls and its power efficiency at scale. Cerebras, Cloudflare, SAP, F5, and SK Telecom signed on as customers. OEM server partners - Lenovo (the HR650a V3), Supermicro, ASRock Rack, and Quanta Computer - are building systems around it.

The ecosystem backer list is broader: AWS, Google, Microsoft, Nvidia, Broadcom, Marvell, Micron, Samsung, SK hynix, Red Hat, and TSMC. Each has reason to keep the Arm CPU platform viable even where it competes at the margin with their own products.

Qualcomm isn't on either list. The ongoing legal dispute - Arm attempted to cancel Qualcomm's architecture license in an earlier IP feud - casts a long shadow over the launch. Qualcomm has argued publicly that Arm's hardware pivot violates the terms of its neutrality as a licensor. Apple is absent from active customers, though Apple Silicon is built on Arm architecture and Apple appears in the broader ecosystem documentation.

Intel, AMD, and the x86 Incumbents

Intel and AMD aren't listed among customers or backers. Both hold dominant positions in enterprise software certification ecosystems - Oracle database licensing, SAP HANA, Windows Server workloads - that Arm hasn't historically addressed and can't easily unseat. AMD is launching up to 256-core EPYC CPUs later in 2026, which will compete directly in core-count comparisons.

Arm's counter-argument is architectural: no hyperthreading, TSMC 3nm process, and a memory subsystem tuned for AI workload access patterns rather than general-purpose compute. Whether enterprise buyers accept that trade-off outside of greenfield AI deployments is the central commercial question for the AGI CPU's first year.

The AGI CPU in a server board configuration. OEM partners including Lenovo, Supermicro, and Quanta Computer are building systems around it for H2 2026 availability.

Source: arm.com


The Financial Case

Haas projected $15 billion in annual AGI CPU revenue by FY2031, out of total company revenue of $25 billion that year. Current company revenue sits just above $4 billion, which makes this roughly a sixfold growth projection built on top of an already profitable business.
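To put the projection in perspective, the sketch below works out the growth multiple, the share of FY2031 revenue the AGI CPU would represent, and the compound annual growth rate the target implies. The roughly $4 billion base and the $25 billion and $15 billion targets come from the figures above; the number of years between the base and FY2031 is an assumption, so two plausible spans are shown.

```python
# Implied growth behind the FY2031 revenue projection (figures in $ billions).
# Assumption: the span between the ~$4B base year and FY2031 is 5 or 6 fiscal
# years, depending on which fiscal year the "current" figure refers to.

BASE_REVENUE = 4.0        # approximate current company revenue
TARGET_TOTAL = 25.0       # projected total company revenue, FY2031
TARGET_AGI_CPU = 15.0     # projected AGI CPU revenue, FY2031


def growth_multiple(target, base):
    """How many times larger the target is than the base."""
    return target / base


def implied_cagr(target, base, years):
    """Compound annual growth rate needed to go from base to target."""
    return (target / base) ** (1 / years) - 1


multiple = growth_multiple(TARGET_TOTAL, BASE_REVENUE)
agi_share = TARGET_AGI_CPU / TARGET_TOTAL

print(f"growth multiple: {multiple:.2f}x")
print(f"AGI CPU share of FY2031 revenue: {agi_share:.0%}")

for years in (5, 6):  # hypothetical spans, see assumption above
    rate = implied_cagr(TARGET_TOTAL, BASE_REVENUE, years)
    print(f"implied CAGR over {years} years: {rate:.1%}")
```

The multiple is 6.25x, in line with the "roughly sixfold" framing, and the AGI CPU would account for 60% of FY2031 revenue. Depending on the assumed span, the implied annual growth rate lands somewhere in the mid-30s to mid-40s percent.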

Analysts moved fast. HSBC upgraded Arm to Buy from Reduce and more than doubled its price target to $205 from $90 - one of the more dramatic single-day revisions in recent semiconductor coverage. Raymond James upgraded to Outperform with a $166 target, noting that Meta's involvement de-risks the commercial case substantially: tapping even a fraction of Meta's projected multi-gigawatt infrastructure capex could justify the revenue targets independently.

Production ramp is H2 2026. Meaningful revenue flows from FY2028, per Haas's own timeline. The chip is orderable now, but volume shipments won't arrive before late in the year.

"We think that the CPU is going to be fundamental to ultimately achieving AGI." - Mohamed Awad, EVP Cloud AI at Arm

The Amazon Trainium buildout showed how alternative AI chips require years of software tooling and compiler work before reaching meaningful enterprise adoption. Arm starts from a stronger ecosystem position - hundreds of companies already develop for Neoverse - but the optimization gap for the AGI CPU's specific agentic workload profile is real and unquantified.

What the Launch Does Not Tell You

The "2x vs. x86" performance claim is Arm's own benchmark against an unspecified x86 configuration. The advantage is specific to AI inference orchestration - coordinating accelerators, routing memory, managing I/O. For general-purpose compute, transactional databases, or x86-native workloads, the comparison doesn't apply, and Arm hasn't claimed otherwise.

The neutral licensor model also isn't fully abandoned. Arm's chip business and its IP licensing business will coexist, and the company depends on licensee royalties for its existing revenue base. How it manages the relationship with Apple and Qualcomm - both major licensees who now face a direct competitor at the table - will shape whether the IP business erodes as Arm moves into silicon.

No independently verified benchmarks exist yet. Performance and density numbers came from Arm's internal testing against configurations Arm selected. Third-party results from cloud providers, research labs, or benchmark bodies like MLPerf haven't been published. First-generation hardware from new market entrants often ships somewhat below initial spec-sheet claims - a risk the company hasn't addressed explicitly. The recent wave of CPU-based inference chips, from Alibaba's RISC-V C950 to Nvidia's own Vera, shows that claims and production reality can diverge notably in the first hardware generation.

Arm handed its licensees a difficult situation. The company that built the modern mobile and cloud chip industry by staying out of the silicon business has decided that agentic AI is worth breaking that commitment. The business logic is clear, the Meta co-development removed the most obvious commercial risk, and the 13% premarket move on March 25 shows markets found the numbers credible. Whether Qualcomm's lawyers, Apple's procurement teams, and AMD's roadmap leave room for the AGI CPU to hit $15 billion by FY2031 is a question that won't be answered before H2 2026.

Sources:

- Arm AGI CPU product page

- TechCrunch: Arm's first in-house chip in 35-year history

- Arm Newsroom: AGI CPU launch

- The Register: Arm AGI CPU analysis

- Tom's Hardware: Arm launches first data center CPU

- Back2Gaming: Arm debuts AGI CPU with Meta

- The Motley Fool: Arm debuts AI CPU

- The Stock Observer: Arm AGI CPU strategic analysis