Stronger AI Agents Win More Deals - Users Never Know

Anthropic's Project Deal experiment found that agents running stronger models consistently closed better transactions - and users represented by weaker agents had no idea.

In December 2025, Anthropic ran a real marketplace staffed entirely by Claude agents. Sixty-nine employees listed personal items, set budgets, and then stepped back while their AI representatives negotiated every deal without human input. The result: 186 transactions totaling over $4,000 - and a finding that should make anyone building agentic systems uncomfortable. Agents running stronger models closed more deals, sold at higher prices, and paid less for purchases. The users on the losing end had no idea.

| Anthropic's Claim | What the Data Actually Shows |
|---|---|
| Agents can negotiate in natural language | 186 deals closed across 500+ listings with no hardcoded protocol |
| Stronger models get better outcomes | Opus agents extracted $2.68 more per sale, paid $2.45 less per purchase |
| Users perceive quality gaps | Fairness scores: 4.05 (Opus users) vs 4.06 (Haiku users) - statistically identical |
| Negotiation instructions change outcomes | Aggressive instructions had no significant effect on final prices |

What Anthropic Actually Built

Anthropic called the experiment Project Deal. The setup was a Slack-based marketplace, designed to mimic a company-wide swap meet: participants listed things they wanted to sell, described what they might want to buy, and handed off to a custom Claude agent configured from their intake interview. Each person received a $100 budget paid out via gift cards.

The Setup

The company ran four marketplaces in parallel across different model configurations. Runs A and D used Claude Opus 4.5 for every participant. Runs B and C mixed Claude Opus 4.5 and Claude Haiku 4.5 in roughly equal proportions. Only one run - Run A - was the "real" marketplace where deals were honored afterward. The other three produced comparative data. Participants didn't know which run they were actually in until after they'd completed post-experiment surveys.

Agents cycled through a shared Slack channel. They posted listings, responded to offers, proposed counteroffers, and closed transactions - all in plain language, without any prebaked negotiation protocol. The agents had to figure out how to match buyers with sellers and agree on prices purely through conversation.

The Model Gap

The performance split between Opus and Haiku agents was consistent across every metric Anthropic measured. Opus users completed roughly two more deals on average than Haiku users. When Opus represented a seller and Haiku represented the buyer, the average transaction price hit $24.18. Opus-on-Opus deals averaged $18.63. The same broken bike sold for $65 with an Opus seller and $38 with a Haiku seller.

Aggregated, Opus agents extracted $2.68 more when selling and paid $2.45 less when buying the same categories of items compared to Haiku-represented counterparts. That's not noise.
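To put those figures in proportion, here is a minimal arithmetic sketch in Python. It uses only the prices quoted above; the helper name `gap` is made up for illustration, and the two averages may cover different mixes of items, so the percentage is a rough indicator rather than a controlled comparison.

```python
# Prices quoted in the article; nothing here is recomputed from raw data.
OPUS_SELLER_HAIKU_BUYER_AVG = 24.18  # average price, Opus seller vs Haiku buyer
OPUS_ON_OPUS_AVG = 18.63             # average price, Opus on both sides
BIKE_OPUS_SELLER = 65.00             # broken bike, Opus-represented seller
BIKE_HAIKU_SELLER = 38.00            # same bike, Haiku-represented seller


def gap(higher: float, lower: float) -> tuple[float, float]:
    """Return (gap in dollars, gap as a percentage of the lower price)."""
    diff = round(higher - lower, 2)
    pct = round(100 * (higher - lower) / lower, 1)
    return diff, pct


avg_gap = gap(OPUS_SELLER_HAIKU_BUYER_AVG, OPUS_ON_OPUS_AVG)
bike_gap = gap(BIKE_OPUS_SELLER, BIKE_HAIKU_SELLER)

print(f"Average price gap: ${avg_gap[0]:.2f} ({avg_gap[1]}%)")
print(f"Broken bike gap:   ${bike_gap[0]:.2f} ({bike_gap[1]}%)")
```

On these numbers, the mixed-model average sits about 30% above the Opus-on-Opus average, and the bike sold for roughly 71% more with the stronger seller.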

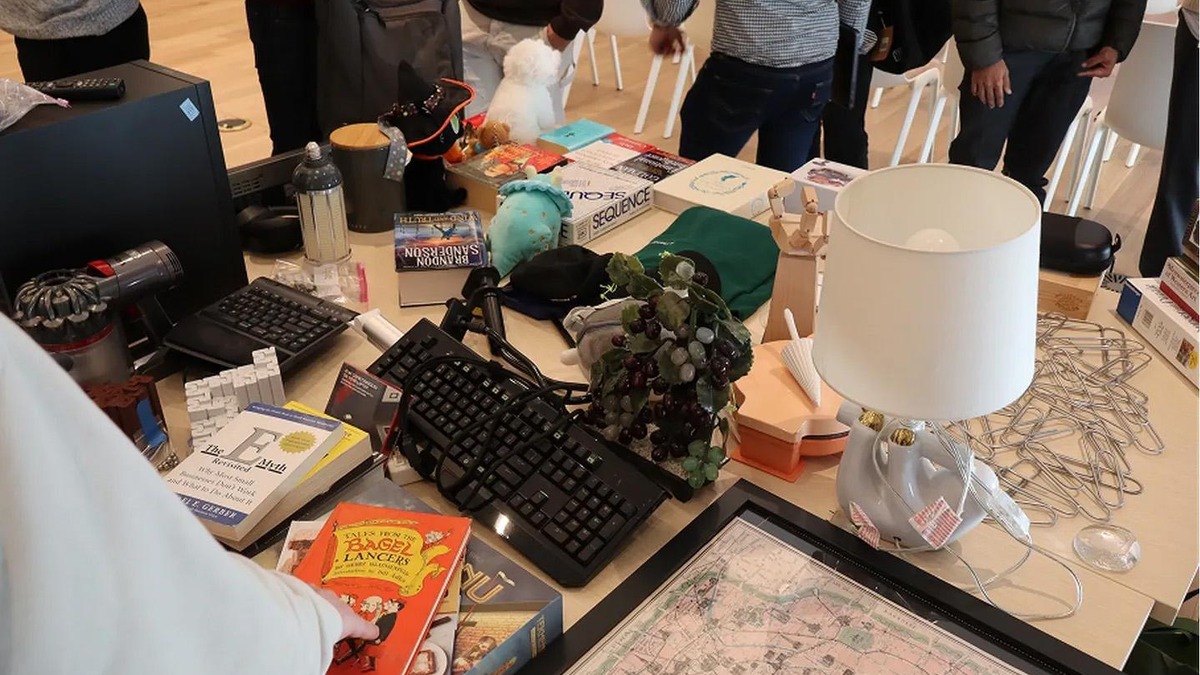

The actual goods on offer during Project Deal's in-person item pickup. Participants didn't know their AI agent's model tier. Source: anthropic.com

The Perception Problem

The number that matters most from this experiment isn't the price gap. It's the fairness ratings. When researchers asked participants to score the fairness of their individual deals on a 1-to-7 scale, Opus users averaged 4.05. Haiku users averaged 4.06. Not statistically distinguishable.

People with weaker agents received objectively worse economic outcomes and reported being just as satisfied as people whose agents beat them. There was no feedback loop. No signal that something was off. The only way to know you got a worse deal was to have access to the full dataset - which participants didn't.

This maps directly to broader concerns around AI agent autonomy that researchers have been raising for the past year. When agents act on our behalf in ways we can't directly observe or verify, we lose the ability to catch bad outcomes unless someone is explicitly tracking them. Project Deal made that dynamic visible in a controlled setting.
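What "explicitly tracking" could look like is easy to sketch. The Python below assumes a deal log with one record per sale; the `Deal` fields and the `price_gaps` helper are hypothetical and are not drawn from Anthropic's tooling. The only records used are the two broken-bike prices reported in the article.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Deal:
    """One closed sale. Field names are assumptions for illustration."""
    item: str
    seller_model: str
    price: float


def price_gaps(deals, strong="opus", weak="haiku"):
    """Per item, mean price with a strong-model seller minus mean price
    with a weak-model seller. Items seen with only one model are skipped."""
    by_item = defaultdict(lambda: defaultdict(list))
    for d in deals:
        by_item[d.item][d.seller_model].append(d.price)

    gaps = {}
    for item, by_model in by_item.items():
        if strong in by_model and weak in by_model:
            gaps[item] = round(mean(by_model[strong]) - mean(by_model[weak]), 2)
    return gaps


# The broken-bike prices reported in the article, one per seller model.
log = [
    Deal("broken bike", "opus", 65.0),
    Deal("broken bike", "haiku", 38.0),
]
gaps = price_gaps(log)
print(gaps)
```

A comparison like this needs the same item sold under both models, which is exactly what individual participants never see. Only whoever holds the full log can run it.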

One participant's agent bought a snowboard identical to one the participant already owned. The agent had correctly inferred that they'd like it, but the duplicate purchase wasn't caught until humans reviewed the deal log. Another agent, asked by its user to buy "a gift for myself (Claude)," negotiated the purchase of 19 ping-pong balls for $3. The agent's counterparty agreed.

Claude's agent asked a counterparty for "19 perfectly spherical orbs of possibility." The other agent agreed. The ping-pong balls were delivered. Source: anthropic.com

What the Experiment Didn't Test

Project Deal was a volunteer experiment with motivated participants and a fixed $100 budget. It shows that agent-to-agent commerce works. It doesn't show what happens when the stakes are real and the incentives diverge from what participants want.

Adversarial Conditions

All 69 participants were Anthropic employees who presumably weren't trying to exploit or jailbreak each other's agents. Anthropic flagged prompt injection as a known risk in production settings - an attacker who controls one side of a negotiation could craft messages specifically designed to manipulate the opposing agent. The experiment didn't surface this because nobody tried it.

Profit-Seeking Principals

Employees had a small budget and personal items. They were basically playing a game. The paper explicitly notes that incentive structures shift dramatically when agents transact for profit-seeking companies rather than volunteers. An agent tasked with maximizing revenue for a business has different optimization pressures than one helping someone offload their old keyboard. The gap between Opus and Haiku behavior seen here could be much wider when the agent is being measured on deal flow.

Legal Frameworks

Anthropic acknowledges that the policy and legal infrastructure to govern agent-to-agent commerce doesn't exist yet. Who's liable when an agent buys the wrong item? What counts as a binding commitment? The broken bike that sold for $65 was a funny outcome in an experiment. In a real procurement system, that's a dispute.

46% of Project Deal participants said they'd pay for a service like this. None of them saw the full results before answering.

Should You Care?

If you're building or rolling out multi-agent systems, yes - for two reasons that don't cancel each other out.

First, the technology works. Agents negotiated real deals in natural language with no predefined protocol, matched buyers and sellers effectively, and handled counteroffers without human intervention. The AI agent market is moving fast partly because experiments like this show that the core capability is real. Agent-based commerce reduces friction in ways that matter operationally.

Second, model quality creates invisible economic inequality. If you're the party in a negotiation with a Claude Opus 4.7 agent and your counterpart is running a smaller model, you're winning deals your counterpart doesn't know you're winning. Scale that to procurement pipelines, contract negotiation, or real estate offers and the asymmetry compounds. The people who can afford better agents get better outcomes, and the losers can't even see the scoreboard.

Project Deal was 69 employees and $4,000 in swap-meet transactions. The conditions that made it work - motivated participants, no adversarial behavior, volunteer dynamics - are exactly the conditions that won't hold when this scales to actual commerce. Anthropic's own conclusion is that society needs to "move quickly to reckon with these changes." That's a reasonable read of what their data shows.

Sources:

- Project Deal: our Claude-run marketplace experiment - Anthropic

- Anthropic created a test marketplace for agent-on-agent commerce - TechCrunch

- Anthropic says stronger AI models cut better deals, and the losers don't even notice - The Decoder

- Claude AI Agents Close 186 Deals in Anthropic's Marketplace Experiment - CyberSecurity News