Anthropic Launches Managed Agents - Runs Your AI for You

Anthropic released Claude Managed Agents in public beta today, a fully managed platform that handles sandboxing, state, and tool execution so developers can skip building agent infrastructure from scratch.

Anthropic dropped Claude Managed Agents into public beta today - a hosted platform that takes over the operational scaffolding of running autonomous AI agents so developers don't have to build it themselves.

The announcement lands as production agent deployments are increasingly hitting a predictable wall: the model works in a notebook, then six weeks disappear managing containers, state recovery, credential isolation, and error loops before anything ships. Claude Managed Agents is Anthropic's bet that the infrastructure layer should be a service, not a side project.

TL;DR

- Public beta available today for all Claude API users via a beta header

- $0.08 per session hour on top of standard token costs

- Anthropic manages sandboxing, state persistence, tool execution, and error recovery

- Runs on Anthropic infrastructure only - no AWS Bedrock, no Google Vertex support

- Agent-spawning, parallel coordination, and memory management are on the waitlist

What Gets Managed

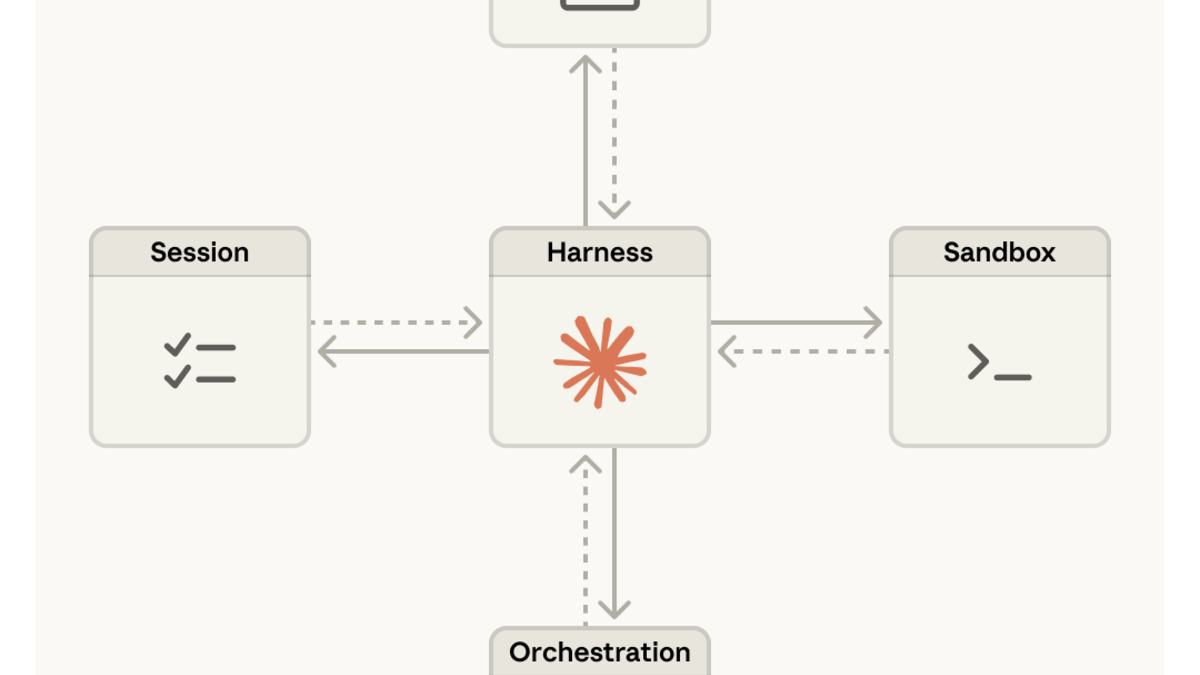

The platform splits a running agent into three discrete components: the Brain (Claude plus harness logic), the Hands (sandboxes and tool execution), and the Session (a durable event log stored outside Claude's context window).

Brain, Hands, Session

The separation matters more than it sounds. In a traditional setup, if the harness process crashes mid-task, the agent's state goes with it. With Claude Managed Agents, a failed harness can reboot using a wake(sessionId) call and reconstruct the full event history via getSession(). The session log is append-only and lives outside of Claude's context, so it survives harness failures completely.
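The recovery path can be sketched in a few lines. This is a minimal in-memory illustration, not Anthropic's implementation: `SessionStore`, `Harness`, and the snake_case `wake` and `get_session` functions are hypothetical stand-ins for the wake(sessionId) and getSession() calls named above, and a real store would be durable rather than a Python dict.

```python
from dataclasses import dataclass
from typing import Any, Dict, List, Tuple


@dataclass(frozen=True)
class Event:
    index: int
    kind: str      # e.g. "user_message", "tool_call", "tool_result"
    payload: Any


class SessionStore:
    """Hypothetical store: one append-only event list per session."""

    def __init__(self) -> None:
        self._logs: Dict[str, List[Event]] = {}

    def append(self, session_id: str, kind: str, payload: Any) -> Event:
        log = self._logs.setdefault(session_id, [])
        event = Event(index=len(log), kind=kind, payload=payload)
        log.append(event)  # events are only ever added, never edited
        return event

    def get_session(self, session_id: str) -> Tuple[Event, ...]:
        # Hand back an immutable copy so callers cannot rewrite history.
        return tuple(self._logs.get(session_id, []))


class Harness:
    """Keeps only in-memory state; anything durable lives in the store."""

    def __init__(self, store: SessionStore, session_id: str) -> None:
        self.store = store
        self.session_id = session_id
        self.history: List[Event] = []

    def record(self, kind: str, payload: Any) -> None:
        self.history.append(self.store.append(self.session_id, kind, payload))


def wake(store: SessionStore, session_id: str) -> Harness:
    """Start a fresh harness and rebuild its history from the session log."""
    harness = Harness(store, session_id)
    harness.history = list(store.get_session(session_id))
    return harness
```

If the first harness object is lost after recording two events, `wake(store, session_id)` returns a new harness whose `history` contains the same two events, because the log never lived inside the harness in the first place.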

Containers - the "Hands" - are provisioned on demand rather than kept warm. Anthropic says this lazy provisioning reduces time-to-first-token by roughly 60% at the median and over 90% at the 95th percentile. Failed containers are treated as recoverable errors passed back to Claude for retry logic, not as terminal failures that kill the whole run.
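The "recoverable error" idea is easier to see in code. The sketch below is an assumption-laden illustration: `ContainerError`, `ToolResult`, and `run_with_retries` are hypothetical names, and the simple retry loop stands in for whatever decision Claude would actually make when it sees the failure.

```python
from dataclasses import dataclass
from typing import Callable, List


class ContainerError(RuntimeError):
    """Hypothetical error raised when a sandbox container dies."""


@dataclass
class ToolResult:
    content: str
    is_error: bool = False


def run_tool(execute: Callable[[], str]) -> ToolResult:
    """Turn a container failure into data instead of letting it end the run."""
    try:
        return ToolResult(content=execute())
    except ContainerError as exc:
        return ToolResult(content=f"container failed: {exc}", is_error=True)


def run_with_retries(execute: Callable[[], str], max_attempts: int = 3) -> List[ToolResult]:
    """Stand-in for the model's retry decision: try again until success or limit."""
    results: List[ToolResult] = []
    for _ in range(max_attempts):
        result = run_tool(execute)
        results.append(result)
        if not result.is_error:
            break
    return results
```

The point of the design is that a failed container produces an ordinary result with `is_error=True`, which can be logged and reasoned about like any other tool output.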

Credential Isolation

The security design is worth noting. Credentials never exist in the sandbox environments where Claude produces and runs code. Two patterns handle authentication:

- Resource-bundled auth: Git tokens clone repositories during sandbox initialization; subsequent push/pull operations work without re-exposing the credential.

- Vault-based auth: OAuth tokens live in an external secure vault; Claude calls MCP tools through a proxy that fetches credentials at request time.

This prevents the obvious failure mode where a misconfigured prompt or a model reasoning error leaks a token into produced code that gets executed in a container Claude also controls.
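A rough sketch of the vault-based pattern, assuming a proxy that sits between the sandbox and the upstream tool server. `Vault`, `ToolRequest`, and `CredentialProxy` are hypothetical names for illustration; the article does not describe Anthropic's actual interfaces.

```python
from dataclasses import dataclass
from typing import Callable, Dict


class Vault:
    """Hypothetical secret store, reachable only by the proxy."""

    def __init__(self, secrets: Dict[str, str]) -> None:
        self._secrets = dict(secrets)

    def fetch(self, name: str) -> str:
        return self._secrets[name]


@dataclass(frozen=True)
class ToolRequest:
    """What the sandbox side produces: a tool name and arguments, no credentials."""
    tool: str
    arguments: Dict[str, str]


# The upstream tool server receives the request plus headers built by the proxy.
Upstream = Callable[[ToolRequest, Dict[str, str]], str]


class CredentialProxy:
    def __init__(self, vault: Vault, upstream: Upstream) -> None:
        self._vault = vault
        self._upstream = upstream

    def call(self, request: ToolRequest) -> str:
        # The token is fetched at request time and attached outside the sandbox.
        token = self._vault.fetch(request.tool)
        headers = {"Authorization": f"Bearer {token}"}
        return self._upstream(request, headers)
```

Code running in the sandbox only ever constructs a `ToolRequest` and reads the response string, so there is no token in its memory or filesystem for generated code to leak.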

Context Without Compaction

Rather than compacting or irreversibly trimming Claude's context when sessions run long, the session log lets harnesses programmatically fetch positional slices of the event stream and transform them before passing them to Claude. Anthropic's engineering post describes this as enabling "context organization optimized for prompt caching while maintaining full historical recovery capability". In practice, that means long-running tasks stay coherent without the model losing track of what happened two hours ago.
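One way to picture slice-and-transform, as opposed to destructive compaction, is the sketch below. The function names and the dict-based event shape are assumptions made for illustration, and the `summarize` callable is supplied by the caller rather than being anything Anthropic documents.

```python
from typing import Callable, Dict, List, Optional, Sequence

Event = Dict[str, str]  # assumed shape: {"kind": ..., "text": ...}


def get_events(log: Sequence[Event], start: int, end: Optional[int] = None) -> List[Event]:
    """Fetch a positional slice of the event stream without modifying it."""
    return list(log[start:end])


def build_context(
    log: Sequence[Event],
    keep_recent: int,
    summarize: Callable[[Sequence[Event]], str],
) -> List[Event]:
    """Summarize older events, keep recent ones verbatim, leave the log intact."""
    split = max(len(log) - keep_recent, 0)
    older = get_events(log, 0, split)
    recent = get_events(log, split)
    context: List[Event] = []
    if older:
        context.append({"kind": "summary", "text": summarize(older)})
    context.extend(recent)
    return context
```

Because `build_context` only reads from the log, a later call can use a different split point or a different transform and still draw on the full history.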

How to Access It

Three entry points are available:

- Claude Console - web-based interface for building and testing agents without code

- Claude Code - scripting environment for developers who want full programmatic control

- New CLI - command-line tool designed for CI/CD pipelines, with versioning and environment promotion built in

The service activates via a beta header on standard Claude API calls. Quickstart documentation is at platform.claude.com.
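As a rough sketch of what opting in via a header looks like: Anthropic's API reads beta opt-ins from the `anthropic-beta` request header. The specific flag for Managed Agents isn't given in this article, so `BETA_FLAG` below is a placeholder to be replaced with the value from the quickstart documentation, and `build_headers` is a hypothetical helper.

```python
from typing import Dict

# Placeholder only: the article does not state the real beta flag value.
BETA_FLAG = "<managed-agents-beta-flag-from-docs>"


def build_headers(api_key: str, beta_flag: str = BETA_FLAG) -> Dict[str, str]:
    """Assemble request headers for a Claude API call with a beta opt-in."""
    return {
        "x-api-key": api_key,
        "anthropic-version": "2023-06-01",
        "anthropic-beta": beta_flag,
        "content-type": "application/json",
    }
```

The endpoints and request bodies for Managed Agents are not covered here, so this only shows where the opt-in lives in the request.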

Anthropic's earlier Claude Code Auto Mode research preview gave developers a taste of reduced interruptions during long agent runs. Managed Agents extends that premise to the full infrastructure layer - not just fewer prompts, but fewer infrastructure decisions altogether.

Anthropic's three-layer architecture separates the model (Brain), execution environments (Hands), and session state - each component can fail independently without losing the overall task.

Source: anthropic.com

Who Is Using It

Anthropic listed five early adopters at launch. Rakuten built Slack and Teams agents for sales, marketing, and finance workflows and rolled out in a week. Sentry is running debugging agents that autonomously create patches and open pull requests. Notion is delegating workspace tasks. Asana and Vibecode round out the initial cohort.

The Rakuten timeline is the one that cuts against the usual "six months to production" complaint for enterprise agent projects. Whether that's typical or exceptional depends on how much existing infrastructure each team brought to the table - Rakuten didn't disclose details.

Anthropic's earlier study on agent autonomy showed Claude agents were already running for 45 minutes without human input in regular API usage. Managed Agents provides the durable infrastructure to support sessions that run considerably longer than that.

Requirements and Compatibility

| Feature | Status |
|---|---|
| Claude Opus 4.6 support | Available |
| MCP server integration | Available |
| Custom tool definitions | Available |
| AWS Bedrock | Not supported |
| Google Vertex AI | Not supported |
| Agent-spawning / parallel coordination | Waitlist |
| Memory management | Waitlist |
| Budget / cost caps | Not announced |

The infrastructure exclusivity is the most limiting factor for teams already invested in multi-cloud deployments. If your organization runs on AWS and uses Bedrock for everything else, Managed Agents doesn't fit that stack yet.

The session event log stores every agent thought, tool call, and result outside Claude's context window - enabling full state reconstruction after harness failures.

Source: anthropic.com

Pricing

The cost structure is $0.08 per session hour plus standard token rates.

The session fee isn't the expensive part. Token costs dominate at any meaningful workload, and the $0.08/hour charge is modest relative to what a two-hour agentic session will consume in input and output tokens.
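A quick back-of-the-envelope calculation shows the proportions. Only the $0.08 per session hour figure comes from the announcement; the token volumes and per-million-token rates in the usage note are illustrative placeholders, not quoted prices, so substitute the current rates for whichever model you run.

```python
from typing import Dict

SESSION_RATE_PER_HOUR = 0.08  # USD, the session fee cited in this article


def session_cost(
    hours: float,
    input_tokens: int,
    output_tokens: int,
    input_rate_per_mtok: float,   # USD per million input tokens (caller-supplied)
    output_rate_per_mtok: float,  # USD per million output tokens (caller-supplied)
) -> Dict[str, float]:
    """Split a session's cost into the managed-layer fee and token spend."""
    session_fee = hours * SESSION_RATE_PER_HOUR
    token_cost = (
        (input_tokens / 1_000_000) * input_rate_per_mtok
        + (output_tokens / 1_000_000) * output_rate_per_mtok
    )
    return {
        "session_fee": round(session_fee, 4),
        "token_cost": round(token_cost, 4),
        "total": round(session_fee + token_cost, 4),
    }
```

With placeholder inputs of a two-hour session, 2,000,000 input tokens at $5 per million, and 200,000 output tokens at $25 per million, the session fee comes to $0.16 against $15.00 in tokens, roughly 1% of the total.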

What you're paying for with the session fee is the operational layer: state persistence, container lifecycle, credential handling, and monitoring. For teams that would otherwise spend engineer time building that layer, $0.08/hour is cheap. For teams that already have a working agent infrastructure, it's an unnecessary addition.

Where It Falls Short

Single-provider lock-in. Anthropic is explicit that the service runs on their infrastructure only. There's no timeline for Bedrock or Vertex support, and neither AWS nor Google was mentioned in the launch. Organizations with multi-cloud or cloud-agnostic policies can't use this without carving out an exception.

Missing features are behind a waitlist. Agent-spawning - where one agent coordinates parallel sub-agents - and memory management are both listed as "research preview" with a waitlist. These are the capabilities that make multi-step agentic workflows actually scale. Launching without them available is fine, but calling it a complete managed agent platform is a stretch until they ship.

No budget controls. There's no mention of per-session cost caps, budget alerts, or spending limits. Agents that run into unexpected loops or retry storms will keep accumulating token costs. For teams launching agents that touch production systems, this is a real operational risk.

Context management is opaque. The engineering post describes how the session log enables flexible context retrieval, but what developers actually control versus what Anthropic manages internally isn't fully documented in the public release. That matters when you're debugging an agent that's making bad decisions based on stale context.

For teams building agents from scratch, the value is clear: skip six weeks of infrastructure plumbing. For teams with existing production deployments, the calculus is less obvious. The architecture is solid, the security model is thoughtful, and the pricing for the managed layer is reasonable. The hard question is whether single-provider lock-in is acceptable at whatever scale you're running.

If you're building your first production agent deployment, the best agent sandbox tools guide covers what you'd be giving up by relying on managed infrastructure versus running your own. The comparison is worth reading before committing to either direction.
