AGI in 2026? Andrew Ng Has Some Bad News for Musk

Andrew Ng says AGI is decades away and the real AI bubble risk is in the training layer - not inference. We examine both claims against the data.

"We are closer than before, yet many decades away from an AI that matches human intelligence."

- Andrew Ng, in a February 2026 interview with Fast Company

That single sentence, buried in a longer conversation about AI economics, has been doing the rounds in AI research circles all week. Coming from the co-founder of Google Brain, former chief scientist at Baidu, and arguably the person who made machine learning accessible to more engineers than anyone alive, it lands differently than the usual skeptic take.

Ng isn't some detached philosopher worried about science fiction. He's been building and funding AI systems for two decades. When he says AGI is "many decades away," he's making a technical claim - and simultaneously targeting the infrastructure investment thesis that has driven trillions of dollars into AI training clusters. Both claims deserve scrutiny.

TL;DR

| What They Said | What We Found |
|---|---|
| Musk: AGI arrives in 2026; xAI and Tesla are leading | Serial deadline misses: AGI by 2025 (2024 prediction), by 2026 (2025 prediction) |
| Ng: Real bubble risk is in training, not inference | Confirmed: 2026 is the first year inference cloud spend surpasses training |
| Ng: Training complexity makes AGI "decades away" | Frontier models hit IMO gold-medal math - but still hallucinate, fail at multi-step tasks |
| Ng: Inference demand is massive and will keep growing | Inference projected at 67% of all AI compute by 2026, 75% by 2030 |

The Claim

Musk's Side of the Equation

Elon Musk has been very consistent in his approach to AGI timelines - consistently wrong, and consistently undeterred. In 2024, he told xAI staff the company could achieve AGI "as early as 2026," with $20-30 billion per year in available funding. When 2025 arrived without AGI, he declared we had "entered the Singularity" and that "2026 is the year of the Singularity."

As recently as March 4 of this year, Musk was telling Tesla shareholders the automaker would be "one of the companies to make AGI" - in "humanoid/atom-shaping form" through its Optimus robot program. This arrived in the same week Tesla reported its first-ever revenue decline (down roughly 3% to $94.8 billion), deliveries falling 9% year-over-year, and BYD overtaking Tesla as the world's largest EV seller.

What Ng Actually Said

Ng's position is more nuanced than a simple "no." Speaking to Fast Company in late February, he accepted that AI capabilities are improving quickly. But he drew a sharp line between task-specific performance and the broader claim that training compute alone will deliver AGI.

"I look at how complex the training recipes are and how manual AI training and development is today," Ng said in a separate December 2025 interview with NBC, "and there's no way this is going to take us all the way to AGI just by itself."

His second claim was equally pointed: the real bubble risk isn't in AI applications, where he sees solid demand, but in the training layer - the multi-billion-dollar compute investments that produce foundation models. If open-source alternatives continue closing the gap with proprietary models, some of those training bets may never yield a return.

The Evidence

On AGI: What the Benchmarks Actually Show

The honest picture here is complicated. Frontier models made astonishing gains in 2025. They can now achieve gold-medal performance on International Mathematical Olympiad problems - a benchmark that stumped the entire field as recently as three years ago. On graduate-level science benchmarks, they exceed expert human performance on multiple subsets.

But the International AI Safety Report 2026 - published February 3rd by over 100 AI experts under Turing Award winner Yoshua Bengio - adds important texture. The report found that performance "remains uneven across tasks and domains." Specifically: models produce hallucinations, degrade on tasks requiring many sequential steps, and show lower performance in unfamiliar languages or cultural contexts.

These aren't minor edge cases. A system that scores 90% on HumanEval but fails at multi-step planning, or that invents court citations when asked to cite real law, is not approaching the "any intellectual task a human can perform" bar that Ng uses as his AGI definition.

The math olympiad AI leaderboard captures the capability gains well. But benchmark performance and general cognitive capability are different claims, and Ng's critique is that the industry keeps conflating them.

On the Training Bubble: The Numbers Are Surprising

This is where Ng's argument finds the most support. For the first time in the history of commercial AI, inference spend in cloud AI services is set to surpass training spend in 2026.

Deloitte estimates inference made up roughly half of all AI compute in 2025. In 2026, that figure rises to approximately two-thirds. Brookfield's longer-range projections put inference at 75% of all AI compute needs by 2030. In dollar terms: AI cloud infrastructure spending is projected to hit around $37.5 billion in 2026, with an estimated $20.6 billion going to inference and a smaller slice to training - the first time inference comes out ahead.
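The dollar split quoted above is easy to sanity-check. The sketch below is illustrative only: it uses the two figures from the paragraph and assumes, for simplicity, that everything not spent on inference counts as the "smaller slice" (the cited estimates may carve up the remainder differently). The function and variable names are our own.

```python
# Figures quoted in the paragraph above (USD billions, 2026 estimates).
TOTAL_AI_CLOUD_SPEND = 37.5
INFERENCE_SPEND = 20.6


def spend_split(total: float, inference: float) -> dict[str, float]:
    """Return the inference share and the residual.

    Assumption: the residual (total minus inference) stands in for
    training and any other non-inference spend; the source does not
    break it down further.
    """
    remainder = total - inference
    return {
        "inference_share": inference / total,
        "remainder": remainder,
        "remainder_share": remainder / total,
    }


split = spend_split(TOTAL_AI_CLOUD_SPEND, INFERENCE_SPEND)
print(f"Inference share of spend: {split['inference_share']:.1%}")
print(f"Non-inference remainder: ${split['remainder']:.1f}B "
      f"({split['remainder_share']:.1%})")
```

On these numbers inference takes a bit over half of cloud AI spend, which is lower than the roughly two-thirds share of compute cited for the same year. The two figures measure different things (dollars spent in cloud services versus compute consumed), so they need not match.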

This isn't a trivial data point. It suggests the commercial value of AI is increasingly in using pre-trained models at scale, not in the act of training new frontier models from scratch. If that's true, it creates a structural question for the companies spending hundreds of billions on training clusters: will the frontier always justify the training cost, or will the curve eventually flatten?

Ng's specific concern is the open-source pressure on that curve. If models like Qwen 3.5 or DeepSeek can get within striking distance of proprietary frontier models at a fraction of the training cost - or by distilling from the frontier models themselves - then the ROI on expensive from-scratch training investments looks shakier.

What the Counterargument Looks Like

It'd be easy to read Ng's comments as dismissive of the frontier effort, but that's not quite right. "Inference demand is massive, and I'm very confident inference demand will continue to grow," he told Fast Company. His concern isn't that AI infrastructure spending is wrong in total - it's that the training layer specifically may be where the speculative froth builds up.

The counterargument runs like this: inference only exists because of training. Every trillion tokens that run through inference at scale today are only possible because someone made a large, expensive training bet. The companies that skip that investment and rely completely on distillation or open-source are free-riding on the economics of frontier research. At some point, if no one trains frontier models, there's nothing to distill from.

Sam Altman, Dario Amodei, and Demis Hassabis have all staked their companies on the thesis that the frontier keeps moving, that each generation of models opens new applications that justify the next generation's training cost. That thesis isn't obviously wrong. The overall LLM rankings for February 2026 show frontier models still hold a meaningful capability lead over open alternatives in complex reasoning tasks.

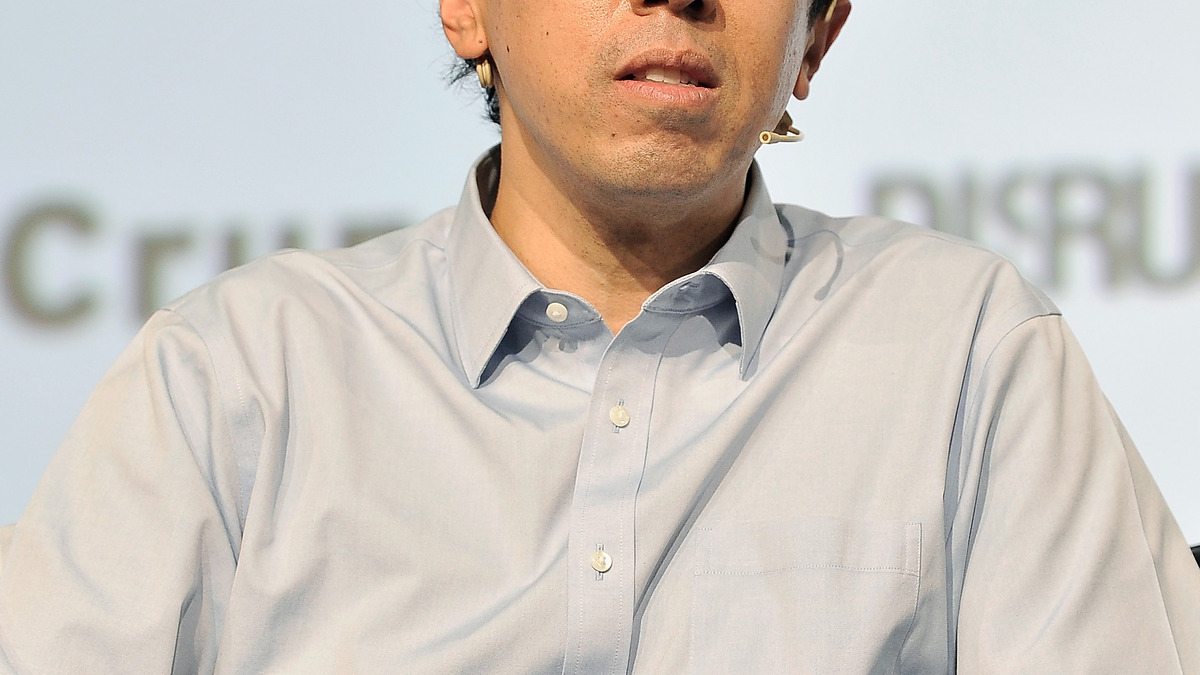

Andrew Ng has been one of AI's most prominent voices calling for calibrated expectations alongside genuine progress in AI capabilities.


What They Left Out

Ng's framing has a few gaps worth naming.

First, the AGI definition problem cuts both ways. Ng insists on the "any intellectual task" bar, which is almost certainly decades away. But Altman and others have been quietly using softer definitions - AI that can do "most economically valuable work" or AI that "can do science autonomously." By those definitions, some researchers believe the window is under a decade. Calling those definitions "misleading" doesn't make them irrelevant.

Second, Ng has his own history of calibration errors. Google Brain, which he co-founded, was an early bet that deep learning would scale to broad capability. The bet was right, but not on the timeline or in the way he initially anticipated. Being right eventually about AI's power doesn't mean the specific prediction mechanism was sound.

Third, the training bubble argument depends heavily on open-source dynamics that are themselves uncertain. If governments (the US, EU, or China) restrict open-weight model releases for national security reasons - a scenario that's actively being debated right now in Washington - the open-source pressure on proprietary training ROI weakens considerably. The reasoning benchmarks leaderboard still shows a measurable gap between the true frontier and the best open models on complex tasks.

| Claim | Reality Check | Verdict |
|---|---|---|
| AGI arrives in 2026 (Musk) | Musk predicted AGI by 2025 in 2024; timeline shifts annually | Doesn't hold |
| Training layer is the bubble (Ng) | Inference now surpasses training in cloud AI spend; open-source closing gap | Largely holds |
| AGI requires "decades" by real definition (Ng) | Models still hallucinate and fail at multi-step tasks; but capabilities accelerating faster than expected | Partially holds |
| Inference demand is safe from the bubble (Ng) | Enterprise and consumer deployment growing; robust across both proprietary and open models | Holds |

Ng's skepticism about the AGI timeline is probably the most defensible technical position in this debate. The gap between "better than any human on this specific test" and "capable of any intellectual task a human can perform" is wide, and current training recipes aren't obviously going to close it without significant architectural or methodological breakthroughs that don't yet exist on the roadmap.

His training bubble thesis is more speculative, but the data is moving in his direction. The first year inference beats training in cloud AI spend is a real milestone. Whether it signals a structural shift or just the natural maturation of a technology adoption cycle is the question that'll define which AI bets pay off.

What Musk's serial deadline-setting shows, above all, is that confident timelines in AI research are a form of marketing. The technology advances. The deadlines don't.

Sources:

- Davos 2026: Andrew Ng Says Fears of AI-Driven Job Losses Are Exaggerated - Storyboard18

- AI Pioneer Andrew Ng Expects Decades-Long Wait For AGI - PYMNTS

- Andrew Ng Says AI Is Limited, Won't Replace Humans Soon - NBC News

- Musk Claims Tesla Will Make AGI - Electrek

- International AI Safety Report 2026 - AIGL Blog

- Where Is AI Spending Going in 2026 - Market Clarity

- Elon Musk Predicts AGI by 2026 - Gizmodo

- xAI AGI by 2026 - i10x.ai

- AI Capex 2026: The $690B Infrastructure Sprint - Futurum

- International AI Safety Report 2026 - Inside Privacy