Anti-AI Suspect Throws Molotov at Altman's Home

A suspect linked to the Pause AI movement threw a homemade incendiary at OpenAI CEO Sam Altman's San Francisco home, then threatened to burn the company's headquarters.

At 3:40 in the morning on April 10, a man walked up to Sam Altman's North Beach home in San Francisco and threw a lit Molotov cocktail at the front gate. The bottle hit the exterior and caught fire. No one was hurt. But the attacker wasn't finished: roughly an hour later, he appeared outside OpenAI's Mission Bay headquarters carrying a jug he claimed contained kerosene and threatened to burn the building down.

By Friday afternoon, Daniel Alejandro Moreno-Gama, 20, had been booked into San Francisco County Jail on suspicion of attempted murder, criminal threats, and possession or manufacture of a destructive device. San Francisco police confirmed the gate fire was quickly extinguished. The DA's office said it would decide on charges the following week, weighing local prosecution against possible federal referral.

The details of this case matter, because Moreno-Gama wasn't a random vandal. He left a paper trail: six lengthy Substack essays, months of Discord posts under the handle "Butlerian Jihadist" - a reference to Dune's fictional anti-machine holy war - and a stated belief that artificial intelligence would exterminate humanity. He was, according to investigators and reporting by The Decoder, apparently a follower of the Pause AI movement. The group had removed him from its server months earlier after he called for action.

The Attack - Police responded to a fire alarm at Altman's residence shortly after 3:40 a.m. The homemade device struck the exterior gate and ignited it. Security personnel extinguished the flames before they spread. Altman and his family weren't physically harmed. Moreno-Gama fled on foot.

The Threat at OpenAI HQ - About an hour after the attack, an individual matching Moreno-Gama's description showed up at OpenAI's San Francisco office. He was holding a container he claimed was filled with kerosene and made verbal threats to destroy the building. Police arrived and took him into custody.

The Digital Trail - Moreno-Gama's online presence told a clear story. His Substack, active from January through March, included a post titled "A Eulogy for Man" that warned of human extinction through AI. It compared a future encounter between humanity and machine superintelligence to historical cases in which, once "a more advanced human civilization has made contact with a less advanced one, the less advanced group is often met by conquest and genocide." On Pause AI's Discord, using the "Butlerian Jihadist" username, he wrote in early December: "We are close to midnight, it's time to actually act." A moderator warned that calls for violence would result in removal. The group subsequently removed him from the server.

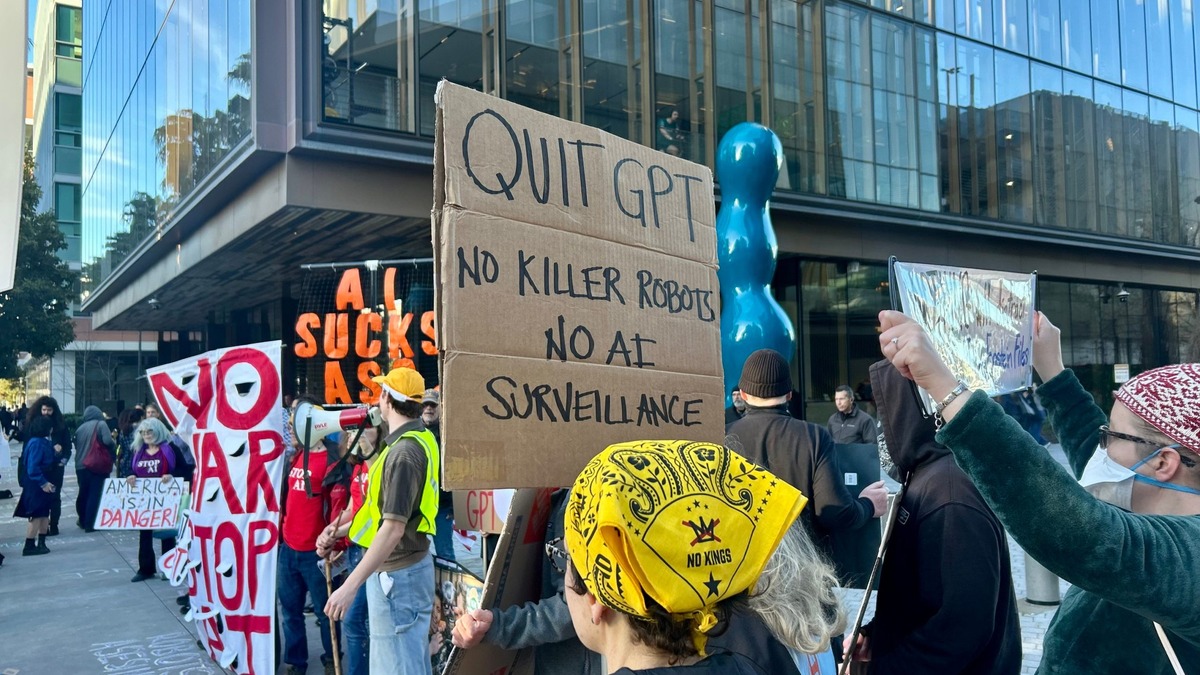

Demonstrators outside OpenAI's San Francisco office on March 3, 2026, opposing the company's Pentagon contract. Anti-AI protests have become a regular fixture at the company's offices.

Source: localnewsmatters.org

The Movement Distances Itself

Both Pause AI and the related Stop AI group moved quickly to separate themselves from Friday's events.

Stop AI issued a statement the same day: "Stop AI seeks to protect human life. We do not condone any violence whatsoever." The group, which has organized marches outside OpenAI, Anthropic, and xAI offices in San Francisco, said it hoped the industry would "cease developing frontier AI systems" through legal and political means - not through arson.

Pause AI, which calls for a conditional pause on frontier AI development if every major lab agrees simultaneously, had already removed Moreno-Gama months earlier. The December warning from a moderator suggested the organization knew it had members drifting toward extreme views.

The problem for both groups is that this distance is getting harder to maintain. Moreno-Gama's writings drew directly from extinction-risk arguments that serious AI safety researchers also make - the same civilizational framing, the same urgency, the same belief that the stakes are total. What distinguished him wasn't the premise but the conclusion: that waiting for policy change wasn't enough.

An Escalating Threat Pattern

This attack didn't come out of nowhere. It's part of a pattern of physical threats tied to anti-AI sentiment that has been building for months.

| Date | Target | Incident | Link to AI Opposition |
|---|---|---|---|
| Nov 2025 | Unidentified | Anti-AI activist goes missing | Told friends he had planned violent action |
| Apr 10, 2026 | Sam Altman's home | Molotov cocktail thrown at gate | Suspect held explicit AI extinction beliefs |
| Apr 10, 2026 | OpenAI headquarters | Kerosene threat, verbal | Same suspect, same morning |
| Apr 2026 | Indiana official | Shooting at home | Note left at scene: "No data centers" |

Security experts have been warning for months that AI executives face a different threat profile than their tech-industry predecessors. After the 2024 murder of UnitedHealthcare CEO Brian Thompson, corporations quietly increased executive protection budgets. The AI sector, where the public debate is louder and more existentially charged, has been slower to adapt.

Kent Moyer, CEO of The World Protection Group, warned in the aftermath of Friday's incident that "executives are more vulnerable than ever" and noted that residential addresses for figures like Altman are often findable through basic open-source research. Altman reportedly maintains multiple properties, none of which are secret.

Altman Responds

Altman published a statement on his personal blog the following day. He didn't minimize the attack, but he didn't escalate either. He wrote:

"I empathize with anti-technology sentiments and clearly technology isn't always good for everyone."

On the broader question of rhetoric, he asked people to "de-escalate the tactics and try to have fewer explosions in fewer homes, figuratively and literally." The statement addressed both the physical incident and the New Yorker investigation into his leadership that had dropped days earlier - framing both as part of the same heightened moment.

Altman met with Prime Minister Modi at the India AI Impact Summit in New Delhi in February 2026, one of several high-visibility diplomatic appearances in recent months.

Source: wikimedia.org

SFPD told reporters it was still working to determine whether the incident was "a mental health situation, a disgruntled current or former employee or some form of domestic terrorism." That framing reflects genuine uncertainty: Moreno-Gama's writings read as ideological, but the line between radicalization, mental illness, and personal grievance isn't always clear in cases like this.

The AI Safety Paradox

The uncomfortable part of this case for the broader AI safety community is that Moreno-Gama's intellectual framework is largely borrowed from mainstream discourse. Extinction risk from misaligned superintelligence isn't a fringe position. It's debated in academic journals and at major conferences. Researchers who have left OpenAI or Anthropic over alignment concerns have made versions of the same argument Moreno-Gama was making, with considerably more rigor.

That doesn't make the safety community responsible for what happened. The difference between a careful researcher who thinks AI poses civilizational risk and someone who responds by throwing a Molotov cocktail isn't a small one. But it does mean the field has to reckon with how its ideas travel - and what happens to them when they reach people with a very different threshold for action.

The pattern of resistance to AI development has shifted from criticism and legal challenges to physical threats. The industry has spent years discussing whether its most powerful systems could pose risks to humanity. Now it faces the reality that belief in that claim, taken to an extreme, poses an immediate physical risk to the people building them.

What Happens Next

Criminal charges - The San Francisco DA's office will decide whether to charge Moreno-Gama locally or refer the case to federal prosecutors. The attempted-murder charge and potential domestic terrorism statutes could both apply given his stated motivations.

Security upgrades for AI executives - The incident will accelerate security reviews at major AI labs. OpenAI, Anthropic, and others already maintain significant protective measures for senior leadership. This attack will push those further.

Questions for the anti-AI movement - Groups like Pause AI and Stop AI will face sustained pressure to address how extinction-risk framing is received by members with a lower threshold for action. Removing a single user from Discord months before an attack is not a policy.

Regulatory attention - Federal involvement could open scrutiny of online communities organized around AI opposition - raising questions about speech, radicalization, and whether the legal framework for ideologically motivated attacks on tech executives even exists yet.

Sources: The Decoder, ABC7 San Francisco, SF Standard, National Today