Altman Pitches AI as Metered Utility Like Electricity

At BlackRock's Infrastructure Summit, Sam Altman laid out OpenAI's vision of selling AI compute by the token like a metered utility, backed by a $110 billion war chest and the Stargate buildout.

Sam Altman told an audience of infrastructure investors on March 11 that OpenAI's business will converge on selling tokens of AI compute the way utilities sell kilowatt-hours of electricity. Speaking at BlackRock's 2026 Infrastructure Summit in Washington, D.C., Altman described a future where "intelligence is a utility like electricity or water and people buy it from us on a meter." The pitch came twelve days after OpenAI closed a $110 billion funding round - the largest private financing in history - from Amazon, NVIDIA, and SoftBank.

TL;DR

- Altman says OpenAI's business model converges to selling tokens like a utility sells electricity, with costs dropping 10x per year

- He floated "universal basic compute" - distributing a share of global AI output to every person on Earth

- A coding task that once took days of expert work now costs "less than a dollar's worth of compute tokens"

- OpenAI's $110B round plus the $500B Stargate project are the infrastructure backbone

- Critics say the utility framing disguises corporate control of a resource Altman himself calls as important as human cognition

The Numbers Behind the Pitch

Altman's core claim is that each unit of intelligence has gotten roughly 10x cheaper per year for the last five years. He pointed to reasoning models specifically: the cost drop from o1's launch to current models has been "about 1,000x" in roughly sixteen months.

| Metric | Then | Now |
|---|---|---|
| Cost per coding task (expert-level) | Days of engineer time | "Less than a dollar" in tokens |
| Cost reduction since o1 | Baseline | ~1,000x cheaper |
| Annual cost decline rate | - | ~10x per year |
| OpenAI funding (Feb 2026) | - | $110B (Amazon $50B, NVIDIA $30B, SoftBank $30B) |
| Stargate commitment | - | Up to $500B by 2029 |
| Pre-money valuation | - | $730B |
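The two cost claims are easier to compare on a common annual basis. The sketch below is a back-of-the-envelope check, not OpenAI's own accounting: it takes Altman's quoted figures at face value, assumes the decline compounds smoothly, and uses hypothetical helper names (`cumulative_factor`, `annualized_factor`).

```python
# Figures quoted in the article (Altman's claims, not independently verified).
ANNUAL_FACTOR = 10          # "more than a factor of 10 each year"
YEARS = 5                   # "for the last five years"
REASONING_FACTOR = 1_000    # "about 1,000x" since o1's launch
REASONING_MONTHS = 16       # "roughly sixteen months"


def cumulative_factor(annual_factor: float, years: float) -> float:
    """Total cost reduction after compounding an annual factor over `years`."""
    return annual_factor ** years


def annualized_factor(total_factor: float, months: float) -> float:
    """Per-year factor equivalent to a total reduction spread over `months`.

    Assumes smooth compounding, which is a simplification.
    """
    return total_factor ** (12 / months)


five_year = cumulative_factor(ANNUAL_FACTOR, YEARS)
reasoning_per_year = annualized_factor(REASONING_FACTOR, REASONING_MONTHS)

print(f"10x/year over 5 years: {five_year:,.0f}x cheaper")
print(f"1,000x in 16 months:   ~{reasoning_per_year:.0f}x per year")
```

On these assumptions, the five-year claim compounds to a 100,000x reduction, while the reasoning-model claim implies a pace of roughly 178x per year, well above the 10x headline rate.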

The economic logic runs like this: if the cost of intelligence falls fast enough, it converges toward the cost of the energy needed to run it. "An electron is an electron," Altman said. Energy becomes the floor.

"We've been able to drive down the cost of each unit of intelligence by more than a factor of 10 each year for the last five years."

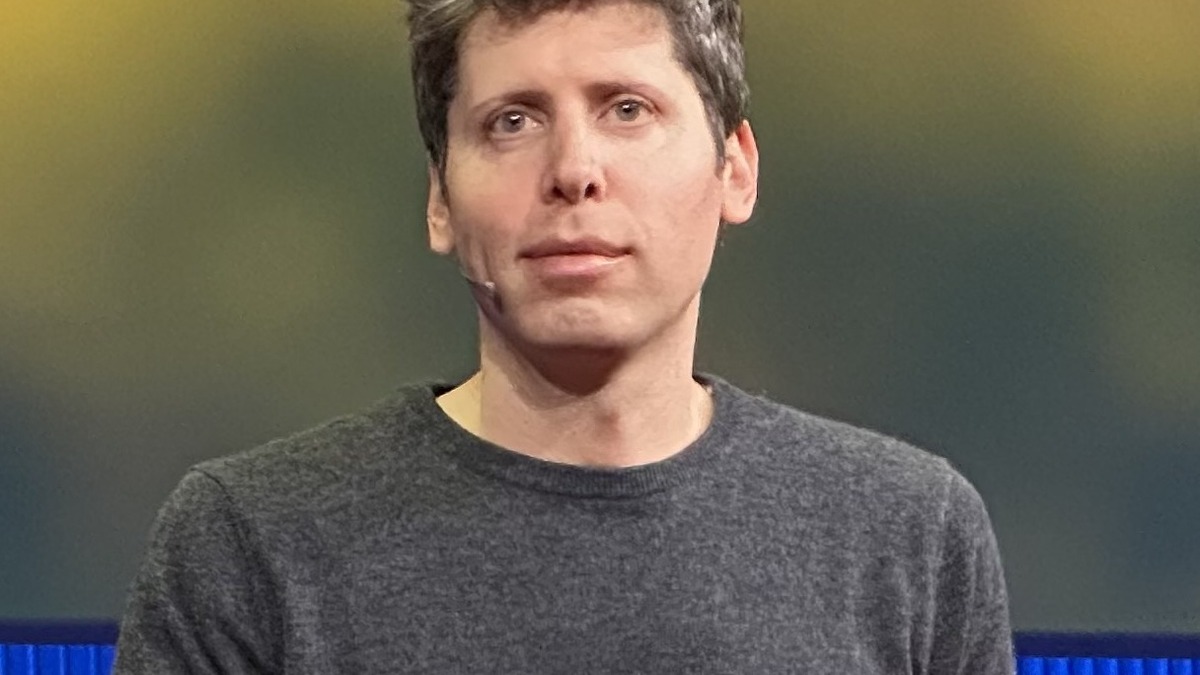

Sam Altman laid out OpenAI's utility vision at BlackRock's 2026 Infrastructure Summit in Washington, D.C.

Source: flickr.com

The Infrastructure Bet

The utility model requires massive supply. That's where the Stargate project and OpenAI's recent fundraise come in.

Stargate is a joint venture with SoftBank, Oracle, and Abu Dhabi's MGX fund targeting up to 10 gigawatts of AI data center capacity across the United States by 2029. Altman confirmed the first site in Abilene, Texas is already running training workloads. Five additional sites were announced in September 2025 across Texas, New Mexico, and Ohio, though the Abilene expansion was later scrapped after Oracle negotiations fell apart.

The $110 billion round closed February 27 with Amazon contributing $50 billion, NVIDIA $30 billion, and SoftBank $30 billion. It valued OpenAI at $730 billion pre-money. Combined with the broader Stargate commitment, OpenAI is betting hundreds of billions that demand for AI compute will behave like demand for electricity did in the twentieth century - growing to fill whatever capacity exists.
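As a quick consistency check on the round's figures, the sketch below sums the three reported contributions and derives a post-money valuation. The post-money figure and the implied investor stake are not reported in the article; they follow from the standard simplification that post-money equals pre-money plus new investment, ignoring share classes, tranches, and any other terms.

```python
# Reported contributions to the February 2026 round, in billions of USD.
contributions_bn = {
    "Amazon": 50,
    "NVIDIA": 30,
    "SoftBank": 30,
}
PRE_MONEY_BN = 730  # reported pre-money valuation

round_total_bn = sum(contributions_bn.values())

# Assumption: post-money = pre-money + new investment (textbook definition).
post_money_bn = PRE_MONEY_BN + round_total_bn

# Assumption: the new money converts to a simple pro-rata stake.
new_investor_stake = round_total_bn / post_money_bn

print(f"Round total:        ${round_total_bn}B")
print(f"Implied post-money: ${post_money_bn}B")
print(f"Implied new stake:  {new_investor_stake:.1%}")
```

The contributions do add up to the reported $110 billion, which on this simplified view implies a post-money valuation of $840 billion and a combined stake of about 13% for the new money.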

Universal Basic Compute

Altman's most speculative proposal was distributing a share of global AI output directly to individuals. If the world produces 20 quintillion tokens per year, he suggested allocating a portion - say 8 quintillion - equally among 8 billion people. Each person would receive roughly 1 billion tokens to use, sell, or pool with others. He called it "universal basic compute."
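The arithmetic behind the proposal can be checked in a few lines. This is a sketch using only the numbers from Altman's hypothetical; the per-day figure is an added illustration that assumes the allocation is spread evenly over a 365-day year.

```python
# Figures from Altman's hypothetical (short-scale quintillion = 10**18).
WORLD_TOKENS_PER_YEAR = 20 * 10**18   # total annual output
ALLOCATED_TOKENS = 8 * 10**18         # portion set aside for distribution
POPULATION = 8 * 10**9                # people sharing the allocation

tokens_per_person = ALLOCATED_TOKENS // POPULATION
share_of_output = ALLOCATED_TOKENS / WORLD_TOKENS_PER_YEAR

# Assumption: the annual allocation is used evenly across 365 days.
tokens_per_person_per_day = tokens_per_person / 365

print(f"Tokens per person per year: {tokens_per_person:,}")
print(f"Share of global output:     {share_of_output:.0%}")
print(f"Tokens per person per day:  {tokens_per_person_per_day:,.0f}")
```

In this scenario the distributed portion is 40% of global output, and each person's 1 billion tokens works out to roughly 2.7 million tokens per day.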

The idea echoes his earlier Worldcoin project and the broader concept of universal basic income, but denominated in AI capacity rather than currency. No timeline or mechanism was proposed.

OpenAI's Stargate project targets up to 10 gigawatts of AI data center capacity across the United States by 2029.

Source: unsplash.com

Counter-Argument

The utility analogy flatters OpenAI. Actual utilities operate under public regulatory frameworks - rate-setting commissions, universal service obligations, transparency requirements. OpenAI is a private for-profit company (since its restructuring in October 2025) that sets its own prices, controls access, and has no obligation to serve anyone.

Zoho co-founder Sridhar Vembu pushed back directly: "I do not want to see a world where we equate a piece of technology to a human being." Altman's framing conflates compute capacity with cognitive capacity in a way that makes corporate control of AI feel natural and inevitable rather than a policy choice.

Matt Stoller was blunter, criticizing Altman's comparison of AI energy use to human energy consumption: "He's saying a really big spreadsheet and a baby are morally equivalent."

The "too cheap to meter" line drew particular scrutiny. Altman self-consciously borrowed it from Lewis Strauss's 1954 promise about nuclear power - a prediction that became synonymous with technological hubris. Nuclear power never got cheap. Critics argue the analogy is more apt than Altman intended.

Washington Monthly published an analysis arguing that powerful AI "cannot coexist with extreme wealth inequality except through dystopian mass immiseration." If the companies that own AI infrastructure also set the price, the utility metaphor masks a concentration of power that actual utility regulation was designed to prevent.

"If we don't have enough, we either can't sell it or the price gets really high and it kind of goes to rich people."

Altman acknowledges the scarcity problem. His solution is to build so much capacity that supply overwhelms any attempt to hoard access. "The best thing to me throughout all the history of capitalism," he said, "is to just flood the market."

The Timing Problem

Altman also acknowledged that the transition will hurt. "The next few years are going to be a painful adjustment," he said, predicting "very intense and uncomfortable debates." He noted President Trump's warning that AI faces "a major public relations problem": data centers get blamed for electricity price hikes, and companies get blamed for the layoffs they attribute to AI.

OpenAI deleted the word "safely" from its mission statement during the for-profit restructuring. Combined with the Pentagon contracts, the $730 billion valuation, and now the utility framing, the path is clear: OpenAI is positioning itself as essential infrastructure, not a research lab.

Altman's analogy: AI compute should flow like electricity - cheap, abundant, and metered by usage.

Source: unsplash.com

What the Market Is Missing

Altman predicted that cognitive capacity inside data centers will exceed human cognitive capacity outside them "potentially by late 2028." That's an extraordinary claim to drop at an infrastructure investor summit and move past without elaboration. If he's even directionally right, the pricing models, regulatory frameworks, and competitive dynamics of every industry change in ways that make current valuation methods irrelevant. If he's wrong, OpenAI is a company burning hundreds of billions on overcapacity with a for-profit structure that needs to show returns. The utility analogy works in both directions - and investors at BlackRock should remember that utilities are the most regulated sector in the American economy for a reason.

Sources:

- Sam Altman at BlackRock Infrastructure Summit - Full Transcript (Rev)

- OpenAI raises $110B in funding - TechCrunch

- OpenAI completes for-profit recapitalization - TechCrunch

- Altman defends AI energy usage - TechCrunch

- Sam Altman on AI cost converging to energy cost - OfficeChai

- AI and extreme wealth inequality - Washington Monthly

- OpenAI deletes "safely" from mission - The Conversation

- Sam Altman's gentle singularity message - Faster Please

- Stargate LLC - Wikipedia