Alibaba Qwen3.6-Plus Launches With 1M Context Window

Alibaba officially launches Qwen3.6-Plus, a 1-million-token context model built for enterprise agentic coding and multimodal reasoning, now free on OpenRouter.

Alibaba's Qwen3.6-Plus launched on April 2, 2026, with a 1-million-token context window, mandatory chain-of-thought reasoning, and a clear enterprise target. It soft-launched on OpenRouter a few days earlier, on March 30, under the model string qwen/qwen3.6-plus-preview:free. The formal press release on April 2 pointed to Alibaba's own products and third-party coding agents as the primary delivery channels.

This isn't a research demo. It's an enterprise product built for what Alibaba calls "the shift towards agentic AI."

TL;DR

- 1-million-token context window; max 32K tokens per response

- Mandatory always-on reasoning - no lightweight mode option

- Three stated use cases: agentic coding, visual coding, multimodal reasoning

- Free on OpenRouter preview (prompts collected for model training)

- Integrates with OpenClaw, Claude Code, and Cline out of the box

- Smaller open-source variants from the Qwen3.6 series promised - no timeline given

What Actually Changed From Qwen3.5

Alibaba's previous open-source flagship, Qwen3.5, was a 397B-parameter model with a 256K context window and broad multimodal support. Qwen3.6-Plus extends the context to 1 million tokens and, at least for now, drops open weights for the flagship tier. Smaller Qwen3.6 variants are promised for the developer community, but no release date has been given.

The other significant shift is the reasoning default. Qwen3.5 offered optional chain-of-thought; with Qwen3.6-Plus, it's always on. That means a different latency and cost profile from what most users expect of Plus-tier Qwen models.

| Feature | Qwen3.6-Plus | Qwen3.5 (previous open-source flagship) |
|---|---|---|
| Context window | 1,000,000 tokens | 256,000 tokens |
| Max output tokens | 32,000 | 8,192 |
| License | Closed (preview) | Apache 2.0 |
| Mandatory reasoning | Yes | No |
| API modality | Text only | Multimodal |
| Pricing | Free (preview) | Free (open weights) |

The trade-off is real: you gain a much longer context and always-on reasoning, but lose open weights and native multimodal input at the API level.

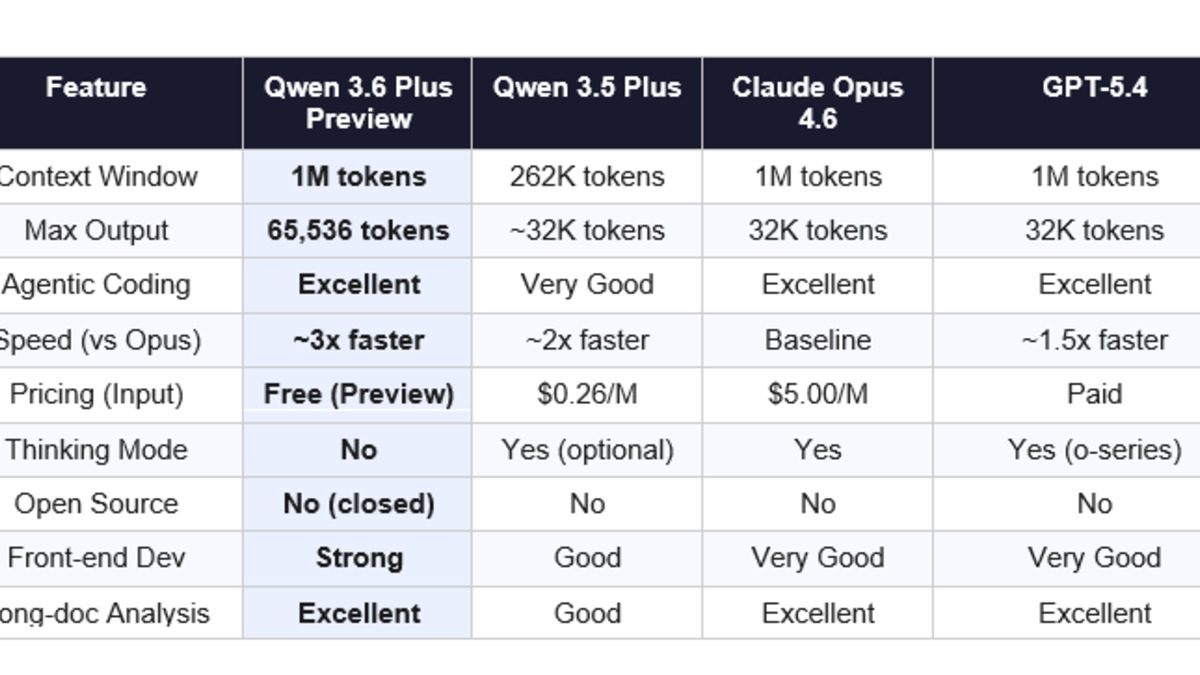

Third-party reviewers have compiled feature and performance comparisons for Qwen3.6-Plus against other frontier models.

Source: buildfastwithai.com

The Three Bets

Alibaba structured the Qwen3.6-Plus launch around three specific capability pillars.

Agentic Coding

This is the headline pitch. The model is built to "autonomously plan, test, and iterate on code to deliver production-ready solutions" at the repository level. That means navigating multi-file codebases, executing full test suites, and completing tasks that require multiple sequential decisions - not just single-file completions.
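The repository-level "plan, test, and iterate" behavior can be pictured as a generic propose-test-retry loop. This is a minimal sketch, not Alibaba's implementation: `propose_patch` and `apply_and_test` are hypothetical stand-ins for a model call and a test runner, and `SuiteResult` and `AgentRun` are illustrative types invented for this example.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class SuiteResult:
    """Outcome of one full test-suite run (illustrative type)."""
    passed: int
    failed: int

    @property
    def green(self) -> bool:
        return self.failed == 0


@dataclass
class AgentRun:
    """Every patch the loop tried, with the matching suite result."""
    patches: List[str] = field(default_factory=list)
    results: List[SuiteResult] = field(default_factory=list)

    @property
    def succeeded(self) -> bool:
        return bool(self.results) and self.results[-1].green


def iterate_until_green(
    task: str,
    propose_patch: Callable[[str, List[SuiteResult]], str],  # hypothetical model call
    apply_and_test: Callable[[str], SuiteResult],            # hypothetical test runner
    max_iterations: int = 5,
) -> AgentRun:
    """Propose a patch, run the suite, and feed prior results into the next attempt."""
    run = AgentRun()
    for _ in range(max_iterations):
        patch = propose_patch(task, run.results)
        result = apply_and_test(patch)
        run.patches.append(patch)
        run.results.append(result)
        if result.green:
            break  # stop as soon as the whole suite passes
    return run


# Stubs for illustration: each attempt fixes one more failing test.
def fake_propose(task: str, history: List[SuiteResult]) -> str:
    return f"patch-{len(history)}"


def fake_test(patch: str) -> SuiteResult:
    attempt = int(patch.split("-")[1])
    return SuiteResult(passed=7 + attempt, failed=max(0, 3 - attempt))


if __name__ == "__main__":
    run = iterate_until_green("fix login flow", fake_propose, fake_test)
    print(len(run.patches), run.succeeded)  # 4 True
```

The `max_iterations` cap matters in practice: an agent that never reaches a green suite should stop and report, not loop indefinitely.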

Alibaba's own SWE-CI research showed that most coding agents accumulate technical debt over time, and only a handful maintain a zero-regression rate above 50% over months of maintenance. Qwen3.6-Plus is partly a commercial answer to that finding. Whether its production behavior matches the benchmark framing remains to be seen, but Alibaba has at least put the right research problem on the table.
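To make the "zero-regression" framing concrete, here is one simplified way to score it: after each maintenance change, check whether every previously passing test still passes. This definition is an assumption made for the sketch; SWE-CI's published metric may be computed differently.

```python
from typing import List, Set


def zero_regression_rate(snapshots: List[Set[str]]) -> float:
    """Fraction of consecutive changes that break no previously passing test.

    snapshots[0] is the set of passing test IDs before any agent change;
    snapshots[i] is the set after change i.
    """
    if len(snapshots) < 2:
        raise ValueError("need a baseline plus at least one change")
    changes = list(zip(snapshots, snapshots[1:]))
    # A change is clean if the old passing set is a subset of the new one.
    clean = sum(1 for before, after in changes if before <= after)
    return clean / len(changes)


history = [
    {"a", "b"},            # baseline
    {"a", "b", "c"},       # adds c, nothing broken: clean
    {"a", "c"},            # b now fails: regression
    {"a", "b", "c"},       # b restored: clean
    {"a", "b", "c", "d"},  # adds d: clean
]
rate = zero_regression_rate(history)  # 3 clean changes out of 4 = 0.75
```

Under this definition, the "above 50%" bar means more than half of an agent's changes leave every previously green test green.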

Visual Coding

Qwen3.6-Plus can "interpret user interface screenshots, hand-drawn wireframes, or product prototypes and instantly generate functional frontend code." This isn't new territory for multimodal models, but the 1M context window changes what's possible for large design systems with hundreds of components. Feeding an entire Figma export into context is now at least plausible.
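One rough way to test whether a large design export fits is to estimate token counts and greedily pack files into the window. This sketch makes several assumptions: a crude 4-characters-per-token heuristic (Qwen's actual tokenizer will differ), reserving the stated 32K output tokens inside the 1M window as a conservative choice, and a hypothetical `prompt_overhead` budget for instructions.

```python
from typing import Dict, List, Tuple

CONTEXT_WINDOW = 1_000_000  # stated context window
RESERVED_OUTPUT = 32_000    # stated max response length, held back conservatively
CHARS_PER_TOKEN = 4         # rough heuristic; the real tokenizer will differ


def estimate_tokens(text: str) -> int:
    # Ceiling division so a short non-empty file counts as at least 1 token.
    return -(-len(text) // CHARS_PER_TOKEN)


def pack_files(
    files: Dict[str, str], prompt_overhead: int = 2_000
) -> Tuple[List[str], List[str]]:
    """Greedily include the smallest files first until the input budget is spent."""
    budget = CONTEXT_WINDOW - RESERVED_OUTPUT - prompt_overhead
    included: List[str] = []
    skipped: List[str] = []
    for path, text in sorted(files.items(), key=lambda kv: estimate_tokens(kv[1])):
        cost = estimate_tokens(text)
        if cost <= budget:
            included.append(path)
            budget -= cost
        else:
            skipped.append(path)
    return included, skipped


# Hypothetical export: one small component, one large icon sheet, one huge theme file.
export = {
    "Button.tsx": "x" * 400,
    "Theme.json": "y" * 3_000_000,
    "Icons.svg": "z" * 1_200_000,
}
included, skipped = pack_files(export)
```

Packing smallest files first maximizes how many components fit; a real pipeline would more likely rank files by relevance to the screen being generated.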

Multimodal Reasoning (Pipeline, Not API)

This is where the launch announcement gets imprecise. Alibaba claims capabilities for "high-density document parsing, physical-world visual analysis, and long-form video reasoning" - moving "beyond simple recognition toward sophisticated analysis and decision-making." But the OpenRouter API spec is text-only. The multimodal capabilities exist in the enterprise products built on top of Qwen3.6-Plus, not in the raw API.
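Because the OpenRouter spec for the model is text-only, a client can catch image or other non-text parts before a request goes out. This sketch assumes the OpenAI-style message format that OpenRouter accepts, where `content` is either a string or a list of typed parts; `non_text_parts` and `assert_text_only` are illustrative helpers, not part of any SDK.

```python
from typing import Any, Dict, List


def non_text_parts(messages: List[Dict[str, Any]]) -> List[str]:
    """List every content part that is not plain text, e.g. image_url parts."""
    problems: List[str] = []
    for i, msg in enumerate(messages):
        content = msg.get("content")
        if content is None or isinstance(content, str):
            continue  # missing or plain-string content needs no check
        for part in content:
            kind = part.get("type")
            if kind != "text":
                problems.append(f"message {i}: {kind}")
    return problems


def assert_text_only(messages: List[Dict[str, Any]]) -> None:
    """Raise before sending if any non-text part is present."""
    problems = non_text_parts(messages)
    if problems:
        raise ValueError("text-only model; remove: " + ", ".join(problems))


messages = [
    {"role": "system", "content": "You are a frontend engineer."},
    {"role": "user", "content": [
        {"type": "text", "text": "Convert this wireframe to HTML."},
        {"type": "image_url", "image_url": {"url": "https://example.com/wireframe.png"}},
    ]},
]
found = non_text_parts(messages)  # the image part in message 1 is flagged
```

Failing fast on the client side is cheaper than discovering after a long reasoning run that the image was silently dropped or rejected.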

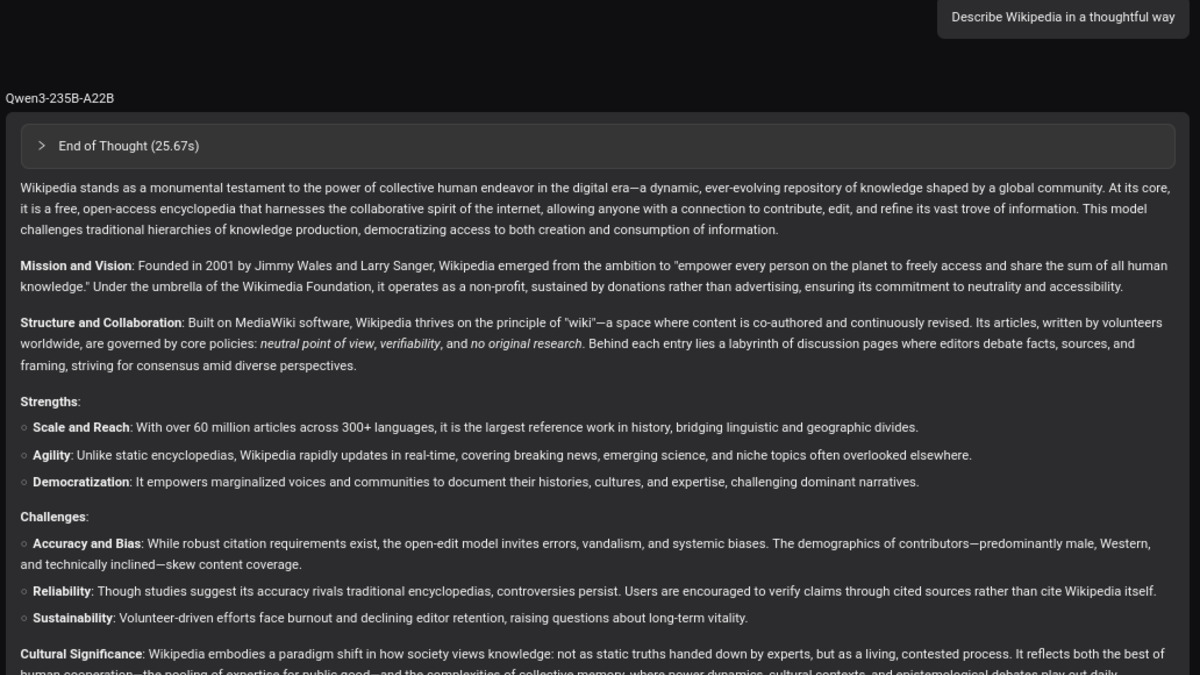

Qwen Chat demonstrates chain-of-thought reasoning with the "Thinking" feature - now mandatory in Qwen3.6-Plus.

Source: commons.wikimedia.org

The Enterprise Layer

Qwen3.6-Plus powers two Alibaba products directly:

Wukong - an invitation-only AI-native enterprise platform that routes complex business tasks through multi-agent workflows. It integrates with DingTalk (over 20 million corporate users), with expansion planned to Taobao and Tmall. Alibaba claims enterprise customers have seen "50% reduction in time and effort for system upgrades" and "20-60% productivity improvements" in credit-risk analysis - figures that need independent audit before any procurement decision.

Qwen App - Alibaba's consumer-facing flagship AI assistant, which now runs on Qwen3.6-Plus as its foundation.

For developers not inside Alibaba's ecosystem, the model is accessible via Alibaba Cloud's Model Studio, Qwen Chat, and free on OpenRouter. It's also compatible with Claude Code, OpenClaw, and Cline - which signals that Alibaba is actively courting the developer tooling ecosystem rather than expecting adoption to flow through Alibaba infrastructure alone.
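For the OpenRouter route, a request can be assembled against OpenRouter's OpenAI-compatible chat completions endpoint using the preview model string from the launch. This is a minimal standard-library sketch, assuming the preview id is still listed and you have an OpenRouter API key; `YOUR_OPENROUTER_KEY` is a placeholder, and clamping `max_tokens` to 32,000 simply mirrors the stated output limit.

```python
import json
import urllib.request

MODEL_ID = "qwen/qwen3.6-plus-preview:free"  # preview model string from the launch
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"
MAX_OUTPUT_TOKENS = 32_000                   # stated per-response limit


def build_request(prompt: str, api_key: str, max_tokens: int = 4_096) -> urllib.request.Request:
    """Build (but do not send) a chat completion request for the preview model."""
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
        # Never ask for more than the stated output ceiling.
        "max_tokens": min(max_tokens, MAX_OUTPUT_TOKENS),
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_request("Summarize this repository's test layout.", api_key="YOUR_OPENROUTER_KEY")
    print(req.full_url, json.loads(req.data)["max_tokens"])
    # Sending requires a real key: urllib.request.urlopen(req)
```

Keeping request construction separate from sending makes it easy to inspect or log payloads, which is worth doing given the preview tier's data-collection terms.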

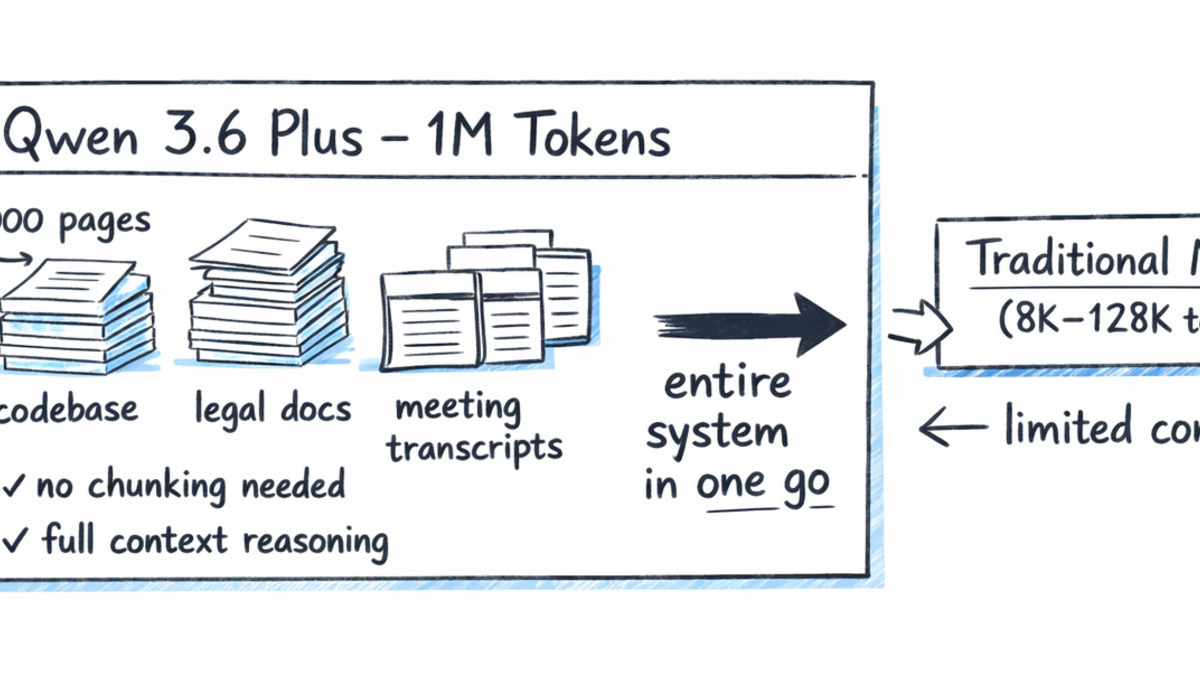

A 1-million-token context window changes what's possible for large document and codebase analysis tasks.

Source: buildfastwithai.com

What It Does Not Tell You

Official benchmark scores on SWE-bench, HumanEval, or MMLU weren't published with the launch. The Alibaba press release focuses on qualitative capability descriptions and enterprise testimonials. That's not unusual for a commercial product announcement, but it makes independent comparison difficult.

The Open-Source Hedge

"Selected smaller Qwen3.6 models" are promised for the developer community, but without version details, parameter counts, or a release timeline. Given Alibaba's track record with the Qwen3.5 series, which shipped at multiple size points, it's reasonable to expect something, but the absence of specifics is a hedge worth noting.

The Reasoning Tax

Always-on chain-of-thought is a deliberate product decision. For simple tasks, it means Qwen3.6-Plus will be slower and more expensive than a non-reasoning model of similar capability. For users who want fast, cheap completions on low-complexity requests, this isn't the right model. Cursor's agent work and similar tools have shown that choosing between reasoning and non-reasoning calls per task is often more efficient than blanket reasoning.
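Per-task mixing can be approximated with a simple router in front of the model calls. Everything in this sketch beyond the Qwen model string is a hypothetical choice for illustration: the keyword list, the multi-file rule, the 2,000-character threshold, and the placeholder id `example/non-reasoning-model` are not recommendations from Alibaba or Cursor.

```python
from dataclasses import dataclass

REASONING_MODEL = "qwen/qwen3.6-plus-preview:free"  # always-on chain-of-thought
FAST_MODEL = "example/non-reasoning-model"          # hypothetical placeholder id

# Hypothetical signals that a task needs multi-step reasoning.
COMPLEX_HINTS = ("refactor", "debug", "migrate", "multi-file", "failing test")


@dataclass
class Task:
    prompt: str
    files_touched: int = 1


def pick_model(task: Task) -> str:
    """Send simple requests to a fast model; reserve reasoning for harder ones."""
    text = task.prompt.lower()
    needs_reasoning = (
        task.files_touched > 1
        or any(hint in text for hint in COMPLEX_HINTS)
        or len(text) > 2_000
    )
    return REASONING_MODEL if needs_reasoning else FAST_MODEL


simple = pick_model(Task("Rename variable foo to bar"))           # fast model
hard = pick_model(Task("Debug the flaky login test"))            # reasoning model
wide = pick_model(Task("Add docstrings", files_touched=4))       # reasoning model
```

A production router would more likely use a learned classifier or escalate after a failed cheap attempt, but the shape is the same: pay the reasoning tax only where the task needs it.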

The OpenRouter Data Policy

The preview is free on OpenRouter, but Alibaba collects and trains on prompt data from the preview tier. This is standard practice for preview access and is disclosed in the model card, but enterprise users handling sensitive code should route through Model Studio with appropriate data agreements rather than the free OpenRouter tier.

Qwen3.6-Plus arrives at a moment when Chinese AI labs are no longer trailing on enterprise features. The 1M context window puts it on par with the longest-context Western commercial models, and the compatibility with Western developer tooling suggests Alibaba isn't limiting its ambitions to domestic markets. The benchmark silence is a gap, not a red flag: independent evaluations will fill it quickly now that the model is live. The more interesting question is whether the open-source releases that follow can repeat what Qwen3.5 did for the broader developer community.

Sources: