NVIDIA Ising

NVIDIA Ising is the world's first open AI model family for quantum computing - a 35B MoE VLM for quantum processor calibration and 3D CNN decoders for real-time surface code error correction.

NVIDIA Ising is a family of open AI models targeting two of the hardest operational problems in quantum computing: calibrating quantum processors and correcting quantum errors in real time. Released on April 14, 2026, it's the first open model family purpose-built for quantum hardware workflows. The name references Ernst Ising, the physicist whose 1925 model of ferromagnetism became foundational to statistical mechanics - an appropriate nod from a company trying to put AI at the center of quantum control.

TL;DR

- Two model families: Ising Calibration 1 (35B MoE VLM) for quantum processor calibration, Ising Decoder SurfaceCode 1 (912K / 1.79M params) for real-time error correction

- On NVIDIA's own QCalEval benchmark, Ising Calibration 1 scores 74.7 mean vs 72.3 for Gemini 3.1 Pro, 67.8 for Claude Opus 4.6, and 64.6 for GPT-5.4 - a specialized task these models weren't trained for

- Decoder Fast is 2.5x faster than pyMatching; Decoder Accurate reduces logical error rate 1.53x at d=13, p=0.003 - already rolled out across 20+ research organizations

The family has two branches with different scopes. Ising Calibration is a 35-billion-parameter MoE vision-language model that reads experiment plots from a quantum processing unit (QPU) and infers what adjustments to make - reducing calibration cycles from days to hours when paired with an automation agent. Ising Decoding consists of two compact 3D CNN models (912K and 1.79M parameters) that act as pre-decoders for surface code quantum error correction, working with the standard pyMatching decoder to cut latency and improve accuracy.

Both sets of weights are available on Hugging Face and GitHub under permissive licenses, and NVIDIA has packaged them as NIM microservices for fine-tuning on proprietary hardware. The release comes with QCalEval, a new benchmark dataset NVIDIA built in collaboration with quantum hardware partners - 243 samples across 87 scenario types from 22 experiment families spanning superconducting qubits and neutral atoms.

Key Specifications

| Specification | Details |
|---|---|
| Provider | NVIDIA |
| Model Family | Ising |
| Calibration Model | Ising Calibration 1-35B-A3B |
| Calibration Architecture | MoE VLM (256 experts, 8 active); Qwen3.5-35B-A3B base + vision encoder |
| Calibration Parameters | 35B total, 3B active per token |
| Context Window | 262,144 tokens |
| Decoder Models | Ising Decoder SurfaceCode 1 Fast (912K params), Accurate (1.79M params) |
| Decoder Architecture | 3D CNN pre-decoder for surface code error correction |
| Min Hardware (Calibration) | 2x L40S (48GB each) or 1x H100 (80GB) |
| License | Apache 2.0 (Decoder); NVIDIA Open Model License + Apache 2.0 (Calibration) |
| Release Date | April 14, 2026 |
| Availability | Hugging Face, GitHub, NVIDIA NIM, build.nvidia.com |

The Calibration model's base is Qwen3.5-35B-A3B with a vision encoder added for experiment plot images. The Decoder models are lightweight enough to run on hardware already present at quantum labs - no H100 needed.

Benchmark Performance

Ising Calibration 1 - QCalEval Scores (Zero-Shot)

All scores from NVIDIA's QCalEval paper (arXiv:2604.25884). QCalEval has six question types: Q1 = technical description, Q2 = experimental conclusion, Q3 = experimental significance, Q4 = fit quality assessment, Q5 = parameter extraction, Q6 = experiment success.

| Model | Q1 | Q2 | Q3 | Q4 | Q5 | Q6 | Mean |
|---|---|---|---|---|---|---|---|
| Ising Calibration 1 | 87.8 | 67.1 | 64.7 | 90.5 | 62.5 | 75.3 | 74.7 |
| Gemini 3.1 Pro | 88.5 | 57.2 | 61.1 | 84.4 | 71.5 | 71.2 | 72.3 |
| Claude Opus 4.6 | 90.8 | 49.0 | 65.5 | 76.1 | 64.7 | 60.5 | 67.8 |
| GPT-5.4 | 90.9 | 52.7 | 63.7 | 54.7 | 64.3 | 61.3 | 64.6 |

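Read these numbers carefully. The biggest gains for Ising Calibration 1 are Q2 (experimental conclusions: 67.1 vs 49.0, 18 points over Claude Opus 4.6), Q4 (fit quality: 90.5 vs 54.7, 36 points over GPT-5.4), and Q6 (experiment success: 75.3 vs 60.5, about 15 points over Claude Opus 4.6). Against the strongest rival on each of those questions, Gemini 3.1 Pro, the leads shrink to 9.9, 6.1, and 4.1 points. These are exactly the judgment tasks that require understanding quantum calibration context, and frontier LLMs weren't fine-tuned for them. The closed models come out ahead on Q1 (technical description), which isn't surprising for a general language task, and on Q5 (parameter extraction), where all three outscore Ising Calibration 1 and Gemini 3.1 Pro leads by 9 points.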
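
A quick way to sanity-check the table is to recompute it. The short Python sketch below assumes the Mean column is the unweighted average of Q1 to Q6 (the source does not say how it is aggregated, though the reported means are consistent with that reading) and computes each question's margin against the strongest competing model rather than the weakest. The names `mean_matches_report` and `margins_vs_strongest_rival` are illustrative only.

```python
from statistics import fmean

# Zero-shot QCalEval scores for Q1..Q6, copied from the table above.
SCORES = {
    "Ising Calibration 1": [87.8, 67.1, 64.7, 90.5, 62.5, 75.3],
    "Gemini 3.1 Pro": [88.5, 57.2, 61.1, 84.4, 71.5, 71.2],
    "Claude Opus 4.6": [90.8, 49.0, 65.5, 76.1, 64.7, 60.5],
    "GPT-5.4": [90.9, 52.7, 63.7, 54.7, 64.3, 61.3],
}
REPORTED_MEAN = {
    "Ising Calibration 1": 74.7,
    "Gemini 3.1 Pro": 72.3,
    "Claude Opus 4.6": 67.8,
    "GPT-5.4": 64.6,
}


def mean_matches_report(model: str) -> bool:
    """True if the unweighted Q1..Q6 average agrees with the one-decimal reported mean."""
    # Half of the last reported digit (0.05), plus a little floating-point slack.
    return abs(fmean(SCORES[model]) - REPORTED_MEAN[model]) <= 0.05 + 1e-9


def margins_vs_strongest_rival(model: str) -> list:
    """Per-question lead (positive) or deficit (negative) against the best other model."""
    rivals = [s for name, s in SCORES.items() if name != model]
    return [round(SCORES[model][q] - max(r[q] for r in rivals), 1) for q in range(6)]


if __name__ == "__main__":
    for name in SCORES:
        print(name, round(fmean(SCORES[name]), 2), mean_matches_report(name))
    print(margins_vs_strongest_rival("Ising Calibration 1"))
```

Measured against the best rival per question, Ising Calibration 1 leads on Q2, Q4, and Q6 and trails on Q1, Q3, and Q5, which is a more conservative reading than comparing against the weakest model.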

The benchmark is vendor-created, so treat it as directionally useful rather than independently verified. The in-context learning (ICL) results are worth noting: Claude Opus 4.6 jumps to 85.1 and Gemini 3.1 Pro to 85.2 mean when given demonstrations, beating Ising Calibration 1's zero-shot numbers. The specialized training advantage narrows when general-purpose models get examples.

Ising Decoder SurfaceCode - Performance vs pyMatching (d=13, p=0.003)

| Model | Speed vs pyMatching | LER improvement vs pyMatching | Latency (μs/round) |
|---|---|---|---|
| Decoder Fast + pyMatching | 2.5x faster | 1.11x | Not disclosed |
| Decoder Accurate + pyMatching | 2.25x faster | 1.53x | 2.33 (GB300, FP16) |
| pyMatching baseline | 1x | 1x | ~5.25 (estimated) |
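
The pyMatching baseline latency is marked as an estimate; it appears to be back-calculated from the Accurate decoder's measured latency and speed-up, since 2.33 μs × 2.25 ≈ 5.24 μs. The sketch below reproduces that arithmetic under that assumption and converts per-round latency into sustained rounds per second, additionally assuming rounds are decoded one after another with no pipelining. Function names are illustrative.

```python
# Figures from the decoder table above (d=13, p=0.003).
ACCURATE_LATENCY_US = 2.33  # Decoder Accurate + pyMatching, GB300, FP16
ACCURATE_SPEEDUP = 2.25     # speed-up over pyMatching alone


def implied_baseline_latency_us(latency_us: float, speedup: float) -> float:
    """Assumes combined latency = baseline / speedup, so baseline = latency * speedup."""
    return latency_us * speedup


def rounds_per_second(latency_us: float) -> float:
    """Sustained syndrome rounds per second if each round takes `latency_us` (no pipelining)."""
    return 1e6 / latency_us


if __name__ == "__main__":
    baseline = implied_baseline_latency_us(ACCURATE_LATENCY_US, ACCURATE_SPEEDUP)
    print(f"implied pyMatching latency: {baseline:.4f} us")  # 5.2425 us
    print(f"Accurate decoder throughput: {rounds_per_second(ACCURATE_LATENCY_US):,.0f} rounds/s")
```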

At surface code d=31 (a more realistic scale), the Accurate decoder shows 3x improvement in logical error rate versus pyMatching when trained on d=13 data - a strong generalization result. Both decoders work as pre-decoders that feed corrected syndromes into pyMatching rather than replacing it entirely.

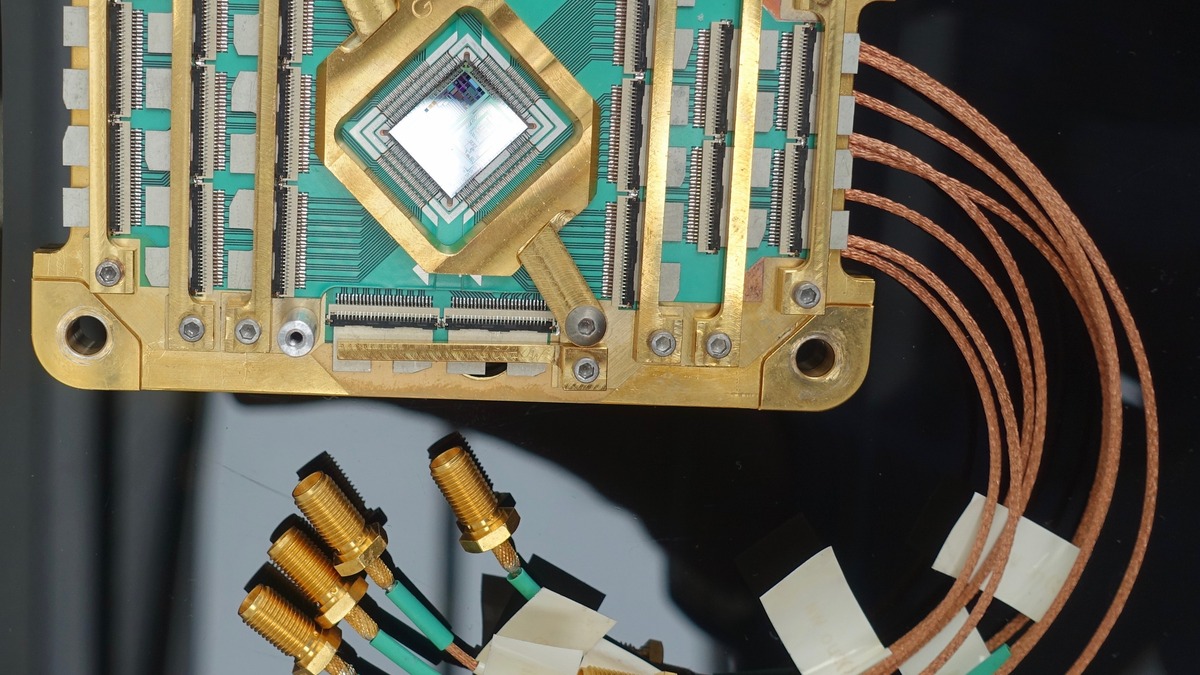

Superconducting quantum processor hardware - the type of QPU that Ising Calibration automates calibration for.

Source: commons.wikimedia.org

Key Capabilities

Quantum Processor Calibration

Ising Calibration 1 ingests PNG or JPEG experiment plots from a QPU and generates text: a technical description of what the plot shows, conclusions about the experiment, an assessment of its significance, a fit quality evaluation, extracted parameters, and a classification of whether the experiment succeeded. It covers the qubit modalities represented in its training data: superconducting qubits, quantum dots, ions, neutral atoms, and electrons on helium.

The context window is 262,144 tokens with multi-image input support, which matters for calibration workflows that compare multiple measurement plots simultaneously. The model was trained in two phases: 23.8K in-context-learning formatted entries in phase one, then 48.7K zero-shot entries with LLM-augmented labels in phase two, using Qwen3.5 397B as the annotation model. The zero-shot framing in phase two is what makes it practically deployable - users don't need to assemble shot banks of prior calibration examples to get useful output.
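
For a sense of what a multi-image, zero-shot request might look like, the sketch below builds a chat-completions payload in the OpenAI-compatible format that vLLM's server accepts, with each plot attached as a base64 data URL and no demonstration examples in the prompt. The model ID, the question wording, and the `build_request` helper are hypothetical; check the Hugging Face model card for the exact ID and recommended prompt format.

```python
import base64
import json
from typing import Dict, List, Sequence

# Hypothetical model ID: confirm the exact name on the Hugging Face model card.
MODEL_ID = "nvidia/Ising-Calibration-1-35B-A3B"


def image_part(image_bytes: bytes, mime: str = "image/png") -> Dict:
    """Wrap one experiment plot as an OpenAI-style image_url content part."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {"type": "image_url", "image_url": {"url": f"data:{mime};base64,{encoded}"}}


def build_request(plots: Sequence[bytes], question: str, max_tokens: int = 1024) -> Dict:
    """Zero-shot request: the plots followed by one question, no worked examples."""
    content: List[Dict] = [image_part(p) for p in plots]
    content.append({"type": "text", "text": question})
    return {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": content}],
        "max_tokens": max_tokens,
        "temperature": 0.0,  # greedy decoding, chosen here for repeatable answers
    }


if __name__ == "__main__":
    # Placeholder bytes stand in for real PNG files read from disk.
    payload = build_request([b"plot-1", b"plot-2"], "Did this Rabi oscillation experiment succeed?")
    print(json.dumps(payload)[:120], "...")
```

The resulting dictionary would be POSTed as JSON to the server's `/v1/chat/completions` endpoint (for example `http://localhost:8000/v1/chat/completions` on a default local vLLM deployment).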

When paired with a calibration agent loop, the model can automate continuous QPU tuning. Adoption includes Harvard, Fermilab, IQM Quantum Computers, Academia Sinica, Lawrence Berkeley National Laboratory's Advanced Quantum Testbed, and 12 other organizations.
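
The agent loop is the part that turns plot interpretation into automated tuning. The sketch below is a toy under heavy assumptions: `run_spectroscopy` simulates a qubit frequency sweep instead of driving real hardware, and `interpret_plot` is a hypothetical placeholder that picks the peak numerically where a real agent would send the plot to Ising Calibration 1 and parse its experiment-success (Q6) and parameter-extraction (Q5) answers. All names and numeric values are made up for illustration.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Toy stand-in for a QPU: a hidden qubit frequency the loop must find (made-up values).
TRUE_FREQ_GHZ = 5.1234
LINEWIDTH_GHZ = 0.002


def run_spectroscopy(center: float, span: float, points: int = 41) -> Tuple[List[float], List[float]]:
    """Simulated frequency sweep returning a Lorentzian peak at TRUE_FREQ_GHZ."""
    step = span / (points - 1)
    freqs = [center - span / 2 + k * step for k in range(points)]
    signal = [1.0 / (1.0 + ((f - TRUE_FREQ_GHZ) / LINEWIDTH_GHZ) ** 2) for f in freqs]
    return freqs, signal


@dataclass
class Verdict:
    success: bool    # cf. QCalEval Q6, experiment success
    peak_ghz: float  # cf. QCalEval Q5, parameter extraction


def interpret_plot(freqs: List[float], signal: List[float]) -> Verdict:
    """Hypothetical placeholder for asking the calibration model about the plot."""
    k = max(range(len(signal)), key=signal.__getitem__)
    # A peak pinned to the sweep edge, or a weak one, means the sweep missed the qubit.
    inside = 0 < k < len(signal) - 1
    return Verdict(success=inside and signal[k] > 0.5, peak_ghz=freqs[k])


def calibrate(center: float, span: float, target_span: float = 0.004, max_iters: int = 20) -> float:
    """Agent loop: run the experiment, interpret it, adjust the sweep, repeat."""
    for _ in range(max_iters):
        verdict = interpret_plot(*run_spectroscopy(center, span))
        center = verdict.peak_ghz  # re-centre on the best estimate so far
        if not verdict.success:
            span *= 2              # missed: widen the sweep and retry
        elif span <= target_span:
            return center          # converged on a narrow, successful sweep
        else:
            span /= 4              # good result: zoom in for a finer estimate
    raise RuntimeError("calibration did not converge")


if __name__ == "__main__":
    print(f"{calibrate(5.00, 0.1):.5f} GHz")
```

In this sketch the agent acts only on two structured outputs, a pass/fail verdict and an extracted parameter, so an error in either answer feeds directly into the next experiment.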

Real-Time Quantum Error Correction

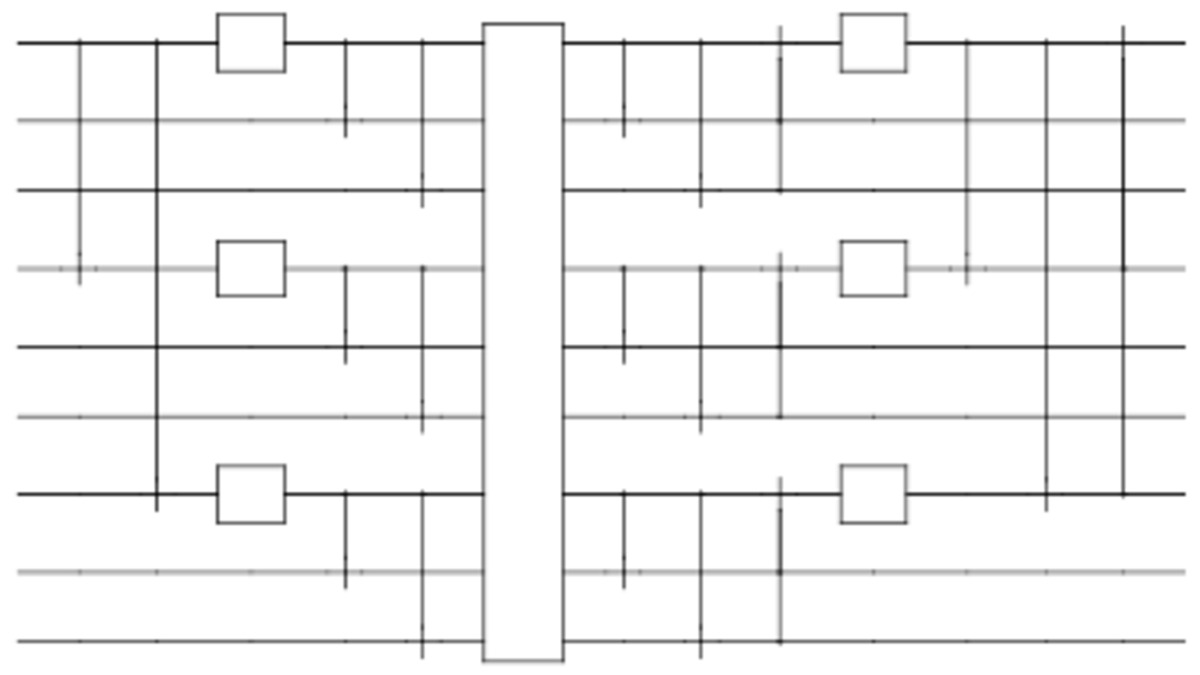

Shor code circuit - quantum error correction encodes logical qubits across physical qubits to detect and fix errors without measuring the quantum state directly.

Source: commons.wikimedia.org

Surface code quantum error correction generates a flood of syndrome measurement data that must be decoded before the next correction cycle. The standard tool is pyMatching - a minimum-weight perfect matching decoder. Ising Decoder SurfaceCode 1 doesn't replace pyMatching; it runs upstream of it, cleaning the syndrome data and reducing the work pyMatching has to do.
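
The pre-decoder pattern can be illustrated on a much simpler code. The Python sketch below uses a 1D bit-flip repetition code: a hand-written local rule (`predecode`) stands in for the 3D CNN and clears pairs of neighbouring detection events that a single flipped qubit explains, and an exact minimum-weight decoder for the repetition code (`global_decode`) stands in for pyMatching on whatever is left. It shows the data flow only; it is not NVIDIA's model or the pyMatching API, and all function names are hypothetical.

```python
from typing import List, Tuple

Bits = List[int]


def syndrome(bits: Bits) -> Bits:
    """Parity checks of a bit-flip repetition code: check i compares qubits i and i+1."""
    return [bits[i] ^ bits[i + 1] for i in range(len(bits) - 1)]


def predecode(synd: Bits) -> Tuple[Bits, Bits]:
    """Toy local pre-decoder (stand-in for the CNN).

    Two neighbouring checks that both fire are explained by flipping the single
    qubit between them; those events are cleared from the residual syndrome.
    """
    correction = [0] * (len(synd) + 1)
    residual = list(synd)
    i = 0
    while i < len(residual) - 1:
        if residual[i] and residual[i + 1]:
            correction[i + 1] ^= 1  # qubit i+1 touches checks i and i+1
            residual[i] = residual[i + 1] = 0
            i += 2
        else:
            i += 1
    return correction, residual


def global_decode(synd: Bits) -> Bits:
    """Exact minimum-weight decoder for the repetition code (stand-in for pyMatching).

    The syndrome fixes the error up to flipping every qubit, so build one
    consistent candidate and keep whichever of it and its complement is lighter.
    """
    candidate = [0]
    for s in synd:
        candidate.append(candidate[-1] ^ s)
    complement = [1 - b for b in candidate]
    return candidate if sum(candidate) <= sum(complement) else complement


def decode_with_predecoder(synd: Bits) -> Tuple[Bits, int]:
    """Run the local stage, hand the leftovers to the global stage, merge corrections."""
    local, residual = predecode(synd)
    remaining_events = sum(residual)
    total = [a ^ b for a, b in zip(local, global_decode(residual))]
    return total, remaining_events


if __name__ == "__main__":
    error = [0, 0, 1, 0, 0, 0, 1, 0, 0]
    correction, leftover = decode_with_predecoder(syndrome(error))
    print(correction == error, leftover)  # True 0
```

In the example the local rule explains every detection event, so the global stage receives an empty syndrome; a single error on the boundary qubit would instead be left for the global stage. Either way the combined correction reproduces the observed syndrome, because the local corrections and the residual syndrome together account for every detection event.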

The Fast variant (912K parameters, receptive field 9, trained on 9x9x9 input volumes) prioritizes latency: 2.5x faster than pyMatching alone with a 1.11x lower logical error rate at d=13. The Accurate variant (1.79M parameters, receptive field 13, trained on 13x13x13 input volumes) trades some speed for accuracy: 2.25x faster with a 1.53x lower logical error rate. At surface code d=31 (961 data qubits per logical qubit in the rotated layout, about 1,921 physical qubits once measurement qubits are included), the Accurate decoder delivers a 3x LER improvement when trained only on d=13 data, which is an important demonstration that the model generalizes across code distances.
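
The qubit counts behind these code distances are simple to compute. The sketch assumes the rotated surface code layout (d² data qubits plus d² − 1 measurement qubits); the source does not say which layout NVIDIA used, so treat the totals as indicative. A distance-d code is guaranteed to correct up to ⌊(d − 1)/2⌋ arbitrary errors. The function name is illustrative.

```python
def rotated_surface_code_qubits(d: int) -> dict:
    """Qubit budget of one logical qubit in a distance-d rotated surface code."""
    if d < 3 or d % 2 == 0:
        raise ValueError("this sketch only handles odd code distances d >= 3")
    data = d * d         # one data qubit per lattice site
    measure = d * d - 1  # one measurement qubit per X or Z stabilizer
    return {
        "data": data,
        "measure": measure,
        "total": data + measure,
        "correctable": (d - 1) // 2,  # arbitrary errors guaranteed correctable
    }


if __name__ == "__main__":
    for d in (13, 31):
        print(d, rotated_surface_code_qubits(d))
```

Going from d=13 to d=31 multiplies the qubit count by roughly 5.7x, which is why generalizing from training at the smaller distance matters.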

Deployment support includes FP8 quantization for production throughput, CUDA-Q QEC integration, and NIM microservices for custom fine-tuning on specific QPU noise profiles. Cornell, Sandia Labs, UC San Diego, UC Santa Barbara, University of Chicago, and 15 other organizations are actively using the decoders.

Pricing and Availability

Both model families are free and open weights. The Decoder models are Apache 2.0 licensed, which permits commercial use and modification subject only to attribution and notice requirements. Ising Calibration 1 uses a dual license: Apache 2.0 from the Qwen3.5 base plus NVIDIA's Open Model License, which adds patent termination provisions for litigation against NVIDIA - the same setup as NVIDIA Nemotron 3 Super.

For inference, the Calibration model requires at minimum 2x L40S (48GB each, 96GB total) or a single H100 (80GB). It's served via vLLM with FlashAttention in BF16 precision. NVIDIA has published all training data provenance and fine-tuning tools so quantum labs can adapt both models to their specific hardware and noise characteristics.
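
The hardware floor follows largely from weight memory. In BF16 each parameter takes 2 bytes, and an MoE model keeps all experts resident even though only about 3B parameters are active per token, so the 35B weights alone need roughly 70 GB. The sketch below does that arithmetic using the rounded 35B figure and decimal gigabytes; it ignores KV cache, activations, and framework overhead, which consume the remaining headroom. For contrast, it also sizes the 1.79M-parameter Accurate decoder. Function names are illustrative.

```python
BYTES_PER_PARAM = {"bf16": 2, "fp16": 2, "fp8": 1}


def weight_memory_gb(params: float, bytes_per_param: float) -> float:
    """Memory for the weights alone, in decimal GB (KV cache and activations excluded)."""
    return params * bytes_per_param / 1e9


def headroom_gb(gpu_gb_total: float, params: float, dtype: str = "bf16") -> float:
    """VRAM left for KV cache, activations, and runtime overhead after loading weights."""
    return gpu_gb_total - weight_memory_gb(params, BYTES_PER_PARAM[dtype])


CALIBRATION_PARAMS = 35e9  # total parameters (rounded); all experts stay resident
DECODER_ACCURATE_PARAMS = 1.79e6

if __name__ == "__main__":
    print(weight_memory_gb(CALIBRATION_PARAMS, 2))  # 70.0
    print("1x H100 headroom:", headroom_gb(80, CALIBRATION_PARAMS), "GB")
    print("2x L40S headroom:", headroom_gb(2 * 48, CALIBRATION_PARAMS), "GB")
    print("Decoder Accurate, FP16:", weight_memory_gb(DECODER_ACCURATE_PARAMS, 2) * 1000, "MB")
```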

No API pricing because there's no paid API - these are self-hosted research tools. A free trial endpoint exists at build.nvidia.com for the Calibration model.

Strengths and Weaknesses

Strengths

- First open model for quantum control: No comparable open alternative exists; quantum teams previously adapted general-purpose VLMs

- Strong calibration specialization: Q2, Q4, Q6 QCalEval scores are substantially above frontier LLMs on calibration-specific judgment

- Decoder generalization: Trained on d=13, works at d=31 - the model learns noise structure rather than memorizing specific code distances

- Real-world deployment: 20+ research institutions actively using the models, not just benchmarks

- Fully open: Weights, datasets, training methodology, and NIM microservices all available

- Modality coverage: Calibration model trained on superconducting qubits, ions, neutral atoms, quantum dots, electrons on helium

- Low decoder latency: Decoder Accurate runs at 2.33 μs per round on GB300 GPUs (FP16) at d=13, which is within realistic real-time decoding requirements

Weaknesses

- Vendor benchmark: QCalEval is NVIDIA-designed and NVIDIA-published; no independent replication yet

- ICL narrows the gap: Frontier LLMs with few-shot examples match or exceed Ising Calibration 1's zero-shot performance

- Narrow application domain: Useful only to quantum hardware teams; irrelevant to almost every ML practitioner outside quantum computing

- Calibration context window still limited: 262K tokens is generous for text, but calibration workflows with hundreds of experiment plots need careful prompt management

- NVIDIA Open Model License: Patent termination clause; not purely Apache 2.0 for the Calibration model

- No independent decoding benchmark: Decoder performance is measured against pyMatching only, with no comparison to alternative decoders such as BeliefMatching (belief propagation combined with matching) or other neural decoders

FAQ

What is NVIDIA Ising used for?

Ising Calibration 1 automates quantum processor tuning by interpreting measurement plots. Ising Decoder SurfaceCode 1 accelerates surface code quantum error correction. Both target quantum computing hardware teams, not general AI users.

Is NVIDIA Ising free to use?

Yes. Both model families are open weights. The Decoder models are Apache 2.0. The Calibration model uses NVIDIA Open Model License plus Apache 2.0 from its Qwen3.5 base.

How does Ising Calibration compare to GPT-5.4 on QCalEval?

Ising Calibration 1 scores 74.7 mean vs 64.6 for GPT-5.4 on zero-shot QCalEval, a 10.1-point gap (about 15.6% higher) driven mainly by fit quality (Q4: 90.5 vs 54.7) and experimental conclusion (Q2: 67.1 vs 52.7). With few-shot examples, frontier models close the gap: Claude Opus 4.6 (85.1) and Gemini 3.1 Pro (85.2) overtake Ising Calibration 1's zero-shot mean.

What hardware does Ising Calibration 1 require?

Minimum 2x NVIDIA L40S (48GB each) or 1x H100 (80GB). Supported on Hopper and Blackwell microarchitectures. Served via vLLM in BF16 precision.

How does Ising Decoder compare to pyMatching?

The Fast decoder is 2.5x faster with a 1.11x lower logical error rate at d=13, p=0.003. The Accurate decoder is 2.25x faster with a 1.53x lower logical error rate under the same conditions. At d=31, the Accurate decoder reaches a 3x LER improvement when trained on d=13 data.

Sources:

- NVIDIA Ising launch - NVIDIA Newsroom

- NVIDIA Ising Technical Blog

- NVIDIA Ising product page

- Ising Calibration 1-35B-A3B - Hugging Face

- Ising Decoder SurfaceCode 1 Fast - Hugging Face

- Ising Decoder SurfaceCode 1 Accurate - Hugging Face

- Ising Calibration 1 on NVIDIA NIM

- NVIDIA Ising GitHub

- QCalEval paper - arXiv:2604.25884

- NVIDIA Ising - InfoQ

✓ Last verified May 11, 2026