GPT-OSS 20B

OpenAI's open-weight 21B MoE reasoning model with 131K context, Apache 2.0 license, and o3-mini-level benchmark performance running in 16 GB of memory.

OpenAI released gpt-oss-20b on August 5, 2025, alongside its larger sibling gpt-oss-120b. It's the first open-weight model from OpenAI since the GPT-2 era and a deliberate answer to the traction that DeepSeek R1, Qwen 3.6 35B A3B, and Kimi K2 had been getting on open benchmarks. The Apache 2.0 license means commercial self-hosting is unrestricted - no additional terms, no usage caps in the license itself.

The model uses a Mixture-of-Experts transformer with 20.9 billion total parameters but only 3.6 billion active per token. That ratio keeps memory demand low enough that MXFP4 quantization brings the full checkpoint to 12.8 GiB, and the model runs on any 16 GB consumer GPU. That's an unusual combination: frontier-trained weights, in a form factor that fits on a gaming card.
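The arithmetic behind those figures can be checked with a rough sketch. The parameter counts and checkpoint size come from the text above; the 4.25 bits per parameter for MXFP4 (4-bit values plus one 8-bit scale shared by each block of 32) and the assumption that all remaining weights are stored in 16 bits are not from the source, so the last number is only an estimate.

```python
GIB = 2**30

total_params = 20.9e9    # total parameters (from the text)
active_params = 3.6e9    # active parameters per token (from the text)
checkpoint_gib = 12.8    # MXFP4 checkpoint size (from the text)

# Fraction of the weights that participate in any single token.
active_fraction = active_params / total_params

# Average storage cost per parameter implied by the checkpoint size.
avg_bits_per_param = checkpoint_gib * GIB * 8 / total_params

# Assumption: MXFP4 weights cost 4.25 bits each and everything else is 16-bit.
MXFP4_BITS, BF16_BITS = 4.25, 16.0
mxfp4_share = (BF16_BITS - avg_bits_per_param) / (BF16_BITS - MXFP4_BITS)

print(f"active fraction:     {active_fraction:.1%}")
print(f"avg bits per param:  {avg_bits_per_param:.2f}")
print(f"implied MXFP4 share: {mxfp4_share:.1%}")
```

Under these assumptions the average lands a little above 4.25 bits per parameter, which is consistent with only the MoE weights being quantized.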

Training followed the same reinforcement learning recipe OpenAI used on o3 and o4-mini: pretraining on a large STEM-heavy corpus through May 2024, then post-training with chain-of-thought RL to produce the variable-effort reasoning behavior OpenAI calls "reasoning levels."

TL;DR

- MoE architecture: 20.9B total parameters, 3.6B active - runs in 16 GB memory with MXFP4 quantization

- 131K context window, three reasoning levels (low/medium/high), full tool-use stack built in

- Matches OpenAI o3-mini on most standard benchmarks; beats it on AIME 2024 and 2025 math

Key Specifications

| Specification | Details |
|---|---|
| Provider | OpenAI |
| Model Family | GPT-OSS |
| Parameters | 20.9B total, 3.6B active (MoE) |
| Architecture | 24-layer MoE transformer, 32 experts, Top-4 routing, GQA with 8 KV heads |
| Context Window | 131,072 tokens (YaRN extension) |
| Max Output | 8,192 tokens |
| Input Price (API) | $0.03/M tokens |
| Output Price (API) | $0.10/M tokens |
| Release Date | August 5, 2025 |
| Knowledge Cutoff | May 31, 2024 |
| License | Apache 2.0 |
| Quantization | MXFP4 (MoE weights) |
| Checkpoint Size | 12.8 GiB |

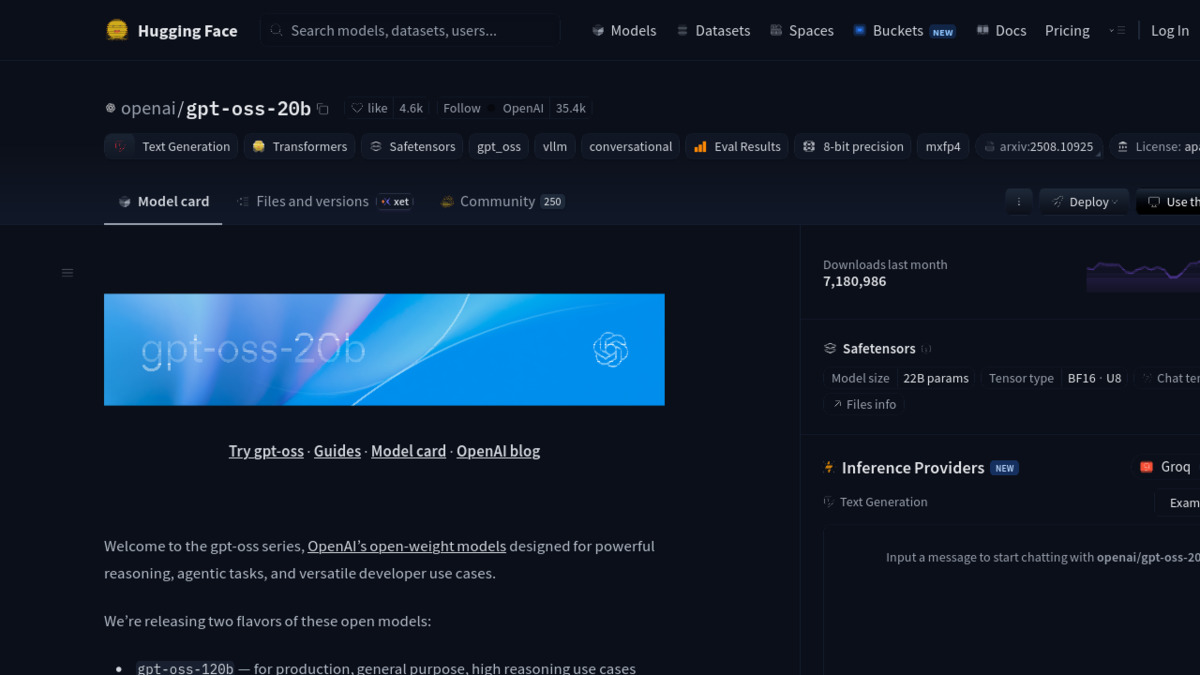

The model page on Hugging Face, where gpt-oss-20b recorded over 7 million downloads in its first month.

Source: huggingface.co


Benchmark Performance

All scores below are at the "high" reasoning level unless noted. Figures come from the official model card (arXiv 2508.10925) and the Artificial Analysis benchmark suite.

| Benchmark | GPT-OSS 20B | o3-mini (high) | DeepSeek R1 |
|---|---|---|---|
| MMLU | 85.3% | ~86% | 90.8% |
| GPQA Diamond (no tools) | 71.5% | 79.7% | 71.5% |
| GPQA Diamond (with tools) | 74.2% | - | - |
| AIME 2024 (with tools) | 96.0% | 90.0% | 87.5% |
| AIME 2025 (with tools) | 98.7% | ~91% | 87.5% |
| SWE-Bench Verified | 60.7% | 49.3% | ~49% |
| Codeforces Elo (with tools) | 2516 | ~1820 | ~2029 |

The math numbers are striking for a 20B model. GPT-OSS 20B scores 98.7% on AIME 2025 - edging out its larger 120B sibling (97.9%) and coming close to the proprietary o3 result. That suggests the RL post-training transfers well to competition math at this scale, and the smaller active parameter count may actually help by forcing tighter reasoning paths.

On SWE-Bench Verified, 60.7% at "high" reasoning puts it well past o3-mini (49.3%) and into territory previously occupied only by much larger closed models. That result aligns with what you'd expect from a model trained with tool-use RL: it's been explicitly optimized to navigate real code repositories, not just to produce plausible patches in isolation. Compare that to the SWE-Bench coding agent leaderboard, where most smaller models cluster under 40%.

GPQA Diamond at 74.2% (with tools) is solid for a 20B model, but the no-tools score of 71.5% only ties DeepSeek R1 and trails o3-mini (79.7%) on knowledge-heavy science tasks. The MMLU gap (85.3% against 90.8% for DeepSeek R1) follows the same pattern: knowledge retrieval tends to scale with parameter count, and 3.6B active weights can't match the breadth of a much larger model.

Key Capabilities

The model ships with three runtime reasoning modes: low, medium, and high. At low effort it behaves like a fast chat model; at high effort it produces extended chains of thought. The trade-off is speed - Artificial Analysis measured 244 tokens per second at high reasoning on shared infrastructure, ranking it fourth fastest among models in its class. For local inference, throughput depends on GPU and quantization choice.
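As a minimal sketch of how a reasoning level might be requested, the helper below builds an OpenAI-style chat payload without sending it. It assumes the serving stack forwards the system message unchanged and that the model reads the level from a `Reasoning: <level>` line there; some servers may expose a separate parameter instead. The function name `build_chat_request` is hypothetical, and the model tag is the Ollama name mentioned later in this article.

```python
import json

REASONING_LEVELS = ("low", "medium", "high")

def build_chat_request(prompt: str, level: str = "medium",
                       model: str = "gpt-oss:20b") -> dict:
    """Build an OpenAI-style chat payload that requests a reasoning level."""
    if level not in REASONING_LEVELS:
        raise ValueError(f"level must be one of {REASONING_LEVELS}")
    return {
        "model": model,
        "messages": [
            # Assumption: the level is read from this system-message line.
            {"role": "system", "content": f"Reasoning: {level}"},
            {"role": "user", "content": prompt},
        ],
    }

payload = build_chat_request("Prove that sqrt(2) is irrational.", level="high")
print(json.dumps(payload, indent=2))
```

Keeping the level as a per-request argument makes it easy to route cheap queries to low effort and reserve high effort for math or multi-step coding tasks.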

Tool use is native, not a wrapper. The model supports function calling with defined schemas, web search, Python execution, and structured output, all integrated through OpenAI's Harmony response format. That format is required for correct multi-turn agentic operation - vLLM, Ollama, LM Studio, SGLang, and the Transformers library all have Harmony support. For anyone building agents locally, this is a meaningful differentiator over models that require external scaffolding for the same capabilities.
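The function-calling flow can be sketched as follows, assuming an OpenAI-compatible chat endpoint that translates to and from Harmony on the server side. This shows the client-side schema and dispatch only, not the Harmony format itself. The `get_weather` tool, its stub result, and the `dispatch` helper are hypothetical and exist purely for illustration.

```python
import json

# Hypothetical tool: the name, description, and parameters are illustrative.
WEATHER_TOOL = {
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Return the current temperature for a city.",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
}

def get_weather(city: str) -> dict:
    # Stub implementation; a real tool would query a weather service.
    return {"city": city, "temperature_c": 21}

HANDLERS = {"get_weather": get_weather}

def dispatch(tool_call: dict) -> dict:
    """Run one tool call and return the message to append to the chat."""
    fn = tool_call["function"]
    args = json.loads(fn["arguments"])  # arguments arrive as a JSON string
    result = HANDLERS[fn["name"]](**args)
    return {
        "role": "tool",
        "tool_call_id": tool_call["id"],
        "content": json.dumps(result),
    }

# A tool call shaped like the ones an OpenAI-compatible server returns.
example_call = {
    "id": "call_0",
    "type": "function",
    "function": {"name": "get_weather", "arguments": '{"city": "Oslo"}'},
}
reply = dispatch(example_call)
print(reply)
```

In a real loop, `WEATHER_TOOL` would be passed in the request's `tools` list and the returned tool message appended to the conversation before asking the model to continue.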

Fine-tuning is supported, including full-parameter fine-tuning. The Apache 2.0 license allows derivative models without restrictions, and OpenAI published a reference implementation with the weights. Several fine-tuned variants appeared within weeks of release, targeting domain-specific coding and instruction following.

Pricing and Availability

The weights are free on Hugging Face under Apache 2.0. OpenAI also hosts the model through its API at $0.03 per million input tokens and $0.10 per million output tokens, which notably undercuts o4-mini. OpenRouter offers a free tier with rate limits.

Third-party inference is available across Azure, AWS Bedrock, Google Cloud, Fireworks AI, Together AI, Groq, Baseten, Databricks, Cloudflare Workers AI, Oracle OCI, and Vercel. For self-hosting, Ollama distributes it as gpt-oss:20b - pulling and running on a 16 GB GPU takes about ten minutes from scratch. The MXFP4 checkpoint means even consumer cards like an RTX 4080 can handle it at full context.
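How much headroom is left after the 12.8 GiB checkpoint depends largely on the KV cache. The estimator below is a rough ceiling, not a measurement: the layer count and KV-head count come from the specification table, while the head dimension of 64 and the 16-bit cache entries are assumptions. It also assumes every layer caches every token, so any sliding-window attention or cache quantization in the serving stack would lower the real figure.

```python
GIB = 2**30

def kv_cache_gib(tokens: int, layers: int = 24, kv_heads: int = 8,
                 head_dim: int = 64, bytes_per_elem: int = 2) -> float:
    """Upper-bound KV-cache size when every layer caches every token."""
    # The factor of 2 covers the separate key and value tensors.
    per_token = 2 * layers * kv_heads * head_dim * bytes_per_elem
    return tokens * per_token / GIB

for ctx in (8_192, 32_768, 131_072):
    print(f"{ctx:>7} tokens: {kv_cache_gib(ctx):.2f} GiB")
```

Grouped-query attention matters here: the cache scales with the 8 KV heads rather than with the full number of query heads.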

At $0.03 input / $0.10 output through the OpenAI API, it's cheaper than every o-series model and cheaper than GPT-4o. For cost-sensitive applications that don't need 200K context or image understanding, the pricing profile is hard to argue with.
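To make the pricing concrete, here is a small cost sketch using the per-token rates quoted above. The workload (10,000 requests a day with 2,000 input and 500 output tokens each) is a hypothetical example, and the sketch assumes reasoning tokens are billed as output, which would raise the output count at higher reasoning levels.

```python
INPUT_PRICE_PER_M = 0.03   # USD per million input tokens (from the text)
OUTPUT_PRICE_PER_M = 0.10  # USD per million output tokens (from the text)

def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one request at the quoted API rates."""
    return (input_tokens * INPUT_PRICE_PER_M
            + output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000

# Hypothetical workload: 10,000 requests/day, 2,000 tokens in, 500 out.
daily = 10_000 * request_cost(2_000, 500)
print(f"${daily:.2f} per day")
```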

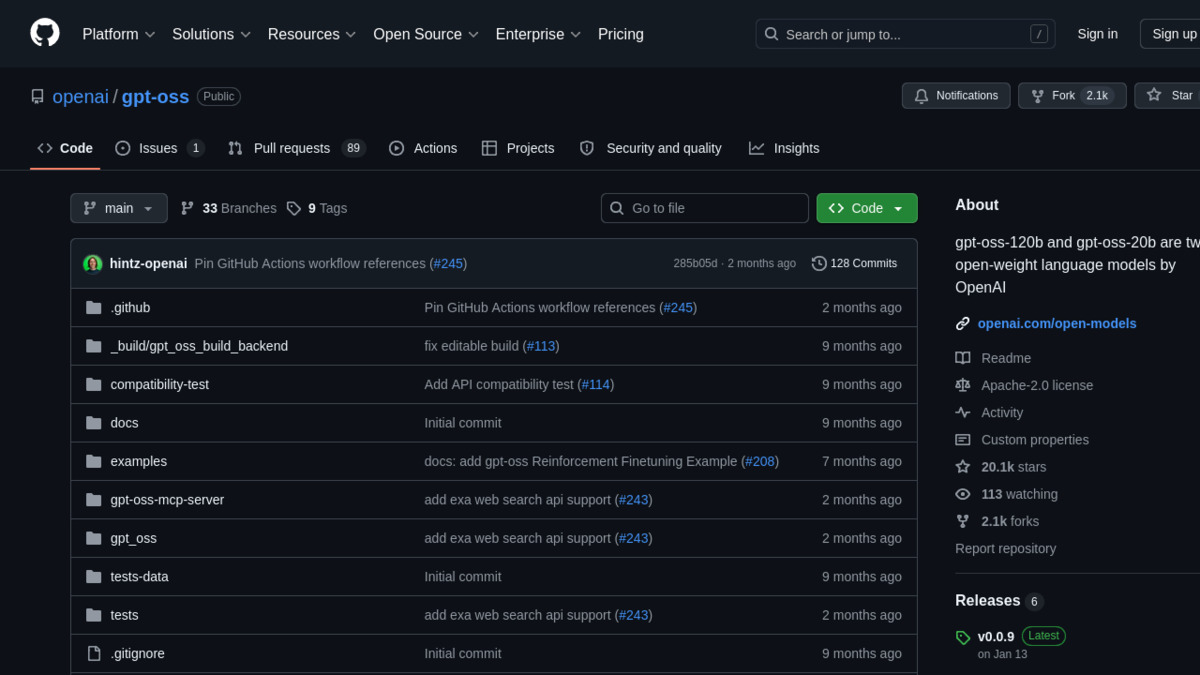

The official GitHub repository at openai/gpt-oss, covering inference setup for vLLM, Ollama, LM Studio, and SGLang.

Source: github.com


Strengths and Weaknesses

Strengths

- AIME 2024/2025 performance beats o3-mini and equals frontier models on competition math

- Runs in 16 GB GPU memory with MXFP4; no special hardware required

- Native tool use (function calling, code execution, web search) without external scaffolding

- Apache 2.0 license with no additional commercial restrictions

- Three reasoning levels let you trade cost for quality at inference time

- SWE-Bench Verified at 60.7% puts it in the top tier among self-hostable models

- 244 tokens/second output throughput - fast for a reasoning model

Weaknesses

- 3.6B active parameters limit knowledge breadth; MMLU trails larger models, and GPQA without tools trails o3-mini

- Knowledge cutoff is May 2024 - over a year behind current frontier models

- Text-only: no image, audio, or video input

- Context window (131K) is smaller than GPT-5, Gemini 3 Pro, and Claude Opus 4.7, which offer 200K+

- Max 8,192 output tokens per turn constrains long-document generation

- Harmony response format is required for full functionality and isn't yet universal across all deployment stacks

Related Coverage

- Open Source LLM Leaderboard - see how GPT-OSS 20B ranks against Qwen, DeepSeek, Llama, and other open-weight models

- Coding Benchmarks Leaderboard - SWE-Bench and Codeforces comparisons across frontier and open models

- Reasoning Benchmarks Leaderboard - AIME and GPQA rankings in context

- OpenAI o3 - the frontier proprietary model that informed GPT-OSS training

- OpenAI o4-mini - the closed API alternative at a higher price point

Sources

- Introducing gpt-oss - OpenAI

- gpt-oss-120b & gpt-oss-20b Model Card (arXiv 2508.10925)

- openai/gpt-oss-20b - Hugging Face

- gpt-oss-20B Analysis - Artificial Analysis

- OpenAI GPT-OSS Benchmarks vs GLM-4.5, Qwen3, DeepSeek, Kimi K2 - Clarifai

- OpenAI gpt-oss Overview - Fireworks AI

- gpt-oss-20b on OpenRouter

- gpt-oss-20b on LM Studio

✓ Last verified May 11, 2026