EXAONE 4.5

LG AI Research's first open-weight vision-language model packs 33B parameters, 262K context, and STEM scores above GPT-5-mini - but ships under a non-commercial license.

Overview

EXAONE 4.5 is LG AI Research's first open-weight vision-language model, released April 9, 2026. The 33B VLM pairs a 1.29B vision encoder with the EXAONE 4.0 32B language backbone, targeting document analysis, STEM reasoning, and Korean-language tasks. For a model roughly one-seventh the size of LG's K-EXAONE flagship, the benchmark numbers are unusually aggressive.

TL;DR

- First open-weight VLM from LG AI Research, tuned for document understanding and STEM reasoning

- 33B params, 262K context, 6 languages, runs on a single H200 or 4x A100-40GB

- Posts a 77.3 STEM average against GPT-5-mini's 73.5 - but the license blocks commercial deployment

The model ships on Hugging Face with a GGUF variant and day-one support for vLLM, SGLang, TensorRT-LLM, and llama.cpp. The weights carry the EXAONE AI Model License Agreement 1.2 - NC, restricted to research, academic, and educational contexts. That caveat is the gap between a "release" and an actual competitor to Gemma 4 or Qwen 3.5-35B-A3B, both under Apache 2.0.

The design bias is industrial. EXAONE Lab head Jinsik Lee has said LG wants "AI that makes real decisions in industrial settings," and the training corpus reflects that: heavy OCR data, interleaved image-text documents, Korean-English bilingual captions, and grounding tasks. It's a document-processing engine that answers STEM questions well, not a general-purpose chatbot.

Key Specifications

| Specification | Details |
|---|---|
| Provider | LG AI Research |
| Model Family | EXAONE |
| Parameters | 33B total (31.7B LLM + 1.29B vision encoder) |
| Context Window | 262,144 tokens |
| Input Price | Free weights, research only, no first-party API |
| Output Price | Free weights, research only, no first-party API |
| Release Date | 2026-04-09 |
| License | EXAONE 1.2 - NC (non-commercial) |
| Languages | Korean, English, Spanish, German, Japanese, Vietnamese |
| Knowledge Cutoff | December 2024 |
| Inference Frameworks | vLLM, SGLang, TensorRT-LLM, llama.cpp |

The architecture is a hybrid-attention transformer: 64 main layers plus one Multi-Token Prediction layer, 40 query heads, 8 KV heads, hidden dimension 5,120. The attention pattern repeats 16 times through a 3:1 block of sliding-window attention (4,096 token window) followed by global attention. Global layers use NoPE; the vision encoder uses 2D RoPE with grouped query attention.
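The layer layout described above can be sketched in a few lines. This is an illustrative model of the pattern, not LG's implementation: the function names are made up, the MTP layer is left out, the head dimension of 128 is an assumption (hidden dimension 5,120 divided by 40 query heads), and the cache estimate assumes BF16 values and an inference engine that keeps only the last 4,096 tokens for sliding-window layers.

```python
# Illustrative sketch of the hybrid-attention layout; names are hypothetical.
SLIDING, GLOBAL = "sliding", "global"


def build_layer_pattern(repeats: int = 16, sliding_per_block: int = 3) -> list[str]:
    """Repeat a block of N sliding-window layers followed by one global layer."""
    block = [SLIDING] * sliding_per_block + [GLOBAL]
    return block * repeats


def kv_cache_bytes(
    pattern: list[str],
    context_tokens: int,
    window: int = 4096,
    kv_heads: int = 8,
    head_dim: int = 128,        # assumption: 5120 hidden / 40 query heads
    bytes_per_value: int = 2,   # assumption: BF16 cache
) -> int:
    """Rough KV-cache size for one sequence, ignoring the MTP layer."""
    per_token_per_layer = 2 * kv_heads * head_dim * bytes_per_value  # keys + values
    total = 0
    for kind in pattern:
        # Global layers cache every token; sliding layers cache at most one window.
        cached = context_tokens if kind == GLOBAL else min(context_tokens, window)
        total += cached * per_token_per_layer
    return total


pattern = build_layer_pattern()
print(len(pattern), pattern.count(GLOBAL))        # 64 16
print(kv_cache_bytes(pattern, 262_144) / 2**30)   # 16.75 (GiB)
```

Under these assumptions the 16 global layers account for 16 GiB of the 16.75 GiB total at full context, which is why the 3:1 sliding-to-global ratio matters for long inputs.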

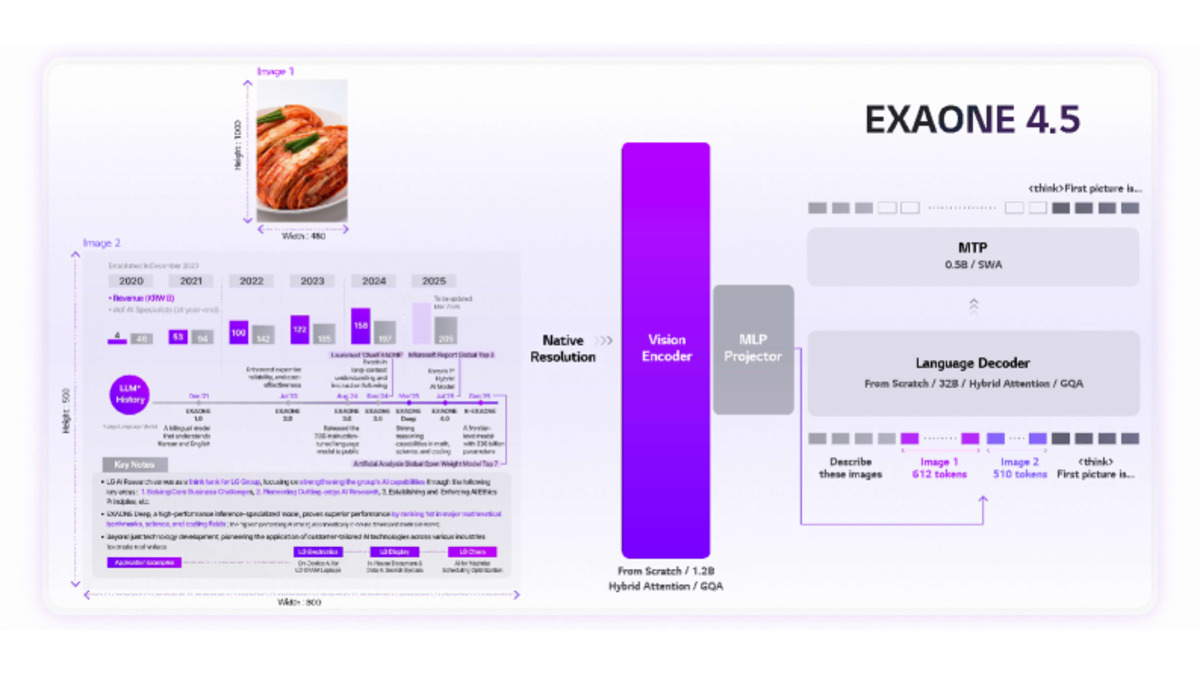

Figure 1 from the EXAONE 4.5 technical report: native-resolution inputs flow through the 1.29B vision encoder, project into the language decoder via an MLP projector, and decode with a Multi-Token Prediction (MTP) head.

Source: arxiv.org

Benchmark Performance

LG published scores across 13 tasks. The headline claim is the STEM average.

| Benchmark | EXAONE 4.5 | GPT-5-mini | Claude 4.5 Sonnet | Qwen-3 235B |
|---|---|---|---|---|
| STEM average (5 tasks) | 77.3 | 73.5 | 74.6 | 77.0 |
| LiveCodeBench v6 | 81.4 | 78.1 | - | - |
| AIME 2025 | 92.9 | 91.1 | - | - |
| GPQA-Diamond | 80.5 | 82.3 | - | - |
| MMLU-Pro | 83.3 | - | - | - |
| MMMU | 78.7 | 79.0 | - | - |
| MMMU-Pro | 68.6 | 67.3 | - | - |
| ChartQA Pro | 62.2 | - | - | - |
| OmniDocBench v1.5 | 81.2 | - | - | - |
| MathVision | 75.2 | - | - | 74.6 |

EXAONE 4.5 edges GPT-5-mini on coding (LiveCodeBench v6 81.4 vs 78.1), competition math (AIME 2025 92.9 vs 91.1), and expert multimodal reasoning (MMMU-Pro 68.6 vs 67.3). GPT-5-mini holds narrow leads on GPQA-Diamond and standard MMMU. MMLU-Pro at 83.3 lands where mid-tier frontier reasoners sit on our MMLU-Pro rankings.
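To make the size of those gaps concrete, the head-to-head margins can be computed directly from the table. The snippet below is just arithmetic on LG's published figures; the `scores` dictionary and `margins` helper are illustrative names.

```python
# (EXAONE 4.5, GPT-5-mini) pairs copied from the benchmark table above.
scores = {
    "STEM average": (77.3, 73.5),
    "LiveCodeBench v6": (81.4, 78.1),
    "AIME 2025": (92.9, 91.1),
    "GPQA-Diamond": (80.5, 82.3),
    "MMMU": (78.7, 79.0),
    "MMMU-Pro": (68.6, 67.3),
}


def margins(pairs: dict[str, tuple[float, float]]) -> dict[str, float]:
    """Positive values mean EXAONE 4.5 leads; negative means GPT-5-mini leads."""
    return {name: round(exaone - gpt, 1) for name, (exaone, gpt) in pairs.items()}


for name, delta in margins(scores).items():
    print(f"{name:18s} {delta:+.1f}")
```

Every margin is under four points in either direction, so independent replication could plausibly reorder several of these rows.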

The LiveCodeBench v6 result matters more than the GPT-5-mini framing. On our coding benchmarks leaderboard, 81.4 edges Gemma 4's 80.0 among open-weight VLMs - and Gemma 4 ships with a license teams can deploy. Against proprietary frontier reasoners like GPT-5.2 or Claude Opus 4.7, EXAONE 4.5 trades blows from a size class below.

AIME 2025 at 92.9% is the standout. A 33B model clearing that - and holding 92.6 on AIME 2026 - suggests hybrid attention plus the inherited reasoning mode is doing real work. These are LG's in-house numbers; independent evaluations haven't landed.

Key Capabilities

EXAONE 4.5 is built for document work. OmniDocBench v1.5 at 81.2 covers OCR, table parsing, and structured document reasoning - the workflows LG targets for contracts, financial statements, and technical drawings. ChartQA Pro at 62.2 handles multi-axis visualizations that trip smaller vision models. The model accepts native-resolution image input instead of fixed tiles, which helps on dense pages.

Korean capability is the second pillar. A K-AUT cultural-context module plus Korean-specific training drive scores like KRETA 91.9. For non-Korean users this doesn't matter much, but it's why LG built the model - the broader K-EXAONE program sits inside South Korea's Proprietary AI Foundation Model project, which funnels state GPU resources to teams that clear evaluation gates.

The 262K context is the third differentiator: a single call can hold about 200 pages of dense text, or long documents combined with high-resolution images. Reasoning and non-reasoning modes toggle through enable_thinking. LG recommends temperature=1.0, top_p=0.95, presence_penalty=1.5, with temperature dropped to 0.6 for OCR and Korean tasks.
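A minimal sketch of how those settings might be packaged for an OpenAI-compatible chat endpoint such as the one vLLM exposes. Several things here are assumptions rather than documented behavior: the `build_request` helper and task labels are hypothetical, the model ID is a placeholder, passing `enable_thinking` through `chat_template_kwargs` follows the convention vLLM uses for other models, and `top_p` and `presence_penalty` are assumed to stay unchanged when the temperature is lowered.

```python
LOW_TEMPERATURE_TASKS = {"ocr", "korean"}  # hypothetical task labels


def build_request(
    prompt: str,
    task: str = "general",
    thinking: bool = True,
    model: str = "EXAONE-4.5-33B",  # placeholder model ID
) -> dict:
    """Build a chat-completions payload using LG's recommended sampling values."""
    temperature = 0.6 if task in LOW_TEMPERATURE_TASKS else 1.0
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": temperature,
        "top_p": 0.95,
        "presence_penalty": 1.5,
        # Assumption: the serving stack forwards this to the chat template.
        "chat_template_kwargs": {"enable_thinking": thinking},
    }


general = build_request("Prove that the sum of two even numbers is even.")
ocr = build_request("Transcribe the table in this scan.", task="ocr", thinking=False)
print(general["temperature"], ocr["temperature"])  # 1.0 0.6
```

Check the model card for the exact toggle mechanism before relying on this shape; the payload only illustrates which knobs the recommendation covers.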

Pricing and Availability

There is no EXAONE 4.5 API from LG. Weights distribute through Hugging Face, with a GGUF repository offering Q8_0, Q6_K, Q5_K_M, Q4_K_M, and IQ4_XS variants plus BF16.

The license is EXAONE AI Model License Agreement 1.2 - NC. It permits evaluation, academic research, coursework, benchmarking, and non-commercial experimentation. It doesn't permit commercial use of the model, outputs, or derivatives. Fine-tuning for commercial deployment, hosting paid inference, and shipping products with EXAONE 4.5 in the stack all need a separate agreement with LG.

Running EXAONE 4.5 at the full 262K-token context needs a single H200 GPU or four A100-40GB cards with tensor parallelism. Practical deployment sits in data-center territory regardless of the "open weight" framing.

Source: unsplash.com

Hardware is non-trivial. A single H200 (141GB HBM3e) handles full context, but H200s trade at $30,000 to $40,000. The four-A100-40GB alternative runs $8,000 to $12,000 per card. Quantized GGUF variants cut memory hard - IQ4_XS fits LLM weights under 20GB - but full-context multimodal inference wants professional hardware.
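A back-of-the-envelope check on the weight footprint, as a rough sketch. The bits-per-weight figures are nominal values for each format (real GGUF files keep some tensors at higher precision, so actual downloads run somewhat larger), and the estimate covers weights only, not the KV cache or activations.

```python
# Nominal bits per weight; treat these as approximations, not file sizes.
BITS_PER_WEIGHT = {"BF16": 16.0, "Q8_0": 8.5, "IQ4_XS": 4.25}


def weight_gb(params_billion: float, fmt: str) -> float:
    """Approximate weight memory in GB (10^9 bytes) for a given format."""
    return params_billion * BITS_PER_WEIGHT[fmt] / 8


print(f"Full model, BF16:   {weight_gb(33.0, 'BF16'):.1f} GB")
print(f"LLM only, Q8_0:     {weight_gb(31.7, 'Q8_0'):.1f} GB")
print(f"LLM only, IQ4_XS:   {weight_gb(31.7, 'IQ4_XS'):.1f} GB")
```

At BF16 the full 33B model needs about 66 GB for weights alone, which is more than a single 40GB A100 holds and explains the four-card tensor-parallel configuration. The IQ4_XS estimate of roughly 17 GB for the 31.7B language model is consistent with the "under 20GB" figure.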

Compare the license to Gemma 4's Apache 2.0 (Gemma 4 has a review on our site). The "open weight" label is accurate in that the weights download freely, but the model isn't open in the way buyers usually assume: commercial use still requires a separate agreement with LG.

Strengths and Weaknesses

Strengths

- Top-tier open-weight scores on LiveCodeBench v6 (81.4) and AIME 2025 (92.9) for its size class

- 262K context is among the longest on any open-weight VLM

- Native-resolution vision encoder handles dense documents without aggressive downsampling

- Day-one vLLM, SGLang, TensorRT-LLM, and llama.cpp support plus official GGUFs

- OCR and document reasoning (OmniDocBench 81.2, ChartQA Pro 62.2) make it a credible document engine

Weaknesses

- Non-commercial license blocks production deployment without a custom LG agreement

- Knowledge cutoff of December 2024 is 16 months stale at release

- Benchmark comparisons chosen by LG point at GPT-5-mini, not GPT-5.4 or Claude Opus 4.7

- No first-party API; teams own their inference stack

- Hardware floor of one H200 or four A100-40GBs puts deployment outside consumer reach

Related Coverage

- Launch coverage: EXAONE 4.5: LG's Open VLM Beats GPT-5-mini on STEM

- Closest deployable open-weight peer: Gemma 4 (Apache 2.0)

- Comparable size-class MoE peer: Qwen 3.5-35B-A3B

- Rankings: coding benchmarks, MMLU-Pro, math olympiad AI

FAQ

Is EXAONE 4.5 open source?

Weights are freely downloadable, but the license is non-commercial (EXAONE 1.2 - NC). Research, academic, and educational use is permitted; commercial deployment needs a separate agreement with LG AI Research.

What hardware does EXAONE 4.5 need?

The full 262K-token context runs on a single NVIDIA H200 or four A100-40GB cards with tensor parallelism. GGUF variants (IQ4_XS, Q4_K_M) cut memory for text-only workloads.

How does EXAONE 4.5 compare to GPT-5-mini?

On LG's benchmarks, EXAONE 4.5 leads on STEM average (77.3 vs 73.5), LiveCodeBench v6 (81.4 vs 78.1), and AIME 2025 (92.9 vs 91.1). GPT-5-mini leads narrowly on GPQA-Diamond and MMMU.

Does EXAONE 4.5 support Korean?

Yes, with a K-AUT cultural-context module and category-leading Korean scores (KRETA 91.9). Supported languages: Korean, English, Spanish, German, Japanese, Vietnamese.

Can I deploy EXAONE 4.5 in production?

Not under the standard license. EXAONE 1.2 - NC blocks commercial use of the model, its outputs, and its derivatives, including paid inference endpoints. LG offers commercial licensing on request.

Sources

- EXAONE-4.5-33B official model card on Hugging Face

- EXAONE-4.5-33B-GGUF quantized release

- EXAONE 4.5 Technical Report (arXiv 2604.08644)

- LG AI Research official announcement (PR Newswire)

- LG says new AI beats GPT-5 mini in visual tests (Korea Herald)

- LG Reveals Next-Gen Multimodal AI EXAONE 4.5 (Korea Herald)

- LG AI Research organization on Hugging Face

- EXAONE 4.0 repository (GitHub)

- LG AI Research

Last updated

✓ Last verified April 21, 2026