Switching from AWS Bedrock to Azure OpenAI

A developer's guide to migrating from AWS Bedrock to Azure OpenAI Service, covering SDK changes, model mapping, pricing differences, and authentication gotchas.

TL;DR

- Yes, you can switch - but expect to rewrite your inference code since the SDKs are completely different (boto3 vs openai Python package)

- Model mapping isn't one-to-one: Bedrock offers Claude, Llama, Mistral, and Amazon's Nova; Azure OpenAI limits you to the OpenAI family (GPT-5, GPT-4o, o3/o4-mini)

- Azure OpenAI uses the familiar openai Python library, which simplifies the migration if your team already knows the OpenAI API

- Medium difficulty, roughly 1-2 weeks for a production codebase depending on how many Bedrock-specific features you use

Why Switch to Azure OpenAI

Most teams moving from Bedrock to Azure OpenAI do it for one of three reasons: they need exclusive access to OpenAI's latest models, their organization is already deep in the Microsoft ecosystem, or they want tighter integration with Microsoft 365 and Microsoft Entra ID (formerly Azure Active Directory).

AWS Bedrock's strength is model diversity. It pools over 40 foundation models from Anthropic, Meta, Mistral, Cohere, DeepSeek, and Amazon's own Nova lineup, all behind a unified API. Azure OpenAI takes the opposite approach - it curates a smaller set of models, all from OpenAI, but gives you early and often exclusive access to the GPT-5 series, GPT-4.1, o3, o4-mini, and specialized variants like GPT-5.4 that aren't available on Bedrock.

If your workloads rely heavily on Claude or Llama 4, switching to Azure OpenAI means losing access to those models entirely. But if GPT-class models are your primary need and you want Microsoft's compliance stack (FedRAMP High, DoD IL5/IL6, HIPAA BAA built in), Azure OpenAI is a strong fit.

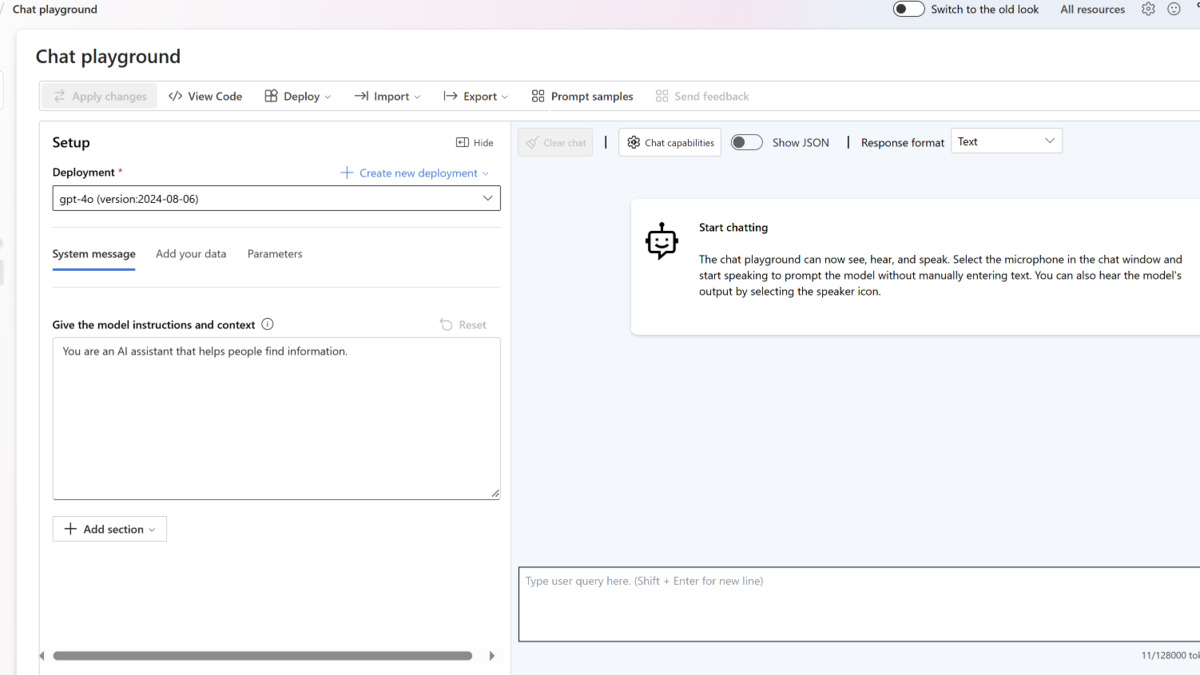

Azure OpenAI's chat playground in the Microsoft Foundry portal, where you can test deployments and configure parameters before writing code.

Source: learn.microsoft.com


Feature Parity Table

| Feature | AWS Bedrock | Azure OpenAI | Notes |
|---|---|---|---|
| Chat completions | Converse API (client.converse()) | Chat Completions API (client.chat.completions.create()) | Different SDK, similar concepts |
| Streaming | ConverseStream | stream=True parameter | Both stream incrementally; Bedrock uses the AWS event stream format, Azure OpenAI uses SSE |
| Function/tool calling | Converse API toolConfig | tools[] parameter (OpenAI format) | Different schema structure |
| Image input | Supported via Converse API | Supported via image_url in messages | Both handle vision tasks |
| Embeddings | Cohere Embed, Amazon Titan Embed | text-embedding-3-large/small, Ada | Different model families |
| Image generation | Amazon Nova Canvas, Stability AI | DALL-E 3 | Bedrock has more options |
| Model variety | 40+ models from 10+ providers | OpenAI models only | Bedrock wins on diversity |
| Fine-tuning | Supported for select models | Supported for GPT-4o, GPT-4o-mini | Both offer customization |
| Authentication | AWS IAM + SigV4 | Azure AD (Entra ID) or API key | Completely different auth systems |
| Content filtering | Guardrails (configurable) | Built-in content filters | Azure filters are on by default |
| Batch inference | 50% discount, async | Batch API, 50% discount, 24h window | Similar economics |
| Provisioned throughput | Provisioned Throughput (purchased in model units) | Provisioned Throughput Units (PTUs) | Both offer reserved capacity |

SDK and API Mapping

This is where the migration gets hands-on. Bedrock uses AWS's boto3 SDK with the bedrock-runtime service client. Azure OpenAI uses the standard openai Python package with an AzureOpenAI (or just OpenAI) client class pointed at your Azure endpoint.

Basic Chat Completion

Before (AWS Bedrock):

    import boto3

    client = boto3.client("bedrock-runtime", region_name="us-east-1")

    response = client.converse(
        modelId="anthropic.claude-sonnet-4-6",
        messages=[
            {
                "role": "user",
                "content": [{"text": "What is cloud computing?"}],
            }
        ],
        inferenceConfig={"maxTokens": 512, "temperature": 0.7},
    )

    # The reply text sits inside nested dictionaries.
    print(response["output"]["message"]["content"][0]["text"])

After (Azure OpenAI):

    import os

    from openai import OpenAI

    client = OpenAI(
        api_key=os.getenv("AZURE_OPENAI_API_KEY"),
        base_url="https://YOUR-RESOURCE.openai.azure.com/openai/v1/",
    )

    response = client.chat.completions.create(
        model="gpt-5",  # your deployment name
        messages=[
            {"role": "system", "content": "You are a helpful assistant."},
            {"role": "user", "content": "What is cloud computing?"},
        ],
        # GPT-5 is a reasoning model: it takes max_completion_tokens instead of
        # max_tokens and accepts only the default temperature. On a GPT-4o or
        # GPT-4.1 deployment, max_tokens=512 and temperature=0.7 work as usual.
        max_completion_tokens=512,
    )

    print(response.choices[0].message.content)

Notice the structural differences. Bedrock wraps message content in a list of typed objects ([{"text": "..."}]), while Azure OpenAI uses the flat OpenAI format ("content": "..."). Bedrock puts inference parameters under inferenceConfig; Azure OpenAI puts them as top-level parameters on the API call.
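The message reshaping described above can be sketched as a small helper. This is a minimal illustration, not part of either SDK: the function name `converse_to_openai_messages` and the `system_prompt` argument are assumptions, and only plain text blocks are handled.

```python
def converse_to_openai_messages(messages, system_prompt=None):
    """Flatten Bedrock Converse-style messages into OpenAI chat messages.

    Only {"text": ...} blocks are handled; image, document, and tool blocks
    are outside the scope of this sketch and raise an error.
    """
    converted = []
    if system_prompt:
        converted.append({"role": "system", "content": system_prompt})
    for message in messages:
        texts = []
        for block in message["content"]:
            if "text" not in block:
                raise ValueError(f"unsupported content block: {sorted(block)}")
            texts.append(block["text"])
        # Join multiple text blocks into the single string OpenAI expects.
        converted.append({"role": message["role"], "content": "\n".join(texts)})
    return converted


bedrock_messages = [
    {"role": "user", "content": [{"text": "What is cloud computing?"}]},
]
openai_messages = converse_to_openai_messages(
    bedrock_messages, system_prompt="You are a helpful assistant."
)
```

Raising on unknown block types is a deliberate choice here: silently dropping an image or tool result during migration would be harder to spot than a loud failure.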

Migrating inference code requires rewriting client initialization, message formatting, and response parsing.

Source: pexels.com


Streaming Response

Before (AWS Bedrock):

    response = client.converse_stream(
        modelId="anthropic.claude-sonnet-4-6",
        messages=[{"role": "user", "content": [{"text": "Explain Docker."}]}],
        inferenceConfig={"maxTokens": 1024},
    )

    # Each event is a dict keyed by its event type.
    for event in response["stream"]:
        if "contentBlockDelta" in event:
            print(event["contentBlockDelta"]["delta"]["text"], end="")

After (Azure OpenAI):

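    stream = client.chat.completions.create(
        model="gpt-5",
        messages=[{"role": "user", "content": "Explain Docker."}],
        max_completion_tokens=1024,  # GPT-5 deployments reject max_tokens
        stream=True,
    )

    for chunk in stream:
        # Azure can send chunks with an empty choices list (for example, the
        # content filter results), so check before indexing.
        if chunk.choices and chunk.choices[0].delta.content:
            print(chunk.choices[0].delta.content, end="")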

The streaming APIs differ substantially. Bedrock sends events wrapped in a dictionary with event type keys (contentBlockDelta, messageStop). Azure OpenAI streams typed chunk objects with a .choices[0].delta.content accessor. If you're using LiteLLM or LangChain as an abstraction layer, the migration is simpler since these libraries handle both backends - check our LangChain to LlamaIndex migration guide for framework-level switching patterns.
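If you are not using an abstraction library, one way to keep downstream code unchanged is to reduce both stream shapes to plain text fragments. The sketch below assumes the event and chunk shapes described above; the function names are hypothetical, and the fake events and chunks are stand-ins so the example runs without either SDK.

```python
from types import SimpleNamespace


def bedrock_text_deltas(events):
    """Yield text fragments from Converse stream events (plain dicts)."""
    for event in events:
        delta = event.get("contentBlockDelta", {}).get("delta", {})
        if "text" in delta:
            yield delta["text"]


def openai_text_deltas(chunks):
    """Yield text fragments from chat completion chunks (typed objects)."""
    for chunk in chunks:
        # Skip chunks with no choices or no text content.
        if chunk.choices and chunk.choices[0].delta.content:
            yield chunk.choices[0].delta.content


# Stand-in data shaped like each provider's stream.
fake_bedrock_events = [
    {"messageStart": {"role": "assistant"}},
    {"contentBlockDelta": {"delta": {"text": "Hel"}, "contentBlockIndex": 0}},
    {"contentBlockDelta": {"delta": {"text": "lo"}, "contentBlockIndex": 0}},
    {"messageStop": {"stopReason": "end_turn"}},
]
fake_openai_chunks = [
    SimpleNamespace(choices=[]),
    SimpleNamespace(choices=[SimpleNamespace(delta=SimpleNamespace(content="Hel"))]),
    SimpleNamespace(choices=[SimpleNamespace(delta=SimpleNamespace(content="lo"))]),
    SimpleNamespace(choices=[SimpleNamespace(delta=SimpleNamespace(content=None))]),
]

bedrock_text = "".join(bedrock_text_deltas(fake_bedrock_events))
openai_text = "".join(openai_text_deltas(fake_openai_chunks))
```

In real code you would pass `response["stream"]` to the first function and the object returned by `chat.completions.create(..., stream=True)` to the second.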

Tool Calling

Before (AWS Bedrock - Converse API):

    tool_config = {
        "tools": [
            {
                "toolSpec": {
                    "name": "get_weather",
                    "description": "Get current weather for a city",
                    "inputSchema": {
                        "json": {
                            "type": "object",
                            "properties": {
                                "city": {"type": "string", "description": "City name"}
                            },
                            "required": ["city"],
                        }
                    },
                }
            }
        ]
    }

    response = client.converse(
        modelId="anthropic.claude-sonnet-4-6",
        messages=[{"role": "user", "content": [{"text": "Weather in London?"}]}],
        toolConfig=tool_config,
    )

After (Azure OpenAI):

    tools = [
        {
            "type": "function",
            "function": {
                "name": "get_weather",
                "description": "Get current weather for a city",
                "parameters": {
                    "type": "object",
                    "properties": {
                        "city": {"type": "string", "description": "City name"}
                    },
                    "required": ["city"],
                },
            },
        }
    ]

    response = client.chat.completions.create(
        model="gpt-5",
        messages=[{"role": "user", "content": "Weather in London?"}],
        tools=tools,
    )

The tool schemas look similar but nest differently. Bedrock uses toolSpec with inputSchema.json; Azure OpenAI uses function with parameters. You'll need to restructure your tool definitions during migration.
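Because the JSON Schema itself carries over unchanged, the restructuring can be automated. The helper below is a sketch under that assumption; `converse_tools_to_openai` is a hypothetical name, and it only covers the `toolSpec` fields shown in the examples above.

```python
def converse_tools_to_openai(tool_config):
    """Convert a Bedrock Converse toolConfig into an OpenAI-style tools list."""
    tools = []
    for entry in tool_config["tools"]:
        spec = entry["toolSpec"]
        tools.append(
            {
                "type": "function",
                "function": {
                    "name": spec["name"],
                    "description": spec.get("description", ""),
                    # The schema under inputSchema.json moves to "parameters" as-is.
                    "parameters": spec["inputSchema"]["json"],
                },
            }
        )
    return tools


weather_tool_config = {
    "tools": [
        {
            "toolSpec": {
                "name": "get_weather",
                "description": "Get current weather for a city",
                "inputSchema": {
                    "json": {
                        "type": "object",
                        "properties": {
                            "city": {"type": "string", "description": "City name"}
                        },
                        "required": ["city"],
                    }
                },
            }
        }
    ]
}
openai_tools = converse_tools_to_openai(weather_tool_config)
```

The result can be passed as the `tools` argument to `chat.completions.create()`. Parsing the model's tool-call response is a separate change and is not covered by this sketch.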

Model Availability

One of the biggest trade-offs in this migration is model access. AWS Bedrock and Azure OpenAI overlap on zero models - they serve completely different provider ecosystems.

| Use Case | AWS Bedrock Option | Azure OpenAI Equivalent |
|---|---|---|
| Flagship reasoning | Claude Opus 4.6 | GPT-5.4 |
| Fast general purpose | Claude Sonnet 4.6, Nova Pro | GPT-5, GPT-4o |
| Cost-efficient | Claude Haiku 4.5, Nova Micro | GPT-5-mini, GPT-5-nano |
| Code generation | DeepSeek V3.2, Claude Sonnet | GPT-5.4, GPT-4.1 |
| Embeddings | Cohere Embed v4, Amazon Titan | text-embedding-3-large/small |
| Image generation | Nova Canvas, Stable Diffusion 3.5 | DALL-E 3 |
| Open-source models | Llama 4, Mistral Large 3, Qwen 3 | Not available |

If you currently use Claude through Bedrock and want to keep using it, Azure OpenAI isn't the right destination. Consider the Anthropic API directly instead.
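During the migration it can help to make the model mapping explicit in code, so that any call site still pointing at an unmapped Bedrock model fails loudly. A minimal sketch follows; the deployment name `gpt-5-general` and the unmapped model ID in the test call are hypothetical placeholders, and the mapping itself is whatever your team decides from the table above.

```python
# Bedrock model ID -> Azure OpenAI deployment name (deployment names are
# chosen by you at deploy time; "gpt-5-general" is a made-up example).
DEPLOYMENT_FOR_BEDROCK_MODEL = {
    "anthropic.claude-sonnet-4-6": "gpt-5-general",
}


def resolve_deployment(bedrock_model_id):
    """Return the Azure deployment that replaces a Bedrock model ID."""
    try:
        return DEPLOYMENT_FOR_BEDROCK_MODEL[bedrock_model_id]
    except KeyError:
        raise LookupError(
            f"no Azure OpenAI deployment mapped for {bedrock_model_id!r}"
        ) from None


deployment = resolve_deployment("anthropic.claude-sonnet-4-6")
```

Models with no Azure OpenAI counterpart, such as the open-source entries in the table, simply stay out of the mapping and surface as a `LookupError`.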

Pricing Comparison

Both platforms charge per token on a pay-as-you-go basis, but the specific rates and model tiers differ. The table below prices a sample workload of 10 million input tokens and 2 million output tokens per month at each tier:

| Model Tier | AWS Bedrock (per 1M tokens) | Azure OpenAI (per 1M tokens) | Monthly Cost (10M in / 2M out) |
|---|---|---|---|
| Flagship | Claude Opus 4.6: ~$15 in / $75 out | GPT-5.4: ~$15 in / $120 out | Bedrock: $300 / Azure: $390 |
| Mid-tier | Claude Sonnet 4.6: ~$6 in / $30 out | GPT-5: $1.25 in / $10 out | Bedrock: $120 / Azure: $32.50 |
| Budget | Nova Micro: $0.035 in / $0.14 out | GPT-5-nano: $0.05 in / $0.40 out | Bedrock: $0.63 / Azure: $1.30 |

The mid-tier comparison is striking. Azure OpenAI's GPT-5 is substantially cheaper per token than Claude Sonnet on Bedrock, though model quality varies by task. At the budget tier, Amazon Nova Micro undercuts everything. For detailed pricing breakdowns across more providers, see our LLM API pricing comparison.
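The monthly figures are straightforward arithmetic on the per-million rates, which makes it easy to rerun the comparison for your own volumes. The sketch below reuses the rates from the table; the function name `monthly_cost` is an assumption, and it ignores caching, batch discounts, and provisioned capacity.

```python
def monthly_cost(input_tokens, output_tokens, input_rate, output_rate):
    """Return the monthly bill in dollars, given per-1M-token rates."""
    cost = (input_tokens / 1_000_000) * input_rate
    cost += (output_tokens / 1_000_000) * output_rate
    return round(cost, 2)


# Sample workload from the table: 10M input tokens, 2M output tokens.
IN_TOKENS, OUT_TOKENS = 10_000_000, 2_000_000

sonnet_on_bedrock = monthly_cost(IN_TOKENS, OUT_TOKENS, 6.00, 30.00)
gpt5_on_azure = monthly_cost(IN_TOKENS, OUT_TOKENS, 1.25, 10.00)
nova_micro = monthly_cost(IN_TOKENS, OUT_TOKENS, 0.035, 0.14)
gpt5_nano = monthly_cost(IN_TOKENS, OUT_TOKENS, 0.05, 0.40)
```

With these inputs the four values come out to 120.0, 32.5, 0.63, and 1.3, matching the mid-tier and budget rows.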

Both platforms offer prompt caching to reduce costs on repeated context. Bedrock charges 1.25x for cache writes and 0.1x for cache reads; Azure OpenAI offers cached input tokens at roughly 90% discount on select models.
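To get a feel for what those multipliers mean, here is a rough input-cost estimate using the Bedrock figures quoted above (1.25x for writes, 0.1x for reads). The workload is hypothetical: 1,000 requests that share an 8,000-token prefix and add 500 unique tokens each, priced at the table's $6 per million input rate. It also assumes the cache stays warm for every request after the first, which real cache expiry may not guarantee.

```python
def input_cost_with_cache(
    rate_per_million,
    uncached_tokens,
    cache_write_tokens=0,
    cache_read_tokens=0,
    write_multiplier=1.25,
    read_multiplier=0.10,
):
    """Estimate input cost in dollars when part of the prompt is cached."""
    weighted_tokens = (
        uncached_tokens
        + cache_write_tokens * write_multiplier
        + cache_read_tokens * read_multiplier
    )
    return round(weighted_tokens / 1_000_000 * rate_per_million, 4)


REQUESTS, PREFIX, UNIQUE = 1_000, 8_000, 500

# No caching: every request pays full price for prefix and unique tokens.
without_cache = input_cost_with_cache(6.00, REQUESTS * (PREFIX + UNIQUE))

# With caching: the first request writes the prefix, the other 999 read it.
with_cache = input_cost_with_cache(
    6.00,
    uncached_tokens=REQUESTS * UNIQUE,
    cache_write_tokens=PREFIX,
    cache_read_tokens=(REQUESTS - 1) * PREFIX,
)
```

Under these assumptions the input bill drops from 51.0 to 7.8552 dollars. For Azure OpenAI, a similar estimate would use a read multiplier of roughly 0.10 on eligible models, per the discount mentioned above.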

Authentication and Deployment

This is the migration step that often takes longest because it involves infrastructure changes, not just code.

Step 1 - Set Up Azure OpenAI Resource

- Create an Azure OpenAI resource in the Azure portal

- Deploy your chosen model (GPT-5, GPT-4o, etc.) through the Microsoft Foundry portal at ai.azure.com

- Note your endpoint URL and either create an API key or configure Microsoft Entra ID authentication

Step 2 - Update Authentication

Bedrock uses AWS IAM credentials with SigV4 signing, usually through environment variables (AWS_ACCESS_KEY_ID, AWS_SECRET_ACCESS_KEY) or IAM roles. Azure OpenAI supports two authentication methods:

API Key (simpler):

    import os

    from openai import OpenAI

    client = OpenAI(
        api_key=os.getenv("AZURE_OPENAI_API_KEY"),
        base_url="https://YOUR-RESOURCE.openai.azure.com/openai/v1/",
    )

Microsoft Entra ID (recommended for production):

    from azure.identity import DefaultAzureCredential, get_bearer_token_provider
    from openai import OpenAI

    # The provider fetches and refreshes Entra ID tokens on demand.
    token_provider = get_bearer_token_provider(
        DefaultAzureCredential(), "https://ai.azure.com/.default"
    )

    client = OpenAI(
        base_url="https://YOUR-RESOURCE.openai.azure.com/openai/v1/",
        api_key=token_provider,
    )

Step 3 - Update Dependencies

Remove boto3 (or at least the Bedrock-specific usage) and add the openai package. If using Entra ID, also add azure-identity:

    pip install openai azure-identity

Step 4 - Rewrite Inference Calls

Using the code examples above, update every converse() or invoke_model() call to chat.completions.create(). The response parsing also changes - Bedrock returns nested dictionaries while Azure OpenAI returns typed Python objects.
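One mechanical part of this step is renaming the inference parameters. The sketch below maps the common Converse `inferenceConfig` fields to their Chat Completions keyword arguments; the helper name and the `reasoning_model` flag are assumptions. The flag reflects that reasoning-model deployments generally expect `max_completion_tokens` and reject sampling overrides, so verify the behavior against the model you actually deploy.

```python
# Converse inferenceConfig field -> Chat Completions keyword argument.
_PARAM_MAP = {
    "maxTokens": "max_tokens",
    "temperature": "temperature",
    "topP": "top_p",
    "stopSequences": "stop",
}


def inference_config_to_kwargs(inference_config, reasoning_model=False):
    """Translate a Bedrock inferenceConfig dict into top-level OpenAI kwargs."""
    kwargs = {}
    for key, value in inference_config.items():
        if key not in _PARAM_MAP:
            raise KeyError(f"no mapping for inferenceConfig field {key!r}")
        kwargs[_PARAM_MAP[key]] = value
    if reasoning_model:
        # Rename the token limit and drop sampling parameters.
        if "max_tokens" in kwargs:
            kwargs["max_completion_tokens"] = kwargs.pop("max_tokens")
        kwargs.pop("temperature", None)
        kwargs.pop("top_p", None)
    return kwargs


standard_kwargs = inference_config_to_kwargs({"maxTokens": 512, "temperature": 0.7})
reasoning_kwargs = inference_config_to_kwargs(
    {"maxTokens": 512, "temperature": 0.7}, reasoning_model=True
)
```

The resulting dict can be splatted into the call, for example `client.chat.completions.create(model=..., messages=..., **standard_kwargs)`.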

Step 5 - Update Monitoring

Replace CloudWatch metrics and logging with Azure Monitor. If you're using Bedrock Guardrails for content filtering, note that Azure OpenAI has built-in content filters enabled by default, which you can customize but not fully disable.

Known Gotchas

Model deployment is required. Unlike Bedrock where you simply reference a model ID, Azure OpenAI requires you to explicitly deploy each model before calling it. The model parameter in API calls refers to your deployment name, not the model name itself.

Content filters are on by default. Azure OpenAI applies content filtering to all requests. Some prompts that worked on Bedrock may get blocked. You can adjust severity thresholds through the Azure portal but can't remove filters entirely without applying for a managed customer exemption.
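When a completion is cut off by the filter, the choice's `finish_reason` is set to `content_filter`, so it is worth checking for that during migration testing. The helper below is a small sketch with a hypothetical name, using a stand-in response object so it runs without the SDK. Prompts blocked on input are generally rejected with an HTTP 400 error instead, which this sketch does not cover.

```python
from types import SimpleNamespace


def filtered_choice_indexes(response):
    """Return the indexes of choices that the content filter truncated."""
    return [
        choice.index
        for choice in response.choices
        if choice.finish_reason == "content_filter"
    ]


# Stand-in for a chat completion response with two choices.
fake_response = SimpleNamespace(
    choices=[
        SimpleNamespace(index=0, finish_reason="stop"),
        SimpleNamespace(index=1, finish_reason="content_filter"),
    ]
)
blocked = filtered_choice_indexes(fake_response)
```

Logging these cases while replaying your existing Bedrock prompts gives an early list of prompts that need rewording or a filter threshold change.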

No multi-provider access. On Bedrock, you could call Claude for reasoning, Llama for code, and Nova for cheap tasks in the same codebase. Azure OpenAI only serves OpenAI models. If you need model diversity, you'll need additional providers.

Region availability differs. Not all Azure regions offer all models. GPT-5.4 and the latest reasoning models may only be available in specific regions. Check Azure's model availability page before choosing your region.

Quota and rate limits need pre-approval. Azure OpenAI assigns default tokens-per-minute quotas that are often lower than Bedrock's defaults. For production workloads, you'll likely need to request quota increases through the Azure portal.
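Until a quota increase comes through, retrying rate-limited calls with exponential backoff is a common stopgap. The sketch below is generic rather than Azure-specific: `call_with_backoff` and the `RateLimited` exception are hypothetical stand-ins, and the demo records delays instead of sleeping.

```python
import random
import time


class RateLimited(Exception):
    """Stand-in for a provider's HTTP 429 error (hypothetical)."""


def call_with_backoff(fn, is_rate_limited, max_attempts=5, base_delay=1.0,
                      sleep=time.sleep):
    """Call fn(), retrying with exponential backoff plus jitter on rate limits."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except Exception as exc:
            last_attempt = attempt == max_attempts - 1
            if last_attempt or not is_rate_limited(exc):
                raise
            # Delay doubles each attempt; jitter spreads out concurrent retries.
            sleep(base_delay * 2 ** attempt + random.uniform(0, base_delay))


attempts = []
delays = []


def flaky_request():
    """Fail twice with a rate-limit error, then succeed."""
    attempts.append(1)
    if len(attempts) < 3:
        raise RateLimited("429 Too Many Requests")
    return "ok"


result = call_with_backoff(
    flaky_request,
    is_rate_limited=lambda exc: isinstance(exc, RateLimited),
    sleep=delays.append,  # record delays instead of sleeping in this demo
)
```

With the openai package, the predicate would typically be `lambda exc: isinstance(exc, openai.RateLimitError)`. The client also has its own `max_retries` setting, so check how the two interact before layering retries.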

The Converse API has no direct equivalent. Bedrock's Converse API unified multi-model access behind one schema. On Azure OpenAI, the chat completions API already follows OpenAI's standard format, so there's less need for unification - but if your code relied on Converse's model-agnostic structure, you'll need to update your abstraction layer.

Embedding dimensions differ. If you're migrating embeddings alongside chat, Cohere Embed v4 and Amazon Titan Embed produce different vector dimensions than OpenAI's text-embedding-3-large (3072) or text-embedding-3-small (1536). You'll need to re-embed your entire vector store. See our embedding models pricing guide for cost estimates.
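A simple pre-flight check can catch a mismatched index before queries start returning poor results. The sketch below uses the two OpenAI dimensions quoted above; the function name is hypothetical, and the stored model ID and 1024-dimension index in the example are assumed values for illustration only.

```python
# Default output dimensions for the target models named above.
TARGET_DIMENSIONS = {
    "text-embedding-3-large": 3072,
    "text-embedding-3-small": 1536,
}


def vector_store_problems(stored_model, stored_dimension, target_model):
    """List the reasons existing vectors cannot be reused with target_model."""
    problems = []
    if stored_model != target_model:
        # Vectors from different models live in unrelated spaces, even when
        # the dimensions happen to match.
        problems.append("stored vectors come from a different model; re-embed")
    expected = TARGET_DIMENSIONS[target_model]
    if stored_dimension != expected:
        problems.append(
            f"index dimension {stored_dimension} does not match {expected}"
        )
    return problems


# Illustrative values: an index built with a Titan embedding model at 1024 dims.
problems = vector_store_problems(
    "amazon.titan-embed-text-v2:0", 1024, "text-embedding-3-small"
)
```

An empty list means the index was already built with the target model at its expected dimension. Anything else means creating a new index and re-embedding the source documents.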

FAQ

Can I use the same SDK for both platforms?

No. Bedrock requires boto3 while Azure OpenAI uses the openai Python package. Libraries like LiteLLM can abstract both, but native SDK code must be rewritten.

Will my Bedrock prompts work on Azure OpenAI without changes?

Most prompts transfer well since both platforms follow chat completion conventions. Prompts relying on Claude's XML tag style or model-specific features will need adjustment.

Is there a compatibility layer between Bedrock and Azure OpenAI?

LiteLLM and LangChain both support both backends. You can also build a thin wrapper that translates Bedrock's Converse format to OpenAI's chat completions format.

How long does a typical migration take?

For a production codebase with 5-10 inference endpoints, expect 1-2 weeks including testing. The authentication and deployment setup adds 1-3 days depending on your Azure environment.

Can I keep using Claude after migrating to Azure?

Not through Azure OpenAI. You'd need to call the Anthropic API directly or use a proxy service. Azure OpenAI only serves OpenAI models.

Which platform has better compliance coverage?

Both are strong. Bedrock holds FedRAMP High, HIPAA, SOC 1/2/3, ISO 27001, and PCI DSS. Azure OpenAI matches this and adds DoD IL5/IL6 authorization and built-in Microsoft Purview integration.


✓ Last verified March 26, 2026