Video Generation Benchmarks Leaderboard 2026

Rankings of AI video generation models across VBench, VBench-2.0, and the Artificial Analysis Video Arena Elo system, covering text-to-video and image-to-video performance.

The video generation space has had a chaotic few months. OpenAI discontinued Sora on March 24, 2026, less than six months after its public launch, after download numbers had dropped roughly 66% from peak and the product was reportedly burning through $15 million per day in inference costs. ByteDance paused the global rollout of Seedance 2.0 in mid-March following cease-and-desist letters from Disney, Paramount, Warner Bros., and the Motion Picture Association. And a model called HappyHorse-1.0 appeared on Artificial Analysis in early April, topped every ranking within days, then disappeared from public access - before Alibaba confirmed it was their work.

This leaderboard covers where the major models actually stand, based on two types of evidence: the Artificial Analysis Video Arena (blind Elo from user preference votes) and the VBench/VBench-2.0 academic benchmarks (automated metrics across 16-18 dimensions). The two systems measure different things and sometimes disagree. Both matter.

TL;DR

- HappyHorse-1.0 (Alibaba) leads both T2V and I2V Elo rankings by a wide margin - Elo 1,361 for text-to-video, 1,398 for image-to-video - but its public access status is unclear as of publication

- Sora is dead. OpenAI shut down the product March 24; the API stays live until September 24, 2026

- Seedance 2.0 sits at Elo 1,268 (T2V) despite its suspended global launch - accessible in China, patchy elsewhere

- Best reliably available proprietary pick: Kling 3.0 at Elo 1,247 (T2V) with native 4K and multi-shot consistency

- Open-source leader: Wan 2.2, which posted an 84.7% overall VBench score

How These Benchmarks Work

Artificial Analysis Video Arena

Artificial Analysis runs blind pairwise comparisons. A user sees two videos generated from the same prompt, doesn't know which model produced which, and picks a winner. Elo scores update continuously from these votes, the same mechanism used in chess ratings. Under the standard Elo formula, a gap of 20-30 points translates to a win rate of roughly 53-54% in direct head-to-head comparisons.
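To make the Elo arithmetic concrete, here is a minimal sketch of the classic chess-style formula. It is not Artificial Analysis's actual rating code, which the leaderboard does not publish in this form; the function names and the K-factor of 32 are assumptions chosen for illustration.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the standard Elo logistic curve."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


def update(rating_a: float, rating_b: float, a_won: bool, k: float = 32.0):
    """Return both ratings after one vote. k=32 is an assumed K-factor."""
    score_a = 1.0 if a_won else 0.0
    delta = k * (score_a - expected_score(rating_a, rating_b))
    # Whatever A gains, B loses, so the rating pool stays constant.
    return rating_a + delta, rating_b - delta


if __name__ == "__main__":
    for gap in (20, 30):
        print(f"{gap}-point gap -> {expected_score(1000 + gap, 1000):.1%} expected win rate")
    print(update(1200, 1200, a_won=True))
```

Because the curve is fairly flat near zero, small gaps between neighbouring models mean close to coin-flip preference, which is worth keeping in mind when reading the tables.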

The leaderboard separates text-to-video and image-to-video tasks, and also splits by whether audio output is included. This matters: models that produce synchronized audio (native sound, not post-dubbed) tend to score higher on the audio leaderboard regardless of visual quality alone.

As of April 2026, the leaderboard covers more than 80 models across 24 API providers.

VBench and VBench-2.0

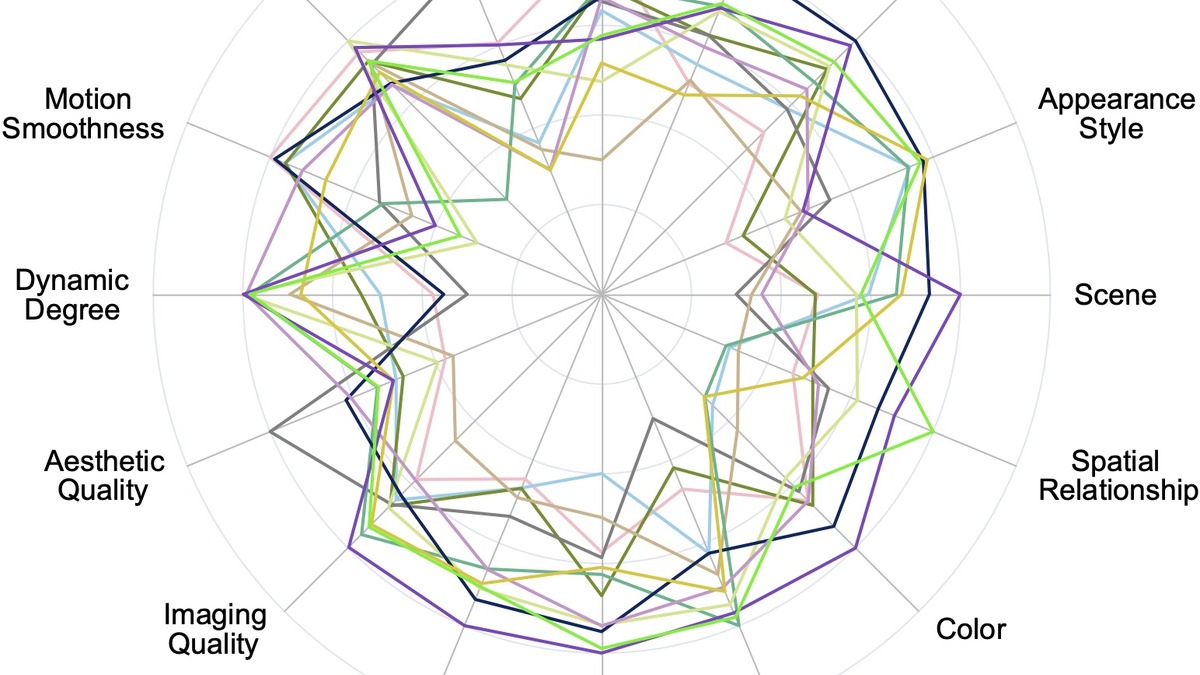

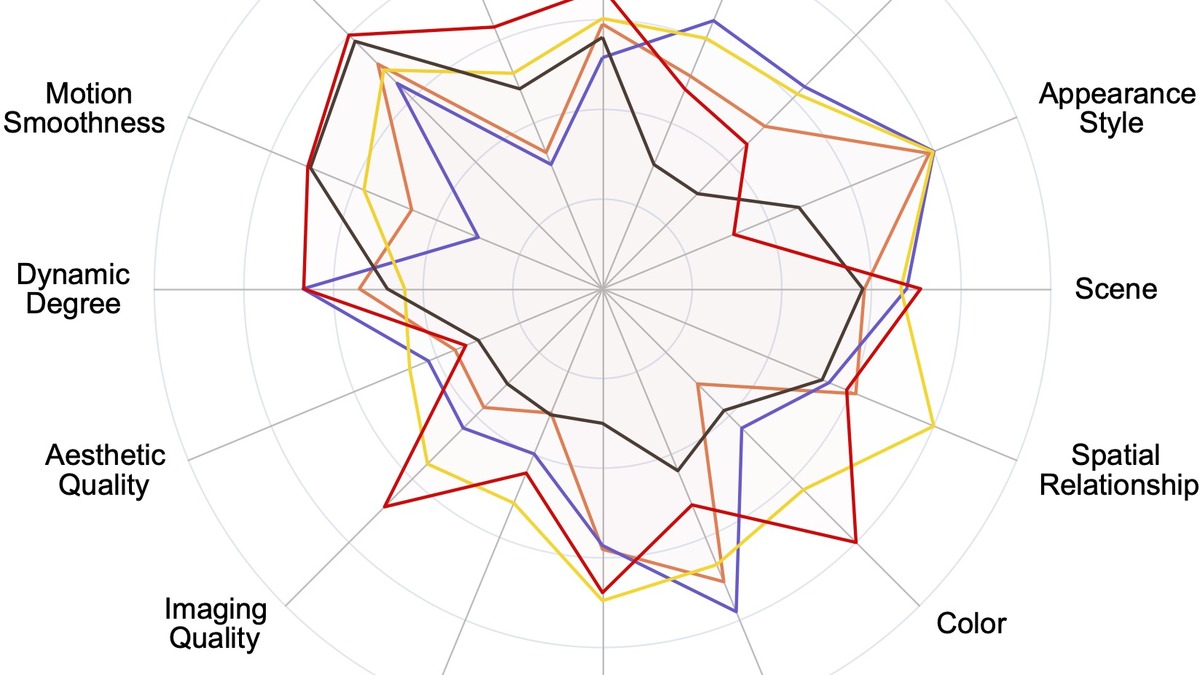

VBench, introduced at CVPR 2024 by the Vchitect group, decomposes video generation quality into 16 automated dimensions covering two categories - video quality (subject consistency, background consistency, motion smoothness, temporal flickering, dynamic degree, aesthetic quality, imaging quality) and video-text alignment (object class, multiple objects, human action, color, spatial relationship, scene, temporal style, appearance style, overall consistency). Each dimension is scored by its own purpose-built automated evaluator, so a model gets a per-dimension profile rather than only a single aggregate number.
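The two-category structure can be sketched as a small lookup table. The dimension names below follow the VBench project's published list of 16; the `category_means` helper is hypothetical and takes a plain unweighted average, which is not VBench's official weighted total.

```python
from statistics import mean

# Grouping of the 16 VBench dimensions into the two categories described above.
VBENCH_DIMENSIONS = {
    "video_quality": [
        "subject_consistency", "background_consistency", "motion_smoothness",
        "temporal_flickering", "dynamic_degree", "aesthetic_quality",
        "imaging_quality",
    ],
    "video_text_alignment": [
        "object_class", "multiple_objects", "human_action", "color",
        "spatial_relationship", "scene", "temporal_style",
        "appearance_style", "overall_consistency",
    ],
}


def category_means(scores: dict[str, float]) -> dict[str, float]:
    """Unweighted mean per category (illustrative only, not the official total).

    `scores` maps every dimension name to a value in [0, 1].
    """
    return {
        category: mean(scores[dim] for dim in dims)
        for category, dims in VBENCH_DIMENSIONS.items()
    }


if __name__ == "__main__":
    # Made-up scores purely to exercise the helper.
    fake = {d: 0.9 for d in VBENCH_DIMENSIONS["video_quality"]}
    fake.update({d: 0.7 for d in VBENCH_DIMENSIONS["video_text_alignment"]})
    print(category_means(fake))
```

Keeping the categories separate makes it easy to spot a model that looks clean but ignores the prompt, or the reverse.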

VBench-2.0, released in March 2025 (arXiv 2503.21755), extends the framework to test "intrinsic faithfulness" - five new categories (Human Fidelity, Controllability, Creativity, Physics, Commonsense) with 18 fine-grained sub-dimensions. The original VBench caught many technical defects well; VBench-2.0 is designed for models that have already cleared those. Even the leading models in the paper's evaluation score around 50% on action faithfulness in the new framework, suggesting plenty of headroom remains.

The two benchmarks answer different questions. VBench rewards smooth, coherent video that matches prompts. VBench-2.0 rewards plausible physics, human motion, and real-world causality. A model can score well on one while failing the other.

VBench radar chart comparing closed-source video generation models across all 16 evaluation dimensions. Subject consistency and motion smoothness tend to separate top models from the middle tier.

Source: github.com/Vchitect/VBench

Text-to-Video Rankings - Artificial Analysis Elo

Rankings as of April 17, 2026. "No Audio" tracks pure visual quality; "With Audio" includes native synchronized audio output as a preference signal.

| Rank | Model | Provider | Elo (No Audio) | Elo (With Audio) |
|---|---|---|---|---|
| 1 | HappyHorse-1.0 | Alibaba-ATH | 1,361 | 1,225 |
| 2 | Dreamina Seedance 2.0 720p | ByteDance Seed | 1,268 | 1,221 |
| 3 | Kling 3.0 1080p (Pro) | KlingAI | 1,247 | 1,100 |
| 4 | SkyReels V4 | Skywork AI | 1,234 | 1,135 |
| 5 | grok-imagine-video | xAI | 1,232 | - |
| 6 | Kling 3.0 Omni 1080p (Pro) | KlingAI | 1,230 | 1,101 |
| 7 | Kling 3.0 Omni 720p (Std) | KlingAI | 1,223 | - |
| 8 | Vidu Q3 Pro | Vidu | 1,221 | - |
| 9 | Veo 3 | Google | 1,217 | - |
| 10 | PixVerse V5.6 | PixVerse | 1,217 | - |

Source: Artificial Analysis Text-to-Video Leaderboard

Notes on the table above: HappyHorse-1.0 leads but its public availability is uncertain - Alibaba confirmed authorship on April 10 but hasn't announced a standard API or subscription product. Seedance 2.0 is accessible primarily in China following its global rollout pause. Runway Gen-4.5 was previously reported with an Elo near 1,247 but doesn't appear in the current No Audio top 10. The leaderboard updates continuously.

Image-to-Video Rankings - Artificial Analysis Elo

| Rank | Model | Provider | Elo (No Audio) | Elo (With Audio) |
|---|---|---|---|---|
| 1 | HappyHorse-1.0 | Alibaba-ATH | 1,398 | 1,165 |
| 2 | Dreamina Seedance 2.0 720p | ByteDance Seed | 1,347 | 1,176 |
| 3 | grok-imagine-video | xAI | 1,326 | 1,087 |
| 4 | PixVerse V6 | PixVerse | 1,314 | - |
| 5 | SkyReels V4 | Skywork AI | 1,286 | 1,087 |
| 6 | Kling 2.5 Turbo 1080p | KlingAI | 1,286 | - |
| 7 | Vidu Q3 Pro | Vidu | 1,285 | - |
| 8 | Kling 3.0 Omni 1080p (Pro) | KlingAI | 1,284 | - |
| 9 | Kling 3.0 1080p (Pro) | KlingAI | 1,282 | - |
| 10 | PixVerse V5.6 | PixVerse | 1,280 | - |

Source: Artificial Analysis Image-to-Video Leaderboard

The image-to-video gap between first and second place is 51 Elo points, which is one of the largest margins in the arena's history. For I2V with audio, Seedance 2.0 leads (1,176) over HappyHorse (1,165) and SkyReels V4 (1,087) - the audio pairing is where Seedance retains an advantage.
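As a quick check on how unusual that top gap is within this table, the sketch below computes the Elo difference between each pair of adjacent ranks in the No Audio I2V top 10 and converts the largest one to an expected win rate using the standard Elo curve. It only covers the ten rows shown here, so it says nothing about the arena's historical margins; the variable and function names are illustrative.

```python
# (model, Elo without audio), copied from the I2V table above.
I2V_NO_AUDIO = [
    ("HappyHorse-1.0", 1398),
    ("Dreamina Seedance 2.0 720p", 1347),
    ("grok-imagine-video", 1326),
    ("PixVerse V6", 1314),
    ("SkyReels V4", 1286),
    ("Kling 2.5 Turbo 1080p", 1286),
    ("Vidu Q3 Pro", 1285),
    ("Kling 3.0 Omni 1080p (Pro)", 1284),
    ("Kling 3.0 1080p (Pro)", 1282),
    ("PixVerse V5.6", 1280),
]


def adjacent_gaps(board):
    """Elo gap between each model and the one ranked directly below it."""
    return [(hi[0], lo[0], hi[1] - lo[1]) for hi, lo in zip(board, board[1:])]


def win_rate(gap: float) -> float:
    """Expected head-to-head win rate for the higher-rated model."""
    return 1.0 / (1.0 + 10 ** (-gap / 400))


if __name__ == "__main__":
    gaps = adjacent_gaps(I2V_NO_AUDIO)
    for upper, lower, gap in gaps:
        print(f"{upper} over {lower}: {gap:+d}")
    upper, lower, gap = max(gaps, key=lambda g: g[2])
    print(f"Largest gap: {gap} points, about {win_rate(gap):.0%} expected win rate")
```

Ranks 5 through 10 sit within six points of each other, so their ordering is far less meaningful than the separation at the top.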

Open-Source VBench Rankings

The VBench leaderboard tracks 50+ text-to-video models. These are the notable open-source entries by overall score:

| Model | Developer | VBench Total (%) | Notes |
|---|---|---|---|
| Wan 2.2 | Alibaba Cloud | ~84.7 | Current open-source leader; trained on 1.5B videos |
| Wan 2.1 | Alibaba Cloud | ~83.1 | Previous version; widely deployed in ComfyUI workflows |
| HunyuanVideo 1.5 | Tencent | - | 96.4% visual quality; 68.5% text alignment on sub-metrics |
| LTX-2.3 | Lightricks | - | Fastest open-source model; optimized for speed |
| Mochi 1 | Genmo AI | - | 10B parameter; Asymmetric Diffusion Transformer architecture |
| Open-Sora 2.0 | Various | - | 11B params; community benchmark scores near HunyuanVideo |

The Wan series (from Alibaba's open research team, distinct from the HappyHorse product) leads open-source VBench scoring. Wan 2.2 was trained on 1.5 billion videos and 10 billion images, and its 84.7% aggregate VBench score is the highest publicly verified number in that tier.

HunyuanVideo 1.5 from Tencent generates at roughly 75 seconds per clip on an RTX 4090 at 480p. Not fast, but more accessible than most proprietary options for developers who want GPU-local generation. LTX-2.3 from Lightricks is the speed pick - it runs notably faster than HunyuanVideo at comparable quality on many prompt types. James covered it when it launched in the LTX-2.3 model profile.

VBench radar chart for open-source video generation models. The Wan series consistently leads in temporal consistency and multiple-object tracking.

Source: github.com/Vchitect/VBench

Key Takeaways

HappyHorse's Dominance and the Access Problem

HappyHorse-1.0 is the most technically impressive model on these rankings, and also the least accessible. It appeared without attribution on Artificial Analysis around April 7, climbed to first on both the T2V and I2V leaderboards within days, then vanished from public access before Alibaba confirmed ownership on April 10. The Alibaba-HappyHorse announcement acknowledged the product but didn't announce a commercial availability date.

The architecture details leaked via the model's documentation suggest a 40-layer single-stream Self-Attention Transformer without Cross-Attention, running inference in only 8 denoising steps. It's optimized specifically for human-centric video - facial expression, lip sync, body motion - which explains why it scores particularly well on human-heavy preference prompts in the arena. You can read more in the news coverage of HappyHorse topping the leaderboard.

The practical question for anyone building a pipeline: right now, HappyHorse isn't something you can reliably call. It is a signal of where Alibaba's video research is, not a product.

Sora's Exit and What It Means

OpenAI shut down the Sora consumer app on March 24, 2026. The API continues until September 24. Download numbers had fallen to roughly 1.1 million by February - a 66% decline from peak - and infrastructure costs were estimated at $15 million per day. The TechCrunch analysis of the shutdown describes a product that failed commercially while technically competitive. Disney had committed $1 billion to a partnership; that agreement ended when Sora did.

OpenAI has said the research team continues working on "world simulation" for robotics applications. Whether a successor video model appears under a different product structure isn't clear.

Seedance's Legal Limbo

ByteDance's Seedance 2.0 is technically strong - Elo 1,268 for T2V, second only to HappyHorse - but its commercial arc is uncertain. The Motion Picture Association sent a cease-and-desist letter in February 2026 over "unauthorized use of US copyrighted works on a massive scale," with Disney, Warner Bros., Paramount, Netflix, and Sony joining. SAG-AFTRA cited unauthorized use of member voice and likeness. ByteDance paused the global rollout in March. More context in our Seedance Hollywood copyright coverage.

The model remains accessible in China and through some API providers. For international commercial production use, its legal exposure is a real risk to factor in.

The T2V vs. I2V Split

Text-to-video and image-to-video are distinct tasks that require different model strengths. T2V demands creative interpretation of prompts and consistent world-building from nothing. I2V demands that the model respect an existing visual starting point - maintain object identity, honor the lighting and composition, produce motion that feels native to the input image.

Kling's performance shows the distinction well. Kling 3.0 ranks 3rd in T2V (Elo 1,247) and 9th in I2V (Elo 1,282 for the same Pro 1080p model). PixVerse V6, which doesn't appear in the T2V top-10, reaches rank 4 in I2V (Elo 1,314). Users building workflows should test both tasks explicitly rather than assuming a single ranking applies to both use cases. Our best AI video generators guide covers this trade-off in more depth.
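The rank shifts between the two tasks can be tabulated directly from the two top-10 lists above. This is a small illustrative sketch; the function name is hypothetical, and a model missing from one list simply has no comparable rank here, which says nothing about where it sits outside the top 10.

```python
T2V_TOP10 = [
    "HappyHorse-1.0", "Dreamina Seedance 2.0 720p", "Kling 3.0 1080p (Pro)",
    "SkyReels V4", "grok-imagine-video", "Kling 3.0 Omni 1080p (Pro)",
    "Kling 3.0 Omni 720p (Std)", "Vidu Q3 Pro", "Veo 3", "PixVerse V5.6",
]
I2V_TOP10 = [
    "HappyHorse-1.0", "Dreamina Seedance 2.0 720p", "grok-imagine-video",
    "PixVerse V6", "SkyReels V4", "Kling 2.5 Turbo 1080p", "Vidu Q3 Pro",
    "Kling 3.0 Omni 1080p (Pro)", "Kling 3.0 1080p (Pro)", "PixVerse V5.6",
]


def rank_shifts(t2v, i2v):
    """Map each model in both lists to (t2v_rank, i2v_rank, shift).

    shift = t2v_rank - i2v_rank, so a negative value means the model
    ranks worse on image-to-video than on text-to-video.
    """
    t2v_rank = {name: i for i, name in enumerate(t2v, start=1)}
    i2v_rank = {name: i for i, name in enumerate(i2v, start=1)}
    shared = t2v_rank.keys() & i2v_rank.keys()
    return {
        name: (t2v_rank[name], i2v_rank[name], t2v_rank[name] - i2v_rank[name])
        for name in shared
    }


if __name__ == "__main__":
    shifts = rank_shifts(T2V_TOP10, I2V_TOP10)
    for name, (t, i, s) in sorted(shifts.items(), key=lambda kv: kv[1][2]):
        print(f"{name}: T2V #{t}, I2V #{i}, shift {s:+d}")
    print("I2V only:", sorted(set(I2V_TOP10) - set(T2V_TOP10)))
```

Only eight models appear in both lists, which is itself a reminder that the two leaderboards are not interchangeable.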

VBench-2.0's Harder Questions

The original VBench caught consistency, smoothness, and alignment failures that plagued 2024-era models. Most 2026 frontier models clear those thresholds comfortably. VBench-2.0 turns the dial harder by asking whether videos show physically plausible interactions. When a character pours water, does it flow correctly? When two objects collide, does the motion follow conservation laws? When a person walks, do their limbs move in anatomically plausible sequence?

On action faithfulness alone, even leading models score around 50% in the VBench-2.0 paper's initial evaluation. The four models tested in the March 2025 paper - HunyuanVideo, CogVideoX-1.5, Sora 480p, and Kling 1.6 - showed consistent weaknesses in dynamic attribute changes, where most scored between 8% and 29%.

Physics is the next frontier that benchmarks have put numbers to. Expect this to become the primary differentiator in 2027 rankings.

Practical Guidance

For professional video production (reliably available): Kling 3.0 Pro at Elo 1,247 T2V is the strongest option with stable API access and no current legal complications. It supports native 4K output, multi-shot scene logic, and subject consistency across cuts - which matters for anything longer than a 5-second clip. Our Kling 3.0 review covers hands-on results.

For social media and short-form at low cost: Hailuo 02 from MiniMax generates at $0.28 per clip, runs fast, and scores well in user preference testing for casual and social video styles. It won't beat Kling on cinematic quality, but for volume generation the cost difference is significant.

For open-source / local deployment: Wan 2.2 leads VBench with an 84.7% aggregate score and is Apache 2.0 licensed. LTX-2.3 is the speed pick if latency matters more than absolute quality. HunyuanVideo 1.5 runs on an RTX 4090 at consumer GPU prices and performs well on visual quality sub-metrics even if raw VBench totals aren't publicly confirmed.

For image-to-video specifically: PixVerse V6 (Elo 1,314) outperforms its T2V ranking markedly and is worth testing separately from any T2V benchmarks you're relying on.

For audio-native video: Seedance 2.0 leads the With Audio I2V category (Elo 1,176) and is accessible through some API providers despite the global rollout pause. SkyReels V4 at Elo 1,087 is a reliable alternative with audio.

FAQ

Is Sora still available in April 2026?

The Sora app was shut down March 24, 2026. The Sora API continues until September 24, 2026. No successor product has been announced.

What is VBench-2.0 and how is it different from VBench?

VBench tests 16 dimensions covering video quality and text alignment. VBench-2.0 adds 18 fine-grained dimensions testing intrinsic faithfulness - physics plausibility, human motion realism, commonsense causality, and creative composition. Most current models score below 60% on VBench-2.0 physics tasks.

Which video model has the best Elo score right now?

HappyHorse-1.0 from Alibaba leads both T2V (Elo 1,361 no-audio) and I2V (Elo 1,398 no-audio) on Artificial Analysis as of April 2026, but public API access isn't yet available.

What is the best video model I can actually use today?

For general availability with strong quality, Kling 3.0 Pro (T2V Elo 1,247) or Veo 3 from Google (Elo 1,217) are solid choices. Open-source developers should look at Wan 2.2 or LTX-2.3.

Can I use Seedance 2.0?

Seedance 2.0 is accessible in China and through some API providers. ByteDance paused the global consumer rollout in March 2026 due to copyright disputes with major Hollywood studios. Commercial use in international markets carries legal uncertainty.

Sources:

- Artificial Analysis Text-to-Video Leaderboard

- Artificial Analysis Image-to-Video Leaderboard

- VBench Project Page (Vchitect, CVPR 2024)

- VBench GitHub Repository

- VBench-2.0 Paper - arXiv 2503.21755

- Why OpenAI Really Shut Down Sora - TechCrunch, March 29 2026

- Sora Shutdown Coverage - CNN Business, March 24 2026

- Disney Exits OpenAI Deal After Sora Closure - Hollywood Reporter

- MPA Cease-and-Desist to ByteDance Over Seedance 2.0 - Hollywood Reporter

- ByteDance Pledges Fixes to Seedance 2.0 After Hollywood Claims - Al Jazeera

- Alibaba Reveals HappyHorse AI Video Model - Creati.ai, April 10 2026

- Runway Gen-4.5 Introduction - Runway Research

✓ Last verified April 17, 2026