Multilingual LLM Leaderboard: March 2026 Rankings

Rankings of the best AI models for multilingual tasks, covering 16 languages across the Artificial Analysis Multilingual Index and MGSM benchmarks.

Most benchmark coverage focuses on English performance. English MMLU, English coding, English reasoning. That's fine if your users are American academics or San Francisco developers, but it misses the majority of the world. Right now, over 80% of internet users don't speak English as their first language. If you're building something that people will actually use globally, you need to know which models hold up when the prompts come in Hindi, Arabic, Swahili, or Yoruba.

This leaderboard covers two multilingual benchmarks that test LLM performance in exactly that context: the Artificial Analysis Multilingual Index, which aggregates reasoning scores across 16 languages, and MGSM (Multilingual Grade School Math), which tests mathematical reasoning on grade school problems translated into 10 linguistically varied languages. Together they reveal which models generalize beyond English - and which ones quietly fall apart when you switch languages.

TL;DR

- Gemini 3.1 Pro Preview leads the Artificial Analysis Multilingual Index at 93, edging out Google's own Gemini 3 Pro (92) and Claude Opus 4.6 (92)

- Low-resource languages remain a serious weakness across all models, with an average gap of 24.3% between high-resource and low-resource languages

- Open-source picks: Qwen 3.5 122B (MMMLU: 88.5) for multilingual cost efficiency; Llama 4 Maverick (MMMLU: 84.6) for on-premises deployments

What These Benchmarks Measure

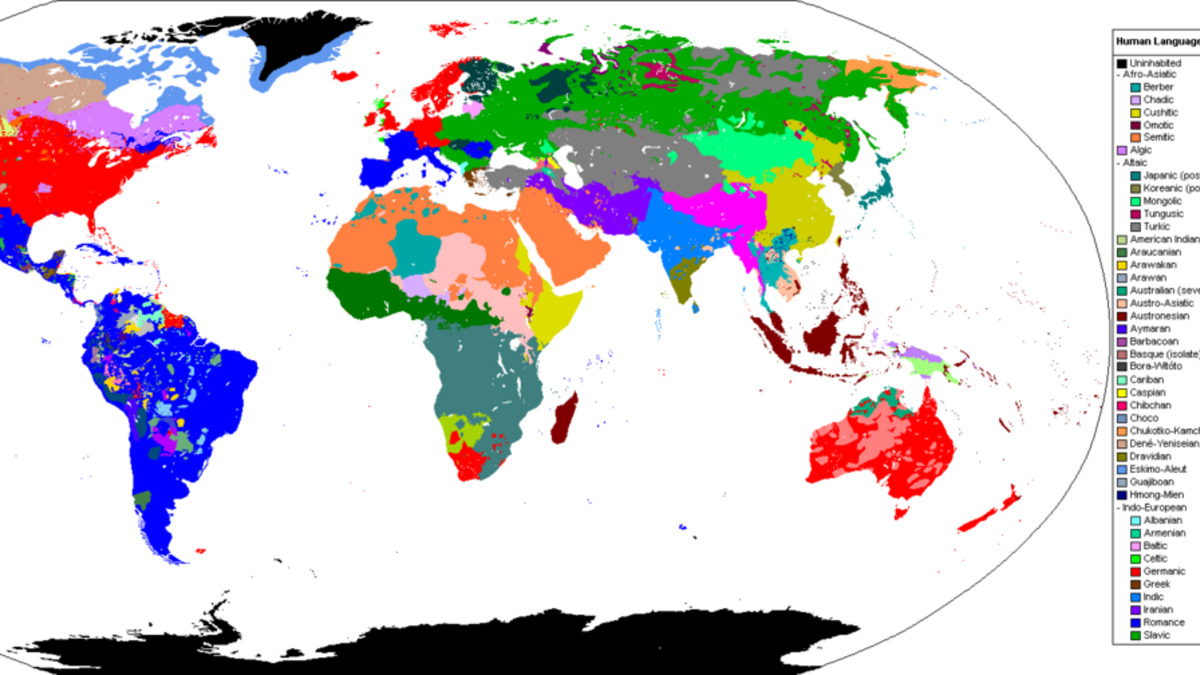

The world's language families. Most LLM training data skews heavily toward English and a handful of European languages.

Source: commons.wikimedia.org

Artificial Analysis Multilingual Index

Artificial Analysis runs the Global-MMLU-Lite benchmark across 16 languages: Arabic, Bengali, English, Chinese, French, German, Hindi, Indonesian, Italian, Japanese, Korean, Portuguese, Spanish, Swahili, Yoruba, and Burmese. The per-language results are combined into a single aggregate score per model, displayed on a 0-100 scale.

This approach captures both high-resource language performance (Spanish, French, Chinese) and low-resource performance (Yoruba, Swahili, Burmese). Because the low-resource languages are averaged in, a model can't reach 93 overall just by being great at Spanish because it saw a lot of it during training. The aggregate can still hide weak results in individual languages, though.
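To see how an aggregate can hide weak languages, here is a minimal sketch. It assumes the index is a simple unweighted mean of the 16 per-language scores, and Artificial Analysis's actual aggregation may differ. The per-language numbers are hypothetical, not measured results.

```python
from statistics import mean

def aggregate_score(per_language: dict[str, float]) -> float:
    """Unweighted mean of per-language scores (an assumed aggregation, not AA's documented formula)."""
    return mean(per_language.values())

# Hypothetical model: 94 in 13 languages, 70 in the three low-resource ones.
other_languages = ["Arabic", "Bengali", "English", "Chinese", "French", "German",
                   "Hindi", "Indonesian", "Italian", "Japanese", "Korean",
                   "Portuguese", "Spanish"]
low_resource = ["Swahili", "Yoruba", "Burmese"]

scores = {lang: 94.0 for lang in other_languages}
scores.update({lang: 70.0 for lang in low_resource})

print(f"{aggregate_score(scores):.1f}")  # 89.5
```

Under this assumption, three languages sitting 24 points below the rest pull the headline number down by only 4.5 points. This is why per-language breakdowns matter more than the aggregate.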

The limitation is coverage: Artificial Analysis has evaluated a small set of frontier models. You won't find open-weight models like Llama 4 or Qwen 3.5 here yet.

MGSM: Multilingual Grade School Math

MGSM translates 250 grade school math problems into 10 languages - Bengali, Chinese, French, German, Japanese, Russian, Spanish, Swahili, Telugu, and Thai - and measures whether models can solve them correctly in each. It's a useful stress test for two things: can the model understand the problem in a non-English language, and can it perform the reasoning while working in that language?

MGSM used to be a meaningful differentiator. It isn't anymore at the frontier. Claude Opus 4.5 in thinking mode hits 95.2%. The benchmark is saturating among reasoning models, so its main value now is comparing open-source and mid-tier models against each other, where gaps of 5-15 points still exist.

The caveat: MGSM only covers math. A model that scores well on MGSM might still struggle on open-ended multilingual instruction following or cultural knowledge questions. Use it alongside broader benchmarks, not as a standalone verdict.

Rankings: Artificial Analysis Multilingual Index

These scores come directly from the Artificial Analysis multilingual leaderboard, measured as of March 2026.

| Rank | Model | Provider | Overall Score | Chinese | Hindi | Swahili / Low-Resource |
|---|---|---|---|---|---|---|
| 1 | Gemini 3.1 Pro Preview | Google | 93 | 94 | 94 | Significant drop |
| 2 | Gemini 3 Pro Preview | Google | 92 | 94 | 91 | Significant drop |
| 2 | Claude Opus 4.6 | Anthropic | 92 | 94 | 92 | Significant drop |
| 4 | Gemini 3 Flash | Google | 91 | 93 | - | Significant drop |
| 4 | Claude Opus 4.5 | Anthropic | 91 | 92 | - | Significant drop |

Note: Artificial Analysis doesn't break out Swahili, Yoruba, and Burmese scores in its summary view, so "Significant drop" is not a measured score. It reflects the documented 24.3% average gap between high-resource and low-resource languages observed across the AI industry. None of these models is an exception.

MGSM Benchmark: Mathematical Reasoning Across Languages

| Rank | Model | Provider | MGSM Score |
|---|---|---|---|
| 1 | Claude Opus 4.5 (Thinking) | Anthropic | 95.2% |
| 2 | Claude 3.5 Sonnet | Anthropic | 91.6% |
| 3 | Claude 3 Opus | Anthropic | 90.7% |
| 4 | GPT-4o | OpenAI | 90.5% |
| 5 | Claude 3.5 Haiku | Anthropic | 85.6% |
| 6 | Claude 3 Sonnet | Anthropic | 83.5% |
| 7 | Nemotron 3 Nano | NVIDIA | 83.0% |
| 8 | DeepSeek V3 | DeepSeek | 79.8% |

MGSM data sourced from llmdb.com and vals.ai. Note that newer frontier models (Claude Opus 4.6, GPT-5.x) aren't included in current public MGSM tracking - the benchmark has largely saturated for models with built-in reasoning modes.

Key Takeaways

Google Holds the Multilingual Crown - But Only Slightly

Gemini 3.1 Pro Preview scores 93 on the Artificial Analysis Multilingual Index, one point ahead of both Gemini 3 Pro and Claude Opus 4.6. That margin is real but not dramatic. All three models perform at 94 in Chinese, and Spanish scores are tied across five different models at 94. The race at the top is truly close.

What separates Gemini 3.1 Pro is Hindi. It scores 94 in Hindi while Claude Opus 4.6 sits at 92 in the same language. India has roughly 530 million Hindi speakers. If your application serves that market, the 2-point gap matters.

Claude Matches Gemini in Chinese - and MGSM is an Anthropic Stronghold

On Chinese specifically, Claude Opus 4.6 matches Gemini 3.1 Pro and Gemini 3 Pro at 94. This stands out because Chinese is both high-resource and linguistically complex in ways that trip up models trained primarily on English. Claude's parity here most likely reflects genuine training investment rather than lucky benchmark alignment.

The MGSM rankings tell a similar story. Five of the top seven positions belong to Anthropic models. Claude 3.5 Sonnet (91.6%) and Claude 3 Opus (90.7%) beat GPT-4o (90.5%) on multilingual math, and Claude Opus 4.5 in thinking mode sets the current verified high-water mark at 95.2%. Whether newer frontier models from OpenAI break that ceiling is unclear - GPT-5.x series models don't yet have published MGSM results in the benchmarking databases I track.
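How much should a fraction of a point on MGSM count? One rough check is a two-proportion z-test. It assumes each reported score is accuracy pooled over all 2,500 items (250 problems in each of 10 languages), and it treats every item as an independent trial. The scores come from different trackers that may use different prompts or subsets, so treat this as a sketch rather than a verdict.

```python
import math

N_ITEMS = 250 * 10  # assumed: 250 MGSM problems x 10 languages, all scored

def two_proportion_z(p1: float, p2: float, n: int = N_ITEMS) -> float:
    """z-statistic for the difference between two accuracies measured on n items each,
    treating each item as an independent Bernoulli trial (a simplifying assumption)."""
    se = math.sqrt(p1 * (1 - p1) / n + p2 * (1 - p2) / n)
    return (p1 - p2) / se

# |z| below ~1.96 means the gap is within noise at roughly the 95% level.
print(round(two_proportion_z(0.907, 0.905), 2))  # Claude 3 Opus vs GPT-4o
print(round(two_proportion_z(0.952, 0.905), 2))  # Claude Opus 4.5 (Thinking) vs GPT-4o
```

Under these assumptions, the 0.2-point gap between Claude 3 Opus and GPT-4o is well inside the noise. Claude Opus 4.5 (Thinking)'s lead over GPT-4o is not.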

Open-Source Models Are Narrowing the Gap - Unevenly

Open-source models have made significant strides in multilingual coverage, but gaps against proprietary frontier models remain in low-resource languages.

Source: unsplash.com

Llama 4 Maverick scores 84.6% on the Multilingual MMLU (MMMLU) benchmark, per Meta's published documentation. It was pre-trained on over 200 languages with 10 times more multilingual tokens than Llama 3. Meta's investment shows: 84.6% is a meaningful step above what open-weight models scored 18 months ago.

Qwen 3.5 (the 122B-parameter dense model) does better, hitting 88.5 on MMMLU. Qwen 3.5's multilingual training specifically covers Chinese, Japanese, Korean, and other East Asian languages in depth, and the score reflects that. The 9B variant scores 81.2 on the same benchmark, which is remarkable for a model small enough to run on a high-end consumer GPU.

Neither of these models appears in the Artificial Analysis Multilingual Index yet. When they do, expect Qwen 3.5's aggregate to sit somewhere in the 86-88 range, with Llama 4 Maverick around 82-84. That's a real gap against the 91-93 frontier tier, but both models are free to download and self-host.

DeepSeek V3 scored 79.8% on MGSM. Its successor, DeepSeek V3.2, reduced language-mixing errors and improved multilingual output consistency. Specific MGSM scores for V3.2 aren't yet in the public benchmarking databases I use. Given the architecture improvements, a modest gain over the 79.8% baseline is plausible, though unconfirmed.

The Low-Resource Language Problem Hasn't Gone Away

This is the part of multilingual AI that the press releases skip. Research consistently finds that LLMs drop 15-24% in performance when you move from high-resource languages (English, Spanish, French, Chinese) to low-resource ones (Swahili, Yoruba, Bengali, Burmese). Artificial Analysis scores model performance across Swahili, Yoruba, and Burmese specifically - those languages are included in the index for this reason.

The industry average gap documented in recent multilingual AI research sits at 24.3% between high-resource and low-resource language performance. That's not a small deviation. That's a different tier of capability. A model scoring 92 overall might score 76 in Yoruba.
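The two readings of a "24.3% gap" give different numbers. It can mean a relative drop, or a drop in percentage points. The sketch below computes both for a hypothetical model scoring 92 in high-resource languages, using the 15-24.3% range cited above. The function name and inputs are illustrative only.

```python
def low_resource_estimate(high_score: float, gap: float, relative: bool = True) -> float:
    """Estimate a low-resource score from a high-resource score and a gap.

    relative=True treats `gap` as a percentage of high_score;
    relative=False treats it as percentage points.
    """
    if relative:
        return high_score * (1 - gap / 100)
    return high_score - gap

HIGH = 92.0  # hypothetical high-resource score
print(round(low_resource_estimate(HIGH, 15.0), 1))                  # 78.2
print(round(low_resource_estimate(HIGH, 24.3), 1))                  # 69.6
print(round(low_resource_estimate(HIGH, 24.3, relative=False), 1))  # 67.7
```

Either way, a 92 in high-resource languages lands somewhere between the high 60s and high 70s in the weakest languages.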

None of the models reviewed here are exceptions. Anthropic and Google have both clearly invested in multilingual training, and both still show major drops in low-resource languages. If you're building for users in sub-Saharan Africa, South Asia beyond Hindi, or Southeast Asian markets with limited digital content, benchmark your actual target languages before choosing a model. Overall scores hide a lot.
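Benchmarking your target languages doesn't need heavy tooling. The sketch below assumes you already have a set of real prompts per language and some way to grade each answer as correct or not. It tallies accuracy per language and flags any language that falls more than a chosen threshold below a reference language. The function names, the 10% threshold, and the sample results are all hypothetical.

```python
from collections import defaultdict

def per_language_accuracy(results):
    """results: iterable of (language, is_correct) pairs from your own graded eval."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for lang, is_correct in results:
        totals[lang] += 1
        correct[lang] += int(is_correct)
    return {lang: correct[lang] / totals[lang] for lang in totals}

def flag_weak_languages(accuracy, reference_lang, max_relative_drop=0.10):
    """Languages whose accuracy is more than max_relative_drop below reference_lang."""
    floor = accuracy[reference_lang] * (1 - max_relative_drop)
    return sorted(lang for lang, acc in accuracy.items() if acc < floor)

# Hypothetical graded results: 10 prompts per language.
results = (
    [("English", True)] * 9 + [("English", False)]
    + [("Spanish", True)] * 9 + [("Spanish", False)]
    + [("Swahili", True)] * 6 + [("Swahili", False)] * 4
)
accuracy = per_language_accuracy(results)
print(accuracy)
print(flag_weak_languages(accuracy, "English"))  # ['Swahili']
```

Ten prompts per language is far too few for stable numbers. Use enough real prompts in each language that a gap of a few points means something.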

MGSM Is Reaching Its Ceiling

The benchmark was designed for grade school math problems. At 95.2% on Claude Opus 4.5 (Thinking), we're running out of headroom. What this tells you is that frontier reasoning models have basically solved the specific task of doing grade school math across 10 languages. It doesn't tell you much about whether they can reason in those languages on harder problems, handle culturally specific phrasing, or maintain coherence in extended multilingual conversations.
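Here is a quick back-of-envelope sketch of what 95.2% means in concrete terms. It assumes the reported score is an even average over MGSM's 250 problems in each of its 10 languages.

```python
PROBLEMS_PER_LANGUAGE = 250
NUM_LANGUAGES = 10

def missed_problems(accuracy_pct: float) -> tuple[float, float]:
    """Return (missed per language, missed in total) for a given MGSM accuracy."""
    total = PROBLEMS_PER_LANGUAGE * NUM_LANGUAGES
    missed_total = total * (1 - accuracy_pct / 100)
    return missed_total / NUM_LANGUAGES, missed_total

per_lang, overall = missed_problems(95.2)
print(round(per_lang, 1), round(overall, 1))  # 12.0 120.0
```

Only about a dozen missed problems per language separate the top score from a perfect one. Small differences near the ceiling therefore say little about broader multilingual reasoning.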

For harder cross-lingual reasoning, look to newer benchmarks like MMLU-ProX (which covers 29 languages on graduate-level problems) or the AI Language Proficiency Monitor, which aggregates across up to 200 languages and tasks. Neither has the clean single-number summaries that make for easy leaderboards, but they capture what MGSM can't.

Practical Guidance

For global SaaS products serving high-resource language markets (Europe, Latin America, East Asia): The top five models on the Artificial Analysis index are all practical choices. Claude Opus 4.6 and Gemini 3.1 Pro Preview are interchangeable for French, Spanish, German, Chinese, and Korean. Pick based on pricing and API reliability for your deployment region, not on the 1-2 point score difference.

For South Asian markets: Gemini 3.1 Pro Preview's 94 in Hindi gives it a real edge. Google has also put more training investment into Bengali and Indonesian than most other labs. Check Artificial Analysis's per-language breakdowns before committing.
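If your users split across several languages, one way to compare models is to weight per-language scores by your traffic mix. The sketch below uses the Chinese and Hindi scores from the Artificial Analysis table in this article. The 70/30 traffic split is hypothetical, and in practice you'd pull every language you serve from the per-language breakdowns.

```python
# Chinese and Hindi scores from the Artificial Analysis table in this article.
SCORES = {
    "Gemini 3.1 Pro Preview": {"Chinese": 94, "Hindi": 94},
    "Claude Opus 4.6": {"Chinese": 94, "Hindi": 92},
}

def weighted_score(model_scores, traffic_share):
    """Average per-language scores, weighted by each language's share of your users."""
    total_share = sum(traffic_share.values())
    weighted = sum(model_scores[lang] * share for lang, share in traffic_share.items())
    return weighted / total_share

# Hypothetical traffic mix: 70% Hindi, 30% Chinese.
mix = {"Hindi": 0.7, "Chinese": 0.3}
for model, scores in SCORES.items():
    print(model, round(weighted_score(scores, mix), 1))
```

Under this mix the weighted gap (94.0 vs 92.6) comes entirely from Hindi. With a Chinese-only mix, the two models tie.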

For African language markets (Swahili, Yoruba, Hausa, etc.): No frontier model performs reliably here, full stop. The benchmark scores look acceptable at the aggregate level but mask poor performance on actual low-resource language tasks. Test your specific use case with real prompts in the target language. If you're serious about this market, consider fine-tuning on local data rather than relying on a general multilingual model.

For cost-sensitive deployments with multilingual requirements: Qwen 3.5 is the open-source pick. The 122B model at 88.5 MMMLU is the strongest open-weight multilingual option available right now, particularly for East Asian languages. Llama 4 Maverick at 84.6 is the alternative if you need strong English-language performance with decent multilingual support, or if you're already in Meta's ecosystem. See our guide to choosing an LLM for a fuller breakdown of the cost and performance tradeoffs across deployment scenarios.

For understanding what these numbers actually mean: Read our guide to AI benchmarks before treating any of these numbers as gospel. Benchmark scores are a starting point, not a conclusion.

✓ Last verified March 9, 2026