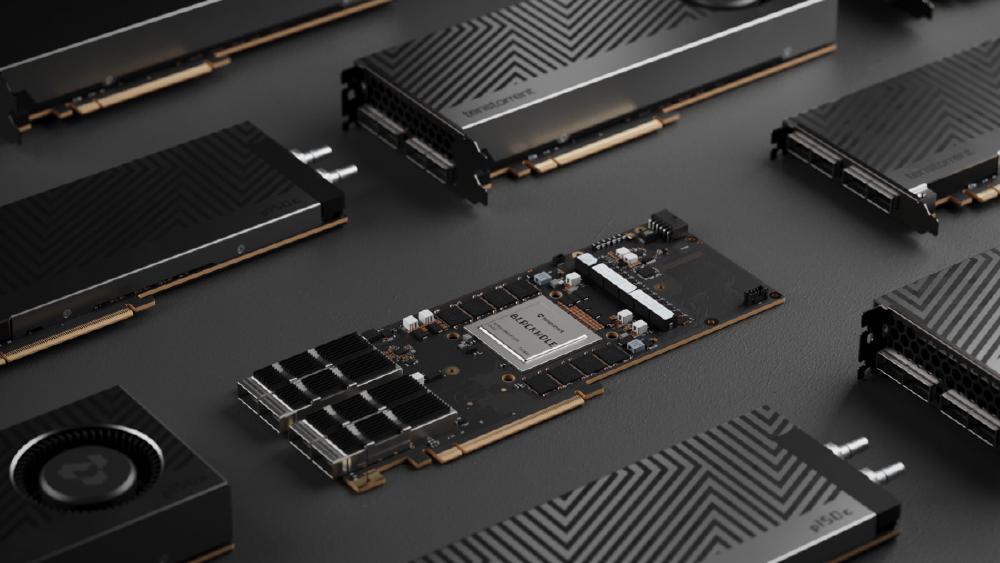

Tenstorrent Blackhole p150a - RISC-V AI Card

Complete specs, benchmarks, and analysis of the Tenstorrent Blackhole p150a - the $1,399 PCIe AI accelerator with 120 Tensix cores, 768 RISC-V processors, 32GB GDDR6, and fully open-source software.

TL;DR

- $1,399 PCIe AI accelerator with 120 Tensix cores and 768 RISC-V processors on TSMC 6nm, built around the open RISC-V ISA throughout

- 664 TFLOPS BLOCKFP8 and 332 TFLOPS BF16, backed by 32GB of GDDR6 at 512 GB/s

- Fully open-source software stack - TT-Metalium, TT-NN, and TT-Forge published under Apache 2.0

- 4x QSFP-DD 800G Ethernet ports built into the card for direct chip-to-chip networking without external switches

- Budget variant p100a at $999 with 28GB and no QSFP-DD; QuietBox workstation with 4x p150c cards at $11,999

Overview

Tenstorrent's Blackhole p150a is the most unusual AI accelerator you can actually buy today. In a market where every serious competitor uses proprietary ISAs, proprietary interconnects, and proprietary software stacks, Tenstorrent has built a high-performance AI card around RISC-V cores with a fully open-source software stack. And they are selling it for $1,399 - roughly the price of an NVIDIA RTX 4090 and a fraction of what any datacenter-class AI accelerator costs.

The Blackhole chip is Tenstorrent's third-generation AI silicon, following Grayskull and Wormhole. It's built on TSMC's 6nm process with about 600 mm² of die area. The core compute comes from 120 Tensix cores - Tenstorrent's custom compute tile that pairs a matrix engine (for tensor operations) with a vector engine (for element-wise operations) and local SRAM. Each Tensix core contains multiple RISC-V processors (called "Baby RISC-V" cores) that control the compute pipelines. Across the full chip, there are 768 RISC-V processors, making the Blackhole one of the largest RISC-V implementations in any shipping product.

The p150a card ships with 32GB of GDDR6 at 512 GB/s, 300W TDP, and a PCIe 5.0 x16 host interface. What makes it unusual among AI accelerators is the built-in networking: 4x QSFP-DD ports supporting 800G Ethernet each, giving the card 3.2 Tbps of direct chip-to-chip bandwidth without requiring an external network switch. For multi-card deployments, this means you can daisy-chain Blackhole cards directly, avoiding the cost and complexity of InfiniBand or RoCE switching infrastructure.
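The 3.2 Tbps figure is simple arithmetic on the port count. The sketch below converts it to bytes per second and estimates an idealized transfer time. It assumes the full 800 Gb/s line rate on every port with no Ethernet framing or protocol overhead, which real links will not reach, and the 1 GB payload is a hypothetical example.

```python
# Aggregate QSFP-DD bandwidth of one p150a, from the published port count and speed.
# Assumption: raw line rate only; framing and protocol overhead are ignored.
PORTS = 4
GBITS_PER_PORT = 800  # 800G Ethernet per QSFP-DD port


def aggregate_gbits(ports: int, gbits_per_port: float) -> float:
    """Total line rate in gigabits per second."""
    return ports * gbits_per_port


def gbits_to_gbytes(gbits: float) -> float:
    """Convert gigabits/s to gigabytes/s (8 bits per byte)."""
    return gbits / 8


def transfer_seconds(payload_gbytes: float, link_gbytes_per_s: float) -> float:
    """Idealized time to move a payload at full line rate."""
    return payload_gbytes / link_gbytes_per_s


total_gbits = aggregate_gbits(PORTS, GBITS_PER_PORT)  # 3200 Gb/s
total_gbytes = gbits_to_gbytes(total_gbits)           # 400 GB/s
print(f"{total_gbits / 1000:.1f} Tbps = {total_gbytes:.0f} GB/s")
# Hypothetical example: ship 1 GB of activations across all four ports.
print(f"1 GB takes about {transfer_seconds(1.0, total_gbytes) * 1000:.1f} ms")
```

At roughly 400 GB/s in aggregate, the card's Ethernet bandwidth is in the same range as its 512 GB/s of local GDDR6 bandwidth.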

Tenstorrent is led by Jim Keller, the legendary chip architect behind AMD's Zen architecture, Apple's A-series processors, and Tesla's FSD chip. Keller joined Tenstorrent in 2021 as CTO and president, became CEO in 2023, and has been vocal about his belief that AI hardware needs to break free from the CUDA monoculture. The Blackhole is the first product that fully reflects his vision: RISC-V everywhere, open-source everything, and a price point that makes AI hardware accessible to researchers and startups, not just hyperscalers.

Key Specifications

| Specification | Details |
|---|---|
| Manufacturer | Tenstorrent |
| Architecture | Blackhole (3rd generation) |
| Process Node | TSMC 6nm |
| Die Area | ~600 mm² |
| Tensix Cores | 120 |
| RISC-V Processors | 768 (Baby RISC-V) |
| BLOCKFP8 Compute | 664 TFLOPS |
| BF16 Compute | 332 TFLOPS |
| FP32 Compute | 83 TFLOPS |
| Memory | 32 GB GDDR6 |
| Memory Bandwidth | 512 GB/s |
| Memory Channels | 8 |
| Host Interface | PCIe 5.0 x16 |
| Networking | 4x QSFP-DD 800G Ethernet (3.2 Tbps total) |
| TDP | 300W |
| Form Factor | Full-height, dual-slot PCIe card |
| Cooling | Active fan (p150a) or passive (p150c for QuietBox) |
| Price | $1,399 |

Tensix Core Architecture

Each Tensix core is a self-contained compute tile with:

- Matrix Engine: Handles tensor (matrix multiplication) operations. Supports BLOCKFP8, BF16, FP32, and INT8 precision formats.

- Vector Engine: Handles element-wise operations, activations, normalization, and other non-GEMM compute. Programmable via RISC-V cores.

- Local SRAM: Each Tensix core has dedicated SRAM for intermediate data, reducing the need to round-trip to GDDR6 for temporary values.

- RISC-V Control Processors: Multiple Baby RISC-V cores per Tensix tile manage data movement, scheduling, and compute dispatch.

The Tensix architecture is closer to a network-on-chip design than a traditional GPU. Each core operates semi-independently, with data flowing between cores through an on-chip mesh interconnect. This design enables high utilization for dataflow-style computation (like transformer inference), where different layers of the model can be mapped to different Tensix cores and data streams through the chip without global synchronization.
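A toy model of the layer-per-core mapping described above: if each layer is pinned to its own group of Tensix cores and inputs stream through, the first result appears after the sum of the stage latencies, and after that one result leaves the pipeline every "slowest stage" interval. The stage times are hypothetical units, not Blackhole measurements, and the model assumes enough buffering between stages while ignoring NoC transfer time.

```python
from typing import Sequence


def pipelined_time(stage_times: Sequence[float], n_inputs: int) -> float:
    """Total time for n_inputs to stream through a linear pipeline.

    Each stage (e.g. one layer mapped to its own group of cores) handles one
    input at a time; different stages work on different inputs concurrently.
    """
    if n_inputs <= 0:
        return 0.0
    fill = sum(stage_times)        # the first input traverses every stage
    bottleneck = max(stage_times)  # steady-state interval between outputs
    return fill + (n_inputs - 1) * bottleneck


def sequential_time(stage_times: Sequence[float], n_inputs: int) -> float:
    """Same work with no overlap: each input runs all stages before the next."""
    return n_inputs * sum(stage_times)


# Hypothetical per-layer latencies (arbitrary time units) for a 4-layer model.
stages = [2.0, 3.0, 2.5, 2.0]
for n in (1, 8, 64):
    print(n, pipelined_time(stages, n), sequential_time(stages, n))
```

Under this model, throughput is set by the slowest stage, so balancing work across cores matters more than the speed of any single stage.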

Performance Benchmarks

| Metric | p150a (Blackhole) | NVIDIA RTX 4090 | NVIDIA H100 SXM |
|---|---|---|---|
| FP8 Compute | 664 TFLOPS (BLOCKFP8) | 660 TFLOPS (FP8, dense) | 1,979 TFLOPS (FP8, dense) |
| BF16 Compute | 332 TFLOPS | 165 TFLOPS dense (330 with sparsity) | 990 TFLOPS (dense) |
| Memory | 32 GB GDDR6 | 24 GB GDDR6X | 80 GB HBM3 |
| Memory Bandwidth | 512 GB/s | 1,008 GB/s | 3,350 GB/s |
| TDP | 300W | 450W | 700W |
| Price | $1,399 | ~$1,600 | ~$25,000 |
| Networking (built-in) | 3.2 Tbps Ethernet | None | No Ethernet (NVLink, 900 GB/s GPU-to-GPU) |
| Software | TT-Forge (open source) | CUDA | CUDA |

Raw Compute Context

On paper, the Blackhole p150a matches the RTX 4090's peak FP8 TFLOPS at a slightly lower price and clearly lower power draw (300W vs 450W); in BF16 it matches or exceeds the 4090, depending on whether NVIDIA's sparse or dense figure is used. Against the H100, it delivers roughly one-third the FP8 compute at about one-eighteenth the price.

The real question is utilization. NVIDIA's CUDA ecosystem has nearly two decades of kernel optimization behind it, and frameworks like TensorRT-LLM extract near-peak performance from NVIDIA hardware. Tenstorrent's software stack is younger and less optimized. Early community benchmarks suggest the Blackhole reaches 40-60% of its theoretical TFLOPS on real LLM workloads, compared to 60-80% for NVIDIA GPUs with mature software. This gap is real but narrowing as Tenstorrent's open-source community contributes optimizations.
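To see what those utilization ranges mean in absolute terms, the sketch below multiplies each card's peak FP8 figure by the quoted utilization bounds. The ranges are the community estimates cited above, not measurements, and real utilization varies by model, batch size, and kernel.

```python
def effective_tflops(peak_tflops: float, utilization: float) -> float:
    """Sustained throughput implied by a peak figure and a utilization fraction."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return peak_tflops * utilization


# Peak FP8 figures from the comparison table; utilization ranges are the
# community estimates quoted in the text, not measurements made here.
cards = {
    "p150a (BLOCKFP8)": (664.0, 0.40, 0.60),
    "RTX 4090 (FP8)": (660.0, 0.60, 0.80),
}
for name, (peak, lo, hi) in cards.items():
    print(f"{name}: {effective_tflops(peak, lo):.0f}-"
          f"{effective_tflops(peak, hi):.0f} TFLOPS sustained")
```

Under these assumptions the two sustained FP8 ranges barely overlap (about 266-398 vs 396-528 TFLOPS), so software maturity rather than peak TFLOPS decides the comparison.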

Networking Advantage

The built-in 3.2 Tbps of Ethernet networking is the p150a's most underappreciated feature. In a multi-GPU training or inference setup, networking is usually the most expensive component after the GPUs themselves. An InfiniBand switch for 8 GPUs can cost $10,000-50,000 depending on the configuration. With the p150a, you connect cards directly via QSFP-DD cables - no switches, no InfiniBand, no additional cost beyond the cables themselves.

For a 4-card setup, the total cost is roughly $5,600 for cards plus $200-400 for cables, versus $6,400+ for four RTX 4090s plus $500-2,000 for networking. For larger deployments, the networking cost savings scale proportionally.
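The four-card comparison above is easy to reproduce. This sketch uses only the prices and networking ranges quoted in the text (the ~$1,600 RTX 4090 price and both networking ranges are approximate estimates, not vendor quotes) and reports each setup's total as a low-high range.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class ClusterCost:
    card_price: float
    n_cards: int
    network_low: float   # cheapest networking/cabling estimate
    network_high: float  # most expensive networking/cabling estimate

    def total_range(self) -> Tuple[float, float]:
        """(low, high) total cost: all cards plus the networking estimate range."""
        cards = self.card_price * self.n_cards
        return cards + self.network_low, cards + self.network_high


# Prices and networking ranges are the estimates quoted in the text.
p150a = ClusterCost(card_price=1399, n_cards=4, network_low=200, network_high=400)
rtx4090 = ClusterCost(card_price=1600, n_cards=4, network_low=500, network_high=2000)

for name, cluster in (("4x p150a", p150a), ("4x RTX 4090", rtx4090)):
    low, high = cluster.total_range()
    print(f"{name}: ${low:,.0f} - ${high:,.0f}")
```

With these estimates the four-card p150a setup totals about $5,796-5,996 against $6,900-8,400 for the RTX 4090 setup, a difference of roughly $900 to $2,600.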

Key Capabilities

Fully Open-Source Software Stack. Tenstorrent's entire software stack is published under Apache 2.0:

- TT-Metalium: Low-level runtime and kernel library. Direct access to the Tensix cores, memory management, and data movement primitives. Equivalent to CUDA's driver API and PTX assembly.

- TT-NN: Neural network library with optimized operators for common AI operations (attention, linear, convolution, normalization). Equivalent to cuDNN.

- TT-Forge: High-level model compiler that ingests PyTorch, ONNX, and other frameworks and compiles them to Blackhole executables. Equivalent to TensorRT.

The open-source approach means anyone can inspect, modify, and optimize the entire stack from framework integration down to individual kernel implementations. This is a fundamentally different model from NVIDIA's closed-source CUDA ecosystem, and it has attracted a growing community of contributors - particularly from the RISC-V and open-hardware communities.

RISC-V Throughout. Every processor on the Blackhole - the 768 Baby RISC-V cores, the management processors, the network controllers - runs the RISC-V instruction set. This matters for two reasons. First, RISC-V is an open ISA with a large ecosystem of compilers, debuggers, and profiling tools. Second, it means Tenstorrent avoids licensing fees to ARM, x86, or any other ISA vendor, keeping the bill of materials lower.

BLOCKFP8 Precision. Tenstorrent uses the BLOCKFP8 format rather than standard FP8 (E4M3/E5M2). BLOCKFP8 groups elements into blocks that share a common exponent, providing better dynamic range coverage for neural network weights and activations than per-element FP8. The 664 TFLOPS figure represents the peak BLOCKFP8 throughput of the matrix engines across all 120 Tensix cores.
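To make the shared-exponent idea concrete, here is a generic block floating-point quantizer. The block size of 16 and the 7-bit magnitude field are assumptions for illustration; this is not Tenstorrent's documented BLOCKFP8 bit layout, rounding mode, or hardware behavior. It shows the trade-off: each block adapts its exponent to its own largest value, but small values that share a block with a large one lose precision or round to zero.

```python
import math
from typing import List, Sequence


def quantize_block_fp(values: Sequence[float], block_size: int = 16,
                      mantissa_bits: int = 7) -> List[float]:
    """Quantize values with one shared exponent per block (illustrative only).

    Each element keeps its sign plus an unsigned integer magnitude of
    `mantissa_bits` bits; the block's exponent is derived from its largest
    magnitude so that value still fits.
    """
    out: List[float] = []
    max_code = 2 ** mantissa_bits - 1
    for start in range(0, len(values), block_size):
        block = values[start:start + block_size]
        max_abs = max(abs(v) for v in block)
        if max_abs == 0.0:
            out.extend(0.0 for _ in block)
            continue
        # frexp gives max_abs = m * 2**e with 0.5 <= m < 1, so 2**e bounds the block.
        _, shared_exp = math.frexp(max_abs)
        step = 2.0 ** (shared_exp - mantissa_bits)  # value of one magnitude LSB
        for v in block:
            code = min(round(abs(v) / step), max_code)  # clamp rounding overflow
            out.append(math.copysign(code * step, v))
    return out


# One block holding a large and a tiny value: the tiny one rounds to zero.
print(quantize_block_fp([1.0, 0.5, 0.001, -0.75], block_size=4))
# The same kind of tiny values in their own block stay within about 1%.
print(quantize_block_fp([0.001, 0.0005], block_size=2))
```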

Pricing and Availability

The Blackhole lineup launched in early 2025 with three configurations:

| Product | Price | Memory | Networking | Cooling | Notes |
|---|---|---|---|---|---|
| p150a | $1,399 | 32 GB GDDR6 | 4x QSFP-DD 800G | Active fan | Flagship PCIe card |
| p100a | $999 | 28 GB GDDR6 | None | Active fan | Budget variant, no networking |
| QuietBox | $11,999 | 4x p150c cards |  | Passive | 4-card workstation chassis |

The p150a is available direct from Tenstorrent's website and through select channel partners. Lead times have varied from immediate availability to 2-4 week backorders depending on demand cycles.

The Core Count Controversy

The Blackhole chip was originally announced with 140 Tensix cores, but shipped with 120 enabled. Tenstorrent has stated this is a yield optimization decision - disabling 20 cores on the ~600 mm² 6nm die significantly improves manufacturing yield and allows more chips per wafer to pass quality validation. The 664 TFLOPS specification is based on the 120-core configuration, so the shipped performance matches the specification.
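The yield argument can be illustrated with a simple binomial model: if each Tensix core independently has some small probability of a manufacturing defect, requiring only 120 good cores out of 140 accepts far more dies than requiring all 140. The 1% per-core defect probability below is purely hypothetical (no defect data is given here), and the model ignores defects in shared logic such as the NoC, memory controllers, or PCIe block, which core harvesting cannot hide.

```python
from math import comb


def prob_at_least_k_good(n_cores: int, k_required: int, p_defect: float) -> float:
    """P(at least k_required of n_cores are defect-free), cores failing independently."""
    p_good = 1.0 - p_defect
    return sum(
        comb(n_cores, good) * p_good**good * p_defect**(n_cores - good)
        for good in range(k_required, n_cores + 1)
    )


# Hypothetical per-core defect probability, chosen only for illustration.
P_DEFECT = 0.01
all_140 = prob_at_least_k_good(140, 140, P_DEFECT)
harvested_120 = prob_at_least_k_good(140, 120, P_DEFECT)
print(f"dies with all 140 cores working: {all_140:.1%}")
print(f"dies with at least 120 working:  {harvested_120:.1%}")
```

Even at this low hypothetical defect rate, fewer than a quarter of dies would have all 140 cores working, while nearly every die clears the 120-core bar.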

The community reaction was mixed. Some viewed the 14% core reduction as a bait-and-switch; others recognized it as standard practice in the semiconductor industry (NVIDIA has done this with many GPU launches, including the RTX 4090 which ships with 128 of 144 SMs enabled). The key question is whether the 120-core performance meets workload requirements at the $1,399 price point, and for most use cases, it does.

Cost-Per-TFLOPS Analysis

| Metric | p150a | RTX 4090 | H100 SXM |
|---|---|---|---|
| Price | $1,399 | ~$1,600 | ~$25,000 |
| FP8 TFLOPS | 664 | 660 | 1,979 |
| $/TFLOPS (FP8) | $2.11 | $2.42 | $12.63 |
| Memory (GB) | 32 | 24 | 80 |
| $/GB Memory | $43.72 | $66.67 | $312.50 |

On a pure dollar-per-TFLOPS basis, the p150a is competitive with the RTX 4090 and dramatically cheaper than the H100. The 32GB of memory at $43.72/GB is also the best value in the comparison. The caveat remains software maturity - reaching those TFLOPS on real workloads depends on the quality of kernel implementations in TT-NN.
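The ratios in the cost table come from straightforward division. The sketch below reproduces them from the table's own prices, FP8 TFLOPS, and memory sizes, so the figures can be re-checked as street prices change; the RTX 4090 and H100 prices are approximate.

```python
# Prices, FP8 TFLOPS, and memory sizes from the cost table
# (street prices are approximate and change over time).
cards = {
    "p150a": {"price": 1399, "fp8_tflops": 664, "memory_gb": 32},
    "RTX 4090": {"price": 1600, "fp8_tflops": 660, "memory_gb": 24},
    "H100 SXM": {"price": 25000, "fp8_tflops": 1979, "memory_gb": 80},
}


def dollars_per(price: float, amount: float) -> float:
    """Price divided by a capability metric, rounded to cents."""
    return round(price / amount, 2)


for name, spec in cards.items():
    per_tflops = dollars_per(spec["price"], spec["fp8_tflops"])
    per_gb = dollars_per(spec["price"], spec["memory_gb"])
    print(f"{name}: ${per_tflops:.2f}/TFLOPS, ${per_gb:.2f}/GB")
```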

Strengths

- $1,399 price point makes datacenter-class AI compute accessible to individual researchers, startups, and universities

- Fully open-source software stack (Apache 2.0) enables community optimization and removes vendor lock-in

- Built-in 3.2 Tbps Ethernet networking eliminates the need for expensive InfiniBand switches in multi-card deployments

- 768 RISC-V processors make this one of the largest shipping RISC-V implementations in AI hardware

- 300W TDP is power-efficient relative to the compute delivered - fits in standard PCIe server chassis

- 32GB GDDR6 is more memory than the RTX 4090's 24GB at a lower price

- Jim Keller's leadership brings credibility and a track record of successful chip architectures

- BLOCKFP8 precision format provides better dynamic range than standard FP8 for neural network workloads

Weaknesses

- Software stack maturity lags NVIDIA's CUDA ecosystem by years - early community benchmarks put real-world utilization at 40-60% vs NVIDIA's 60-80%

- 512 GB/s memory bandwidth is half the RTX 4090's 1,008 GB/s - bandwidth-bound workloads (LLM decode) will underperform

- Core count reduction from 140 to 120 Tensix cores raised community trust concerns

- TSMC 6nm (a 7nm-class node) trails the 4nm-class process used by NVIDIA B200 by roughly one node and the 3nm process used by AWS Trainium3 by about two

- Limited model coverage - not all popular models have optimized TT-NN kernels yet

- GDDR6 (not HBM) limits bandwidth-to-compute ratio for memory-bound workloads

- No equivalent of TensorRT-LLM's advanced serving features (continuous batching, speculative decoding) in TT-Forge yet

- Small company risk - Tenstorrent is privately funded and competing against NVIDIA, AMD, and cloud providers with vastly more resources
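The memory-bandwidth weakness can be quantified with a standard back-of-the-envelope bound: in single-stream LLM decode, each generated token reads roughly every weight once, so tokens per second cannot exceed memory bandwidth divided by model size in bytes. The sketch also computes each card's roofline ridge point (peak FLOPs per byte of bandwidth). The 8-billion-parameter model at one byte per weight is a hypothetical example, and the bound ignores KV-cache traffic, batching, and reuse from on-chip SRAM.

```python
def decode_tokens_per_s_upper_bound(bandwidth_gb_s: float, n_params: float,
                                    bytes_per_param: float) -> float:
    """Upper bound on single-stream decode speed if all weights are read once per token."""
    model_bytes = n_params * bytes_per_param
    return bandwidth_gb_s * 1e9 / model_bytes


def ridge_point_flops_per_byte(peak_tflops: float, bandwidth_gb_s: float) -> float:
    """Arithmetic intensity at which a kernel stops being bandwidth-bound (roofline model)."""
    return peak_tflops * 1e12 / (bandwidth_gb_s * 1e9)


# Bandwidth and peak FP8 figures from the comparison table; the model is hypothetical.
N_PARAMS = 8e9         # hypothetical 8B-parameter model
BYTES_PER_PARAM = 1.0  # 8-bit weights
for name, bw, peak in (("p150a", 512, 664), ("RTX 4090", 1008, 660)):
    tps = decode_tokens_per_s_upper_bound(bw, N_PARAMS, BYTES_PER_PARAM)
    ridge = ridge_point_flops_per_byte(peak, bw)
    print(f"{name}: <= {tps:.0f} tokens/s, ridge point ~{ridge:.0f} FLOP/byte")
```

Batch-1 decode performs about 2 FLOPs (one multiply-add) per 8-bit weight byte, far below either ridge point, so both cards are bandwidth-bound there and the RTX 4090's roughly 2x bandwidth translates into roughly 2x the decode ceiling.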

Related Coverage

- NVIDIA H100 SXM - The AI Training Benchmark - The datacenter GPU that defines the price/performance baseline for AI accelerators

- NVIDIA RTX 4090 - Consumer AI Powerhouse - The closest price-point competitor from NVIDIA

- Groq LPU - Deterministic Inference at Scale - Another non-NVIDIA ASIC taking a different architectural approach

- Cerebras WSE-3 - Wafer-Scale AI Engine - A radically different approach to AI compute at the opposite end of the scale range

- AMD MI300X - The NVIDIA Challenger - AMD's datacenter AI accelerator competing for the same market