Skymizer HTX301: 700B LLMs at 240W on PCIe

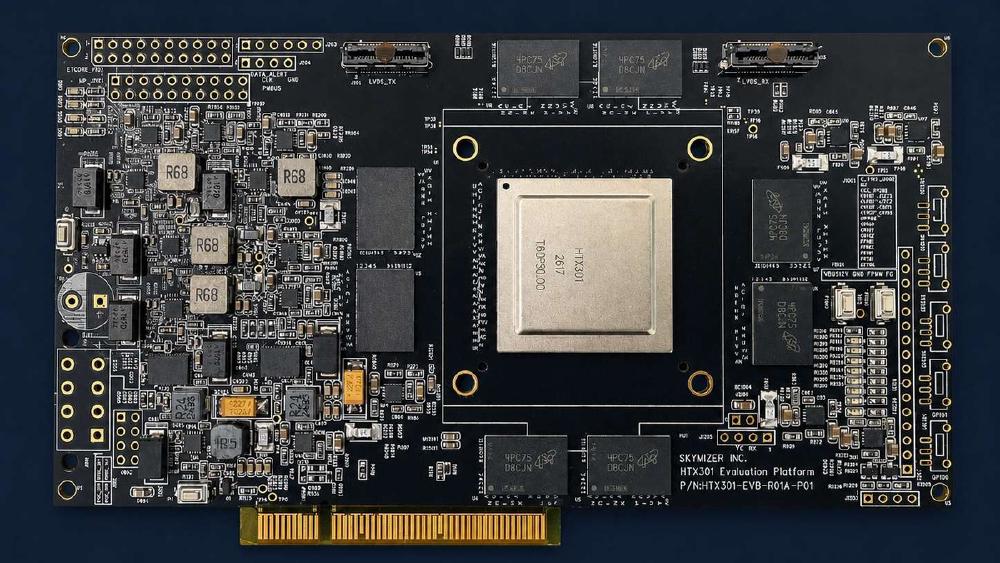

Skymizer's HTX301 uses six 28nm chips and 384 GB LPDDR5 to run 700B-parameter LLMs on a single PCIe card at just 240W.

TL;DR

- Six HTX301 chips on a single PCIe card - 384 GB LPDDR5 at ~240W, enough memory to fit a 700B-parameter model in 4-bit

- Purpose-built for the decode phase of LLM inference; prefill still requires a GPU, making this a complement, not a standalone accelerator

- 28nm process and LPDDR5 memory aren't impressive on paper, but they're intentional - decode is bandwidth-bound, not compute-bound, so cheap memory capacity beats expensive FLOPS

- 0.5 TOPS per chip is extremely low by modern AI accelerator standards; Skymizer's real differentiator is the memory-to-power ratio, not raw compute

- Announced April 23, 2026 ahead of Computex 2026; evaluation access available, no commercial pricing or shipping date announced

Overview

Skymizer Taiwan Inc. announced the HTX301 on April 23, 2026 - the first product from their HyperThought silicon platform. On the surface, the pitch is attention-grabbing: a single PCIe Add-in-Card running 700B-parameter LLM inference at roughly 240W. That's the kind of number that would normally require a rack of H100s. The reason it works - and the reason the chip looks unremarkable by every conventional benchmark metric - comes down to a specific idea about what LLM inference actually needs.

The HTX301 isn't trying to compete with NVIDIA on FLOPS. It isn't even trying to do the same job. Skymizer built a chip optimized exclusively for the decode phase of transformer inference - the part where the model creates tokens one at a time. That phase is memory-bandwidth-bound, not compute-bound, and it doesn't need 3,958 TFLOPS of FP8 arithmetic. What it needs is a lot of fast, cheap memory so that all 700 billion parameters can be read efficiently per produced token. The HTX301 card delivers 384 GB of LPDDR5 across six chips, which fits a Llama-class 700B model in 4-bit quantization (about 350 GB needed) with room to spare.

The trade-off is that Skymizer's architecture requires a GPU for the prefill phase - building the KV cache from the user's input prompt. The HTX301 card handles decode; a conventional GPU handles prefill. Skymizer calls this "disaggregated inference," and it's a real architectural pattern being explored by several research groups and inference serving projects. Whether Skymizer's implementation delivers the latency and throughput numbers that actually matter for production use is a question the company hasn't fully answered yet.

Skymizer is headquartered in Taipei with offices in Hsinchu - Taiwan's semiconductor cluster - and has been developing hardware IP since well before this announcement. Their HyperThought platform won "Best IP/Processor of the Year" and "Most Promising Product" at EE Awards Asia 2025. The HTX301 is the first chip to use HyperThought silicon in a reference design. Luba Tang, Skymizer's CTO, put the architectural philosophy plainly: "Purpose-built decode hardware paired with an intelligent software stack that orchestrates every inference workload - that's how you disaggregate P/D at scale."

Key Specifications

| Specification | Per Chip | Per Card (6 chips) |

|---|---|---|

| Manufacturer | Skymizer Taiwan Inc. | Skymizer Taiwan Inc. |

| Platform | HyperThought | HyperThought |

| ISA | LISA (proprietary) | LISA (proprietary) |

| Process Node | 28nm | 28nm |

| Compute | 0.5 TOPS | ~3 TOPS (estimated) |

| Memory Type | LPDDR4 / LPDDR5 | LPDDR4 / LPDDR5 |

| Memory Capacity | ~64 GB | 384 GB |

| Memory Bandwidth | 100 GB/s | ~600 GB/s (estimated) |

| TDP | ~40W | ~240W |

| Form Factor | - | PCIe Add-in-Card |

| Target Workload | Decode | Decode (pairs with GPU for prefill) |

| Architecture | Decode-first | Disaggregated inference |

| Status | Preview / Evaluation | Preview / Evaluation |

| Pricing | Not disclosed | Not disclosed |

The 0.5 TOPS per chip deserves direct comparison to what else is on the market. The NVIDIA H100 delivers 3,958 TFLOPS of FP8 compute - roughly 8,000x more per chip. The Tenstorrent Blackhole p150a delivers 664 TFLOPS on TSMC 6nm. On raw compute metrics, the HTX301 isn't competitive with any modern AI accelerator. That's the point: Skymizer isn't selling FLOPS. They're selling memory density at low power.

How Disaggregated Inference Works

Modern LLM inference has two distinct phases, and they have almost nothing in common computationally.

Prefill is the first phase. The model processes the user's entire input prompt in parallel - a highly parallelizable, compute-heavy operation that scales well with more FLOPS. GPUs excel here. A long prompt means a lot of matrix multiplications happening simultaneously, and a H100's thousands of CUDA cores can absorb that work efficiently. The output of prefill is the KV cache - a set of attention key/value tensors that encode the context from the prompt.

Decode is what happens next. The model produces the response, one token at a time, autoregressively. Each step is sequential - you can't produce token N+1 until you have token N. Each step also requires reading every single weight in every layer of the model. For a 700B model in 4-bit, that's roughly 350 GB of data read per token. The bottleneck isn't arithmetic - it's memory bandwidth. A chip with massive FLOPS but limited bandwidth will sit mostly idle during decode, waiting for memory reads to complete. This is why a H100 running a 70B model produces tokens at roughly 20-30 tokens per second for a single user, despite having nearly 4 petaflops of FP8 compute.

The HTX301 targets exactly this bottleneck. With 100 GB/s of bandwidth per chip and six chips per card totaling ~600 GB/s, the card's bandwidth-to-capacity ratio is optimized for streaming large models through the compute units as fast as possible per token. On compute, the chip is deliberately minimal - 0.5 TOPS per chip is enough for the arithmetic involved in decode, which isn't the bottleneck anyway.

The necessary flip side is that prefill still needs a GPU. An enterprise deployment using HTX301 cards for decode would need at least one GPU handling prefill workloads. This disaggregated architecture has real engineering and operational complexity: the prefill GPU must hand off the KV cache to the HTX301 card(s) over a fast link, latency from that handoff adds to time-to-first-token, and orchestrating the split workload requires software coordination that Skymizer says their HyperThought platform provides.

For a single-user local deployment, the complexity may be manageable. For a high-throughput serving system with hundreds of concurrent users, the orchestration requirements get harder fast.

Performance Benchmarks

Skymizer has published limited performance figures, and the ones available require careful interpretation.

The company reports that an octa-core configuration (8 HTX301 chips) reaches:

- 240 tokens per second on Llama 2 7B prefill

- Up to 1,200 tokens per second multi-chip connected

The 240 tok/s figure is for prefill on a 7B model - not decode on a 700B model. These are different operations across vastly different model sizes, and conflating them overstates the relevance to the 700B use case that headlines the announcement. A 7B model prefill benchmark on 8 chips doesn't tell us the decode throughput for a 700B model on a 6-chip card.

What Skymizer hasn't published - and what practitioners most need to know - is:

- Decode tokens per second for a 70B model on one card

- Decode tokens per second for a 700B model on one card

- Time-to-first-token with the disaggregated prefill/decode architecture

- Latency overhead from the prefill-to-decode KV cache handoff

- Sustained throughput under concurrent user load

No independent benchmarks have been published as of May 2026. Skymizer is offering preview and evaluation access through skymizer.ai/htx301 ahead of Computex 2026, so third-party numbers may emerge in the coming weeks. Until then, the performance story is completely self-reported.

The Groq LPU is a useful comparison point for architecture-first inference thinking: Groq also made unconventional memory choices (SRAM instead of HBM) to optimize for latency, published aggressive single-stream benchmark numbers early, and has since had those numbers confirmed by independent users at scale. Skymizer is at the stage Groq was in 2023 - a compelling architectural argument, credible credentials, and no independent data yet.

Key Capabilities

LPDDR Economics vs HBM. The most substantive technical argument for the HTX301 isn't the chip itself - it's the cost structure of LPDDR5 versus HBM. HBM3E, used in the H100 and AMD MI300X, costs roughly $10-15 per gigabyte at volume. LPDDR5 costs closer to $3-4 per GB. For an application like large-model decode - where you need hundreds of gigabytes just to hold the weights - this gap matters enormously. A hypothetical 384 GB of HBM would cost north of $3,800 at current market rates just for the memory component; 384 GB of LPDDR5 is well under $1,500.

The bandwidth trade-off is real: HBM3E on a H100 delivers 3,350 GB/s across 80 GB. The HTX301 card's estimated ~600 GB/s across 384 GB is roughly 5.5x less bandwidth per dollar of compute-but that arithmetic is the wrong lens. The H100's 3,350 GB/s bandwidth is there because 80 GB of HBM isn't enough to hold a 700B model at all. LPDDR's lower bandwidth is enough for decode throughput at the token rates that matter in practice. What LPDDR gives you is capacity - enough to hold the model in the first place.

LISA ISA and HyperThought Platform. Skymizer has developed a proprietary instruction set architecture called LISA (Language Instruction Set Architecture) and the HyperThought platform that spans from on-device to on-premises deployment. The platform is designed to scale from 4B to 700B models under a unified architecture. Details on the compiler, runtime, and software stack are sparse in the current announcement materials. Unlike the Tenstorrent Blackhole - which published its full software stack under Apache 2.0 - Skymizer's toolchain is proprietary and not yet available for external evaluation.

On-Premises Focus. Skymizer is explicitly targeting the enterprise on-premises market: organizations that want to run large models locally for data sovereignty reasons, avoiding per-token cloud inference costs and keeping sensitive data off third-party infrastructure. The 700B model capability on a single PCIe card - if the throughput numbers hold up - is a real value proposition for that market. A hospital, law firm, or financial institution that wants to run a frontier-class model internally today is looking at a significant GPU cluster investment. A PCIe card at a fraction of that cost, even needing one GPU for prefill, would be a meaningfully different economic proposition.

28nm Process Node. The 28nm node looks old by AI accelerator standards - the H100 is on TSMC 4nm, the Blackhole on 6nm, and even the Groq LPU uses 14nm. For decode-first silicon, this is defensible. Decode throughput scales with memory bandwidth, not transistor density, and you can hit the required bandwidth targets with LPDDR arrays on 28nm. The power stays manageable because the compute die isn't doing much work in the first place. Process node matters most when you're trying to maximize FLOPS per watt - the HTX301 isn't trying to maximize FLOPS.

Still, a more advanced node would allow more SRAM per die, tighter integration with the LPDDR controllers, and potentially higher per-chip bandwidth. Skymizer's choice of 28nm is economically rational given the current TSMC capacity environment and their startup cost constraints, but it does cap the ceiling for future bandwidth density.

Pricing and Availability

No commercial pricing has been announced. Skymizer is offering preview and evaluation access through skymizer.ai/htx301 for organizations interested in assessing the hardware ahead of broader availability. The announcement was timed to Computex 2026 in late May 2026, where a broader preview is expected.

The lack of pricing is a significant gap. The economics of LPDDR versus HBM are compelling in theory, but the actual customer cost depends heavily on the card price, the required GPU co-deployment, and the software licensing model. Without those numbers, it's impossible to compare the total cost of ownership against a GPU cluster or a cloud inference deployment.

Skymizer is a relatively small company with offices in Taipei and Hsinchu. They raised an undisclosed amount for the HyperThought platform and have been developing silicon IP since at least 2024 based on earlier product releases. The company doesn't appear to have the manufacturing scale of Groq or Tenstorrent, and the timeline from preview to volume production for a 28nm chip from a smaller fab shouldn't be taken as given without further announcements.

Strengths and Weaknesses

Strengths

- 384 GB of addressable memory on a single PCIe card is the clearest differentiator - no other PCIe card comes close for holding very large models

- LPDDR5 is dramatically cheaper per GB than HBM, which matters at this scale

- ~240W total card TDP is truly low for a 700B model inference deployment

- Disaggregated inference architecture aligns with where the research community is heading for efficient LLM serving

- 28nm process and LPDDR keep manufacturing costs reasonable for a startup without TSMC advanced node allocation

- On-premises value proposition is real for regulated industries with data sovereignty requirements

Weaknesses

- 0.5 TOPS per chip is the lowest compute density of any announced AI accelerator - enough for decode, but zero headroom if the workload shifts

- No independent benchmarks as of May 2026 - every performance claim is self-reported by Skymizer

- Decode-only silicon means you must have a GPU for prefill - this is not a standalone inference solution

- KV cache handoff latency between prefill GPU and HTX301 decode cards adds to time-to-first-token; Skymizer hasn't quantified this overhead

- Proprietary LISA ISA and closed software stack create vendor dependency with no community toolchain to fall back on

- No commercial pricing or availability date announced - "preview access" is not a product roadmap

- No decode throughput figures published for the 70B or 700B model sizes that matter most for the target use case

- Small company risk: Skymizer lacks the capital and manufacturing relationships of Groq, Cerebras, or Tenstorrent

Related Coverage

- Tenstorrent Blackhole p150a - RISC-V AI Card - Another alternative PCIe AI accelerator taking a non-NVIDIA architectural path, with a fully open-source software stack

- Groq LPU - Deterministic Inference at Scale - The closest architectural parallel: a chip built around an unconventional memory hierarchy to optimize inference latency

- NVIDIA RTX 4090 - The Home Lab AI Standard - The baseline GPU for local inference; capable of running 70B models with quantization but not 700B

- NVIDIA H100 - The datacenter standard that HTX301 implicitly positions against for 700B inference use cases

Sources

✓ Last verified May 15, 2026