Positron Atlas - FPGA Inference Server

The Positron Atlas is an 8-card FPGA inference server delivering 4.5x better performance per watt than the NVIDIA DGX H200 at 2000W in a single 1U chassis.

TL;DR

- 8x Archer FPGA accelerators, 32GB HBM each, 256GB total - purpose-built for transformer inference only

- Runs at 2,000W versus 5,900W for the NVIDIA DGX H200, with 4.54x better performance per watt

- 280 tokens per second per user on Llama 3.1 8B (BF16) - 56% more than the H200 at one-third the power

- Ships today, American-made; raised $230M Series B at $1B+ valuation in February 2026

Overview

Positron AI launched in 2023 with a bet that the GPU is wrong for inference. While NVIDIA's hardware leads training, Positron argues that the autoregressive nature of transformer decode - producing one token at a time, with the entire model weight loaded on each step - fits FPGA silicon far better than the GPU's compute-heavy design. The result is the Atlas server: eight Archer FPGA accelerators in a single chassis drawing 2,000 watts, shipping since August 2025.

The company built the Atlas around one observation: modern inference throughput is bound almost entirely by memory bandwidth, not arithmetic compute. A GPU like the H200 delivers enormous TFLOPS, but can only use 10-30% of its memory bandwidth on typical inference workloads. The Archer chips achieve 93% memory bandwidth utilization by design. That gap drives everything.

In February 2026, Positron closed a $230M Series B at over $1B valuation - 34 months after founding. Co-lead investors included Arena Private Wealth and Jump Trading, with participation from Arm, the Qatar Investment Authority, and returning investors Valor Equity Partners and Atreides Management. The round positions Positron as the first FPGA-based company to reach unicorn status in AI hardware.

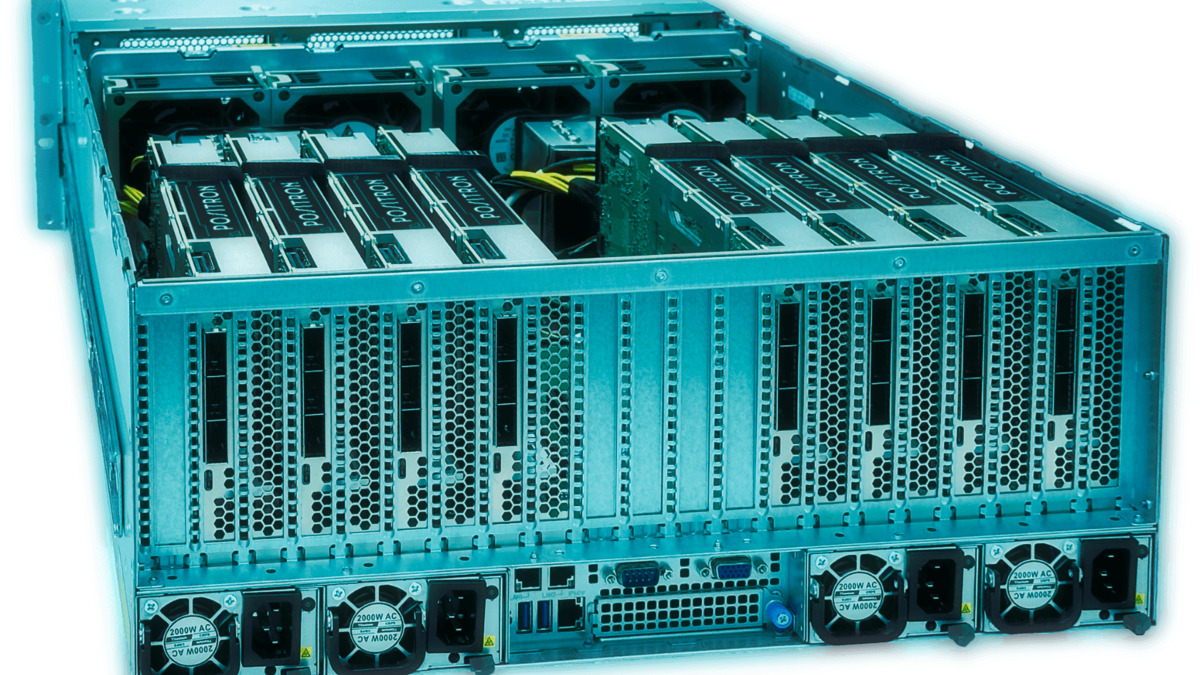

The Positron Atlas server packs eight Archer FPGA accelerators into a 2000W chassis that air-cools without liquid infrastructure.

Source: positron.ai

The Positron Atlas server packs eight Archer FPGA accelerators into a 2000W chassis that air-cools without liquid infrastructure.

Source: positron.ai

Key Specifications

| Specification | Details |

|---|---|

| Manufacturer | Positron AI |

| Product Family | Atlas |

| Chip Type | FPGA (Archer Transformer Accelerator) |

| Accelerator Count | 8x Archer cards |

| Memory (Accelerators) | 256GB HBM total (32GB per Archer) |

| Memory (System) | 384GB DDR5 (24-channel RDIMM, up to 2TB) |

| CPU | Dual AMD EPYC Genoa 9374F (64 cores, 3.85 GHz base) |

| Memory Bandwidth Utilization | 93% (vs. 10-30% for GPU-based systems) |

| System TDP | 2,000W |

| Cooling | Air-cooled |

| Storage | 1x 1.92TB NVMe + 1x 1.92TB U.2 SSD |

| Networking | 1x 10Gb/s LAN + 1x 1Gb/s management |

| OS | Ubuntu 22.04.4 LTS |

| Software | Positron Inference Engine |

| Dimensions | 7.0" H × 19.0" W × 29.25" L |

| Weight | 100 lbs (45.4 kg) |

| Origin | U.S.-manufactured |

| Availability | Shipping now |

| Pricing | Not disclosed |

Performance Benchmarks

The benchmark Positron uses most is tokens per second per user on Llama 3.1 8B in BF16 precision, which measures real-time inference latency in production - not peak batch throughput.

| Metric | Positron Atlas | NVIDIA DGX H200 | Ratio |

|---|---|---|---|

| Inference throughput (Llama 3.1 8B BF16) | 280 tok/s/user | ~180 tok/s/user | 1.56x |

| System power draw | 2,000W | 5,900W | 0.34x (Atlas) |

| Performance per watt | - | - | 4.54x (Atlas) |

| Performance per dollar | - | - | 3.08x (Atlas) |

| Memory BW utilization | 93% | 10-30% | - |

| Max model size (single server) | ~500B params | ~500B params (FP8) | - |

The performance-per-watt claim is the most defensible number here. At 280 tok/s on 2,000W versus 180 tok/s on 5,900W, the math works out: (280/2000) ÷ (180/5900) = 4.57x per watt, consistent with Positron's published 4.54x figure. The performance-per-dollar comparison depends on the Atlas purchase price, which Positron doesn't disclose. I'd treat the 3.08x dollar claim as directionally correct but unverifiable until pricing is public.

One important caveat: all published benchmarks are vendor-supplied. Positron's Atlas is distributed through BittWare and serves requests via an OpenAI-compatible API, but no independent third-party benchmark has copied the 280 tok/s figure in a public comparison. The architecture's 93% memory bandwidth use claim also comes from Positron. These numbers are plausible given the FPGA design, but pending external validation.

Key Capabilities

The Archer accelerators are purpose-built for one thing: autoregressive transformer inference. They don't train models. They don't do multi-purpose compute. This single-focus design is why the efficiency numbers look the way they do.

FPGAs offer a structural advantage over GPUs in memory-bound workloads. A GPU's memory controller is shared across thousands of general-purpose cores. The Archer chip can dedicate its entire interconnect architecture to feeding transformer attention heads with minimal overhead. The 93% bandwidth utilization figure reflects this: nearly every byte of memory bandwidth goes directly to inference compute, not to controller overhead or cache misses.

Software compatibility is handled through the Positron Inference Engine, which exposes an OpenAI-compatible endpoint. This means existing applications that call OpenAI's API can switch to Atlas without code changes. It also integrates with the Hugging Face transformers library and accepts .pt and .safetensors model formats directly. Setup time, per Positron, is under 40 minutes from unboxing to serving requests.

Next-Generation Asimov

Positron's second-generation chip, Asimov, targets tape-out at end of 2026 with production in early 2027. The Asimov switches from HBM to LPDDR5x memory with CXL expansion, scaling from 864GB to 2.3TB per chip. A full Titan 8-card system would carry 8TB of memory - enough to run models in the multi-trillion parameter range that today require multi-node clusters. Positron claims five times more tokens per watt versus Vera Rubin for core inference workloads, though this is a forward claim against hardware that isn't shipping yet.

Pricing and Availability

Atlas ships today from Positron directly and through BittWare. Pricing isn't published; customers need to contact sales for a quote. The DGX H200 system lists at roughly $300,000-$400,000 depending on configuration. Positron claims 3x better economics, which would put an Atlas system somewhere in the $100,000-$150,000 range - but this is inference from their published claims, not confirmed pricing.

Power infrastructure is a real advantage here. Five Atlas servers fit in a 10kW power envelope, versus one DGX H100. In data centers where power is the binding constraint, that density difference is significant and directly comparable to Groq's LPU, which also targets power-efficient inference. The 2,000W draw also means air cooling works - no liquid cooling infrastructure required, which reduces deployment cost.

Cloud API access isn't currently offered; the Atlas is an on-premises appliance. This matters for teams that want cost predictability and data privacy over the flexibility of a cloud API.

Strengths

- 4.54x better performance per watt than DGX H200 on transformer inference

- Ships today, U.S.-manufactured, OpenAI API-compatible

- Air-cooled in 2,000W envelope - no liquid cooling infrastructure

- 93% memory bandwidth use versus 10-30% for GPU-based systems

- Arm investment suggests broad ecosystem integration coming

Weaknesses

- Inference only - no training, fine-tuning, or multi-purpose compute

- Pricing undisclosed - limits apples-to-apples cost comparison

- All benchmarks are vendor-supplied; no independent third-party validation published

- Single model per server (model switching adds latency)

- No cloud API option for customers who want usage-based pricing

Related Coverage

- Groq LPU - another SRAM/inference-first accelerator competing in the same power-efficient space

- NVIDIA H200 - the GPU the Atlas mainly benchmarks against

- NVIDIA Vera Rubin NVL144 - the flagship system Positron's Asimov targets for next-gen competition

Sources

✓ Last verified April 15, 2026