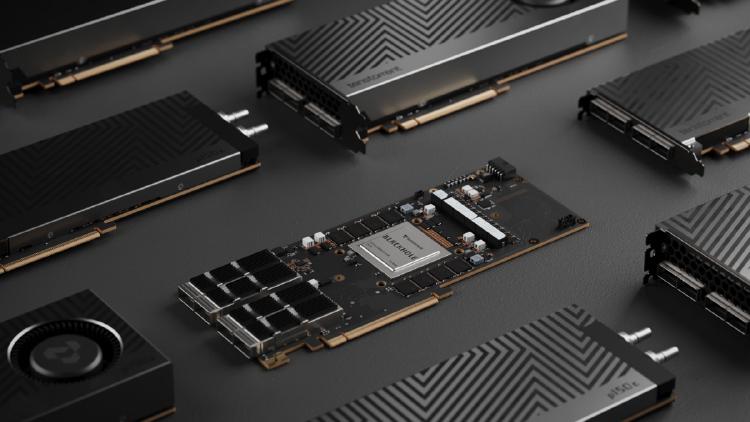

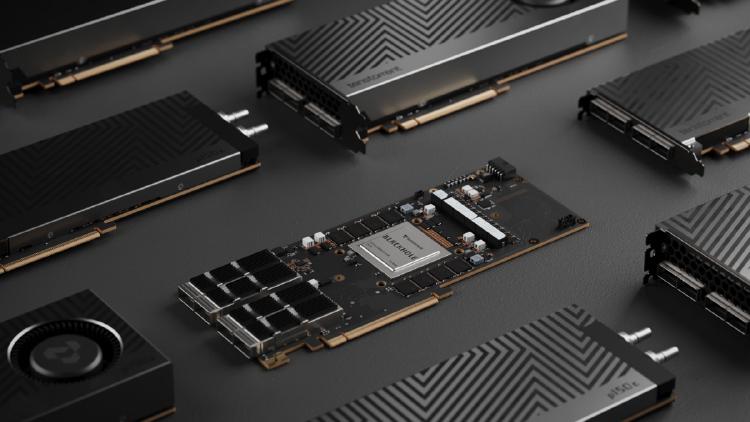

Tenstorrent Blackhole p150a - RISC-V AI Card

Complete specs, benchmarks, and analysis of the Tenstorrent Blackhole p150a - the $1,399 PCIe AI accelerator with 120 Tensix cores, 768 RISC-V processors, 32GB GDDR6, and fully open-source software.

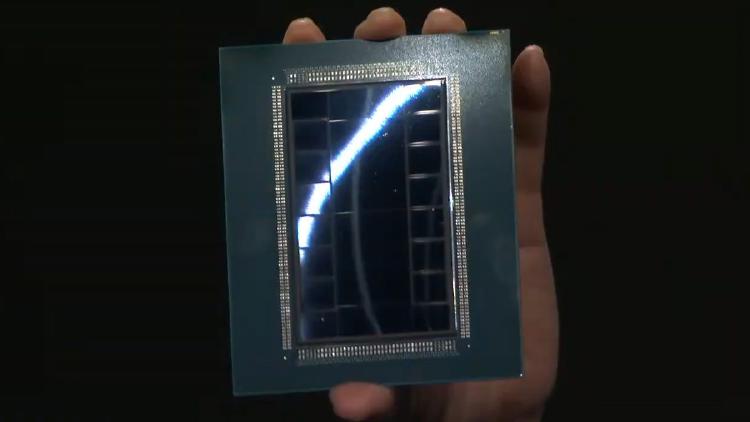

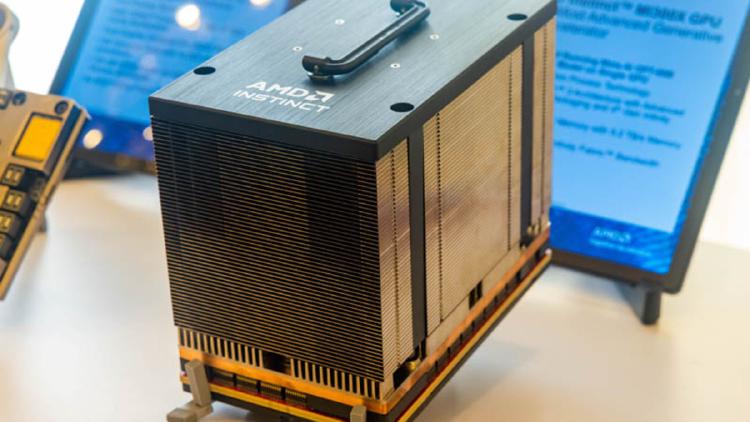

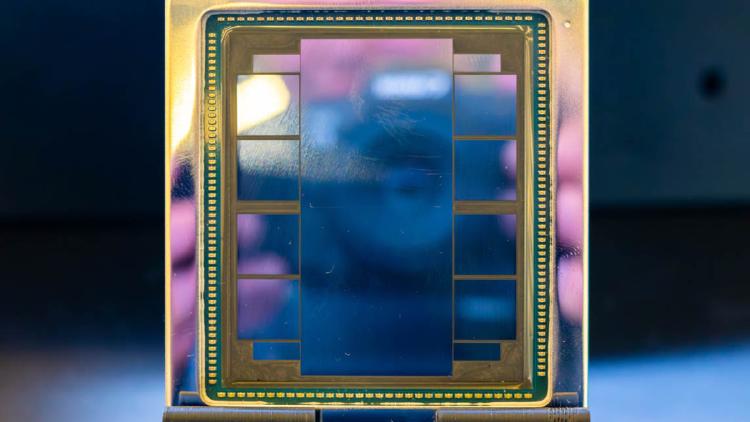

Complete specs and analysis of the AMD Instinct MI440X - a CDNA 5 enterprise GPU with 432GB HBM4, 19.6 TB/s bandwidth, and TSMC 2nm compute chiplets.

Intel Crescent Island specs and analysis - an Xe3P inference GPU with 160GB LPDDR5X, air cooling, and a cost-optimized approach to AI serving.

Complete specs, benchmarks, and analysis of the NVIDIA Rubin R200 GPU - the post-Blackwell flagship with 288GB HBM4, 22 TB/s bandwidth, and 50 PFLOPS FP4.

Qualcomm AI200 specs and analysis - a Hexagon-based inference accelerator with 768GB LPDDR per card, rack-scale design, and a focus on inference TCO.

Complete specs and analysis of SambaNova's SN50 RDU - a TSMC 3nm dataflow chip with 3.2 PFLOPS FP8, three-tier memory, and claimed 5x speed over NVIDIA B200.

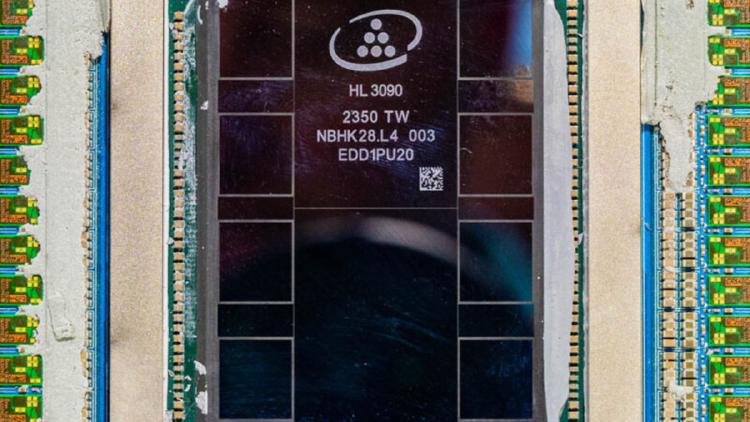

AMD Instinct MI300X specs, benchmarks, and real-world performance data. 192GB HBM3, 5,300 GB/s bandwidth, 2,610 TFLOPS FP8 on CDNA 3 chiplet architecture.

AMD Instinct MI350X specs and performance estimates. 288GB HBM3e, ~6,000 GB/s bandwidth, ~3,600 TFLOPS FP8 on CDNA 4 architecture at TSMC 3nm.

Full specs and benchmarks for the Apple M4 Max SoC - up to 128GB unified memory at 546 GB/s, 3nm process, and why it has become the quiet favorite for running 70B+ models locally.

AWS Trainium2 is Amazon's second-generation custom AI training chip, powering EC2 Trn2 instances with 96GB HBM2e per chip and tight integration with the AWS Neuron SDK and SageMaker ecosystem.

Full specs and analysis of the Cambricon MLU590 - 192GB HBM2e, ~2,400 GB/s bandwidth, TSMC 7nm, and what it means for AI inference outside the NVIDIA ecosystem.

Google Cloud TPU v6e Trillium specs, benchmarks, and pricing. 32GB HBM per chip, ~1,600 GB/s bandwidth, optimized for Transformer training and inference at cloud scale.