AMD Instinct MI350X

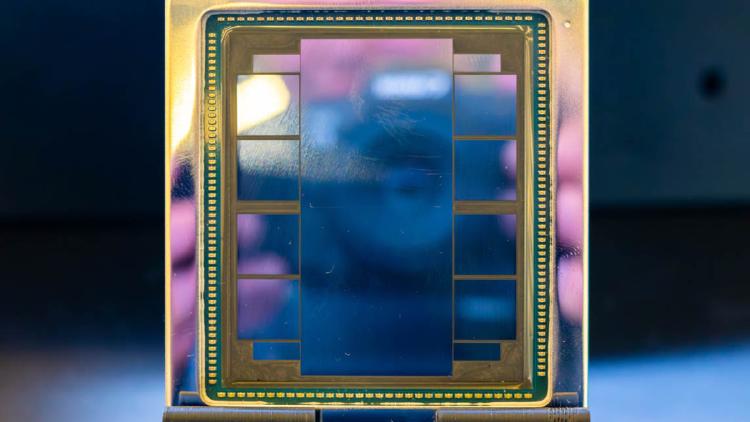

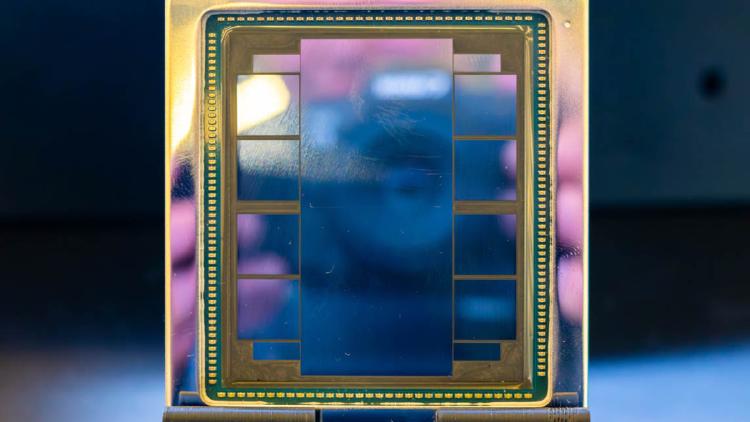

AMD Instinct MI350X specs and performance estimates. 288GB HBM3e, ~6,000 GB/s bandwidth, ~3,600 TFLOPS FP8 on CDNA 4 architecture at TSMC 3nm.

Full specs and benchmarks for the Apple M4 Max SoC - up to 128GB unified memory at 546 GB/s, 3nm process, and why it has become the quiet favorite for running 70B+ models locally.

AWS Trainium2 is Amazon's second-generation custom AI training chip, powering EC2 Trn2 instances with 96GB HBM2e per chip and tight integration with the AWS Neuron SDK and SageMaker ecosystem.

Full specs and analysis of the Cambricon MLU590 - 192GB HBM2e, ~2,400 GB/s bandwidth, TSMC 7nm, and what it means for AI inference outside the NVIDIA ecosystem.

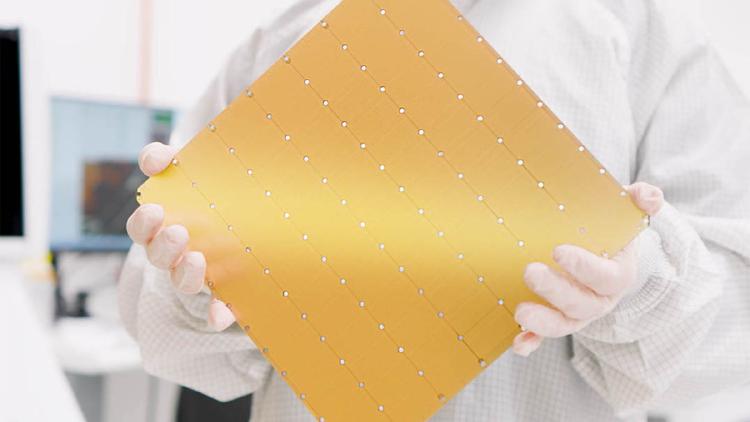

The Cerebras Wafer-Scale Engine 3 is the largest chip ever built - an entire TSMC 5nm wafer with 900,000 AI cores, 44GB of on-chip SRAM, and 21 PB/s of memory bandwidth powering the CS-3 AI supercomputer.

Google Cloud TPU v6e Trillium specs, benchmarks, and pricing. 32GB HBM per chip, ~1,600 GB/s bandwidth, optimized for Transformer training and inference at cloud scale.