NVIDIA Vera Rubin NVL144

NVIDIA's Rubin-based rack system with 144 R200 GPUs, 3.6 ExaFLOPS FP4, 20 TB HBM4 - arriving H2 2026.

TL;DR

- 144 Rubin R200 GPUs packaged as 72 Vera Rubin Superchips, each combining one 88-core Vera CPU with two R200 GPUs and 288 GB HBM4 in a single package

- 3.6 NVFP4 ExaFLOPS system-wide (~25 PFLOPS FP4 per R200 GPU) and 1.2 FP8 ExaFLOPS system-wide (~8.3 PFLOPS FP8 per GPU) - roughly 3.3x more FP4 than the GB300 NVL72

- 20,736 GB (roughly 20 TB) of HBM4 total across the rack, with each R200 GPU carrying 144 GB and each Superchip package holding 288 GB at up to 20.5 TB/s per GPU

- H2 2026 availability - no pricing announced, but the GB200 NVL72 was reportedly in the $2-3M range and the NVL144 will almost certainly cost more

- No independent benchmarks exist yet; all performance figures are NVIDIA-sourced projections

Overview

The NVIDIA Vera Rubin NVL144 is NVIDIA's rack-scale AI supercomputer built on the Rubin architecture - the successor to the GB300 NVL72 Blackwell Ultra system. Where the GB300 packs 72 GPUs into a rack, the NVL144 doubles the GPU count to 144, organized as 72 Vera Rubin Superchips. Each Superchip integrates one Vera CPU (88 ARM cores) with two R200 GPUs in a single package connected via NVLink-C2C, collapsing traditional CPU-GPU communication boundaries. The result is a rack that NVIDIA claims delivers 3.3x more FP4 compute than the GB300 NVL72 - and 1.6x more FP8.

The NVL144 hasn't shipped yet. NVIDIA confirmed H2 2026 availability, which means the system is in production planning but hasn't been through public deployment or independent testing. Every performance figure in this article comes from NVIDIA's own disclosures. That's worth keeping in mind before anyone pulls out a purchase order.

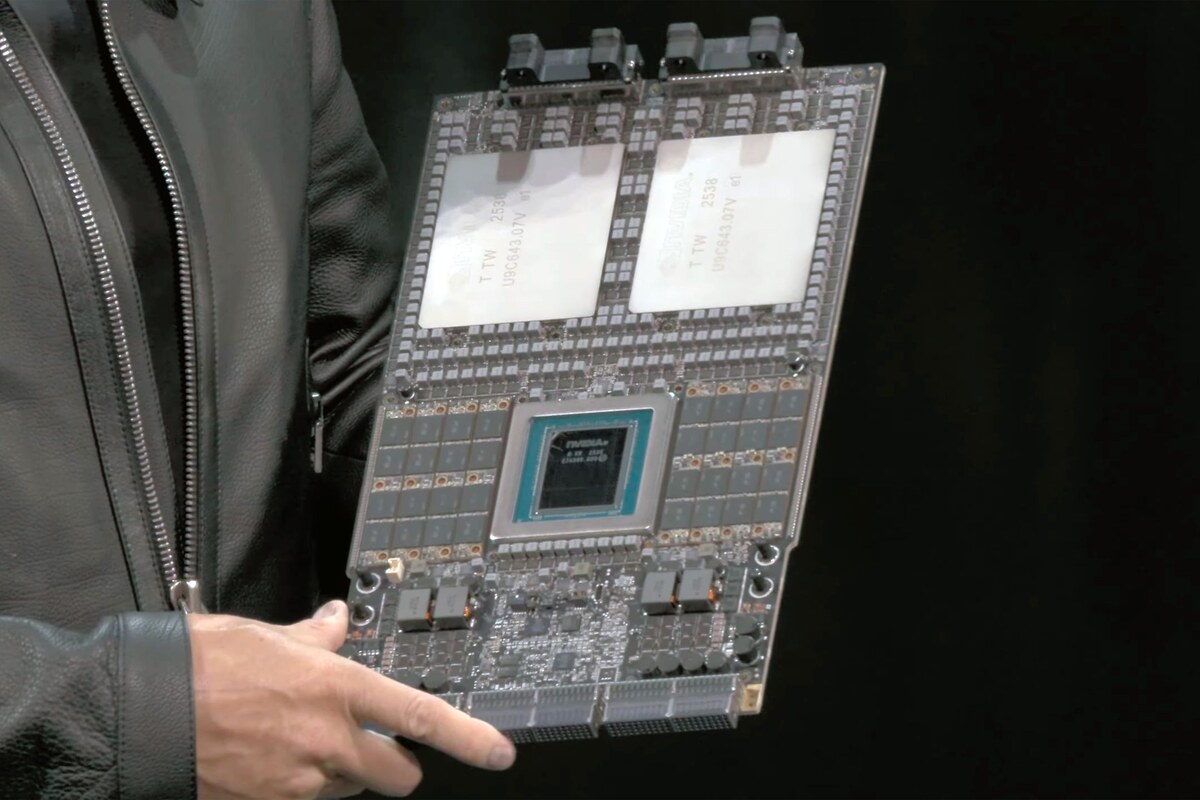

The Vera Rubin Superchip, shown at GTC 2025. Each unit houses one 88-core Vera CPU flanked by two Rubin R200 GPUs, connected by NVLink-C2C. 72 of these modules fill a complete NVL144 rack.

Source: tomshardware.com

The Vera Rubin Superchip, shown at GTC 2025. Each unit houses one 88-core Vera CPU flanked by two Rubin R200 GPUs, connected by NVLink-C2C. 72 of these modules fill a complete NVL144 rack.

Source: tomshardware.com

The Superchip concept is what makes the NVL144 architecturally distinct from prior NVIDIA rack systems. In the GB200 and GB300 generation, the GB200 "Superchip" was a Grace CPU + 2 Blackwell GPUs package - but the NVL144 takes this further by using a new Vera CPU specifically co-designed for the Rubin generation. Vera's 88 Olympus-class ARM cores run 176 threads via spatial multithreading, support up to 1.5 TB of LPDDR5X, and connect to each R200 GPU pair via NVLink-C2C at 1.8 TB/s. That bidirectional coherent link means the CPU and GPUs share an address space, cutting the overhead of CPU-GPU data transfers that bottleneck agentic and multi-step inference workloads.

The 144-GPU density also matters for NVLink domain scale. All 144 R200 GPUs in the rack form a unified NVLink scale-up fabric, enabling any GPU to access any other GPU's HBM4 at NVLink speeds without crossing an InfiniBand hop. For model parallelism - where a frontier model's layers are split across dozens of GPUs - staying within a single NVLink domain is a significant advantage. The full aggregate NVLink bandwidth within the rack is major, though NVIDIA hasn't published an exact NVL144 total figure; the R200 contributes 3.6 TB/s bidirectional per GPU via NVLink 6.

Key Specifications

| Specification | Details |

|---|---|

| Manufacturer | NVIDIA |

| System Type | Rack-scale AI supercomputer |

| Architecture | Rubin (R200 GPU + Vera CPU) |

| GPUs per Rack | 144 (Rubin R200) |

| Superchips per Rack | 72 (each: 1 Vera CPU + 2 R200 GPUs) |

| CPUs per Rack | 36 Vera CPUs (88-core Arm-based each) |

| Total GPU Memory | 20,736 GB HBM4 (~20.7 TB) |

| Memory per Superchip | 288 GB HBM4 |

| Memory per R200 GPU | 144 GB HBM4 |

| Memory Bandwidth per GPU | Up to 20.5 TB/s (HBM4 at 10 Gbps) |

| FP4 Performance (system) | 3.6 NVFP4 ExaFLOPS |

| FP4 Performance per GPU | ~25 PFLOPS |

| FP4 Performance per Superchip | ~50 PFLOPS |

| FP8 Performance (system) | 1.2 FP8 ExaFLOPS |

| FP8 Performance per GPU | ~8.3 PFLOPS |

| FP8 Performance per Superchip | ~16 PFLOPS |

| NVLink per GPU | NVLink 6 (3.6 TB/s bidirectional) |

| NVLink-C2C (CPU-GPU) | 1.8 TB/s per Superchip |

| GPU TDP | ~1,800W per GPU (estimated) |

| Total Rack Power | ~120-130 kW (estimated) |

| Cooling | Liquid cooling (required) |

| Availability | H2 2026 |

| Pricing | Not announced |

Per-GPU Specifications

| Specification | Rubin R200 (per GPU) |

|---|---|

| Architecture | Rubin (dual compute die) |

| HBM4 Memory | 144 GB |

| Memory Bandwidth | Up to 20.5 TB/s |

| NVFP4 Compute (sparse) | ~25 PFLOPS |

| FP8 Compute (dense) | ~8.3 PFLOPS |

| NVLink 6 Bandwidth | 3.6 TB/s bidirectional |

| NVLink-C2C (to Vera CPU) | 1.8 TB/s |

| Estimated TDP | ~1,800W |

| Process Node | TSMC N3P (estimated) |

Note on memory: each R200 GPU in the Superchip package has 144 GB HBM4. The "288 GB per Superchip" figure refers to both GPUs in the package combined. NVIDIA's marketing often cites per-Superchip memory, which can be misleading when comparing to single-GPU products.

Performance Benchmarks

NVIDIA's claimed NVL144 numbers against prior systems:

| Metric | GB300 NVL72 | Vera Rubin NVL144 | Improvement |

|---|---|---|---|

| FP4 Performance | ~1.09 ExaFLOPS | 3.6 ExaFLOPS | 3.3x |

| FP8 Performance | ~0.75 ExaFLOPS | 1.2 ExaFLOPS | 1.6x |

| GPU Count | 72 | 144 | 2x |

| GPU Memory per GPU | 288 GB HBM3e | 144 GB HBM4 | -50% capacity, higher bandwidth |

| Memory Bandwidth per GPU | 8 TB/s | 20.5 TB/s | 2.6x |

| Total Rack Memory | ~20.7 TB | ~20.7 TB | Similar |

A few of these numbers deserve scrutiny. The FP4 performance jump from ~1.09 to 3.6 ExaFLOPS (3.3x) comes from doubling the GPU count and a significant per-GPU FP4 uplift - the R200 delivers ~25 PFLOPS FP4 versus ~15 PFLOPS for the B300 in the GB300 NVL72, a 1.67x per-GPU gain. The FP8 improvement is more modest at 1.6x system-wide.

The memory situation is interesting. Per-GPU HBM4 capacity is 144 GB on the R200 versus 288 GB on the B300 in the GB300 NVL72. The NVL144 packs twice the GPUs so total rack memory stays roughly the same at ~20.7 TB - but the memory bandwidth per GPU more than doubles (20.5 TB/s vs 8 TB/s) thanks to HBM4's wider interface. For memory-bandwidth-bound inference - which describes most autoregressive LLM decoding - that bandwidth jump is arguably more impactful than raw FP4 compute numbers.

vs. AMD Helios (MI450X)

AMD's competing rack-scale system, the Instinct MI450X Helios, targets similar workloads for a similar H2 2026 timeframe. Direct specification comparisons are difficult because AMD has disclosed fewer details. AMD has showed competitive FP8 performance and HBM4 deployment, but hasn't published system-level ExaFLOPS figures for Helios that can be directly compared. Until both systems ship and independent benchmarks run on real workloads, any head-to-head comparison would be premature. The competitive landscape here is truly uncertain.

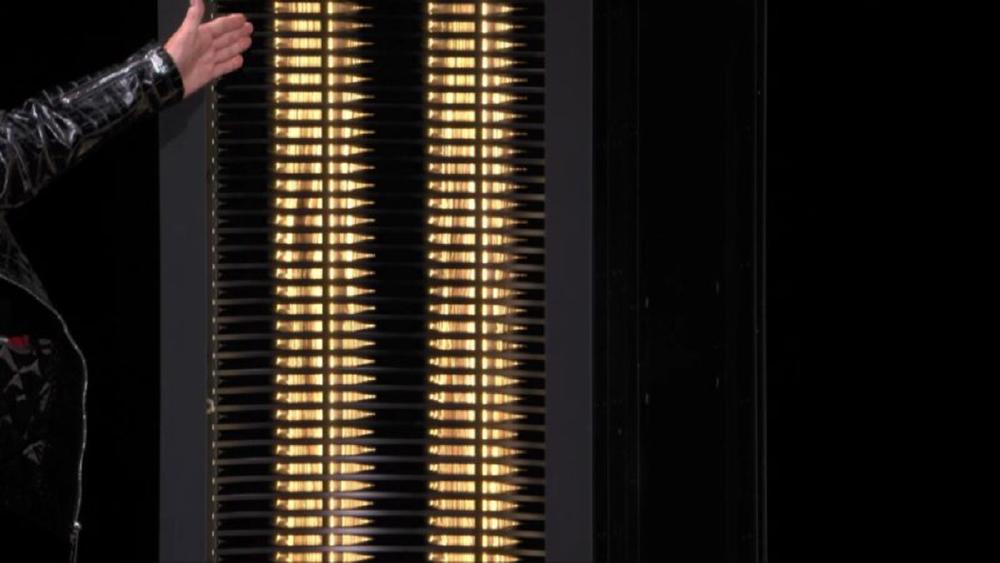

The Vera Rubin rack system shown at CES 2026. The NVL144 puts 144 R200 GPUs across 72 Superchip modules into a single fully liquid-cooled rack.

Source: servethehome.com

The Vera Rubin rack system shown at CES 2026. The NVL144 puts 144 R200 GPUs across 72 Superchip modules into a single fully liquid-cooled rack.

Source: servethehome.com

Key Capabilities

Vera CPU Integration. The Vera CPU is the biggest architectural departure from prior NVIDIA rack systems. With 88 Olympus-class ARM v9.2 cores (176 threads via spatial multithreading), up to 1.5 TB LPDDR5X at 1.2 TB/s bandwidth, and NVLink-C2C coherent connectivity to both R200 GPUs in the Superchip, the Vera CPU is a serious compute unit - not just a control plane. This matters for agentic AI workloads, where multi-step inference pipelines alternate between reasoning on the GPU and orchestration logic on the CPU. A faster, higher-bandwidth CPU reduces the coordination overhead between steps, which can compound across long reasoning chains.

The NVLink-C2C link at 1.8 TB/s per Superchip means the Vera CPU and its two R200 GPUs share a coherent memory address space. In practice, this means a GPU can access LPDDR5X on the Vera CPU - and the CPU can access HBM4 on the GPU - without explicit copy operations. For workloads that mix CPU-side data processing with GPU inference, this collapses a bottleneck that costs real throughput on systems where PCIe is the CPU-GPU bridge.

NVLink 6 Interconnect. Each R200 GPU supports 36 NVLink 6 connections at 3.6 TB/s bidirectional. Across 144 GPUs in the NVL144, this forms a large all-to-all scale-up fabric within the rack. NVIDIA hasn't disclosed the total aggregate NVLink bandwidth for the NVL144 (the NVL72 figure for the standard Vera Rubin NVL72 is 260 TB/s, and the NVL144 would be expected to deliver more - though the topology details aren't fully public). The key point is that all 144 GPUs share this fabric, enabling large model parallelism patterns without the latency penalty of crossing to InfiniBand.

Scale-Up vs Scale-Out. The NVL144's NVLink domain handles scale-up - tight communication within a rack. Scale-out to additional racks still relies on ConnectX-9 SuperNICs and InfiniBand (or Spectrum-X Ethernet). For training runs that span multiple racks, the inter-rack bandwidth is the bottleneck, not NVLink. This isn't unique to the NVL144; it applies to every multi-rack system NVIDIA has built. But it's worth being explicit: the performance advantages of the NVL144's NVLink fabric are fully realized only for workloads that fit within a single rack's 144-GPU domain.

HBM4 Memory Subsystem. The R200's HBM4 at up to 20.5 TB/s per GPU is nearly 2.6x the bandwidth of the B300's HBM3e (8 TB/s). This is the most meaningful spec for LLM inference, where autoregressive token generation is typically constrained by how fast the GPU can read model weights and KV-cache from memory. The 2.6x bandwidth improvement means the NVL144 can theoretically sustain 2.6x more tokens per second on memory-bandwidth-bound inference workloads, even before accounting for FP4 compute gains. For a 70B model serving latency-sensitive requests, memory bandwidth often matters more than peak FLOPS.

Pricing and Availability

NVIDIA hasn't announced pricing for the NVL144. For context, the GB200 NVL72 was reportedly priced around $2-3 million per rack. The NVL144 is expected to cost more - it doubles the GPU count, uses HBM4 (a newer and initially costlier memory technology), and adds the new Vera CPU. Industry estimates place it above the GB300 NVL72's projected $3-4 million range, but specific figures aren't available.

| Detail | Information |

|---|---|

| Pricing | Not announced |

| Reference: GB200 NVL72 | ~$2-3M reported |

| Reference: GB300 NVL72 | ~$3-4M estimated |

| Availability | H2 2026 |

| Cloud providers | AWS, Azure, GCP, OCI, CoreWeave, Lambda, Nebius (confirmed for Rubin) |

| Cooling requirement | Liquid cooling (required) |

Cloud availability for Vera Rubin instances is confirmed from AWS, Google Cloud, Microsoft Azure, and OCI, plus NVIDIA Cloud Partners including CoreWeave and Lambda. However, the NVL144 specifically - versus the standard Vera Rubin NVL72 (72-GPU variant) - hasn't been separately confirmed for all cloud providers. Some providers may initially deploy the NVL72 configuration before offering NVL144 capacity.

For on-premises deployment, the power and cooling requirements are substantial. NVIDIA hasn't released an exact NVL144 TDP figure, but with ~1,800W estimated per R200 GPU and 144 GPUs, the raw GPU power alone reaches around 260 kW before system overhead - which would imply total rack power well above the ~120 kW of the GB300 NVL72. NVIDIA's official rack power spec hasn't been published for the NVL144 specifically; the 120-130 kW estimate in the front matter is provisional and should be treated as such.

Strengths and Weaknesses

Strengths

- 3.6 NVFP4 ExaFLOPS system-wide represents the highest-density single-rack FP4 compute NVIDIA has announced

- 20.5 TB/s memory bandwidth per R200 GPU - 2.6x the GB300 NVL72 - directly addresses the memory bandwidth bottleneck in LLM inference

- 144-GPU NVLink 6 domain enables large model parallelism within a single rack without InfiniBand hops

- Vera CPU's 88-core design with NVLink-C2C coherency reduces CPU-GPU coordination overhead for agentic workloads

- Same rack form factor allows datacenters already built for Blackwell liquid cooling to adapt infrastructure

- HBM4 provides not just higher bandwidth but also improved energy efficiency per bit over HBM3e

- 72 Vera CPUs (36 Superchips) provide major CPU-side processing for data preprocessing and orchestration

Weaknesses

- Not shipping yet - H2 2026 at best, with supply constraints likely extending meaningful availability into 2027

- No independent benchmarks exist; all performance claims come from NVIDIA

- Per-GPU HBM4 capacity is 144 GB versus 288 GB on the B300 - half the capacity per GPU, which can matter for very large models or long KV-cache workloads

- Pricing not disclosed, and the hardware profile suggests a significant premium over the GB300 NVL72

- Estimated TDP per GPU around 1,800W requires liquid cooling infrastructure that isn't cheap to retrofit

- NVLink advantages are confined to within the rack; multi-rack scale-out still requires InfiniBand with its associated latency and cost

- Rubin Ultra (with HBM4e) is already on NVIDIA's roadmap for 2027, which may give some buyers pause about committing to the NVL144

Related Coverage

- NVIDIA GB300 NVL72 - Blackwell Ultra Rack - The predecessor system the NVL144 aims to replace

- NVIDIA Rubin R200 - Next-Gen AI Superchip - The individual R200 GPU that powers the NVL144

- NVIDIA Rubin CPX - Inference GPU With GDDR7 - The inference-specialized variant in the Vera Rubin NVL144 CPX platform

Sources

- NVIDIA Vera Rubin NVL72 Product Page

- NVIDIA Newsroom - Rubin Platform AI Supercomputer

- Inside the NVIDIA Rubin Platform - NVIDIA Technical Blog

- NVIDIA Vera Rubin Platform In Depth - Tom's Hardware

- NVIDIA Reveals Vera Rubin Superchip for the First Time - Tom's Hardware

- NVIDIA Launches Next-Generation Rubin AI Compute Platform at CES 2026 - ServeTheHome

- Supermicro NVIDIA Vera Rubin Platform Page

- Supermicro Announces NVL144 Support - PRNewswire

- NVIDIA Vera Rubin Platform Obsoletes Current AI Iron - The Next Platform

- NVIDIA Rubin Architecture - Wikipedia

✓ Last verified March 15, 2026