NVIDIA Rubin CPX - Inference GPU With GDDR7

Full specs, benchmarks, and analysis of the NVIDIA Rubin CPX - a purpose-built inference GPU with 128GB GDDR7, 30 PFLOPS NVFP4, and 3x faster attention versus Blackwell, targeting million-token context workloads.

TL;DR

- Purpose-built inference GPU with 128GB GDDR7 memory - a deliberate trade of HBM bandwidth for massive capacity at lower cost per byte

- 30 PFLOPS NVFP4 sparse compute and around 20 PFLOPS dense (estimated) from 192 streaming multiprocessors on TSMC's N3P process

- 3x faster attention versus GB300 NVL72, per NVIDIA, with the hardware mechanism undisclosed - targeting million-token context windows

- PCIe Gen 6 and no NVLink - designed for disaggregated inference racks, not tightly-coupled training clusters

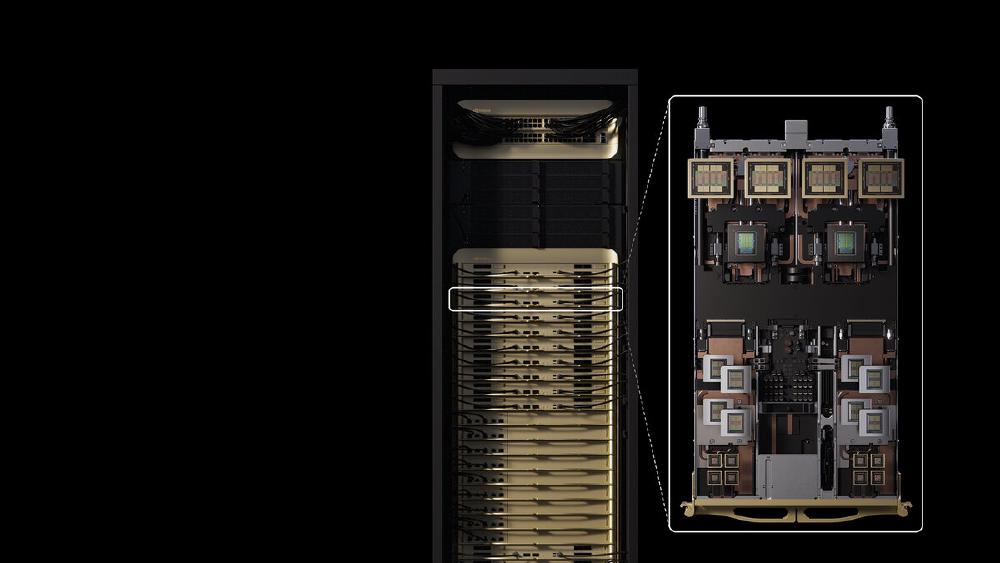

- Ships end of 2026 as part of the Vera Rubin NVL144 CPX platform - 144 CPX GPUs plus 144 Rubin GPUs and 36 Vera CPUs in a single rack

Overview

The NVIDIA Rubin CPX is a new class of product for NVIDIA - a GPU designed exclusively for inference, and specifically for the prefill and context-processing phase of large language model serving. Announced at the AI Infrastructure Summit in September 2025, the CPX breaks several NVIDIA conventions simultaneously. It uses GDDR7 instead of HBM. It connects over PCIe Gen 6 without NVLink. And it ships as part of a heterogeneous rack system with standard Rubin GPUs rather than as a standalone product.

The reasoning behind the architecture is straightforward. The prefill phase of LLM inference - where the model processes the full input context before generating tokens - is compute-bound, not memory-bandwidth-bound. A million-token prompt needs enormous compute to process all the attention layers across the full context, but the memory access pattern is sequential and predictable, and every weight fetched from memory is reused across many tokens. HBM's extreme bandwidth (about 8 TB/s per GPU in a GB300 NVL72) is more than this access pattern needs. GDDR7, at roughly 2 TB/s and a fraction of the cost per gigabyte, provides enough bandwidth while enabling 128GB of capacity per GPU at a price point that HBM can't match.
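The compute-bound versus bandwidth-bound distinction can be made concrete with a simple roofline model. The sketch below is illustrative only: it plugs in the article's approximate per-GPU figures (sparse NVFP4 peaks, so absolute numbers are optimistic), and the intensity model (2 FLOPs per parameter per token, 0.5 bytes per NVFP4 weight, each weight read once per pass) is an assumption rather than anything NVIDIA has published.

```python
# Roofline sketch: attainable throughput = min(peak compute, intensity * bandwidth).
# Peak and bandwidth values are the article's approximate per-GPU figures.

def ridge_point(peak_flops: float, bandwidth_bytes_per_s: float) -> float:
    """Arithmetic intensity (FLOP/byte) above which a kernel is compute-bound."""
    return peak_flops / bandwidth_bytes_per_s


def attainable_flops(intensity: float, peak_flops: float,
                     bandwidth_bytes_per_s: float) -> float:
    """Upper bound on throughput for a kernel with the given intensity."""
    return min(peak_flops, intensity * bandwidth_bytes_per_s)


def weight_matmul_intensity(tokens_in_flight: int,
                            bytes_per_param: float = 0.5) -> float:
    """Assumed model: 2 FLOPs per parameter per token, weights read once per pass."""
    return 2.0 * tokens_in_flight / bytes_per_param


CPX = {"peak": 30e15, "bw": 2e12}     # ~30 PFLOPS NVFP4 sparse, ~2 TB/s GDDR7
GB300 = {"peak": 21e15, "bw": 8e12}   # ~21 PFLOPS NVFP4 sparse, ~8 TB/s HBM3e

for name, gpu in (("Rubin CPX", CPX), ("GB300", GB300)):
    print(f"{name}: compute-bound above "
          f"{ridge_point(gpu['peak'], gpu['bw']):,.0f} FLOP/byte")

# Prefill processes thousands of tokens per weight read; decode processes one.
for label, tokens in (("prefill chunk (8192 tokens)", 8192), ("decode (1 token)", 1)):
    intensity = weight_matmul_intensity(tokens)
    bound = attainable_flops(intensity, CPX["peak"], CPX["bw"])
    print(f"{label}: {intensity:,.0f} FLOP/byte -> {bound / 1e15:.3f} PFLOPS on CPX")
```

Under these assumptions a large prefill chunk sits above the CPX's ridge point and is limited by compute, while single-token decode sits far below it and is limited by the ~2 TB/s of memory bandwidth.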

The result is a chip that does one thing exceptionally well: it chews through the compute-intensive context-processing phase of inference, then hands off to the standard Rubin GPUs in the same rack for the memory-bandwidth-sensitive decode phase. This prefill/decode disaggregation is the architectural insight behind the entire Vera Rubin NVL144 CPX platform.

NVIDIA positioned the CPX against the growing trend of million-token context windows in production models. As context lengths scale from 128K to 1M tokens and beyond, the attention portion of prefill compute grows quadratically with context length. FlashAttention-style kernels cut attention's memory footprint from quadratic to linear in sequence length, but they still compute exact attention, so the FLOP count stays quadratic. The CPX's 3x attention performance improvement over Blackwell and its 128GB of memory are specifically designed to make million-token prefill economically viable at scale.
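To see how quickly attention comes to dominate, the following sketch compares attention FLOPs with linear-projection FLOPs as context grows. The model shape (70B parameters, 80 layers, hidden size 8192, causal masking) is a hypothetical Llama-style configuration chosen for illustration, and the FLOP formulas are the usual back-of-envelope approximations, not measured values.

```python
# Back-of-envelope prefill FLOPs for a hypothetical 70B-class dense transformer.
# Assumed shape: 80 layers, hidden size 8192 (not taken from the article).

def linear_flops(n_tokens: int, n_params: float = 70e9) -> float:
    """Weight matmuls: about 2 FLOPs per parameter per token (linear in n)."""
    return 2.0 * n_params * n_tokens


def attention_flops(n_tokens: int, n_layers: int = 80, d_model: int = 8192,
                    causal: bool = True) -> float:
    """QK^T plus attention-times-V: about 4 * n^2 * d FLOPs per layer.

    Causal masking skips roughly half of the score matrix.
    """
    full = 4.0 * n_layers * d_model * n_tokens**2
    return full / 2 if causal else full


for n in (128_000, 256_000, 512_000, 1_000_000):
    ratio = attention_flops(n) / linear_flops(n)
    print(f"{n:>9,} tokens: attention / linear = {ratio:.1f}x")
```

With these assumptions the two costs are roughly equal near 128K tokens, and attention is between 9x and 10x the linear cost at 1M tokens, which is in the same range as the roughly 10x figure used in the attention analysis later in this article.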

Key Specifications

| Specification | Details |
|---|---|
| Manufacturer | NVIDIA |
| Architecture | Rubin CPX |
| Process Node | TSMC N3P (3nm-class) |
| Die Design | Monolithic |
| Streaming Multiprocessors | 192 |
| NVFP4 Compute (sparse) | 30 PFLOPS |
| NVFP4 Compute (dense) | ~20 PFLOPS (estimated) |
| FP8 Compute | ~15 PFLOPS (estimated) |
| Attention Performance | 3x vs GB300 NVL72 |
| Memory | 128 GB GDDR7 |
| Memory Bandwidth | ~2,000 GB/s (~2 TB/s) |
| Memory Interface | Wide GDDR7 bus |
| Host Interface | PCIe Gen 6 |
| NVLink | None |
| TDP | ~800-880W |
| Cooling | Liquid cooling |
| Rack Configuration | 144 CPX GPUs per Vera Rubin NVL144 CPX |
| Release Date | End of 2026 |

The monolithic die design is notable. While the B200 and B300 use dual-die designs with two dies connected via a high-bandwidth bridge, the Rubin CPX is a single die manufactured on TSMC's N3P process. This means simpler manufacturing (no multi-chip module assembly), lower latency between SM clusters, and potentially higher yield - though the exact die size, which largely determines yield, has not been disclosed.

The 192 SMs represent an increase over the B300's 160 SMs, enabled by the N3P process shrink from 4NP. More SMs means more parallel compute, which directly benefits the attention computation that dominates prefill workloads.

Performance Benchmarks

| Metric | GB300 NVL72 (per GPU) | Rubin CPX (per GPU) | Comparison |
|---|---|---|---|
| NVFP4 Compute (sparse) | ~21 PFLOPS | 30 PFLOPS | CPX +43% |
| Attention Performance | 1x (baseline) | 3x | CPX 3x faster |
| Memory Capacity | 288 GB HBM3e | 128 GB GDDR7 | GB300 2.25x |
| Memory Bandwidth | 8,000 GB/s | ~2,000 GB/s | GB300 4x |
| Memory Type | HBM3e | GDDR7 | Different class |
| Host Interface | NVLink-C2C (to Grace) | PCIe Gen 6 | Different |
| Inter-GPU Fabric | NVLink 5 (1,800 GB/s) | None | GB300 only |
| TDP | 1,400W | ~800-880W | CPX ~40% lower |
| Process Node | TSMC 4NP | TSMC N3P | CPX 1 node ahead |

Rack-Scale Comparison

| Metric | GB300 NVL72 | Vera Rubin NVL144 CPX (CPX GPUs only) | NVL144 CPX (full rack) |
|---|---|---|---|
| GPUs | 72 (B300) | 144 (Rubin CPX) | 144 CPX + 144 Rubin |
| NVFP4 Compute | ~1,080 PFLOPS | ~4,320 PFLOPS | ~8,000 PFLOPS (8 EFLOPS) |
| Total Memory | 20.7 TB | ~18.4 TB | ~100 TB |
| Memory Bandwidth | 576 TB/s | ~288 TB/s | ~1,700 TB/s |
| Power | ~120 kW | ~127 kW | ~370 kW |

The Vera Rubin NVL144 CPX rack is the full system that puts the CPX in context. It contains 144 Rubin CPX GPUs for prefill processing, 144 standard Rubin GPUs (with HBM4 for high-bandwidth decode), and 36 Vera Arm CPUs. The combined 8 exaFLOPS of NVFP4 compute and ~100 TB of total memory represent a generational leap over the GB300 NVL72, though direct comparison is complicated by the heterogeneous architecture.
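Most of the rack-level figures in the table are per-GPU numbers multiplied by GPU count, which is easy to check. The sketch below is a sanity check using only values from this article; the dictionary layout and function name are illustrative, and the upper end of the CPX TDP range is used for power.

```python
# Recompute rack-scale aggregates from the article's per-GPU figures.

RUBIN_CPX = {"nvfp4_pflops": 30, "memory_gb": 128, "bandwidth_tbs": 2.0, "tdp_w": 880}
B300 = {"nvfp4_pflops": 21, "memory_gb": 288, "bandwidth_tbs": 8.0, "tdp_w": 1400}


def rack_totals(gpu_count: int, gpu: dict) -> dict:
    """Scale per-GPU figures to a rack (GPUs only, no CPUs, switches, or cooling)."""
    return {
        "nvfp4_pflops": gpu_count * gpu["nvfp4_pflops"],
        "memory_tb": gpu_count * gpu["memory_gb"] / 1000,
        "bandwidth_tbs": gpu_count * gpu["bandwidth_tbs"],
        "power_kw": gpu_count * gpu["tdp_w"] / 1000,
    }


cpx_rack = rack_totals(144, RUBIN_CPX)
gb300_rack = rack_totals(72, B300)

for name, totals in (("144x Rubin CPX", cpx_rack), ("72x B300", gb300_rack)):
    print(name, {key: round(value, 1) for key, value in totals.items()})
```

The CPX column reproduces cleanly (about 4,320 PFLOPS, 18.4 TB, 288 TB/s, 127 kW). For the GB300 NVL72, 72 x ~21 PFLOPS gives about 1,512 PFLOPS rather than the table's ~1,080, which corresponds to 15 PFLOPS per GPU, so the two tables may be mixing sparse and dense bases. GPU-only power for the GB300 also comes out near 101 kW, with the remainder of the ~120 kW presumably going to CPUs, switches, and other rack components.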

Attention Performance Analysis

The 3x attention improvement over Blackwell is the CPX's defining feature. NVIDIA hasn't disclosed the exact hardware mechanism, but the improvement likely combines:

- Dedicated attention hardware blocks beyond the general-purpose Tensor Cores - potentially fixed-function units for common attention patterns

- Larger on-chip SRAM / Tensor Memory to hold attention intermediates (QK^T products, softmax results) without round-tripping to GDDR7

- Architectural improvements in the SM from the N3P process node, providing more transistors per SM for dedicated attention logic

For a million-token prefill on a 70B model, the attention compute is roughly 10x the linear projection compute. A 3x improvement in attention throughput translates to approximately 2.5x faster end-to-end prefill for long-context workloads - a massive improvement that directly reduces time-to-first-token for users waiting on long document processing.
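The step from "3x faster attention" to "about 2.5x faster prefill" is an application of Amdahl's law: only the attention share of the work is accelerated. A minimal sketch, taking the article's 10:1 attention-to-linear ratio as given and assuming the linear projections run at unchanged speed:

```python
# Amdahl's law for prefill: only the attention portion is sped up.

def end_to_end_speedup(attention_to_linear_ratio: float,
                       attention_speedup: float) -> float:
    """Overall speedup when attention is accelerated and the rest is not."""
    attention_fraction = attention_to_linear_ratio / (attention_to_linear_ratio + 1.0)
    remaining_time = (1.0 - attention_fraction) + attention_fraction / attention_speedup
    return 1.0 / remaining_time


speedup = end_to_end_speedup(attention_to_linear_ratio=10.0, attention_speedup=3.0)
print(f"end-to-end prefill speedup: {speedup:.2f}x")
```

With attention at 10/11 of the work, the result is 33/13, or roughly 2.5x. The same function shows the ceiling: even an infinitely fast attention unit could not exceed 11x at this ratio, because the linear projections would then dominate.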

Key Capabilities

GDDR7 Instead of HBM. The CPX pairs flagship-class compute with GDDR7 rather than the HBM used on NVIDIA's other top-end datacenter accelerators. The choice is economic rather than technical. GDDR7 provides around 2 TB/s of bandwidth from 128GB of capacity at a fraction of the cost of an equivalent HBM3e configuration. For prefill workloads where the memory access pattern is dominated by sequential weight reads (rather than the bandwidth-bound reads of decode that benefit from HBM's ~8 TB/s), GDDR7 bandwidth is sufficient.

The cost advantage is significant. HBM3e at 288GB (as in the GB300) adds an estimated $5,000-8,000 to the GPU's bill of materials. GDDR7 at 128GB likely costs $500-1,000 for the memory modules. Taken at face value, those estimates put the CPX's memory bill roughly 5-16x below the GB300's in absolute terms, or about 2-7x lower per gigabyte. That reduction feeds directly into a lower per-GPU price, enabling NVIDIA to pack 144 CPX GPUs into a rack at a price point that would be impossible with HBM.
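Because the two memory configurations differ in capacity as well as price, it helps to separate the absolute cost gap from the per-gigabyte gap. The sketch below works only from the article's estimated bill-of-materials ranges, which are themselves unverified; the helper names are illustrative.

```python
# Compare the article's estimated memory costs in absolute and per-GB terms.

def per_gb(cost_range: tuple, capacity_gb: float) -> tuple:
    """Convert a (low, high) total-cost range into a (low, high) cost per GB."""
    low, high = cost_range
    return (low / capacity_gb, high / capacity_gb)


def ratio_range(numerator: tuple, denominator: tuple) -> tuple:
    """Smallest and largest possible ratio between two (low, high) ranges."""
    return (numerator[0] / denominator[1], numerator[1] / denominator[0])


HBM3E_COST_USD = (5000.0, 8000.0)   # for 288 GB, article's estimate
GDDR7_COST_USD = (500.0, 1000.0)    # for 128 GB, article's estimate

absolute = ratio_range(HBM3E_COST_USD, GDDR7_COST_USD)
per_gigabyte = ratio_range(per_gb(HBM3E_COST_USD, 288), per_gb(GDDR7_COST_USD, 128))

print(f"absolute memory cost gap: {absolute[0]:.1f}x to {absolute[1]:.1f}x")
print(f"per-GB cost gap:          {per_gigabyte[0]:.1f}x to {per_gigabyte[1]:.1f}x")
```

The per-gigabyte gap is narrower than the absolute gap because part of the saving comes simply from shipping 128GB instead of 288GB.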

The trade-off is real: at ~2 TB/s versus ~8 TB/s, the CPX can't serve decode (autoregressive token generation) workloads efficiently. Each generated token requires re-reading the model weights and the full KV cache from memory, and the bandwidth-to-compute ratio of GDDR7 is too low for this access pattern. This is why the CPX is paired with standard Rubin GPUs (which have HBM4) for decode.
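A rough upper bound on decode speed follows directly from bandwidth: every decode step has to stream the weights and KV cache through the memory interface once. The sketch below assumes a hypothetical 70B-parameter model stored at 0.5 bytes per parameter (NVFP4) and ignores compute, batching, and caching effects, so treat the numbers as ceilings rather than predictions.

```python
# Bandwidth-bound ceiling on decode steps per second for a single GPU.

def max_decode_steps_per_s(bandwidth_bytes_per_s: float, weight_bytes: float,
                           kv_cache_bytes: float = 0.0) -> float:
    """Each step reads all weights plus the KV cache once from memory."""
    return bandwidth_bytes_per_s / (weight_bytes + kv_cache_bytes)


WEIGHT_BYTES = 70e9 * 0.5          # hypothetical 70B model at 4 bits per weight
GDDR7_BW = 2e12                    # ~2 TB/s (Rubin CPX, per the article)
HBM3E_BW = 8e12                    # ~8 TB/s (GB300, per the article)

for name, bandwidth in (("GDDR7 @ 2 TB/s", GDDR7_BW), ("HBM3e @ 8 TB/s", HBM3E_BW)):
    no_kv = max_decode_steps_per_s(bandwidth, WEIGHT_BYTES)
    with_kv = max_decode_steps_per_s(bandwidth, WEIGHT_BYTES, kv_cache_bytes=40e9)
    print(f"{name}: <= {no_kv:.0f} steps/s (weights only), "
          f"<= {with_kv:.0f} steps/s with a hypothetical 40 GB KV cache")
```

Whatever the model size, the ceiling scales linearly with bandwidth, so the 4x bandwidth gap becomes a 4x gap in the best-case decode rate per GPU.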

Disaggregated Inference Architecture. The Vera Rubin NVL144 CPX platform implements what NVIDIA calls "disaggregated inference" - splitting the prefill and decode phases across specialized hardware within a single rack. The CPX GPUs handle prefill (compute-heavy, bandwidth-light), and the Rubin GPUs handle decode (bandwidth-heavy, compute-light).

This disaggregation addresses a fundamental inefficiency in current LLM serving. On a standard GPU like the H100 or B200, the same hardware serves both prefill and decode, meaning it's alternately compute-underutilized (during decode) and bandwidth-underutilized (during prefill). By splitting these phases across purpose-built hardware, the NVL144 CPX platform achieves higher overall utilization and better cost efficiency.
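The control flow of prefill/decode disaggregation can be illustrated with a toy scheduler. This is a conceptual sketch only: the class and method names are hypothetical, no real serving framework or NVIDIA API is being modeled, and the KV-cache handoff is reduced to a flag.

```python
# Toy model of disaggregated serving: one pool prefills, another decodes.
from collections import deque
from dataclasses import dataclass


@dataclass
class Request:
    request_id: int
    prompt_tokens: int
    max_new_tokens: int
    kv_cache_ready: bool = False
    generated: int = 0


class DisaggregatedServer:
    """Hypothetical two-stage pipeline: prefill pool -> decode pool."""

    def __init__(self) -> None:
        self.prefill_queue: deque = deque()
        self.decode_queue: deque = deque()
        self.done: list = []

    def submit(self, request: Request) -> None:
        self.prefill_queue.append(request)

    def prefill_step(self) -> None:
        """Prefill pool: process one whole prompt, then hand off to decode."""
        if not self.prefill_queue:
            return
        request = self.prefill_queue.popleft()
        request.kv_cache_ready = True  # stands in for building and moving the KV cache
        self.decode_queue.append(request)

    def decode_step(self) -> None:
        """Decode pool: emit one token for every in-flight request."""
        for _ in range(len(self.decode_queue)):
            request = self.decode_queue.popleft()
            request.generated += 1
            if request.generated >= request.max_new_tokens:
                self.done.append(request)
            else:
                self.decode_queue.append(request)


server = DisaggregatedServer()
server.submit(Request(request_id=0, prompt_tokens=500_000, max_new_tokens=2))
server.submit(Request(request_id=1, prompt_tokens=1_000_000, max_new_tokens=3))
server.prefill_step()
server.prefill_step()
for _ in range(3):
    server.decode_step()
print([r.request_id for r in server.done])
```

In a real deployment the two step functions would run concurrently on different hardware, which is the point of the design: the prefill pool can stay saturated with compute-heavy work while the decode pool batches many bandwidth-heavy token steps.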

PCIe Gen 6, No NVLink. The absence of NVLink is a deliberate design choice. Prefill workloads on a single model can be parallelized across CPX GPUs via PCIe Gen 6 at ~256 GB/s per GPU (the bidirectional figure, assuming an x16 link at about 128 GB/s each way), which is sufficient for distributing prefill context chunks across GPUs. The tightly-coupled NVLink fabric (at 1,800 GB/s per GPU in the GB300 NVL72) is unnecessary because prefill doesn't require the constant all-reduce communication patterns that training workloads demand.

Removing NVLink also removes the NVLink switches, reduces the die area dedicated to interconnect, and simplifies the rack-level wiring - all of which contribute to fitting 144 GPUs into a single rack at manageable cost and power.
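One way to judge whether PCIe is "sufficient" is to compare the time spent moving data with the time spent computing. The sketch below uses the article's link figures and its estimated ~20 PFLOPS dense peak; the 50 GB payload, the 1.45e18 FLOP workload (roughly a 1M-token prefill on a 70B-class model under simple assumptions), and the 50% utilization are all hypothetical placeholders.

```python
# Compare data-movement time with compute time for a long prefill.

PCIE6_BYTES_PER_S = 256e9      # article's ~256 GB/s figure (bidirectional)
NVLINK5_BYTES_PER_S = 1800e9   # article's GB300 NVL72 figure


def transfer_seconds(payload_bytes: float, link_bytes_per_s: float) -> float:
    """Idealized transfer time, ignoring latency and protocol overhead."""
    return payload_bytes / link_bytes_per_s


def compute_seconds(flops: float, peak_flops: float, utilization: float = 0.5) -> float:
    """Time to execute `flops` at a given fraction of peak throughput."""
    return flops / (peak_flops * utilization)


payload = 50e9          # hypothetical 50 GB of KV cache handed to a decode GPU
work = 1.45e18          # hypothetical FLOPs for a ~1M-token prefill
cpx_dense_peak = 20e15  # article's estimated dense NVFP4 figure

pcie_time = transfer_seconds(payload, PCIE6_BYTES_PER_S)
nvlink_time = transfer_seconds(payload, NVLINK5_BYTES_PER_S)
prefill_time = compute_seconds(work, cpx_dense_peak)

print(f"PCIe Gen 6 transfer: {pcie_time:.3f} s")
print(f"NVLink 5 transfer:   {nvlink_time:.3f} s")
print(f"prefill compute:     {prefill_time:.0f} s on one GPU")
print(f"transfer share over PCIe: {pcie_time / (pcie_time + prefill_time):.2%}")
```

Under these placeholder numbers the transfer is a fraction of a second against minutes of compute, so the faster link would save little. If only one direction of the link is usable for a handoff, the PCIe transfer time doubles, which does not change the conclusion.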

Pricing and Availability

NVIDIA hasn't disclosed per-GPU or per-rack pricing for the Vera Rubin NVL144 CPX platform. Based on the GDDR7 memory cost advantage and the overall system configuration, the per-CPX-GPU cost is expected to be notably lower than Rubin GPUs with HBM4. The full NVL144 CPX rack, containing 288 total GPUs (144 CPX + 144 Rubin) plus 36 Vera CPUs, will likely be priced in the $5-10 million range based on the component count and system complexity.

| Item | Estimate |
|---|---|
| Vera Rubin NVL144 CPX (full rack) price | $5,000,000 - $10,000,000 (estimated) |
| Per Rubin CPX GPU price | Notably less than HBM-equipped Rubin (estimated) |
| Power per rack | ~370 kW |
| Cooling | Liquid cooling (required) |

Availability is expected at the end of 2026, roughly coinciding with the broader Vera Rubin platform launch.

Who Should Wait for Rubin CPX

| Scenario | Recommendation |
|---|---|
| Serving models with 100K+ token contexts | Strong candidate - 3x attention improvement directly reduces prefill latency |
| High-throughput inference API provider | Strong candidate - disaggregated architecture improves GPU utilization |
| Training workloads | Not relevant - CPX has no NVLink and isn't designed for training |
| Small-scale inference (single GPU) | Not relevant - CPX only ships as part of NVL144 rack system |
| Need compute before end of 2026 | Deploy GB300 NVL72 or existing Blackwell now |

Strengths

- 3x attention performance over GB300 NVL72 directly addresses the prefill bottleneck for million-token context workloads

- 128GB GDDR7 provides large model-weight capacity at a fraction of HBM cost per byte

- 30 PFLOPS NVFP4 sparse compute from 192 SMs on TSMC N3P - the most compute-dense NVIDIA inference GPU announced

- Monolithic die design avoids multi-chip-module complexity and inter-chiplet latency

- ~800-880W TDP is roughly 40% lower than the B300's 1,400W while delivering more NVFP4 compute

- Disaggregated prefill/decode architecture in the NVL144 CPX rack improves overall GPU utilization for inference

- PCIe Gen 6 simplifies system design and removes NVLink switch cost

Weaknesses

- ~2 TB/s GDDR7 bandwidth is about a quarter of HBM3e's ~8 TB/s - the CPX can't efficiently serve decode workloads alone

- No NVLink means no tightly-coupled multi-GPU training capability - this is an inference-only product

- Only available as part of the Vera Rubin NVL144 CPX rack system - no standalone SKU for smaller deployments

- End of 2026 availability means roughly 15 months of waiting from the September 2025 announcement

- ~370 kW per NVL144 CPX rack requires sizable datacenter power and liquid cooling infrastructure

- No independent benchmarks yet - all performance claims are NVIDIA-sourced

- GDDR7 memory has higher latency than HBM, which may affect certain memory access patterns during prefill

- Rack pricing (estimated $5-10M) limits the buyer pool to hyperscalers and large inference providers

Related Coverage

- NVIDIA GB300 NVL72 - Blackwell Ultra Rack - The current-generation rack system that Rubin CPX aims to succeed for inference workloads

- NVIDIA B200 - Blackwell Flagship GPU - Current-generation Blackwell GPU for comparison

- NVIDIA Rubin R200 - The standard Rubin GPU with HBM4 that pairs with CPX in the NVL144 rack

- Groq LPU - Deterministic Inference at Scale - Another inference-specialized accelerator taking a radically different architectural approach

- Google TPU v7 Ironwood - Google's latest AI accelerator competing at the rack scale

Sources

- NVIDIA Unveils Rubin CPX: A New Class of GPU Designed for Massive-Context Inference - NVIDIA Newsroom

- NVIDIA Rubin CPX GPU to Feature 128GB GDDR7 Memory - TweakTown

- NVIDIA Discloses Rubin CPX, an Unexpected Data-Center GPU - XPU.pub

- NVIDIA's New Rubin CPX GPU Delivers 30 PetaFLOPs Compute and 128GB Memory - TechRadar

- NVIDIA Rubin CPX Die Shot Reveals Graphics-Specific Hardware Blocks - Tom's Hardware

- NVIDIA Previews Rubin CPX Graphics Card for Disaggregated Inference - SiliconANGLE

- NVIDIA Vera Rubin Platform In Depth - Tom's Hardware