NVIDIA H200 - Inference-Optimized Hopper

Complete specs, benchmarks, and analysis of the NVIDIA H200 - the HBM3e-equipped Hopper GPU that delivers 76% more memory and 43% more bandwidth than the H100 for inference workloads.

TL;DR

- 141GB HBM3e with 4,800 GB/s bandwidth - 76% more memory and 43% more bandwidth than the H100 on the same Hopper die

- Identical compute to the H100: 3,958 TFLOPS FP8 (with sparsity), 16,896 CUDA cores, 528 fourth-generation Tensor Cores, same Transformer Engine

- The memory and bandwidth upgrade that large language model inference has been waiting for - fits models on fewer GPUs and pushes tokens faster

- Available since late 2024, priced at $25,000-$35,000 - a modest premium over the H100 for a significant inference throughput gain

- Bridge product between Hopper and Blackwell - same software stack, same power envelope, more memory

Overview

The NVIDIA H200 is what happens when the bottleneck is not compute but memory. Released in late 2024, the H200 uses the exact same Hopper GH100 die as the H100 - same 16,896 CUDA cores, same 528 Tensor Cores, same Transformer Engine, same 700W TDP. The only difference is the memory subsystem: the H200 swaps the H100's 80GB of HBM3 for 141GB of HBM3e, increasing capacity by 76% and bandwidth by 43% (from 3,350 GB/s to 4,800 GB/s).

That sounds like a minor spec bump, but for large language model inference it is transformative. LLM inference is fundamentally memory-bandwidth-bound - the speed at which you can generate tokens is limited by how fast you can read model weights from memory, not by how fast you can multiply matrices. The H200's jump from 3,350 to 4,800 GB/s directly translates to faster token generation. NVIDIA's own benchmarks show the H200 delivering up to 1.9x the inference throughput of the H100 on Llama 2 70B with no change in compute, purely from the faster and larger memory.

The 141GB capacity is equally important. An unquantized 70B-parameter model in FP16 requires approximately 140GB of memory just for the weights. The H100's 80GB cannot hold this without quantization or multi-GPU sharding. The H200's 141GB can, although only barely: FP16 weights leave about 1GB for KV-cache and activations, so practical single-GPU serving usually means FP8 weights (~70GB) with ample headroom. Either way, workloads that required two H100s can now run on a single H200, cutting infrastructure costs, reducing inter-GPU communication overhead, and simplifying deployment. For organizations running large inference fleets, the math is compelling: fewer GPUs, higher throughput, same power budget.

Key Specifications

| Specification | Details |
|---|---|
| Manufacturer | NVIDIA |
| Architecture | Hopper (GH100) |
| Process Node | TSMC 4N |
| Transistors | 80 billion |
| Die Size | 814 mm² |
| CUDA Cores | 16,896 |
| Tensor Cores | 528 (4th generation) |
| Streaming Multiprocessors | 132 |
| GPU Memory | 141 GB HBM3e |
| Memory Bandwidth | 4,800 GB/s (4.8 TB/s) |
| Memory Bus Width | 6,144-bit (six 1,024-bit stacks) |
| L2 Cache | 50 MB |
| FP64 Performance | 33.5 TFLOPS (67 TFLOPS on FP64 Tensor Cores) |
| FP32 Performance | 67 TFLOPS |
| TF32 Performance | 495 TFLOPS (989 TFLOPS with sparsity) |
| FP16 / BF16 Performance | 989 TFLOPS (1,979 TFLOPS with sparsity) |
| FP8 Performance | 1,979 TFLOPS (3,958 TFLOPS with sparsity) |
| INT8 Performance | 1,979 TOPS (3,958 TOPS with sparsity) |
| Transformer Engine | 1st generation (FP8/FP16 dynamic) |
| NVLink | 4th generation, 900 GB/s |
| NVLink Switch System | Up to 256 GPUs |
| PCIe | Gen 5.0 x16 |
| Multi-Instance GPU | Up to 7 instances |
| TDP | 700W |
| Form Factor | SXM5 |
| Cooling | Passive |
| Release Date | Q4 2024 |

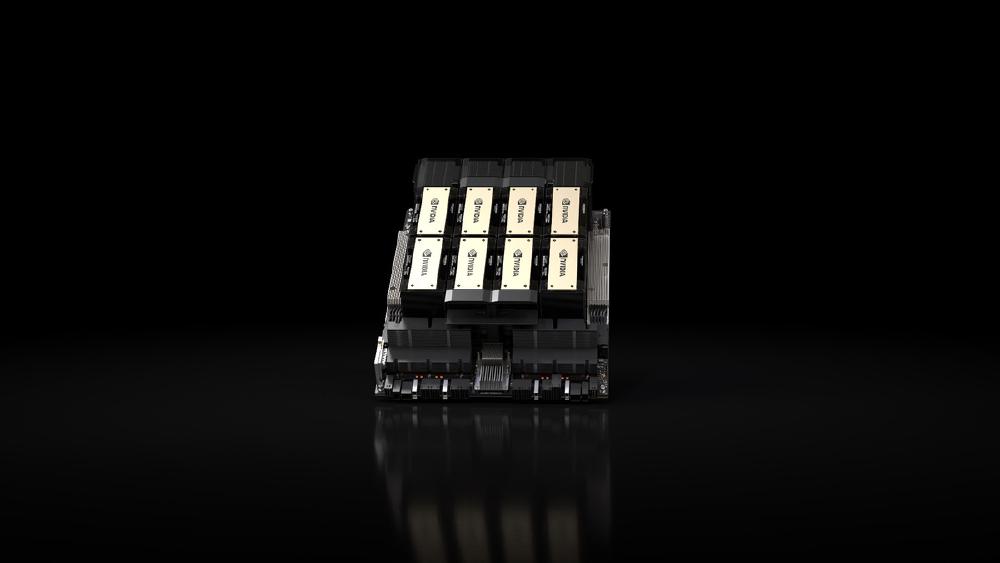

The H200 uses six HBM3e stacks compared to the H100's five HBM3 stacks. HBM3e (the "e" stands for "extended") is the latest generation of high-bandwidth memory from SK Hynix, Samsung, and Micron, offering higher density per stack (achieved through more layers and improved TSV - Through-Silicon Via - technology) and higher per-pin signaling rates. The result is 141GB of capacity at 4,800 GB/s bandwidth in a package that fits the same SXM5 socket as the H100.

The memory controller on the GH100 die was designed from the start to support HBM3e. This is not a retrofit - NVIDIA planned the H200 as part of the Hopper product family from the architecture phase. The memory controller's ability to drive HBM3e's higher signaling rates without a die respin is what allowed NVIDIA to bring the H200 to market quickly and at the same power envelope as the H100.

Performance Benchmarks

| Metric | A100 80GB | H100 SXM | H200 | B200 |
|---|---|---|---|---|
| FP8 Tensor TFLOPS | N/A | 3,958 (sparse) | 3,958 (sparse) | 9,000 (sparse) |
| FP16 Tensor TFLOPS | 624 (sparse) | 1,979 (sparse) | 1,979 (sparse) | 4,500 (sparse) |
| Memory Capacity | 80 GB HBM2e | 80 GB HBM3 | 141 GB HBM3e | 192 GB HBM3e |
| Memory Bandwidth | 2,039 GB/s | 3,350 GB/s | 4,800 GB/s | 8,000 GB/s |
| NVLink Bandwidth | 600 GB/s | 900 GB/s | 900 GB/s | 1,800 GB/s |
| TDP | 400W | 700W | 700W | 1,000W |
| LLM Inference (Llama 2 70B) | 1x (baseline) | ~1.5x | ~1.9x | ~3.5x |
| Transistors | 54.2B | 80B | 80B | 208B |

The benchmark story for the H200 is entirely about memory. The compute numbers are identical to the H100 because the silicon is the same. What changes is throughput on memory-bandwidth-bound workloads - which describes most LLM inference at scale.

On Llama 2 70B inference, NVIDIA reports the H200 delivering approximately 1.9x the throughput of the H100. This is not a 1.9x improvement in raw compute - it comes from 43% more memory bandwidth keeping the Tensor Cores fed, combined with the larger memory pool, which allows bigger batches and avoids multi-GPU sharding. The H100's Tensor Cores are frequently starved for data during inference because the 3,350 GB/s memory bandwidth cannot keep up with the 3,958 TFLOPS of FP8 compute. The H200 narrows this gap significantly with 4,800 GB/s.

Training Performance Analysis

For training, the H200's advantage is more modest. Training workloads are generally more compute-bound than inference, so the identical FLOPS rating means training throughput gains are smaller - typically in the 10-20% range depending on the model size and batch configuration. The extra memory helps with larger batch sizes and reduced activation checkpointing, but the raw speed improvement is a fraction of what inference workloads see.

Where the H200's extra memory does meaningfully impact training is in the activation checkpointing tradeoff. During training, intermediate activations must be stored for the backward pass. On the H100 with 80GB, large models require aggressive activation checkpointing - recomputing activations during the backward pass instead of storing them, which saves memory at the cost of ~30% additional compute. The H200's 141GB allows less aggressive checkpointing (or no checkpointing for smaller models), recovering that 30% compute overhead. For a 13B-parameter model that requires checkpointing on H100 but fits without it on H200, the effective training speedup can be 20-30% purely from the memory capacity difference.

Inference Performance Analysis

The H200's inference advantage is best understood through the roofline model. For autoregressive LLM inference, the performance bottleneck is determined by the ratio of compute to memory bandwidth. At batch-1 inference with a large model, the operational intensity (FLOPS per byte of memory accessed) is very low - you need to read the entire weight matrix for each generated token, but only perform a single matrix-vector multiplication. This puts the workload deep in the memory-bandwidth-bound regime.

The roofline model predicts that batch-1 inference throughput scales linearly with memory bandwidth, regardless of compute capacity. This is exactly what the H200 delivers: 43% more bandwidth translates to approximately 43% more tokens per second at batch-1. The 1.9x improvement that NVIDIA reports on Llama 2 70B comes from the combination of bandwidth improvement plus the elimination of multi-GPU sharding overhead (the model fits on one H200 but requires two H100s).
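As a rough illustration of that linear scaling, the sketch below computes a bandwidth-bound ceiling on batch-1 decode speed. It assumes each generated token requires one full read of the weights and ignores KV-cache traffic and kernel overheads; the function name and the 70GB weight size (70B parameters at FP8) are illustrative assumptions, not NVIDIA figures.

```python
def batch1_token_ceiling(bandwidth_gb_s: float, weight_gb: float) -> float:
    """Upper bound on tokens/s when every token needs one full pass over the weights."""
    return bandwidth_gb_s / weight_gb


WEIGHT_GB = 70.0  # assumption: 70B parameters stored at FP8 (1 byte each)

h100_ceiling = batch1_token_ceiling(3350, WEIGHT_GB)
h200_ceiling = batch1_token_ceiling(4800, WEIGHT_GB)

print(f"H100 ceiling: {h100_ceiling:.1f} tok/s")
print(f"H200 ceiling: {h200_ceiling:.1f} tok/s")
print(f"H200 / H100:  {h200_ceiling / h100_ceiling:.2f}x")
```

Real throughput sits below these ceilings, but the ratio between the two GPUs depends only on bandwidth, which is the point the roofline argument makes.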

At higher batch sizes, the workload shifts toward the compute-bound regime, and the H200's advantage narrows because its compute is identical to the H100. The crossover point depends on the model size, but for a 70B model, batch sizes above 32 start to become compute-limited on both the H100 and H200. For inference providers serving high-traffic endpoints with large batches, the H200's advantage over the H100 shrinks from 1.9x to roughly 1.2-1.4x.

Compared to the B200, the H200 is outclassed on both axes: the B200 delivers 2.3x the compute and 1.67x the bandwidth in a larger memory pool. But the B200 also costs more, draws 1,000W, and is on a different architecture that requires Blackwell-specific optimization. For organizations already running Hopper infrastructure, the H200 is a drop-in upgrade that delivers substantial inference gains without any software migration.

Key Capabilities

HBM3e Memory Subsystem. The H200's defining feature is its 141GB of HBM3e memory running at 4,800 GB/s. HBM3e is the latest generation of high-bandwidth memory, offering higher density (allowing 141GB in the same physical footprint) and higher speed per pin compared to the H100's HBM3. The six HBM3e stacks on the H200 deliver nearly 1.5 TB/s more bandwidth than the H100, which directly translates to higher token throughput on autoregressive LLM inference. For KV-cache-heavy workloads with long context windows, the additional memory capacity also means more concurrent requests can be batched before running out of memory.

The KV-cache memory advantage is particularly significant for applications with long context windows. Each concurrent request's KV-cache grows linearly with sequence length. For a 70B model with 128K context at FP16, a single request's KV-cache can consume over 40GB of memory. On an H100 with 80GB total, only ~10GB remains after loading model weights at FP8, which is not enough for even one full-length 128K request. The H200's 141GB provides roughly 70GB of headroom for KV-cache after loading the same weights - enough for one full 128K-token request or several shorter ones. For inference providers billing by token, this translates directly to higher revenue per GPU per hour.
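The 40GB figure can be sanity-checked with a short calculation. This is a minimal sketch that assumes a Llama 2 70B-style layout with grouped-query attention (80 layers, 8 KV heads, head dimension 128) and FP16 cache entries; those defaults and the function name are assumptions for illustration, and other 70B models will differ.

```python
def kv_cache_bytes(tokens: int, layers: int = 80, kv_heads: int = 8,
                   head_dim: int = 128, bytes_per_value: int = 2) -> int:
    """KV-cache size for one request: a key and a value vector per layer per token."""
    return 2 * layers * kv_heads * head_dim * bytes_per_value * tokens


per_token = kv_cache_bytes(1)
full_context = kv_cache_bytes(128 * 1024)

print(f"Per token:   {per_token / 1024:.0f} KiB")
print(f"128K tokens: {full_context / 1e9:.1f} GB")
```

Under these assumptions one 128K-token request needs about 43GB, which is why the headroom left after loading weights determines how many long-context requests a GPU can hold.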

Drop-In Hopper Compatibility. Because the H200 uses the same GH100 die and the same Hopper architecture as the H100, it is a true drop-in replacement in terms of software. Any model optimized for H100 - using CUDA, cuDNN, TensorRT, or TensorRT-LLM - runs on the H200 without modification. The same MIG configurations, the same NVLink topologies, the same system software stack. This dramatically lowers the migration barrier for organizations with existing Hopper deployments. There is no new architecture to learn, no new compiler to qualify, no new inference engine to validate. You swap the GPU, and inference throughput goes up.

This compatibility extends to the full NVIDIA AI Enterprise software stack. Triton Inference Server, TensorRT-LLM, NeMo, and all other NVIDIA-maintained inference and training tools work on H200 without modification. Even custom CUDA kernels compiled for Hopper run without recompilation. For organizations with significant investment in Hopper-optimized software, this eliminates the migration risk entirely.

Inference Economics at Scale. The H200 reshapes inference fleet economics in a way that goes beyond the raw performance numbers. Consider a workload that requires sharding a 70B model across two H100s due to the 80GB memory limit. On the H200, the same model fits on a single GPU. This halves the GPU count, eliminates inter-GPU communication overhead, halves the server slots, and delivers higher per-GPU throughput on top of those savings. For large-scale inference providers running thousands of GPUs, this kind of consolidation can save millions of dollars in infrastructure costs annually while simultaneously reducing latency.

The latency improvement from single-GPU inference deserves emphasis. When a model is sharded across two GPUs, every layer that spans the GPU boundary incurs NVLink communication latency. For a 70B model with 80 layers split across 2 GPUs (40 layers each), each forward pass requires at least one NVLink synchronization point per boundary crossing. At the speeds relevant to interactive AI (target: <100ms first-token latency), these synchronization overheads can add 5-15ms per inference call. On a single H200, there are no GPU boundaries to cross, and first-token latency drops accordingly.

Architecture Deep Dive

HBM3e vs HBM3

The transition from HBM3 to HBM3e involves several technological improvements at the DRAM and packaging level. HBM3e raises the maximum per-pin data rate from HBM3's ~6.4 Gbps to ~9.2 Gbps, enabling higher aggregate bandwidth without widening the 1,024-bit interface of each stack. It also uses denser DRAM dies, so an 8-Hi stack (8 DRAM dies) holds 24GB and a 12-Hi stack holds 36GB. With six 24GB stacks, the H200 carries 144GB of physical memory, of which 141GB is usable (approximately 23.5GB per stack).
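The link between pin rate, bus width, and aggregate bandwidth can be checked with a little arithmetic. The sketch below assumes the standard 1,024-bit interface per HBM stack and the stack counts given earlier (five on the H100, six on the H200); the function name is illustrative. The implied rates come out below the generational maxima quoted above, which suggests both products run their memory under the ceiling of the respective standard.

```python
BITS_PER_STACK = 1024  # interface width of a single HBM stack


def implied_pin_rate_gbps(bandwidth_gb_s: float, stacks: int) -> float:
    """Per-pin data rate (Gbps) needed to reach a given aggregate bandwidth (GB/s)."""
    bus_bits = stacks * BITS_PER_STACK
    return bandwidth_gb_s * 8 / bus_bits


h100_rate = implied_pin_rate_gbps(3350, stacks=5)
h200_rate = implied_pin_rate_gbps(4800, stacks=6)

print(f"H100: {h100_rate:.2f} Gbps per pin")
print(f"H200: {h200_rate:.2f} Gbps per pin")
```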

The power efficiency of HBM3e is also improved. Despite the higher data rates, HBM3e operates at similar or lower voltage compared to HBM3, keeping the memory subsystem's power consumption within the H100's existing 700W thermal envelope. This is why the H200 can deliver 43% more bandwidth without increasing its TDP - the memory subsystem's power budget was redesigned within the same overall power limit.

Memory Capacity Implications for Model Deployment

The jump from 80GB to 141GB crosses several important model-size thresholds:

| Model Size | FP16 Weight Size | FP8 Weight Size | Fits on H100? | Fits on H200? |
|---|---|---|---|---|
| 7B | ~14 GB | ~7 GB | Yes (1 GPU) | Yes (1 GPU) |
| 13B | ~26 GB | ~13 GB | Yes (1 GPU) | Yes (1 GPU) |
| 30B | ~60 GB | ~30 GB | Yes (tight) | Yes (comfortable) |
| 70B | ~140 GB | ~70 GB | FP8 only (tight) | Yes (FP16 or FP8) |
| 120B | ~240 GB | ~120 GB | No (2+ GPUs) | FP8 only (tight) |

The 70B row is the most commercially significant. Llama 2 70B, Llama 3 70B, Qwen 2.5 72B, and similar models are the workhorse sizes for production AI applications. Being able to serve these models on a single GPU rather than two has an outsized impact on fleet economics.
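The table follows from a simple rule of thumb: weight size in GB is roughly the parameter count in billions times the bytes per parameter. A minimal sketch of that check is below; it counts weights only and ignores KV-cache, activations, and runtime overhead, so a "fit" here can still be too tight to serve in practice. The helper names are illustrative.

```python
GPU_MEMORY_GB = {"H100": 80, "H200": 141}
BYTES_PER_PARAM = {"FP16": 2, "FP8": 1}


def weight_gb(params_billion: float, precision: str) -> float:
    """Approximate weight footprint in GB (weights only)."""
    return params_billion * BYTES_PER_PARAM[precision]


def fits(params_billion: float, precision: str, gpu: str) -> bool:
    """True if the weights alone fit in a single GPU's memory."""
    return weight_gb(params_billion, precision) <= GPU_MEMORY_GB[gpu]


for size in (7, 13, 30, 70, 120):
    cells = [f"{size}B"]
    for gpu in GPU_MEMORY_GB:
        ok = [p for p in BYTES_PER_PARAM if fits(size, p, gpu)]
        cells.append(f"{gpu}: {'/'.join(ok) or 'needs 2+ GPUs'}")
    print("  ".join(cells))
```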

MIG on H200

The H200 supports the same MIG layout as the H100 (up to seven instances), but with proportionally more memory per instance. The smallest 1g profile provides approximately 18GB of memory per instance (versus ~10GB on H100 80GB), which is sufficient to run quantized 7B models. A full 7g profile exposes the entire 141GB - enough to hold the weights of an unquantized 70B model, which the H100's 80GB 7g profile cannot do.

For multi-tenant inference providers, this opens new deployment options. A single H200 could simultaneously run a quantized 70B model in one 4g partition and multiple smaller models in the remaining partitions. The 4g partition offers about 71GB, which holds FP8 weights with almost no KV-cache headroom, so 4-bit weights are the more realistic fit. On the H100, the 70B model would consume the entire GPU, leaving no room for other workloads.

Pricing and Availability

The H200 is available from major OEMs and cloud providers, priced at a modest premium over the H100. Individual GPU pricing ranges from $25,000 to $35,000 depending on volume and supplier. The HGX H200 (8-GPU board) is available from partners including Dell, HPE, Lenovo, and Supermicro.

| Configuration | Estimated Price (2026) |
|---|---|
| H200 SXM (new) | $25,000 - $35,000 |
| HGX H200 (8x H200 SXM) | $250,000 - $350,000 |
| DGX H200 (8x H200 SXM) | $400,000 - $500,000 |
| Cloud rental (per GPU hour) | $3.00 - $5.50 |

Cloud availability is growing. AWS offers H200 instances via p5e, Google Cloud offers them as A3 Ultra (a3-ultragpu) instances, and several tier-2 providers including CoreWeave and Lambda have added H200 capacity. Availability is not yet at H100 levels - the H200 arrived later and competes for allocation with Blackwell systems - but supply has improved steadily through early 2026.

The strategic question for buyers is whether to invest in H200s or skip directly to Blackwell. For organizations that already have Hopper infrastructure and want an incremental inference upgrade without a full architecture migration, the H200 is compelling. For greenfield deployments or organizations willing to invest in new infrastructure, the B200 or GB200 NVL72 offer significantly more performance per GPU at the cost of higher power and cooling requirements.

Cost-Efficiency Analysis

The H200's value proposition depends entirely on the workload. For inference on 70B-class models, the comparison against two H100s is straightforward:

| Metric | 2x H100 SXM | 1x H200 SXM |
|---|---|---|
| GPU cost | $50,000 - $60,000 | $25,000 - $35,000 |
| Power draw | 1,400W | 700W |
| Annual power cost (at $0.10/kWh, 1.3 PUE) | ~$1,596 | ~$798 |
| Server slots | 2 (or 1 dual-GPU) | 1 |
| NVLink overhead | Yes | None |
| Inference latency | Higher (sharding) | Lower (single GPU) |

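The H200 saves 40-50% on GPU acquisition, 50% on power, and delivers lower latency. For 70B model inference that is limited by memory capacity and bandwidth, one H200 is the better choice. Two H100s retain an edge only where their combined compute, which is twice that of a single H200, is the bottleneck, as in very large-batch serving.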
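The power figures in the table can be reproduced with a few lines of arithmetic. This sketch assumes the GPUs draw their full TDP around the clock and uses 8,766 hours per year (365.25 days); both are simplifying assumptions, and the function name is illustrative.

```python
HOURS_PER_YEAR = 8766  # assumption: 365.25 days of continuous operation


def annual_power_cost(watts: float, usd_per_kwh: float = 0.10, pue: float = 1.3) -> float:
    """Yearly electricity cost in USD for a constant load, including facility overhead (PUE)."""
    kwh = watts / 1000 * HOURS_PER_YEAR * pue
    return kwh * usd_per_kwh


two_h100 = annual_power_cost(2 * 700)
one_h200 = annual_power_cost(700)

print(f"2x H100: ${two_h100:,.0f} per year")
print(f"1x H200: ${one_h200:,.0f} per year")
```

At these rates electricity is a small fraction of the GPU purchase price, so the acquisition saving dominates the comparison.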

Strengths

- 141GB HBM3e fits models that require two H100s onto a single GPU - halving infrastructure for 70B-class inference

- 4,800 GB/s memory bandwidth delivers up to 1.9x inference throughput over the H100 on large language models

- Drop-in replacement for H100 - identical Hopper architecture means zero software migration effort

- Same 700W TDP as the H100 - no datacenter power or cooling upgrades required for the swap

- HBM3e's higher density and bandwidth come with improved power efficiency per bit compared to HBM3

- Full Hopper feature set including Transformer Engine, MIG, NVLink Switch System, and confidential computing

- Excellent price-to-inference-performance ratio when compared against deploying two H100s for the same workload

- MIG profiles with ~2x more memory per instance enable serving larger models in multi-tenant configurations

- KV-cache headroom supports more concurrent long-context requests per GPU

Weaknesses

- Identical compute to the H100 - no improvement for compute-bound training workloads

- At $25,000-$35,000, the premium over H100 may not be justified for workloads that are not memory-bandwidth-bound

- Already outperformed by the B200 on both compute and bandwidth - short lifecycle as the top Hopper option

- 141GB is generous but still falls short of the B200's 192GB, limiting headroom for the largest models and longest context windows

- NVLink bandwidth remains at 900 GB/s (4th gen) - no improvement over H100, while the B200 doubles it to 1,800 GB/s

- The SXM module requires an HGX-class baseboard - the only PCIe option is the separate H200 NVL card, which restricts deployment options for smaller-scale infrastructure

- Supply competes with Blackwell allocations - NVIDIA's manufacturing priority has shifted to Blackwell silicon

- No FP4 support - the precision format that gives Blackwell its largest inference throughput advantage

Who Should Buy the H200

The H200 occupies a narrow but important niche: it is the best GPU for organizations that need more memory than the H100 provides but cannot or do not want to migrate to Blackwell.

Good Fit

Inference serving for 70B-class models. This is the H200's sweet spot. If your production workload centers on serving Llama 3 70B, Qwen 2.5 72B, or similar 70B-class models, the H200's 141GB of HBM3e eliminates the need for multi-GPU sharding at FP16 or provides generous KV-cache headroom at FP8. This single-GPU advantage reduces infrastructure costs by up to 50% compared to a two-H100 deployment for the same model.

Organizations with existing Hopper infrastructure. If you have already deployed H100 systems and want to expand capacity with better inference performance, the H200 drops directly into your existing software stack and operational procedures. No architecture migration, no new drivers, no new inference engine versions. Swap the GPU, keep everything else.

Long-context inference applications. Applications that require long context windows (128K+ tokens) benefit disproportionately from the H200's extra memory. The KV-cache for long-context requests grows rapidly, and the H200's headroom allows more concurrent long-context requests per GPU. For retrieval-augmented generation (RAG) systems, document analysis, and code understanding tasks with long inputs, the H200's memory advantage translates directly to higher concurrent throughput.

Bridge deployments while waiting for Blackwell. Organizations that need inference capacity now but have Blackwell orders in the pipeline can deploy H200s as an interim solution. When the Blackwell systems arrive, the H200s can be repurposed for workloads where their performance is sufficient (smaller model serving, fine-tuning, development), and the Blackwell systems can take over the most demanding workloads.

Poor Fit

Compute-bound training workloads. The H200 has identical compute to the H100. If your bottleneck is training throughput rather than memory capacity, the H200 provides no improvement over the H100. The premium is wasted on workloads that are not memory-bound.

Budget-constrained deployments serving small models. If you are serving 7B-13B models that fit comfortably on an H100 or even an A100, the H200's extra memory provides no practical benefit. The lower-cost alternatives deliver the same effective throughput for these workloads.

New large-scale deployments. If you are building infrastructure from scratch, the B200 offers 2.3x the compute and 1.67x the bandwidth for a modest price premium. The B200's larger memory (192GB vs 141GB), FP4 support, and higher bandwidth make it the better long-term investment for greenfield deployments.

Deployment Considerations

Migration from H100

The H200 migration from H100 is the simplest GPU upgrade in the datacenter GPU market. The key compatibility points:

- Software stack: Identical. Same CUDA compute capability (9.0), same driver versions, same library builds.

- Power delivery: Same 700W TDP. No power supply or PDU upgrades required.

- Cooling: Same thermal envelope. No cooling infrastructure changes needed.

- Form factor: Same SXM5 socket. Compatible with existing HGX H100 baseboards (with baseboard firmware update from OEM).

- NVLink topology: Same fourth-generation NVLink at 900 GB/s. Same NVSwitch compatibility.

The only required change is the physical GPU swap and a firmware update on the baseboard. SXM modules are not hot-swappable, so this is a planned maintenance operation that requires powering the server down and is best scheduled within a standard maintenance window.

Network and Storage Considerations

The H200's higher inference throughput means it generates and consumes data faster than the H100. Organizations upgrading should verify that their network and storage infrastructure can keep up:

- Network egress: 1.9x higher inference throughput means 1.9x more response tokens per second. If your network was near capacity with H100 inference, the H200 may saturate it.

- Model loading: The 141GB of HBM3e takes longer to fill from storage on cold start. High-speed NVMe storage (at least 10 GB/s read throughput) is recommended to minimize model loading times.

- Monitoring: GPU memory utilization monitoring thresholds may need adjustment. What was "80% utilization" on an H100 (64GB) represents a different operating point on an H200 (113GB at 80%).
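The model-loading and monitoring points above lend themselves to a quick calculation. The sketch below estimates best-case cold-start time from storage throughput and converts an absolute free-memory reserve into a utilization threshold; the 16GB reserve and the function names are hypothetical values chosen for illustration.

```python
def cold_start_seconds(model_gb: float, read_gb_per_s: float) -> float:
    """Best-case time to stream model data from storage into GPU memory."""
    return model_gb / read_gb_per_s


def alert_threshold(total_gb: float, reserve_gb: float) -> float:
    """Utilization fraction at which only reserve_gb of GPU memory remains free."""
    return (total_gb - reserve_gb) / total_gb


RESERVE_GB = 16  # hypothetical safety margin to keep free on each GPU

for name, total_gb in (("H100", 80), ("H200", 141)):
    load_s = cold_start_seconds(total_gb, read_gb_per_s=10)
    threshold = alert_threshold(total_gb, RESERVE_GB)
    print(f"{name}: fill in ~{load_s:.1f}s at 10 GB/s, alert at {threshold:.0%} utilization")
```

Keeping the same absolute reserve moves the alert from 80% on the H100 to roughly 89% on the H200, which is why percentage-based thresholds should be revisited after the swap.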

Generational Context

Where the H200 Fits in NVIDIA's Lineup

The H200 is an unusual product in NVIDIA's datacenter GPU history. It is a "refresh" rather than a new architecture - the same GH100 silicon with upgraded memory packaging. This is similar to how NVIDIA has historically released "Ti" or "Super" variants of consumer GPUs, but it is less common in the datacenter segment where new architectures typically dominate the product cycle.

The H200's position is strategically important for NVIDIA. It extends the Hopper product line's commercial relevance while Blackwell ramps production, gives customers a meaningful upgrade path without architecture migration risk, and demonstrates the performance headroom available from memory technology improvements alone. The fact that 43% more bandwidth translates to up to 1.9x inference throughput illustrates how memory-bandwidth-starved the H100 was for inference workloads.

H200 vs A100: A Full Generation Plus Memory

Compared to the A100, the H200 provides: FP8 support (the A100 has none), 1.76x memory capacity (141GB vs 80GB), 2.35x bandwidth (4,800 vs 2,039 GB/s), and the Transformer Engine for automatic precision management. The real-world inference improvement over the A100 is approximately 3-5x for transformer workloads, driven by the combination of FP8 compute and higher bandwidth.

At $25K-$35K versus $10K-$15K (used), the H200 costs approximately 2-3x as much as an A100. But it delivers 3-5x the inference throughput, making it more cost-efficient per token for workloads that can use FP8 precision. For training, the improvement is 2.5-3.5x at FP8 versus the A100 at BF16 - also cost-efficient given the price difference.

The H200 vs Blackwell Decision

The most common question about the H200 is whether to buy it or wait for Blackwell. The answer depends on timeline and workload.

| Factor | H200 | B200 |
|---|---|---|
| Availability | Shipping now | Limited supply, 3-6 month lead time |
| Software maturity | Hopper stack is battle-tested | Blackwell optimizations still maturing |
| Power requirements | 700W (air-coolable) | 1,000W (liquid cooling recommended) |
| Datacenter compatibility | Drops into existing Hopper infrastructure | May require power and cooling upgrades |
| Compute throughput | 3,958 TFLOPS FP8 | 9,000 TFLOPS FP8 (2.3x) |
| Memory bandwidth | 4,800 GB/s | 8,000 GB/s (1.67x) |
| Memory capacity | 141 GB | 192 GB (1.36x) |
| FP4 support | No | Yes (18,000 TFLOPS sparse) |
| Per-GPU price | $25,000 - $35,000 | $30,000 - $40,000 |

For organizations that need capacity within 60 days, the H200 wins. For organizations planning deployments 6+ months out, the B200 is the better investment. For organizations in between, the decision depends on how much the workload benefits from FP4 precision and whether the datacenter infrastructure supports Blackwell's power and cooling requirements.

Use Case Deep Dives

Serving 70B Models at Scale

The H200's signature use case is serving 70B-class models on a single GPU. Here is how the deployment economics compare across GPU options for a fleet serving Llama 3 70B:

| Configuration | GPUs per Model | Fleet for 10K tok/s | Total GPU Cost | Annual Power |
|---|---|---|---|---|
| A100 80GB (INT8, 2-GPU shard) | 2 | 26 GPUs | $325K | $11,856 |
| H100 SXM (FP8, 1-GPU tight) | 1 | 16 GPUs | $440K | $12,768 |
| H200 SXM (FP8, 1-GPU) | 1 | 10 GPUs | $300K | $7,980 |
| B200 (FP4, 1-GPU) | 1 | 4 GPUs | $140K | $4,560 |

The H200 delivers the best TCO among Hopper options for this workload. It requires 38% fewer GPUs than the H100 (10 vs 16) and 62% fewer than the A100 (10 vs 26), with proportionally lower power costs. Only the B200 at FP4 beats it on absolute economics, but with the caveat of supply constraints and infrastructure requirements.
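The fleet totals can be approximated from the GPU counts in the table. In this sketch the per-GPU prices are assumptions back-derived from the table's totals (they sit inside the price ranges quoted in this article), and power is modeled as full TDP all year at $0.10/kWh with a 1.3 PUE, so results land within a few dollars of the table rather than matching it exactly.

```python
HOURS_PER_YEAR = 8766  # assumption: 365.25 days at full TDP

# name: (GPU count from the table, assumed unit price in USD, TDP in watts)
FLEET = {
    "A100 80GB": (26, 12_500, 400),
    "H100 SXM": (16, 27_500, 700),
    "H200 SXM": (10, 30_000, 700),
    "B200": (4, 35_000, 1000),
}


def fleet_costs(gpus, unit_price, tdp_watts, usd_per_kwh=0.10, pue=1.3):
    """Return (total GPU cost, annual power cost) in USD for a fleet."""
    capex = gpus * unit_price
    kwh = gpus * tdp_watts / 1000 * HOURS_PER_YEAR * pue
    return capex, kwh * usd_per_kwh


for name, (gpus, price, tdp) in FLEET.items():
    capex, power = fleet_costs(gpus, price, tdp)
    print(f"{name:10s} {gpus:3d} GPUs  ${capex:>9,.0f}  ${power:>8,.0f}/yr")

h100_gpus, h200_gpus = FLEET["H100 SXM"][0], FLEET["H200 SXM"][0]
print(f"H200 fleet uses {1 - h200_gpus / h100_gpus:.1%} fewer GPUs than the H100 fleet")
```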

Long-Context Applications

The H200 excels at long-context inference workloads where KV-cache memory is the primary bottleneck. For a 70B model at FP8 (occupying ~70GB), the available KV-cache memory on each GPU is:

| GPU | Total Memory | Model Weights (FP8) | Available for KV-Cache |
|---|---|---|---|
| H100 SXM | 80 GB | ~70 GB | ~10 GB |
| H200 SXM | 141 GB | ~70 GB | ~71 GB |
| B200 | 192 GB | ~70 GB | ~122 GB |

The H200's 71GB of KV-cache headroom versus the H100's 10GB is a 7x improvement. In practical terms, this means the H200 can serve approximately 7x more concurrent long-context requests for the same model. For applications like document analysis, codebase understanding, and multi-document RAG where input contexts routinely exceed 32K tokens, this difference determines whether the system can serve users at acceptable latency or queues requests.
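To make the headroom comparison concrete, the sketch below turns the table into a count of concurrent requests. The 5GB per-request KV-cache budget is a hypothetical figure chosen for illustration, and the calculation ignores activations and runtime overhead, so real limits will be somewhat lower.

```python
WEIGHTS_GB = 70  # 70B model at FP8, as in the table above
GPU_MEMORY_GB = {"H100 SXM": 80, "H200 SXM": 141, "B200": 192}


def kv_headroom_gb(total_gb: float, weights_gb: float = WEIGHTS_GB) -> float:
    """Memory left for KV-cache once the weights are loaded."""
    return total_gb - weights_gb


def max_concurrent(total_gb: float, per_request_gb: float) -> int:
    """Whole requests that fit in the KV-cache headroom."""
    return int(kv_headroom_gb(total_gb) // per_request_gb)


PER_REQUEST_GB = 5.0  # hypothetical KV-cache budget per request

for gpu, total_gb in GPU_MEMORY_GB.items():
    n = max_concurrent(total_gb, PER_REQUEST_GB)
    print(f"{gpu}: {kv_headroom_gb(total_gb)} GB headroom, {n} concurrent requests")
```

With that budget the H100 holds 2 requests and the H200 holds 14, the same 7x ratio as the raw headroom figures.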

Mixture-of-Experts Model Serving

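MoE models benefit significantly from the H200's extra memory, because every expert must be resident in memory even though only a few are active per token. Mixtral 8x22B (about 141B total parameters, roughly 39B active per token) requires approximately 282GB at FP16, 141GB at FP8, or around 70GB with 4-bit weights. A single H100 can hold only the 4-bit version, with roughly 10GB left for KV-cache. A single H200 holds the same 4-bit weights with about 70GB of KV-cache headroom, and two H200s (282GB) hold the FP8 weights with 141GB to spare. Fewer GPUs per replica reduces deployment complexity and inter-GPU communication overhead.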

For larger MoE models like DeepSeek V3.2 (671B total, 37B active), even the H200 requires multi-GPU sharding. At FP8, the model requires approximately 671GB of memory for weights alone. An 8-GPU H200 node provides 1,128GB of total memory (141GB x 8), which accommodates the model with generous KV-cache headroom. An 8-GPU H100 node provides only 640GB, so the same deployment on H100s has to span at least two nodes.

Cloud Provider Availability

Cloud availability for the H200 is growing but remains less extensive than the H100's coverage. Several providers have added H200 instances:

| Cloud Provider | Instance Type | GPUs per Instance | On-Demand Price (approx.) |
|---|---|---|---|
| AWS | p5e.48xlarge | 8x H200 141GB | ~$120/hr |
| Google Cloud | a3-ultragpu-8g | 8x H200 141GB | ~$110/hr |
| Lambda Cloud | gpu_8x_h200_sxm | 8x H200 141GB | ~$33/hr |
| CoreWeave | H200 141GB | 1-8x H200 141GB | ~$4.50/hr/GPU |

The H200's cloud pricing commands a 15-30% premium over H100 instances from the same provider. Whether this premium is justified depends entirely on the workload: for 70B model inference where the H200's extra memory eliminates multi-GPU sharding, the H200's per-token cost is actually lower despite the higher per-hour price.

For cost-sensitive workloads that fit comfortably within 80GB, H100 cloud instances remain the better value. The H200 premium is only worthwhile when the additional memory translates to fewer GPUs per model or higher KV-cache capacity that enables more concurrent requests.

Export Control and Regional Availability

The H200 is subject to the same US export control framework as the H100. NVIDIA has not announced an export-compliant variant of the H200 (analogous to the H800 for the H100), which means the H200 may not be available in restricted markets. Organizations operating in or serving customers in affected regions should verify H200 availability before committing to deployment plans.

The H200's Strategic Position

The H200 occupies a unique position in NVIDIA's datacenter GPU history as a "memory refresh" rather than a new architecture. This approach - shipping the same compute die with improved memory - is common in the consumer GPU market (the RTX 4080 Super, for example) but rare in the datacenter segment where new architectures typically drive each product cycle.

NVIDIA's decision to release the H200 reflects a specific market insight: that the transition from the H100 to Blackwell would take time, and that customers needed a memory upgrade during that transition period. The H200 gives NVIDIA a product to sell to inference-focused customers who need more than the H100 provides but cannot wait for (or afford) Blackwell's infrastructure requirements.

For organizations making purchasing decisions in early 2026, the H200 represents the last Hopper product and the safest incremental upgrade available. It carries zero architecture migration risk, zero software compatibility risk, and zero datacenter infrastructure risk relative to the H100. The only risk is opportunity cost - deploying H200s means not deploying Blackwell, which offers significantly higher performance.

The optimal strategy depends on time horizon. For deployments with a 1-2 year compute horizon, the H200 is a rational choice that delivers immediate value without infrastructure risk. For deployments with a 3-5 year horizon, investing in Blackwell infrastructure (liquid cooling, higher power density) positions the organization for higher performance throughout the deployment period.

Related Coverage

- NVIDIA H100 SXM - The AI Training Benchmark - The base Hopper GPU that the H200 upgrades

- NVIDIA A100 - The GPU That Built Modern AI - The Ampere predecessor still deployed at massive scale

- NVIDIA B200 - Blackwell Flagship GPU - The next-generation flagship with 2x+ the compute and bandwidth

- NVIDIA GB200 NVL72 - Rack-Scale Blackwell - 72-GPU rack system for trillion-parameter workloads

- NVIDIA GB300 NVL72 - Blackwell Ultra - Next-generation rack system with 288GB HBM3e per GPU