Lumai Iris Nova: Optical AI Inference Server

Lumai's optical AI inference server uses light-based computing to run billion-parameter LLMs, with what the company claims is up to 90% lower energy use than GPUs.

TL;DR

- First commercial optical computing system to run real-time inference on Llama 8B and 70B

- Optical tensor engine performs matrix multiplications using light - no transistors doing the multiplying

- Vendor claims up to 90% lower energy than GPU architectures; no independent benchmark data exists yet

- Available for evaluation by hyperscalers, neo-clouds, enterprises, and research institutions - not generally sold

- Roadmap includes Aura and Tetra variants; Tetra targets 100 TOPS/W and 1 exaOPS in 10 kW by 2029

- No TOPS figures disclosed for Iris Nova; all performance claims are Lumai's own

Overview

Lumai's Iris Nova is a genuine engineering first: a commercial inference server that uses light rather than electrons to execute the matrix multiplications central to LLM inference. The company, spun out of Oxford optics research in 2021, launched the product on April 28, 2026, after winning the Falling Walls Award for Science Breakthrough of the Year in 2025. The core idea - encoding vectors as patterns of light intensity, multiplying them through optical components, and reading the output as an analog electrical signal - has been a research goal for decades. Lumai is the first company to ship it in a form factor that datacenter operators can evaluate.

The architecture is a hybrid. Digital electronics handle system control, memory, data conversion, and the software layer. The optical tensor engine handles only one thing: matrix multiplications. Input vectors are encoded onto 1,024 laser light sources, whose beams are then duplicated by lenses across the optical volume. An electronic display with pixel-level brightness control encodes the weight matrix: each pixel scales the light passing through it (multiplication), so darker pixels attenuate it more and lighter pixels pass more of it through. A final combining lens sums the contributions across the optical path (addition). The result is an analog output that is converted back to digital for the next layer. Each forward pass through the optical engine executes millions of multiply-accumulate operations simultaneously across the three-dimensional volume, which is where the claimed efficiency gains come from.
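To make the multiply-and-sum mechanism concrete, here is a toy, noise-free Python model of one optical matrix-vector pass. It is a sketch under our own assumptions, not Lumai's design: inputs are non-negative light intensities, each weight is a pixel transmission between 0 and 1, and the combining lens sums each output perfectly. Real weights can be negative, which a plain intensity model cannot represent, so this toy ignores signed values entirely. All function names are hypothetical.

```python
# Toy model of a hybrid optical matrix-vector multiply (illustrative only).
# Assumptions: non-negative intensities, weights as transmissions in [0, 1],
# ideal lenses, no noise, no crosstalk.

def encode_inputs(values, max_value):
    """Digital-to-analog step: map each input value to a laser intensity in [0, 1]."""
    return [v / max_value for v in values]


def optical_matvec(transmissions, intensities):
    """Each pixel scales the light passing through it (multiply); the combining
    lens adds the attenuated beams that reach each detector (accumulate)."""
    outputs = []
    for row in transmissions:
        attenuated = [t * i for t, i in zip(row, intensities)]  # multiply
        outputs.append(sum(attenuated))                         # accumulate
    return outputs


def read_out(analog, levels=255, full_scale=1.0):
    """Analog-to-digital step: quantize each detector reading to an integer code.
    Readings above full scale saturate."""
    return [round(min(v, full_scale) / full_scale * levels) for v in analog]


weights = [[1.0, 0.5, 0.0],       # darker pixel -> smaller transmission
           [0.25, 0.25, 0.25]]
light = encode_inputs([2, 4, 4], max_value=4)
analog = optical_matvec(weights, light)
print(analog, read_out(analog))   # [1.0, 0.625] [255, 159]
```

In a physical system the multiply and accumulate happen in one propagation step rather than a Python loop; the loop only spells out what the optics compute.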

That's the theory. The practice is harder to evaluate for now. Lumai hasn't published TOPS figures for Iris Nova, hasn't released head-to-head token throughput data against the H100 or any other accelerator, and hasn't put the system through any independent benchmark suite. What they have shown is that the system runs Llama 8B and Llama 70B in real time - which is a non-trivial achievement for an optical system - and that it fits into a standard PCIe form factor. Whether it competes economically with GPU-based inference at scale is a question nobody outside Lumai can answer yet.

The Lumai Iris Nova inference server uses a standard PCIe card form factor. Image: Lumai

Key Specifications

| Specification | Details |
|---|---|
| Manufacturer | Lumai (UK) |
| Product Family | Iris |
| Chip Type | Optical Processor (hybrid digital-optical) |
| Process Node | Not applicable to the optical engine (no semiconductor node) |
| Optical Matrix Size | 2048 x 2048 (optical tensor operations) |
| Laser Sources | 1,024 input encoding channels |
| Supported Precision | INT4/INT8 equivalent |
| Supported Models | Llama 8B, Llama 70B (real-time inference) |
| TOPS | Not disclosed |
| TDP | Not disclosed |
| Memory | Not disclosed |
| Memory Bandwidth | Not disclosed |
| Form Factor | Standard PCIe cards |
| Cooling | Air-cooled, datacenter compatible |
| Target Workload | Inference (prefill stage, per Lumai) |
| Availability | Evaluation only (hyperscalers, neo-clouds, enterprises, research institutions) |
| Pricing | Not disclosed |
| Launch Date | April 28, 2026 |

Performance Benchmarks

No independent benchmark data exists for the Iris Nova. Everything in this section is vendor-reported.

Lumai's claims:

- Up to 90% lower energy consumption than conventional GPU architectures (for inference workloads)

- Up to 50x faster than conventional solutions (basis and conditions not specified)

- Runs Llama 8B and Llama 70B in real time

- Excels at the prefill stage of disaggregated inference

- Throughput scales quadratically with matrix size while power scales linearly (a theoretical property of optical matrix multiplication, not a measured result)
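The quadratic-versus-linear property follows from simple counting. The sketch below assumes (our simplification, not Lumai's disclosure) that an N x N pass needs N input converters and N output converters, that converter count tracks electrical power, and that the optics perform all N x N multiply-accumulates at no extra cost. Lumai lists 1,024 laser sources alongside a 2048 x 2048 matrix size, so its real channel counts may not map this neatly.

```python
# Idealized operation and converter counts for one N x N optical pass.
# Assumption: power tracks converter count (N DACs + N ADCs); the optics are free.

def macs_per_pass(n):
    return n * n          # every weight pixel contributes one multiply-accumulate


def converters_per_pass(n):
    return 2 * n          # N input DACs + N output ADCs


for n in (1024, 2048, 4096):
    macs, convs = macs_per_pass(n), converters_per_pass(n)
    print(f"N={n}: {macs:,} MACs, {convs:,} converters, "
          f"{macs // convs:,} MACs per converter")
```

Doubling N quadruples the multiply-accumulates but only doubles the converters. That is the whole theoretical argument; it says nothing about how many joules the converters, lasers, and memory actually consume.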

Lumai's own efficiency comparison chart. Independent verification is not yet available. Image: Lumai

What Lumai has not disclosed: tokens per second on any model, time-to-first-token, prefill latency versus a GPU baseline, throughput at any batch size, or system-level power consumption including the digital electronics required to run the optical engine.

The "90% lower energy" and "50x faster" figures are eye-catching, but they come with no denominator. Faster and more efficient than what, exactly, and under which conditions? Lumai's press materials reference "conventional GPU architectures" without naming a specific chip or workload configuration. That's a red flag for any hardware claim. The two figures are also hard to reconcile. If the system were truly 50x faster while drawing 10% of the power, it would represent a 500x improvement in operations per watt over state-of-the-art GPU inference, which would be extraordinary and would require extraordinary evidence. If "90% lower energy" instead means energy per task, it corresponds to a 10x efficiency gain on its own, and a 50x speedup would then imply drawing about five times the baseline power.
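The arithmetic behind the 500x figure depends on whether "90% lower energy" refers to power draw or to energy per task, because operations per watt is the same quantity as operations per joule. The sketch below evaluates both readings using only Lumai's two headline numbers; the baseline remains unspecified and the function names are ours.

```python
# Two readings of Lumai's headline claims against an unnamed GPU baseline.
SPEEDUP = 50.0     # "up to 50x faster"
REDUCTION = 0.90   # "up to 90% lower energy"


def gain_if_power(speedup, reduction):
    """Reading A: 50x the throughput while drawing (1 - reduction) of the power."""
    return speedup / (1.0 - reduction)        # ops per watt relative to baseline


def gain_if_energy(reduction):
    """Reading B: (1 - reduction) of the energy per task. Ops/W equals ops/J,
    so the speedup does not change efficiency in this reading."""
    return 1.0 / (1.0 - reduction)


def power_if_energy(speedup, reduction):
    """Reading B: power = energy / time, relative to the baseline."""
    return (1.0 - reduction) * speedup


print(f"Reading A: {gain_if_power(SPEEDUP, REDUCTION):.0f}x ops per watt")
print(f"Reading B: {gain_if_energy(REDUCTION):.0f}x ops per joule "
      f"at {power_if_energy(SPEEDUP, REDUCTION):.0f}x the baseline power")
```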

The more plausible interpretation is that the efficiency gains are real but apply specifically to the optical tensor engine component in isolation, not to the full system including digital control, memory, and data conversion. That's still interesting, but it's a different claim.
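The gap between engine-level and system-level efficiency is easy to quantify with an Amdahl-style estimate. In the sketch below, only the matrix-multiply share of a baseline system's energy gets 90% cheaper and everything else costs the same. The share values are hypothetical, since Lumai hasn't published an energy breakdown, and holding the rest of the system constant is generous because the optical design adds conversion overhead a GPU doesn't have.

```python
# Amdahl-style estimate: only the matmul share of system energy benefits.
ENGINE_REDUCTION = 0.90   # Lumai's claim, assumed here to apply to the optical engine only


def system_energy_reduction(engine_reduction, matmul_share):
    """Fraction of total system energy saved when matmul_share of the baseline
    energy shrinks by engine_reduction and the rest is unchanged."""
    return matmul_share * engine_reduction


for share in (0.3, 0.5, 0.8):   # hypothetical matmul shares of system energy
    saved = system_energy_reduction(ENGINE_REDUCTION, share)
    print(f"matmul share {share:.0%}: system energy down {saved:.0%}")
```

Only if matrix multiplication accounted for all of the system's energy would the engine-level 90% carry through unchanged.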

Diagram of Lumai's optical matrix multiplier architecture. Weights are encoded as pixel brightness; light propagation performs the multiplication. Image: Lumai

The prefill specialization is a meaningful admission. Disaggregated inference separates the prefill phase (processing the input prompt, which is compute-bound) from the decode phase (generating tokens one at a time, which is memory-bandwidth-bound). Lumai's system is built for compute-bound workloads, which makes sense given that optical computing excels at dense matrix operations. The memory-bound decode phase still needs conventional hardware. Any realistic deployment using Iris Nova for prefill would need GPUs or other accelerators for decode - a hybrid stack that adds operational complexity.
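The compute-bound versus memory-bound split comes down to arithmetic intensity: how many operations are performed per byte of weights read from memory. The rough sketch below covers one square linear layer, assuming INT8 weights that are read once per forward pass and reused for every token in it, and ignoring activation and KV-cache traffic. The hidden size of 4,096 is an assumed value typical of 8B-class models.

```python
# Arithmetic intensity of one d x d linear layer: ops per byte of weights read.
# Assumptions: INT8 weights (1 byte each), read once per forward pass and reused
# for every token in that pass; activation and KV-cache traffic are ignored.

def ops_per_weight_byte(tokens, d, bytes_per_weight=1):
    ops = 2 * tokens * d * d              # one multiply + one add per weight per token
    weight_bytes = d * d * bytes_per_weight
    return ops / weight_bytes


d = 4096  # assumed hidden size, typical of 8B-class models
print("prefill, 2,048-token prompt:", ops_per_weight_byte(2048, d))  # 4096.0
print("decode, one token per step:", ops_per_weight_byte(1, d))      # 2.0
```

Batching several decode requests raises the decode figure in proportion to the batch size, whereas a single long prompt gives prefill that reuse on its own.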

Key Capabilities

Optical Tensor Engine. The central claim of the Iris Nova is that it performs matrix multiplication using light rather than transistors. The mechanism is closer to analog computing than digital: input vectors are converted to laser intensities, weight matrices are encoded as pixel-level brightness patterns on an electronic display, and the optical system computes the dot products through physical light propagation. This executes in the time it takes light to travel through the optical volume - effectively instantaneous from an electronics standpoint - and many multiplications happen simultaneously across the 3D volume. The 2048 x 2048 optical matrix size likely sets the largest weight block the engine can apply in a single pass; larger matrices would presumably be split into tiles.
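If 2048 x 2048 is the largest weight block the engine applies per pass (our assumption; Lumai hasn't described its tiling), most Llama layers must be split across several passes. The sketch counts tiles for a few weight shapes. The shapes are those of the Llama 3 8B and 70B configurations, assumed here because the source doesn't say which Llama version Lumai ran.

```python
import math

TILE = 2048  # optical matrix size from the spec table, assumed to be the per-pass limit


def tiles_needed(out_features, in_features, tile=TILE):
    """Tile-sized optical passes needed to apply one weight matrix to one input vector."""
    return math.ceil(out_features / tile) * math.ceil(in_features / tile)


# (out_features, in_features) for selected projections (assumed Llama 3 shapes)
layers = {
    "8B attention query (4096 x 4096)": (4096, 4096),
    "8B MLP up-projection (14336 x 4096)": (14336, 4096),
    "70B MLP up-projection (28672 x 8192)": (28672, 8192),
}
for name, (rows, cols) in layers.items():
    print(f"{name}: {tiles_needed(rows, cols)} passes")
```

In this simple scheme, tiles that split the input dimension produce partial sums for the same outputs, which would have to be added digitally after conversion, so tiling also multiplies the number of analog-to-digital reads.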

This approach has a real physical advantage. Optical multiply-accumulate operations don't dissipate heat the way transistor switching does. The energy cost comes from powering the light sources and the weight display, encoding inputs (the digital-to-analog conversion), and reading outputs (the analog-to-digital conversion), not from the multiplication itself. For large matrix multiplications - which dominate LLM inference - this theoretically shifts where energy is spent. Whether that shift is large enough to matter at system level, given all the surrounding digital infrastructure, is what real benchmark data would tell us.

Lumai's diagram showing how the optical engine fits into the broader inference stack. Image: Lumai

Iris Product Family and Roadmap. The Nova is the first of three planned servers in the Iris line. Lumai has named two successors: Aura (no specs disclosed) and Tetra. The Tetra has a concrete target: 100 TOPS/W at INT8 precision and 1 exaOPS in a 10 kW power envelope, both projected for 2029. For reference, NVIDIA's H100 delivers roughly 2-4 TOPS/W at INT8 depending on workload - so Tetra's target would be a 25-50x improvement in energy efficiency at scale, within a three-year timeline. That's an aggressive claim, and 2029 is far enough away that it should be treated as an aspiration rather than a commitment.
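The Tetra targets are internally consistent, and the comparison with the H100 is straightforward arithmetic. The sketch uses only the targets quoted above and the rough 2-4 TOPS/W H100 range; nothing here is measured.

```python
TETRA_OPS_PER_S = 1e18          # 1 exaOPS target (2029)
TETRA_POWER_W = 10_000          # 10 kW envelope
H100_TOPS_PER_W = (2.0, 4.0)    # rough INT8 range quoted above

tetra_tops_per_w = TETRA_OPS_PER_S / TETRA_POWER_W / 1e12
print(f"Tetra: {tetra_tops_per_w:.0f} TOPS/W")                     # Tetra: 100 TOPS/W
for h100 in H100_TOPS_PER_W:
    print(f"vs {h100:.0f} TOPS/W: {tetra_tops_per_w / h100:.0f}x")  # 50x, then 25x
```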

Prefill Specialization. The Iris Nova aims to handle the compute-bound prefill phase of disaggregated inference. This is the part of the inference pipeline that ingests the full prompt context and generates the KV cache. It's where large matrix multiplications are concentrated, and where an optical system's parallel computation advantage applies most directly. The decode phase - autoregressive token generation - involves smaller, sequential operations that are bottlenecked by memory bandwidth rather than compute. Lumai's architecture doesn't claim to address the decode bottleneck, which means the Nova is a prefill accelerator, not a complete inference solution on its own.
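Prefill's output is the KV cache, and in a disaggregated deployment that cache has to be handed to the decode hardware. A rough sizing sketch follows. The layer and head counts are the Llama 3 8B and 70B configurations (assumed, since the source doesn't say which Llama version), 16-bit cache values are an assumption, and Lumai hasn't said how the handoff works.

```python
def kv_cache_bytes(seq_len, n_layers, n_kv_heads, head_dim, bytes_per_value=2):
    """Size of the key and value tensors a prefill pass produces for one sequence."""
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_value  # 2 = K and V


GIB = 2**30
# Assumed configurations: grouped-query attention with 8 KV heads of size 128
models = {"Llama 3 8B": (32, 8, 128), "Llama 3 70B": (80, 8, 128)}
prompt_tokens = 8192

for name, (layers, kv_heads, head_dim) in models.items():
    size = kv_cache_bytes(prompt_tokens, layers, kv_heads, head_dim)
    print(f"{name}: {size / GIB:.2f} GiB for an {prompt_tokens:,}-token prompt")
```

Under these assumptions an 8,192-token prompt yields about 1 GiB of cache for the 8B model and 2.5 GiB for the 70B model, all of which must move from the prefill device to the decode device.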

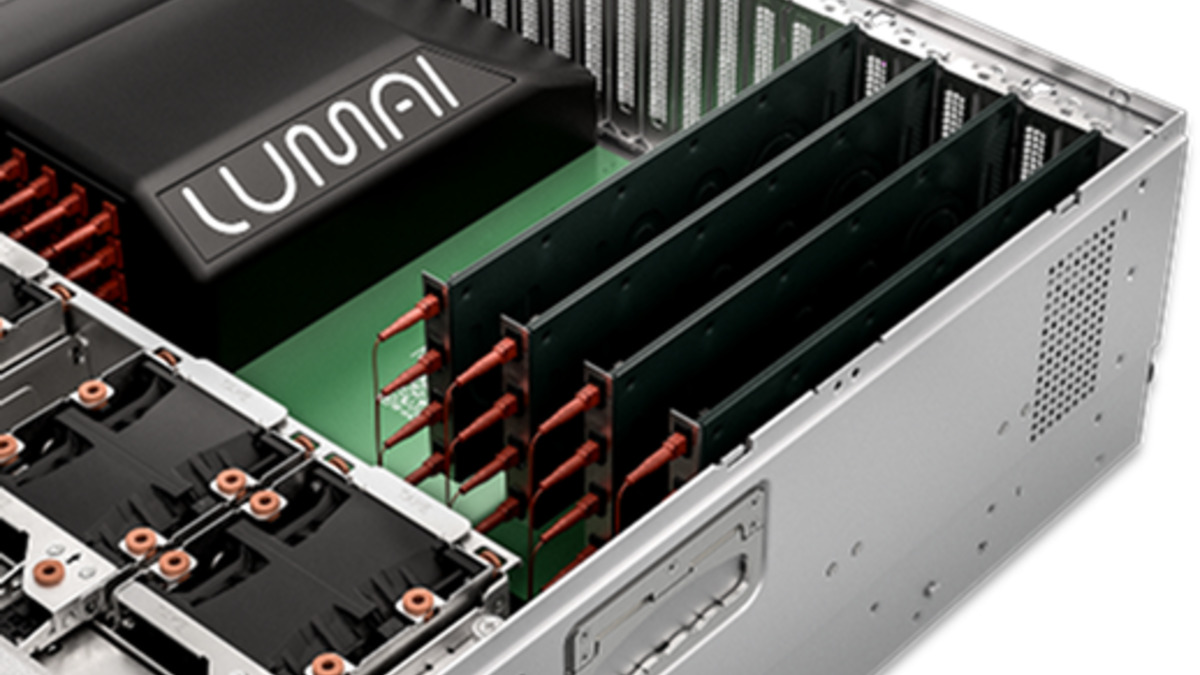

PCIe Form Factor. Fitting the optical engine into a standard PCIe card is a genuine achievement. Optical systems usually require precise mechanical alignment, vibration isolation, and temperature control that conflict with the dense, hot environments of datacenter server slots. Lumai's engineering work to fit this into an air-cooled PCIe form factor is non-trivial and removes a major barrier to datacenter adoption.

Pricing and Availability

Lumai isn't selling Iris Nova yet. The product is available for evaluation by hyperscalers, neo-clouds, enterprises, and research institutions who request access at lumai.ai/eval. No pricing has been disclosed, which is normal for evaluation-stage hardware but makes any ROI calculation impossible at this point.

The Groq LPU - another inference-specialized accelerator with an unconventional architecture - similarly launched as a specialized product through controlled access before broader deployment. The question for Lumai is whether the optical manufacturing process can scale to volume production at competitive cost, which is a separate challenge from proving the technology works.

General commercial availability has no announced timeline. The 2029 Tetra roadmap suggests Lumai is thinking in multi-year product cycles, which is realistic for novel manufacturing technology but slow by datacenter procurement standards. Organizations evaluating Iris Nova today are assessing research partnerships as much as products.

Strengths and Weaknesses

Strengths

- Genuine technical novelty: the first commercial optical computing system for LLM inference

- PCIe form factor with air cooling enables datacenter integration without exotic infrastructure

- Physics-based advantage in energy for matrix multiplication could translate to real system-level gains at scale

- Oxford optics lineage and ARIA backing suggest credible scientific foundation

- Strong prefill specialization: compute-bound prefill is exactly where optical parallelism applies

- Roadmap to 100 TOPS/W by 2029, if achieved, would redefine the efficiency frontier

Weaknesses

- Zero independent performance data; all claims are self-reported without verifiable methodology

- No TOPS figures, no token throughput numbers, no latency benchmarks against any named baseline

- Prefill-only specialization means any deployment needs complementary decode hardware

- General sale timeline is unknown; current "availability" is evaluation access only

- Pricing undisclosed; cost-per-token versus GPU inference can't be calculated

- Optical manufacturing at scale is an unsolved industry problem - yield and cost are unknown

- No published token-throughput comparison against NVIDIA H100 or H200

Related Coverage

- Google TPU v7 Ironwood - Another inference-focused accelerator, built around a disaggregated serving architecture with large per-chip memory

- Groq LPU - Custom inference ASIC with a similarly radical architectural departure from GPU norms; cloud-only availability model

- Cerebras WSE-3 - Wafer-scale chip that also achieves massive spatial parallelism, though through silicon rather than optics

- Etched Sohu - Another purpose-built transformer inference chip claiming extreme efficiency gains over GPUs

Sources

- Lumai launch press release - GlobeNewswire

- Lumai product page

- Lumai technology overview

- HPCwire: Lumai Debuts Iris Optical Compute System

- Interesting Engineering: UK firm unveils world's first optical system for real-time LLM inference

- EE Times: Lumai Productizes Lens-Based Optical Computer

- Photonics Spectra: Lumai Launches Optical Computing System

✓ Last verified May 15, 2026