Huawei Atlas 350 - China's FP4 Inference Accelerator

Huawei Atlas 350 specs, benchmarks, and analysis. Ascend 950PR chip, 112GB HiBL 1.0 HBM, 1.56 PFLOPS FP4, 600W - China's first domestically developed FP4-capable AI accelerator.

Overview

Huawei unveiled the Atlas 350 accelerator card, powered by the new Ascend 950PR NPU, at the China Partner Conference 2026 on March 20, 2026. The card is China's first AI accelerator with native FP4 inference support, and the first Huawei silicon to feature HiBL 1.0 - the company's in-house high-bandwidth memory, which removes its dependence on SK Hynix and Samsung HBM.

TL;DR

- China's first FP4-capable AI inference chip, delivering 1.56 PFLOPS FP4 in a 600W envelope

- 112GB of Huawei's own HiBL 1.0 HBM at 1.4 TB/s - no longer reliant on third-party HBM suppliers

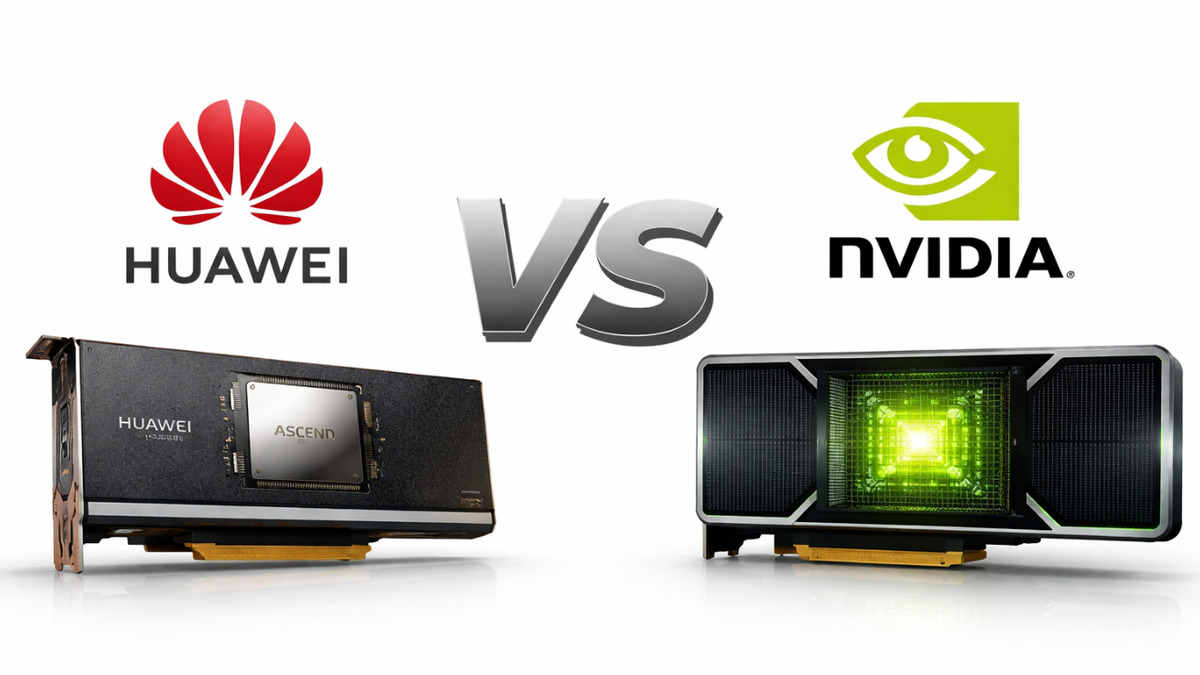

- Claims 2.87x the inference performance of NVIDIA's China-legal H20

- ByteDance and Alibaba confirmed orders; Huawei targets 750,000 units shipped in 2026

The Atlas 350 targets the China AI inference market, where Huawei competes against NVIDIA's H20 - the only NVIDIA datacenter chip still legally exportable to China under US trade restrictions. With the H20 restricted in capability by those same rules, the performance gap Huawei claims is partly a consequence of the H20's deliberately limited specs, not solely Huawei's engineering progress.

Still, the Atlas 350 represents a real step forward for domestic Chinese AI infrastructure. Both ByteDance and Alibaba have confirmed plans to order the 950PR after testing showed improved software compatibility with NVIDIA's CUDA ecosystem - a persistent weakness in earlier Ascend generations that historically limited adoption.

The chip also arrives as Chinese AI companies like DeepSeek continue pushing efficiency frontiers, making inference throughput per watt a competitive differentiator. See our coverage of DeepSeek V4 for context on how Chinese labs are driving demand for inference-optimized hardware.

Key Specifications

| Specification | Details |
|---|---|
| Manufacturer | Huawei |
| Product Family | Atlas |
| Chip | Ascend 950PR |
| Chip Type | ASIC (Inference-focused) |
| Process Node | Not disclosed |
| Memory | 112GB HiBL 1.0 HBM |
| Memory Bandwidth | 1,400 GB/s (1.4 TB/s) |
| FP4 Performance | 1,560 TFLOPS (1.56 PFLOPS) |
| FP8 Performance | Not disclosed |
| FP16 Performance | Not disclosed |
| TDP | 600W |
| Interconnect (LingQu) | 2,000 GB/s (2 TB/s), 2.5x over 910 series |
| FP4 Support | Yes (first in China) |
| Release Date | Q1 2026 |
| Price | Approximately 111,000 CNY (roughly $16,000) |
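
Two derived figures help put the headline specifications in context: peak FP4 throughput per watt, and the roofline "ridge point" (the number of FLOPs a kernel must perform per byte of memory traffic before it stops being bandwidth-bound). The sketch below is only arithmetic on Huawei's own reported numbers; the function and constant names are illustrative, and peak figures say nothing about sustained performance.

```python
# Figures copied from the specification table above (Huawei-reported peaks).
FP4_TFLOPS = 1560.0    # peak FP4 throughput, TFLOPS
MEM_BW_GBPS = 1400.0   # memory bandwidth, GB/s
TDP_WATTS = 600.0      # board power, W


def tflops_per_watt(tflops: float, watts: float) -> float:
    """Peak throughput divided by board power."""
    return tflops / watts


def ridge_point_flops_per_byte(tflops: float, bandwidth_gbps: float) -> float:
    """Roofline ridge point: FLOPs per byte needed to become compute-bound."""
    return (tflops * 1e12) / (bandwidth_gbps * 1e9)


efficiency = tflops_per_watt(FP4_TFLOPS, TDP_WATTS)
ridge = ridge_point_flops_per_byte(FP4_TFLOPS, MEM_BW_GBPS)

print(f"Peak FP4 efficiency:  {efficiency:.2f} TFLOPS/W")
print(f"Roofline ridge point: {ridge:.0f} FLOP/byte")
```

A ridge point above 1,000 FLOP/byte means that only kernels with very high data reuse can approach the peak FP4 figure; low-reuse phases such as token-by-token decoding are limited by the 1.4 TB/s memory bandwidth instead.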

Performance Benchmarks

No independent third-party benchmarks are publicly available. All figures below are from Huawei's own announcements at the March 2026 conference.

| Benchmark | Atlas 350 (Ascend 950PR) | NVIDIA H20 | Huawei Ascend 910C |
|---|---|---|---|
| FP4 Performance | 1.56 PFLOPS | Not supported | Not supported |
| Memory Capacity | 112GB | 96GB | 96GB |
| Memory Bandwidth | 1.4 TB/s | 4.0 TB/s | ~1.8 TB/s |
| Interconnect | 2 TB/s (LingQu) | NVLink 4.0 | ~1.0 TB/s |
| TDP | 600W | 400W | 400W |
| H20-relative throughput | 2.87x (Huawei claim) | 1.0x | ~0.6x (est.) |
| Multimodal generation speed | +60% vs H20 (Huawei claim) | Baseline | Below H20 |

The 2.87x claim deserves scrutiny. Huawei's comparison runs the Atlas 350 at FP4 against the H20 at higher-precision INT8/FP16, which isn't an apples-to-apples comparison. FP4 provides roughly 2x the theoretical throughput of FP8 in the same silicon area, so part of the gap comes from precision rather than architecture. Still, 1.56 PFLOPS of FP4 compute is a genuine capability, and the H20's limitations are real - its specs were deliberately cut down to comply with export rules.
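
To get a rough sense of how much of the 2.87x figure could be explained by precision alone, the claimed speedup can be divided by an assumed precision factor. The sketch below assumes the idealized rule from the paragraph above (2x theoretical throughput per halving of bit width, so 2x against an 8-bit baseline and 4x against FP16); that rule is a simplification, real workloads rarely scale this cleanly, and the names are illustrative.

```python
CLAIMED_SPEEDUP = 2.87  # Huawei's Atlas 350 vs H20 claim

# Assumed theoretical throughput advantage of FP4 over each baseline precision.
# The 2x-per-halving rule is an idealization, not a measured figure.
PRECISION_FACTOR = {"8-bit (INT8/FP8)": 2.0, "FP16": 4.0}


def precision_adjusted(speedup: float, factor: float) -> float:
    """Speedup left over after dividing out the assumed precision advantage."""
    return speedup / factor


for baseline, factor in PRECISION_FACTOR.items():
    adjusted = precision_adjusted(CLAIMED_SPEEDUP, factor)
    print(f"vs {baseline:<17} precision-adjusted speedup = {adjusted:.2f}x")
```

Under these assumptions, the residual advantage is about 1.4x against an 8-bit baseline and below 1x against an FP16 baseline, which is why the precision used on each side of the comparison matters so much.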

The memory bandwidth comparison actually favors H20: 4.0 TB/s versus 1.4 TB/s for the Atlas 350. That gap will hurt on memory-bandwidth-bound inference tasks, particularly decoding for long-context models.
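
A back-of-the-envelope bound illustrates why the bandwidth gap matters for decoding. At batch size 1, each generated token requires reading roughly all model weights once, so tokens per second cannot exceed memory bandwidth divided by weight size. The sketch below assumes a hypothetical 70B-parameter model, FP4 weights (0.5 bytes per parameter) on the Atlas 350 and 8-bit weights (1 byte per parameter) on the H20, and it ignores KV-cache traffic, batching, and kernel efficiency; none of these assumptions come from Huawei's material.

```python
def decode_tokens_per_second(bandwidth_tb_s: float,
                             params_billion: float,
                             bytes_per_param: float) -> float:
    """Upper bound on batch-1 decode speed: bandwidth / bytes of weights read per token."""
    weight_bytes = params_billion * 1e9 * bytes_per_param
    return (bandwidth_tb_s * 1e12) / weight_bytes


PARAMS_BILLION = 70.0  # hypothetical model size, for illustration only

atlas_bound = decode_tokens_per_second(1.4, PARAMS_BILLION, 0.5)  # FP4 weights
h20_bound = decode_tokens_per_second(4.0, PARAMS_BILLION, 1.0)    # 8-bit weights

print(f"Atlas 350 (FP4 weights):  <= {atlas_bound:.1f} tokens/s")
print(f"H20 (8-bit weights):      <= {h20_bound:.1f} tokens/s")
```

Even with FP4 halving the weight footprint, the H20's higher bandwidth keeps it ahead on this simplified bound, consistent with the point that bandwidth-bound decoding is where the Atlas 350 is weakest.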

Huawei's Atlas 350 performance comparison against NVIDIA H20, presented at the China Partner Conference 2026.

Source: gizmochina.com

Key Capabilities

HiBL 1.0 - Huawei's Self-Developed HBM

The most strategically significant aspect of the Atlas 350 isn't its FP4 numbers - it's the memory. HiBL 1.0 is Huawei's own high-bandwidth memory technology, developed to eliminate dependence on SK Hynix and Samsung HBM supply chains that US export controls can disrupt. The 950PR carries 128GB of HiBL 1.0 at 1.6 TB/s on the chip itself, with the Atlas 350 card exposing 112GB at 1.4 TB/s.
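
The relationship between the chip-level and card-level memory figures can be checked with simple ratios. The sketch below only compares the numbers quoted above; Huawei has not explained how the card-level figures are derived from the chip-level ones, so any interpretation of the result is an assumption.

```python
# Memory figures quoted above: the 950PR chip vs the Atlas 350 card.
CHIP = {"capacity_gb": 128.0, "bandwidth_tb_s": 1.6}
CARD = {"capacity_gb": 112.0, "bandwidth_tb_s": 1.4}

capacity_ratio = CARD["capacity_gb"] / CHIP["capacity_gb"]
bandwidth_ratio = CARD["bandwidth_tb_s"] / CHIP["bandwidth_tb_s"]

print(f"Capacity exposed by the card:  {capacity_ratio:.3f} of chip")
print(f"Bandwidth exposed by the card: {bandwidth_ratio:.3f} of chip")
```

Both ratios come out to 0.875 (7/8), so capacity and bandwidth are reduced in the same proportion on the card.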

Memory access granularity was also reduced from 512 bytes to 128 bytes compared to the Ascend 910 series, which should meaningfully reduce memory bandwidth waste on sparse access patterns common in attention computations.
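
The effect of access granularity can be illustrated by rounding each request up to a whole number of granules. The sketch below assumes every access starts on a granule boundary and that scattered requests cannot be merged; the request sizes are illustrative and do not come from Huawei.

```python
def bytes_fetched(request_bytes: int, granularity: int) -> int:
    """Bytes actually moved when a request is rounded up to whole granules."""
    granules = (request_bytes + granularity - 1) // granularity  # integer ceiling
    return granules * granularity


def useful_fraction(request_bytes: int, granularity: int) -> float:
    """Share of the fetched bytes that the request actually needed."""
    return request_bytes / bytes_fetched(request_bytes, granularity)


for granularity in (512, 128):      # Ascend 910 series vs 950PR, per the text above
    for request in (64, 128, 512):  # illustrative request sizes in bytes
        fetched = bytes_fetched(request, granularity)
        share = useful_fraction(request, granularity)
        print(f"granularity={granularity:>3}B request={request:>3}B "
              f"fetched={fetched:>3}B useful={share:.1%}")
```

For a scattered 64-byte read, a 512-byte granule wastes 87.5% of the bandwidth it consumes, while a 128-byte granule wastes 50%; for large contiguous reads the two behave the same.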

LingQu Interconnect

The 950PR introduces the LingQu interconnect protocol at 2 TB/s bandwidth - 2.5x the interconnect bandwidth of the prior Ascend 910 series. Huawei has not published detailed topology specifications for how multiple cards connect, but this improvement addresses one of the 910B/910C's documented weaknesses in multi-card scaling for large model serving.

Previous Ascend hardware, including the Ascend 910C, relied on a slower interconnect that limited the effective throughput when scaling across four or eight cards. Better interconnect bandwidth matters most for large model inference where attention layers must synchronize KV cache across cards.
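
As a rough illustration of what the bandwidth increase means for multi-card synchronization, the sketch below estimates the bandwidth term of a ring all-reduce, in which each card sends and receives 2(n-1)/n times the payload. Huawei has not disclosed the LingQu topology, so the ring pattern, the 1 GB payload, and the use of the quoted figure as per-card bandwidth are all assumptions; the prior-generation bandwidth is simply derived from the stated 2.5x ratio, and link latency is ignored.

```python
def ring_allreduce_seconds(payload_bytes: float, cards: int,
                           bandwidth_tb_s: float) -> float:
    """Bandwidth-only estimate of ring all-reduce time (latency ignored)."""
    traffic_per_card = 2 * (cards - 1) / cards * payload_bytes
    return traffic_per_card / (bandwidth_tb_s * 1e12)


LINGQU_TB_S = 2.0               # quoted LingQu bandwidth
PRIOR_TB_S = LINGQU_TB_S / 2.5  # derived from the stated 2.5x improvement
PAYLOAD_BYTES = 1e9             # hypothetical 1 GB synchronization payload
CARDS = 8

t_new = ring_allreduce_seconds(PAYLOAD_BYTES, CARDS, LINGQU_TB_S)
t_old = ring_allreduce_seconds(PAYLOAD_BYTES, CARDS, PRIOR_TB_S)

print(f"LingQu (2.0 TB/s):        {t_new * 1e3:.3f} ms")
print(f"Prior gen ({PRIOR_TB_S:.1f} TB/s):     {t_old * 1e3:.3f} ms")
```

In this bandwidth-only model the synchronization time shrinks by the same 2.5x as the link speed; in practice latency and topology would reduce the benefit for small payloads.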

CUDA Compatibility

Earlier Ascend chips suffered from incomplete CUDA compatibility, which required significant porting effort to run standard PyTorch-based inference stacks. According to ByteDance and Alibaba's testing (cited in Reuters reporting), the 950PR has improved this substantially. Both companies cited CUDA compatibility as a key factor in their decision to place orders. Huawei has not published specifics on which CUDA operations are now fully supported, so independent validation from the open-source community remains the benchmark that matters.

Pricing and Availability

Huawei priced the Atlas 350 at approximately 111,000 CNY, roughly $16,000 at current exchange rates. NVIDIA's H20 sells for $15,000-$25,000 in China depending on supplier and allocation, placing the Atlas 350 at the lower end of that range.

Huawei aims to ship 750,000 units in 2026, with mass production fully ramped in the second half of the year. For context, NVIDIA reportedly sold around 500,000 H20 units in China in 2024. If Huawei hits its shipment target, it would represent a major shift in China's AI compute supply chain.

The Atlas 350 is only available for purchase within China. No international availability is planned given both NVIDIA's dominance in other markets and Chinese government interest in keeping domestic AI compute infrastructure inside its borders.

Strengths and Weaknesses

Strengths

- First Chinese AI chip with FP4 support, enabling modern quantized inference

- HiBL 1.0 removes dependence on foreign HBM supply chains

- 2 TB/s LingQu interconnect is 2.5x faster than prior Ascend generation

- ~$16,000 price sits at or below H20 market pricing in China

- ByteDance and Alibaba orders suggest real-world software compatibility has improved

- 60% faster multimodal generation than the H20 in Huawei's own tests

Weaknesses

- Memory bandwidth (1.4 TB/s) is well below H20 (4.0 TB/s) - a significant disadvantage for bandwidth-bound workloads

- Process node not disclosed, suggesting Huawei is cautious about revealing its manufacturing partner

- All performance benchmarks are Huawei self-reported with no independent validation

- FP4-vs-H20 comparison uses different precision levels, inflating the headline figure

- No training capability - inference only

- Limited to China market

Related Coverage

- Huawei Ascend 910B - Previous generation chip

- Huawei Ascend 910C - Most recent prior chip the 950PR replaces

- China's AI chip self-sufficiency push

- DeepSeek V4 - Key inference workload driving demand for chips like the Atlas 350

Sources

✓ Last verified April 1, 2026