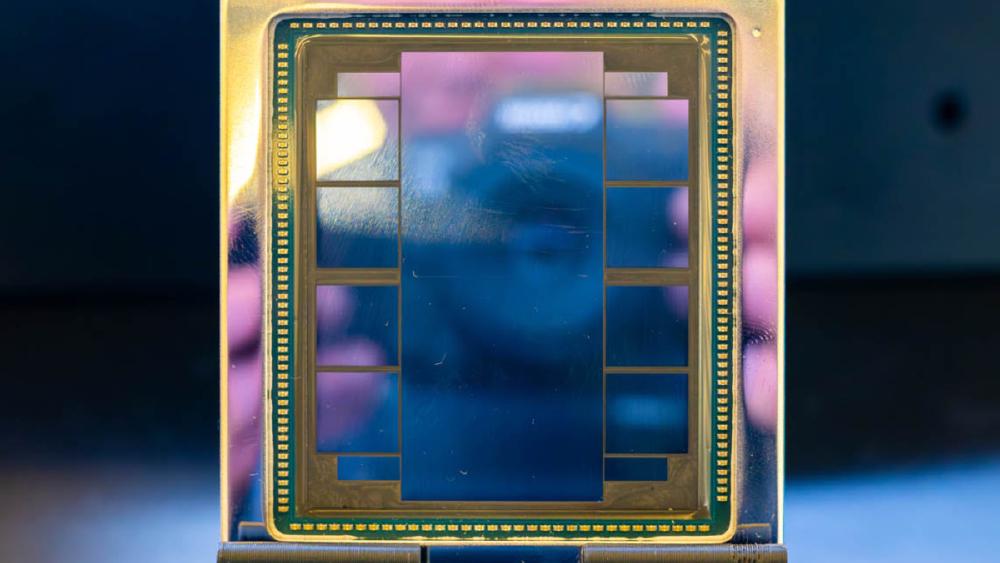

AMD Instinct MI350X

AMD Instinct MI350X specs and performance estimates. 288GB HBM3e, ~6,000 GB/s bandwidth, ~3,600 TFLOPS FP8 on CDNA 4 architecture at TSMC 3nm.

TL;DR

- 288GB HBM3e memory - a 50% increase over the MI300X's 192GB and 3.6x the NVIDIA H100's 80GB

- Estimated ~3,600 TFLOPS FP8 compute, roughly 38% higher than the MI300X's 2,610 TFLOPS

- CDNA 4 architecture on TSMC 3nm - a full node shrink from CDNA 3's 5nm/6nm chiplets

- New FP4 and FP6 data type support for next-generation quantization strategies

- Expected in H2 2025 at an estimated $15,000-$20,000 - AMD's direct answer to NVIDIA's B200 generation

Overview

The AMD Instinct MI350X is AMD's CDNA 4 flagship and the company's most aggressive bid yet to close the gap with NVIDIA in the data center AI accelerator market. Announced at AMD's Advancing AI event in late 2024 and targeted for H2 2025 availability, the MI350X builds on everything that made the MI300X compelling - massive memory capacity, competitive FP8 compute, chiplet architecture efficiency - and pushes every spec higher while moving to TSMC's 3nm process node.

The 288GB of HBM3e is the standout number. Where the MI300X's 192GB was already 2.4x the H100's capacity, the MI350X extends that lead to 3.6x. For inference workloads, this means a single MI350X can hold models that require two H100s or even two MI300Xs. The memory bandwidth also increases to an estimated 6,000 GB/s, ensuring the larger memory pool can actually feed the compute engine. AMD has been clear that memory capacity is their competitive wedge against NVIDIA, and the MI350X doubles down on that strategy.

CDNA 4 also introduces new data types. FP4 and FP6 support joins the existing FP8, FP16, and BF16 capabilities, giving quantization-focused inference deployments more options to trade precision for throughput. This matters because the industry is rapidly converging on aggressive quantization for production inference - running a model at FP4 with minimal quality degradation means you can serve roughly 2x the throughput compared to FP8. NVIDIA's Blackwell architecture introduced FP4 support as well, so this is AMD keeping pace on the format front rather than leading, but having parity matters for customers evaluating both platforms.

The ROCm software story remains the critical variable. AMD has invested heavily in ROCm 6.x, and framework support from PyTorch, JAX, and inference engines like vLLM continues to improve. But NVIDIA's CUDA ecosystem advantage is measured in millions of developer-hours of optimization, and each new hardware generation resets part of that race. The MI350X will need strong day-one software support to convert the hardware specs into real-world performance leadership.

Key Specifications

| Specification | Details |
|---|---|
| Manufacturer | AMD |
| Product Family | Instinct MI350 |
| Architecture | CDNA 4 |
| Process Node | TSMC 3nm |
| Chip Type | GPU |
| FP8 Performance | ~3,600 TFLOPS (estimated) |
| FP4 Performance | ~7,200 TFLOPS (estimated, 2x FP8) |
| FP16 / BF16 Performance | ~1,800 TFLOPS (estimated) |
| Memory | 288GB HBM3e |
| Memory Bandwidth | ~6,000 GB/s (estimated) |
| New Data Types | FP4, FP6 |
| Interconnect | AMD Infinity Fabric (next-gen) |
| TDP | 750W |
| Form Factor | OAM (expected) |
| Target Workload | Training and Inference |
| Release Date | H2 2025 (expected) |
| Estimated Price | $15,000-$20,000 |

Note: Many MI350X specifications are based on AMD's public roadmap disclosures and analyst estimates. Final specifications may differ at launch. This page will be updated with verified data once AMD publishes official specifications and independent benchmarks become available.

Performance Benchmarks (Estimated)

| Benchmark / Metric | MI350X (est.) | MI300X | NVIDIA H100 SXM | NVIDIA B200 |
|---|---|---|---|---|
| FP8 Peak (TFLOPS) | ~3,600 | 2,610 | 1,979 | ~4,500 |
| FP4 Peak (TFLOPS) | ~7,200 | N/A | N/A | ~9,000 |
| Memory Capacity | 288GB | 192GB | 80GB | 192GB |
| Memory Bandwidth | ~6,000 GB/s | 5,300 GB/s | 3,350 GB/s | 8,000 GB/s |
| Llama 70B Inference (est. tok/s) | ~55-65 | ~38-42 | ~22-28 | ~50-60 |
| Llama 405B fits (single GPU) | Yes (FP4 only; FP6 needs ~304GB) | No | No | No (FP4 weights of ~202.5GB exceed 192GB) |
| Power (TDP) | 750W | 750W | 700W | 1,000W |
| Price (estimated) | $15,000-$20,000 | $10,000-$15,000 | $25,000-$40,000 | $30,000-$40,000 |

These are estimates based on AMD's disclosed specifications and historical generational scaling. The MI350X's performance relative to NVIDIA's B200 will depend heavily on software maturity at launch. On raw silicon specs, the B200 appears to have higher peak compute and memory bandwidth, but the MI350X's memory capacity advantage (288GB vs 192GB) could matter for specific model sizes that fit on MI350X but spill on B200.

The Llama 405B inference capability is particularly interesting. At FP4 precision, a 405B parameter model requires approximately 200GB of memory for weights. The MI350X's 288GB can handle this on a single accelerator with room for KV cache. On the B200 with 192GB, the FP4 weights alone do not fit, so you would need sub-4-bit quantization or a two-GPU setup for the same model. This kind of model-size sweet spot could be a meaningful differentiator for inference providers.
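The sizing argument above is simple arithmetic: parameters times bits per weight, divided by eight. The sketch below is a minimal illustration, assuming 1 GB = 10^9 bytes and counting weights only (no KV cache, activations, or runtime overhead). The helper names `weight_footprint_gb` and `fits` are hypothetical, chosen for this example.

```python
def weight_footprint_gb(params_billion: float, bits_per_weight: int) -> float:
    """Weight memory in GB (1 GB = 1e9 bytes); ignores KV cache and activations."""
    return params_billion * bits_per_weight / 8


def fits(params_billion: float, bits_per_weight: int, capacity_gb: float) -> bool:
    """True if the weights alone fit in the given memory capacity."""
    return weight_footprint_gb(params_billion, bits_per_weight) <= capacity_gb


if __name__ == "__main__":
    # Capacities from the article: 288GB (MI350X) and 192GB (MI300X, B200).
    for bits in (16, 8, 6, 4):
        need = weight_footprint_gb(405, bits)
        print(f"405B at {bits}-bit: {need:.2f} GB | "
              f"fits 288GB: {fits(405, bits, 288)} | "
              f"fits 192GB: {fits(405, bits, 192)}")
```

Real deployments need headroom beyond the weights, so a model that only just fits by this check may still be impractical to serve.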

Key Capabilities

CDNA 4 Architecture on 3nm. The move from TSMC 5nm/6nm to 3nm is not just a die shrink - it enables AMD to pack significantly more compute into the same power envelope. The MI350X maintains the 750W TDP of its predecessor while delivering an estimated 38% more FP8 compute and 50% more memory. AMD's chiplet approach also benefits from the node shrink, as smaller compute dies mean higher yields and more flexibility in how compute and I/O are distributed across the package. The 3nm node also puts AMD a step ahead of NVIDIA's Blackwell generation on process technology, since Blackwell is built on a TSMC 4nm-class node (4NP).

FP4 and FP6 Quantization Support. The addition of FP4 and FP6 data types is a significant capability for inference optimization. FP4 quantization can roughly double the effective throughput compared to FP8 for models that tolerate the reduced precision, and FP6 provides an intermediate option. Research from multiple labs has shown that large language models can be quantized to FP4 with minimal quality degradation when proper calibration techniques are used. Having hardware-native FP4 support - rather than software emulation - means the MI350X can execute these quantized operations at full silicon speed.

Memory Capacity Leadership. At 288GB, the MI350X offers the highest memory capacity of any single AI accelerator in its generation. This is a deliberate strategic choice by AMD. Memory capacity determines which models fit on a single chip, and every time you split a model across multiple GPUs, you pay a performance and cost penalty for inter-GPU communication. By offering 288GB, AMD ensures that open-weight models up to the 405B class, such as Llama 3.1 405B, can be served from a single MI350X at FP4. Larger models, such as DeepSeek V3.2 at roughly 671B parameters (about 335GB at 4 bits per weight), still exceed a single accelerator and need to be split across at least two.

Pricing and Availability

AMD has not announced official pricing for the MI350X. Based on the MI300X's pricing trajectory and the cost of the 3nm process node, analyst estimates place the MI350X at $15,000 to $20,000 per accelerator. This would represent a modest price increase over the MI300X while delivering significantly more compute and memory.

| Accelerator | Estimated Price | Memory | Price per GB | FP8 TFLOPS | Price per TFLOP |
|---|---|---|---|---|---|
| AMD MI350X (est.) | $15,000-$20,000 | 288GB | $52-$69/GB | ~3,600 | $4.2-$5.6 |
| AMD MI300X | $10,000-$15,000 | 192GB | $52-$78/GB | 2,610 | $3.8-$5.7 |
| NVIDIA B200 | $30,000-$40,000 | 192GB | $156-$208/GB | ~4,500 | $6.7-$8.9 |
| NVIDIA H100 SXM | $25,000-$40,000 | 80GB | $312-$500/GB | 1,979 | $12.6-$20.2 |

If these estimates hold, the MI350X would offer the best price-per-GB and competitive price-per-TFLOP in its generation. AMD's structural cost advantage from chiplet manufacturing continues to pay dividends here.
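The price-per-GB and price-per-TFLOP columns can be reproduced from the estimated price ranges, which makes it easy to rerun the comparison once real pricing appears. This is a minimal sketch using the article's estimated figures as inputs. The `ACCELERATORS` table and `per_unit_range` helper are hypothetical names for illustration.

```python
# Price and spec figures are the article's estimates, not official numbers.
ACCELERATORS = {
    # name: (price_low_usd, price_high_usd, memory_gb, fp8_tflops)
    "AMD MI350X (est.)": (15_000, 20_000, 288, 3_600),
    "AMD MI300X": (10_000, 15_000, 192, 2_610),
    "NVIDIA B200": (30_000, 40_000, 192, 4_500),
    "NVIDIA H100 SXM": (25_000, 40_000, 80, 1_979),
}


def per_unit_range(price_low: float, price_high: float, units: float) -> tuple[float, float]:
    """Return the (low, high) price per unit of memory or compute."""
    return price_low / units, price_high / units


if __name__ == "__main__":
    for name, (low, high, mem_gb, tflops) in ACCELERATORS.items():
        gb_low, gb_high = per_unit_range(low, high, mem_gb)
        tf_low, tf_high = per_unit_range(low, high, tflops)
        print(f"{name}: ${gb_low:.0f}-${gb_high:.0f}/GB, "
              f"${tf_low:.1f}-${tf_high:.1f}/TFLOP")
```

Swapping in a quoted price for either bound shows how sensitive the ranking is to the pricing assumptions.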

Availability is expected in H2 2025, with major OEM and cloud provider support. Microsoft Azure and Oracle Cloud have been early adopters of AMD Instinct accelerators and are likely to offer MI350X instances. Server platforms from Dell, HPE, Lenovo, and Supermicro are expected at or near launch.

Architecture Deep Dive

The MI350X continues AMD's chiplet strategy from the MI300X but advances every dimension - the process node, the memory generation, the data type support, and the on-package integration.

CDNA 4 Compute Architecture. While AMD has not disclosed the full CDNA 4 microarchitecture, several improvements are confirmed or strongly expected based on AMD's public roadmap and analyst briefings:

| Feature | CDNA 3 (MI300X) | CDNA 4 (MI350X) |
|---|---|---|
| Process Node | TSMC 5nm (XCD) / 6nm (IOD) | TSMC 3nm |
| Compute Dies (XCD) | 8 | Expected 8 (higher density per die) |
| FP8 TFLOPS | 2,610 | ~3,600 (estimated) |
| FP4 Support | No | Yes (hardware-native) |
| FP6 Support | No | Yes (hardware-native) |
| Sparsity Acceleration | Basic | Enhanced (2:4 structured sparsity expected) |
| Matrix Core Throughput | Baseline | ~1.4x per core (estimated) |

The move to TSMC's 3nm (N3E variant, most likely) allows AMD to pack more transistors per XCD while maintaining or reducing power consumption. Each CDNA 4 XCD is expected to contain more compute units than its CDNA 3 predecessor, and the matrix cores within each CU are redesigned for higher throughput on lower-precision data types. The combination of more CUs per die and more throughput per matrix core accounts for the estimated 38% FP8 performance improvement even before considering the new FP4 mode that doubles effective throughput again.

HBM3e Memory Subsystem. The MI350X moves from 8x HBM3 stacks (24GB each, 192GB total) to HBM3e stacks providing 288GB total. HBM3e offers both higher capacity per stack (up to 36GB in 12-Hi configurations) and higher bandwidth per stack (approximately 750-800 GB/s versus the MI300X's ~662 GB/s per stack). The aggregate ~6,000 GB/s bandwidth represents a 13% improvement over the MI300X.

| Memory Property | MI300X (HBM3) | MI350X (HBM3e) | Improvement |
|---|---|---|---|
| Total Capacity | 192GB | 288GB | +50% |
| Per-Stack Capacity | 24GB (8-Hi) | 36GB (12-Hi, est.) | +50% |
| Number of Stacks | 8 | 8 (expected) | Same |
| Per-Stack Bandwidth | ~662 GB/s | ~750 GB/s (est.) | +13% |
| Aggregate Bandwidth | 5,300 GB/s | ~6,000 GB/s | +13% |
| Memory Generation | HBM3 (SK Hynix) | HBM3e (SK Hynix/Samsung) | New gen |

The bandwidth improvement (+13%) is proportionally smaller than both the capacity improvement (+50%) and the FP8 compute improvement (+38%). Because bandwidth grows more slowly than compute, the MI350X has a lower bytes-per-FLOP ratio than the MI300X. For purely memory-bandwidth-bound workloads, the MI350X's performance scaling will be closer to 13% than 38%. The new FP4 data type partially offsets this - by halving the memory footprint of model weights relative to FP8, FP4 effectively doubles the available bandwidth for parameter reads.
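The bytes-per-FLOP comparison can be made concrete by dividing peak memory bandwidth by peak FP8 compute. The sketch below uses the article's figures (the MI350X values are estimates). `bytes_per_flop` is a hypothetical helper, and peak-rate ratios like this only approximate real kernel behavior.

```python
def bytes_per_flop(bandwidth_gb_s: float, tflops: float) -> float:
    """Memory bytes deliverable per floating-point operation at peak rates."""
    return (bandwidth_gb_s * 1e9) / (tflops * 1e12)


if __name__ == "__main__":
    mi300x = bytes_per_flop(5_300, 2_610)  # HBM3 bandwidth, FP8 peak
    mi350x = bytes_per_flop(6_000, 3_600)  # estimated HBM3e bandwidth, FP8 peak
    print(f"MI300X: {mi300x:.5f} bytes/FLOP")
    print(f"MI350X: {mi350x:.5f} bytes/FLOP")
    print(f"Change: {(mi350x / mi300x - 1) * 100:+.1f}%")
```

With these inputs the ratio falls by roughly 18%, which is why bandwidth-bound workloads should see gains closer to the 13% bandwidth uplift than to the 38% compute uplift.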

Chiplet Packaging and Interconnect. AMD is expected to use an advanced 2.5D/3D packaging approach for the MI350X, similar to the MI300X's silicon interposer design but potentially incorporating TSMC's CoWoS-L (chip-on-wafer-on-substrate with local silicon interconnect) for higher inter-chiplet bandwidth. The Infinity Fabric between XCDs is expected to see a bandwidth increase, improving the on-package data movement that determines how efficiently all compute dies can work together on a shared workload. The external Infinity Fabric links for multi-GPU communication are also expected to improve, though AMD has not disclosed specific numbers.

| Packaging Property | MI300X | MI350X (expected) |
|---|---|---|
| Packaging Technology | CoWoS-S (silicon interposer) | CoWoS-L or CoWoS-S (advanced) |
| Die Count | 12 (8 XCD + 4 IOD) | 8+ (details TBD) |
| Compute Die Process | TSMC 5nm | TSMC 3nm |
| I/O Die Process | TSMC 6nm | TSMC 5nm or 6nm (est.) |
| On-Package IF Bandwidth | High | Higher (est.) |
| External IF Links | 7 links, 896 GB/s bidirectional | Improved (est.) |
| Package Size | Large (~5,300 mm² total) | Similar or larger (est.) |

Power Efficiency Improvements. The move from 5nm to 3nm compute dies should deliver meaningful power efficiency gains. AMD claims the MI350X maintains the MI300X's 750W TDP while delivering an estimated 38% more FP8 compute - implying a roughly 38% improvement in performance-per-watt at the FP8 level. At FP4, the efficiency story is even better: halving the data width doubles peak throughput without increasing power, so the MI350X's FP4 TFLOPS-per-watt is approximately 2.76x the MI300X's FP8 figure. The comparison is against FP8 because the MI300X has no native FP4 mode.

| Efficiency Metric | MI300X | MI350X (est.) | Improvement |
|---|---|---|---|
| FP8 TFLOPS/Watt | 3.48 | ~4.80 | +38% |
| FP4 TFLOPS/Watt | N/A | ~9.60 | New capability |
| GB HBM/Watt | 0.256 | 0.384 | +50% |
| Memory BW/Watt | 7.07 GB/s/W | ~8.00 GB/s/W | +13% |

FP4 and FP6 Data Type Implementation. The FP4 format supported by the MI350X uses a 1-sign, 2-exponent, 1-mantissa bit scheme (E2M1), matching the NVIDIA Blackwell FP4 format for interoperability. FP6 uses 1-sign, 3-exponent, 2-mantissa (E3M2). Both formats are executed natively in the matrix cores, meaning no software emulation overhead. The practical impact:

| Data Type | Bits per Element | Memory for 70B Model | Memory for 405B Model | Estimated Quality Loss |
|---|---|---|---|---|
| FP16/BF16 | 16 | 140GB | 810GB | Baseline |
| FP8 | 8 | 70GB | 405GB | Minimal (<1% accuracy) |
| FP6 | 6 | 52.5GB | 304GB | Small (1-2% accuracy) |
| FP4 | 4 | 35GB | 202.5GB | Moderate (2-5% accuracy, workload-dependent) |

At FP4 precision, the MI350X's 288GB can hold a 405B parameter model (202.5GB for weights) with 85.5GB remaining for KV cache and runtime overhead. This is the headline use case that AMD is targeting.
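To see how coarse these formats are, the E2M1 and E3M2 layouts described above can be decoded in a few lines. This is a minimal sketch, not AMD's or NVIDIA's implementation. The exponent biases (1 for E2M1, 3 for E3M2) and the absence of Inf/NaN encodings are assumptions based on the OCP Microscaling convention, which the article does not state. `decode_minifloat` is a hypothetical helper, and the per-block scale factors used in practice are ignored.

```python
def decode_minifloat(code: int, exp_bits: int, man_bits: int, bias: int) -> float:
    """Decode a sign/exponent/mantissa bit pattern with no Inf/NaN encodings.

    The bias values passed in below are assumptions, not taken from the article.
    """
    sign = -1.0 if (code >> (exp_bits + man_bits)) & 1 else 1.0
    exp = (code >> man_bits) & ((1 << exp_bits) - 1)
    man = code & ((1 << man_bits) - 1)
    frac = man / (1 << man_bits)
    if exp == 0:
        # Subnormal: no implicit leading 1, fixed minimum exponent.
        return sign * frac * 2.0 ** (1 - bias)
    return sign * (1.0 + frac) * 2.0 ** (exp - bias)


if __name__ == "__main__":
    # All non-negative FP4 (E2M1) values: sign bit clear, codes 0..7.
    fp4_values = [decode_minifloat(c, exp_bits=2, man_bits=1, bias=1) for c in range(8)]
    print("FP4 E2M1 magnitudes:", fp4_values)

    # Largest FP6 (E3M2) magnitude: exponent and mantissa bits all set.
    print("FP6 E3M2 max:", decode_minifloat(0b011111, exp_bits=3, man_bits=2, bias=3))
```

Under these assumptions FP4 has only eight distinct magnitudes, which is why calibration and scaling matter so much for quality at this precision.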

Structured Sparsity Acceleration. CDNA 4 is expected to support 2:4 structured sparsity - a technique where half the weights in each group of 4 are zeroed out, allowing the hardware to skip those multiply-accumulate operations. NVIDIA introduced 2:4 sparsity support in Ampere (A100) and continued it in Hopper (H100). AMD adding this capability closes another feature gap. With structured sparsity enabled:

| Precision + Sparsity | Effective TFLOPS (est.) | Memory for 70B Model | Notes |
|---|---|---|---|
| FP8 (dense) | ~3,600 | 70GB | Baseline CDNA 4 |
| FP8 (2:4 sparse) | ~7,200 | 70GB | 2x compute, same memory |
| FP4 (dense) | ~7,200 | 35GB | 2x compute via lower precision |
| FP4 (2:4 sparse) | ~14,400 | 35GB | 4x compute vs FP8 dense |

These theoretical maximums assume perfect sparsity utilization, which is rarely achieved in practice. Real-world gains from 2:4 sparsity are typically 1.3-1.7x rather than the theoretical 2x, due to overhead in sparse format conversion and not all layers being suitable for sparsification. Still, the combination of FP4 + structured sparsity could make the MI350X's effective throughput competitive with GPUs that have significantly higher peak dense compute.
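The 2:4 pattern itself is easy to illustrate: in every group of four weights, two are kept and two are set to zero. The sketch below keeps the two largest-magnitude values per group. Magnitude-based selection is a common heuristic and an assumption here, since production sparsification tools may choose differently and usually fine-tune afterward. `prune_2_of_4` is a hypothetical helper name.

```python
def prune_2_of_4(weights: list[float]) -> list[float]:
    """Zero the two smallest-magnitude values in every group of four."""
    if len(weights) % 4 != 0:
        raise ValueError("length must be a multiple of 4")
    pruned: list[float] = []
    for start in range(0, len(weights), 4):
        group = weights[start:start + 4]
        # Indices of the two largest-magnitude entries in this group.
        keep = sorted(range(4), key=lambda i: abs(group[i]), reverse=True)[:2]
        pruned.extend(w if i in keep else 0.0 for i, w in enumerate(group))
    return pruned


if __name__ == "__main__":
    row = [0.1, -0.9, 0.3, 0.05, 1.0, 2.0, -3.0, 0.5]
    print(prune_2_of_4(row))
```

Every group of four in the result has exactly two zeros, which is the regularity that lets hardware skip half of the multiply-accumulate operations.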

Real-World Performance Analysis

Since the MI350X has not launched at the time of writing, real-world performance data is not available. However, we can construct informed projections based on the MI300X's known performance and the MI350X's disclosed specifications.

Projected LLM Inference Throughput. Using the MI300X's measured performance as a baseline and scaling by the MI350X's architectural improvements:

| Model | Precision | MI300X Measured | MI350X Projected | Projection Basis |
|---|---|---|---|---|
| Llama 70B | FP8 | 55-65 tok/s | 70-85 tok/s | +13% bandwidth, +38% compute |
| Llama 70B | FP4 | N/A | 120-150 tok/s | 2x effective throughput vs FP8 |
| Llama 405B | FP4 | N/A (OOM) | 25-35 tok/s | Single GPU, memory-bandwidth bound |
| Llama 405B | FP8 | N/A (OOM) | N/A (OOM) | 405GB > 288GB |
| Mixtral 8x22B | FP8 | N/A | 30-40 tok/s | Single GPU, ~141GB at FP8 (141B total parameters) |

These projections assume ROCm software maturity at launch is comparable to current MI300X optimization levels. Actual day-one performance could be 10-20% lower if kernel optimization lags the hardware launch, then improve over the first 6-12 months of production use.
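A quick sanity check on projections like these is the bandwidth-bound ceiling for single-stream decoding: each generated token has to read every weight once, so tokens per second cannot exceed memory bandwidth divided by weight size. The sketch below is a rough upper bound under stated assumptions: a dense model, batch size 1, no KV cache traffic, and peak bandwidth fully achieved. `decode_ceiling_tok_s` is a hypothetical helper name.

```python
def decode_ceiling_tok_s(bandwidth_gb_s: float, params_billion: float,
                         bits_per_weight: int) -> float:
    """Upper bound on batch-1 decode speed when weight reads are the bottleneck."""
    weight_gb = params_billion * bits_per_weight / 8
    return bandwidth_gb_s / weight_gb


if __name__ == "__main__":
    cases = [
        ("MI300X, Llama 70B FP8", 5_300, 70, 8),
        ("MI350X, Llama 70B FP8", 6_000, 70, 8),
        ("MI350X, Llama 70B FP4", 6_000, 70, 4),
        ("MI350X, Llama 405B FP4", 6_000, 405, 4),
    ]
    for label, bw, params, bits in cases:
        print(f"{label}: <= {decode_ceiling_tok_s(bw, params, bits):.1f} tok/s")
```

Measured single-stream numbers normally land below this ceiling, and batched serving can exceed it in aggregate because one pass over the weights serves many requests.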

Training Performance Expectations. For pre-training workloads, the MI350X should deliver approximately 30-40% higher per-GPU throughput than the MI300X on large Transformer models. The actual improvement will depend on whether AMD improves the Infinity Fabric inter-GPU bandwidth proportionally - if inter-GPU communication remains the bottleneck at scale, the compute improvement will not fully translate to training speedups.

AMD's competitive position in training is expected to improve relative to NVIDIA because the MI350X's price-per-TFLOP remains substantially lower than the B200's. Even if the B200 delivers 25% higher absolute training throughput, the MI350X's ~50% lower price could make it the more economical choice for organizations where cost matters more than time-to-train.

Total Cost of Ownership Projection. When planning infrastructure budgets, the full TCO comparison matters more than unit price:

| TCO Component (3-year, per GPU) | MI350X (est.) | NVIDIA B200 (est.) | Notes |
|---|---|---|---|
| Hardware Acquisition | $15,000-$20,000 | $30,000-$40,000 | Upfront capital |
| Power (3yr, $0.10/kWh) | ~$1,970 | ~$2,630 | 750W vs 1,000W TDP, continuous operation (19,710 vs 26,280 kWh) |
| Cooling Infrastructure | ~$3,000-$5,000 | ~$4,000-$7,000 | Liquid cooling for both |
| Software Engineering (migration) | $5,000-$15,000 | $0-$2,000 | ROCm porting cost, amortized |
| Support & Maintenance | ~$3,000-$5,000 | ~$5,000-$8,000 | Vendor support contracts |
| Total 3-Year TCO | ~$28,000-$47,000 | ~$41,600-$59,600 | MI350X: ~21-33% lower |

Even with the ROCm migration cost factored in, the MI350X's lower hardware cost and lower power draw produce a meaningfully lower 3-year TCO. The migration cost is largely a one-time engineering expense shared across the fleet, so the per-GPU share shrinks as the deployment grows.
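Because every line of a TCO model is an estimate, it helps to keep the arithmetic in a form that can be rerun with your own inputs. The sketch below computes energy cost as TDP times hours times electricity price and sums (low, high) ranges per component. Continuous operation at full TDP and no facility overhead (PUE) are assumptions, and `energy_cost_usd` and `tco_range` are hypothetical helper names.

```python
HOURS_PER_YEAR = 24 * 365


def energy_cost_usd(tdp_watts: float, years: float, usd_per_kwh: float,
                    utilization: float = 1.0) -> float:
    """Electricity cost, assuming the device draws TDP * utilization continuously."""
    kwh = tdp_watts / 1000 * HOURS_PER_YEAR * years * utilization
    return kwh * usd_per_kwh


def tco_range(components: list[tuple[float, float]]) -> tuple[float, float]:
    """Sum (low, high) cost estimates across all components."""
    return (sum(low for low, _ in components),
            sum(high for _, high in components))


if __name__ == "__main__":
    power = energy_cost_usd(750, years=3, usd_per_kwh=0.10)
    low, high = tco_range([
        (15_000, 20_000),  # hardware (article's estimated range)
        (power, power),    # electricity
        (3_000, 5_000),    # cooling
        (5_000, 15_000),   # software migration
        (3_000, 5_000),    # support and maintenance
    ])
    print(f"3-year power: ${power:,.0f}; TCO: ${low:,.0f}-${high:,.0f}")
```

At 750W running continuously for three years, consumption is 19,710 kWh, or about $1,971 at $0.10/kWh. Lower utilization or a higher tariff can be passed in to test sensitivity.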

ROCm Software Readiness. AMD has stated that ROCm 7.x will ship alongside the MI350X with day-one support for PyTorch 2.x, vLLM, and other critical inference frameworks. The CDNA 3 to CDNA 4 transition is a significant architecture change that will require new kernel implementations and tuning - similar to what NVIDIA faces with each major GPU generation. AMD's track record on day-one software readiness has been mixed (the MI300X launch saw 3-4 months of software maturation before performance stabilized), so cautious optimism is warranted.

Key ROCm areas to watch at MI350X launch:

- FP4 kernel availability and performance for major model architectures

- Flash Attention v3 or equivalent for CDNA 4

- vLLM and SGLang integration quality

- DeepSpeed and FSDP multi-GPU training support

- Quantization tooling (GPTQ, AWQ, GGUF export) with FP4/FP6 targets

Cloud Provider Availability. AMD has stated that the MI350X will be available from major cloud providers at or near hardware launch. Expected availability:

| Cloud Provider | Expected Instance Type | Timing | Notes |
|---|---|---|---|
| Microsoft Azure | ND MI350X (likely) | H2 2025 or early 2026 | Azure is AMD's strongest cloud partner |
| Oracle Cloud | BM.GPU.MI350X (likely) | H2 2025 or early 2026 | OCI has been aggressive on AMD adoption |
| Google Cloud | Possible | TBD | GCP has been NVIDIA and TPU-focused |
| AWS | Uncertain | TBD | AWS has historically been NVIDIA-centric |

Microsoft Azure is the most likely early cloud partner for MI350X instances, given Azure's existing MI300X deployment and Microsoft's strategic interest in diversifying its AI accelerator supply chain away from exclusive NVIDIA dependence.

Structured Sparsity in Practice. While the MI350X's structured sparsity support is promising on paper, practical deployment requires model-level support. Not all models can be sparsified without quality degradation, and the tools for sparsification on ROCm are less mature than NVIDIA's ASP (Automatic Sparsity) tooling. Expect 6-12 months after MI350X launch before structured sparsity workflows are production-ready on ROCm. Early adopters should plan to use dense operations initially and add sparsity as tooling matures.

MI350X vs MI300X Feature Comparison. A comprehensive side-by-side for migration planning:

| Feature | MI300X (CDNA 3) | MI350X (CDNA 4, est.) | Delta |
|---|---|---|---|
| FP8 TFLOPS | 2,610 | ~3,600 | +38% |
| FP4 TFLOPS | N/A | ~7,200 | New |
| FP16/BF16 TFLOPS | 1,307 | ~1,800 | +38% |
| Memory Capacity | 192GB HBM3 | 288GB HBM3e | +50% |
| Memory Bandwidth | 5,300 GB/s | ~6,000 GB/s | +13% |
| Process Node | TSMC 5nm/6nm | TSMC 3nm | Full node shrink |
| TDP | 750W | 750W | Same |
| Price (est.) | $10,000-$15,000 | $15,000-$20,000 | +33-50% |
| Sparsity | Basic | 2:4 structured | Enhanced |
| ROCm Version | 6.x | 7.x (expected) | New major version |
| Software Compatibility | Baseline | Backward compatible | Recompile required |

Generational and Competitive Context

The MI350X arrives into a market that has shifted significantly since the MI300X launched.

vs. NVIDIA B200. The B200 is the MI350X's primary competitor. On raw specifications, the B200 has the edge in peak FP8 compute (~4,500 vs ~3,600 TFLOPS) and memory bandwidth (~8,000 vs ~6,000 GB/s). The MI350X counters with higher memory capacity (288GB vs 192GB) and a projected 40-50% lower price point. The competitive dynamics are nearly identical to MI300X vs H100 - AMD offers more memory at lower cost, NVIDIA offers higher peak performance and a more mature software stack. The question is whether the MI350X can capture more market share than the MI300X did, which will depend heavily on ROCm software quality at launch.

vs. AMD MI300X. For existing MI300X customers, the MI350X is a straightforward generational upgrade. The 50% memory increase, 38% FP8 improvement, and new FP4 support are all meaningful. The CDNA 3 to CDNA 4 software compatibility path should be smooth - AMD has committed to maintaining ROCm API stability. Organizations with large MI300X fleets should plan MI350X procurement for new capacity additions while continuing to run MI300X for workloads where 192GB is sufficient.

vs. Google TPU v7 Ironwood. The Ironwood shares the MI350X's inference-focused positioning but as a cloud-only product. Ironwood's 192GB per chip and 9,216-chip pod scale target a different deployment model - massive cloud inference farms operated by Google. The MI350X targets organizations that want to own their hardware, run PyTorch, and have flexibility in where they deploy. These products are not direct competitors in most procurement decisions because the buyer profile is fundamentally different.

vs. Huawei Ascend 910C. The 910C is in a different performance tier entirely. At ~800 TFLOPS FP16 versus the MI350X's estimated ~1,800 TFLOPS FP16, the MI350X delivers roughly 2.25x the compute per chip. The MI350X also has 3x the memory bandwidth. The only scenario where the 910C competes is within the Chinese domestic market where the MI350X is not available due to export restrictions.

AMD's Roadmap Beyond MI350X. AMD has disclosed that the Instinct roadmap continues beyond MI350X with annual cadence. The MI400 series (expected 2026) is anticipated to bring further memory and compute improvements on TSMC's next-generation process. For procurement planning, this means the MI350X is not a dead-end investment - it sits on a multi-year roadmap with continued software investment from AMD. The CDNA architecture evolution path gives existing ROCm investments longevity across multiple hardware generations.

Timing and Migration Planning. For organizations planning 2025-2026 infrastructure, the MI350X's H2 2025 launch date means hardware should be available for evaluation by late 2025 and for production deployment by early-to-mid 2026 (accounting for software maturation). If you need accelerators before H2 2025, the MI300X remains a strong choice. If you can wait, the MI350X offers more memory and compute per accelerator plus native FP4 support, at a similar estimated price per GB and per FP8 TFLOP.

| Decision Factor | Buy MI300X Now | Wait for MI350X |
|---|---|---|
| Need hardware by Q2 2025 | Yes | No |
| Primary workload is 70B inference | MI300X handles this well | MI350X adds FP4 headroom |
| Need 405B single-GPU inference | Not possible (192GB) | Possible at FP4 (288GB) |
| Budget-constrained | MI300X is cheaper ($10-15K) | MI350X costs more ($15-20K) |
| ROCm migration not started | Start now on MI300X | Start now on MI300X, deploy MI350X later |
| Existing MI300X fleet | Expand with MI300X | Add MI350X for new capacity |

Use Case Recommendations

Strong Fit:

- Inference providers serving 200B-405B parameter models. The MI350X's 288GB with FP4 support is the defining use case - single-GPU deployment of Llama 405B-class models. No other purchasable accelerator (as opposed to cloud-only TPUs) can match this capability at the MI350X's projected price point.

- Organizations building multi-model inference platforms. The 288GB memory allows loading multiple smaller models simultaneously on a single GPU (for example, three 70B models at FP6, or a 70B model plus several smaller specialized models). This flexibility reduces the total GPU count needed for diverse inference workloads.

- MI300X fleet operators planning capacity expansion. Software compatibility from CDNA 3 to CDNA 4 means MI350X can slot into existing MI300X infrastructure with minimal engineering overhead. Mixed fleets are practical.

- Research labs working on next-generation quantization. Hardware-native FP4 and FP6 support makes the MI350X the ideal platform for research into aggressive quantization techniques - you can iterate on quantization strategies without software emulation overhead.

- Edge-to-cloud inference platforms. Organizations that need to run the same models across multiple environments benefit from AMD's broader hardware ecosystem. CDNA architecture for data center inference, RDNA for edge, and x86 CPUs for fallback - all under ROCm's unified software model.

- AI infrastructure companies offering multi-tenant GPU cloud. The 288GB memory per GPU allows serving multiple smaller customer models on a single accelerator, improving utilization rates and reducing per-customer infrastructure cost. Each tenant gets a model slice without requiring a dedicated GPU.

Key Risk Factors. Prospective MI350X buyers should track these risks:

| Risk | Probability | Impact | Mitigation |
|---|---|---|---|
| Launch delay beyond H2 2025 | Low-Medium | Delays deployment plans | Have MI300X fallback plan |
| Day-one ROCm kernel immaturity | Medium | 10-20% lower initial performance | Budget 3-6 months for optimization |
| HBM3e supply constraints | Low | Higher pricing or limited availability | Secure early allocation |
| NVIDIA B200 price cuts | Medium | Reduces MI350X value proposition | Monitor market before committing |
| FP4 quality issues for target models | Medium | Limits key capability | Validate FP4 quality on your models before buying |

Weak Fit:

- Organizations that need hardware immediately. The MI350X is not available yet. If you need GPUs in H1 2025, buy MI300X or H100/B200 (if available).

- Pure training workloads at 1,000+ GPU scale. While the MI350X will train models effectively, NVIDIA's NVLink/NVSwitch interconnect ecosystem remains more mature for large-scale distributed training. If training throughput at scale is your sole metric, the B200 with NVLink is the safer bet.

- Teams with zero ROCm experience. The MI350X's value proposition assumes you are willing to invest in ROCm. If your entire stack is CUDA and you are not prepared to commit engineering resources to the port, the MI350X's lower hardware cost does not offset the software migration expense.

- Workloads that do not benefit from FP4. If your models cannot tolerate FP4 quantization (some scientific computing, medical AI, or high-precision applications), you lose the MI350X's most distinctive capability and the value proposition weakens relative to the B200.

- Organizations dependent on NVIDIA-exclusive software. TensorRT-LLM, NeMo Framework, and other NVIDIA-proprietary tools do not run on AMD hardware. If these are core to your workflow, the MI350X is not a viable alternative regardless of its hardware advantages.

Strengths

- 288GB HBM3e memory - the highest capacity in its generation, enabling single-GPU deployment of 405B-class models

- Estimated ~3,600 TFLOPS FP8 - a 38% generational improvement over the MI300X

- FP4 and FP6 data type support for next-generation quantization strategies

- TSMC 3nm process provides improved performance-per-watt and silicon density

- Projected price-per-GB significantly lower than NVIDIA's B200

- Chiplet architecture continues to provide manufacturing cost and yield advantages

- Maintained 750W TDP despite significant performance increases

Weaknesses

- All performance numbers are estimates until independent benchmarks are available at launch

- ROCm software ecosystem still trails CUDA in depth and breadth

- NVIDIA's B200 has higher peak bandwidth (8,000 GB/s vs ~6,000 GB/s) and peak compute (~4,500 vs ~3,600 TFLOPS FP8)

- Day-one software optimization quality is unknown - new architectures often need months of kernel tuning

- AMD's multi-GPU interconnect (Infinity Fabric) historically trails NVIDIA's NVLink for large-scale training

- FP4 quantization quality is model-dependent - not all workloads can tolerate the precision loss

- Market share gap means fewer production deployment references and community optimization resources

Related Coverage

- AMD Instinct MI300X - The MI350X's current-generation predecessor

- Google TPU v7 Ironwood - Google's next-gen inference TPU, another non-NVIDIA alternative

- Huawei Ascend 910C - China's flagship AI accelerator on SMIC 7nm

- Google TPU v6e Trillium - Google's current-gen TPU for cloud workloads

- Huawei Ascend 910B - China's workhorse AI training chip